RBAC: Role-Based Access Control

Kubeconfig

~/.kube/config

    apiVersion: v1
    kind: Config
    preferences: {}
    clusters:
    - name: cluster.local
      cluster:
        certificate-authority-data: LS0tLS1...
        server: https://127.0.0.1:6443
    - name: mycluster
      cluster:
        server: https://1.2.3.4:6443
    users:
    - name: myadmin
    - name: kubernetes-admin
      user:
        client-certificate-data: LS0tLS1...
        client-key-data: LS0tLS1...
    contexts:
    - context:
        cluster: mycluster
        user: myadmin
      name: myadmin@mycluster
    - context:
        cluster: cluster.local
        user: kubernetes-admin
      name: kubernetes-admin@cluster.local
    current-context: kubernetes-admin@cluster.local

    kubectl config view

    kubectl config get-clusters
    kubectl config get-contexts
    kubectl config get-users

    kubectl config use-context myadmin@mycluster

Authentication

Users in Kubernetes

- Service Account (sa): an account managed by Kubernetes
  - Not for human users
  - Used by Pods
- Normal User: a regular user (not managed by Kubernetes)
  - For human users
  - Not used by Pods

Authentication methods:

- x509 certificates
- Tokens
  - Bearer Token
    - HTTP header:
      - Authorization: Bearer 31ada4fd-adec-460c-809a-9e56ceb75269
  - SA Token
    - JSON Web Token: JWT
- OpenID Connect (OIDC)
  - Standardized interface for external authentication
  - Okta, AWS IAM
  - OAuth2 Provider
  - OpenID Connect (OIDC)
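
To make the token-based methods above more concrete, here is a minimal Python sketch (not part of the original notes). It builds the `Authorization: Bearer` header and decodes the payload segment of a JWT without verifying its signature. The JWT, its claims, and the ServiceAccount name `mysa` are made up for illustration; real ServiceAccount tokens carry more claims and are validated by the API server.

```python
import base64
import json


def bearer_header(token: str) -> dict:
    """Build the HTTP header used for bearer-token authentication."""
    return {"Authorization": f"Bearer {token}"}


def b64url_encode(data: bytes) -> str:
    """Base64url-encode without '=' padding, the way JWT segments are encoded."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def decode_jwt_payload(token: str) -> dict:
    """Decode the payload (second segment) of a JWT. Does NOT verify the signature."""
    _header, payload, _signature = token.split(".")
    padded = payload + "=" * (-len(payload) % 4)  # restore the stripped padding
    return json.loads(base64.urlsafe_b64decode(padded))


# Static bearer token taken from the notes above.
static_header = bearer_header("31ada4fd-adec-460c-809a-9e56ceb75269")

# Hypothetical, unsigned JWT assembled only for this demonstration.
sample_claims = {"sub": "system:serviceaccount:default:mysa"}
sample_jwt = ".".join([
    b64url_encode(json.dumps({"alg": "none", "typ": "JWT"}).encode()),
    b64url_encode(json.dumps(sample_claims).encode()),
    "placeholder-signature",
])

print(static_header["Authorization"])
print(decode_jwt_payload(sample_jwt)["sub"])
```

The `sub` claim uses the `system:serviceaccount:<namespace>:<name>` form, which is the username Kubernetes assigns to a ServiceAccount.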

RBAC

- Role: permissions (namespace-scoped)
- ClusterRole: permissions (cluster-wide)
- RoleBinding
  - Role <-> RoleBinding <-> SA/User
- ClusterRoleBinding
  - ClusterRole <-> ClusterRoleBinding <-> SA/User

https://kubernetes.io/docs/reference/access-authn-authz/rbac/
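
The relationship between roles, bindings, and subjects can be sketched as a toy model in Python. This is only an illustration under simplifying assumptions: it ignores API groups, resource names, wildcards, and groups, and the `Rule`, `Binding`, and `is_allowed` names are hypothetical, not a Kubernetes API. A binding with a namespace models a RoleBinding; a binding with `namespace=None` models a ClusterRoleBinding.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Rule:
    resources: frozenset
    verbs: frozenset


@dataclass(frozen=True)
class Binding:
    subject: str               # User or ServiceAccount name
    rules: Tuple[Rule, ...]    # rules of the referenced Role or ClusterRole
    namespace: Optional[str]   # namespace of a RoleBinding; None for a ClusterRoleBinding


def is_allowed(bindings, subject, verb, resource, namespace):
    """Allow the request if any binding of the subject covers the namespace and a rule matches."""
    for binding in bindings:
        if binding.subject != subject:
            continue
        if binding.namespace is not None and binding.namespace != namespace:
            continue
        for rule in binding.rules:
            if resource in rule.resources and verb in rule.verbs:
                return True
    return False  # RBAC is additive: nothing is allowed unless it is granted


pod_reader = Rule(resources=frozenset({"pods"}), verbs=frozenset({"get", "list", "watch"}))
bindings = [
    Binding("myuser", (pod_reader,), namespace="default"),  # like a RoleBinding in "default"
    Binding("mysa", (pod_reader,), namespace=None),         # like a ClusterRoleBinding
]

print(is_allowed(bindings, "myuser", "list", "pods", "default"))      # True
print(is_allowed(bindings, "myuser", "list", "pods", "kube-system"))  # False
print(is_allowed(bindings, "mysa", "list", "pods", "kube-system"))    # True
```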

Request verbs

- create
  - kubectl create, kubectl apply
- get
  - kubectl get po myweb
- list
  - kubectl get pods
- watch
  - kubectl get po -w
- update
  - kubectl edit, replace
- patch
  - kubectl patch
- delete
  - kubectl delete po myweb
- deletecollection
  - kubectl delete po --all
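
Behind each kubectl command is an HTTP request, and the API server derives the request verb from the HTTP method and from whether the request targets a single object or a collection. The sketch below encodes that mapping as described in the Kubernetes authorization documentation; the function name `request_verb` and its parameters are hypothetical helpers for illustration only.

```python
def request_verb(http_method: str, is_collection: bool = False, watch: bool = False) -> str:
    """Map an HTTP request to the RBAC request verb checked by the API server."""
    method = http_method.upper()
    if method == "POST":
        return "create"
    if method in ("GET", "HEAD"):
        if watch:
            return "watch"
        return "list" if is_collection else "get"
    if method == "PUT":
        return "update"
    if method == "PATCH":
        return "patch"
    if method == "DELETE":
        return "deletecollection" if is_collection else "delete"
    raise ValueError(f"unsupported HTTP method: {http_method}")


print(request_verb("GET"))                       # single object
print(request_verb("GET", is_collection=True))   # whole collection
print(request_verb("GET", is_collection=True, watch=True))
print(request_verb("DELETE", is_collection=True))
```

A Role therefore needs both `get` and `list` if a user should be able to read a single Pod by name and also list all Pods.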

ClusterRole

- view: read-only permission
- edit: permission to create, delete, and modify
- admin: manage everything (except RBAC ClusterRoles)
- cluster-admin: manage everything

SA

    kubectl create sa <NAME>

x509 certificate for creating a user

Private Key

    openssl genrsa -out myuser.key 2048

Create an x509 certificate signing request

    openssl req -new -key myuser.key -out myuser.csr -subj "/CN=myuser"

    cat myuser.csr | base64 | tr -d "\n"

csr.yaml

    apiVersion: certificates.k8s.io/v1
    kind: CertificateSigningRequest
    metadata:
      name: myuser-csr
    spec:
      usages:
      - client auth
      signerName: kubernetes.io/kube-apiserver-client
      request: LS0tLS1CRUdJTiB...

    kubectl create -f csr.yaml

    kubectl get csr

Status: Pending

    kubectl certificate approve myuser-csr

    kubectl get csr

Status: Approved, Issued

    kubectl get csr myuser-csr -o yaml

Check the status.certificate field.

    kubectl get csr myuser-csr -o jsonpath='{.status.certificate}' | base64 -d > myuser.crt

Create the kubeconfig user

    kubectl config set-credentials myuser --client-certificate=myuser.crt --client-key=myuser.key --embed-certs=true

Create the kubeconfig context

    kubectl config set-context myuser@cluster.local --cluster=cluster.local --user=myuser --namespace=default

    kubectl config get-users
    kubectl config get-clusters
    kubectl config get-contexts

    kubectl config use-context myuser@cluster.local

Create the ClusterRoleBinding

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      name: myuser-view-crb
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: view
    subjects:
    - apiGroup: rbac.authorization.k8s.io
      kind: User
      name: myuser

Helm

Terminology

- Chart: a Helm package
- Repository: a chart repository
- Release: the Kubernetes object resources (an instance of a package created in the cluster)

Helm v3 does not use Tiller.

Installing the Helm client

    curl https://baltocdn.com/helm/signing.asc | gpg --dearmor | sudo tee /usr/share/keyrings/helm.gpg > /dev/null
    sudo apt-get install apt-transport-https --yes
    echo "deb [arch=$(dpkg --print-architecture) signed-by=/usr/share/keyrings/helm.gpg] https://baltocdn.com/helm/stable/debian/ all main" | sudo tee /etc/apt/sources.list.d/helm-stable-debian.list
    sudo apt-get update
    sudo apt-get install helm

Searching for Helm charts

https://artifacthub.io/

Chart structure

    <Chart Name>/
      Chart.yaml
      values.yaml
      templates/

- Chart.yaml: chart metadata
- values.yaml: values used to customize the package
- templates: YAML object template files
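
As a rough illustration of how `values.yaml` customization works, the Python sketch below merges user-supplied values over chart defaults. It is a simplified model, not Helm's actual implementation: nested maps are merged key by key and user values win, which is the behavior you see with options such as `--set service.type=NodePort` or `-f`. The default values shown here (including `port: 80`) and the `deep_merge` helper are hypothetical.

```python
import copy


def deep_merge(defaults: dict, overrides: dict) -> dict:
    """Return defaults with overrides applied; nested dicts are merged recursively."""
    merged = copy.deepcopy(defaults)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = copy.deepcopy(value)
    return merged


# Hypothetical defaults, standing in for a chart's values.yaml.
chart_defaults = {"replicaCount": 1, "service": {"type": "LoadBalancer", "port": 80}}

# User overrides, comparable to --set replicaCount=2 --set service.type=NodePort.
user_values = {"replicaCount": 2, "service": {"type": "NodePort"}}

effective = deep_merge(chart_defaults, user_values)
print(effective)
```

Keys the user does not mention, such as `service.port` here, keep their default value.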

Using Helm

Search Artifact Hub

    helm search hub <PATTERN>

Add a repository

    helm repo add bitnami https://charts.bitnami.com/bitnami

Search a repository

    helm search repo wordpress

Install a chart

    helm install mywordpress bitnami/wordpress

List releases

    helm list

Delete a release

    helm uninstall mywordpress

Show chart information

    helm show readme bitnami/wordpress
    helm show chart bitnami/wordpress
    helm show values bitnami/wordpress

Customize a chart

    helm install mywp bitnami/wordpress --set replicaCount=2
    helm install mywp bitnami/wordpress --set replicaCount=2 --set service.type=NodePort

Upgrade a release

    helm show values bitnami/wordpress > wp-value.yaml

Edit the file, then upgrade:

    helm upgrade mywp bitnami/wordpress -f wp-value.yaml

Release upgrade history

    helm history mywp

Roll back a release

    helm rollback mywp 1

wp-value2.yaml

    replicaCount: 1
    service:
      type: LoadBalancer

    helm upgrade mywp bitnami/wordpress -f wp-value2.yaml

Monitoring & Logging

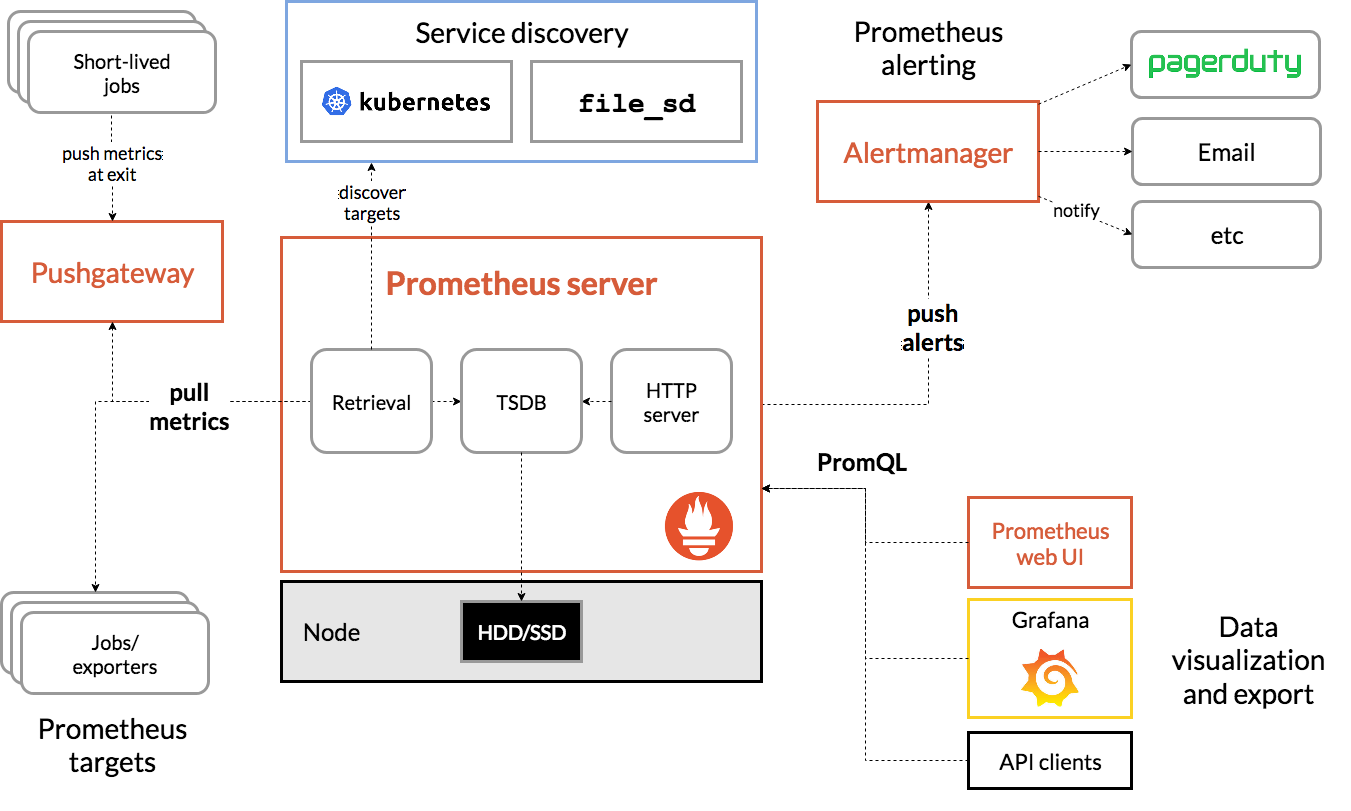

Prometheus Monitoring

CPU, Memory, Network IO, Disk IO

- Heapster + InfluxDB: no longer used
- metrics-server: no database, real-time only
  - CPU, Memory
- Prometheus

https://github.com/prometheus-community/helm-charts/tree/main/charts/kube-prometheus-stack

    helm repo add prometheus-community https://prometheus-community.github.io/helm-charts
    helm repo update

prom-values.yaml

    grafana:
      service:
        type: LoadBalancer

    kubectl create ns monitor

    helm install prom prometheus-community/kube-prometheus-stack -f prom-values.yaml -n monitor

Web browser

http://192.168.100.24X

ID: admin

PWD: prom-operator

EFK Logging

ELK Stack: Elasticsearch + Logstash + Kibana

EFK Stack: Elasticsearch + Fluentd + Kibana

Elasticsearch + Fluent Bit + Kibana

Elastic Stack: Elasticsearch + Beats + Kibana

Elasticsearch

    helm repo add elastic https://helm.elastic.co
    helm repo update

    helm show values elastic/elasticsearch > es-value.yaml

es-value.yaml (settings to change)

    replicas: 1
    minimumMasterNodes: 1

    resources:
      requests:
        cpu: "500m"
        memory: "1Gi"
      limits:
        cpu: "500m"
        memory: "1Gi"

    kubectl create ns logging

    helm install elastic elastic/elasticsearch -f es-value.yaml -n logging

Fluent Bit

    git clone https://github.com/fluent/fluent-bit-kubernetes-logging.git

    cd fluent-bit-kubernetes-logging

    kubectl create -f fluent-bit-service-account.yaml
    kubectl create -f fluent-bit-role-1.22.yaml
    kubectl create -f fluent-bit-role-binding-1.22.yaml

    kubectl create -f output/elasticsearch/fluent-bit-configmap.yaml

output/elasticsearch/fluent-bit-ds.yaml (setting to change)

        - name: FLUENT_ELASTICSEARCH_HOST
          value: "elasticsearch-master"

    kubectl create -f output/elasticsearch/fluent-bit-ds.yaml

Kibana

    helm show values elastic/kibana > kibana-value.yaml

kibana-value.yaml (settings to change)

    resources:
      requests:
        cpu: "500m"
        memory: "1Gi"
      limits:
        cpu: "500m"
        memory: "1Gi"

    service:
      type: LoadBalancer

    helm install kibana elastic/kibana -f kibana-value.yaml -n logging

- Hamburger menu -> Management -> Stack Management
  - Kibana -> Index Patterns
    - Create index pattern (top right)
      - Name: logstash-*
      - Timestamp: @timestamp
- Hamburger menu -> Analytics -> Discover

Tip

Powerlevel10k

    git clone --depth=1 https://github.com/romkatv/powerlevel10k.git ${ZSH_CUSTOM:-$HOME/.oh-my-zsh/custom}/themes/powerlevel10k

~/.zshrc

    ZSH_THEME="powerlevel10k/powerlevel10k"

    exec zsh

    p10k configure

kubectx & kubens

    wget https://github.com/ahmetb/kubectx/releases/download/v0.9.4/kubectx
    wget https://github.com/ahmetb/kubectx/releases/download/v0.9.4/kubens

    sudo install kubectx /usr/local/bin
    sudo install kubens /usr/local/bin