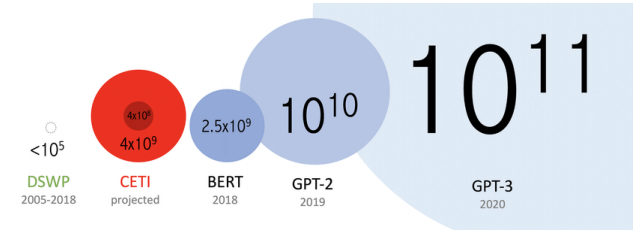

- 오늘날에는 데이터는 양이 많기 때문에 이를 처리하기 위한 장비가 필요하다.

Model parallel

- 다중 GPU에 학습을 분산하는 방법에는 모델을 나누거나 데이터를 나누는 두 가지 방법이 있다.

- 모델을 나누는 것은 생각보다 예전부터 사용해 왔으며 하나의 연구 분야로 자리를 잡고 있는데, 파이프라인이 원활하지 않음으로 생기는 병목 현상같은 어려움으로 인해 모델 병렬화는 난이도가 높다.

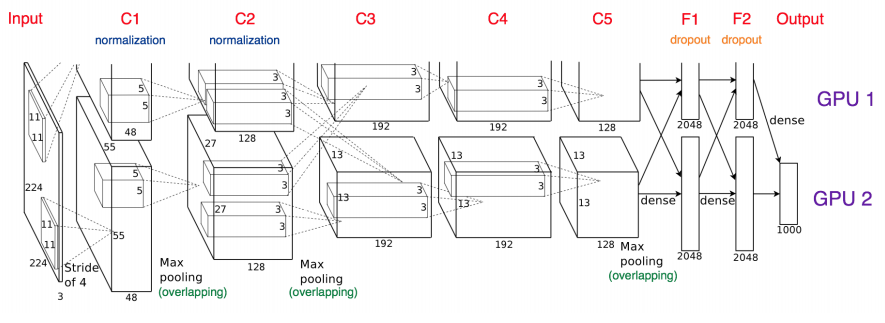

alexnet

- 두 개의 GPU로 나누어 데이터를 처리

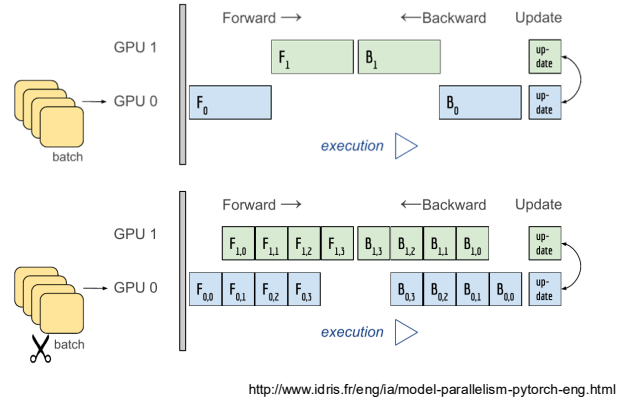

- 파이프 라인 구성

- 윗 부분의 파이프라인에는 서로 겹치는 부분이 없기 때문에 각각의 시간에 맞게 처리를 하는 것이므로 병렬화 하는 의미가 없다.

- 하지만, 아래 부분의 파이프라인에는 겹치는 부분이 존재함으로써, 동시에 처리할 수 있으므로, 이와 같이 파이프라인을 만들어야 한다.

class ModelParallelResNet50(ResNet):

def __init__(self, *args, **kwargs):

super(ModelParallelResNet50, self).__init__(Bottleneck, [3, 4, 6, 3],

num_classes=num_classes, *args, **kwargs)

# 첫번째 모델을 cuda 0에 할당 : GPU0

self.seq1 = nn.Sequential(self.conv1, self.bn1, self.relu, self.maxpool,

self.layer1, self.layer2).to('cuda:0')

# 두번째 모델을 cuda 1에 할당 : GPU1

self.seq2 = nn.Sequential(self.layer3, self.layer4, self.avgpool,).to('cuda:1')

self.fc.to('cuda:1')

# 두 모델 연결하기

def forward(self, x):

x = self.seq2(self.seq1(x).to('cuda:1'))

return self.fc(x.view(x.size(0), -1))Data parallel

-

데이터를 나눠 GPU에 할당후 결과의 평균을 취하는 방법

-

minibatch 수식과 유사한데 한번에 여러 GPU에서 수행

-

PyTorch에서는 DataParallel 과 DistributedDataParallel 을 제공

-

DataParallel

- 단순히 데이터를 분배한후 평균을 취하는 방식으

- 하나의 GPU에서 각각의 GPU에서 받은 데이터를 처리해야 하기 때문에 GPU사용 불균형 문제가 생길 수 있으며, Batch 사이즈 줄여야 한다(GPU 병목)

# 이게 전부.. 생각보다 많이 간단

parallel_model = torch.nn.DataParallel(model)- DistributedDataParallel

- 각 CPU마다 process를 생성하여 개별 GPU에 할당하는 방식

- 각각의 GPU에서 forward와 backward를 진행 후 gradient를 가지고 개별적으로 연산의 평균을 냄

# sampler 사용

train_sampler = torch.utils.data.distributed.DistributedSampler(train_data)

shuffle = False

pin_memory = True

# pin memory 사용

trainloader = torch.utils.data.DataLoader(train_data, batch_size=20, shuffle=Truepin_memory=pin_memory,

num_workers=3,shuffle=shuffle, sampler=train_sampler)

def main():

n_gpus = torch.cuda.device_count()

torch.multiprocessing.spawn(main_worker, nprocs=n_gpus, args=(n_gpus, ))

def main_worker(gpu, n_gpus):

image_size = 224

batch_size = 512

num_worker = 8

epochs = ...

# batch_size와 num_worker를 gpu만큼 잘라주어야 함

batch_size = int(batch_size / n_gpus)

num_worker = int(num_worker / n_gpus)

# 멀티 프로세싱 통신 규약 정의

torch.distributed.init_process_group(backend='nccl’, init_method='tcp://127.0.0.1:2568’, world_size=n_gpus, rank=gpu)

model = MODEL

torch.cuda.set_device(gpu)

model = model.cuda(gpu)

# Distributed DataParallel

model = torch.nn.parallel.DistributedDataParallel(model, device_ids=[gpu])- Python의 멀티프로세싱 코드

from multiprocessing import Pool

def f(x):

return x*x

if __name__ == '__main__':

with Pool(5) as p:

print(p.map(f, [1, 2, 3]))