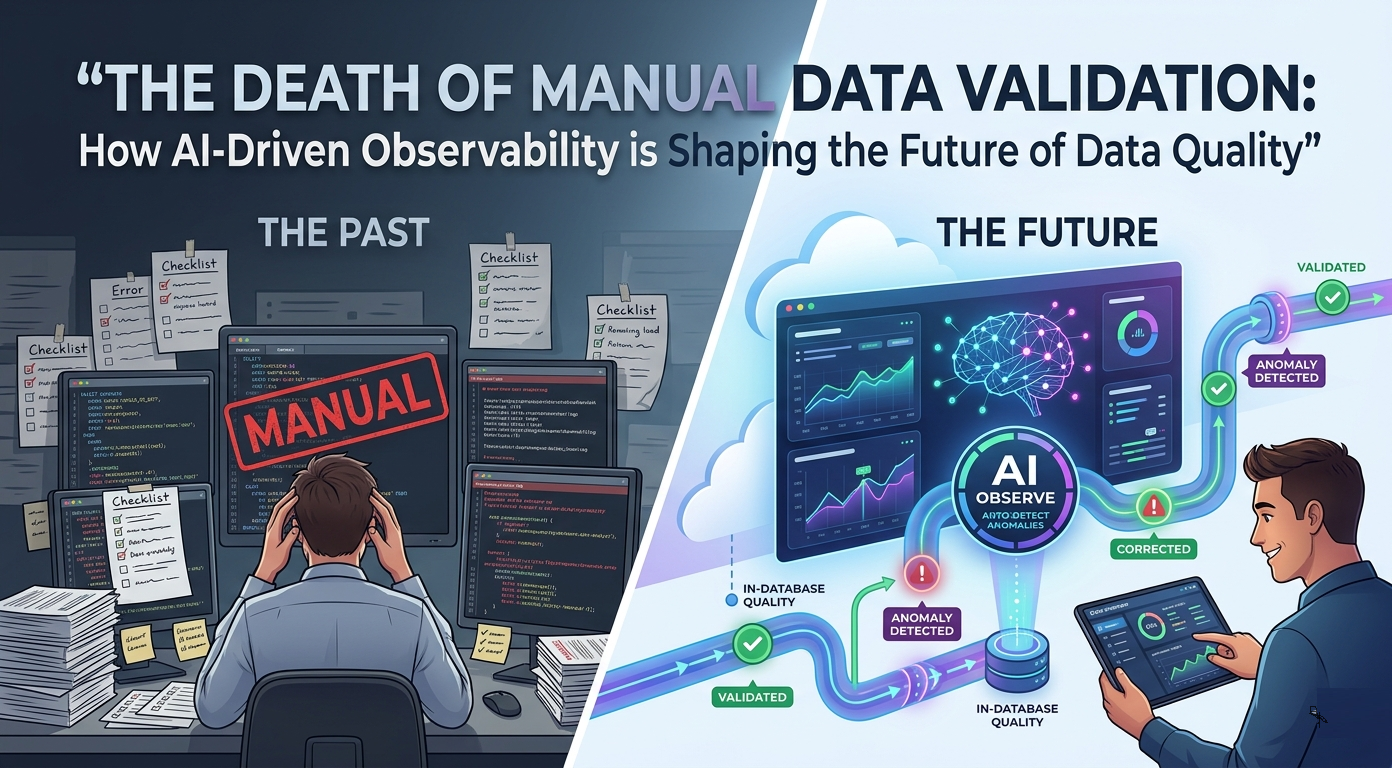

The Death of Manual Data Validation: How AI-Driven Observability is Shaping the Future of Data Quality

Data is no longer just a byproduct of business operations; it is the lifeblood of the modern enterprise. However, as organizations ingest petabytes of information from endless sources, the traditional methods of checking for errors are collapsing under their own weight. Understanding how modern data quality and observability platforms work is the first step toward reclaiming trust in your pipelines, as manual validation is simply failing to keep pace with the speed of today's digital business landscape.

Why Manual Data Validation Is Failing Modern Teams

In the past, data engineers could reasonably anticipate the errors that might occur in a dataset. They wrote custom SQL scripts to check for null values, verify ranges, or ensure that a date field followed a specific format. While this approach worked in smaller, slower environments, it is entirely insufficient for today's massive, cloud-native architectures. The sheer volume of data means that engineers are constantly buried under a mountain of maintenance work, struggling to update rules every time a business requirement changes.

Furthermore, the reactive nature of manual validation is a major bottleneck. When a data pipeline breaks, the engineering team is usually alerted by a business user who notices a missing report or an incorrect dashboard number. This creates a culture of firefighting rather than problem-solving. Ultimately, the engineering team spends most of their time patching old rules instead of building new value-added data products. This cycle of endless maintenance is why manual validation is failing, as it cannot scale with the speed or the complexity of current business requirements.

Additionally, this manual approach creates a significant dependency on institutional knowledge. If the engineer who wrote a complex validation script leaves the company, nobody knows why that specific rule exists or how to safely change it. This leads to brittle systems that everyone is afraid to touch, effectively slowing down the entire organization. Modern teams need to move away from these static checklists and toward a dynamic, automated approach that handles complexity without the constant need for human intervention.

The Limits of Rule-Based Data Quality in Modern Data Warehouses

Rule-based data quality presumes that we know in advance precisely what can go wrong with our data. But modern data warehouses are defined by continual change and evolution, a phenomenon sometimes called schema drift. A change in a source system may silently break downstream models without being caught by simple static rules. When you search only for familiar patterns, you are bound to overlook the unfamiliar anomalies that pose the greatest threat to your data integrity.

Moreover, the cost of maintaining static rules grows rapidly with the number of tables in your warehouse. If you have five hundred tables, writing and maintaining thousands of column-specific rules is impractical. You are effectively betting that your rules will cover every possible failure mode, which is a losing game. The time spent writing, testing, deploying, and debugging these rules consumes resources that could otherwise go toward actual data analysis.

Lastly, manual rules are binary. They tell you whether a value falls within a range, but they are not subtle enough to tell you that the trend itself is suspicious. For example, a value may technically fall within an acceptable range, but if the metric has suddenly gone flat, that is a sign of a severe issue that a fixed rule cannot capture. Fixed thresholds are largely blind to these small yet crucial changes in data trends.
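
To make the flat-line problem concrete, here is a minimal Python sketch. The function names, the window size, and the sample readings are all illustrative assumptions, not taken from any particular tool. It shows a static range rule passing a series that a simple variability check would flag.

```python
from statistics import pstdev

def in_range_rule(values, low, high):
    """Static rule: passes when every value sits inside [low, high]."""
    return all(low <= v <= high for v in values)

def is_flatlined(values, window=5, tolerance=1e-9):
    """Trend check: flags when the last `window` readings barely vary."""
    recent = values[-window:]
    if len(recent) < window:
        return False  # not enough data to judge
    return pstdev(recent) <= tolerance

# Hypothetical sensor-style metric: normal jitter, then stuck at 50.0.
readings = [48.2, 51.7, 49.9, 50.4, 50.0, 50.0, 50.0, 50.0, 50.0]

print(in_range_rule(readings, 0, 100))  # True: the range rule sees nothing wrong
print(is_flatlined(readings))           # True: the series has stopped moving
```

Every reading is valid on its own, so the range rule stays silent; only a check that looks at behavior across readings notices that the metric has stopped changing.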

AI Observability: The Move Toward Automated Quality Checks

There is a clear shift in the industry from traditional data quality management toward data observability. This approach does not rely on predefined rules; instead, it focuses on understanding the health of data through its behavior over time. AI-based observability uses machine learning to learn patterns and establish a baseline of what normal data should look like.

Key AI Observability Capabilities

Modern AI-powered systems offer a number of practical benefits that make them more useful than rule-based approaches:

- Automatic detection of unusual patterns and hidden anomalies.
- Real-time monitoring without manual rule development.
- Timely notification of problems before they reach the reporting layer.
- Accuracy that improves over time as the system learns from historical data.
- Less manual data work thanks to automation.

This shift enables organizations to stop being reactive and adopt a more proactive approach to data.

AI systems work by analyzing data directly at its source, which enables in-database data quality monitoring without moving data. This is especially important for organizations that care about security, compliance, and performance. By keeping analysis close to where data is stored, issues can be detected instantly, without delays caused by data transfers.

Another advantage is the way this method changes the role of data teams. Engineers no longer have to spend time manually checking for errors and can instead rely on self-monitoring systems. The system also alerts only when something genuinely unusual occurs, which reduces noise and improves efficiency.

This also enables teams to respond sooner. Rather than discovering issues when a report breaks, AI can identify changes in data trends, such as shifts in distribution or volume, far upstream in the pipeline. By the time business users receive their reports, problems have already been detected and resolved. Tools such as Digna make this possible by turning data monitoring into an automated, intelligent process.

How AI Detects Anomalies Without Human Input

At the core of AI-driven observability is the ability to learn from historical data. These systems use advanced time-series analysis to model how data behaves over hours, days, and months. By understanding the seasonality, trends, and typical volatility of a metric, the AI can automatically determine what constitutes a normal range. This eliminates the need for manual configuration, as the system creates its own rules dynamically.

Establishing Historical Baselines

The AI begins by ingesting past data patterns to calculate a standard behavior profile for every single table and column. It does not just look at a snapshot; it looks at the flow of data over long periods. This enables the system to recognize that, for example, a spike in data volume on Monday morning is perfectly normal for a retail business, but a similar spike on a Sunday night might indicate an error.
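
As a rough illustration of the Monday-versus-Sunday idea, the sketch below builds a per-weekday, per-hour baseline from past row counts and scores new observations against it with a z-score. This is a deliberately simple stand-in for the time-series models a real platform would use; the function names, the threshold of 3.0, and the sample counts are assumptions made for the example.

```python
from collections import defaultdict
from statistics import mean, stdev

def build_baseline(history):
    """history: iterable of (weekday, hour, row_count) tuples.
    Returns {(weekday, hour): (mean, stdev)} for slots with 2+ samples."""
    buckets = defaultdict(list)
    for weekday, hour, count in history:
        buckets[(weekday, hour)].append(count)
    return {k: (mean(v), stdev(v)) for k, v in buckets.items() if len(v) >= 2}

def is_anomalous(baseline, weekday, hour, count, z_threshold=3.0):
    """Flag a count that sits far from what is usual for that time slot."""
    key = (weekday, hour)
    if key not in baseline:
        return False  # no history for this slot, so no judgment
    mu, sigma = baseline[key]
    if sigma == 0:
        return count != mu
    return abs(count - mu) / sigma > z_threshold

# Hypothetical retail load volumes: busy Monday mornings, quiet Sunday nights.
history = (
    [("Mon", 9, c) for c in (10000, 10400, 9800, 10200)]
    + [("Sun", 23, c) for c in (500, 520, 480, 510)]
)
baseline = build_baseline(history)

print(is_anomalous(baseline, "Mon", 9, 10100))   # False: typical Monday spike
print(is_anomalous(baseline, "Sun", 23, 10100))  # True: same volume, wrong time
```

The same row count is judged differently depending on when it arrives, which is exactly what a single global threshold cannot express.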

Key Anomaly Detection Capabilities

By using these learned baselines, the system can identify several types of critical data quality issues automatically:

- Sudden Data Spikes or Drops: The system flags deviations in volume that do not match historical traffic patterns.
- Schema Drift: It immediately detects if a column type has changed or if new, unexpected fields appear in the source data.
- Missing Data Patterns: It alerts teams when data usually present at a specific time fails to arrive or shows empty values.
- Stale Data Alerts: It monitors for delays in data arrival, ensuring that stakeholders always have access to the freshest information.
- Statistical Distribution Shifts: It identifies when the actual values in a dataset start behaving differently from historical norms, such as a sudden skew in revenue data.
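
Two of the checks above, schema drift and stale data, are simple enough to sketch in a few lines of Python. The column names, type labels, and the two-hour delay below are hypothetical; in an AI-driven system the acceptable delay would be learned from arrival history rather than hard-coded as it is here.

```python
from datetime import datetime, timedelta

def detect_schema_drift(expected, observed):
    """Compare two {column: type} mappings and report what changed."""
    shared = set(expected) & set(observed)
    return {
        "added": sorted(set(observed) - set(expected)),
        "removed": sorted(set(expected) - set(observed)),
        "retyped": sorted(c for c in shared if expected[c] != observed[c]),
    }

def is_stale(last_loaded_at, now, max_delay):
    """Flag a table whose latest load is older than the allowed delay."""
    return now - last_loaded_at > max_delay

# Hypothetical snapshot of a table's schema yesterday and today.
expected = {"order_id": "int", "amount": "decimal", "created_at": "timestamp"}
observed = {"order_id": "int", "amount": "varchar",
            "created_at": "timestamp", "coupon_code": "varchar"}

print(detect_schema_drift(expected, observed))
# {'added': ['coupon_code'], 'removed': [], 'retyped': ['amount']}

print(is_stale(datetime(2024, 1, 1, 6, 0), datetime(2024, 1, 1, 9, 30),
               timedelta(hours=2)))  # True: last load was 3.5 hours ago
```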

Intelligent Alerting and Noise Reduction

Because the system is constantly learning, it adapts to changes in business patterns. If a business enters a new growth phase where sales volume is expected to rise, a static rule would trigger false alarms, requiring human updates. An AI-driven system, however, recognizes this as a valid trend and adjusts its baseline accordingly. This reduces false positives significantly, which is vital for preventing alert fatigue among engineering teams. When engineers stop receiving hundreds of meaningless notifications, they are much more likely to pay attention to the few alerts that truly matter.
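
The growth-phase scenario can be sketched by comparing a fixed threshold with a baseline that keeps learning from a rolling window. The class name, window size, z-score threshold, and sales figures here are illustrative assumptions; real platforms use more sophisticated models, but the contrast is the same.

```python
from collections import deque
from statistics import mean, stdev

class RollingBaseline:
    """Keeps the last `window` values and scores new ones against them."""

    def __init__(self, window=7, z_threshold=3.0):
        self.values = deque(maxlen=window)
        self.z_threshold = z_threshold

    def check(self, value):
        """Return True if `value` is anomalous, then add it to the window."""
        anomalous = False
        if len(self.values) >= 3:  # wait for a little history first
            mu, sigma = mean(self.values), stdev(self.values)
            if sigma > 0:
                anomalous = abs(value - mu) / sigma > self.z_threshold
            else:
                anomalous = value != mu
        self.values.append(value)  # simplification: anomalies are learned too
        return anomalous

# Hypothetical daily sales during a steady growth phase.
sales = [100, 104, 109, 113, 118, 124, 129, 135, 141, 148]

STATIC_LIMIT = 120  # a rule written before the growth phase began
static_alerts = sum(1 for s in sales if s > STATIC_LIMIT)

baseline = RollingBaseline()
adaptive_alerts = sum(1 for s in sales if baseline.check(s))
outlier_flagged = baseline.check(400)  # a genuine spike after the growth run

print(static_alerts)    # 5: every day above the old limit raises an alarm
print(adaptive_alerts)  # 0: gradual growth stays within the moving baseline
print(outlier_flagged)  # True: a real outlier still stands out
```

Note that this sketch also folds anomalous values into the window, which would briefly distort the baseline after a real incident; a production system would likely handle that case more carefully.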

Additionally, AI systems can look across multiple variables simultaneously to find correlations that humans might overlook. If a sudden drop in revenue is accompanied by a subtle change in the timestamp format of an upstream feed, the AI can suggest that these events might be related. This holistic view of the data ecosystem allows for faster root cause analysis, saving teams hours of manual digging and debugging in complex environments.

Modular Platforms for Scalable Observability

One of the most significant barriers to adopting new data technologies is the fear of a high-friction implementation process. Many legacy tools require a massive, all-or-nothing commitment, forcing companies to ingest all their data into a proprietary cloud before they can see any value. However, modern platforms take a modular approach. This design allows teams to start small, perhaps by monitoring only one or two critical tables, and gradually expand the scope as they become more comfortable with the technology.

Furthermore, this modularity is incredibly important for budget control and ROI. Instead of paying for a blanket license that covers the entire enterprise, teams can pay based on the number of tables or modules they actively use. This pay-as-you-grow model makes it financially feasible for smaller teams to start improving their data quality without needing a huge capital expenditure. As the organization grows and the data infrastructure becomes more complex, the platform grows with it, ensuring that you are only paying for what you actually use.

Additionally, this approach empowers individual teams within a large company to own their data quality. Instead of waiting for a centralized IT department to configure a monolithic tool, a specific department can deploy modular observability to their own data warehouse. This decentralization promotes a culture of accountability where data producers take responsibility for the quality of the information they provide, leading to a more reliable data ecosystem throughout the entire organization.

Success Stories from Leading European Enterprises

The real-world impact of moving away from manual rules is best seen in the experiences of large-scale enterprises. For instance, organizations like ITSV, which manages complex data for social security and healthcare, have successfully transitioned to these new methods. By replacing static scripts with AI-based monitoring, they were able to reduce the time spent on manual data auditing while significantly increasing the accuracy of their reporting. This is a common theme among companies like A1 Telekom Austria and Adamed Pharma, who manage massive volumes of sensitive data that cannot afford downtime or inaccuracies.

Furthermore, these organizations found that the shift improved not just technical metrics but also human morale. Data engineers who were once trapped in a cycle of writing endless technical documentation and SQL scripts found themselves liberated to work on higher-level problems. They were able to focus on data modeling, architecture, and finding new ways to generate revenue from data, rather than being mere custodians of quality.

Ultimately, these stories demonstrate that the transition is not just about buying a new tool; it is about changing how teams collaborate. When the technical hurdles of data quality are solved by automation, the conversation between IT and the business side changes. They move from discussing "why is this dashboard broken" to "how can we use this data to improve our strategy." This change in the quality of conversation is perhaps the most valuable outcome for any data-driven company.

Roadmap to Rule-Free Data Quality Management

Embarking on the journey toward rule-free data quality management requires a strategic mindset. You do not need to delete every manual rule you have ever written on day one. Instead, start by identifying your most critical business processes: the reports that, if broken, cause the most pain. Implement AI-driven observability on those specific tables first. This allows you to gain confidence in the technology and demonstrate immediate value to your leadership team.

Furthermore, invest time in training your team to interpret the insights provided by the AI. While the system handles the heavy lifting of detection, your people still need to understand the business context. It is essential to treat the AI as an assistant that identifies potential issues, while your team decides on the appropriate corrective actions. This partnership ensures that you retain human oversight where it matters most, without the burden of manual checking where it is unnecessary.

Solutions like the ones provided by the team behind Digna are proving that we can reach a point where data infrastructure is self-monitoring and self-optimizing. The final step of this roadmap is to bake data quality into the development lifecycle, ensuring that observability is a default feature for every new data product. When you stop treating quality as an afterthought or a "maintenance task" and start treating it as a core component of your data architecture, you will find that your organization becomes more agile, more reliable, and ultimately more capable of delivering high-quality insights in a fast-paced market.