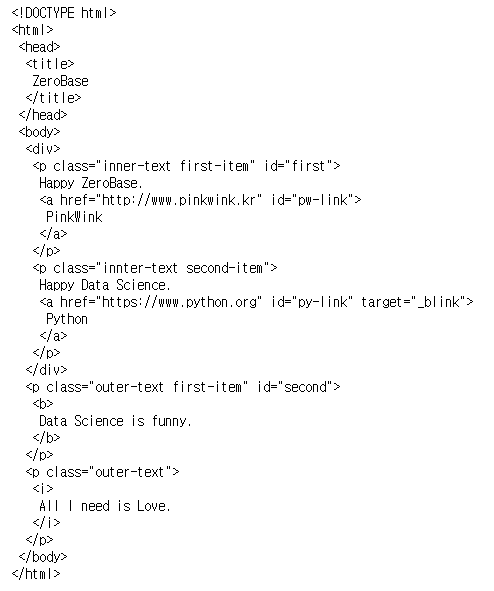

1. BeautifulSoup for web data

- install

- conda install -c anaconda beautifulsoup4

- pip install beautifulsoup4

- import

from bs4 import BeautifulSoup

- read

page = open("../data/03. zerobase.html", "r").read()

soup = BeautifulSoup(page, "html.parser")

print(soup.prettify())

# Check the head tag

soup.head

# Check the body tag

soup.body

# Check the p tag

# Only the first p tag found is returned

# find()

soup.p

soup.find("p")

soup.find("p", {"class":"inner-text first-item", "id":"first"}) # multiple conditions

# find_all(): returns multiple tags

# Returned as a list

soup.find_all("p")

# Look up specific tags

soup.find_all(id="pw-link")[0].text

soup.find_all("p", class_="inner-text second-item")

# Print only the values

print(soup.find_all("p")[0].text)

print(soup.find_all("p")[1].string)

print(soup.find_all("p")[1].get_text())

# Print only the text of each tag in the p tag list

for each_tag in soup.find_all("p"):

    print("=" * 50)

    print(each_tag.text)
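To try the tag lookups above without the course HTML file, here is a minimal sketch on an inline snippet. The markup, ids, and link targets are made up for illustration and only loosely mirror the file used above.

```python
from bs4 import BeautifulSoup

# Hypothetical markup standing in for the course HTML file.
html = """
<body>
  <p class="inner-text first-item" id="first">Happy Data Science.
    <a href="http://www.example.com/a" id="pw-link">Link A</a></p>
  <p class="inner-text second-item">Happy Coding.
    <a href="http://www.example.com/b" id="py-link">Link B</a></p>
  <p class="outer-text">Last paragraph</p>
</body>
"""
soup = BeautifulSoup(html, "html.parser")

first_p = soup.find("p")        # first match only (a single Tag)
all_p = soup.find_all("p")      # every match (list-like result)
second = soup.find_all("p", class_="inner-text second-item")
pw_text = soup.find(id="pw-link").get_text()

print(len(all_p))
print(first_p["id"])
print(pw_text)
print(all_p[2].string)
```

One detail worth knowing: `.string` returns None when a tag has more than one child (as the first p tag here does), while `.get_text()` concatenates all nested text.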

# Extract the value of the href attribute from a tags

links = soup.find_all("a")

links[0].get("href"), links[1]["href"]

for each in links:

    href = each.get("href") # each["href"]

    text = each.get_text()

    print(text + "=>" + href)

BeautifulSoup Example 1-2 - Naver Finance

- !pip install requests

- find, find_all

- select, select_one

- find, select_one : select a single element

- select, find_all : select multiple elements
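The single versus multiple selection pairs listed above can be compared side by side. This is a small sketch on made-up markup (the `menu` id and the links are hypothetical), not the Naver page itself.

```python
from bs4 import BeautifulSoup

# Hypothetical markup used only to compare the four lookup methods.
html = """
<ul id="menu">
  <li class="on"><a href="/a">First</a></li>
  <li><a href="/b">Second</a></li>
  <li class="on"><a href="/c">Third</a></li>
</ul>
"""
soup = BeautifulSoup(html, "html.parser")

# Single selection: both return the first matching Tag (or None).
one_find = soup.find("li", "on")
one_select = soup.select_one("#menu > li.on")

# Multiple selection: both return every matching Tag.
many_find = soup.find_all("li", "on")
many_select = soup.select("#menu > li.on")

print(one_find.get_text(), one_select.get_text())
print([li.a["href"] for li in many_select])
```

`find`/`find_all` take a tag name plus attribute filters, while `select`/`select_one` take a CSS selector string, so `#` marks an id and `.` marks a class.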

import requests

# alternative: from urllib.request import Request

from bs4 import BeautifulSoup

url = "https://finance.naver.com/marketindex/"

response = requests.get(url)

# requests.get(), requests.post()

# response.text

soup = BeautifulSoup(response.text, "html.parser")

print(soup.prettify())

# soup.find_all("li", "on")

# In a CSS selector, an id is prefixed with #

# and a class is prefixed with .

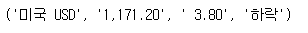

exchangeList = soup.select("#exchangeList > li")

len(exchangeList), exchangeList

title = exchangeList[0].select_one(".h_lst").text

exchange = exchangeList[0].select_one(".value").text

change = exchangeList[0].select_one(".change").text

updown = exchangeList[0].select_one(".head_info.point_dn > .blind").text # requires the point_dn class; select_one returns None when it is absent

# link

title, exchange, change, updown

baseUrl = "https://finance.naver.com"

baseUrl + exchangeList[0].select_one("a").get("href")

# Collect the data for all 4 items

import pandas as pd

exchange_datas = []

baseUrl = "https://finance.naver.com"

for item in exchangeList:

    data = {

        "title": item.select_one(".h_lst").text,

        "exchange": item.select_one(".value").text,

        "change": item.select_one(".change").text,

        # this selector requires the point_dn class on the item

        "updown": item.select_one(".head_info.point_dn > .blind").text,

        "link": baseUrl + item.select_one("a").get("href")

    }

    exchange_datas.append(data)

df = pd.DataFrame(exchange_datas)

df.to_excel("./naverfinance.xlsx")
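Because `select_one` returns None when nothing matches, calling `.text` directly on its result can raise an AttributeError for an item that lacks one of the expected classes. The sketch below shows one way to guard against that. The markup, the `point_up` class, the link paths, and the `text_or_none` helper are all assumptions made for illustration; the live page may be structured differently.

```python
from bs4 import BeautifulSoup

# Hypothetical markup; the second item has no .change element on purpose.
html = """
<ul id="exchangeList">
  <li><a href="/detail/usd"><h3 class="h_lst">USD</h3></a>
    <div class="head_info point_up">
      <span class="value">1,300.00</span>
      <span class="change">5.00</span>
      <span class="blind">up</span>
    </div></li>
  <li><a href="/detail/jpy"><h3 class="h_lst">JPY</h3></a>
    <div class="head_info point_dn">
      <span class="value">900.00</span>
      <span class="blind">down</span>
    </div></li>
</ul>
"""

def text_or_none(node, selector):
    """Return the stripped text of the first match, or None if nothing matches."""
    found = node.select_one(selector)
    return found.get_text(strip=True) if found is not None else None

soup = BeautifulSoup(html, "html.parser")
rows = []
for item in soup.select("#exchangeList > li"):
    rows.append({
        "title": text_or_none(item, ".h_lst"),
        "exchange": text_or_none(item, ".value"),
        "change": text_or_none(item, ".change"),
        # Matches the label whether the wrapper is point_up or point_dn.
        "updown": text_or_none(item, ".head_info > .blind"),
    })

for row in rows:
    print(row)
```

A list of dicts like `rows` can be passed straight to `pd.DataFrame`, with missing values showing up as None.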

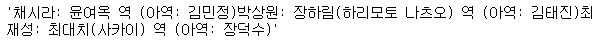

BeautifulSoup Example 2 - Fetching Wikipedia article information

import urllib

from urllib.request import urlopen, Request

html = "https://ko.wikipedia.org/wiki/{search_words}"

# https://ko.wikipedia.org/wiki/여명의_눈동자

req = Request(html.format(search_words=urllib.parse.quote("여명의_눈동자"))) # percent-encode the text for use in a URL

response = urlopen(req)

soup = BeautifulSoup(response, "html.parser")

print(soup.prettify())

n = 0

for each in soup.find_all("ul"):

    print("=>" + str(n) + "========================")

    print(each.get_text())

    n += 1

soup.find_all("ul")[15].text.strip().replace("\xa0", "").replace("\n", "")
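The percent-encoding step in the Wikipedia request above can be checked on its own, without any network access. A minimal sketch using only the standard library:

```python
from urllib.parse import quote, unquote

title = "여명의_눈동자"

# quote() encodes the title as UTF-8 bytes and writes each non-ASCII byte as %XX.
encoded = quote(title)
url = "https://ko.wikipedia.org/wiki/{search_words}".format(search_words=encoded)

print(url)
print(unquote(encoded) == title)  # decoding restores the original text
```

The underscore is left untouched because it is already safe in a URL; only the Korean characters are rewritten.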

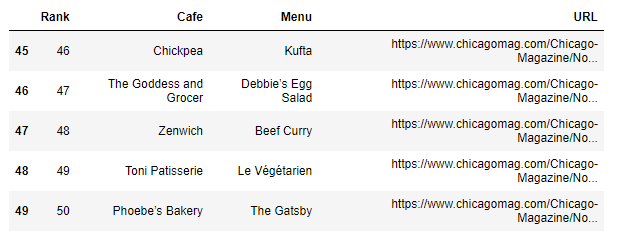

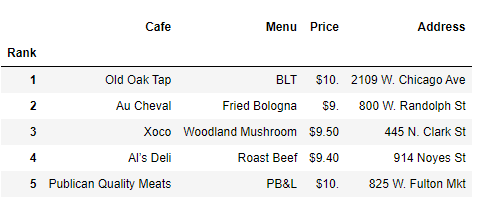

2. Chicago Restaurant Data Analysis - Overview

- https://www.chicagomag.com/Chicago-Magazine/November-2012/Best-Sandwiches-Chicago/

- chicago magazine the 50 best sandwiches

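Final goal

Fetch each shop's information from 51 pages in total

- Shop name

- Signature menu item

- Price of the signature menu item

- Shop address

3. Chicago Restaurant Data Analysis - Main page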

# !pip install fake-useragent

from urllib.request import Request, urlopen

from fake_useragent import UserAgent

from bs4 import BeautifulSoup

url_base = "https://www.chicagomag.com/"

url_sub = "Chicago-Magazine/November-2012/Best-Sandwiches-Chicago/"

url = url_base + url_sub

ua = UserAgent()

req = Request(url, headers={"user-agent": ua.ie})

html = urlopen(req)

soup = BeautifulSoup(html, "html.parser")

print(soup.prettify())
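The request above sends a browser-like User-Agent header generated by fake_useragent. As a sketch of what that header does on the `Request` object, here is the same construction with a fixed placeholder string instead (the agent value is an assumption, and nothing is sent over the network):

```python
from urllib.request import Request

url = "https://www.chicagomag.com/Chicago-Magazine/November-2012/Best-Sandwiches-Chicago/"
agent = "Mozilla/5.0 (compatible; example-scraper)"  # placeholder value

# Building the Request does not open a connection; urlopen(req) would.
req = Request(url, headers={"user-agent": agent})

print(req.full_url)
print(req.get_header("User-agent"))  # header names are stored capitalized
print(req.get_method())
```

`Request` stores header names in capitalized form, which is why the lookup uses `"User-agent"` even though the dict key was lowercase.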

soup.find_all("div", "sammy"), len(soup.find_all("div", "sammy"))

# soup.select(".sammy"), len(soup.select(".sammy"))

- Sample coding

tmp_one = soup.find_all("div", "sammy")[0]

type(tmp_one)

tmp_one.find(class_="sammyRank").get_text()

# tmp_one.select_one(".sammyRank").text

tmp_one.find("div", {"class":"sammyListing"}).get_text()

# tmp_one.select_one(".sammyListing").text

tmp_one.find("a")["href"]

# tmp_one.select_one("a").get("href")

import re

tmp_string = tmp_one.find(class_="sammyListing").get_text()

re.split(r"\r\n|\n", tmp_string)

print(re.split(r"\r\n|\n", tmp_string)[0]) # menu

print(re.split(r"\r\n|\n", tmp_string)[1]) # cafe

- List extraction

from urllib.parse import urljoin

url_base = "http://www.chicagomag.com"

# Empty lists to hold the values we need

# Build each column as a list, then combine them into a DataFrame

rank = []

main_menu = []

cafe_name = []

url_add = []

list_soup = soup.find_all("div", "sammy") # soup.select(".sammy")

for item in list_soup:

    rank.append(item.find(class_="sammyRank").get_text())

    tmp_string = item.find(class_="sammyListing").get_text()

    main_menu.append(re.split(r"\r\n|\n", tmp_string)[0])

    cafe_name.append(re.split(r"\r\n|\n", tmp_string)[1])

    url_add.append(urljoin(url_base, item.find("a")["href"]))

- Build the DataFrame

import pandas as pd

data = {

    "Rank": rank,

    "Menu": main_menu,

    "Cafe": cafe_name,

    "URL": url_add,

}

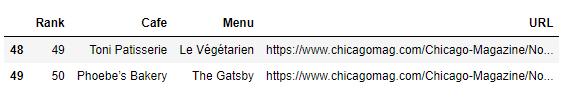

df = pd.DataFrame(data)

# Change the column order

df = pd.DataFrame(data, columns=["Rank", "Cafe", "Menu", "URL"])

df.tail()

# Save the data

df.to_csv(

    "../data/03. best_sandwiches_list_chicago.csv", sep=",", encoding="utf-8"

)

4. Chicago Restaurant Data Analysis - Subpages

# requirements

import pandas as pd

from urllib.request import urlopen, Request

from fake_useragent import UserAgent

from bs4 import BeautifulSoup

df = pd.read_csv("../data/03. best_sandwiches_list_chicago.csv", index_col=0)

df["URL"][0]

ua = UserAgent()

req = Request(df["URL"][0], headers={"user-agent":ua.ie})

html = urlopen(req).read()

soup_tmp = BeautifulSoup(html, "html.parser")

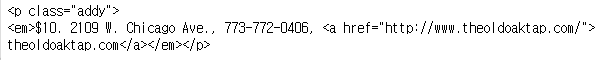

soup_tmp.find("p", "addy") # soup_tmp.select_one(".addy")

# regular expression

price_tmp = soup_tmp.find("p", "addy").text

price_tmp

import re

re.split(".,", price_tmp)

price_tmp = re.split(".,", price_tmp)[0]

price_tmp

tmp = re.search(r"\$\d+\.(\d+)?", price_tmp).group()

price_tmp[len(tmp) + 2:]
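The price and address extraction above can be wrapped in a small function and tested on a sample string. This is a sketch under assumptions: the sample texts only imitate the shape of the `addy` paragraph, and `parse_price_address` is a hypothetical helper. It uses the match end position instead of a fixed `+ 2` offset, which is a swapped-in variation on the slicing shown above.

```python
import re

PRICE_RE = re.compile(r"\$\d+\.(\d+)?")

def parse_price_address(raw):
    """Split an 'addy'-style string into (price, address)."""
    # Same split as above: cut at the first "any character followed by a comma".
    head = re.split(".,", raw)[0]
    match = PRICE_RE.search(head)
    if match is None:
        return None, head.strip()
    # Everything after the price, with surrounding whitespace removed.
    return match.group(), head[match.end():].strip()

# Hypothetical samples in the same shape as the scraped text.
sample = "\n$10. 2109 W. Chicago Ave., 773-772-0406, theoldoaktap.com"
multi = "\n$8. Multiple locations, example.com"

print(parse_price_address(sample))
print(parse_price_address(multi))
```

The second sample shows a side effect of the `".,"` pattern: the character before the first comma is consumed by the split, so "Multiple locations" comes out as "Multiple location".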

from tqdm import tqdm

price = []

address = []

for idx, row in tqdm(df.iterrows(), total=len(df)): # iterate over every row so the lists match the DataFrame length

    req = Request(row["URL"], headers={"user-agent":ua.ie})

    html = urlopen(req).read()

    soup_tmp = BeautifulSoup(html, "html.parser")

    gettings = soup_tmp.find("p", "addy").get_text()

    price_tmp = re.split(".,", gettings)[0]

    tmp = re.search(r"\$\d+\.(\d+)?", price_tmp).group()

    price.append(tmp)

    address.append(price_tmp[len(tmp)+2:])

    print(idx)

df.tail(2)

df["Price"] = price

df["Address"] = address

df = df.loc[:, ["Rank", "Cafe", "Menu", "Price", "Address"]]

df.set_index("Rank", inplace=True)

df.head()

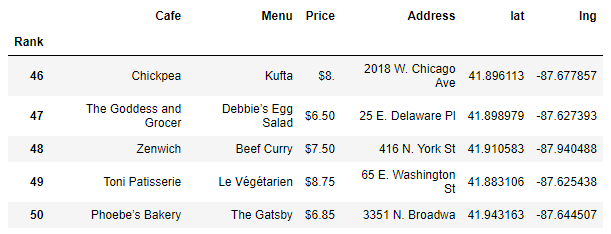

5. Chicago Restaurant Data - Map Visualization

# requirements

import folium

import pandas as pd

import numpy as np

import googlemaps

from tqdm import tqdm

df = pd.read_csv("../data/03. best_sandwiches_list_chicago2.csv", index_col=0)

df.tail(10)

gmaps_key = ""

gmaps = googlemaps.Client(key=gmaps_key)

lat = []

lng = []

for idx, row in tqdm(df.iterrows()):

    if row["Address"] != "Multiple location":

        target_name = row["Address"] + ", " + "Chicago"

        # print(target_name)

        gmaps_output = gmaps.geocode(target_name)

        location_output = gmaps_output[0].get("geometry")

        lat.append(location_output["location"]["lat"])

        lng.append(location_output["location"]["lng"])

        # location_output = gmaps_output[0]

    else:

        lat.append(np.nan)

        lng.append(np.nan)

df["lat"] = lat

df["lng"] = lng

df.tail()
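The geocoding loop above indexes `gmaps_output[0]` directly, which would fail if a lookup came back empty. A minimal sketch of a safer extraction step follows; `extract_lat_lng` is a hypothetical helper, and the response dict is a hand-written stand-in trimmed to the fields the loop reads, not real API output.

```python
import math

def extract_lat_lng(geocode_result):
    """Return (lat, lng) from a geocode-style result list, or (nan, nan) if empty."""
    if not geocode_result:
        return math.nan, math.nan
    location = geocode_result[0]["geometry"]["location"]
    return location["lat"], location["lng"]

# Hypothetical response, trimmed to the fields used here.
fake_result = [{"geometry": {"location": {"lat": 41.8956, "lng": -87.6796}}}]

print(extract_lat_lng(fake_result))
print(extract_lat_lng([]))
```

Returning NaN for a missing result keeps the `lat` and `lng` lists the same length as the DataFrame, which is the same convention the loop already uses for multiple-location rows.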

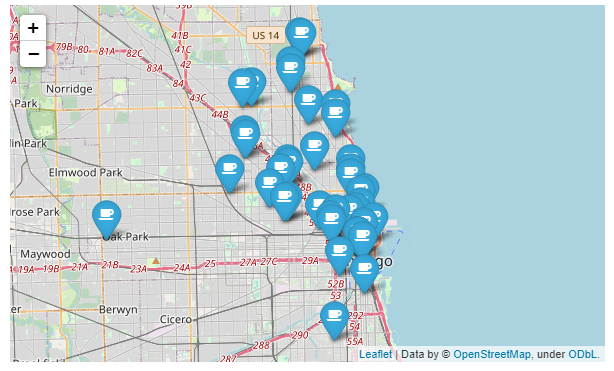

mapping = folium.Map(location=[41.8781136, -87.6297982], zoom_start=11)

for idx, row in df.iterrows():

    if not row["Address"] == "Multiple location":

        folium.Marker(

            location=[row["lat"], row["lng"]],

            popup=row["Cafe"],

            tooltip=row["Menu"],

            icon=folium.Icon(

                icon="coffee",

                prefix="fa"

            )

        ).add_to(mapping)

mapping
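The marker loop above decides which rows to skip by looking at the address text. An alternative, sketched here on plain dicts, is to check the coordinates themselves so that any row without a usable position is left off the map. The `rows_with_coordinates` helper and the sample rows are hypothetical.

```python
import math

def rows_with_coordinates(rows):
    """Keep only rows whose lat and lng are both present and not NaN."""
    kept = []
    for row in rows:
        lat, lng = row.get("lat"), row.get("lng")
        if lat is None or lng is None:
            continue
        if math.isnan(lat) or math.isnan(lng):
            continue
        kept.append(row)
    return kept

# Hypothetical rows in the same shape as the DataFrame columns used for markers.
sample = [
    {"Cafe": "Old Oak Tap", "Menu": "BLT", "lat": 41.8956, "lng": -87.6796},
    {"Cafe": "Chain Shop", "Menu": "Club", "lat": math.nan, "lng": math.nan},
]

mappable = rows_with_coordinates(sample)
for row in mappable:
    print(row["Cafe"], [row["lat"], row["lng"]])
```

With a DataFrame, the same filtering can be done before the loop with `df.dropna(subset=["lat", "lng"])`.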

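🎅 Today's takeaway

BeautifulSoup automates data collection. Having learned HTML turned out to be somewhat useful. Either way, I need to build the coding skills to understand a data structure and extract the data I want from it.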