state

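- stateful (keeps information) vs. stateless (does not keep information)

- By default, a web server does not keep any information about its clients.

- Sometimes it does, though: after a login, the server may hold a session, a token, and so on. Whether it does depends on how the developer wrote the web server code.

- This could go through a Service, but a Service adds one extra hop on the way, so the idea is to cut out that unnecessary step!?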

Stateless

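- Generally, identical servers with no division of roles between them

ex) web servers: any pod can stand in for any other!

Stateful

- Generally, servers of the same kind that each have their own role

ex) master and slave in a DB cluster: the pods cannot stand in for each other!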

Volumes for stateful apps

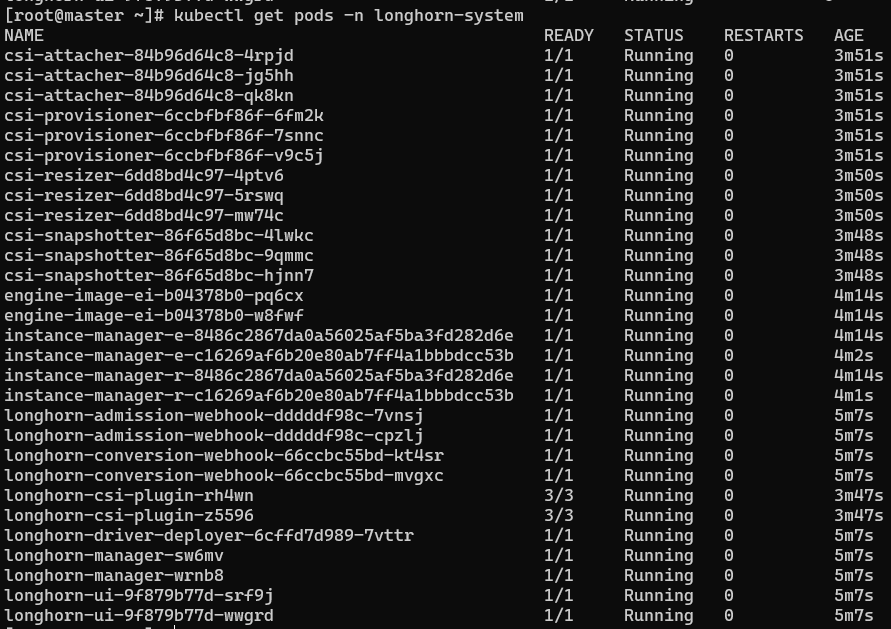

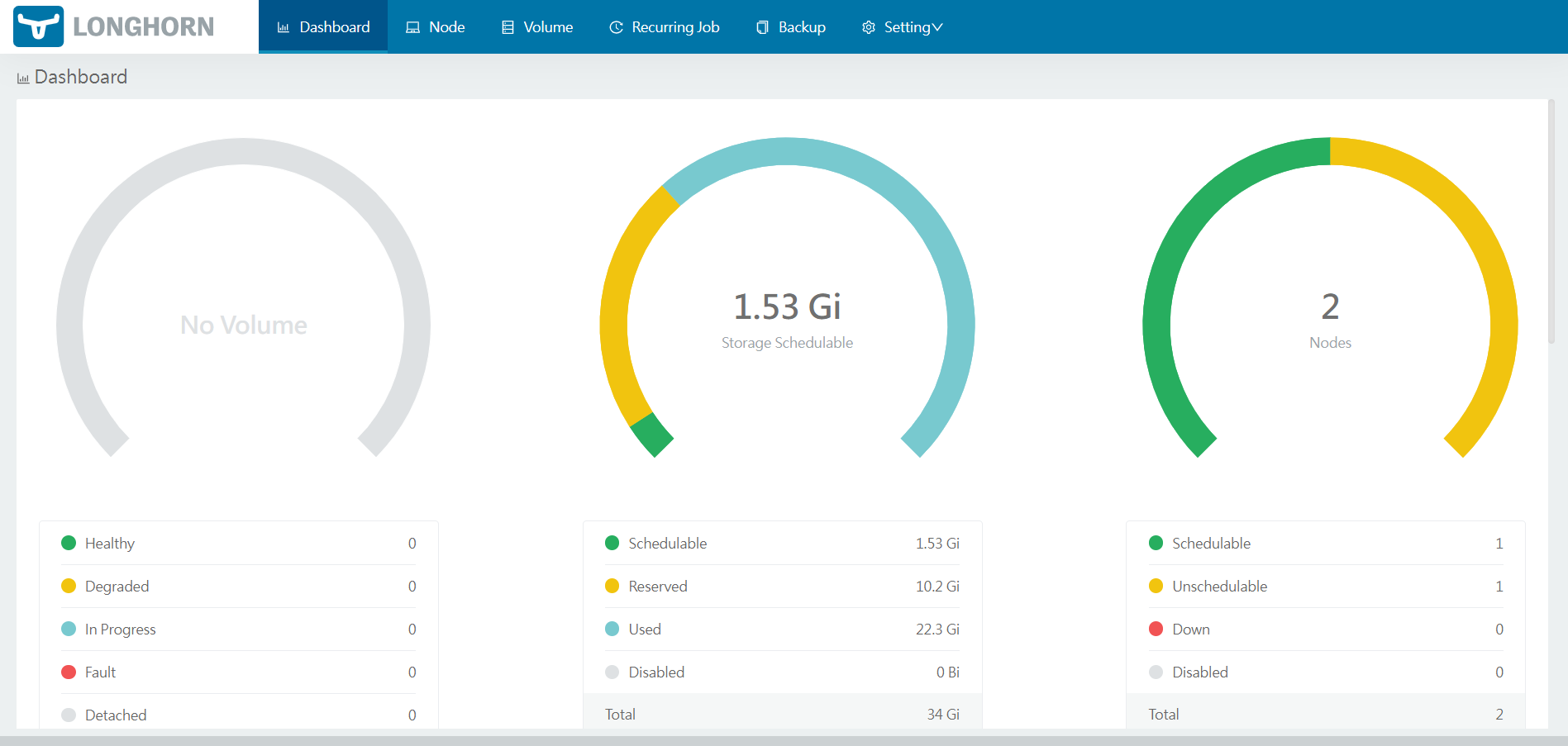

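Longhorn

- Acts as distributed volume storage for Kubernetes!

  Longhorn - Cloud native distributed block storage for Kubernetes

- It stores a volume's data spread across several nodes, as replicas.

- Once it is installed, you no longer have to create PVs by hand; they are provisioned when a PVC asks for one!

- It uses master, node-01 and node-02 to back those volumes automatically!

- (Aside: in the US, a supercomputer was once built out of PS3s.)

- The point is the same: distribute the work so that it runs fast!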

Install iSCSI (master, node-01, node-02)

    yum install -y iscsi-initiator-utils

Install Longhorn (master)

    kubectl apply -f https://raw.githubusercontent.com/kubetm/kubetm.github.io/master/yamls/longhorn/longhorn-1.3.3.yaml

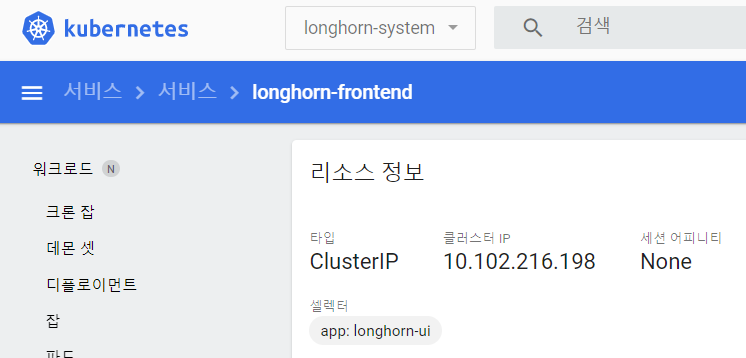

Longhorn dashboard setup

- The dashboard Service is currently a ClusterIP Service, so change it to NodePort to reach it from outside the cluster!!
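One way to make that switch from the command line is to patch the Service type. This is only a sketch: it assumes the dashboard Service is named `longhorn-frontend` in the `longhorn-system` namespace, which is what the upstream Longhorn manifest uses. The manifest installed above may differ, so check with `kubectl -n longhorn-system get svc` first. The script prints the command and only runs it when `APPLY=1` is set (a hypothetical switch added for this example).

```shell
# Assumed names (not confirmed by these notes): upstream Longhorn calls the
# dashboard Service "longhorn-frontend" in namespace "longhorn-system".
NS=longhorn-system
SVC=longhorn-frontend
PATCH='{"spec":{"type":"NodePort"}}'

# Show the command so it can be reviewed before anything is changed.
echo "kubectl -n $NS patch svc $SVC -p '$PATCH'"

# Only touch the cluster when explicitly asked to (APPLY=1).
if [ "${APPLY:-0}" = "1" ]; then
  kubectl -n "$NS" patch svc "$SVC" -p "$PATCH"
  # The assigned node port shows up in the PORT(S) column.
  kubectl -n "$NS" get svc "$SVC"
fi
```

After the patch, the dashboard should be reachable on any node's IP at the node port shown by `kubectl get svc`.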

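Create a StorageClass

    apiVersion: storage.k8s.io/v1
    kind: StorageClass
    metadata:
      name: fast
      namespace: ljh-dev
    provisioner: driver.longhorn.io
    parameters:
      dataLocality: disabled
      fromBackup: ""
      fsType: ext4
      numberOfReplicas: "3"
      staleReplicaTimeout: "30"

- fsType: ext4 is the filesystem the volume gets formatted with. ext4 has been used on Linux for a long time (and still is).

- numberOfReplicas: "3" is the number of replicas!

- A StorageClass is a cluster-scoped resource, so the namespace field under metadata has no effect here.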

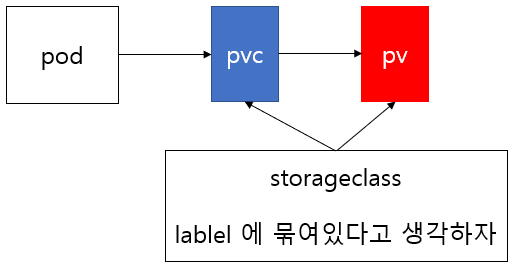

Using the StorageClass!

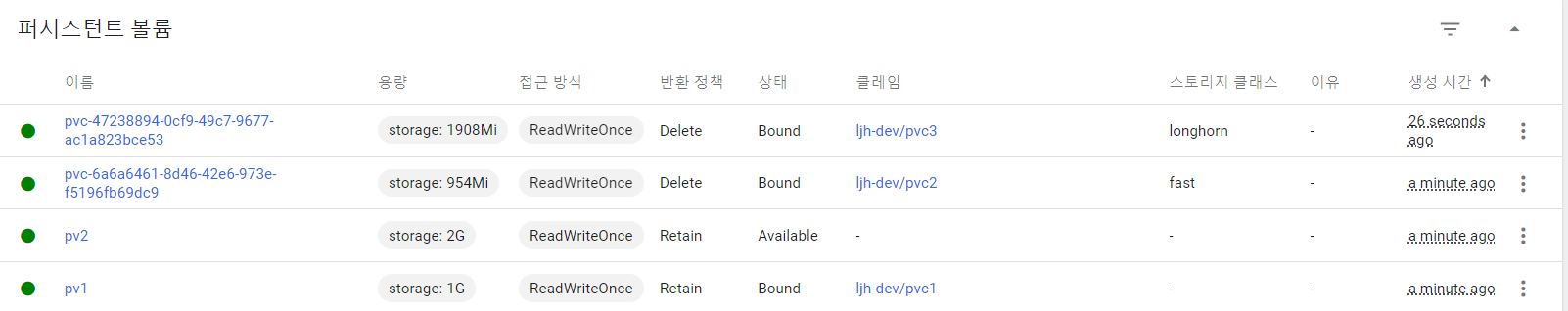

Create two PVs up front.

    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv1
    spec:
      capacity:
        storage: 1G
      accessModes:
      - ReadWriteOnce
      hostPath:
        path: /hostpath
        type: DirectoryOrCreate
    ---
    apiVersion: v1
    kind: PersistentVolume
    metadata:
      name: pv2
    spec:
      capacity:
        storage: 2G
      accessModes:
      - ReadWriteOnce
      hostPath:
        path: /hostpath
        type: DirectoryOrCreate

- First create the two PVs, then set up the PVCs.

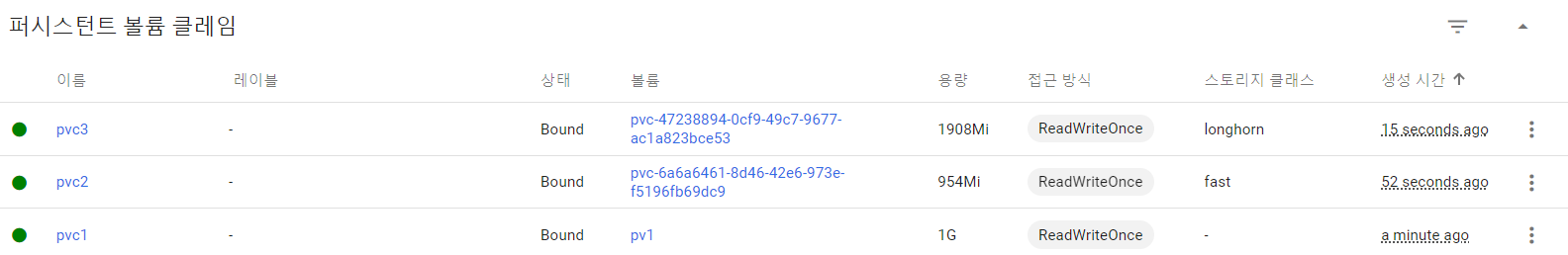

- When creating a PVC, the volume it binds to depends on the storageClassName setting.

With storageClassName: "" (explicitly no class):

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: pvc1
    spec:
      accessModes:
      - ReadWriteOnce
      resources:
        requests:
          storage: 1G
      storageClassName: ""

- It binds to the 1G PV that was created earlier.

With a class name given:

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: pvc2
    spec:
      accessModes:
      - ReadWriteOnce
      resources:
        requests:
          storage: 1G
      storageClassName: "fast"

- It is served by the fast StorageClass that was created with Longhorn.

With the setting left out entirely:

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: pvc3
    spec:
      accessModes:
      - ReadWriteOnce
      resources:
        requests:
          storage: 2G

- Because Longhorn is installed and its longhorn StorageClass acts as the default, a new PV is created under that class and bound, instead of the 2G PV made earlier.

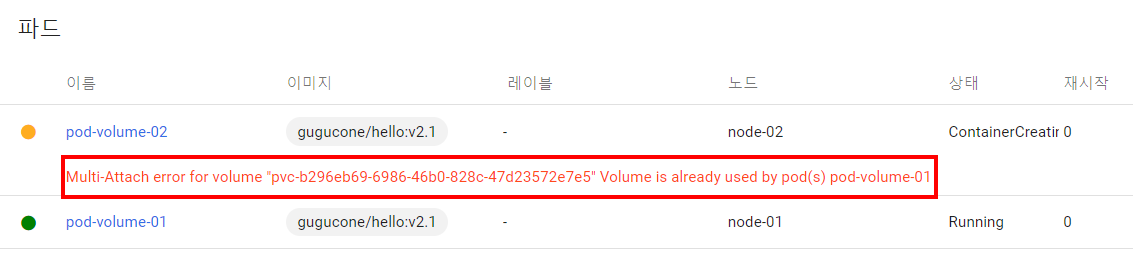
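The three PVC cases above can be summarized as a small decision rule. The function below is a simplified model written for these notes, not how Kubernetes implements binding: `pvc_binding` is a hypothetical helper, and the "field omitted" branch assumes the cluster has a default StorageClass (as it does here after installing Longhorn).

```shell
# pvc_binding [STORAGE_CLASS_NAME]
# Describe how a PVC gets its volume, based on storageClassName.
# Call it with no argument to model "field left out of the manifest".
pvc_binding() {
  if [ "$#" -eq 0 ]; then
    echo "default StorageClass provisions a new PV"
  elif [ -z "$1" ]; then
    echo "binds to an existing PV that has no class"
  else
    echo "StorageClass '$1' provisions a new PV"
  fi
}

pvc_binding ""     # pvc1: storageClassName: ""
pvc_binding fast   # pvc2: storageClassName: "fast"
pvc_binding        # pvc3: field omitted
```

The key point is that an empty string and a missing field are not the same thing: the first opts out of dynamic provisioning, the second falls back to the default class.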

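PVC access modes

    apiVersion: v1
    kind: PersistentVolumeClaim
    metadata:
      name: pvc3
    spec:
      accessModes:
      - ReadWriteOnce
      resources:
        requests:
          storage: 2G

- Which nodes can use the volume depends on accessModes.

  The three modes covered here are RWO (ReadWriteOnce), ROX (ReadOnlyMany), and RWX (ReadWriteMany).

- With RWO, the volume can only be used on the node where it was mounted when the first pod was created!

- So, as shown below, the first mount happened on node-01, which means any other pod using the same PVC has to run on that same (worker) node.

- This one is RWO mode (the pods must be on the same node!)

- This one is RWX mode (the pods can be on different nodes!)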
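The node rule for the access modes can be written down as a tiny check. This is a simplified model of the node-level restriction only, with a hypothetical helper name (`can_mount`); it ignores read-only versus read-write and everything the scheduler and CSI driver actually do.

```shell
# can_mount MODE FIRST_NODE NEW_NODE
# Succeeds (exit 0) when a pod on NEW_NODE may use a volume that is already
# mounted on FIRST_NODE.
can_mount() {
  case "$1" in
    ReadWriteOnce) [ "$2" = "$3" ] ;;           # same node only
    ReadOnlyMany|ReadWriteMany) return 0 ;;     # any node
    *) echo "unknown access mode: $1" >&2; return 2 ;;
  esac
}

can_mount ReadWriteOnce node-01 node-01 && echo "RWO, same node: ok"
can_mount ReadWriteOnce node-01 node-02 || echo "RWO, other node: refused"
can_mount ReadWriteMany node-01 node-02 && echo "RWX, other node: ok"
```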

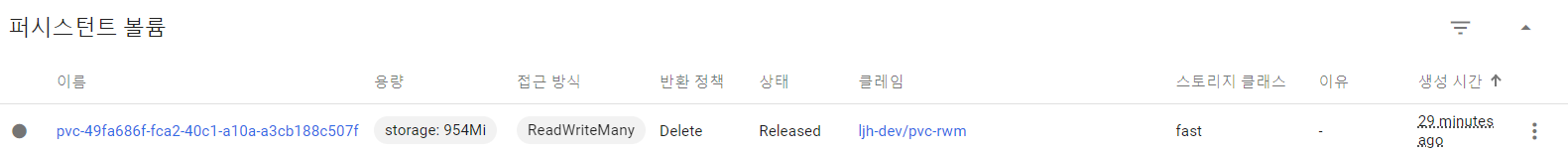

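- Create a PVC and mount it, and the PV is created automatically like this!

App services for stateful workloads

- The goal is for even the PVC to be created automatically, so that creating the pod alone is enough for everything to be automated.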

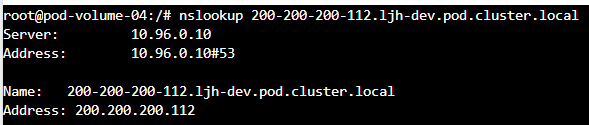

FQDN(Fully Qualified Domain Name)

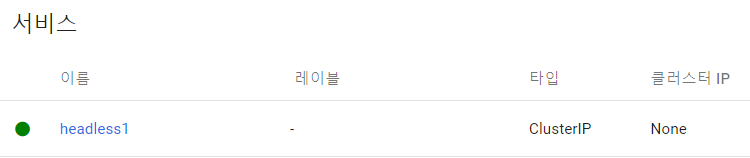

headless

Let's make it possible to connect to a pod by its name!

    apiVersion: v1
    kind: Service
    metadata:
      name: headless1
    spec:
      selector:
        svc: headless
      ports:
      - port: 8000
        targetPort: 8080
      clusterIP: None

- When creating the Service, set clusterIP: None to declare that it gets no cluster IP. Once the Service's selector picks up the pods by their label, you can talk to each pod by its hostname.

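    apiVersion: v1
    kind: Pod
    metadata:
      name: pod1
      labels:
        svc: headless
    spec:
      hostname: pod-a
      subdomain: headless1
      containers:
      - name: container
        image: gugucone/hello:v2.1

- Set the hostname, set the subdomain to the Service name, set the pod label, and create two pods like this.

- Check that they are grouped under the headless1 Service!

- Let's check from pod1!

First install nslookup (it comes with dnsutils), then look up the names.

    apt update
    apt install dnsutils
    nslookup [service name]
    nslookup [hostname].[service name]

- Checking in this order shows that the pods can be reached by hostname rather than by IP.

- The reason for doing this is groundwork: it lets programs that have roles (stateful ones, such as DB servers) talk to each other by name.

If a DB master or slave dies and comes back, its IP changes, so things are set up in advance to work by name instead!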
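The name that `nslookup [hostname].[service name]` resolves is the short form of the pod's FQDN. As a sketch, the full name can be assembled like this; `pod_fqdn` is a hypothetical helper, and the namespace `default` and cluster domain `cluster.local` are assumptions (they are common defaults, but these notes do not state them).

```shell
# pod_fqdn HOSTNAME SUBDOMAIN [NAMESPACE] [CLUSTER_DOMAIN]
# Build the DNS name a pod gets behind a headless Service.
# SUBDOMAIN must be the same as the headless Service name.
pod_fqdn() {
  echo "$1.$2.${3:-default}.svc.${4:-cluster.local}"
}

pod_fqdn pod-a headless1
# prints: pod-a.headless1.default.svc.cluster.local
```

Inside the same namespace the short form `pod-a.headless1` is enough, which is what the nslookup step above uses.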

statefulset

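ReplicaSet vs. StatefulSet!

- ReplicaSet pod names are generated randomly and have no order. StatefulSet pod names get an index number and have an order. (With fixed names we can communicate by name, because the name does not change even when a pod dies and comes back!)

- When a ReplicaSet pod is deleted and recreated, most of its identity is new. With a StatefulSet it is kept. (The name is kept, and the hostname is what we communicate through!)

- With a ReplicaSet, the PVC has to exist before a volume can be attached through it. A StatefulSet can create PVCs dynamically. (Here the PVC is not created by hand; it is generated automatically!!)

- ReplicaSet pods come up all at once and are deleted all at once. StatefulSet pods are created one at a time in index order, and deleted one at a time in reverse index order.

- ReplicaSet is mostly used for stateless apps, StatefulSet for stateful ones!

- There are more differences besides these!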

    apiVersion: apps/v1
    kind: StatefulSet
    metadata:
      name: statefulset-test2
    spec:
      replicas: 1
      selector:
        matchLabels:
          type: db
      template:
        metadata:
          labels:
            type: db
        spec:
          containers:
          - name: container
            image: gugucone/hello:2.1
            volumeMounts:
            - name: volume
              mountPath: /mount1
          terminationGracePeriodSeconds: 10
      volumeClaimTemplates:
      - metadata:
          name: volume
        spec:
          accessModes:
          - ReadWriteMany
          resources:
            requests:
              storage: 1G
          storageClassName: "fast"

- When you create a StatefulSet, giving the PVC access mode and the StorageClass in volumeClaimTemplates makes it create the PVC and PV automatically!

- This is one of the controllers too. If you scale it up by one here,

- you can see the pods being created in index order.
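To make the naming concrete, the sketch below lists the pod and PVC names a StatefulSet produces for a given replica count. Pods are named `<statefulset>-<ordinal>` and PVCs `<claim template>-<statefulset>-<ordinal>`; `sts_names` itself is a hypothetical helper that only prints names and does not talk to a cluster.

```shell
# sts_names STATEFULSET CLAIM_TEMPLATE REPLICAS
# Print "pod-name pvc-name" for each ordinal, in creation order.
sts_names() {
  i=0
  while [ "$i" -lt "$3" ]; do
    echo "$1-$i $2-$1-$i"
    i=$((i + 1))
  done
}

sts_names statefulset-test2 volume 2
# prints:
# statefulset-test2-0 volume-statefulset-test2-0
# statefulset-test2-1 volume-statefulset-test2-1
```

On a real cluster the scale-up itself would be `kubectl scale statefulset statefulset-test2 --replicas=2`, and `kubectl get pods,pvc` should then show names following this pattern.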

Run a Replicated Stateful Application

ConfigMap

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: mysql
      labels:
        app: mysql
        app.kubernetes.io/name: mysql
    data:
      primary.cnf: |
        # Apply this config only on the primary.
        [mysqld]
        log-bin
      replica.cnf: |
        # Apply this config only on replicas.
        [mysqld]
        super-read-only

- The master and the slaves each get a different config file.

Service

    # Headless service for stable DNS entries of StatefulSet members.
    apiVersion: v1
    kind: Service
    metadata:
      name: mysql
      labels:
        app: mysql
        app.kubernetes.io/name: mysql
    spec:
      ports:
      - name: mysql
        port: 3306
      clusterIP: None
      selector:
        app: mysql
    ---
    # Client service for connecting to any MySQL instance for reads.
    # For writes, you must instead connect to the primary: mysql-0.mysql.
    apiVersion: v1
    kind: Service
    metadata:
      name: mysql-read
      labels:
        app: mysql
        app.kubernetes.io/name: mysql
        readonly: "true"
    spec:
      ports:
      - name: mysql
        port: 3306
      selector:
        app: mysql

- The first one is the headless Service, so each pod can be found by its name! The second is a Service for reading data from any instance.

StatefulSet

    apiVersion: apps/v1
    kind: StatefulSet
    metadata:
      name: mysql
    spec:
      selector:
        matchLabels:
          app: mysql
          app.kubernetes.io/name: mysql
      serviceName: mysql
      replicas: 3
      template:
        metadata:
          labels:
            app: mysql
            app.kubernetes.io/name: mysql
        spec:
          initContainers:
          # Init containers!
          - name: init-mysql
            image: mysql:5.7
            command:
            - bash
            - "-c"
            - |
              set -ex
              # Generate mysql server-id from pod ordinal index.
              [[ $HOSTNAME =~ -([0-9]+)$ ]] || exit 1
              ordinal=${BASH_REMATCH[1]}
              echo [mysqld] > /mnt/conf.d/server-id.cnf
              # Add an offset to avoid reserved server-id=0 value.
              echo server-id=$((100 + $ordinal)) >> /mnt/conf.d/server-id.cnf
              # Copy appropriate conf.d files from config-map to emptyDir.
              if [[ $ordinal -eq 0 ]]; then
                cp /mnt/config-map/primary.cnf /mnt/conf.d/
              else
                cp /mnt/config-map/replica.cnf /mnt/conf.d/
              fi
            # The ConfigMap is exposed as a volume and used here!
            volumeMounts:
            - name: conf
              mountPath: /mnt/conf.d
            - name: config-map
              mountPath: /mnt/config-map
              # A ConfigMap can also be turned into a volume and mounted!
          - name: clone-mysql # looks like a backup step: it clones data from the previous peer
            image: gcr.io/google-samples/xtrabackup:1.0
            command:
            - bash
            - "-c"
            - |
              set -ex
              # Skip the clone if data already exists.
              [[ -d /var/lib/mysql/mysql ]] && exit 0
              # Skip the clone on primary (ordinal index 0).
              [[ `hostname` =~ -([0-9]+)$ ]] || exit 1
              ordinal=${BASH_REMATCH[1]}
              [[ $ordinal -eq 0 ]] && exit 0
              # Clone data from previous peer.
              ncat --recv-only mysql-$(($ordinal-1)).mysql 3307 | xbstream -x -C /var/lib/mysql
              # Prepare the backup.
              xtrabackup --prepare --target-dir=/var/lib/mysql
            volumeMounts:
            - name: data
              mountPath: /var/lib/mysql
              subPath: mysql
            - name: conf
              mountPath: /etc/mysql/conf.d
          containers:
          - name: mysql
            image: mysql:5.7
            env:
            - name: MYSQL_ALLOW_EMPTY_PASSWORD
              # No password
              value: "1"
            ports:
            - name: mysql
              containerPort: 3306
            volumeMounts:
            - name: data
              mountPath: /var/lib/mysql
              subPath: mysql
            - name: conf
              mountPath: /etc/mysql/conf.d
            resources:
              requests:
                cpu: 500m
                memory: 1Gi
            livenessProbe:
              # Probe! A scout! It checks whether the container itself is still alive;
              # if the check keeps failing, the container is restarted.
              exec:
                command: ["mysqladmin", "ping"]
              initialDelaySeconds: 30
              periodSeconds: 10
              timeoutSeconds: 5
            readinessProbe:
              # This one checks the program inside the pod: is it ready to serve or not?
              # A running pod does not mean the program is up yet.
              # For example, mysql takes a while to start; clients connecting right away
              # would fail, so traffic is only sent once this check passes.
              exec:
                # Check we can execute queries over TCP (skip-networking is off).
                command: ["mysql", "-h", "127.0.0.1", "-e", "SELECT 1"]
                # Once mysqld is up, this command succeeds!
              initialDelaySeconds: 5
              periodSeconds: 2
              timeoutSeconds: 1
          - name: xtrabackup
            image: gcr.io/google-samples/xtrabackup:1.0
            ports:
            - name: xtrabackup
              containerPort: 3307
            command:
            - bash
            - "-c"
            - |
              set -ex
              cd /var/lib/mysql
              # Determine binlog position of cloned data, if any.
              if [[ -f xtrabackup_slave_info && "x$(<xtrabackup_slave_info)" != "x" ]]; then
                # XtraBackup already generated a partial "CHANGE MASTER TO" query
                # because we're cloning from an existing replica. (Need to remove the trailing semicolon!)
                cat xtrabackup_slave_info | sed -E 's/;$//g' > change_master_to.sql.in
                # Ignore xtrabackup_binlog_info in this case (it's useless).
                rm -f xtrabackup_slave_info xtrabackup_binlog_info
              elif [[ -f xtrabackup_binlog_info ]]; then
                # We're cloning directly from primary. Parse binlog position.
                [[ `cat xtrabackup_binlog_info` =~ ^(.*?)[[:space:]]+(.*?)$ ]] || exit 1
                rm -f xtrabackup_binlog_info xtrabackup_slave_info
                echo "CHANGE MASTER TO MASTER_LOG_FILE='${BASH_REMATCH[1]}',\
                      MASTER_LOG_POS=${BASH_REMATCH[2]}" > change_master_to.sql.in
              fi
              # Check if we need to complete a clone by starting replication.
              if [[ -f change_master_to.sql.in ]]; then
                echo "Waiting for mysqld to be ready (accepting connections)"
                until mysql -h 127.0.0.1 -e "SELECT 1"; do sleep 1; done
                echo "Initializing replication from clone position"
                mysql -h 127.0.0.1 \
                      -e "$(<change_master_to.sql.in), \
                              MASTER_HOST='mysql-0.mysql', \
                              MASTER_USER='root', \
                              MASTER_PASSWORD='', \
                              MASTER_CONNECT_RETRY=10; \
                            START SLAVE;" || exit 1
                # In case of container restart, attempt this at-most-once.
                mv change_master_to.sql.in change_master_to.sql.orig
              fi
              # Start a server to send backups when requested by peers.
              exec ncat --listen --keep-open --send-only --max-conns=1 3307 -c \
                "xtrabackup --backup --slave-info --stream=xbstream --host=127.0.0.1 --user=root"
            volumeMounts:
            - name: data
              mountPath: /var/lib/mysql
              subPath: mysql
            - name: conf
              mountPath: /etc/mysql/conf.d
            resources:
              requests:
                cpu: 100m
                memory: 100Mi
          volumes:
          - name: conf
            emptyDir: {}
          - name: config-map
            configMap:
              name: mysql
      volumeClaimTemplates:
      # This needs dynamic provisioning; we installed Longhorn, so that is covered.
      - metadata:
          name: data
        spec:
          accessModes: ["ReadWriteOnce"]
          resources:
            requests:
              storage: 10Gi

- Read the comments carefully!

- The reason for using a StatefulSet is that the init container can tell master and slaves apart by pod name! StatefulSet pods are numbered 0, 1, 2, 3, 4, ... so index 0 is used as the master and every other index as a slave!

The relevant part of init-mysql:

    if [[ $ordinal -eq 0 ]]; then
      cp /mnt/config-map/primary.cnf /mnt/conf.d/
    else
      cp /mnt/config-map/replica.cnf /mnt/conf.d/
    fi
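The ordinal logic can be tried out without a cluster. The sketch below mirrors what init-mysql does (take the number after the last `-` in the hostname, pick the config file, add 100 for the server-id), but it is rewritten as plain functions that only print the result. The helper names `ordinal_of` and `mysql_role` are made up for this example.

```shell
# ordinal_of HOSTNAME: print the trailing index, or fail if there is none.
ordinal_of() {
  n=${1##*-}                       # text after the last "-"
  case "$n" in
    ''|*[!0-9]*) return 1 ;;       # empty or not purely digits
  esac
  [ "$n" != "$1" ] || return 1     # the name had no "-" at all
  echo "$n"
}

# mysql_role HOSTNAME: print "<config file> server-id=<id>" for that pod.
mysql_role() {
  ord=$(ordinal_of "$1") || return 1
  if [ "$ord" -eq 0 ]; then cnf=primary.cnf; else cnf=replica.cnf; fi
  echo "$cnf server-id=$((100 + ord))"
}

mysql_role mysql-0   # prints: primary.cnf server-id=100
mysql_role mysql-2   # prints: replica.cnf server-id=102
```

Because the StatefulSet keeps each pod's name across restarts, the same pod always lands on the same role and server-id.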