HPA

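Kubernetes provides HPA (Horizontal Pod Autoscaler), a feature that dynamically adjusts the number of pods in a deployment according to load.

Setup

1. Create a deployment named hpa-hname-pods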

[root@m-k8s 3.3.4]# kubectl create deployment hpa-hname-pods --image=sysnet4admin/echo-hname

deployment.apps/hpa-hname-pods created

2. Use expose to publish hpa-hname-pods as a LoadBalancer service

[root@m-k8s 3.3.4]# kubectl expose deployment hpa-hname-pods --type=LoadBalancer --name=hpa-name-svc --port=80

service/hpa-name-svc exposed

3. Check the LoadBalancer service and the IP assigned to it

[root@m-k8s 3.3.4]# kubectl get service

NAME           TYPE           CLUSTER-IP   EXTERNAL-IP   PORT(S)        AGE
kubernetes     ClusterIP      X.X.X.X      <none>        443/TCP        23d
lb-hname-svc   LoadBalancer   X.X.X.X      X.X.X.X       80:31360/TCP   28s
lb-ip-svc      LoadBalancer   X.X.X.X      X.X.X.X       80:32220/TCP   13s

4. Check the pod load

[root@m-k8s 3.3.5]# kubectl top pods

Error from server(NotFound)

The error occurs because HPA obtains resource measurements through the metrics server, and no metrics server is installed yet. A metrics server therefore has to be set up first.

5. Create the metrics server

[root@m-k8s 3.3.5]# kubectl create -f metrics-server.yaml

- Reference

metrics-server.yaml

#Main_Source_From:
# - https://github.com/kubernetes-sigs/metrics-server
#aggregated-metrics-reader.yaml
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: system:aggregated-metrics-reader
  labels:
    rbac.authorization.k8s.io/aggregate-to-view: "true"
    rbac.authorization.k8s.io/aggregate-to-edit: "true"
    rbac.authorization.k8s.io/aggregate-to-admin: "true"
rules:
- apiGroups: ["metrics.k8s.io"]
  resources: ["pods", "nodes"]
  verbs: ["get", "list", "watch"]
#auth-delegator.yaml
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: metrics-server:system:auth-delegator
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:auth-delegator
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
#auth-reader.yaml
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: metrics-server-auth-reader
  namespace: kube-system
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: extension-apiserver-authentication-reader
subjects:
- kind: ServiceAccount
  name: metrics-server
  namespace: kube-system
#metrics-apiservice.yaml
---
apiVersion: apiregistration.k8s.io/v1beta1
kind: APIService
metadata:
  name: v1beta1.metrics.k8s.io
spec:
  service:
    name: metrics-server
    namespace: kube-system
  group: metrics.k8s.io
  version: v1beta1
  insecureSkipTLSVerify: true
  groupPriorityMinimum: 100
  versionPriority: 100
#metrics-server-deployment.yaml
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: metrics-server
  namespace: kube-system
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: metrics-server
  namespace: kube-system
  labels:
    k8s-app: metrics-server
spec:
  selector:
    matchLabels:
      k8s-app: metrics-server
  template:
    metadata:
      name: metrics-server
      labels:
        k8s-app: metrics-server
    spec:
      serviceAccountName: metrics-server
      volumes:
      # mount in tmp so we can safely use from-scratch images and/or read-only containers
      - name: tmp-dir
        emptyDir: {}
      containers:
      - name: metrics-server
        image: k8s.gcr.io/metrics-server-amd64:v0.3.6
        args:
          # Manually Add for lab env(Sysnet4admin/k8s)
          # skip tls internal usage purpose
          - --kubelet-insecure-tls
          # kubelet could use internalIP communication
          - --kubelet-preferred-address-types=InternalIP
          - --cert-dir=/tmp
          - --secure-port=4443
        ports:
        - name: main-port
          containerPort: 4443
          protocol: TCP
        securityContext:
          readOnlyRootFilesystem: true
          runAsNonRoot: true
          runAsUser: 1000
        imagePullPolicy: Always
        volumeMounts:
        - name: tmp-dir
          mountPath: /tmp
      nodeSelector:
        beta.kubernetes.io/os: linux
        kubernetes.io/arch: "amd64"
#metrics-server-service.yaml
---
apiVersion: v1
kind: Service
metadata:
  name: metrics-server
  namespace: kube-system
  labels:
    kubernetes.io/name: "Metrics-server"
    kubernetes.io/cluster-service: "true"
spec:
  selector:
    k8s-app: metrics-server
  ports:
  - port: 443
    protocol: TCP
    targetPort: main-port
#resource-reader.yaml
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: system:metrics-server
rules:
- apiGroups:
  - ""
  resources:
  - pods
  - nodes
  - nodes/stats
  - namespaces
  - configmaps
  verbs:
  - get
  - list
  - watch
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: system:metrics-server
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:metrics-server
subjects:
- kind: ServiceAccount
  name: metrics-server

  namespace: kube-system

6. Check the top values

[root@m-k8s 3.3.5]# kubectl top pods

NAME                              CPU(cores)   MEMORY(bytes)
hpa-hname-pods-696b8fcc99-64k6s   0m           2Mi

7. Use the edit command to set CPU resources so that the deployment scales out before the load becomes heavy

[root@m-k8s 3.3.5]# kubectl edit deployment hpa-hname-pods

[omitted]

    spec:
      containers:
      - image: sysnet4admin/echo-hname
        imagePullPolicy: Always
        name: echo-hname
        ## Change this part (CPU limit: 50m; CPU request: 10m, the load a single pod is expected to handle)
        resources:
          limits:
            cpu: 50m
          requests:
            cpu: 10m

[omitted]

After making the change, save with :wq, just as in vi (vim). After a short while, a new pod is created with the updated spec.
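HPA measures CPU utilization as a percentage of the pod's CPU request, not of its limit, so the 10m request set above is what a later percentage target is compared against. The following is a minimal sketch of that arithmetic; the function names `parse_cpu_millicores` and `cpu_utilization_percent` are hypothetical helpers introduced here, and only the plain `m` suffix and decimal core forms of CPU quantities are handled.

```python
def parse_cpu_millicores(quantity: str) -> int:
    """Convert a CPU quantity such as '10m' or '0.5' to millicores.

    Hypothetical helper: handles only the 'm' suffix and plain core counts.
    """
    if quantity.endswith("m"):
        return int(quantity[:-1])
    return round(float(quantity) * 1000)


def cpu_utilization_percent(usage: str, request: str) -> float:
    """Return CPU usage as a percentage of the requested CPU."""
    usage_m = parse_cpu_millicores(usage)
    request_m = parse_cpu_millicores(request)
    # Multiply before dividing to avoid avoidable floating-point error.
    return usage_m * 100 / request_m


# With a 10m request, 5m of actual usage is already 50% utilization.
print(cpu_utilization_percent("5m", "10m"))
```

Under these assumptions, a pod with a 10m request reaches a 50% target at only 5m of measured usage, which is why such a small request makes the deployment scale out early.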

8. Command that enables autoscaling

Autoscale when average CPU utilization (relative to the CPU request) exceeds 50%

[root@m-k8s 3.3.5]# kubectl autoscale deployment hpa-hname-pods --min=1 --max=30 --cpu-percent=50

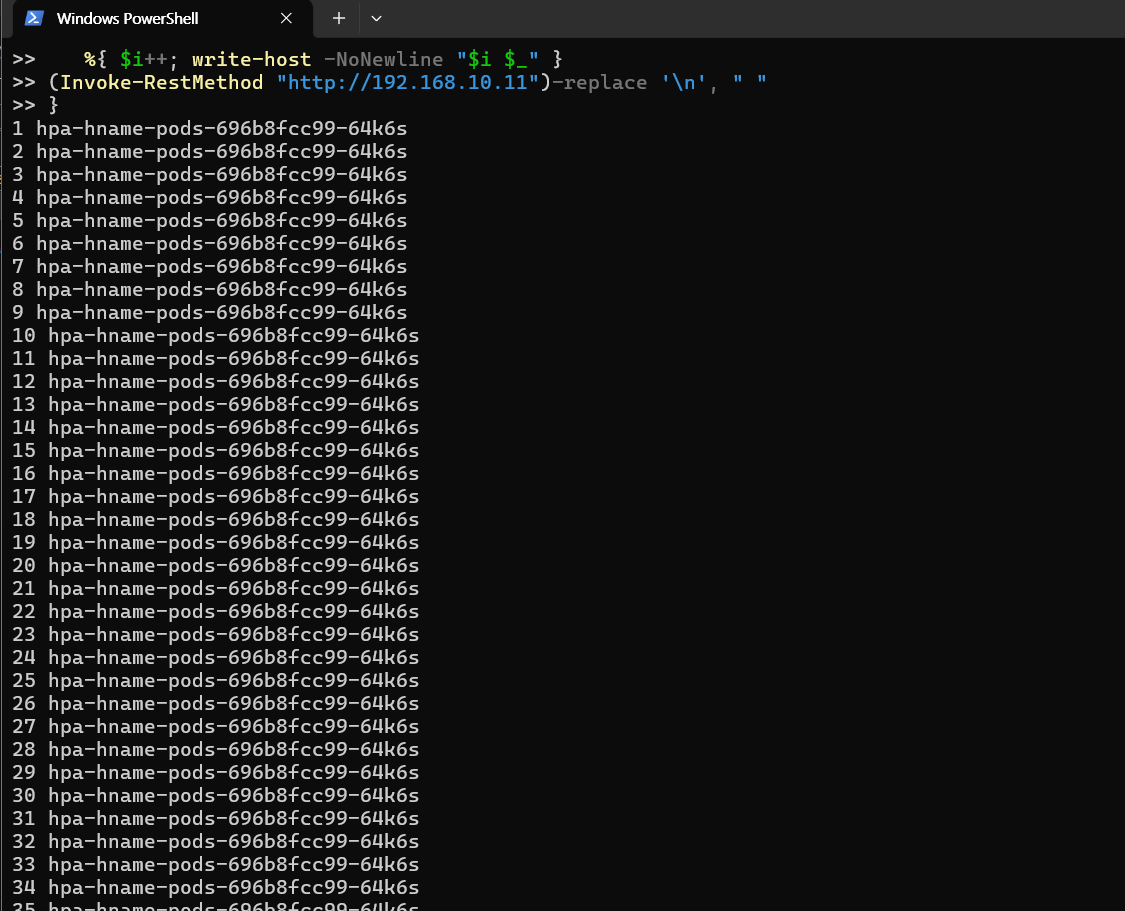

horizontalpodautoscaler.autoscaling/hpa-hname-pods autoscaled

8-1 Generate load from PowerShell

PS C:\Users> $i=0; while($true)

>> {

>> %{ $i++; write-host -NoNewline "$i $_" }

>> (Invoke-RestMethod "http://192.168.10.11")-replace '\n', " "

>> }
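For readers without PowerShell, the same request loop can be approximated in Python. This is a rough equivalent under stated assumptions, not the script used in the original walkthrough: `http_get`, `generate_load`, and the `count` bound are hypothetical names introduced here, and unlike the PowerShell loop this version stops after a fixed number of requests.

```python
import urllib.request
from typing import Callable, Iterator


def http_get(url: str, timeout: float = 2.0) -> str:
    """Fetch a URL and return the response body as text (real network call)."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")


def generate_load(url: str, count: int,
                  fetch: Callable[[str], str] = http_get) -> Iterator[str]:
    """Send `count` sequential GET requests and yield one numbered line per reply."""
    for i in range(1, count + 1):
        # Flatten newlines so each reply prints on one line, as in the PowerShell loop.
        body = fetch(url).replace("\n", " ")
        yield f"{i} {body}"


# Example usage against the service IP used above (requires network access):
# for line in generate_load("http://192.168.10.11", count=1000):
#     print(line)
```

The `fetch` parameter exists only so the loop can be exercised without a live cluster; in normal use the default `http_get` is enough.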

8-2 HPA autoscales according to pod CPU load

[root@m-k8s 3.3.5]# kubectl get pods

NAME                              READY   STATUS              RESTARTS   AGE
hpa-hname-pods-696b8fcc99-2r6mg   0/1     ContainerCreating   0          4s
hpa-hname-pods-696b8fcc99-64k6s   1/1     Running             0          9h
hpa-hname-pods-696b8fcc99-87x96   1/1     Running             0          20s
hpa-hname-pods-696b8fcc99-ckxdv   1/1     Running             0          20s
hpa-hname-pods-696b8fcc99-kzpsc   0/1     ContainerCreating   0          4s
hpa-hname-pods-696b8fcc99-npk6c   1/1     Running             0          20s
hpa-hname-pods-696b8fcc99-q6jl4   0/1     ContainerCreating   0          4s
hpa-hname-pods-696b8fcc99-zxc6m   0/1     ContainerCreating   0          4s
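The scale-out above follows from the replica calculation HPA documents: desired replicas = ceil(current replicas × current utilization / target utilization), bounded by the configured minimum and maximum. The sketch below illustrates only that core formula; the function name `desired_replicas` is hypothetical, the utilization figures in the example are made up for illustration, and the real controller additionally applies a tolerance band, scale-up rate limits, and stabilization windows that are not modeled here.

```python
import math


def desired_replicas(current_replicas: int, current_utilization: float,
                     target_utilization: float,
                     min_replicas: int, max_replicas: int) -> int:
    """Simplified HPA replica calculation, clamped to [min_replicas, max_replicas]."""
    raw = math.ceil(current_replicas * current_utilization / target_utilization)
    return max(min_replicas, min(max_replicas, raw))


# Illustrative numbers only: one pod at 400% of its CPU request, target 50%,
# with the --min=1 --max=30 bounds used in the autoscale command.
print(desired_replicas(1, 400, 50, min_replicas=1, max_replicas=30))
```

When the load stops and utilization falls well below the target, the same formula yields a smaller count, which is how the deployment eventually shrinks back toward the minimum.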

Conclusion

Used well, HPA can raise service availability while making the most of the available resources.