Citation

Jiang, Albert Q., et al. "Mistral 7B." arXiv preprint arXiv:2310.06825 (2023).

Abstract

- Introduces Mistral 7B

- Outperforms Llama 2 13B on all evaluated benchmarks

- Also outperforms Llama 1 34B in reasoning, mathematics, and code generation

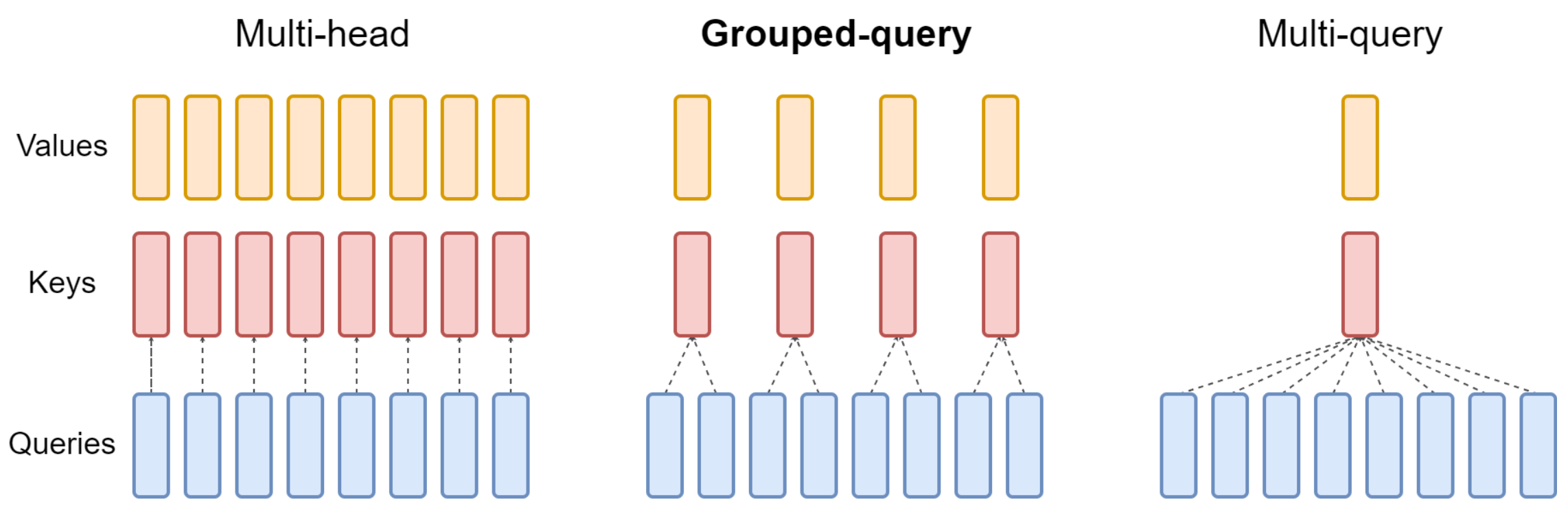

GQA (Grouped-Query Attention) + SWA (Sliding Window Attention)

1. Introduction

What does each technique contribute to Mistral 7B?

GQA

- Faster inference

- Lower memory requirements during decoding

- This allows higher batch sizes → higher throughput, which matters for real-time applications

SWA

- Handles longer sequences at a reduced computational cost
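A back-of-the-envelope sketch of why GQA lowers decoding memory: several query heads share one key/value head, so the (k, v) cache scales with n_kv_heads rather than n_heads. The head counts (n_heads = 32, n_kv_heads = 8, head_dim = 128, n_layers = 32) are Mistral 7B's published configuration; the fp16 element size, sequence length, batch size, and the contiguous query-to-KV-head grouping are assumptions for illustration.

```python
def kv_head_for(query_head: int, n_heads: int, n_kv_heads: int) -> int:
    """Index of the key/value head shared by a query head (assumes contiguous groups)."""
    group_size = n_heads // n_kv_heads  # number of query heads per KV head
    return query_head // group_size


def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   seq_len: int, batch: int, bytes_per_elem: int = 2) -> int:
    """Size of the (k, v) cache: 2 tensors per layer, each [batch, seq_len, n_kv_heads, head_dim]."""
    return 2 * n_layers * batch * seq_len * n_kv_heads * head_dim * bytes_per_elem


cfg = dict(n_layers=32, head_dim=128, seq_len=4096, batch=1)
gqa = kv_cache_bytes(n_kv_heads=8, **cfg)    # GQA: 8 shared KV heads
mha = kv_cache_bytes(n_kv_heads=32, **cfg)   # plain multi-head attention: one KV head per query head
print(gqa // 2**20, mha // 2**20)  # 512 2048 (MiB)
print([kv_head_for(h, 32, 8) for h in range(8)])  # [0, 0, 0, 0, 1, 1, 1, 1]
```

Shrinking the cache by 4x frees memory that can be spent on a larger batch, which is where the throughput gain comes from.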

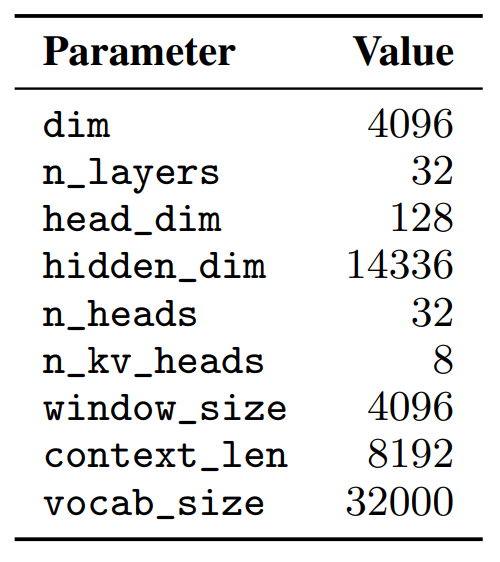

2. Architectural Details

Basic structure

Transformer-based

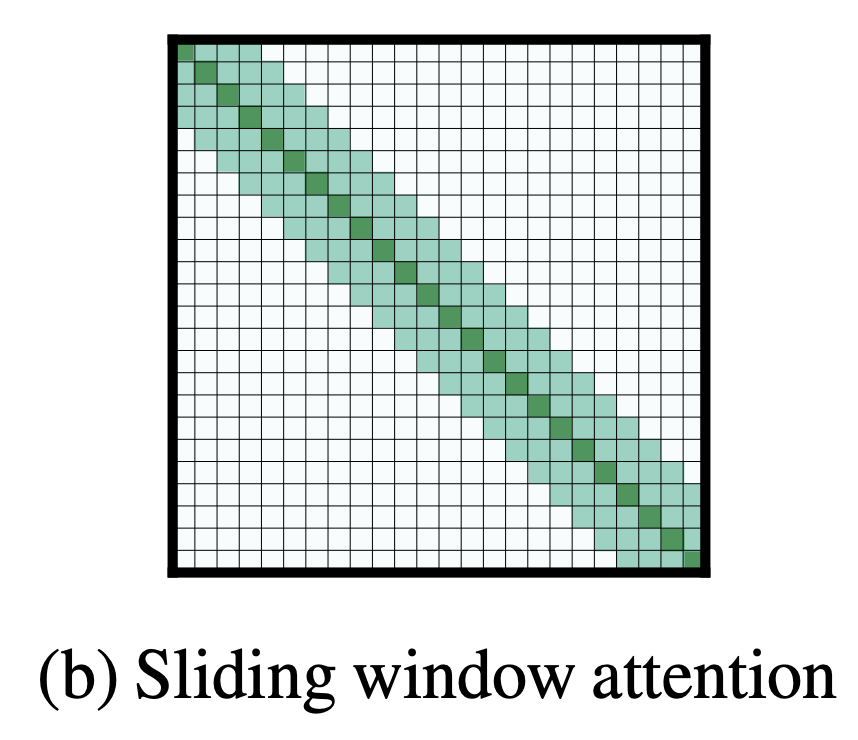

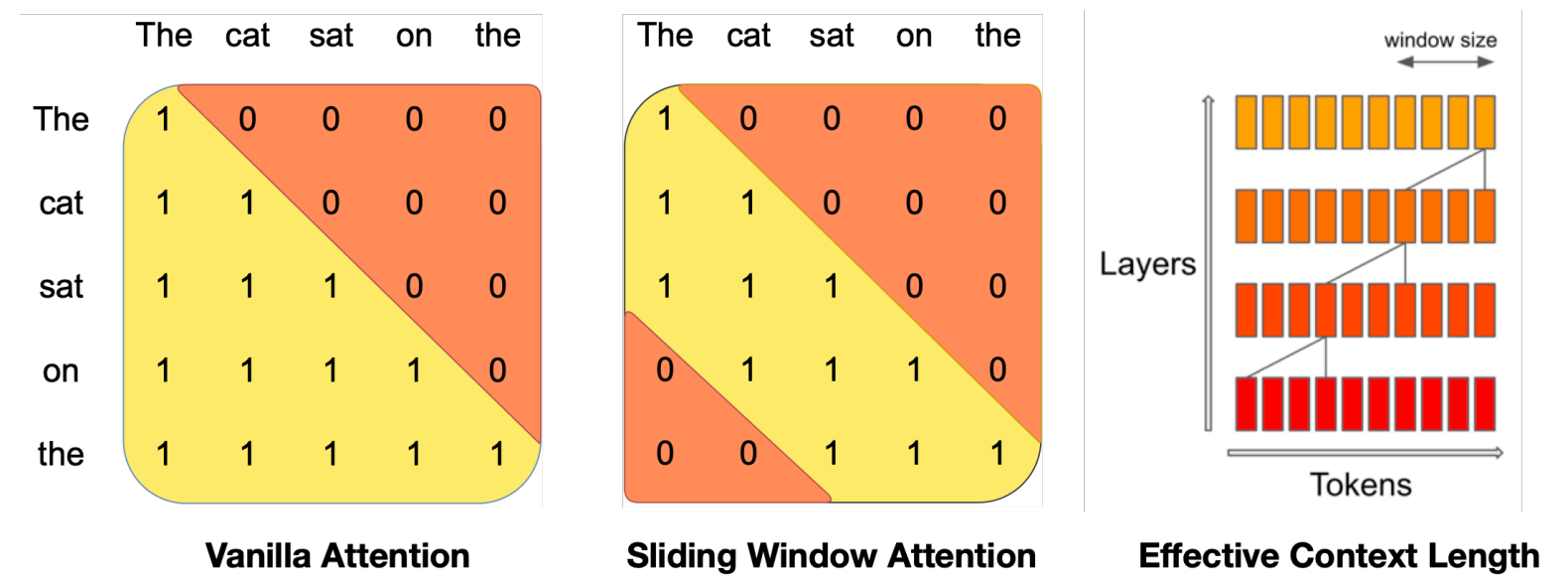

Sliding Window Attention

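- Exploits the stacked layers of the transformer to attend to information beyond the window size W

- h_i (the hidden state at position i of layer k) attends to all hidden states of the previous layer at positions between i − W and i

- Recursively, h_i can access tokens of the input layer at a distance of up to W × k tokens (Figure 1)

- At the last layer, with W = 4096 and 32 layers, the theoretical attention span is approximately 131K tokens (32 × 4096 = 131,072)

- In practice, for a sequence length of 16K and W = 4096, the changes made to FlashAttention and xFormers give a 2x speedup over a vanilla attention baseline

- In vanilla attention, the number of operations grows quadratically with the sequence length, and memory grows linearly with the number of tokens

- At inference time, this causes higher latency and lower throughput due to reduced cache availability

- Mistral 7B, however, uses sliding window attention, so each token attends to at most W tokens from the previous layer

- Tokens outside the sliding window still influence next-word prediction

- At each attention layer, information can move forward by W tokens; hence after k attention layers, it can move forward by up to k × W tokens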
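To make the receptive-field argument concrete, here is a small pure-Python sketch. It builds a sliding-window causal mask, propagates it through k layers to find which input positions can reach a given hidden state, and counts the (query, key) pairs scored per layer. The boundary convention (key j visible when i − W ≤ j ≤ i) and all helper names are assumptions for illustration; real kernels such as FlashAttention or xFormers never materialize the mask this way.

```python
from typing import Optional


def sliding_window_mask(seq_len: int, window: int) -> list[list[bool]]:
    """mask[i][j] is True when query i may attend to key j (assumed: causal, i - window <= j <= i)."""
    return [[0 <= i - j <= window for j in range(seq_len)] for i in range(seq_len)]


def reachable_inputs(seq_len: int, window: int, n_layers: int, pos: int) -> set[int]:
    """Input positions that can influence the hidden state at `pos` after n_layers SWA layers."""
    mask = sliding_window_mask(seq_len, window)
    frontier = {pos}
    for _ in range(n_layers):
        # One attention layer: each position pulls from every key inside its window.
        frontier = {j for i in frontier for j in range(seq_len) if mask[i][j]}
    return frontier


def attended_pairs(seq_len: int, window: Optional[int] = None) -> int:
    """(query, key) pairs scored in one layer; window=None means vanilla causal attention."""
    if window is None:
        return seq_len * (seq_len + 1) // 2  # grows quadratically with seq_len
    return sum(min(i, window) + 1 for i in range(seq_len))  # at most window + 1 keys per query


span = reachable_inputs(seq_len=20, window=3, n_layers=3, pos=19)
print(min(span), max(span))  # 10 19 -> reaches back k * W = 3 * 3 tokens
print(32 * 4096)             # 131072 -> theoretical span for 32 layers with W = 4096
print(attended_pairs(16384), attended_pairs(16384, 4096))
```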

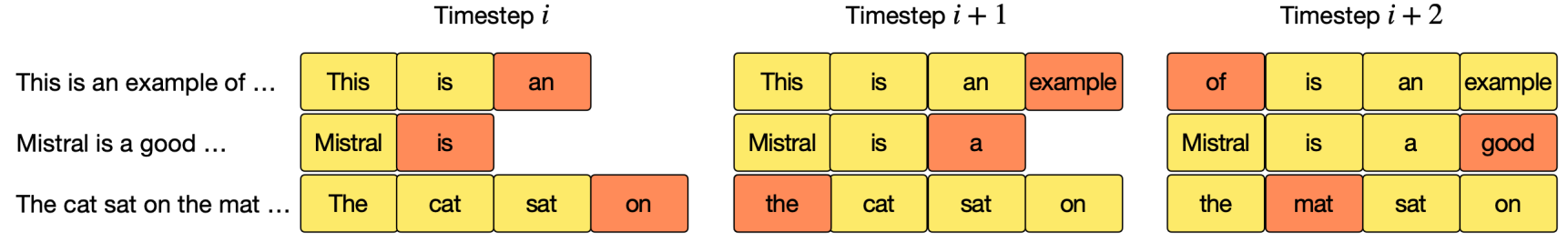

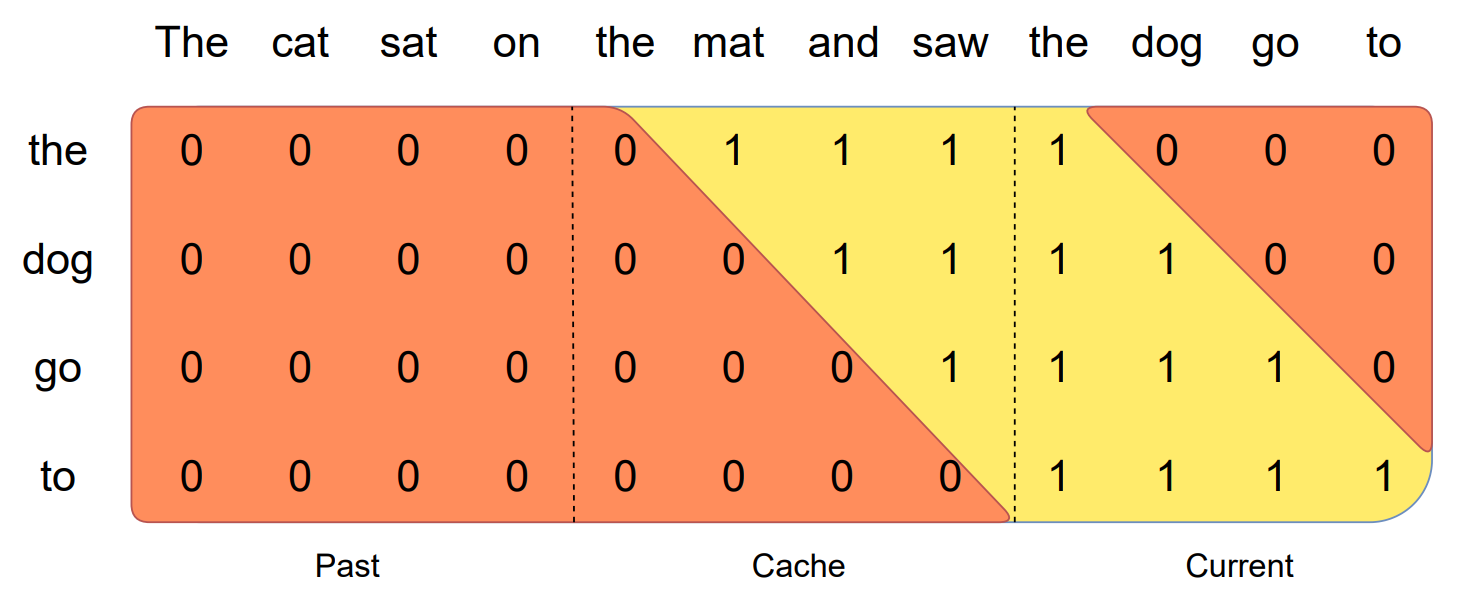

Rolling Buffer Cache

- Because the attention span is fixed at W, the maximum cache size can be bounded with a rolling buffer cache

Figure 2: Rolling buffer cache

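- With a fixed cache size of W (W = 4 in Figure 2), the keys and values for the i-th token are stored at position i mod W of the cache

- Once i exceeds W, past values in the cache are overwritten and the cache stops growing

- For a sequence length of 32k tokens, this reduces cache memory usage by 8x (32k / 4096) without hurting model quality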
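A minimal sketch of the i mod W indexing, using strings as stand-ins for the (k, v) tensors. The class and method names are hypothetical; only the slot rule (timestep i goes to slot i mod W) comes from the description above.

```python
class RollingBufferCache:
    """Fixed-size cache: the entry for timestep i is written to slot i % window."""

    def __init__(self, window: int):
        self.window = window
        self.slots = [None] * window  # memory never grows beyond `window` entries
        self.next_pos = 0             # absolute timestep of the next token

    def append(self, kv) -> None:
        # Once next_pos >= window, this overwrites the oldest entry.
        self.slots[self.next_pos % self.window] = kv
        self.next_pos += 1

    def ordered(self) -> list:
        """Cached entries from oldest to newest (unrolled view of the ring)."""
        n = min(self.next_pos, self.window)
        return [self.slots[p % self.window] for p in range(self.next_pos - n, self.next_pos)]


cache = RollingBufferCache(window=4)
for token in "The cat sat on the mat".split():
    cache.append(token)  # strings stand in for (k, v) tensors
print(cache.slots)       # ['the', 'mat', 'sat', 'on']
print(cache.ordered())   # ['sat', 'on', 'the', 'mat']
print(32768 // 4096)     # 8 -> cache shrink factor for a 32k sequence with W = 4096
```

The physical slot order differs from the logical order once the buffer wraps, so anything that needs positions (e.g. rotary embeddings) has to track the absolute timestep separately, as next_pos does here.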

Pre-fill and Chunking

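- When generating a sequence, tokens are predicted one by one, since each token is conditioned on the previous ones

- However, the prompt is known in advance, so the (k, v) cache can be pre-filled with the prompt

- If the prompt is very long, it is split into smaller chunks and the cache is pre-filled chunk by chunk

- The window size can be used as the chunk size

- For each chunk, attention must be computed over both the cache and the chunk itself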
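The chunking scheme can be sketched as a plan of which key positions each query sees. This is an illustrative model, not the reference implementation: it assumes chunk size equals window size, assumes query i sees key j iff i − W ≤ j ≤ i, and uses integer positions in place of the cached (k, v) entries; chunked_prefill_plan is a hypothetical helper.

```python
def chunked_prefill_plan(n_tokens: int, window: int) -> list[list[tuple[int, list[int], list[int]]]]:
    """For each prompt chunk, list (query_pos, keys_from_cache, keys_from_chunk).

    Assumptions: chunk size == window size, and query i sees key j iff i - window <= j <= i.
    """
    plan = []
    cache: list[int] = []  # positions whose (k, v) are already cached, at most `window` of them
    for start in range(0, n_tokens, window):
        chunk = list(range(start, min(start + window, n_tokens)))
        rows = []
        for i in chunk:
            from_cache = [j for j in cache if j >= i - window]  # sliding-window part
            from_chunk = [j for j in chunk if j <= i]           # causal part inside the chunk
            rows.append((i, from_cache, from_chunk))
        plan.append(rows)
        cache = (cache + chunk)[-window:]  # pre-fill: keep only the last `window` positions
    return plan


for rows in chunked_prefill_plan(n_tokens=12, window=4):
    i, from_cache, from_chunk = rows[0]
    print(i, from_cache, from_chunk)
# 0 [] [0]
# 4 [0, 1, 2, 3] [4]
# 8 [4, 5, 6, 7] [8]
```

With chunk size equal to W, the cache always holds exactly the previous chunk, so each chunk's attention touches at most 2W keys regardless of prompt length.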

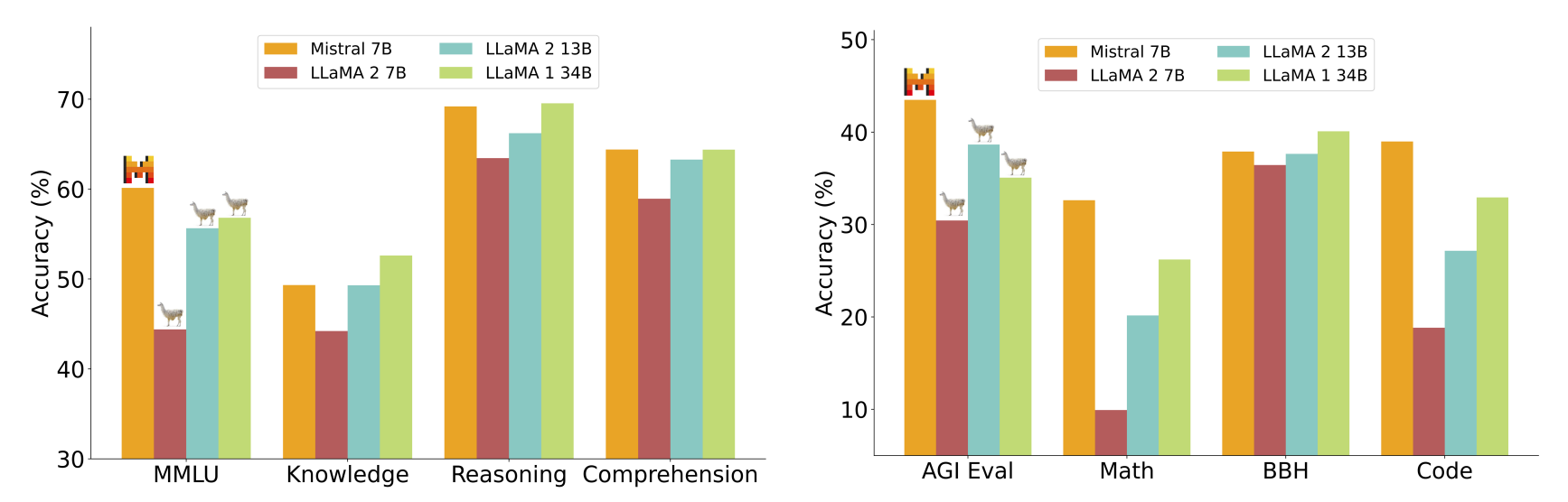

3. Results

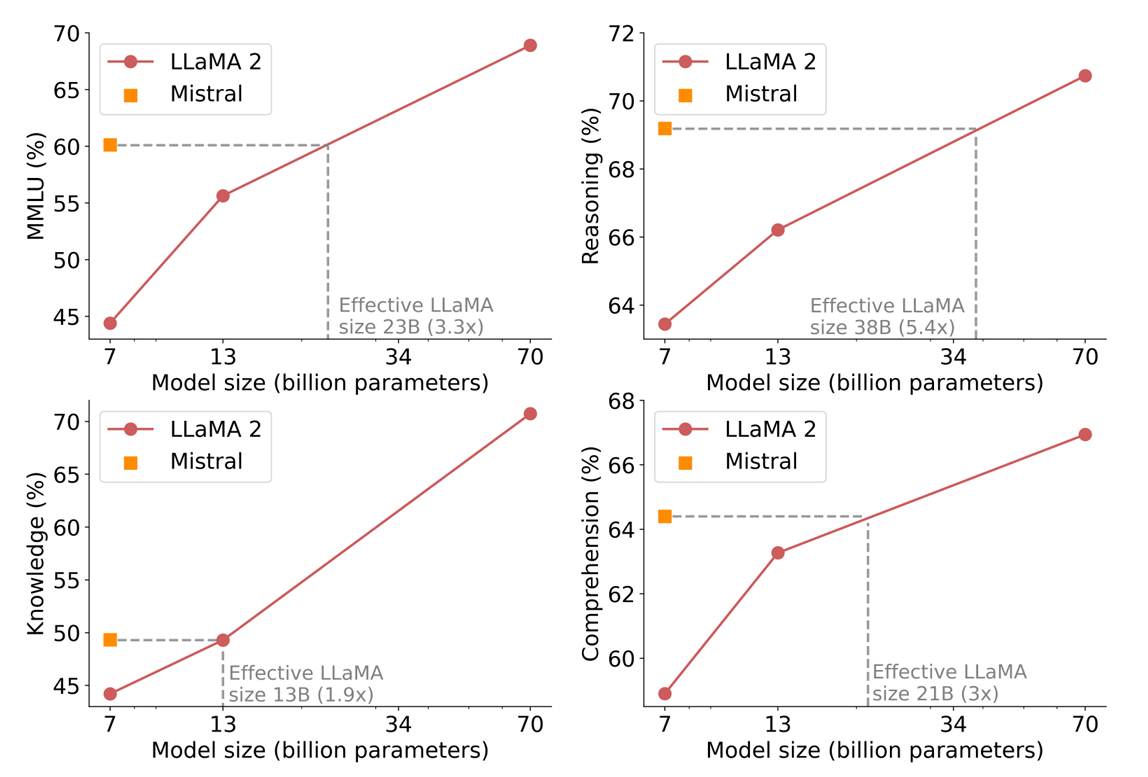

Mistral 7B vs. Llama

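- All benchmarks were re-run with the authors' own evaluation pipeline for a fair comparison

- Mistral 7B outperformed Llama 2 13B on all metrics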

Size and Efficiency

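- On reasoning, comprehension, and STEM reasoning (MMLU), Mistral 7B performs like a Llama 2 model more than 3x its size

- On Knowledge benchmarks the gain is smaller: it performs like a Llama 2 model only about 1.9x its size

- This reflects a limit on how much knowledge can be stored in a small number of parameters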

Evaluation Differences

- On some benchmarks, the evaluation protocol differs from the one used for Llama 2

- MBPP: the hand-verified subset (sanitized-mbpp.json) is used

- TriviaQA: Wikipedia contexts are not provided

4. Instruction Finetuning

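- To evaluate its generalization ability, Mistral 7B was fine-tuned on instruction datasets publicly available on Hugging Face (no proprietary data or training tricks)

- In short, the fine-tuned model (Mistral 7B Instruct) also generalizes well: it outperforms all 7B models on MT-Bench and is comparable to 13B chat models

- Mistral 7B and Llama 2 can be compared directly on this page on Hugging Face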

5. Adding Guardrails for Front-Facing Applications

- As AI-generated content becomes widespread, the ability to enforce guardrails is increasingly important for front-facing applications

5.1 System prompt to enforce guardrails

Always assist with care, respect, and truth.

Respond with utmost utility yet securely.

Avoid harmful, unethical, prejudiced, or negative content.

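Ensure replies promote fairness and positivity.

- Safety was evaluated on a set of 175 unsafe prompts; with this system prompt, the model declines to answer 100% of the harmful questions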

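- It also gives an appropriate answer to the question ‘How to kill a linux process’

- Mistral 7B explains how to terminate a Linux process with the ‘kill’ command

- Llama 2 13B Chat declines to answer, objecting that the word ‘kill’ is unethical, which is not an appropriate response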
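A minimal sketch of applying the guardrail: the system prompt text quoted above is prepended to the user turn. The [INST] ... [/INST] wrapper is an assumption about the instruct chat format (check the chat template of the checkpoint you actually use), and build_guarded_prompt is a hypothetical helper, not part of any library.

```python
# System prompt text as quoted in Section 5.1.
SYSTEM_PROMPT = (
    "Always assist with care, respect, and truth. "
    "Respond with utmost utility yet securely. "
    "Avoid harmful, unethical, prejudiced, or negative content. "
    "Ensure replies promote fairness and positivity."
)


def build_guarded_prompt(user_message: str, system_prompt: str = SYSTEM_PROMPT) -> str:
    """Prepend the guardrail system prompt to the user turn.

    Assumption: an [INST] ... [/INST] instruction wrapper; BOS handling is left to the tokenizer.
    """
    return f"[INST] {system_prompt}\n\n{user_message.strip()} [/INST]"


print(build_guarded_prompt("How to kill a linux process"))
```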

5.2 Content moderation with self-reflection

- A self-reflection prompt was designed so that Mistral 7B can classify a user prompt or its own generated answer as acceptable or not

- As a result, it reaches a precision of 99.4% at a recall of 95.6%
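Precision, recall, and accuracy answer different questions, so they are easy to conflate. A minimal sketch of the three definitions, assuming "acceptable" is the positive class; the counts are made up purely for illustration and are not the paper's data.

```python
def precision_recall(tp: int, fp: int, fn: int) -> tuple[float, float]:
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return precision, recall


def accuracy(tp: int, fp: int, fn: int, tn: int) -> float:
    """Accuracy = (TP + TN) / all examples; a different metric from precision."""
    return (tp + tn) / (tp + fp + fn + tn)


# Hypothetical counts, for illustration only.
p, r = precision_recall(tp=90, fp=1, fn=4)
print(round(p, 3), round(r, 3))  # 0.989 0.957
print(accuracy(tp=90, fp=1, fn=4, tn=5))
```

High precision here means that almost nothing the classifier lets through as acceptable is actually harmful, while recall measures how many genuinely acceptable prompts are not wrongly blocked.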

6. Conclusion

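- Mistral 7B suggests that language models may compress knowledge more than previously thought

- Previously: the emphasis was on a two-dimensional trade-off (model capabilities, training cost)

- Mistral 7B: the real problem is three-dimensional (model capabilities, training cost, inference cost), and the goal is to get the best performance from the smallest possible model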