
LSTM

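- A recurrent layer designed to learn effectively from long sequences (data with many timesteps). It contains small cells that act as the input, forget, and output gates.

- An LSTM cell outputs a hidden state and a cell state. The cell state is not passed to the next layer; it circulates only within the LSTM cell.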
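The gate and state behavior described above can be sketched as a single LSTM timestep in NumPy. This is a minimal illustration, not Keras's internal implementation: the function name `lstm_step`, the weight layout, the gate ordering, and the random weights are all assumptions made for the example.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def lstm_step(x, h_prev, c_prev, W, U, b):
    """One LSTM timestep (illustrative layout, not Keras internals).

    x: (input_dim,), h_prev and c_prev: (units,)
    W: (input_dim, 4*units), U: (units, 4*units), b: (4*units,)
    """
    units = h_prev.shape[0]
    z = x @ W + h_prev @ U + b
    i = sigmoid(z[0 * units:1 * units])   # input gate
    f = sigmoid(z[1 * units:2 * units])   # forget gate
    g = np.tanh(z[2 * units:3 * units])   # candidate cell values
    o = sigmoid(z[3 * units:4 * units])   # output gate
    c = f * c_prev + i * g                # cell state: stays inside the cell
    h = o * np.tanh(c)                    # hidden state: passed to the next layer
    return h, c

# Hypothetical sizes matching the notes: 16-dim embeddings, 8 units, 100 timesteps.
rng = np.random.default_rng(0)
input_dim, units = 16, 8
W = rng.normal(scale=0.1, size=(input_dim, 4 * units))
U = rng.normal(scale=0.1, size=(units, 4 * units))
b = np.zeros(4 * units)

h = np.zeros(units)
c = np.zeros(units)
for x in rng.normal(size=(100, input_dim)):
    h, c = lstm_step(x, h, c, W, U, b)

print(h.shape, c.shape)
```

Only `h` would be handed to the following layer; `c` is carried from one timestep to the next inside the loop.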


GRU

A simplified version of the LSTM that also performs well.
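To make "simplified" concrete, here is a rough NumPy sketch of one GRU timestep. It follows one common textbook formulation (update gate, reset gate, candidate state) and keeps no separate cell state. The function name `gru_step`, the weight layout, and the random weights are assumptions for illustration; Keras's default GRU differs in detail.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def gru_step(x, h_prev, W, U, b):
    """One GRU timestep (textbook-style sketch, not Keras internals).

    x: (input_dim,), h_prev: (units,)
    W: (input_dim, 3*units), U: (units, 3*units), b: (3*units,)
    """
    Wz, Wr, Wh = np.split(W, 3, axis=1)
    Uz, Ur, Uh = np.split(U, 3, axis=1)
    bz, br, bh = np.split(b, 3)
    z = sigmoid(x @ Wz + h_prev @ Uz + bz)             # update gate
    r = sigmoid(x @ Wr + h_prev @ Ur + br)             # reset gate
    h_cand = np.tanh(x @ Wh + (r * h_prev) @ Uh + bh)  # candidate hidden state
    return z * h_prev + (1.0 - z) * h_cand             # only a hidden state, no cell state

# Hypothetical sizes: 16-dim inputs, 8 units, 100 timesteps.
rng = np.random.default_rng(0)
input_dim, units = 16, 8
W_gru = rng.normal(scale=0.1, size=(input_dim, 3 * units))
U_gru = rng.normal(scale=0.1, size=(units, 3 * units))
b_gru = np.zeros(3 * units)

h_gru = np.zeros(units)
for x in rng.normal(size=(100, input_dim)):
    h_gru = gru_step(x, h_gru, W_gru, U_gru, b_gru)

print(h_gru.shape)
```

Compared with an LSTM, there are three weight groups instead of four and a single state vector instead of two.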

Training an LSTM network

from tensorflow.keras.datasets import imdb

from sklearn.model_selection import train_test_split

(train_input, train_target), (test_input, test_target) = imdb.load_data(

num_words=500)

train_input, val_input, train_target, val_target = train_test_split(

train_input, train_target, test_size=0.2, random_state=42)

from tensorflow.keras.preprocessing.sequence import pad_sequences

train_seq = pad_sequences(train_input, maxlen=100)

val_seq = pad_sequences(val_input, maxlen=100)

from tensorflow import keras

model = keras.Sequential()

model.add(keras.layers.Embedding(500,16,input_length=100))

model.add(keras.layers.LSTM(8))

model.add(keras.layers.Dense(1,activation='sigmoid'))

model.summary()

- The SimpleRNN layer had 200 parameters (16 × 8 + 8 × 8 + 8). An LSTM cell contains four small cells, so the LSTM layer has 4 × 200 = 800.
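The count above can be checked with a few lines of arithmetic. The helper names `simple_rnn_params` and `lstm_params` are made up for this sketch; the sizes (16-dimensional embedding, 8 units) come from the model defined earlier.

```python
def simple_rnn_params(input_dim, units):
    # input weights + recurrent weights + one bias per unit
    return input_dim * units + units * units + units

def lstm_params(input_dim, units):
    # four small cells, each the size of one simple recurrent cell
    return 4 * simple_rnn_params(input_dim, units)

print(simple_rnn_params(16, 8))  # 200
print(lstm_params(16, 8))        # 800
```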

rmsprop = keras.optimizers.RMSprop(learning_rate=1e-4)

model.compile(optimizer=rmsprop,loss='binary_crossentropy',metrics=['accuracy'])

checkpoint_cb = keras.callbacks.ModelCheckpoint('best-lstm-model.h5',save_best_only=True)

early_stopping_cb = keras.callbacks.EarlyStopping(patience=3,restore_best_weights=True)

history = model.fit(train_seq, train_target, epochs=100, batch_size=64,
                    validation_data=(val_seq, val_target),
                    callbacks=[checkpoint_cb, early_stopping_cb])

import matplotlib.pyplot as plt

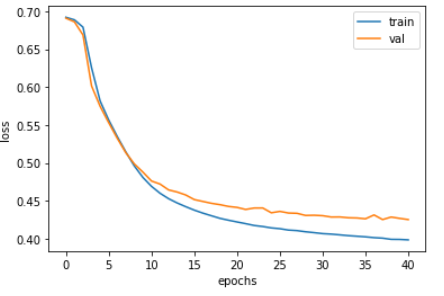

plt.plot(history.history['loss'])

plt.plot(history.history['val_loss'])

plt.xlabel('epochs')

plt.ylabel('loss')

plt.legend(['train','val'])

plt.show()

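- Compared with the basic recurrent layer, the LSTM trains well while suppressing overfitting.

- In some cases overfitting needs to be controlled more strongly, for example with regularization or dropout.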

Applying dropout to a recurrent layer

model2 = keras.Sequential()

model2.add(keras.layers.Embedding(500,16,input_length=100))

model2.add(keras.layers.LSTM(8,dropout=0.3))

model2.add(keras.layers.Dense(1,activation='sigmoid'))

rmsprop = keras.optimizers.RMSprop(learning_rate=1e-4)

model2.compile(optimizer=rmsprop,loss='binary_crossentropy',metrics=['accuracy'])

# Use a separate file name so this run does not overwrite the first LSTM checkpoint.
checkpoint_cb = keras.callbacks.ModelCheckpoint('best-dropout-model.h5',save_best_only=True)

early_stopping_cb = keras.callbacks.EarlyStopping(patience=3,restore_best_weights=True)

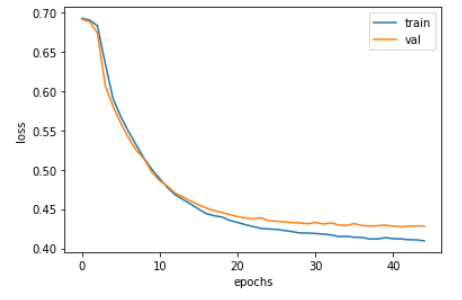

history = model2.fit(train_seq, train_target, epochs=100, batch_size=64,
                     validation_data=(val_seq, val_target),
                     callbacks=[checkpoint_cb, early_stopping_cb])

plt.plot(history.history['loss'])

plt.plot(history.history['val_loss'])

plt.xlabel('epochs')

plt.ylabel('loss')

plt.legend(['train','val'])

plt.show()

- With dropout, the gap between the training loss and the validation loss narrows.
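As a rough picture of what a 30% dropout rate does to a layer's inputs during training, here is a generic inverted-dropout sketch in NumPy. It is not Keras's internal code, and the function name `inverted_dropout` and the all-ones input are assumptions chosen to make the effect easy to see.

```python
import numpy as np

def inverted_dropout(x, rate, rng, training=True):
    """Zero out roughly `rate` of the values and rescale the rest."""
    if not training or rate == 0.0:
        return x  # dropout is inactive at inference time
    keep_mask = rng.random(x.shape) >= rate
    # Rescale so the expected value of each element is unchanged.
    return x * keep_mask / (1.0 - rate)

rng = np.random.default_rng(42)
x = np.ones((1000, 16))                 # hypothetical batch of 16-dim inputs
dropped = inverted_dropout(x, rate=0.3, rng=rng)

print((dropped == 0).mean())            # fraction of zeroed values, close to 0.3
print(dropped.mean())                   # overall mean stays close to 1.0
```

Because a different random subset is dropped on each training step, the layer cannot rely on any single input, which tends to reduce overfitting.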

Stacking two recurrent layers

model3 = keras.Sequential()

model3.add(keras.layers.Embedding(500,16,input_length=100))

model3.add(keras.layers.LSTM(8,dropout=0.3,return_sequences=True))

model3.add(keras.layers.LSTM(8,dropout=0.3))

model3.add(keras.layers.Dense(1,activation='sigmoid'))

model3.summary()

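- Every recurrent layer before the last must output the hidden state for all timesteps.

- Only the last recurrent layer outputs just the hidden state of the final timestep.

- Set return_sequences=True on every recurrent layer except the last.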
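The output shapes behind these rules can be sketched with a plain tanh recurrent loop in NumPy. A simple recurrent cell stands in for the LSTM here to keep the example short; `run_rnn` and all weights are hypothetical.

```python
import numpy as np

def run_rnn(inputs, W, U, b, return_sequences=False):
    """Run a simple tanh recurrent layer over inputs of shape (timesteps, input_dim)."""
    h = np.zeros(U.shape[0])
    outputs = []
    for x in inputs:
        h = np.tanh(x @ W + h @ U + b)
        outputs.append(h)
    # All hidden states, or only the one from the final timestep.
    return np.stack(outputs) if return_sequences else h

rng = np.random.default_rng(0)
timesteps, input_dim, units = 100, 16, 8
x = rng.normal(size=(timesteps, input_dim))

W1 = rng.normal(scale=0.1, size=(input_dim, units))
U1 = rng.normal(scale=0.1, size=(units, units))
b1 = np.zeros(units)
W2 = rng.normal(scale=0.1, size=(units, units))
U2 = rng.normal(scale=0.1, size=(units, units))
b2 = np.zeros(units)

seq = run_rnn(x, W1, U1, b1, return_sequences=True)  # first layer: every timestep
last = run_rnn(seq, W2, U2, b2)                      # last layer: final timestep only

print(seq.shape)   # (100, 8)
print(last.shape)  # (8,)
```

The second layer needs a full sequence as input, which is why the first layer must return all 100 hidden states rather than only the last one.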

rmsprop = keras.optimizers.RMSprop(learning_rate=1e-4)

model3.compile(optimizer=rmsprop,loss='binary_crossentropy',metrics=['accuracy'])

# Use a separate file name so this run does not overwrite earlier checkpoints.
checkpoint_cb = keras.callbacks.ModelCheckpoint('best-2rnn-model.h5',save_best_only=True)

early_stopping_cb = keras.callbacks.EarlyStopping(patience=3,restore_best_weights=True)

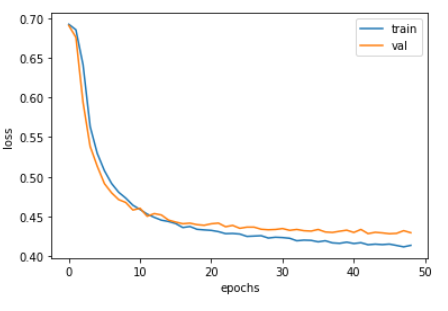

history = model3.fit(train_seq, train_target, epochs=100, batch_size=64,
                     validation_data=(val_seq, val_target),
                     callbacks=[checkpoint_cb, early_stopping_cb])

plt.plot(history.history['loss'])

plt.plot(history.history['val_loss'])

plt.xlabel('epochs')

plt.ylabel('loss')

plt.legend(['train','val'])

plt.show()

GRU architecture

model4 = keras.Sequential()

model4.add(keras.layers.Embedding(500,16,input_length=100))

model4.add(keras.layers.GRU(8))

model4.add(keras.layers.Dense(1,activation='sigmoid'))

model4.summary()

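- A GRU cell contains three small cells.

- 3 × (16 × 8 + 8 × 8 + 8) = 600

- But the summary reports 624 parameters.

- In practice each small cell gets one extra bias per neuron. With 8 neurons in each of the 3 small cells, that adds 24 parameters. This comes from the Keras GRU default reset_after=True, which keeps separate biases for the input and recurrent weights.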
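The two counts can be reproduced with a short calculation. The helper name `gru_params` is invented for this sketch; its `reset_after` flag mirrors the Keras GRU argument of the same name, on the assumption that the extra bias set is the only difference in parameter count.

```python
def gru_params(input_dim, units, reset_after=True):
    kernel = input_dim * units      # input weights per small cell
    recurrent = units * units       # recurrent weights per small cell
    # reset_after=True keeps one bias for the input side and one for the recurrent side.
    bias = 2 * units if reset_after else units
    return 3 * (kernel + recurrent + bias)

print(gru_params(16, 8, reset_after=False))  # 600
print(gru_params(16, 8, reset_after=True))   # 624
```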

rmsprop = keras.optimizers.RMSprop(learning_rate=1e-4)

model4.compile(optimizer=rmsprop,loss='binary_crossentropy',metrics=['accuracy'])

# Use a separate file name so the GRU run does not overwrite the LSTM checkpoints.
checkpoint_cb = keras.callbacks.ModelCheckpoint('best-gru-model.h5',save_best_only=True)

early_stopping_cb = keras.callbacks.EarlyStopping(patience=3,restore_best_weights=True)

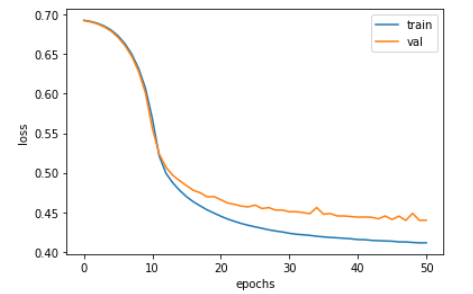

history = model4.fit(train_seq, train_target, epochs=100, batch_size=64,
                     validation_data=(val_seq, val_target),
                     callbacks=[checkpoint_cb, early_stopping_cb])

plt.plot(history.history['loss'])

plt.plot(history.history['val_loss'])

plt.xlabel('epochs')

plt.ylabel('loss')

plt.legend(['train','val'])

plt.show()

- The GRU has fewer weights than the LSTM but still performs well.