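During a bootcamp machine learning competition, I worked on a project that predicts Seoul apartment prices from public data. Going through the whole flow, from data preprocessing to modeling and finally AutoML, helped me build real hands-on intuition, so I'm writing up the experience here.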

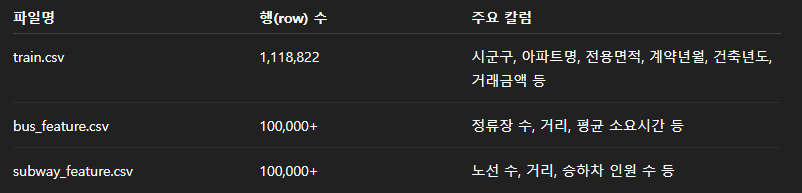

📦 Dataset

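- train.csv: training set that includes the transaction prices (about 1.11 million rows)

- test.csv: test set to predict (about 9,000 rows)

- bus_feature.csv: bus-stop statistics per apartment complex

- subway_feature.csv: subway statistics per apartment complex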

🧼 Data Preprocessing and Features

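- One-Hot Encoding: applied to categorical variables such as 시군구 (district) and 거래유형 (transaction type)

- Missing values: variables with a high missing ratio and low importance were dropped, and other gaps were filled with the mean or mode

- Subway/bus data: aggregated per apartment complex, then joined with the apartment data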
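The encoding and imputation steps above can be sketched in pandas as follows. This is a minimal sketch, not the competition code: the function name `impute_and_one_hot` and the toy rows are hypothetical, and it only covers mean/mode imputation plus one-hot encoding (dropping low-importance columns would need model-based importances, which are left out).

```python
import pandas as pd


def impute_and_one_hot(df: pd.DataFrame, cat_cols: list) -> pd.DataFrame:
    """Fill NaNs (mean for numeric, mode otherwise), then one-hot encode cat_cols."""
    out = df.copy()
    for col in out.columns:
        if pd.api.types.is_numeric_dtype(out[col]):
            # Numeric column: replace NaN with the column mean
            out[col] = out[col].fillna(out[col].mean())
        else:
            # Non-numeric column: replace NaN with the most frequent value
            out[col] = out[col].fillna(out[col].mode().iloc[0])
    # One-hot encode the categorical columns; other columns pass through unchanged
    return pd.get_dummies(out, columns=cat_cols, dtype=int)


# Hypothetical toy rows standing in for train.csv
toy = pd.DataFrame({
    "시군구": ["강남구", "송파구", None, "강남구"],
    "거래유형": ["중개거래", None, "직거래", "중개거래"],
    "전용면적(㎡)": [84.9, None, 59.8, 114.2],
})
encoded = impute_and_one_hot(toy, cat_cols=["시군구", "거래유형"])
print(encoded)
```

One-hot encoding works well for low-cardinality columns like these; for very high-cardinality columns it produces too many features, which is why a different encoding is used for 아파트명 later on.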

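```python
import matplotlib.pyplot as plt

# train_df: the training DataFrame loaded from train.csv.
# Axis labels are in English because matplotlib's default font has no Hangul glyphs
# (Korean labels would render as empty boxes without configuring a Korean font).
plt.scatter(train_df["전용면적(㎡)"], train_df["거래금액(만원)"], alpha=0.3)
plt.xlabel("Exclusive area (m²)")
plt.ylabel("Transaction price (10,000 KRW)")
plt.title("Exclusive area vs. transaction price")
plt.show()
```

🔹 A) Handling Outliers and Missing Values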

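- Rows with abnormally low values in the 거래금액(만원) column (e.g., a 0-won transaction) were excluded

- Rows whose 건축년도 (construction year) was 1900 or earlier, or otherwise invalid, were removed

- Variables with a missing ratio of 80% or more, such as 해제사유발생일 (cancellation date) and 등기신청일자 (registration application date), were dropped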
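A minimal pandas sketch of these three filtering rules, assuming 거래금액(만원) and 건축년도 are already numeric. The helper name and toy data are hypothetical, and "abnormally low" is approximated by the only concrete example given, a non-positive price; the extra "otherwise invalid" year checks are not reproduced.

```python
import numpy as np
import pandas as pd


def drop_outliers_and_sparse_cols(df, price_col="거래금액(만원)", year_col="건축년도",
                                  min_year=1900, max_missing=0.8):
    """Drop rows with a non-positive price or a build year <= min_year,
    then drop columns whose missing ratio is >= max_missing."""
    out = df.copy()
    # Keep only strictly positive prices (removes e.g. 0-won transactions)
    out = out[out[price_col] > 0]
    # Treat construction years of min_year or earlier as invalid
    out = out[out[year_col] > min_year]
    # Fraction of missing values per column
    missing_ratio = out.isna().mean()
    sparse_cols = missing_ratio[missing_ratio >= max_missing].index
    return out.drop(columns=sparse_cols)


# Hypothetical toy rows
toy = pd.DataFrame({
    "거래금액(만원)": [85000, 0, 120000, 64000],
    "건축년도": [2005, 1998, 1800, 2012],
    "해제사유발생일": [np.nan, np.nan, np.nan, np.nan],
    "층": [12, 3, 7, 20],
})
cleaned = drop_outliers_and_sparse_cols(toy)
print(cleaned)
```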

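🔹 B) Categorical Variable Preprocessing

- Categorical variables such as 시군구, 도로명, 아파트명, and 거래유형 were encoded with One-Hot Encoding or Label Encoding

- 아파트명 (apartment name) in particular has a very large number of unique values, so I tried target encoding using the groupby mean of the transaction price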

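```python
# Mean 거래금액(만원) per 아파트명 on the training set.
# Each row's own price is included in its group mean, so this feature
# partially leaks the target during training.
train_df["아파트명_평균가"] = train_df.groupby("아파트명")["거래금액(만원)"].transform("mean")
```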
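The groupby-mean encoding above includes each row's own price in its group mean, so on the training set it partly leaks the target. A common way to reduce that leakage is out-of-fold target encoding: each row is encoded with the mean price of its apartment computed only from the other folds. This is a swapped-in technique shown for illustration, not the method used in the competition; the function name, `n_folds`, `seed`, and the global-mean fallback are assumptions.

```python
import numpy as np
import pandas as pd


def oof_target_encode(df, cat_col, target_col, n_folds=5, seed=42):
    """Out-of-fold mean target encoding: each row uses statistics from the other folds only."""
    rng = np.random.default_rng(seed)
    # Balanced random fold ids: a shuffled 0..n-1 taken modulo n_folds
    fold_ids = rng.permutation(len(df)) % n_folds
    global_mean = df[target_col].mean()
    encoded = pd.Series(np.nan, index=df.index, dtype=float)
    for k in range(n_folds):
        in_fold = fold_ids == k
        # Category means computed on all rows outside fold k
        fold_means = df.loc[~in_fold].groupby(cat_col)[target_col].mean()
        encoded.loc[in_fold] = df.loc[in_fold, cat_col].map(fold_means).to_numpy()
    # Categories with no rows outside their fold fall back to the global mean
    return encoded.fillna(global_mean)


# Hypothetical toy data; with n_folds == len(df) this reduces to leave-one-out encoding
toy = pd.DataFrame({"아파트명": ["A", "A", "A", "B"],
                    "거래금액(만원)": [100.0, 200.0, 300.0, 50.0]})
enc = oof_target_encode(toy, "아파트명", "거래금액(만원)", n_folds=4)
print(enc.tolist())
```

For the test set, the plain per-apartment means from the full training set are typically used, since test rows never contribute to those means.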

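🔹 C) Transforming Area, Floor, and Construction Year

- 전용면적 (exclusive area) was log-transformed to adjust its scale

- 층 (floor) was binned into low-floor/high-floor ranges and treated as a categorical variable

- From 건축년도, a derived variable 경과연수 (years since construction) = contract year - 건축년도 was created; because 계약년월 is a year-month value, its year part is used as the contract year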
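These three transforms can be sketched as below, assuming 계약년월 is stored as an integer in YYYYMM form (e.g. 202301). The output column names `log_전용면적` and `층_구간`, the helper name, and the floor cut point of 5 are hypothetical choices for illustration.

```python
import numpy as np
import pandas as pd


def add_area_floor_age(df, floor_cut=5):
    """Add log area, a low/high floor bin, and building age (경과연수)."""
    out = df.copy()
    # log1p compresses the long right tail of the area distribution
    out["log_전용면적"] = np.log1p(out["전용면적(㎡)"])
    # Floors <= floor_cut are "low", anything above is "high" (cut point is an assumption)
    out["층_구간"] = pd.cut(out["층"], bins=[-np.inf, floor_cut, np.inf], labels=["low", "high"])
    # Assumes 계약년월 is an integer such as 202301 (YYYYMM): // 100 keeps the year
    contract_year = out["계약년월"] // 100
    out["경과연수"] = contract_year - out["건축년도"]
    return out


# Hypothetical toy rows
toy = pd.DataFrame({
    "전용면적(㎡)": [59.9, 84.9],
    "층": [3, 12],
    "계약년월": [202301, 202307],
    "건축년도": [2005, 1998],
})
feat = add_area_floor_age(toy)
print(feat)
```

Subtracting 건축년도 directly from a YYYYMM value would give numbers around 200,000, which is why the year is extracted first.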

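🔹 D) Merging External Data (Bus & Subway)

- bus_feature.csv and subway_feature.csv were aggregated per complex and merged on 도로명 (road name) or 시군구

- They serve as indicators of access to public transit (e.g., number of subway stations, average distance, number of lines)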
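The per-complex aggregation and join might look like the sketch below. The actual schemas of bus_feature.csv and subway_feature.csv are not shown in this post, so the one-row-per-stop layout, the `distance_m` column, and the helper names are hypothetical; 도로명 is used as the join key, one of the two keys mentioned above.

```python
import pandas as pd


def aggregate_transit(stops: pd.DataFrame, key: str, prefix: str) -> pd.DataFrame:
    """Collapse one-row-per-stop data into one row per key: stop count and mean distance."""
    agg = stops.groupby(key).agg(**{
        f"{prefix}_count": ("distance_m", "count"),
        f"{prefix}_mean_dist": ("distance_m", "mean"),
    })
    return agg.reset_index()


def merge_transit(apts, bus, subway, key="도로명"):
    """Left-join aggregated bus and subway features onto the apartment rows."""
    out = apts.merge(aggregate_transit(bus, key, "bus"), on=key, how="left")
    out = out.merge(aggregate_transit(subway, key, "subway"), on=key, how="left")
    # Keys with no nearby stop get a count of 0; their mean distance stays NaN
    for col in ("bus_count", "subway_count"):
        out[col] = out[col].fillna(0).astype(int)
    return out


# Hypothetical toy tables
apts = pd.DataFrame({"도로명": ["테헤란로", "올림픽로", "한강대로"],
                     "거래금액(만원)": [150000, 120000, 90000]})
bus = pd.DataFrame({"도로명": ["테헤란로", "테헤란로", "올림픽로"],
                    "distance_m": [120.0, 300.0, 80.0]})
subway = pd.DataFrame({"도로명": ["테헤란로"], "distance_m": [450.0]})
merged = merge_transit(apts, bus, subway)
print(merged)
```

Aggregating before the join keeps exactly one transit row per key, so the left join never duplicates apartment rows.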

🤖 Modeling

🔸 1. Baseline Modeling

- Hyperparameters: starting from the defaults, num_leaves, max_depth, learning_rate, and others were tuned with Grid Search

- Early stopping on an eval_set was used to guard against overfitting

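```python
from lightgbm import LGBMRegressor, early_stopping, log_evaluation

model = LGBMRegressor(num_leaves=64, max_depth=7, learning_rate=0.05, n_estimators=1000)

# LightGBM 4.0 removed the early_stopping_rounds argument from fit();
# early stopping is passed as a callback instead.
model.fit(
    X_train, y_train,
    eval_set=[(X_valid, y_valid)],
    callbacks=[early_stopping(stopping_rounds=50), log_evaluation(period=100)],
)
```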
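Grid Search simply evaluates every combination in a parameter grid and keeps the best one. scikit-learn's `GridSearchCV` is the usual tool; the dependency-free sketch below shows the same logic with a hypothetical `score_fn` that, in practice, would train LightGBM with the given parameters and return the validation RMSE. A toy function stands in for it here, and the grid values are illustrative, not the ones used in the competition.

```python
from itertools import product


def grid_search(param_grid, score_fn):
    """Exhaustive grid search: evaluate every combination and return the lowest score.

    score_fn(params) should return a validation error (e.g. RMSE), so lower is better.
    """
    names = list(param_grid)
    best_params, best_score = None, float("inf")
    for values in product(*(param_grid[n] for n in names)):
        params = dict(zip(names, values))
        score = score_fn(params)
        if score < best_score:
            best_params, best_score = params, score
    return best_params, best_score


# A real score_fn might look like this (requires lightgbm, numpy and the X_/y_ splits):
# def lgbm_score(params):
#     m = LGBMRegressor(n_estimators=1000, **params)
#     m.fit(X_train, y_train, eval_set=[(X_valid, y_valid)],
#           callbacks=[early_stopping(50, verbose=False)])
#     pred = m.predict(X_valid)
#     return float(np.sqrt(np.mean((pred - y_valid) ** 2)))

grid = {"num_leaves": [31, 64, 128], "max_depth": [5, 7, -1], "learning_rate": [0.03, 0.05, 0.1]}


def toy_score(p):
    # Stand-in for "train with p and return validation RMSE"
    return (abs(p["num_leaves"] - 64) + abs(p["learning_rate"] - 0.05) * 100
            + (0 if p["max_depth"] == 7 else 1))


best, score = grid_search(grid, toy_score)
print(best, score)
```

The cost grows multiplicatively with each added parameter (27 fits for this 3x3x3 grid), which is one reason frameworks that automate the search become attractive.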

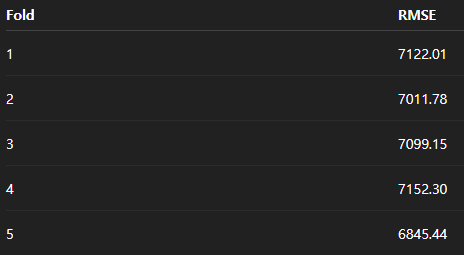

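🔸 2. Cross-Validation Strategy

- Stratified KFold: the transaction price was binned into intervals, and the bins were used for stratification

- With K=5 or K=10 folds, the mean validation RMSE across folds was computed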
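Stratifying on a continuous target means binning it first. In practice the bin labels can be passed as `y` to scikit-learn's `StratifiedKFold`; the NumPy sketch below performs the same kind of assignment by hand so the idea is visible. Quantile binning, `n_bins=10`, and the helper names are assumptions, and `cv_rmse` expects out-of-fold predictions.

```python
import numpy as np


def stratified_fold_ids(y, n_folds=5, n_bins=10, seed=0):
    """Assign a fold id to each row so every price bin is spread evenly across folds."""
    y = np.asarray(y, dtype=float)
    # Interior quantile edges, so np.digitize returns bin ids 0..n_bins-1
    edges = np.quantile(y, np.linspace(0, 1, n_bins + 1)[1:-1])
    bins = np.digitize(y, edges)
    rng = np.random.default_rng(seed)
    folds = np.empty(len(y), dtype=int)
    for b in np.unique(bins):
        idx = np.flatnonzero(bins == b)
        rng.shuffle(idx)
        # Round-robin inside the bin: each fold gets about the same share of this bin
        folds[idx] = np.arange(len(idx)) % n_folds
    return folds


def cv_rmse(y_true, y_pred, folds):
    """Mean of per-fold RMSE values, given out-of-fold predictions."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    per_fold = [np.sqrt(np.mean((y_true[folds == k] - y_pred[folds == k]) ** 2))
                for k in np.unique(folds)]
    return float(np.mean(per_fold))


y = np.arange(100, dtype=float)
folds = stratified_fold_ids(y, n_folds=5, n_bins=10)
print(np.bincount(folds), cv_rmse(y, y + 1.0, folds))
```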

🔸 3. Ensembling for Better Performance

- Predictions from individual models (LGBM, XGB, CatBoost) were combined with a weighted average

- e.g., final_pred = 0.5 * lgb_pred + 0.3 * xgb_pred + 0.2 * cat_pred
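The weighted average above is a single weights-times-predictions product. A minimal NumPy sketch with made-up prediction values in 만원; the weights are the example ones from the text, and in practice they would be chosen by comparing validation RMSE.

```python
import numpy as np


def weighted_blend(preds, weights):
    """Weighted average of model predictions; weights are normalized to sum to 1."""
    preds = np.asarray(preds, dtype=float)  # shape: (n_models, n_samples)
    w = np.asarray(weights, dtype=float)
    if preds.shape[0] != w.shape[0]:
        raise ValueError("one weight per model is required")
    w = w / w.sum()
    return w @ preds  # shape: (n_samples,)


# Hypothetical predictions for two apartments
lgb_pred = np.array([85000.0, 120000.0])
xgb_pred = np.array([87000.0, 118000.0])
cat_pred = np.array([90000.0, 121000.0])
final_pred = weighted_blend([lgb_pred, xgb_pred, cat_pred], [0.5, 0.3, 0.2])
print(final_pred)
```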

🔸 4. Applying AutoML (AutoGluon)

- AutoGluon automatically trains multiple models and applies stacking and bagging

- A single .fit() call trains, evaluates, and combines dozens of models internally

```python
from autogluon.tabular import TabularPredictor

# Regression on 거래금액(만원), with RMSE as the validation metric
predictor = TabularPredictor(label="거래금액(만원)", eval_metric="rmse").fit(train_data=train_df)
```

✅ Performance Example (LightGBM)

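⚙️ Applying AutoML (AutoGluon)

In the final stage I used AutoGluon, Amazon's AutoML framework. It combines multiple models (LGBM, XGB, NeuralNet, etc.) and automatically builds Stacking/Bagging ensembles on top of them.

[Pros]

- Solid performance without elaborate hyperparameter tuning

- Stacking ensembles built from a diverse set of models

- Automatic validation-set splitting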

```python
from autogluon.tabular import TabularPredictor

predictor = TabularPredictor(label="거래금액(만원)").fit(train_data=train_df)
```

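🔍 Retrospective and Wrap-up

- EDA and feature engineering took the most time, and they also seemed to matter the most

- Using AutoML showed me how important it is to understand baseline models and compare against them

- Next, I plan to try refinements such as accounting for the time-series structure or training separate models per region

- Working with real-estate data, which is closely tied to everyday life, gave me a concrete sense of how machine learning can support decision-making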