nn.Softmax

import torch

from torch import nn

a = torch.tensor( [1,2,4,3,5,5], dtype=torch.float32)

a = a.view(3,2)

print(a)

print(a.shape)

# tensor([[1., 2.],

# [4., 3.],

# [5., 5.]])

# torch.Size([3, 2])

softmax = nn.Softmax(dim=0)

softmax_a = softmax(a)

print(softmax_a)

# tensor([[0.0132, 0.0420],

# [0.2654, 0.1142],

# [0.7214, 0.8438]])

dim=0: softmax is computed across the rows, i.e. down each column.

softmax_a.sum(dim=0)

# tensor([1., 1.])

Because softmax was computed across the rows, the values in each column sum to 1.
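To make the dim=0 arithmetic concrete, the sketch below recomputes the same numbers in plain Python with `math.exp` instead of `nn.Softmax`. The helper `softmax` and the names `softmax_dim0` and `softmax_dim1` are hypothetical and introduced only for illustration. The dim=1 result is included for contrast: there each row, rather than each column, sums to 1.

```python
import math

def softmax(values):
    """Return exp(v) / sum(exp(v)) for a list of floats."""
    exps = [math.exp(v) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

a = [[1.0, 2.0], [4.0, 3.0], [5.0, 5.0]]  # same values as the tensor above

# dim=0: normalize down each column (across the rows)
columns = [softmax(list(col)) for col in zip(*a)]
softmax_dim0 = [list(row) for row in zip(*columns)]

# dim=1: normalize along each row (across the columns)
softmax_dim1 = [softmax(row) for row in a]

print([[round(v, 4) for v in row] for row in softmax_dim0])
# [[0.0132, 0.042], [0.2654, 0.1142], [0.7214, 0.8438]]
print([[round(v, 4) for v in row] for row in softmax_dim1])
# [[0.2689, 0.7311], [0.7311, 0.2689], [0.5, 0.5]]
```

The first printed result matches the `nn.Softmax(dim=0)` output shown earlier, apart from trailing zeros.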

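model parameter optimization

- Compute the loss with the loss function

- Backpropagation

- optimizer.zero_grad()

: backward() accumulates (+=) each new gradient into the existing one, so the gradients must be reset to zero before every backward pass.

- loss.backward()

: Computes the derivative of the loss with respect to each parameter and stores it as that parameter's gradient.

- optimizer.step()

: Adjusts the parameters based on the stored gradients.

- autograd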

loss.backward()

h : an expression that contains the tensor variable w

h.backward() : computes the derivative of h with respect to w

The result of h.backward() is stored in w.grad.
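A minimal sketch of this behavior, assuming a scalar tensor `w` created with `requires_grad=True` and an arbitrary expression `h = w**2 + 2*w` chosen only for illustration. It also shows why the gradients need resetting: a second `backward()` call adds to `w.grad` instead of replacing it. The names `first_grad`, `accumulated_grad`, and `reset_grad` are hypothetical.

```python
import torch

# w is a hypothetical parameter; requires_grad=True tells autograd to track it
w = torch.tensor(3.0, requires_grad=True)

h = w ** 2 + 2 * w       # dh/dw = 2w + 2, which is 8 at w = 3
h.backward()             # the derivative is written into w.grad
first_grad = w.grad.item()
print(first_grad)        # 8.0

# A second backward pass adds to w.grad instead of overwriting it
h = w ** 2 + 2 * w
h.backward()
accumulated_grad = w.grad.item()
print(accumulated_grad)  # 16.0

# Manual reset, playing the role of optimizer.zero_grad() for this one tensor
w.grad.zero_()
reset_grad = w.grad.item()
print(reset_grad)        # 0.0
```

Depending on the PyTorch version, `optimizer.zero_grad()` may set `.grad` to `None` rather than filling it with zeros; either way the next `backward()` starts from a clean gradient.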

- autograd

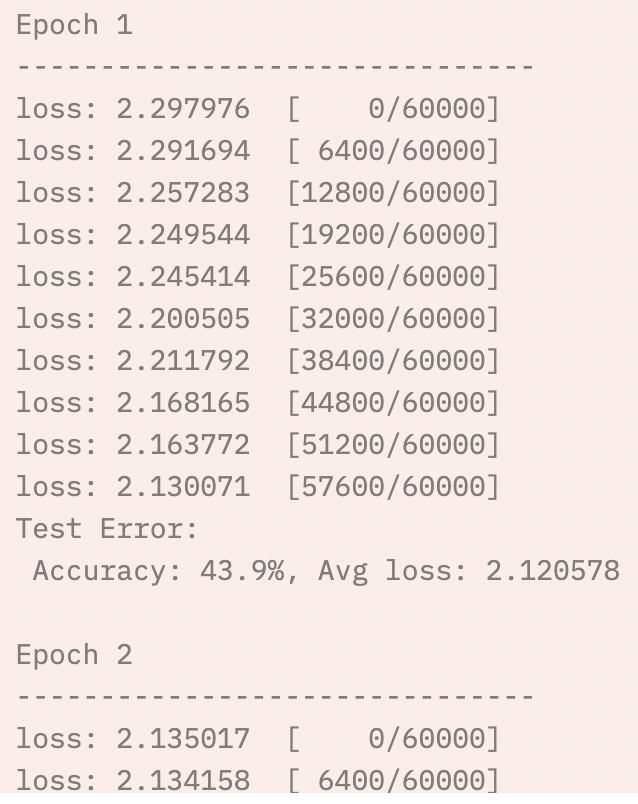

def train_loop(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)
    for batch, (X, y) in enumerate(dataloader):
        # Compute the prediction and the loss
        pred = model(X)
        loss = loss_fn(pred, y)

        # Backpropagation
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        # Report progress every 100 batches
        if batch % 100 == 0:
            loss, current = loss.item(), batch * len(X)
            print(f"loss: {loss:>7f} [{current:>5d}/{size:>5d}]")

def test_loop(dataloader, model, loss_fn):
    size = len(dataloader.dataset)
    num_batches = len(dataloader)
    test_loss, correct = 0, 0

    # Gradients are not needed for evaluation
    with torch.no_grad():
        for X, y in dataloader:
            pred = model(X)
            test_loss += loss_fn(pred, y).item()
            # Count the samples whose highest-scoring class matches the label
            correct += (pred.argmax(1) == y).type(torch.float).sum().item()

    # Average the loss over batches and the correct count over samples
    test_loss /= num_batches
    correct /= size
    print(f"Test Error: \n Accuracy: {(100*correct):>0.1f}%, Avg loss: {test_loss:>8f} \n")
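The accuracy line in test_loop packs several steps into one expression. The sketch below unpacks it on a small hand-made batch; the logits in `pred` and the labels in `y` are made-up values, not data from these notes.

```python
import torch

# Hypothetical logits for 4 samples and 3 classes, plus their true labels
pred = torch.tensor([[2.0, 0.5, 0.1],
                     [0.2, 1.5, 0.3],
                     [0.1, 0.2, 3.0],
                     [1.0, 0.9, 0.8]])
y = torch.tensor([0, 1, 1, 0])

predicted_class = pred.argmax(1)   # index of the largest logit in each row
matches = predicted_class == y     # boolean tensor, True where the guess is right
correct = matches.type(torch.float).sum().item()
accuracy = correct / len(y)

print(predicted_class)  # tensor([0, 1, 2, 0])
print(correct)          # 3.0
print(accuracy)         # 0.75
```

In test_loop the same count is accumulated over all batches and divided by the dataset size at the end.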

loss_fn = nn.CrossEntropyLoss()

optimizer = torch.optim.SGD(model.parameters(), lr=learning_rate)

epochs = 10

for t in range(epochs):
    print(f"Epoch {t+1}\n-------------------------------")
    train_loop(train_dataloader, model, loss_fn, optimizer)
    test_loop(test_dataloader, model, loss_fn)

print("Done!")
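The loop above relies on `model`, `learning_rate`, `train_dataloader`, and `test_dataloader`, none of which are defined in these notes. The sketch below fills them in with placeholders so the whole flow can be run end to end: random synthetic data, a small `nn.Sequential` classifier, and `learning_rate = 1e-2` are all assumptions, and `run_epoch` is a hypothetical helper that condenses the train and test steps into one function.

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)

# Synthetic stand-in data: 256 samples, 20 features, 3 classes (all hypothetical)
X = torch.randn(256, 20)
y = torch.randint(0, 3, (256,))
train_dataloader = DataLoader(TensorDataset(X[:192], y[:192]), batch_size=32, shuffle=True)
test_dataloader = DataLoader(TensorDataset(X[192:], y[192:]), batch_size=32)

# A small placeholder classifier; the original model is not shown in these notes
model = nn.Sequential(nn.Linear(20, 16), nn.ReLU(), nn.Linear(16, 3))
learning_rate = 1e-2  # assumed value

loss_fn = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=learning_rate)

def run_epoch():
    """One training pass followed by evaluation; returns (avg test loss, accuracy)."""
    model.train()
    for xb, yb in train_dataloader:
        loss = loss_fn(model(xb), yb)
        optimizer.zero_grad()   # clear gradients left over from the previous batch
        loss.backward()         # compute fresh gradients
        optimizer.step()        # update the parameters

    model.eval()
    test_loss, correct = 0.0, 0.0
    with torch.no_grad():
        for xb, yb in test_dataloader:
            pred = model(xb)
            test_loss += loss_fn(pred, yb).item()
            correct += (pred.argmax(1) == yb).type(torch.float).sum().item()
    return test_loss / len(test_dataloader), correct / len(test_dataloader.dataset)

history = [run_epoch() for _ in range(3)]
for epoch, (avg_loss, acc) in enumerate(history, start=1):
    print(f"Epoch {epoch}: avg loss {avg_loss:.4f}, accuracy {100 * acc:.1f}%")
```

Because the labels here are random, the accuracy should hover around chance; the point is only to show which objects the training loop expects and how they fit together.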