On the EKS instance

sudo su -

eksctl create cluster --name 4gl0717 --region ap-northeast-2 --node-type t2.small

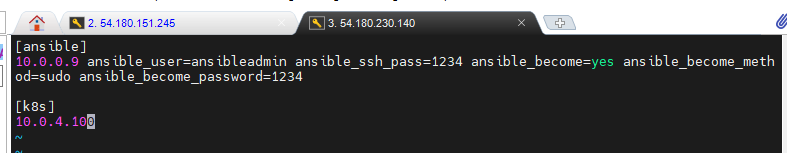

Ansible instance

vi /etc/ansible/hosts

```ini
[ansible]
10.0.0.9 ansible_user=ansibleadmin ansible_ssh_pass=1234 ansible_become=yes ansible_become_method=sudo ansible_become_password=1234

[k8s]
10.0.4.100
```

EKS instance

vi /etc/ssh/sshd_config

- Line 65: change it to PasswordAuthentication yes

systemctl restart sshd
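You can script this change with sed instead of editing line 65 by hand, because the line number may differ between AMI versions. The sketch below assumes GNU sed, as shipped on Amazon Linux. `enable_password_auth` is a hypothetical helper name. It rewrites every PasswordAuthentication line, commented out or not, to `yes` and keeps a `.bak` copy of the original file.

```bash
# Hypothetical helper: switch PasswordAuthentication to "yes" in an sshd config file.
# Matches the directive whether or not it is commented out; keeps a .bak copy (GNU sed).
enable_password_auth() {
  local conf="${1:-/etc/ssh/sshd_config}"
  sed -i.bak -E 's/^#?PasswordAuthentication[[:space:]].*/PasswordAuthentication yes/' "$conf"
}
```

Run it as root with `enable_password_auth && sshd -t && systemctl restart sshd`. `sshd -t` only validates the configuration and exits non-zero on an error, so sshd is not restarted with a broken config.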

useradd ansibleadmin

passwd ansibleadmin (password: 1234)

visudo

- Add the line: ansibleadmin ALL=(ALL) NOPASSWD: ALL
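Instead of editing /etc/sudoers in visudo, you can put the same rule in a drop-in file. This sketch assumes /etc/sudoers contains the default includedir line for /etc/sudoers.d, as most distributions ship it. `add_nopasswd_sudo` is a hypothetical helper name. The file gets mode 0440, the conventional read-only mode for sudoers files.

```bash
# Hypothetical helper: grant a user passwordless sudo through /etc/sudoers.d
# (alternative to editing /etc/sudoers with visudo). Second argument overrides the directory.
add_nopasswd_sudo() {
  local user="$1" dir="${2:-/etc/sudoers.d}"
  printf '%s ALL=(ALL) NOPASSWD: ALL\n' "$user" > "$dir/$user" || return 1
  chmod 0440 "$dir/$user"
}
```

Run `add_nopasswd_sudo ansibleadmin`, then check the syntax with `visudo -cf /etc/sudoers.d/ansibleadmin`. sudo skips files in that directory whose names contain a dot or end in `~`, so a plain user name is a safe file name.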

Ansible instance

sudo su ansibleadmin

ssh-keygen

ssh-copy-id ansibleadmin@10.0.4.100 (the EKS instance's private IP)

ansible all -m ping

EKS instance

vi k8s_app.yaml

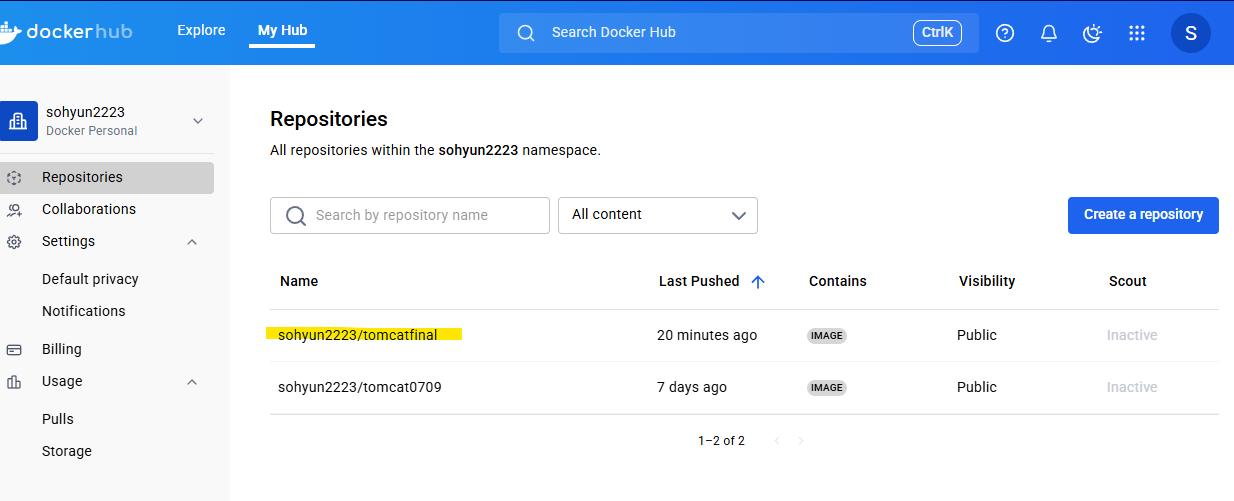

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: my4glapp
  labels:
    app: my4glapp
spec:
  replicas: 2
  selector:
    matchLabels:
      app: my4glapp
  template:
    metadata:
      labels:
        app: my4glapp
    spec:
      containers:
        - name: my4glapp
          image: sohyun2223/tomcatfinal
          imagePullPolicy: Always
          ports:
            - containerPort: 8080
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1
      maxUnavailable: 1
```

vi k8s_svc.yaml

```yaml
apiVersion: v1
kind: Service
metadata:
  name: elbk8s
  labels:
    app: my4glapp
spec:
  selector:
    app: my4glapp
  ports:
    - port: 8080
      targetPort: 8080
  type: LoadBalancer
```

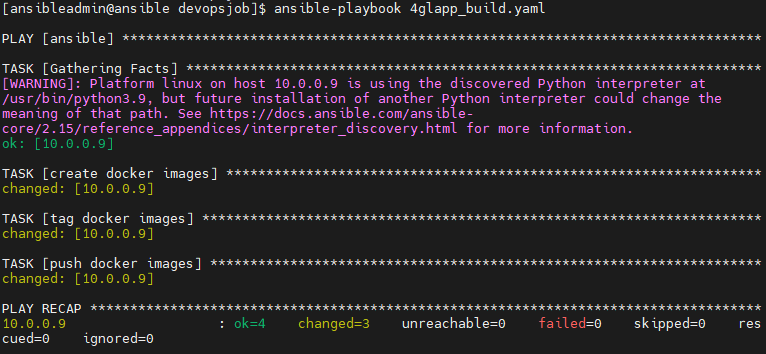

Ansible instance

cd /opt/devopsjob/

ansible-playbook 4glapp_build.yaml

cat 4glapp_build.yaml

```yaml
---
- hosts: ansible
  tasks:
    - name: create docker images
      shell: docker build -t tomcatfinal .
      args:
        chdir: /opt/devopsjob
    - name: tag docker images
      shell: docker tag tomcatfinal sohyun2223/tomcatfinal
    - name: push docker images
      shell: docker push sohyun2223/tomcatfinal
```
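For reference, the three tasks in 4glapp_build.yaml amount to the commands below, run on the `ansible` host. This is only a sketch: `build_and_push` is a hypothetical wrapper. It assumes the Dockerfile sits in /opt/devopsjob and that `docker login` to Docker Hub has already been done on that host, since pushing requires it and the notes do not show that step.

```bash
# Hypothetical wrapper mirroring the playbook's tasks: build, tag, push.
# Assumes a Dockerfile in $dir and an existing Docker Hub login.
build_and_push() {
  local dir="${1:-/opt/devopsjob}" repo="${2:-sohyun2223/tomcatfinal}"
  ( cd "$dir" && docker build -t tomcatfinal . ) || return 1
  docker tag tomcatfinal "$repo" || return 1
  docker push "$repo"
}
```

The image is always pushed as sohyun2223/tomcatfinal with no explicit tag, so Docker uses `latest` every time. This matters later, when the Deployment has to be told to pick up the new image.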

EKS instance

kubectl apply -f k8s_app.yaml

kubectl apply -f k8s_svc.yaml

watch kubectl get pod

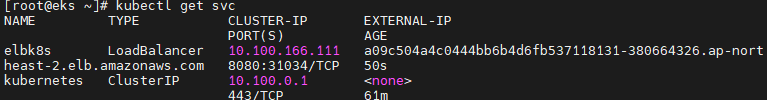

kubectl get svc

Confirm that the pods come up with STATUS Running.

The service also shows the load balancer's address in the EXTERNAL-IP column.
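Both checks can be scripted, as in the minimal sketch below. `running_pods` counts pods labeled app=my4glapp whose STATUS is Running. `lb_url` reads the load balancer hostname from the Service status; on EKS, the AWS load balancer is reported as a hostname, not an IP. Both function names are hypothetical. `kubectl rollout status deployment/my4glapp` is the built-in way to wait for the Deployment itself.

```bash
# Hypothetical helper: count pods matching a label selector whose STATUS column is "Running".
running_pods() {
  local selector="${1:-app=my4glapp}"
  kubectl get pods -l "$selector" --no-headers | awk '$3 == "Running" {n++} END {print n+0}'
}

# Hypothetical helper: print http://<ELB hostname>:<port> for a LoadBalancer Service,
# or fail if AWS has not assigned a hostname yet.
lb_url() {
  local svc="${1:-elbk8s}" port="${2:-8080}" host
  host=$(kubectl get svc "$svc" -o jsonpath='{.status.loadBalancer.ingress[0].hostname}')
  if [ -z "$host" ]; then
    echo "no load balancer hostname for $svc yet" >&2
    return 1
  fi
  printf 'http://%s:%s\n' "$host" "$port"
}
```

For example, `[ "$(running_pods)" -eq 2 ] && url=$(lb_url) && curl -I "$url"` checks that both replicas are running and then hits Tomcat through the load balancer. Right after `kubectl apply`, EXTERNAL-IP can still show `<pending>`, so `lb_url` may need a retry.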

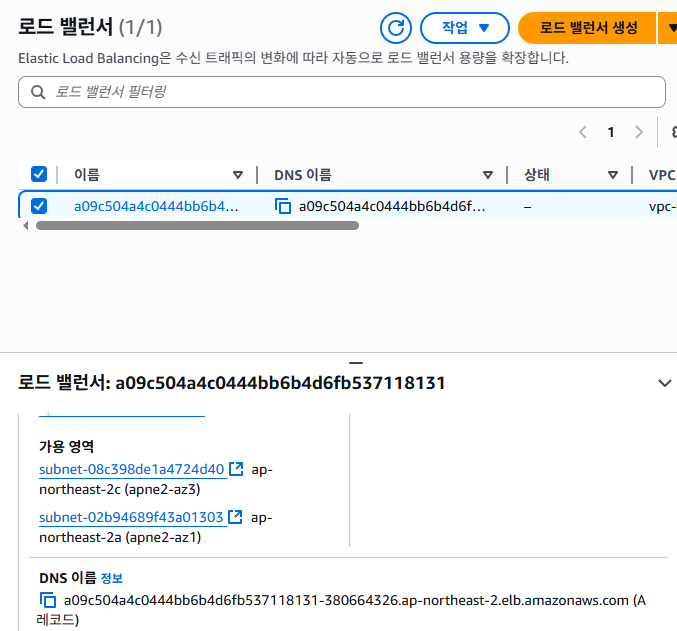

The newly created load balancer can also be seen in the AWS console.

EKS instance

Now that everything checks out, delete both resources.

kubectl delete -f k8s_app.yaml

kubectl delete -f k8s_svc.yaml

Ansible instance

Write a playbook so that Ansible applies these manifests.

sudo su - ansibleadmin

cd /opt/devopsjob

vi k8s_deploy.yaml

```yaml
---
- hosts: k8s
  become: yes
  tasks:
    - name: deploy app on k8s
      shell: /root/bin/kubectl apply -f /root/k8s_app.yaml
    - name: deploy svc on k8s
      shell: /root/bin/kubectl apply -f /root/k8s_svc.yaml
```

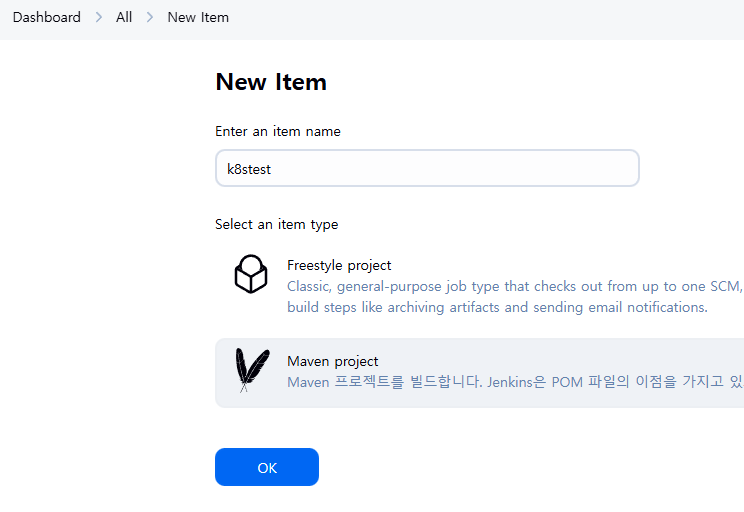

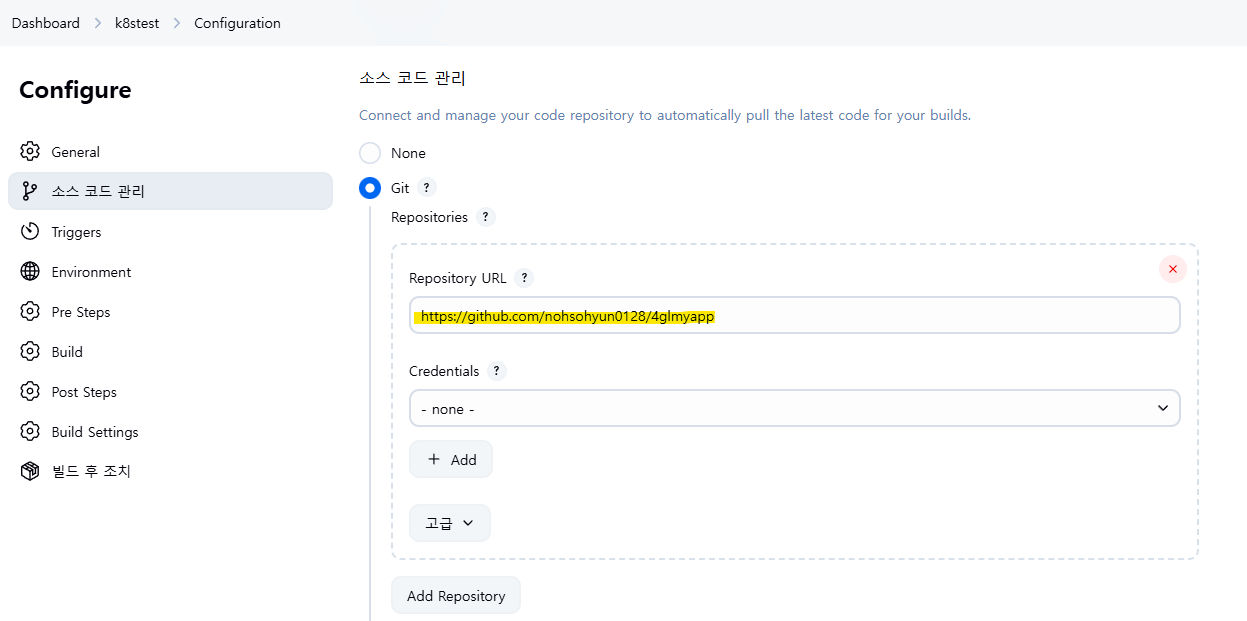

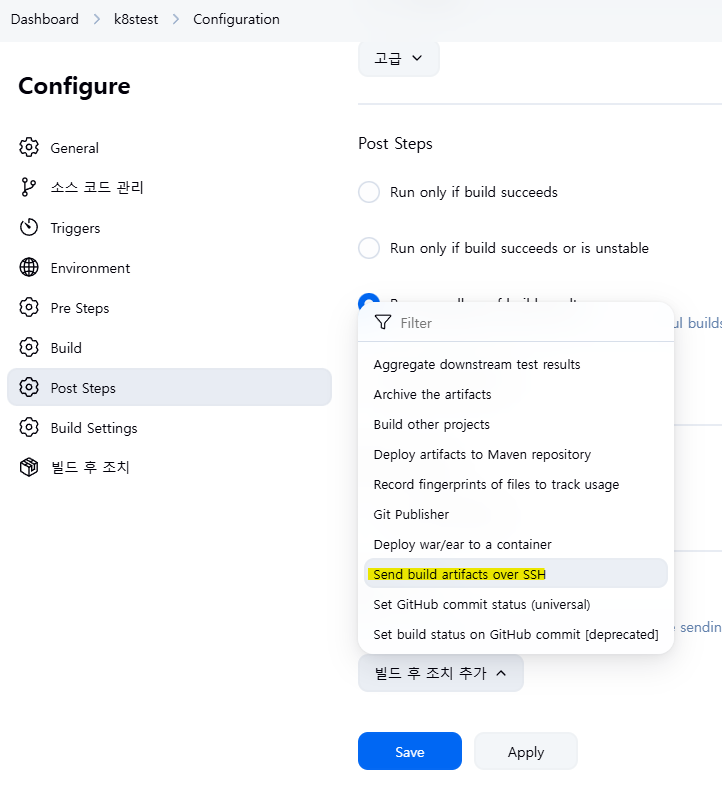

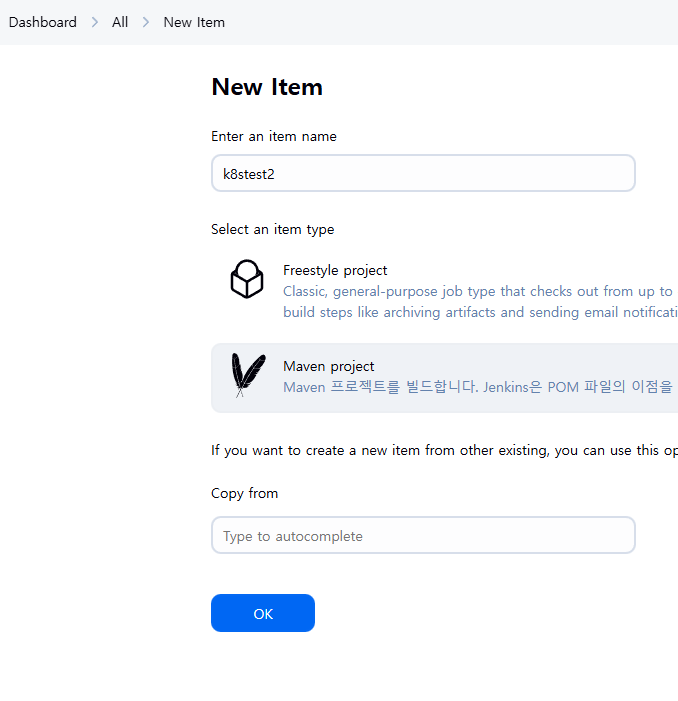

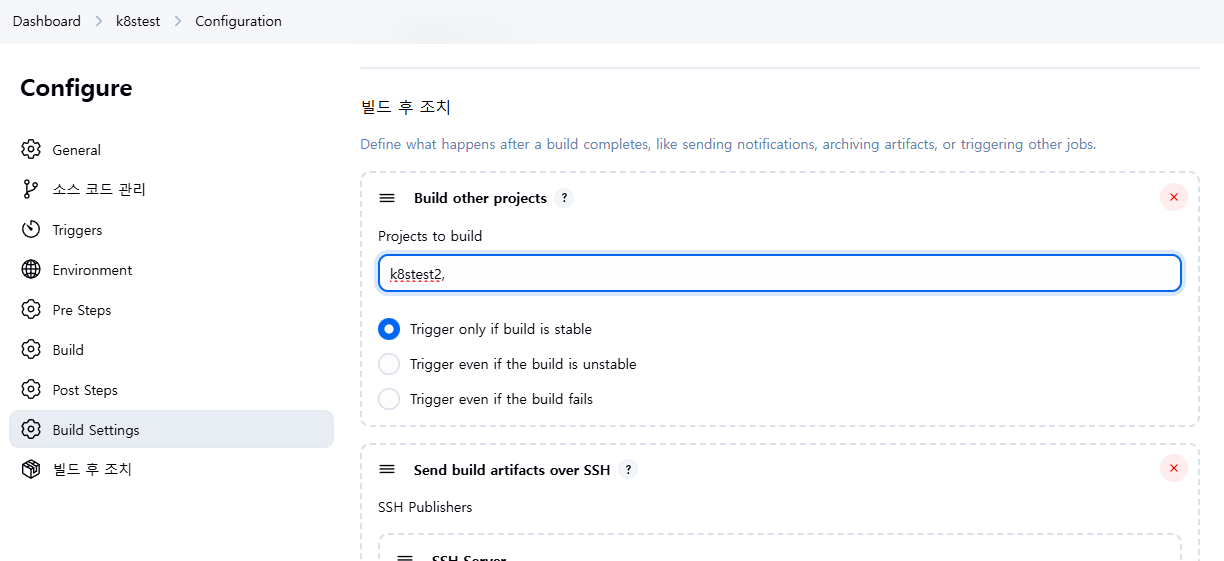

Open Jenkins at http://(Jenkins public IP):8080

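Jenkins job settings:

- Goals and options: clean package
- Source files: target/*.war
- Remove prefix: target
- Remote directory: //opt/devopsjob
- Exec command: ansible-playbook /opt/devopsjob/4glapp_build.yaml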

sudo vi k8s_deploy.yaml

```yaml
---
- hosts: k8s
  become: yes
  tasks:
    - name: deploy app on k8s
      shell: /root/bin/kubectl apply -f /root/k8s_app.yaml
    - name: deploy svc on k8s
      shell: /root/bin/kubectl apply -f /root/k8s_svc.yaml
    - name: rollout update on k8s
      shell: /root/bin/kubectl rollout restart deployment.apps/my4glapp
```

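I missed what this last task is for. It restarts the Deployment. The manifests are identical between builds, so kubectl apply alone leaves the old pods running. rollout restart replaces them through a rolling update, and because of imagePullPolicy: Always, the new pods pull the image that was just pushed to Docker Hub.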
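You can run the same restart by hand from the EKS instance. The sketch below uses a hypothetical `redeploy` helper. It restarts the Deployment and then waits for the rollout to finish with `kubectl rollout status`, assuming a 180-second timeout is enough for two Tomcat pods.

```bash
# Hypothetical helper: restart a Deployment's pods and wait for the rolling update to finish.
# The 180s default timeout is an assumption, not a value from the original setup.
redeploy() {
  local deploy="${1:-my4glapp}" timeout="${2:-180s}"
  kubectl rollout restart "deployment.apps/$deploy" || return 1
  kubectl rollout status "deployment.apps/$deploy" --timeout="$timeout"
}
```

`kubectl rollout restart` works by stamping a `kubectl.kubernetes.io/restartedAt` annotation on the pod template. That changes the template and starts a rolling update under the maxSurge/maxUnavailable settings in k8s_app.yaml.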

EKS instance

eksctl delete cluster --name 4gl0717 --region ap-northeast-2

Now shut down everything that is still costing money.
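Here is a teardown sketch for the end of the lab, using a hypothetical `teardown` helper. It first deletes the LoadBalancer Service, so that Kubernetes removes the AWS load balancer it created while the cluster is still up. It then deletes the Deployment and finally the cluster. The cluster name and region default to the values used above. Treat this as a precaution, and still check the EC2 Load Balancers page in the AWS console afterwards.

```bash
# Hypothetical helper: remove Kubernetes objects first, then the EKS cluster.
teardown() {
  local cluster="${1:-4gl0717}" region="${2:-ap-northeast-2}"
  # Deleting the LoadBalancer Service lets Kubernetes delete the AWS load balancer it created.
  kubectl delete svc elbk8s --ignore-not-found
  kubectl delete deployment my4glapp --ignore-not-found
  eksctl delete cluster --name "$cluster" --region "$region"
}
```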