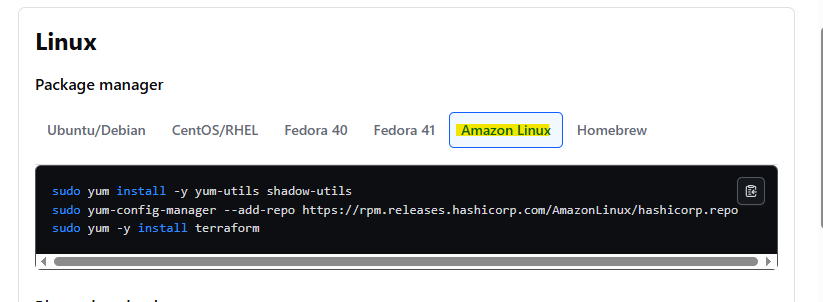

Installing Terraform

https://developer.hashicorp.com/terraform/install#linux

1. Installing and initializing Terraform

✅ Install (on Amazon Linux 2023)

sudo yum install -y yum-utils shadow-utils

sudo yum-config-manager --add-repo https://rpm.releases.hashicorp.com/AmazonLinux/hashicorp.repo

sudo yum -y install terraform

✅ Verify the installation

terraform -v
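If the installation is scripted (for example on a fresh EC2 build host), a small guard can confirm that `terraform` is on the `PATH` and recent enough before anything else runs. This is a sketch: the minimum version `1.5.0` is an arbitrary placeholder, not a requirement of this setup, and it assumes GNU `sort -V` plus a first line of `terraform version` output that reads like `Terraform v1.9.5`.

```shell
#!/usr/bin/env bash

# version_ge A B: succeeds (exit 0) when version A >= version B.
# GNU `sort -V` orders version strings numerically (1.9.0 < 1.10.0).
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# Prints the installed Terraform version, e.g. "1.9.5".
terraform_version() {
  terraform version | head -n 1 | sed 's/^Terraform v//'
}

# Hypothetical minimum; adjust to what your configuration needs.
MIN_TF_VERSION="1.5.0"

if command -v terraform >/dev/null 2>&1; then
  current="$(terraform_version)"
  if version_ge "$current" "$MIN_TF_VERSION"; then
    echo "Terraform $current OK (>= $MIN_TF_VERSION)"
  else
    echo "Terraform $current is older than $MIN_TF_VERSION" >&2
  fi
else
  echo "terraform not found in PATH" >&2
fi
```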

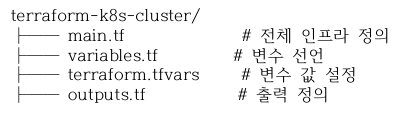

2. Project directory structure

mkdir terraform-k8s-cluster

touch terraform-k8s-cluster/main.tf

touch terraform-k8s-cluster/variables.tf

touch terraform-k8s-cluster/terraform.tfvars

touch terraform-k8s-cluster/outputs.tf
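The same layout can be created in one step. Since `main.tf` reads `var.region`, `var.key_name` and `var.public_key_path`, `variables.tf` has to declare those three inputs. A minimal sketch that also fills in `variables.tf` and `terraform.tfvars` (and leaves `outputs.tf` empty); the region default `ap-northeast-2` follows from the availability zones used in `main.tf`, while the key name `k8s-key` and the path `keys/k8s-key.pub` are hypothetical placeholders. It overwrites both files if they already exist.

```shell
#!/usr/bin/env bash
set -eu

PROJECT_DIR="terraform-k8s-cluster"
mkdir -p "$PROJECT_DIR"
touch "$PROJECT_DIR/main.tf" "$PROJECT_DIR/outputs.tf"

# variables.tf: the three inputs referenced from main.tf
cat > "$PROJECT_DIR/variables.tf" <<'EOF'
variable "region" {
  description = "AWS region to deploy into"
  type        = string
  default     = "ap-northeast-2"
}

variable "key_name" {
  description = "Name of the EC2 key pair created by aws_key_pair.k8s_key"
  type        = string
}

variable "public_key_path" {
  description = "Local path to the SSH public key uploaded as that key pair"
  type        = string
}
EOF

# terraform.tfvars: hypothetical placeholder values, replace with your own
cat > "$PROJECT_DIR/terraform.tfvars" <<'EOF'
region          = "ap-northeast-2"
key_name        = "k8s-key"
public_key_path = "keys/k8s-key.pub"
EOF
```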

3. main.tf

Reference: kubeadm installation guide (Kubernetes v1.32): https://v1-32.docs.kubernetes.io/docs/setup/production-environment/tools/kubeadm/install-kubeadm/

provider "aws" {
  region = var.region
}

locals {

master_script = <<-EOM

#!/bin/bash

set -eux

cat <<'EOSH' > /root/master.sh

#!/bin/bash

set -eux

swapoff -a

sed -i '/ swap / s/^/#/' /etc/fstab

dnf install -y containerd

containerd config default > /etc/containerd/config.toml

sed -i 's/SystemdCgroup = false/SystemdCgroup = true/' /etc/containerd/config.toml

systemctl --now enable containerd

cat <<MODS > /etc/modules-load.d/k8s.conf

overlay

br_netfilter

MODS

modprobe overlay

modeprobe br_netfilter

cat <<SYSCTL> /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

SYSCTL

sysctl --system

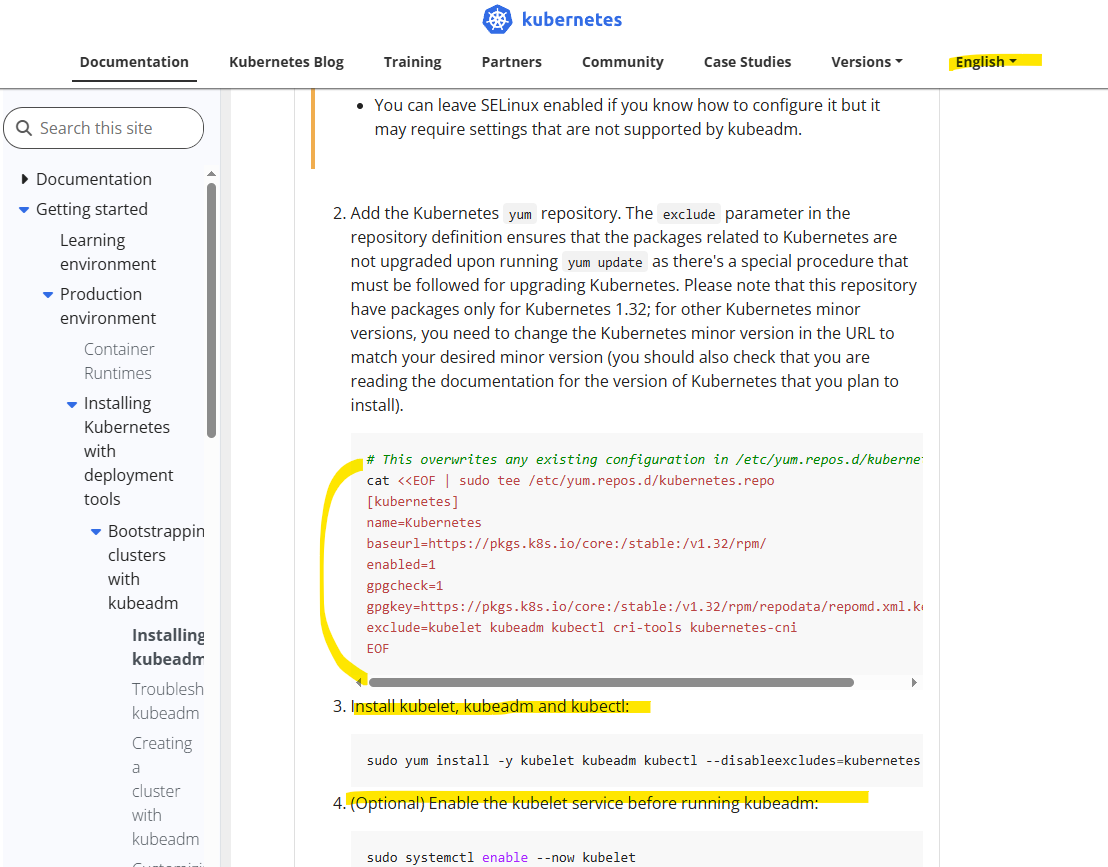

cat <<REPO | sudo tee /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://pkgs.k8s.io/core:/stable:/v1.32/rpm/

enabled=1

gpgcheck=1

gpgkey=https://pkgs.k8s.io/core:/stable:/v1.32/rpm/repodata/repomd.xml.key

exclude=kubelet kubeadm kubectl cri-tools kubernetes-cni

REPO

yum install -y kubelet kubeadm kubectl --disableexcludes=kubernetes

systemctl --now enable kubelet

kubeadm init --ignore-preflight -erros=NumCPU --ignore-preflight-errors= Mem > /root/init.log

mkdir -p /root/.kube

cp -i /etc/kubernetes/admin.conf /root/.kube/config

chown root:root /root/.kube/config

export KUBECONFIG=/etc/kubernetes/admin.conf

until KUBECONFIG=/etc/kubernetes/admin.conf kubectl get nodes > /dev/null/ 2>&1;

do

echo "Waiting for API server to respond..."

sleep 5

done

echo "Installing CNI(Weave) ..."

for i in {1..5}; do

if KUBECONFIG=/etc/kubernetes/admin.conf kubectl apply -f https://github.com/weaveworks/weave/releases/download/v2.8.1/weave-daemonset-k8s.yaml; then

echo "Weave CNI Install Success"

break

else

echo "Weave Install Failed, retry in 5 seconds..."

sleep 5

fi

done

echo "Master setup complete."

EOSH

chmod +x /root/master.sh

/root/master.sh

EOM

worker_script = <<-EOW

#!/bin/bash

set -eux

cat <<'EOSH' > /root/worker.sh

#!/bin/bash

set -eux

swapoff -a

sed -i '/ swap / s/^/#/' /etc/fstab

dnf install -y containerd

containerd config default > /etc/containerd/config.toml

sed -i 's/SystemdCgroup = false/SystemdCgroup = true/' /etc/containerd/config.toml

systemctl --now enable containerd

cat <<MODS > /etc/modules-load.d/k8s.conf

overlay

br_netfilter

MODS

modprobe overlay

modeprobe br_netfilter

cat <<SYSCTL> /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-ip6tables = 1

SYSCTL

sysctl --system

cat <<REPO | sudo tee /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://pkgs.k8s.io/core:/stable:/v1.32/rpm/

enabled=1

gpgcheck=1

gpgkey=https://pkgs.k8s.io/core:/stable:/v1.32/rpm/repodata/repomd.xml.key

exclude=kubelet kubeadm kubectl cri-tools kubernetes-cni

REPO

yum install -y kubelet kubeadm kubectl --disableexcludes=kubernetes

systemctl --now enable kubelet

echo "Worker node setup complete. Waiting for join."

EOSH

chmod +x /root/worker.sh

/root/worker.sh

EOW

}
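Both bootstrap scripts repeat the same node preparation, and the master script uses two hand-written retry loops, one of which (the API-server wait) has no upper bound. The sketch below factors those loops into a bounded `retry` helper and adds `check_node_prereqs` for verifying, over SSH on a node, that the kernel settings actually took effect. Both function names are hypothetical; `check_node_prereqs` accepts the `/proc/sys` directory and the `/proc/swaps` file as optional arguments only so it can be exercised against a fake directory.

```shell
#!/usr/bin/env bash

# retry MAX DELAY CMD...: run CMD up to MAX times, sleeping DELAY seconds
# after each failure. Returns 0 on the first success, 1 if every attempt fails.
retry() {
  local max="$1" delay="$2" attempt=1
  shift 2
  until "$@"; do
    if [ "$attempt" -ge "$max" ]; then
      echo "retry: '$*' failed after $attempt attempts" >&2
      return 1
    fi
    echo "retry: attempt $attempt/$max failed, retrying in $delay seconds..." >&2
    attempt=$((attempt + 1))
    sleep "$delay"
  done
}

# check_node_prereqs [SYS_DIR] [SWAPS_FILE]: report kernel settings the
# bootstrap scripts should have applied. Defaults: /proc/sys and /proc/swaps.
check_node_prereqs() {
  local sys="${1:-/proc/sys}" swaps="${2:-/proc/swaps}" status=0 key
  for key in net/bridge/bridge-nf-call-iptables \
             net/bridge/bridge-nf-call-ip6tables \
             net/ipv4/ip_forward; do
    # The net/bridge entries only exist once br_netfilter is loaded.
    if [ "$(cat "$sys/$key" 2>/dev/null)" != "1" ]; then
      echo "check: $key is not 1" >&2
      status=1
    fi
  done
  # /proc/swaps starts with a header line; any further line is active swap.
  if [ "$(wc -l < "$swaps")" -gt 1 ]; then
    echo "check: swap is still enabled" >&2
    status=1
  fi
  return "$status"
}
```

In `master.sh`, the API-server wait could then become `retry 60 5 kubectl get nodes` (about five minutes at most) and the Weave step `retry 5 5 kubectl apply -f` followed by the manifest URL. Because the script runs under `set -e`, a final failure of `retry` aborts the bootstrap instead of printing "Master setup complete." The `retry` function avoids `${...}`, so it can be pasted into the `<<-EOM` heredoc unchanged; any `${var}` added there has to be written as `$${var}`, or Terraform will try to interpolate it.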

resource "aws_vpc" "k8s_vpc" {
  cidr_block           = "10.0.0.0/16"
  enable_dns_support   = true
  enable_dns_hostnames = true

  tags = {
    Name = "k8s-vpc"
  }
}

resource "aws_subnet" "public_subnet" {
  vpc_id                  = aws_vpc.k8s_vpc.id
  cidr_block              = "10.0.1.0/24"
  availability_zone       = "ap-northeast-2a"
  map_public_ip_on_launch = true

  tags = {
    Name = "k8s-public-subnet"
  }
}

resource "aws_subnet" "public_subnet_2" {
  vpc_id                  = aws_vpc.k8s_vpc.id
  cidr_block              = "10.0.3.0/24"
  availability_zone       = "ap-northeast-2c"
  map_public_ip_on_launch = true

  tags = {
    Name = "k8s-public-subnet-2"
  }
}

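# Named private_subnet because the route table association and the worker
# instances reference aws_subnet.private_subnet.
resource "aws_subnet" "private_subnet" {
  vpc_id            = aws_vpc.k8s_vpc.id
  cidr_block        = "10.0.2.0/24"
  availability_zone = "ap-northeast-2a"

  tags = {
    Name = "k8s-private-subnet"
  }
}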

resource "aws_internet_gateway" "k8s_igw" {
  vpc_id = aws_vpc.k8s_vpc.id
}

resource "aws_route_table" "public_rt" {
  vpc_id = aws_vpc.k8s_vpc.id

  route {
    cidr_block = "0.0.0.0/0"
    gateway_id = aws_internet_gateway.k8s_igw.id
  }
}

resource "aws_route_table_association" "public_rta_1" {
  subnet_id      = aws_subnet.public_subnet.id
  route_table_id = aws_route_table.public_rt.id
}

resource "aws_route_table_association" "public_rta_2" {
  subnet_id      = aws_subnet.public_subnet_2.id
  route_table_id = aws_route_table.public_rt.id
}

resource "aws_eip" "nat_eip" {
  domain = "vpc"
}

resource "aws_nat_gateway" "nat_gw" {
  subnet_id     = aws_subnet.public_subnet.id
  allocation_id = aws_eip.nat_eip.id
  depends_on    = [aws_internet_gateway.k8s_igw]
}

resource "aws_route_table" "private_rt" {
  vpc_id = aws_vpc.k8s_vpc.id

  # The private subnet reaches the internet through the NAT gateway
  route {
    cidr_block     = "0.0.0.0/0"
    nat_gateway_id = aws_nat_gateway.nat_gw.id
  }
}

resource "aws_route_table_association" "private_rta" {
  subnet_id      = aws_subnet.private_subnet.id
  route_table_id = aws_route_table.private_rt.id
}
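The subnet layout is plain CIDR arithmetic: each /24 covers 256 addresses, and `10.0.1.0/24`, `10.0.3.0/24` and `10.0.2.0/24` must all lie inside the VPC's `10.0.0.0/16` (65,536 addresses) without overlapping one another, otherwise AWS rejects the subnet. A pure-bash sketch that checks this before `terraform apply`; the helper names are hypothetical.

```shell
#!/usr/bin/env bash

# ip_to_int A.B.C.D: print an IPv4 address as a 32-bit integer.
ip_to_int() {
  local IFS=.
  set -- $1
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# cidr_range NET/LEN: print "first last" address of the block as integers.
cidr_range() {
  local net="${1%/*}" len="${1#*/}" size start
  size=$(( 1 << (32 - len) ))
  start=$(( $(ip_to_int "$net") & ~(size - 1) ))
  echo "$start $(( start + size - 1 ))"
}

# cidr_within INNER OUTER: succeeds when INNER lies entirely inside OUTER.
cidr_within() {
  local a b c d
  read -r a b <<< "$(cidr_range "$1")"
  read -r c d <<< "$(cidr_range "$2")"
  [ "$a" -ge "$c" ] && [ "$b" -le "$d" ]
}

# cidr_overlap A B: succeeds when the two blocks share at least one address.
cidr_overlap() {
  local a b c d
  read -r a b <<< "$(cidr_range "$1")"
  read -r c d <<< "$(cidr_range "$2")"
  [ "$a" -le "$d" ] && [ "$c" -le "$b" ]
}

VPC_CIDR="10.0.0.0/16"
# public_subnet, public_subnet_2, private_subnet
SUBNETS="10.0.1.0/24 10.0.3.0/24 10.0.2.0/24"

for s in $SUBNETS; do
  if cidr_within "$s" "$VPC_CIDR"; then
    echo "ok: $s is inside $VPC_CIDR"
  else
    echo "ERROR: $s is outside $VPC_CIDR" >&2
  fi
done

for a in $SUBNETS; do
  for b in $SUBNETS; do
    if [ "$a" != "$b" ] && cidr_overlap "$a" "$b"; then
      echo "ERROR: $a overlaps $b" >&2
    fi
  done
done
```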

resource "aws_key_pair" "k8s_key" {
  key_name   = var.key_name
  public_key = file(var.public_key_path)
}

resource "aws_security_group" "k8s_master_sg" {
  name        = "k8s-master-sg"
  description = "Security group for Kubernetes master node"
  vpc_id      = aws_vpc.k8s_vpc.id

  # SSH
  ingress {
    from_port   = 22
    to_port     = 22
    protocol    = "tcp"
    cidr_blocks = ["0.0.0.0/0"]
  }

  # Kubernetes API server
  ingress {
    from_port   = 6443
    to_port     = 6443
    protocol    = "tcp"
    cidr_blocks = ["0.0.0.0/0"]
  }

  # etcd server client API (VPC only)
  ingress {
    from_port   = 2379
    to_port     = 2380
    protocol    = "tcp"
    cidr_blocks = ["10.0.0.0/16"]
  }

  # kubelet API (10250), kube-controller-manager (10257), kube-scheduler (10259)
  ingress {
    from_port   = 10250
    to_port     = 10259
    protocol    = "tcp"
    cidr_blocks = ["10.0.0.0/16"]
  }

  # Weave Net control (TCP 6783)
  ingress {
    from_port   = 6783
    to_port     = 6783
    protocol    = "tcp"
    cidr_blocks = ["10.0.0.0/16"]
  }

  # Weave Net data (UDP 6783-6784)
  ingress {
    from_port   = 6783
    to_port     = 6784
    protocol    = "udp"
    cidr_blocks = ["10.0.0.0/16"]
  }

  # All outbound traffic
  egress {
    from_port   = 0
    to_port     = 0
    protocol    = "-1"
    cidr_blocks = ["0.0.0.0/0"]
  }

  tags = {
    Name = "k8s-master-sg"
  }
}

resource "aws_security_group" "k8s_worker_sg" {
  name        = "k8s-worker-sg"
  description = "Security group for Kubernetes worker nodes"
  vpc_id      = aws_vpc.k8s_vpc.id

  # kubelet API (VPC only)
  ingress {
    from_port   = 10250
    to_port     = 10250
    protocol    = "tcp"
    cidr_blocks = ["10.0.0.0/16"]
  }

  # NodePort Services
  ingress {
    from_port   = 30000
    to_port     = 32767
    protocol    = "tcp"
    cidr_blocks = ["0.0.0.0/0"]
  }

  # Weave Net control (TCP 6783)
  ingress {
    from_port   = 6783
    to_port     = 6783
    protocol    = "tcp"
    cidr_blocks = ["10.0.0.0/16"]
  }

  # Weave Net data (UDP 6783-6784)
  ingress {
    from_port   = 6783
    to_port     = 6784
    protocol    = "udp"
    cidr_blocks = ["10.0.0.0/16"]
  }

  # All outbound traffic
  egress {
    from_port   = 0
    to_port     = 0
    protocol    = "-1"
    cidr_blocks = ["0.0.0.0/0"]
  }

  tags = {
    Name = "k8s-worker-sg"
  }
}
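If a worker later fails to join, a reachability test run on the worker toward the master helps separate security-group problems from Kubernetes problems. A sketch that probes the master's TCP ports opened above (6443 for the API server, 10250 for the kubelet) using bash's `/dev/tcp` pseudo-device and coreutils `timeout`; the function names are hypothetical, and UDP ports such as Weave's 6784 cannot be checked this way.

```shell
#!/usr/bin/env bash

# port_open HOST PORT [TIMEOUT]: succeeds if a TCP connection to HOST:PORT
# is accepted within TIMEOUT seconds (default 3).
port_open() {
  timeout "${3:-3}" bash -c ": < /dev/tcp/$1/$2" 2>/dev/null
}

# check_master_ports MASTER_PRIVATE_IP: run on a worker node.
check_master_ports() {
  local master="$1" port status=0
  for port in 6443 10250; do
    if port_open "$master" "$port"; then
      echo "ok:   $master:$port"
    else
      echo "FAIL: $master:$port" >&2
      status=1
    fi
  done
  return "$status"
}
```

For example, `check_master_ports 10.0.1.25` on a worker, where `10.0.1.25` stands in for the master's private IP.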

resource "aws_instance" "k8s_nodes" {
  count         = 3
  ami           = "ami-03e38f46f79020a70"
  instance_type = "t2.micro"
  # Reference the key pair resource so Terraform creates it before the instances
  key_name      = aws_key_pair.k8s_key.key_name

  # Node 0 is the master (public subnet, master SG); nodes 1-2 are workers
  # (private subnet, worker SG). Every node also carries the VPC default SG,
  # which allows all traffic between its members.
  vpc_security_group_ids = [
    aws_vpc.k8s_vpc.default_security_group_id,
    count.index == 0 ? aws_security_group.k8s_master_sg.id : aws_security_group.k8s_worker_sg.id
  ]

  user_data = (
    count.index == 0 ? local.master_script : local.worker_script
  )

  subnet_id = (
    count.index == 0 ? aws_subnet.public_subnet.id : aws_subnet.private_subnet.id
  )

  tags = {
    Name = count.index == 0 ? "k8s-master" : "k8s-worker-${count.index}"
    Role = count.index == 0 ? "master" : "worker"
  }
}
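After `terraform apply`, the worker scripts stop at "Waiting for join.", so the workers still have to be joined to the control plane; `kubeadm token create --print-join-command`, run on the master, prints a complete `kubeadm join ...` command for that. A sketch of both steps, with hypothetical helper names (`run`, `apply_stack`, `join_workers`) and several assumptions: the login user is Amazon Linux's default `ec2-user`, the master's public IP and the workers' private IPs are looked up by hand (for example in the EC2 console), and cloud-init writes the user_data output to `/var/log/cloud-init-output.log`. Workers have no public IP and the worker security group opens no SSH port, so they are reached through the master with `ssh -J`, relying on the VPC default security group that every node carries. Setting `DRY_RUN=1` only prints the commands.

```shell
#!/usr/bin/env bash

# run CMD...: execute CMD, or only print it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '+ %s\n' "$*"
  else
    "$@"
  fi
}

# Step 1: create the infrastructure from the project directory.
apply_stack() {
  run terraform -chdir=terraform-k8s-cluster init
  run terraform -chdir=terraform-k8s-cluster validate
  run terraform -chdir=terraform-k8s-cluster apply
}

# Step 2: join_workers MASTER_PUBLIC_IP WORKER_PRIVATE_IP...
join_workers() {
  local master="$1" user="${SSH_USER:-ec2-user}" join_cmd ip tries=0
  shift
  if [ "${DRY_RUN:-0}" = "1" ]; then
    join_cmd="kubeadm join <master>:6443 --token <token> --discovery-token-ca-cert-hash <hash>"
  else
    # Wait (up to ~10 minutes) for the master's bootstrap to print its last message.
    until ssh "$user@$master" sudo grep -q "'Master setup complete.'" /var/log/cloud-init-output.log; do
      tries=$((tries + 1))
      if [ "$tries" -ge 60 ]; then
        echo "master bootstrap did not finish; check /var/log/cloud-init-output.log" >&2
        return 1
      fi
      sleep 10
    done
    # kubeadm prints a full join command with a fresh token and the CA cert hash.
    join_cmd="$(ssh "$user@$master" sudo kubeadm token create --print-join-command)"
  fi
  # Each worker is reached through the master as a jump host.
  for ip in "$@"; do
    run ssh -J "$user@$master" "$user@$ip" "sudo $join_cmd"
  done
}
```

Usage: `apply_stack`, then, once the IPs are known, `join_workers 203.0.113.10 10.0.2.11 10.0.2.12` (addresses illustrative). Prefix either call with `DRY_RUN=1` to preview the commands.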