How DORA Metrics Connect Testing Principles with Development Outcomes

Modern engineering teams rely on measurable indicators to understand how effectively they deliver software. While metrics provide visibility, their real value comes from how they connect different parts of the development lifecycle. One of the most important relationships is between testing practices and deployment outcomes.

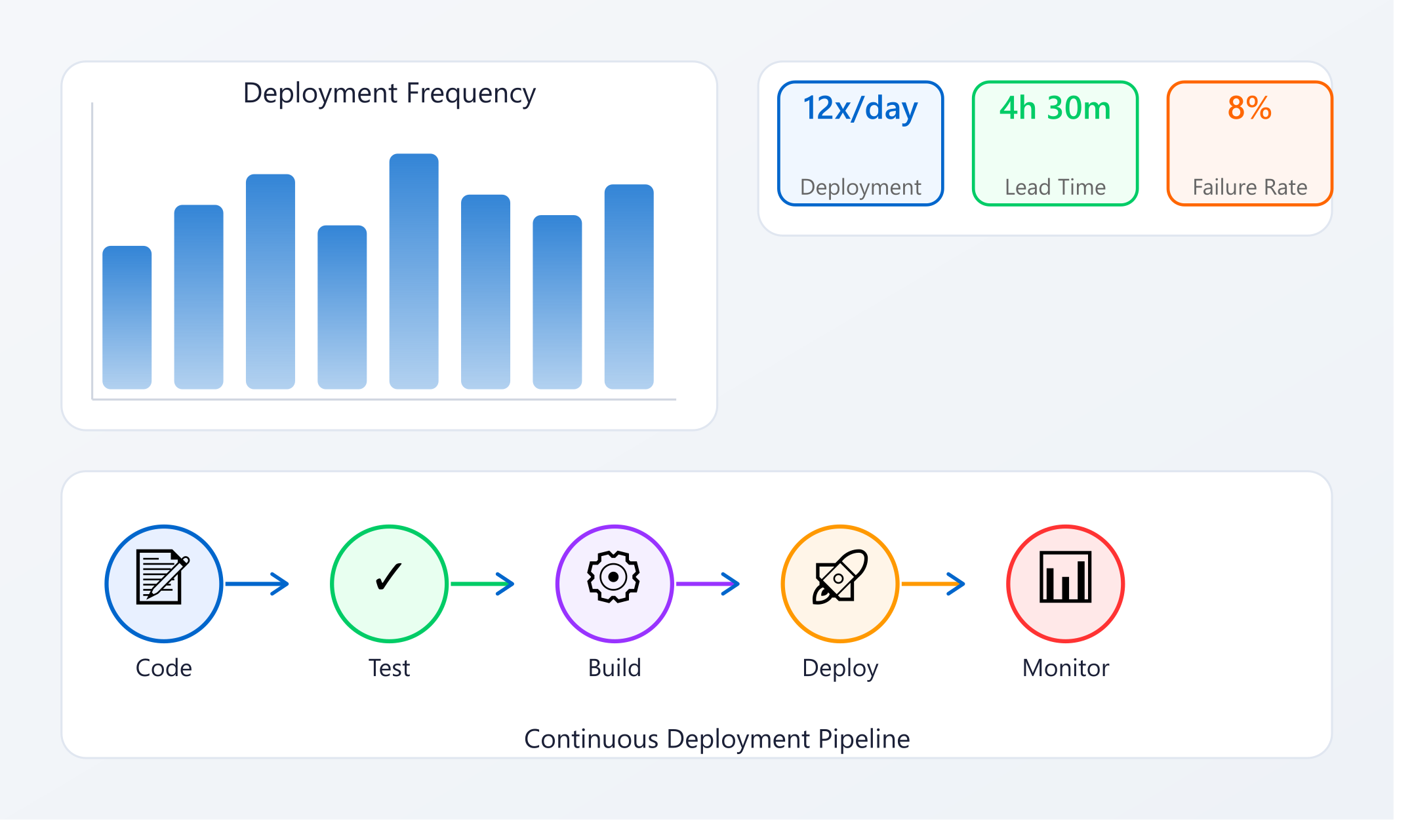

At a high level, the four DORA metrics (deployment frequency, lead time for changes, change failure rate, and time to restore service) capture how quickly and reliably teams ship code. These outcomes are directly influenced by how testing is designed, executed, and maintained throughout the development process.

Why Testing Directly Impacts Deployment Outcomes

Deployment outcomes are not just a result of CI/CD pipelines or release strategies. They are heavily shaped by the quality and consistency of testing.

For example:

- Faster deployments depend on quick and reliable test execution

- Lower failure rates depend on effective validation before release

- Faster recovery times depend on how well issues are detected and diagnosed

Without strong testing practices, even well-optimized pipelines struggle to deliver stable results.

Connecting Testing to Key Performance Indicators

Each core metric reflects specific aspects of testing quality.

Deployment Frequency

Frequent deployments require confidence. Teams can only release often when tests provide reliable validation.

Strong testing enables:

- Quick verification of changes

- Reduced hesitation in releasing updates

- Consistent delivery cycles

When testing is slow or unreliable, deployment frequency naturally decreases.
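To make the metric concrete, here is a minimal sketch of how deployment frequency could be computed from a deployment log. The function name `deployment_frequency`, the sample dates, and the seven-day window are illustrative assumptions, and the sketch assumes the list has already been filtered to the window being measured.

```python
from datetime import date


def deployment_frequency(deploy_dates: list[date], window_days: int) -> float:
    """Average number of production deployments per day over a window."""
    if window_days <= 0:
        raise ValueError("window_days must be positive")
    return len(deploy_dates) / window_days


# Hypothetical deployment log: one entry per production deployment.
deploys = [date(2024, 5, 1), date(2024, 5, 1), date(2024, 5, 3), date(2024, 5, 6)]
per_day = deployment_frequency(deploys, window_days=7)
print(f"{per_day:.2f} deployments per day")
```

Tracking this number alongside test suite duration and pass rate can show whether slow or unreliable tests are holding releases back.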

Lead Time for Changes

Lead time for changes measures how long a change takes to go from commit to running in production.

Testing influences this by:

- Determining how fast changes can be validated

- Reducing delays caused by manual verification

- Preventing rework caused by late-stage issues

Efficient testing shortens the feedback loop and accelerates delivery.
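As a rough illustration, lead time can be summarized as the median gap between commit and production deployment. The sketch below assumes each change is available as a `(committed_at, deployed_at)` pair; the function name and the sample timestamps are hypothetical.

```python
from datetime import datetime, timedelta
from statistics import median


def median_lead_time(changes: list[tuple[datetime, datetime]]) -> timedelta:
    """Median time from commit to production deployment."""
    if not changes:
        raise ValueError("at least one change is required")
    seconds = [
        (deployed - committed).total_seconds() for committed, deployed in changes
    ]
    return timedelta(seconds=median(seconds))


# Hypothetical changes as (committed_at, deployed_at) pairs.
changes = [
    (datetime(2024, 5, 1, 9, 0), datetime(2024, 5, 1, 11, 0)),   # 2 hours
    (datetime(2024, 5, 1, 10, 0), datetime(2024, 5, 1, 15, 0)),  # 5 hours
    (datetime(2024, 5, 2, 8, 0), datetime(2024, 5, 3, 10, 0)),   # 26 hours
]
lead_time = median_lead_time(changes)
print(lead_time)
```

The median is used here because a single slow change, such as one blocked on manual verification, would otherwise dominate an average.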

Change Failure Rate

Change failure rate is the share of deployments that cause a failure in production, which ties it closely to testing effectiveness.

High-quality testing helps:

- Catch defects before deployment

- Validate edge cases and unexpected scenarios

- Ensure system stability under different conditions

Weak testing practices often lead to higher failure rates in production.
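The calculation itself is a simple ratio. The sketch below assumes each deployment record carries a flag saying whether it caused a production failure; the `Deployment` class and its fields are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    id: str
    caused_failure: bool  # True if the deployment led to a production failure


def change_failure_rate(deployments: list[Deployment]) -> float:
    """Fraction of deployments that caused a failure in production."""
    if not deployments:
        return 0.0
    failures = sum(1 for d in deployments if d.caused_failure)
    return failures / len(deployments)


# Hypothetical deployment history.
history = [
    Deployment("d1", caused_failure=False),
    Deployment("d2", caused_failure=True),
    Deployment("d3", caused_failure=False),
    Deployment("d4", caused_failure=False),
]
rate = change_failure_rate(history)
print(f"{rate:.0%} of deployments caused a failure")
```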

Time to Restore Service

Recovery time depends on how quickly issues can be identified and fixed.

Testing contributes by:

- Making failures easier to reproduce

- Providing clear signals about what went wrong

- Supporting faster debugging and resolution

Better testing leads to faster recovery and reduced impact.
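One way to track this is the mean time between an incident starting and service being restored. The following sketch assumes incidents are recorded as `(started_at, restored_at)` pairs; the function name and the sample incidents are hypothetical.

```python
from datetime import datetime, timedelta


def mean_time_to_restore(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Average time between an incident starting and service being restored."""
    if not incidents:
        raise ValueError("at least one incident is required")
    total = sum(
        (restored - started for started, restored in incidents), timedelta()
    )
    return total / len(incidents)


# Hypothetical incidents as (started_at, restored_at) pairs.
incidents = [
    (datetime(2024, 5, 1, 14, 0), datetime(2024, 5, 1, 14, 30)),  # 30 minutes
    (datetime(2024, 5, 4, 9, 0), datetime(2024, 5, 4, 10, 30)),   # 90 minutes
]
restore_time = mean_time_to_restore(incidents)
print(restore_time)
```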

The Role of Real-World Test Scenarios

A common gap in testing is the difference between test environments and real-world usage.

Tests that rely only on predefined scenarios may miss:

- Unusual user behavior

- Complex system interactions

- Edge cases that emerge in production

Some approaches address this by aligning tests with real system behavior. For example, tools like Keploy capture actual API interactions and convert them into test cases. This helps teams validate realistic scenarios and improve confidence in deployment outcomes.
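The general record-and-replay idea can be sketched in a few lines. This is not Keploy's API or implementation; `record`, `replay`, and the two pricing handlers are hypothetical names used only to show the concept of capturing real request and response pairs and checking a new version against them.

```python
from typing import Any, Callable

Request = dict[str, Any]
Handler = Callable[[Request], Any]


def record(handler: Handler, requests: list[Request]) -> list[tuple[Request, Any]]:
    """Capture each request together with the response the handler returned."""
    return [(req, handler(req)) for req in requests]


def replay(handler: Handler, recorded: list[tuple[Request, Any]]) -> list[dict]:
    """Re-send recorded requests and report responses that no longer match."""
    mismatches = []
    for req, expected in recorded:
        actual = handler(req)
        if actual != expected:
            mismatches.append(
                {"request": req, "expected": expected, "actual": actual}
            )
    return mismatches


def price_v1(req: Request) -> dict:
    return {"total": req["qty"] * req["unit_price"]}


def price_v2(req: Request) -> dict:
    total = req["qty"] * req["unit_price"]
    if req["qty"] >= 10:
        total -= 1  # unintended behavior change for large orders
    return {"total": total}


# Hypothetical captured traffic, including a large order the team never scripted.
traffic = [{"qty": 2, "unit_price": 5}, {"qty": 10, "unit_price": 3}]
baseline = record(price_v1, traffic)
mismatches = replay(price_v2, baseline)
print(mismatches)
```

Because the test cases come from observed traffic, the large-order case is covered even though nobody wrote a scenario for it by hand.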

Continuous Testing as a Foundation

To effectively connect testing with deployment performance, testing must be continuous.

This includes:

- Running tests at every stage of the pipeline

- Validating changes as they are introduced

- Ensuring quick feedback for developers

Continuous testing helps detect issues early, before they reach production and show up in deployment metrics.
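A staged, fail-fast pipeline is one way to get that quick feedback. The sketch below is a simplified model rather than any particular CI system's configuration; `run_pipeline` and the stage names are assumptions, and each stage is represented by a function that returns `True` when its tests pass.

```python
from typing import Callable

Stage = tuple[str, Callable[[], bool]]


def run_pipeline(stages: list[Stage]) -> tuple[bool, list[str]]:
    """Run stages in order and stop at the first failure.

    Returns whether every stage passed and the names of the stages that ran.
    """
    executed = []
    for name, check in stages:
        executed.append(name)
        if not check():
            return False, executed
    return True, executed


# Hypothetical stages, ordered from fastest to slowest.
stages = [
    ("unit", lambda: True),
    ("integration", lambda: False),  # simulated failure
    ("end_to_end", lambda: True),
]
passed, executed = run_pipeline(stages)
print(passed, executed)
```

Ordering stages from fastest to slowest means a failing change is reported after the cheapest check that can catch it, without waiting for the slower stages.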

Common Disconnects Between Testing and Outcomes

Teams often struggle when testing and deployment goals are not aligned.

Typical issues include:

- Tests that are too slow to support frequent releases

- Coverage that focuses only on ideal scenarios

- Lack of visibility into test effectiveness

- Poor handling of dynamic or evolving systems

These gaps weaken the connection between testing practices and actual performance.
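Visibility into test effectiveness can start with something as small as flagging flaky tests. The sketch below treats a test as flaky when it has both passed and failed on the same commit; that definition, the `flaky_tests` function, and the sample run history are assumptions for illustration.

```python
from collections import defaultdict


def flaky_tests(runs: list[tuple[str, str, bool]]) -> set[str]:
    """Return tests that both passed and failed on the same commit.

    Each run is a (test_name, commit_sha, passed) tuple.
    """
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for test, commit, passed in runs:
        outcomes[(test, commit)].add(passed)
    return {test for (test, _), seen in outcomes.items() if len(seen) == 2}


# Hypothetical CI history.
runs = [
    ("test_login", "abc123", True),
    ("test_login", "abc123", False),    # same commit, different result
    ("test_checkout", "abc123", True),
    ("test_checkout", "def456", False), # different commit, so not flagged
]
flaky = flaky_tests(runs)
print(flaky)
```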

Strengthening the Connection

To improve deployment outcomes, teams should focus on aligning testing with delivery goals.

Key practices include:

- Designing tests for speed and reliability

- Prioritizing high-impact scenarios

- Keeping test suites maintainable and up to date

- Integrating testing deeply into CI/CD workflows

This ensures that testing supports, rather than slows down, delivery.

Real-World Perspective

In real-world systems, testing and deployment are tightly coupled. Changes in one directly affect the other.

Teams that successfully connect the two:

- Release more frequently with confidence

- Experience fewer production issues

- Recover faster when problems occur

- Maintain consistent delivery performance

This alignment is essential for modern engineering teams.

Conclusion

Metrics provide visibility into performance, but they are shaped by underlying practices. Testing is one of the most important factors influencing how systems are built, validated, and released.

By aligning testing strategies with delivery goals, teams can improve both speed and reliability. In the long run, the connection between testing practices and deployment outcomes is what determines how effectively teams deliver software.