Fixing cAdvisor errors on Docker Swarm

While working on a project with Docker Swarm, I set up resource monitoring with cAdvisor. This post documents that process.

Environment

OS: Ubuntu 24.04.3

cAdvisor: latest

Docker Engine: 29.0.2

...

Process

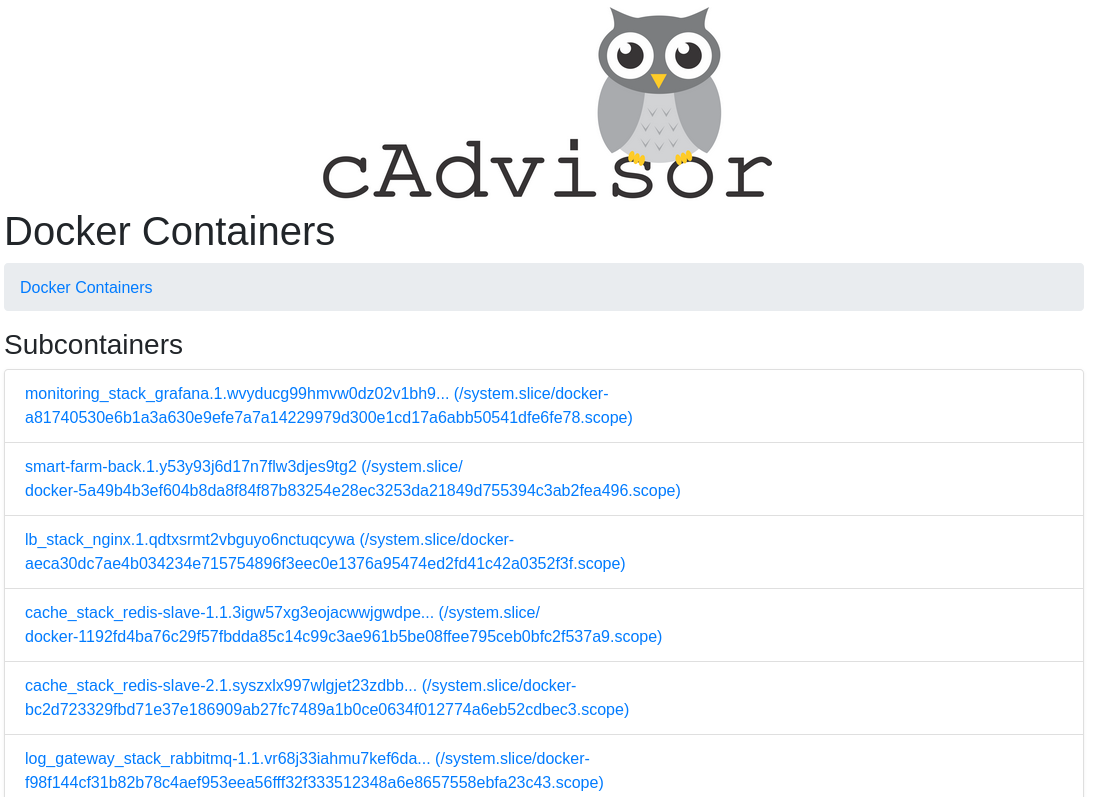

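To monitor resources, I started cAdvisor and opened http://localhost:8080, but the Docker Containers page did not list the containers that were currently running, so I started investigating.

While looking up how to set it up, I copied an example as-is, and cAdvisor logged the following: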

I1111 00:05:15.305757 1 factory.go:219] Registration of the docker container factory failed: failed to validate Docker info: failed to detect Docker info: Error response from daemon: client version 1.41 is too old. Minimum supported API version is 1.44, please upgrade your client to a newer version

The Docker API version that cAdvisor was using appeared to be older than the minimum the running Docker Engine supports, so I updated cAdvisor to v0.52.0, one of the most recent releases.

Running cAdvisor again produced a different error:

E1216 02:15:49.076080 1 manager.go:1116] Failed to create existing container: /system.slice/docker-b4259964611646840656f0c617b30be7dedd611f1697457d0b13363b63864acf.scope: failed to identify the read-write layer ID for container "b4259964611646840656f0c617b30be7dedd611f1697457d0b13363b63864acf". - open /rootfs/var/lib/docker/image/overlayfs/layerdb/mounts/

While looking through similar issues in the official repository, I found this one:

cAdvisor occasionally gets into a state where it has no container metadata

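Comparing that report with my own setup, the difference was that it used storage-driver: overlay2.

Reportedly, a fresh install of Docker 29 or later uses overlayfs (the containerd snapshotter) as the default storage driver.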
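The path in the error above already hints at which driver is active: the directory between `image/` and `/layerdb` carries the storage driver name. A small sketch that pulls it out of the log line follows; `driver_from_layerdb_path` is a hypothetical helper, and the path stays truncated exactly as it is in the log.

```python
import re


def driver_from_layerdb_path(log_line: str):
    """Return the directory name between image/ and /layerdb, or None."""
    match = re.search(r"/image/([^/\s]+)/layerdb/", log_line)
    return match.group(1) if match else None


# Path fragment copied from the error above (it is truncated there as well).
line = "open /rootfs/var/lib/docker/image/overlayfs/layerdb/mounts/"
print(driver_from_layerdb_path(line))
```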

Solution

I looked for a way to change the storage driver. The steps are as follows.

- Add a Docker daemon config file

      sudo vi /etc/docker/daemon.json

      {
        "storage-driver": "overlay2"
      }

- Restart Docker

      sudo systemctl restart docker

- Verify the change

      docker info

      Client: Docker Engine - Community
       Version: 29.0.2
       Context: default
       Debug Mode: false
       Plugins:
        buildx: Docker Buildx (Docker Inc.)
          Version: v0.30.0
          Path: /usr/libexec/docker/cli-plugins/docker-buildx
        compose: Docker Compose (Docker Inc.)
          Version: v2.40.3
          Path: /usr/libexec/docker/cli-plugins/docker-compose

      Server:
       Containers: 23
        Running: 15
        Paused: 0
        Stopped: 8
       Images: 13
       Server Version: 29.0.2
       Storage Driver: overlay2
        Backing Filesystem: extfs
        Supports d_type: true
        Using metacopy: false
        Native Overlay Diff: true
        userxattr: false
       Logging Driver: json-file
       Cgroup Driver: systemd
       Cgroup Version: 2
       Plugins:
        Volume: local
        Network: bridge host ipvlan macvlan null overlay
        Log: awslogs fluentd gcplogs gelf journald json-file local splunk syslog
       CDI spec directories:
        /etc/cdi
        /var/run/cdi
       Swarm: active
        NodeID: v6ky9ehc8w073mktg5ojvpp2z
        Is Manager: true
        ClusterID: 681un9fg22kivffzwt6lqenfk
        Managers: 1
        Nodes: 2
        Default Address Pool: 10.0.0.0/8
        SubnetSize: 24
        Data Path Port: 4789
        Orchestration:
         Task History Retention Limit: 5
        Raft:
         Snapshot Interval: 10000
         Number of Old Snapshots to Retain: 0
         Heartbeat Tick: 1
         Election Tick: 10
        Dispatcher:
         Heartbeat Period: 5 seconds
        CA Configuration:
         Expiry Duration: 3 months
         Force Rotate: 0
        Autolock Managers: false
        Root Rotation In Progress: false
        Node Address: 192.168.1.5
        Manager Addresses:
         192.168.1.5:2377
       Runtimes: io.containerd.runc.v2 runc
       Default Runtime: runc
       Init Binary: docker-init
       containerd version: fcd43222d6b07379a4be9786bda52438f0dd16a1
       runc version: v1.3.3-0-gd842d771
       init version: de40ad0
       Security Options:
        apparmor
        seccomp
         Profile: builtin
        cgroupns
       Kernel Version: 6.14.0-35-generic
       Operating System: Ubuntu 24.04.3 LTS
       OSType: linux
       Architecture: x86_64
       CPUs: 12
       Total Memory: 30.73GiB
       Name: hansl-ubuntu-To-Be-Filled-By-O-E-M
       ID: df3599aa-532c-4095-9399-357c0967fb32
       Docker Root Dir: /var/lib/docker
       Debug Mode: false
       Experimental: true
       Insecure Registries:
        ::1/128
        127.0.0.0/8
       Live Restore Enabled: false
       Firewall Backend: iptables

With the storage driver confirmed to be overlay2, I started cAdvisor again, and this time the containers show up correctly.
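If `/etc/docker/daemon.json` already holds other settings, overwriting it would drop them. The sketch below merges the key into existing JSON text and reads the driver back from `docker info` output. It is a minimal illustration under stated assumptions: both function names are hypothetical, and the functions work on strings only, so reading and writing the real file (as root) is left to the caller.

```python
import json


def with_storage_driver(existing: str, driver: str = "overlay2") -> str:
    """Return daemon.json text with "storage-driver" set, keeping other keys."""
    config = json.loads(existing) if existing.strip() else {}
    config["storage-driver"] = driver
    return json.dumps(config, indent=2) + "\n"


def storage_driver_from_info(info_text: str):
    """Return the value of the "Storage Driver:" line in `docker info` output."""
    for line in info_text.splitlines():
        key, sep, value = line.strip().partition(":")
        if sep and key == "Storage Driver":
            return value.strip()
    return None


# Hypothetical existing config; only "storage-driver" should be added.
print(with_storage_driver('{"log-driver": "json-file"}'))

# Excerpt in the same shape as the `docker info` output shown above.
print(storage_driver_from_info("Server:\n Storage Driver: overlay2\n"))
```

For a quick check from the shell, `docker info --format '{{.Driver}}'` should print only the driver name.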

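P.S.

- Restarting the Docker daemon restarts every running service and container, so take care in a production environment.

- Add support for Docker with containerd-snapshotters #3643

  It looks like this has been fixed, but it does not seem to have been released yet.

In the end, the metrics can be viewed in Grafana as well.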

    # docker-compose.yaml
    services:
      cadvisor:
        image: gcr.io/cadvisor/cadvisor:v0.52.0
        volumes:
          - /:/rootfs:ro
          - /var/run/docker.sock:/var/run/docker.sock:rw
          - /sys:/sys:ro
          - /var/lib/docker/:/var/lib/docker:ro
        ports:
          - '8080:8080'
        networks:
          - app-net
        deploy:
          mode: global  # one cAdvisor task per Swarm node

      prometheus:
        image: prom/prometheus:latest
        ports:
          - '9090:9090'
        networks:
          - app-net
        volumes:
          - ./prometheus.yaml:/etc/prometheus/prometheus.yml
        # depends_on is honored by docker compose but ignored by docker stack deploy
        depends_on:
          - cadvisor

      grafana:
        image: grafana/grafana:latest
        networks:
          - app-net
        ports:
          - '3030:3000'
        environment:
          - GF_SECURITY_ADMIN_USER=admin
          - GF_SECURITY_ADMIN_PASSWORD=admin
          - GF_SERVER_ROOT_URL=http://localhost/grafana
          - GF_SERVER_SERVE_FROM_SUB_PATH=true
        volumes:
          - grafana_data:/var/lib/grafana
        depends_on:
          - prometheus
          - loki

      loki:
        image: grafana/loki:3.5.8
        ports:
          - '3100:3100'
        networks:
          - app-net
        volumes:
          - ./loki-config.yaml:/etc/loki/local-config.yaml
          - loki_data:/loki
        command: -config.file=/etc/loki/local-config.yaml

      promtail:
        image: grafana/promtail:3.5.8
        networks:
          - app-net
        volumes:
          - /var/log:/var/log
          - /var/lib/docker/containers:/var/lib/docker/containers:ro
          - /var/run/docker.sock:/var/run/docker.sock
          - ./promtail-config.yaml:/etc/promtail/promtail-config.yaml
        command: -config.file=/etc/promtail/promtail-config.yaml
        depends_on:
          - loki

    networks:
      app-net:
        external: true

    volumes:
      grafana_data:
      loki_data: