PyTorch Operations

- Tensor

- The PyTorch class that represents multi-dimensional arrays

- Practically the same as numpy's ndarray (and TensorFlow's Tensor)

- The functions that create a Tensor are also nearly the same
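As a rough illustration of how closely the creation functions mirror each other, the sketch below builds the same arrays with numpy and with PyTorch. The variable names are illustrative and do not come from the original notes.

```python
import numpy as np
import torch

# Same creation call, different library.
np_zeros = np.zeros((2, 3))
pt_zeros = torch.zeros((2, 3))

np_range = np.arange(6).reshape(2, 3)
pt_range = torch.arange(6).reshape(2, 3)

# The shapes match, and the values agree element by element.
print(np_zeros.shape, tuple(pt_zeros.shape))
print(np.array_equal(np_range, pt_range.numpy()))
```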

import numpy as np

n_array = np.arange(10).reshape(2,5)

print(n_array)

print("ndim :", n_array.ndim, "shape :", n_array.shape)

import torch

t_array = torch.FloatTensor(n_array)

print(t_array)

print("ndim :", t_array.ndim, "shape :", t_array.shape)

- Array to Tensor

# data to tensor

data = [[3, 5],[10, 5]]

x_data = torch.tensor(data)

x_data

# ndarray to tensor

nd_array_ex = np.array(data)

tensor_array = torch.from_numpy(nd_array_ex)

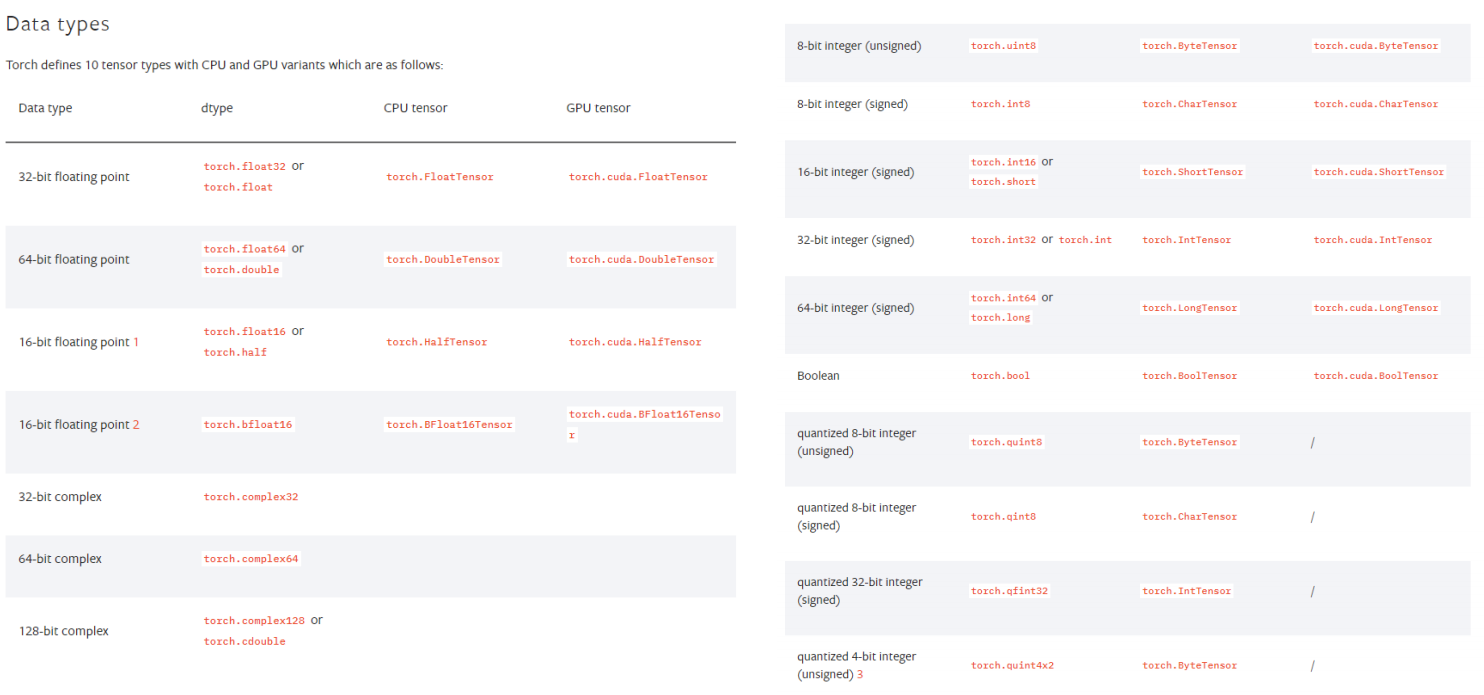

tensor_array

- Tensor data types

- The data types a tensor can hold are basically the same as numpy's; the difference is whether the tensor can be placed on a GPU.
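To make the dtype correspondence concrete, here is a minimal sketch that inspects and converts dtypes on the CPU. It assumes PyTorch's default floating-point dtype has not been changed, and the variable names are illustrative.

```python
import numpy as np
import torch

# Python ints become int64 tensors; Python floats become float32 by default.
int_tensor = torch.tensor([1, 2, 3])
float_tensor = torch.tensor([1.0, 2.0, 3.0])
print(int_tensor.dtype, float_tensor.dtype)

# torch.from_numpy keeps the dtype of the source array.
from_np = torch.from_numpy(np.array([1.0, 2.0], dtype=np.float64))
print(from_np.dtype)

# .to() with a dtype returns a tensor converted to that dtype.
as_float32 = from_np.to(torch.float32)
print(as_float32.dtype)
```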

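- numpy-like operations

- Most numpy usage carries over to PyTorch as is

- One difference is that a PyTorch tensor can be moved to a GPU and used there

- Each tensor has a device, which determines whether it lives on the GPU or in main (CPU) memory.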
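One common way to handle the device choice is to pick it once and move tensors to it, so the same code runs with or without a GPU. The sketch below is one such pattern, not something taken from the original notes; the names `device`, `x_on_device` and `result` are illustrative.

```python
import torch

# Pick the GPU when one is available, otherwise fall back to the CPU.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

x = torch.tensor([[3, 5], [10, 5]])
x_on_device = x.to(device)

# Tensors must be on the same device before they can be combined.
y = torch.ones_like(x_on_device)  # created on the same device as x_on_device
result = x_on_device + y
print(result.device)
```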

data = [[3, 5, 20],[10, 5, 50], [1, 5, 10]]

x_data = torch.tensor(data)

x_data[1:]

# tensor([[10,5,50],[1,5,10]])

x_data.flatten()

# tensor([3,5,20,10,5,50,1,5,10])

torch.ones_like(x_data)

# tensor([[1,1,1],[1,1,1],[1,1,1]])

x_data.shape

# torch.Size([3, 3])

# Check the device (move to the GPU only if CUDA is available)

if torch.cuda.is_available():
    x_data_cuda = x_data.to('cuda')
    print(x_data_cuda.device)
    # cuda:0

Tensor handling

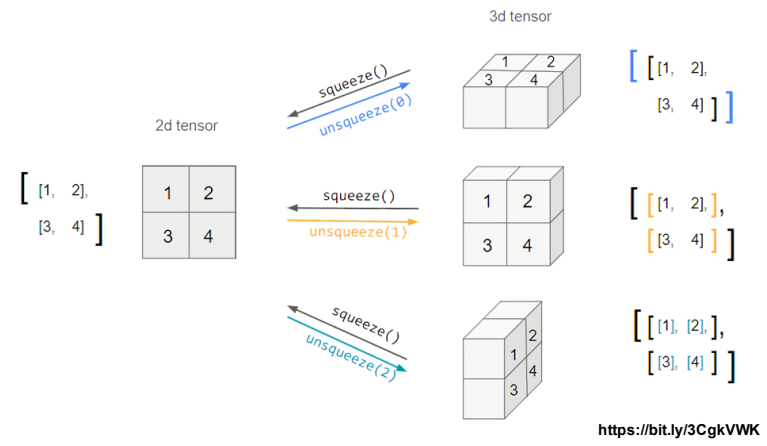

- view : changes the shape of a tensor, in the same way as reshape

- squeeze : removes dimensions of size 1

- unsqueeze : adds a dimension of size 1

- view, reshape

tensor_ex = torch.rand(size=(2, 3, 2))

tensor_ex

tensor_ex.view([-1, 6])

tensor_ex.reshape([-1,6])

# view: b shares memory with a, so filling a with 1 also changes b

a = torch.zeros(3, 2)

b = a.view(2, 3)

a.fill_(1)

print(a,"\n")

print(b)

# reshape: a.t() is not contiguous, so reshape returns a copy and b stays all zeros

a = torch.zeros(3, 2)

b = a.t().reshape(6)

a.fill_(1)

print(a,"\n")

print(b)

- squeeze, unsqueeze

# squeeze

tensor_ex = torch.rand(size=(2, 1, 2))

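tensor_ex.squeeze()  # shape (2, 1, 2) -> (2, 2)

# unsqueeze

tensor_ex = torch.rand(size=(2, 2))

tensor_ex.unsqueeze(0).shape  # torch.Size([1, 2, 2])

tensor_ex.unsqueeze(1).shape  # torch.Size([2, 1, 2])

tensor_ex.unsqueeze(2).shape  # torch.Size([2, 2, 1])

Tensor operations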

- PyTorch distinguishes between dot, which computes the inner product of two vectors, and mm, which performs matrix multiplication

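# dot & mm

# t1 is assumed to be a 2x5 matrix (its definition is not shown in these notes)

n1 = np.arange(10).reshape(2,5)

t1 = torch.FloatTensor(n1)

n2 = np.arange(10).reshape(5,2)

t2 = torch.FloatTensor(n2)

t1.mm(t2) # works: (2,5) x (5,2) -> (2,2)

t1.dot(t2) # fails with RuntimeError: dot accepts only 1-D tensors

# For 1-D vectors, dot works

a = torch.rand(10)

b = torch.rand(10)

a.dot(b)

# but mm fails, because it requires 2-D matrices

a = torch.rand(10)

b = torch.rand(10)

a.mm(b) # fails with RuntimeError

- mm and matmul differ in broadcasting support: matmul broadcasts, mm does not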
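The difference in broadcasting between mm and matmul can be checked directly. The sketch below multiplies a batch of matrices by a single matrix; the shapes and the `mm_failed` flag are illustrative choices, not part of the original notes.

```python
import torch

a = torch.rand(5, 2, 3)   # a batch of five 2x3 matrices
b = torch.rand(3, 4)      # one 3x4 matrix

# matmul broadcasts b across the batch dimension.
out = a.matmul(b)
print(out.shape)

# mm accepts only 2-D inputs, so the same call is rejected.
try:
    a.mm(b)
    mm_failed = False
except RuntimeError as err:
    mm_failed = True
    print("mm failed:", err)
```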

# matmul broadcasts b over the batch, so this works (result shape: 5x2)

a = torch.rand(5,2, 3)

b = torch.rand(3)

a.matmul(b)

# Same values as above, computed one matrix at a time (each result is a 2x1 column)

print(a[0].mm(torch.unsqueeze(b,1)))

print(a[1].mm(torch.unsqueeze(b,1)))

print(a[2].mm(torch.unsqueeze(b,1)))

print(a[3].mm(torch.unsqueeze(b,1)))

print(a[4].mm(torch.unsqueeze(b,1)))

Tensor operations for ML/DL formula

- The nn.functional module provides a wide range of mathematical transformations

- There is no need to memorize them; look them up by keyword when needed.
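As one example of what nn.functional offers, the sketch below applies softmax to a small score matrix and one-hot encodes the predicted classes. The input values are made up for illustration.

```python
import torch
import torch.nn.functional as F

logits = torch.tensor([[1.0, 2.0, 3.0], [3.0, 1.0, 2.0]])

# softmax turns each row of scores into probabilities that sum to 1.
probs = F.softmax(logits, dim=1)
print(probs.sum(dim=1))

# argmax picks the most likely class per row; one_hot encodes it.
labels = probs.argmax(dim=1)
one_hot = F.one_hot(labels, num_classes=3)
print(labels)
print(one_hot)
```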

# Enumerate all combinations (Cartesian product)

# 1.

import itertools

a = [1, 2, 3]

b = [4, 5]

list(itertools.product(a, b))

# 2.

tensor_a = torch.tensor(a)

tensor_b = torch.tensor(b)

torch.cartesian_prod(tensor_a, tensor_b)

AutoGrad

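- The core feature of PyTorch is automatic differentiation, which is triggered with the backward function

- Setting requires_grad=True marks a tensor for differentiation; this is rarely done by hand, since a linear layer is typically used instead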
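A minimal sketch of both points follows. The formula z = 10w^2 + 25 is an illustrative choice, and reading "linear" as the nn.Linear layer is an assumption rather than something the notes state explicitly.

```python
import torch
import torch.nn as nn

# z = 10 * w^2 + 25, so dz/dw = 20 * w.
w = torch.tensor(2.0, requires_grad=True)
z = 10 * w ** 2 + 25
z.backward()          # fills w.grad with dz/dw evaluated at w = 2
print(w.grad)

# Assumption: "linear" refers to nn.Linear, whose parameters
# are created with requires_grad already enabled.
layer = nn.Linear(3, 1)
print(all(p.requires_grad for p in layer.parameters()))
```

With w = 2 the gradient is 20 * 2 = 40, which is the value stored in `w.grad` after `backward()`.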