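1. Multiclass Classification

What is multiclass classification?

Multiclass classification is the task of predicting exactly one class out of several candidate classes.

Unlike binary classification, which chooses between two classes, it deals with three or more classes.

For example, handwritten digit recognition, where each image must be assigned one of the digits 0 through 9, is a multiclass classification problem.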

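Characteristics of multiclass classification

1. Number of classes:

- A multiclass problem has three or more classes.

- For example, when classifying flower species, the classes might be the three labels “Setosa”, “Versicolor”, and “Virginica”.

2. Label encoding:

- Class labels are encoded as integers.

- For example, [“Setosa”, “Versicolor”, “Virginica”] can be encoded as [0, 1, 2], respectively.

3. Output form:

- The model outputs a probability distribution over the classes.

- A softmax function in the final output layer computes the probability of each class.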
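
As a rough illustration of the label-encoding step described above, the following sketch maps string labels to integers with NumPy. The sample labels are made up for this example. `np.unique` assigns the integers in sorted order of the class names, which happens to give Setosa, Versicolor, and Virginica the codes 0, 1, and 2.

```python
import numpy as np

# Hypothetical sample of string labels (not taken from a real dataset).
labels = np.array(["Versicolor", "Setosa", "Virginica", "Setosa"])

# np.unique returns the sorted unique classes and, with return_inverse=True,
# the position of each label within that sorted array.
classes, encoded = np.unique(labels, return_inverse=True)

print(classes)  # ['Setosa' 'Versicolor' 'Virginica']
print(encoded)  # [1 0 2 0]
```

Decoding is the reverse lookup: `classes[encoded]` returns the original strings.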

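Example of multiclass classification

Example 1: Handwritten digit recognition

- Classifying handwritten digits (0 through 9) from the MNIST dataset is a typical multiclass classification problem.

- Each image is a 28x28-pixel picture of a handwritten digit and is assigned to one of 10 classes (0 through 9).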
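
To make the shapes in this example concrete, here is a minimal sketch. It assumes a random array as a stand-in for one MNIST image and a hypothetical untrained linear layer (`w`, `b`). It only shows how a 28x28 image becomes 784 inputs and 10 class scores; it is not a working classifier.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in for one 28x28 grayscale image (random values, not real MNIST data).
image = rng.random((28, 28))

# Flatten the 2-D image into a single row of 784 features.
x = image.reshape(1, -1)

# Hypothetical untrained linear layer mapping 784 features to 10 class scores.
w = rng.normal(0, 0.01, size=(784, 10))
b = np.zeros(10)
scores = x @ w + b

# The predicted class is the index of the largest score.
predicted_class = int(np.argmax(scores, axis=1)[0])

print(x.shape, scores.shape)  # (1, 784) (1, 10)
```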

Key concepts

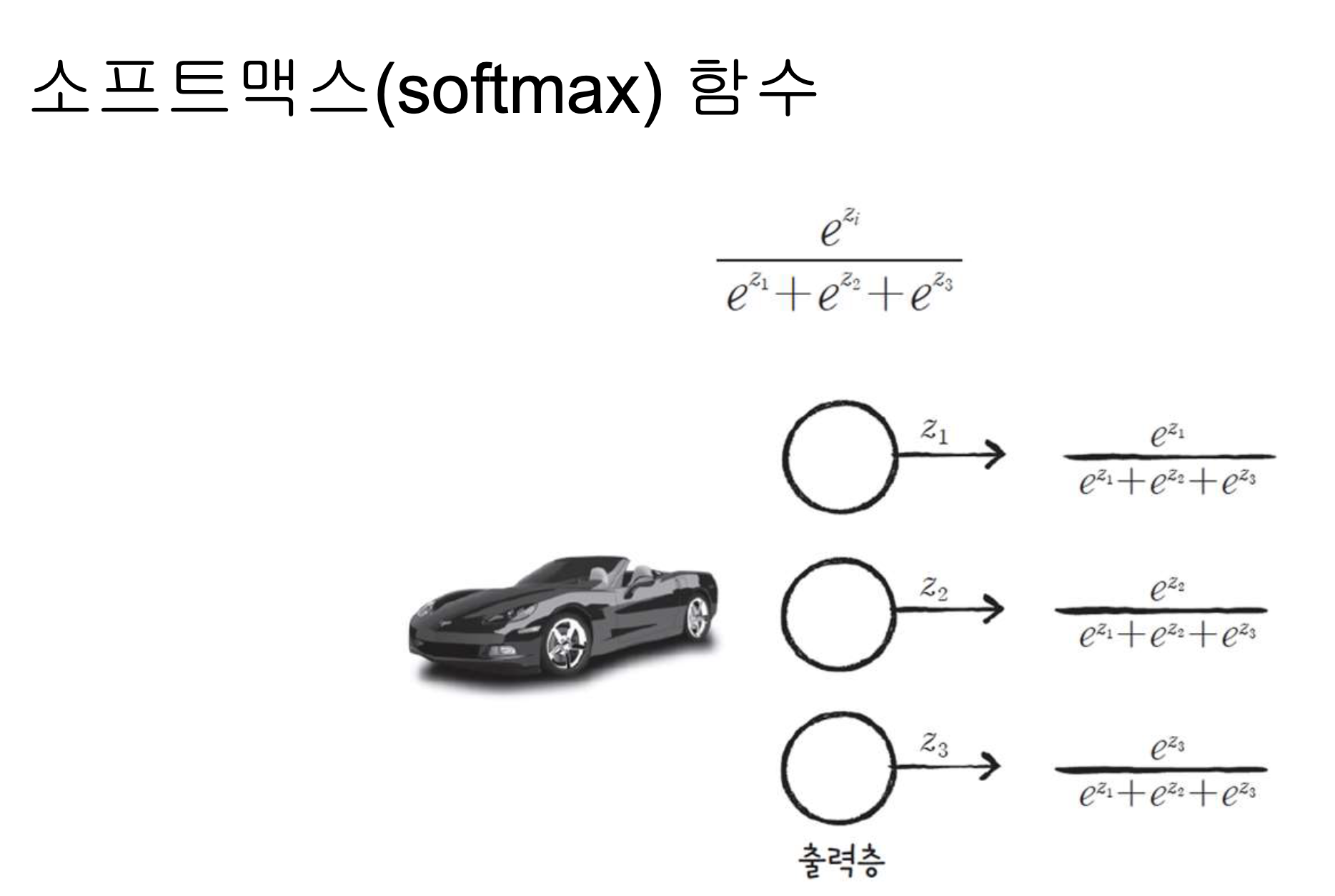

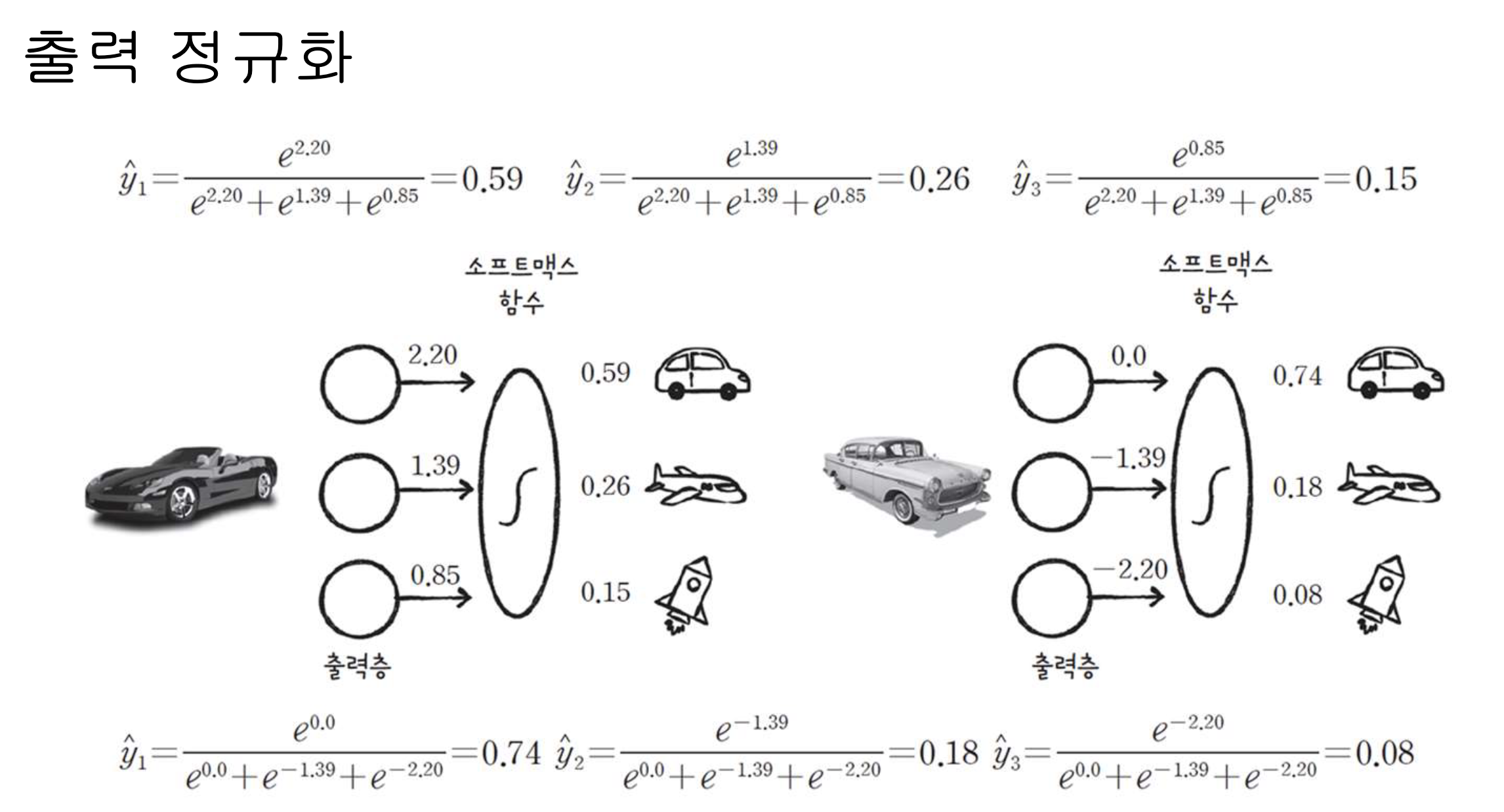

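1. Softmax function:

- The softmax function converts the model's raw output values (scores) into a probability for each class.

- Each class probability lies between 0 and 1, and the probabilities of all classes sum to 1.

- Formula:

$$\mathrm{softmax}(z_i) = \frac{e^{z_i}}{\sum_{j=1}^{K} e^{z_j}}$$

Here $z_i$ is the score of class $i$ and $K$ is the number of classes.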

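2. Categorical cross-entropy loss:

- This is the loss function used for multiclass classification models.

- It measures the difference between the predicted probability distribution and the true class distribution.

- Formula:

$$L = -\sum_{i=1}^{K} y_i \log(\hat{y}_i)$$

- Here the sum runs over the $K$ classes, $y_i$ is the one-hot encoded value of the true class, and $\hat{y}_i$ is the predicted probability for class $i$.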

3. One-hot encoding:

- One-hot encoding converts each class label into a binary vector.

- For example, with three classes, the labels [0, 1, 2] are encoded as [1, 0, 0], [0, 1, 0], and [0, 0, 1], respectively.
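
The three concepts above can be tried out together on a tiny example. The sketch below is an illustration under stated assumptions, not the code used later in this post: the helper names (`one_hot`, `softmax`, `cross_entropy`) and the score values are made up, and the loss is averaged over samples.

```python
import numpy as np

def one_hot(labels, n_classes):
    """Turn integer labels into one-hot rows."""
    return np.eye(n_classes)[labels]

def softmax(z):
    """Row-wise softmax; subtracting the row maximum avoids overflow."""
    z = z - np.max(z, axis=1, keepdims=True)
    exp_z = np.exp(z)
    return exp_z / np.sum(exp_z, axis=1, keepdims=True)

def cross_entropy(y, y_hat, eps=1e-10):
    """Mean categorical cross-entropy over the samples."""
    y_hat = np.clip(y_hat, eps, 1 - eps)  # keep log() away from 0
    return -np.mean(np.sum(y * np.log(y_hat), axis=1))

# Made-up scores for two samples and three classes.
z = np.array([[2.0, 1.0, 0.1],
              [0.5, 0.5, 3.0]])
y = one_hot(np.array([0, 2]), n_classes=3)  # true classes: 0 and 2

p = softmax(z)
print(p.sum(axis=1))        # each row sums to 1, up to floating-point rounding
print(cross_entropy(y, p))  # small, because the true classes have the largest scores
```

Because only the true class has $y_i = 1$, the loss for one sample reduces to the negative log of the probability assigned to the true class.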

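Applications of multiclass classification

Multiclass classification is widely used in fields such as image classification, text classification, and speech recognition.

Each field uses its own model architectures and algorithms, and the model can be adapted to the characteristics of the data.

Solving a multiclass problem requires attention to several factors, including data preprocessing, model selection, and hyperparameter tuning.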

2. Softmax Function

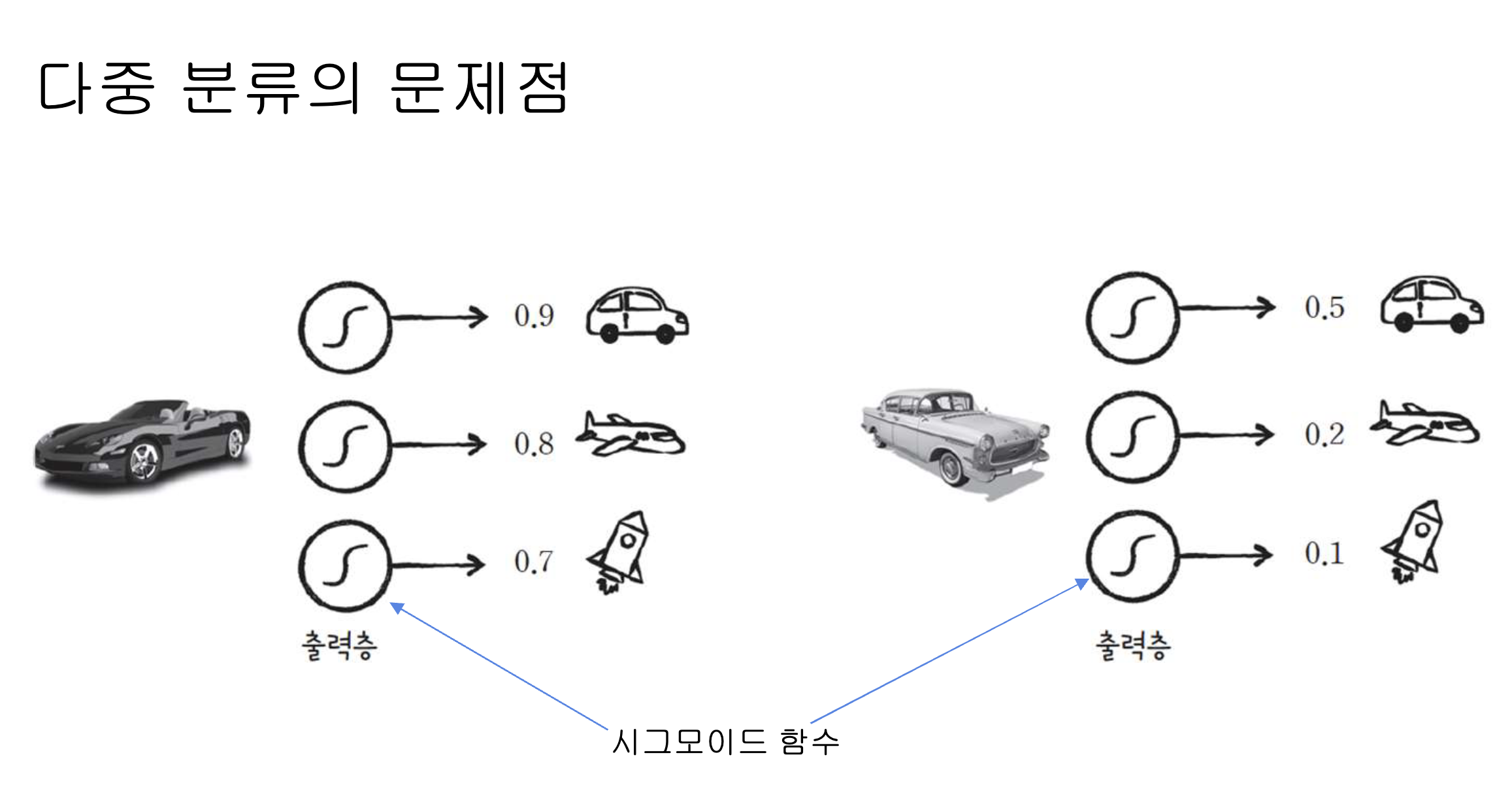

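If a sigmoid function is applied to each output unit independently, as in binary classification, the outputs for the classes do not necessarily sum to 1. The total can be greater or less than 1, so the outputs cannot be read as a probability distribution over the classes.

The softmax function is used to solve this problem.

Because the softmax outputs sum to 1, they can be compared directly as probabilities, and the class with the highest probability is chosen as the prediction.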
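
The difference is easy to see numerically. The following sketch applies both functions to the same made-up scores for three classes. The function names and the score values are assumptions for illustration only.

```python
import numpy as np

def sigmoid(z):
    return 1 / (1 + np.exp(-z))

def softmax(z):
    exp_z = np.exp(z - np.max(z))  # shift by the max for numerical stability
    return exp_z / np.sum(exp_z)

# Made-up output scores for three classes.
z = np.array([2.0, 1.0, -1.0])

sig = sigmoid(z)
soft = softmax(z)

print(sig, sig.sum())    # the sigmoid outputs do not sum to 1 in general
print(soft, soft.sum())  # the softmax outputs sum to 1
print(np.argmax(soft))   # index of the most probable class
```

Both functions preserve the ordering of the scores, so the predicted class is the same. Only softmax yields values that can be interpreted as a distribution over the classes.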

import numpy as np

class MultiClassNetwork:

    def __init__(self, units=10, batch_size=32, learning_rate=0.1, l1=0, l2=0):
        self.units = units            # number of neurons in the hidden layer
        self.batch_size = batch_size  # mini-batch size
        self.w1 = None                # hidden-layer weights
        self.b1 = None                # hidden-layer bias
        self.w2 = None                # output-layer weights
        self.b2 = None                # output-layer bias
        self.a1 = None                # hidden-layer activations
        self.losses = []              # training loss per epoch
        self.val_losses = []          # validation loss per epoch
        self.lr = learning_rate       # learning rate
        self.l1 = l1                  # L1 regularization strength
        self.l2 = l2                  # L2 regularization strength

    def forpass(self, x):
        z1 = np.dot(x, self.w1) + self.b1        # linear part of the first layer
        self.a1 = self.sigmoid(z1)               # apply the activation function
        z2 = np.dot(self.a1, self.w2) + self.b2  # linear part of the second layer
        return z2

    def backprop(self, x, err):
        m = len(x)  # number of samples
        # Gradients for the output-layer weights and bias.
        w2_grad = np.dot(self.a1.T, err) / m
        # Sum per class (axis=0). Summing over all elements gives a scalar that is
        # close to 0 for softmax errors, so the bias would hardly be updated.
        b2_grad = np.sum(err, axis=0) / m
        # Propagate the gradient back through the sigmoid.
        err_to_hidden = np.dot(err, self.w2.T) * self.a1 * (1 - self.a1)
        # Gradients for the hidden-layer weights and bias.
        w1_grad = np.dot(x.T, err_to_hidden) / m
        b1_grad = np.sum(err_to_hidden, axis=0) / m
        return w1_grad, b1_grad, w2_grad, b2_grad

    def sigmoid(self, z):
        z = np.clip(z, -100, None)  # keep np.exp() from overflowing
        a = 1 / (1 + np.exp(-z))    # sigmoid
        return a

    def softmax(self, z):
        # Softmax; subtract each row's maximum so that np.exp() cannot overflow.
        z = z - np.max(z, axis=1, keepdims=True)
        exp_z = np.exp(z)
        return exp_z / np.sum(exp_z, axis=1).reshape(-1, 1)

    def init_weights(self, n_features, n_classes):
        self.w1 = np.random.normal(0, 1,
                                   (n_features, self.units))  # (number of features, hidden-layer size)
        self.b1 = np.zeros(self.units)                        # hidden-layer size
        self.w2 = np.random.normal(0, 1,
                                   (self.units, n_classes))   # (hidden-layer size, number of classes)
        self.b2 = np.zeros(n_classes)

    def fit(self, x, y, epochs=100, x_val=None, y_val=None):
        np.random.seed(42)
        self.init_weights(x.shape[1], y.shape[1])  # initialize hidden- and output-layer weights
        # Repeat for the given number of epochs.
        for i in range(epochs):
            loss = 0
            print('.', end='')
            # Iterate over the mini-batches yielded by the generator.
            for x_batch, y_batch in self.gen_batch(x, y):
                a = self.training(x_batch, y_batch)
                # Clip for a safe log computation.
                a = np.clip(a, 1e-10, 1-1e-10)
                # Accumulate the cross-entropy loss.
                loss += np.sum(-y_batch*np.log(a))
            # Add the regularization loss and record the average loss.
            self.losses.append((loss + self.reg_loss()) / len(x))
            # Compute the loss on the validation set, if one was given.
            if x_val is not None and y_val is not None:
                self.update_val_loss(x_val, y_val)

    # Mini-batch generator
    def gen_batch(self, x, y):
        length = len(x)
        bins = length // self.batch_size  # number of mini-batches
        if length % self.batch_size:
            bins += 1                     # one extra batch when not evenly divisible
        indexes = np.random.permutation(np.arange(len(x)))  # shuffle the indices
        x = x[indexes]
        y = y[indexes]
        for i in range(bins):
            start = self.batch_size * i
            end = self.batch_size * (i + 1)
            yield x[start:end], y[start:end]  # yield a slice of batch_size samples

    def training(self, x, y):
        m = len(x)           # number of samples
        z = self.forpass(x)  # forward pass
        a = self.softmax(z)  # apply softmax
        err = -(y - a)       # error
        # Backpropagate the error to compute the gradients.
        w1_grad, b1_grad, w2_grad, b2_grad = self.backprop(x, err)
        # Add the derivative of the penalty terms to the gradients.
        w1_grad += (self.l1 * np.sign(self.w1) + self.l2 * self.w1) / m
        w2_grad += (self.l1 * np.sign(self.w2) + self.l2 * self.w2) / m
        # Update the hidden-layer weights and bias.
        self.w1 -= self.lr * w1_grad
        self.b1 -= self.lr * b1_grad
        # Update the output-layer weights and bias.
        self.w2 -= self.lr * w2_grad
        self.b2 -= self.lr * b2_grad
        return a

    def predict(self, x):
        z = self.forpass(x)          # forward pass
        return np.argmax(z, axis=1)  # index of the largest output

    def score(self, x, y):
        # Compare the predictions with the target classes and return the fraction that match.
        return np.mean(self.predict(x) == np.argmax(y, axis=1))

    def reg_loss(self):
        # Apply regularization to the hidden- and output-layer weights.
        return self.l1 * (np.sum(np.abs(self.w1)) + np.sum(np.abs(self.w2))) + \
               self.l2 / 2 * (np.sum(self.w1**2) + np.sum(self.w2**2))

    def update_val_loss(self, x_val, y_val):
        z = self.forpass(x_val)         # forward pass
        a = self.softmax(z)             # apply softmax
        a = np.clip(a, 1e-10, 1-1e-10)  # clip the outputs
        # Add the cross-entropy loss and the regularization loss, then record the result.
        val_loss = np.sum(-y_val*np.log(a))
        self.val_losses.append((val_loss + self.reg_loss()) / len(y_val))

import tensorflow as tf

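tf.config.set_visible_devices([], 'GPU')

The line above hides the GPU from TensorFlow. The author added it only because the code was run on an M1 MacBook, so it can be ignored.

Importing tensorflow alone is enough.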

(x_train_all, y_train_all), (x_test, y_test) = tf.keras.datasets.fashion_mnist.load_data()
print(x_train_all.shape, y_train_all.shape)

### Output

(60000, 28, 28) (60000,)

import matplotlib.pyplot as plt

plt.rcParams["figure.figsize"] = (2, 2)
plt.imshow(x_train_all[0], cmap='gray')
plt.show()

### Output

(figure: the first training image, displayed in grayscale)

print(y_train_all[:10])

### Output

[9 0 0 3 0 2 7 2 5 5]

class_names = ['T-shirt/top', 'Trouser', 'Pullover', 'Dress', 'Coat',
               'Sandal', 'Shirt', 'Sneaker', 'Bag', 'Ankle boot']
print(class_names[y_train_all[0]])

### Output

Ankle boot

np.bincount(y_train_all)

### Output

array([6000, 6000, 6000, 6000, 6000, 6000, 6000, 6000, 6000, 6000])

from sklearn.model_selection import train_test_split

x_train, x_val, y_train, y_val = train_test_split(x_train_all, y_train_all, stratify=y_train_all,
                                                  test_size=0.2, random_state=42)

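# Scale the pixel values from the range 0-255 to 0-1.
x_train = x_train / 255
x_val = x_val / 255

# Flatten each 28x28 image into a row of 784 features.
x_train = x_train.reshape(-1, 784)
x_val = x_val.reshape(-1, 784)

print(x_train.shape, x_val.shape)

### Output

(48000, 784) (12000, 784)

3. Tensorflow & Keras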

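from tensorflow.keras import Sequential
from tensorflow.keras.layers import Dense

# One-hot encode the integer labels, as required by 'categorical_crossentropy'.
# Assumption: the original text uses y_train_encoded and y_val_encoded
# without showing how they were created.
y_train_encoded = tf.keras.utils.to_categorical(y_train)
y_val_encoded = tf.keras.utils.to_categorical(y_val)

model = Sequential()
model.add(Dense(100, activation='sigmoid', input_shape=(784,)))  # hidden layer with 100 units
model.add(Dense(10, activation='softmax'))                       # output layer, one unit per class

model.compile(optimizer='sgd', loss='categorical_crossentropy',
              metrics=['accuracy'])

history = model.fit(x_train, y_train_encoded, epochs=200,
                    validation_data=(x_val, y_val_encoded))

### Output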

Epoch 1/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 713us/step - accuracy: 0.8690 - loss: 0.3735 - val_accuracy: 0.8653 - val_loss: 0.3810

Epoch 2/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 634us/step - accuracy: 0.8654 - loss: 0.3809 - val_accuracy: 0.8662 - val_loss: 0.3793

Epoch 3/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 628us/step - accuracy: 0.8652 - loss: 0.3758 - val_accuracy: 0.8668 - val_loss: 0.3783

Epoch 4/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 631us/step - accuracy: 0.8668 - loss: 0.3748 - val_accuracy: 0.8674 - val_loss: 0.3768

Epoch 5/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 658us/step - accuracy: 0.8715 - loss: 0.3682 - val_accuracy: 0.8693 - val_loss: 0.3754

Epoch 6/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 629us/step - accuracy: 0.8680 - loss: 0.3711 - val_accuracy: 0.8662 - val_loss: 0.3773

Epoch 7/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 630us/step - accuracy: 0.8678 - loss: 0.3744 - val_accuracy: 0.8677 - val_loss: 0.3747

Epoch 8/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 627us/step - accuracy: 0.8673 - loss: 0.3757 - val_accuracy: 0.8673 - val_loss: 0.3740

Epoch 9/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 663us/step - accuracy: 0.8688 - loss: 0.3748 - val_accuracy: 0.8698 - val_loss: 0.3720

Epoch 10/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 649us/step - accuracy: 0.8695 - loss: 0.3690 - val_accuracy: 0.8654 - val_loss: 0.3754

Epoch 11/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 680us/step - accuracy: 0.8711 - loss: 0.3668 - val_accuracy: 0.8704 - val_loss: 0.3696

Epoch 12/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 732us/step - accuracy: 0.8702 - loss: 0.3668 - val_accuracy: 0.8692 - val_loss: 0.3694

Epoch 13/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 643us/step - accuracy: 0.8703 - loss: 0.3634 - val_accuracy: 0.8711 - val_loss: 0.3677

Epoch 14/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 611us/step - accuracy: 0.8721 - loss: 0.3615 - val_accuracy: 0.8689 - val_loss: 0.3679

Epoch 15/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 720us/step - accuracy: 0.8726 - loss: 0.3552 - val_accuracy: 0.8715 - val_loss: 0.3663

Epoch 16/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 704us/step - accuracy: 0.8723 - loss: 0.3606 - val_accuracy: 0.8691 - val_loss: 0.3699

Epoch 17/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 643us/step - accuracy: 0.8723 - loss: 0.3614 - val_accuracy: 0.8711 - val_loss: 0.3651

Epoch 18/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 673us/step - accuracy: 0.8708 - loss: 0.3647 - val_accuracy: 0.8716 - val_loss: 0.3651

Epoch 19/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 637us/step - accuracy: 0.8730 - loss: 0.3612 - val_accuracy: 0.8737 - val_loss: 0.3640

Epoch 20/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 636us/step - accuracy: 0.8748 - loss: 0.3510 - val_accuracy: 0.8724 - val_loss: 0.3642

Epoch 21/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 644us/step - accuracy: 0.8756 - loss: 0.3534 - val_accuracy: 0.8740 - val_loss: 0.3627

Epoch 22/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 667us/step - accuracy: 0.8729 - loss: 0.3582 - val_accuracy: 0.8731 - val_loss: 0.3617

Epoch 23/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 630us/step - accuracy: 0.8738 - loss: 0.3528 - val_accuracy: 0.8744 - val_loss: 0.3605

Epoch 24/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 633us/step - accuracy: 0.8731 - loss: 0.3524 - val_accuracy: 0.8750 - val_loss: 0.3600

Epoch 25/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 630us/step - accuracy: 0.8723 - loss: 0.3535 - val_accuracy: 0.8722 - val_loss: 0.3603

Epoch 26/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 664us/step - accuracy: 0.8740 - loss: 0.3530 - val_accuracy: 0.8730 - val_loss: 0.3592

Epoch 27/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 630us/step - accuracy: 0.8765 - loss: 0.3487 - val_accuracy: 0.8758 - val_loss: 0.3572

Epoch 28/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 631us/step - accuracy: 0.8767 - loss: 0.3494 - val_accuracy: 0.8733 - val_loss: 0.3575

Epoch 29/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 629us/step - accuracy: 0.8747 - loss: 0.3478 - val_accuracy: 0.8751 - val_loss: 0.3567

Epoch 30/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 664us/step - accuracy: 0.8772 - loss: 0.3462 - val_accuracy: 0.8737 - val_loss: 0.3563

Epoch 31/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 622us/step - accuracy: 0.8786 - loss: 0.3461 - val_accuracy: 0.8752 - val_loss: 0.3554

Epoch 32/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 636us/step - accuracy: 0.8790 - loss: 0.3402 - val_accuracy: 0.8757 - val_loss: 0.3543

Epoch 33/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 664us/step - accuracy: 0.8774 - loss: 0.3461 - val_accuracy: 0.8763 - val_loss: 0.3540

Epoch 34/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 635us/step - accuracy: 0.8779 - loss: 0.3409 - val_accuracy: 0.8754 - val_loss: 0.3529

Epoch 35/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 631us/step - accuracy: 0.8773 - loss: 0.3447 - val_accuracy: 0.8771 - val_loss: 0.3525

Epoch 36/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 628us/step - accuracy: 0.8781 - loss: 0.3440 - val_accuracy: 0.8747 - val_loss: 0.3538

Epoch 37/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 665us/step - accuracy: 0.8797 - loss: 0.3373 - val_accuracy: 0.8758 - val_loss: 0.3522

Epoch 38/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 631us/step - accuracy: 0.8797 - loss: 0.3387 - val_accuracy: 0.8771 - val_loss: 0.3509

Epoch 39/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 635us/step - accuracy: 0.8809 - loss: 0.3357 - val_accuracy: 0.8768 - val_loss: 0.3505

Epoch 40/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 662us/step - accuracy: 0.8813 - loss: 0.3379 - val_accuracy: 0.8778 - val_loss: 0.3502

Epoch 41/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 635us/step - accuracy: 0.8824 - loss: 0.3327 - val_accuracy: 0.8776 - val_loss: 0.3491

Epoch 42/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 635us/step - accuracy: 0.8821 - loss: 0.3399 - val_accuracy: 0.8774 - val_loss: 0.3498

Epoch 43/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 633us/step - accuracy: 0.8829 - loss: 0.3296 - val_accuracy: 0.8762 - val_loss: 0.3494

Epoch 44/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 664us/step - accuracy: 0.8803 - loss: 0.3410 - val_accuracy: 0.8787 - val_loss: 0.3496

Epoch 45/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 640us/step - accuracy: 0.8816 - loss: 0.3369 - val_accuracy: 0.8787 - val_loss: 0.3489

Epoch 46/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 633us/step - accuracy: 0.8837 - loss: 0.3309 - val_accuracy: 0.8791 - val_loss: 0.3471

Epoch 47/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 667us/step - accuracy: 0.8812 - loss: 0.3323 - val_accuracy: 0.8779 - val_loss: 0.3473

Epoch 48/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 630us/step - accuracy: 0.8833 - loss: 0.3327 - val_accuracy: 0.8798 - val_loss: 0.3457

Epoch 49/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 642us/step - accuracy: 0.8848 - loss: 0.3264 - val_accuracy: 0.8782 - val_loss: 0.3454

Epoch 50/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 640us/step - accuracy: 0.8814 - loss: 0.3334 - val_accuracy: 0.8788 - val_loss: 0.3474

Epoch 51/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 657us/step - accuracy: 0.8871 - loss: 0.3241 - val_accuracy: 0.8777 - val_loss: 0.3498

Epoch 52/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 640us/step - accuracy: 0.8857 - loss: 0.3252 - val_accuracy: 0.8791 - val_loss: 0.3445

Epoch 53/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 633us/step - accuracy: 0.8841 - loss: 0.3269 - val_accuracy: 0.8792 - val_loss: 0.3435

Epoch 54/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 667us/step - accuracy: 0.8840 - loss: 0.3285 - val_accuracy: 0.8780 - val_loss: 0.3443

Epoch 55/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 628us/step - accuracy: 0.8822 - loss: 0.3278 - val_accuracy: 0.8806 - val_loss: 0.3434

Epoch 56/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 636us/step - accuracy: 0.8858 - loss: 0.3212 - val_accuracy: 0.8797 - val_loss: 0.3434

Epoch 57/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 664us/step - accuracy: 0.8848 - loss: 0.3225 - val_accuracy: 0.8776 - val_loss: 0.3445

Epoch 58/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 634us/step - accuracy: 0.8858 - loss: 0.3225 - val_accuracy: 0.8805 - val_loss: 0.3446

Epoch 59/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 658us/step - accuracy: 0.8865 - loss: 0.3184 - val_accuracy: 0.8805 - val_loss: 0.3426

Epoch 60/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 754us/step - accuracy: 0.8878 - loss: 0.3153 - val_accuracy: 0.8788 - val_loss: 0.3415

Epoch 61/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 702us/step - accuracy: 0.8844 - loss: 0.3261 - val_accuracy: 0.8807 - val_loss: 0.3412

Epoch 62/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 683us/step - accuracy: 0.8875 - loss: 0.3162 - val_accuracy: 0.8796 - val_loss: 0.3397

Epoch 63/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 723us/step - accuracy: 0.8879 - loss: 0.3193 - val_accuracy: 0.8800 - val_loss: 0.3407

Epoch 64/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 657us/step - accuracy: 0.8880 - loss: 0.3146 - val_accuracy: 0.8815 - val_loss: 0.3404

Epoch 65/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 621us/step - accuracy: 0.8865 - loss: 0.3189 - val_accuracy: 0.8819 - val_loss: 0.3399

Epoch 66/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 642us/step - accuracy: 0.8874 - loss: 0.3179 - val_accuracy: 0.8823 - val_loss: 0.3383

Epoch 67/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 627us/step - accuracy: 0.8870 - loss: 0.3166 - val_accuracy: 0.8820 - val_loss: 0.3394

Epoch 68/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 611us/step - accuracy: 0.8886 - loss: 0.3124 - val_accuracy: 0.8824 - val_loss: 0.3373

Epoch 69/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 654us/step - accuracy: 0.8882 - loss: 0.3154 - val_accuracy: 0.8814 - val_loss: 0.3371

Epoch 70/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 618us/step - accuracy: 0.8890 - loss: 0.3168 - val_accuracy: 0.8806 - val_loss: 0.3365

Epoch 71/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 619us/step - accuracy: 0.8883 - loss: 0.3181 - val_accuracy: 0.8826 - val_loss: 0.3364

Epoch 72/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 644us/step - accuracy: 0.8900 - loss: 0.3126 - val_accuracy: 0.8832 - val_loss: 0.3359

Epoch 73/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 763us/step - accuracy: 0.8903 - loss: 0.3130 - val_accuracy: 0.8831 - val_loss: 0.3352

Epoch 74/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 715us/step - accuracy: 0.8897 - loss: 0.3063 - val_accuracy: 0.8833 - val_loss: 0.3349

Epoch 75/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 640us/step - accuracy: 0.8895 - loss: 0.3105 - val_accuracy: 0.8823 - val_loss: 0.3351

Epoch 76/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 632us/step - accuracy: 0.8915 - loss: 0.3067 - val_accuracy: 0.8826 - val_loss: 0.3342

Epoch 77/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 675us/step - accuracy: 0.8947 - loss: 0.3012 - val_accuracy: 0.8831 - val_loss: 0.3343

Epoch 78/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 774us/step - accuracy: 0.8910 - loss: 0.3074 - val_accuracy: 0.8825 - val_loss: 0.3340

Epoch 79/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 689us/step - accuracy: 0.8908 - loss: 0.3071 - val_accuracy: 0.8813 - val_loss: 0.3347

Epoch 80/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 683us/step - accuracy: 0.8951 - loss: 0.3009 - val_accuracy: 0.8812 - val_loss: 0.3364

Epoch 81/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 627us/step - accuracy: 0.8899 - loss: 0.3106 - val_accuracy: 0.8847 - val_loss: 0.3334

Epoch 82/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 940us/step - accuracy: 0.8915 - loss: 0.3076 - val_accuracy: 0.8839 - val_loss: 0.3352

Epoch 83/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 721us/step - accuracy: 0.8944 - loss: 0.3001 - val_accuracy: 0.8832 - val_loss: 0.3347

Epoch 84/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 616us/step - accuracy: 0.8893 - loss: 0.3138 - val_accuracy: 0.8837 - val_loss: 0.3319

Epoch 85/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 734us/step - accuracy: 0.8935 - loss: 0.3026 - val_accuracy: 0.8820 - val_loss: 0.3331

Epoch 86/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 670us/step - accuracy: 0.8904 - loss: 0.3075 - val_accuracy: 0.8852 - val_loss: 0.3328

Epoch 87/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 763us/step - accuracy: 0.8910 - loss: 0.3053 - val_accuracy: 0.8841 - val_loss: 0.3323

Epoch 88/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 652us/step - accuracy: 0.8920 - loss: 0.3057 - val_accuracy: 0.8856 - val_loss: 0.3313

Epoch 89/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 674us/step - accuracy: 0.8966 - loss: 0.2946 - val_accuracy: 0.8841 - val_loss: 0.3302

Epoch 90/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 648us/step - accuracy: 0.8925 - loss: 0.2990 - val_accuracy: 0.8844 - val_loss: 0.3294

Epoch 91/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 647us/step - accuracy: 0.8935 - loss: 0.2994 - val_accuracy: 0.8852 - val_loss: 0.3295

Epoch 92/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 690us/step - accuracy: 0.8933 - loss: 0.2998 - val_accuracy: 0.8841 - val_loss: 0.3289

Epoch 93/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 644us/step - accuracy: 0.8957 - loss: 0.2972 - val_accuracy: 0.8842 - val_loss: 0.3298

Epoch 94/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 788us/step - accuracy: 0.8934 - loss: 0.2990 - val_accuracy: 0.8846 - val_loss: 0.3285

Epoch 95/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 694us/step - accuracy: 0.8927 - loss: 0.2969 - val_accuracy: 0.8825 - val_loss: 0.3298

Epoch 96/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 709us/step - accuracy: 0.8941 - loss: 0.2969 - val_accuracy: 0.8846 - val_loss: 0.3274

Epoch 97/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 630us/step - accuracy: 0.8965 - loss: 0.2949 - val_accuracy: 0.8862 - val_loss: 0.3274

Epoch 98/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 666us/step - accuracy: 0.8942 - loss: 0.2985 - val_accuracy: 0.8867 - val_loss: 0.3277

Epoch 99/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 632us/step - accuracy: 0.8961 - loss: 0.2948 - val_accuracy: 0.8840 - val_loss: 0.3264

Epoch 100/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 635us/step - accuracy: 0.8935 - loss: 0.2980 - val_accuracy: 0.8838 - val_loss: 0.3307

Epoch 101/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 670us/step - accuracy: 0.8957 - loss: 0.2926 - val_accuracy: 0.8868 - val_loss: 0.3271

Epoch 102/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 678us/step - accuracy: 0.8960 - loss: 0.2967 - val_accuracy: 0.8852 - val_loss: 0.3273

Epoch 103/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 696us/step - accuracy: 0.8969 - loss: 0.2929 - val_accuracy: 0.8848 - val_loss: 0.3275

Epoch 104/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 719us/step - accuracy: 0.8977 - loss: 0.2892 - val_accuracy: 0.8860 - val_loss: 0.3261

Epoch 105/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 688us/step - accuracy: 0.8974 - loss: 0.2900 - val_accuracy: 0.8860 - val_loss: 0.3254

Epoch 106/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 712us/step - accuracy: 0.8978 - loss: 0.2881 - val_accuracy: 0.8842 - val_loss: 0.3262

Epoch 107/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 802us/step - accuracy: 0.8944 - loss: 0.2930 - val_accuracy: 0.8861 - val_loss: 0.3258

Epoch 108/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 700us/step - accuracy: 0.8960 - loss: 0.2904 - val_accuracy: 0.8857 - val_loss: 0.3250

Epoch 109/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 631us/step - accuracy: 0.8959 - loss: 0.2908 - val_accuracy: 0.8858 - val_loss: 0.3235

Epoch 110/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 672us/step - accuracy: 0.8985 - loss: 0.2875 - val_accuracy: 0.8856 - val_loss: 0.3240

Epoch 111/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 760us/step - accuracy: 0.8975 - loss: 0.2918 - val_accuracy: 0.8867 - val_loss: 0.3241

Epoch 112/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 767us/step - accuracy: 0.8988 - loss: 0.2867 - val_accuracy: 0.8852 - val_loss: 0.3238

Epoch 113/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 680us/step - accuracy: 0.9007 - loss: 0.2818 - val_accuracy: 0.8862 - val_loss: 0.3234

Epoch 114/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 735us/step - accuracy: 0.8966 - loss: 0.2916 - val_accuracy: 0.8862 - val_loss: 0.3249

Epoch 115/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 686us/step - accuracy: 0.8970 - loss: 0.2867 - val_accuracy: 0.8858 - val_loss: 0.3228

Epoch 116/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 756us/step - accuracy: 0.9012 - loss: 0.2803 - val_accuracy: 0.8865 - val_loss: 0.3221

Epoch 117/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 636us/step - accuracy: 0.8985 - loss: 0.2827 - val_accuracy: 0.8875 - val_loss: 0.3240

Epoch 118/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 637us/step - accuracy: 0.8999 - loss: 0.2830 - val_accuracy: 0.8864 - val_loss: 0.3235

Epoch 119/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 674us/step - accuracy: 0.8982 - loss: 0.2839 - val_accuracy: 0.8874 - val_loss: 0.3206

Epoch 120/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 614us/step - accuracy: 0.8994 - loss: 0.2841 - val_accuracy: 0.8871 - val_loss: 0.3207

Epoch 121/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 639us/step - accuracy: 0.8990 - loss: 0.2829 - val_accuracy: 0.8862 - val_loss: 0.3218

Epoch 122/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 608us/step - accuracy: 0.9003 - loss: 0.2797 - val_accuracy: 0.8864 - val_loss: 0.3210

Epoch 123/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 619us/step - accuracy: 0.9002 - loss: 0.2831 - val_accuracy: 0.8878 - val_loss: 0.3202

Epoch 124/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 644us/step - accuracy: 0.8992 - loss: 0.2800 - val_accuracy: 0.8874 - val_loss: 0.3209

Epoch 125/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 645us/step - accuracy: 0.9004 - loss: 0.2823 - val_accuracy: 0.8863 - val_loss: 0.3198

Epoch 126/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 741us/step - accuracy: 0.9024 - loss: 0.2812 - val_accuracy: 0.8873 - val_loss: 0.3187

Epoch 127/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 754us/step - accuracy: 0.9025 - loss: 0.2769 - val_accuracy: 0.8876 - val_loss: 0.3198

Epoch 128/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 643us/step - accuracy: 0.9001 - loss: 0.2800 - val_accuracy: 0.8864 - val_loss: 0.3217

Epoch 129/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 642us/step - accuracy: 0.8981 - loss: 0.2833 - val_accuracy: 0.8878 - val_loss: 0.3192

Epoch 130/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 679us/step - accuracy: 0.9035 - loss: 0.2754 - val_accuracy: 0.8876 - val_loss: 0.3181

Epoch 131/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 649us/step - accuracy: 0.9009 - loss: 0.2747 - val_accuracy: 0.8855 - val_loss: 0.3196

Epoch 132/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 670us/step - accuracy: 0.8997 - loss: 0.2833 - val_accuracy: 0.8870 - val_loss: 0.3223

Epoch 133/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 659us/step - accuracy: 0.9005 - loss: 0.2770 - val_accuracy: 0.8878 - val_loss: 0.3177

Epoch 134/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 642us/step - accuracy: 0.9011 - loss: 0.2775 - val_accuracy: 0.8858 - val_loss: 0.3205

Epoch 135/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 679us/step - accuracy: 0.9034 - loss: 0.2725 - val_accuracy: 0.8879 - val_loss: 0.3173

Epoch 136/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 641us/step - accuracy: 0.9007 - loss: 0.2757 - val_accuracy: 0.8885 - val_loss: 0.3179

Epoch 137/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 678us/step - accuracy: 0.9034 - loss: 0.2720 - val_accuracy: 0.8887 - val_loss: 0.3173

Epoch 138/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 638us/step - accuracy: 0.9036 - loss: 0.2741 - val_accuracy: 0.8880 - val_loss: 0.3174

Epoch 139/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 635us/step - accuracy: 0.9032 - loss: 0.2731 - val_accuracy: 0.8889 - val_loss: 0.3182

Epoch 140/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 676us/step - accuracy: 0.9032 - loss: 0.2736 - val_accuracy: 0.8888 - val_loss: 0.3167

Epoch 141/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 641us/step - accuracy: 0.9067 - loss: 0.2677 - val_accuracy: 0.8888 - val_loss: 0.3164

Epoch 142/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 672us/step - accuracy: 0.9028 - loss: 0.2737 - val_accuracy: 0.8874 - val_loss: 0.3164

Epoch 143/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 630us/step - accuracy: 0.9063 - loss: 0.2669 - val_accuracy: 0.8892 - val_loss: 0.3177

Epoch 144/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 677us/step - accuracy: 0.9046 - loss: 0.2703 - val_accuracy: 0.8894 - val_loss: 0.3161

Epoch 145/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 635us/step - accuracy: 0.9046 - loss: 0.2701 - val_accuracy: 0.8894 - val_loss: 0.3158

Epoch 146/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 672us/step - accuracy: 0.9042 - loss: 0.2684 - val_accuracy: 0.8887 - val_loss: 0.3156

Epoch 147/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 633us/step - accuracy: 0.9039 - loss: 0.2718 - val_accuracy: 0.8888 - val_loss: 0.3156

Epoch 148/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 643us/step - accuracy: 0.9039 - loss: 0.2725 - val_accuracy: 0.8893 - val_loss: 0.3145

Epoch 149/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 664us/step - accuracy: 0.9037 - loss: 0.2689 - val_accuracy: 0.8875 - val_loss: 0.3156

Epoch 150/200

[1m1500/1500[0m [32m━━━━━━━━━━━━━━━━━━━━[0m[37m[0m [1m1s[0m 640us/step - accuracy: 0.9057 - loss: 0.2657 - val_accuracy: 0.8903 - val_loss: 0.3149

Epoch 151/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 657us/step - accuracy: 0.9060 - loss: 0.2613 - val_accuracy: 0.8888 - val_loss: 0.3158

Epoch 152/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 646us/step - accuracy: 0.9032 - loss: 0.2705 - val_accuracy: 0.8876 - val_loss: 0.3145

Epoch 153/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 631us/step - accuracy: 0.9053 - loss: 0.2705 - val_accuracy: 0.8898 - val_loss: 0.3135

Epoch 154/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 670us/step - accuracy: 0.9052 - loss: 0.2669 - val_accuracy: 0.8898 - val_loss: 0.3129

Epoch 155/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 640us/step - accuracy: 0.9067 - loss: 0.2626 - val_accuracy: 0.8903 - val_loss: 0.3133

Epoch 156/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 671us/step - accuracy: 0.9096 - loss: 0.2575 - val_accuracy: 0.8887 - val_loss: 0.3137

Epoch 157/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 642us/step - accuracy: 0.9069 - loss: 0.2619 - val_accuracy: 0.8900 - val_loss: 0.3132

Epoch 158/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 671us/step - accuracy: 0.9031 - loss: 0.2712 - val_accuracy: 0.8898 - val_loss: 0.3136

Epoch 159/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 634us/step - accuracy: 0.9085 - loss: 0.2602 - val_accuracy: 0.8896 - val_loss: 0.3118

Epoch 160/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 636us/step - accuracy: 0.9056 - loss: 0.2638 - val_accuracy: 0.8892 - val_loss: 0.3116

Epoch 161/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 660us/step - accuracy: 0.9072 - loss: 0.2642 - val_accuracy: 0.8882 - val_loss: 0.3132

Epoch 162/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 638us/step - accuracy: 0.9080 - loss: 0.2577 - val_accuracy: 0.8898 - val_loss: 0.3121

Epoch 163/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 664us/step - accuracy: 0.9039 - loss: 0.2642 - val_accuracy: 0.8914 - val_loss: 0.3132

Epoch 164/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 641us/step - accuracy: 0.9064 - loss: 0.2631 - val_accuracy: 0.8875 - val_loss: 0.3144

Epoch 165/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 666us/step - accuracy: 0.9049 - loss: 0.2660 - val_accuracy: 0.8900 - val_loss: 0.3125

Epoch 166/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 639us/step - accuracy: 0.9069 - loss: 0.2596 - val_accuracy: 0.8903 - val_loss: 0.3115

Epoch 167/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 639us/step - accuracy: 0.9079 - loss: 0.2628 - val_accuracy: 0.8888 - val_loss: 0.3115

Epoch 168/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 676us/step - accuracy: 0.9086 - loss: 0.2576 - val_accuracy: 0.8898 - val_loss: 0.3108

Epoch 169/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 636us/step - accuracy: 0.9079 - loss: 0.2591 - val_accuracy: 0.8907 - val_loss: 0.3109

Epoch 170/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 665us/step - accuracy: 0.9082 - loss: 0.2593 - val_accuracy: 0.8903 - val_loss: 0.3103

Epoch 171/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 635us/step - accuracy: 0.9082 - loss: 0.2573 - val_accuracy: 0.8892 - val_loss: 0.3132

Epoch 172/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 676us/step - accuracy: 0.9096 - loss: 0.2506 - val_accuracy: 0.8886 - val_loss: 0.3149

Epoch 173/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 638us/step - accuracy: 0.9075 - loss: 0.2577 - val_accuracy: 0.8888 - val_loss: 0.3125

Epoch 174/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 662us/step - accuracy: 0.9105 - loss: 0.2516 - val_accuracy: 0.8900 - val_loss: 0.3094

Epoch 175/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 644us/step - accuracy: 0.9099 - loss: 0.2550 - val_accuracy: 0.8917 - val_loss: 0.3094

Epoch 176/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 640us/step - accuracy: 0.9086 - loss: 0.2581 - val_accuracy: 0.8914 - val_loss: 0.3103

Epoch 177/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 667us/step - accuracy: 0.9083 - loss: 0.2560 - val_accuracy: 0.8919 - val_loss: 0.3097

Epoch 178/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 634us/step - accuracy: 0.9101 - loss: 0.2537 - val_accuracy: 0.8898 - val_loss: 0.3097

Epoch 179/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 675us/step - accuracy: 0.9108 - loss: 0.2519 - val_accuracy: 0.8906 - val_loss: 0.3093

Epoch 180/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 635us/step - accuracy: 0.9100 - loss: 0.2573 - val_accuracy: 0.8888 - val_loss: 0.3110

Epoch 181/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 675us/step - accuracy: 0.9092 - loss: 0.2569 - val_accuracy: 0.8898 - val_loss: 0.3094

Epoch 182/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 641us/step - accuracy: 0.9105 - loss: 0.2532 - val_accuracy: 0.8915 - val_loss: 0.3101

Epoch 183/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 669us/step - accuracy: 0.9105 - loss: 0.2541 - val_accuracy: 0.8910 - val_loss: 0.3084

Epoch 184/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 633us/step - accuracy: 0.9114 - loss: 0.2488 - val_accuracy: 0.8911 - val_loss: 0.3088

Epoch 185/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 632us/step - accuracy: 0.9113 - loss: 0.2489 - val_accuracy: 0.8905 - val_loss: 0.3116

Epoch 186/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 665us/step - accuracy: 0.9130 - loss: 0.2464 - val_accuracy: 0.8903 - val_loss: 0.3098

Epoch 187/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 639us/step - accuracy: 0.9117 - loss: 0.2522 - val_accuracy: 0.8913 - val_loss: 0.3082

Epoch 188/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 668us/step - accuracy: 0.9117 - loss: 0.2519 - val_accuracy: 0.8922 - val_loss: 0.3082

Epoch 189/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 640us/step - accuracy: 0.9110 - loss: 0.2506 - val_accuracy: 0.8916 - val_loss: 0.3080

Epoch 190/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 674us/step - accuracy: 0.9127 - loss: 0.2485 - val_accuracy: 0.8908 - val_loss: 0.3086

Epoch 191/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 647us/step - accuracy: 0.9110 - loss: 0.2502 - val_accuracy: 0.8902 - val_loss: 0.3109

Epoch 192/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 669us/step - accuracy: 0.9093 - loss: 0.2524 - val_accuracy: 0.8917 - val_loss: 0.3068

Epoch 193/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 661us/step - accuracy: 0.9131 - loss: 0.2457 - val_accuracy: 0.8907 - val_loss: 0.3071

Epoch 194/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 673us/step - accuracy: 0.9103 - loss: 0.2501 - val_accuracy: 0.8912 - val_loss: 0.3087

Epoch 195/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 644us/step - accuracy: 0.9095 - loss: 0.2507 - val_accuracy: 0.8916 - val_loss: 0.3061

Epoch 196/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 671us/step - accuracy: 0.9125 - loss: 0.2480 - val_accuracy: 0.8925 - val_loss: 0.3065

Epoch 197/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 639us/step - accuracy: 0.9156 - loss: 0.2419 - val_accuracy: 0.8925 - val_loss: 0.3070

Epoch 198/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 668us/step - accuracy: 0.9125 - loss: 0.2484 - val_accuracy: 0.8927 - val_loss: 0.3075

Epoch 199/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 638us/step - accuracy: 0.9128 - loss: 0.2463 - val_accuracy: 0.8926 - val_loss: 0.3059

Epoch 200/200

1500/1500 ━━━━━━━━━━━━━━━━━━━━ 1s 664us/step - accuracy: 0.9145 - loss: 0.2444 - val_accuracy: 0.8902 - val_loss: 0.3075

print(history.history.keys())

### Output

dict_keys(['accuracy', 'loss', 'val_accuracy', 'val_loss'])
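The per-epoch lists stored under these keys can be used to find the epoch with the lowest validation loss. The following is a minimal sketch under stated assumptions: `history_dict` stands in for `history.history` and holds only the values copied from epochs 196 to 200 of the log above, and `best_epoch` is a hypothetical helper, not part of Keras.

```python
import numpy as np

# Stand-in for history.history (assumption): only epochs 196-200 from the log.
history_dict = {
    "loss": [0.2480, 0.2419, 0.2484, 0.2463, 0.2444],
    "val_loss": [0.3065, 0.3070, 0.3075, 0.3059, 0.3075],
}
FIRST_EPOCH = 196  # epoch number of the first entry in the excerpt


def best_epoch(values, first_epoch=1):
    """Return (epoch number, value) of the smallest entry in values.

    Hypothetical helper for illustration; ties resolve to the earliest epoch.
    """
    idx = int(np.argmin(values))
    return first_epoch + idx, values[idx]


epoch, value = best_epoch(history_dict["val_loss"], FIRST_EPOCH)
print(epoch, value)  # 199 0.3059
```

With the full `history.history['val_loss']` list, `first_epoch` would stay at its default of 1, since the list starts at the first epoch.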

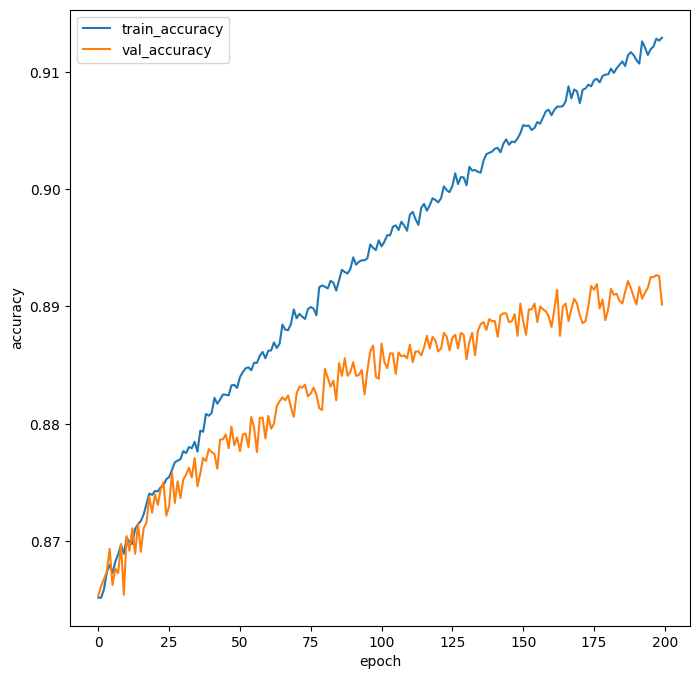

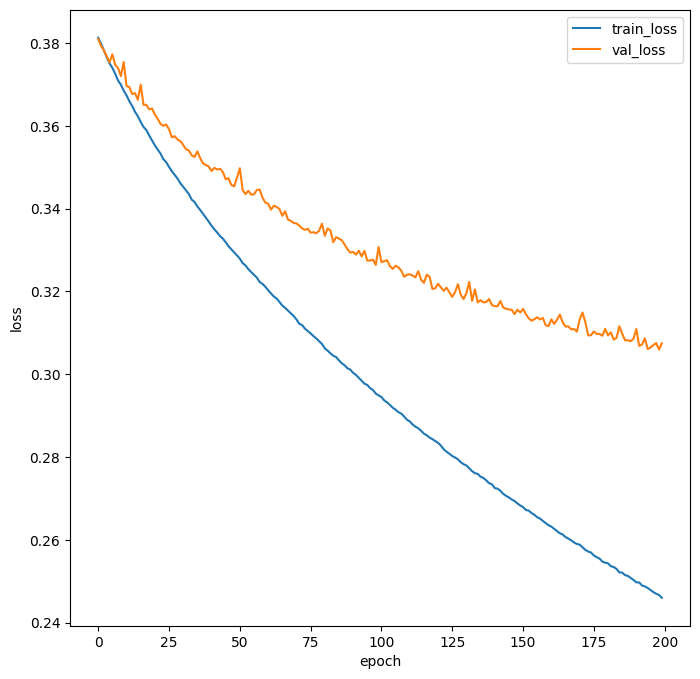

plt.figure(figsize=(8, 8))

plt.plot(history.history['loss'])

plt.plot(history.history['val_loss'])

plt.ylabel('loss')

plt.xlabel('epoch')

plt.legend(['train_loss', 'val_loss'])

plt.show()

### 출력 결과

plt.figure(figsize=(8, 8))

plt.plot(history.history['accuracy'])

plt.plot(history.history['val_accuracy'])

plt.ylabel('accuracy')

plt.xlabel('epoch')

plt.legend(['train_accuracy', 'val_accuracy'])

plt.show()

### 출력 결과
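One way to read the loss and accuracy curves together is to look at the gap between each training metric and its validation counterpart. The sketch below assumes a dict shaped like `history.history`; `generalization_gap` is a hypothetical helper, and the sample values are the epoch 200 numbers from the log above.

```python
def generalization_gap(history_dict, metric):
    """Return the per-epoch difference (train - validation) for one metric.

    Hypothetical helper for illustration; expects the keys `metric` and
    `'val_' + metric`, as in the dict_keys output of history.history.
    """
    train = history_dict[metric]
    val = history_dict["val_" + metric]
    return [round(t - v, 4) for t, v in zip(train, val)]


# Epoch 200 values copied from the training log (single-epoch excerpt).
last_epoch = {
    "accuracy": [0.9145],
    "val_accuracy": [0.8902],
    "loss": [0.2444],
    "val_loss": [0.3075],
}

acc_gap = generalization_gap(last_epoch, "accuracy")
loss_gap = generalization_gap(last_epoch, "loss")
print(acc_gap, loss_gap)
```

Here the training accuracy is about 0.024 higher than the validation accuracy and the training loss is about 0.063 lower than the validation loss, which may indicate mild overfitting by the end of training.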