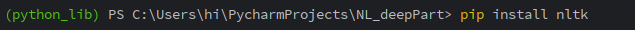

Prerequisites: installation

pip install nltk

Install NLTK (Natural Language Toolkit), a natural language processing library.

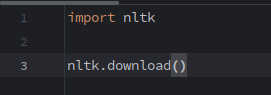

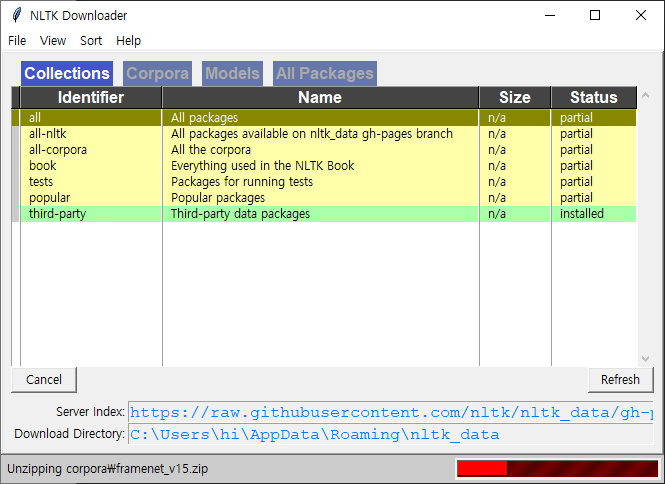

Select all -> download all packages.

Completed downloads are shown in green.

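Tokenization

In natural language processing, corpus data obtained by crawling or similar means has usually not yet been preprocessed for the task at hand.

That data must be tokenized, cleaned, and normalized to suit its intended use.

Splitting a given corpus into units called tokens is called tokenization.

The token unit varies with the situation, but a token is usually defined as a meaningful unit.

Tokenization can be performed with NLTK and KoNLPy.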
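As a rough illustration of the three steps, the sketch below uses only Python's standard `re` module rather than NLTK or KoNLPy. The function name `simple_preprocess`, the token pattern, and the one-character cutoff are assumptions chosen for this example, not rules taken from either library.

```python
import re

def simple_preprocess(text):
    """Toy pipeline: normalize case, tokenize, then clean very short tokens."""
    normalized = text.lower()                        # normalization: fold case
    tokens = re.findall(r"[a-z0-9']+", normalized)   # tokenization: runs of letters, digits, apostrophes
    cleaned = [t for t in tokens if len(t) > 1]      # cleaning: drop one-character tokens
    return cleaned

print(simple_preprocess("The crawler fetched 3 pages; it's a RAW corpus!"))
# ['the', 'crawler', 'fetched', 'pages', "it's", 'raw', 'corpus']
```

A real pipeline would normally rely on a library tokenizer; the point here is only the order of the steps.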

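Natural language tokenizing tools

In natural language processing, the first step is usually to split the text into units.

For example, to make a prediction from a movie review, a sentence can be split into words and analyzed word by word.

Cutting the input to be predicted into a specific basic unit in this way is called tokenizing.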
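To make the choice of unit concrete, here is a small sketch that cuts the same made-up review into sentences and into words. It uses plain regular expressions as a stand-in for a library tokenizer; the review text and both patterns are assumptions for illustration only.

```python
import re

review = "The plot was thin. The acting, however, was superb!"

# Sentence-level units: split on whitespace that follows ., ! or ?
sentences = re.split(r"(?<=[.!?])\s+", review)

# Word-level units: runs of word characters, punctuation discarded
words = re.findall(r"\w+", review)

print(sentences)  # ['The plot was thin.', 'The acting, however, was superb!']
print(words)      # ['The', 'plot', 'was', 'thin', 'The', 'acting', 'however', 'was', 'superb']
```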

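English tokenizing libraries

NLTK (Natural Language Toolkit)

A library widely used in Python for preprocessing English text.

It provides more than 50 corpus resources that can be used to analyze English text.

spaCy

An English text preprocessing library built mainly for commercial use rather than for education or research.

It provides natural language preprocessing modules for 8 languages, including English, and preprocesses text quickly.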

NLTK tokenizing

Word-level tokenizing: splitting text data into individual words.

from nltk.tokenize import word_tokenize

Sentence-level tokenizing: splitting text data into sentences.

from nltk.tokenize import sent_tokenize

from nltk.tokenize import sent_tokenize, word_tokenize

# The first literal ends with a space so the two parts do not run together
sentence = 'Natural language processing (NLP) is a subfield of computer science, information engineering '\
           'and artificial intelligence concerned with the interactions between computer and human language. it is a good day'

print(sent_tokenize(sentence))
print(len(sent_tokenize(sentence)))
print(word_tokenize(sentence))
print()

# Part-of-speech tagging
from nltk.tag import pos_tag

sentence = 'Smith woodhouse, handsome, clever, and rich, with a comfortable home and happy disposition'
tagged_list = pos_tag(word_tokenize(sentence))
print(tagged_list)
print()

'''
NNP : proper noun, singular
NN : noun, singular
VB : verb, base form
VBP : verb, non-3rd person singular present
TO : "to"
DT : determiner
CC : coordinating conjunction
JJ : adjective
IN : preposition or subordinating conjunction
'''

# Keep only the tokens tagged as singular common nouns
nouns = [w[0] for w in tagged_list if w[1] == 'NN']
print(nouns)

['Natural language processing (NLP) is a subfield of computer science, information engineering and artificial intelligence concerned with the interactions between computer and human language.', 'it is a good day']

2

['Natural', 'language', 'processing', '(', 'NLP', ')', 'is', 'a', 'subfield', 'of', 'computer', 'science', ',', 'information', 'engineering', 'and', 'artificial', 'intelligence', 'concerned', 'with', 'the', 'interactions', 'between', 'computer', 'and', 'human', 'language', '.', 'it', 'is', 'a', 'good', 'day']

[('Smith', 'NNP'), ('woodhouse', 'NN'), (',', ','), ('handsome', 'NN'), (',', ','), ('clever', 'NN'), (',', ','), ('and', 'CC'), ('rich', 'JJ'), (',', ','), ('with', 'IN'), ('a', 'DT'), ('comfortable', 'JJ'), ('home', 'NN'), ('and', 'CC'), ('happy', 'JJ'), ('disposition', 'NN')]

['woodhouse', 'handsome', 'clever', 'home', 'disposition']
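Filtering on the exact tag `'NN'` skips proper nouns such as `'Smith'` (tagged `NNP`). A possible variation, sketched here on the tagged output copied from above so that it runs without NLTK, is to match on a tag prefix instead. The helper name `words_with_tag_prefix` is hypothetical.

```python
# Tagged tokens copied from the pos_tag output shown above
tagged_list = [('Smith', 'NNP'), ('woodhouse', 'NN'), (',', ','), ('handsome', 'NN'),
               (',', ','), ('clever', 'NN'), (',', ','), ('and', 'CC'), ('rich', 'JJ'),
               (',', ','), ('with', 'IN'), ('a', 'DT'), ('comfortable', 'JJ'),
               ('home', 'NN'), ('and', 'CC'), ('happy', 'JJ'), ('disposition', 'NN')]

def words_with_tag_prefix(tagged_tokens, prefix):
    """Return the words whose POS tag starts with the given prefix."""
    return [word for word, tag in tagged_tokens if tag.startswith(prefix)]

all_nouns = words_with_tag_prefix(tagged_list, 'NN')   # NN, NNP, NNS, NNPS
adjectives = words_with_tag_prefix(tagged_list, 'JJ')  # JJ, JJR, JJS

print(all_nouns)   # ['Smith', 'woodhouse', 'handsome', 'clever', 'home', 'disposition']
print(adjectives)  # ['rich', 'comfortable', 'happy']
```

Note that the tagger labelled 'handsome' and 'clever' as nouns in this sentence, so a prefix filter inherits those tagging errors.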

Stopwords

from nltk.tokenize import word_tokenize
from nltk.corpus import stopwords

print(stopwords.words('english'))
print(len(stopwords.words('english')))
print()

sentence = 'Family is not an important thing it is everything'
word_tokens = word_tokenize(sentence)

# Use a separate name so the imported stopwords module is not shadowed
stop_words = set(stopwords.words('english'))

result = []
for w in word_tokens:
    if w not in stop_words:
        result.append(w)

print(result)

['a', 'about', 'above', 'after', 'again', 'against', 'ain', 'all', 'am', 'an', 'and', 'any', 'are', 'aren', "aren't", 'as', 'at', 'be', 'because', 'been', 'before', 'being', 'below', 'between', 'both', 'but', 'by', 'can', 'couldn', "couldn't", 'd', 'did', 'didn', "didn't", 'do', 'does', 'doesn', "doesn't", 'doing', 'don', "don't", 'down', 'during', 'each', 'few', 'for', 'from', 'further', 'had', 'hadn', "hadn't", 'has', 'hasn', "hasn't", 'have', 'haven', "haven't", 'having', 'he', "he'd", "he'll", 'her', 'here', 'hers', 'herself', "he's", 'him', 'himself', 'his', 'how', 'i', "i'd", 'if', "i'll", "i'm", 'in', 'into', 'is', 'isn', "isn't", 'it', "it'd", "it'll", "it's", 'its', 'itself', "i've", 'just', 'll', 'm', 'ma', 'me', 'mightn', "mightn't", 'more', 'most', 'mustn', "mustn't", 'my', 'myself', 'needn', "needn't", 'no', 'nor', 'not', 'now', 'o', 'of', 'off', 'on', 'once', 'only', 'or', 'other', 'our', 'ours', 'ourselves', 'out', 'over', 'own', 're', 's', 'same', 'shan', "shan't", 'she', "she'd", "she'll", "she's", 'should', 'shouldn', "shouldn't", "should've", 'so', 'some', 'such', 't', 'than', 'that', "that'll", 'the', 'their', 'theirs', 'them', 'themselves', 'then', 'there', 'these', 'they', "they'd", "they'll", "they're", "they've", 'this', 'those', 'through', 'to', 'too', 'under', 'until', 'up', 've', 'very', 'was', 'wasn', "wasn't", 'we', "we'd", "we'll", "we're", 'were', 'weren', "weren't", "we've", 'what', 'when', 'where', 'which', 'while', 'who', 'whom', 'why', 'will', 'with', 'won', "won't", 'wouldn', "wouldn't", 'y', 'you', "you'd", "you'll", 'your', "you're", 'yours', 'yourself', 'yourselves', "you've"]

198

['Family', 'important', 'thing', 'everything']

RegexpTokenizer

import nltk

print(nltk.corpus.gutenberg.fileids())

print()

emma_txt = nltk.corpus.gutenberg.raw('austen-emma.txt')

print(emma_txt)

print()

from nltk.tokenize import RegexpTokenizer

rt = RegexpTokenizer(r'[\w]+') # \w matches one word character (letter, digit, or underscore)

print(rt.tokenize(emma_txt[:200]))

['austen-emma.txt', 'austen-persuasion.txt', 'austen-sense.txt', 'bible-kjv.txt', 'blake-poems.txt', 'bryant-stories.txt', 'burgess-busterbrown.txt', 'carroll-alice.txt', 'chesterton-ball.txt', 'chesterton-brown.txt', 'chesterton-thursday.txt', 'edgeworth-parents.txt', 'melville-moby_dick.txt', 'milton-paradise.txt', 'shakespeare-caesar.txt', 'shakespeare-hamlet.txt', 'shakespeare-macbeth.txt', 'whitman-leaves.txt']

[Emma by Jane Austen 1816]

VOLUME I

CHAPTER I

Emma Woodhouse, handsome, clever, and rich, with a comfortable home

and happy disposition, seemed to unite some of the best blessings

of existence; and had lived nearly twenty-one years in the world

with very little to distress or vex her.

She was the youngest of the two daughters of a most affectionate,

indulgent father; and had, in consequence of her sister's marriage,

been mistress of his house from a very early period. Her mother

had died too long ago for her to have more than an indistinct

remembrance of her caresses; and her place had been supplied

by an excellent woman as governess, who had fallen little short

of a mother in affection.

Sixteen years had Miss Taylor been in Mr. Woodhouse's family,

less as a governess than a friend, very fond of both daughters,

but particularly of Emma. Between _them_ it was more the intimacy

of sisters. Even before Miss Taylor had ceased to hold the nominal

office of governess, the mildness of her temper had hardly allowed

her to impose any restraint; and the shadow of authority being

now long passed away, they had been living together as friend and

friend very mutually attached, and Emma doing just what she liked;

highly esteeming Miss Taylor's judgment, but directed chiefly by

her own.

...

...

In this state of suspense they were befriended, not by any sudden

illumination of Mr. Woodhouse's mind, or any wonderful change of his

nervous system, but by the operation of the same system in another way.--

Mrs. Weston's poultry-house was robbed one night of all her turkeys--

evidently by the ingenuity of man. Other poultry-yards in the

neighbourhood also suffered.--Pilfering was _housebreaking_ to

Mr. Woodhouse's fears.--He was very uneasy; and but for the sense

of his son-in-law's protection, would have been under wretched alarm

every night of his life. The strength, resolution, and presence

of mind of the Mr. Knightleys, commanded his fullest dependence.

While either of them protected him and his, Hartfield was safe.--

But Mr. John Knightley must be in London again by the end of the

first week in November.

The result of this distress was, that, with a much more voluntary,

cheerful consent than his daughter had ever presumed to hope for at

the moment, she was able to fix her wedding-day--and Mr. Elton was

called on, within a month from the marriage of Mr. and Mrs. Robert

Martin, to join the hands of Mr. Knightley and Miss Woodhouse.

The wedding was very much like other weddings, where the parties

have no taste for finery or parade; and Mrs. Elton, from the

particulars detailed by her husband, thought it all extremely shabby,

and very inferior to her own.--"Very little white satin, very few

lace veils; a most pitiful business!--Selina would stare when she

heard of it."--But, in spite of these deficiencies, the wishes,

the hopes, the confidence, the predictions of the small band

of true friends who witnessed the ceremony, were fully answered

in the perfect happiness of the union.

FINIS

['Emma', 'by', 'Jane', 'Austen', '1816', 'VOLUME', 'I', 'CHAPTER', 'I', 'Emma', 'Woodhouse', 'handsome', 'clever', 'and', 'rich', 'with', 'a', 'comfortable', 'home', 'and', 'happy', 'disposition', 'seemed', 'to', 'unite', 'some', 'of', 'the', 'best', 'blessings', 'of', 'existence', 'an']
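With its default settings, `RegexpTokenizer` returns the non-overlapping matches of its pattern, which behaves much like calling `re.findall` with the same pattern. The sketch below uses only the standard `re` module to show how the choice of pattern changes the tokens; the sample text is an assumption pieced together from phrases in the excerpt above.

```python
import re

text = "Emma Woodhouse, handsome, clever, and rich; her sister's marriage"

# \w+ treats the apostrophe as a separator, so "sister's" is split in two
word_chars_only = re.findall(r"\w+", text)

# Adding ' to the character class keeps contractions and possessives whole
with_apostrophes = re.findall(r"[\w']+", text)

print(word_chars_only)
# ['Emma', 'Woodhouse', 'handsome', 'clever', 'and', 'rich', 'her', 'sister', 's', 'marriage']
print(with_apostrophes)
# ['Emma', 'Woodhouse', 'handsome', 'clever', 'and', 'rich', 'her', "sister's", 'marriage']
```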

Sorting after tokenization

import numpy as np

from nltk.tokenize import sent_tokenize, word_tokenize

from nltk.corpus import stopwords

# Note: there is no space after 'crazy.' at the end of the fourth line, so the last two
# sentences are joined and 'crazy.the' appears as a single token in the output below.
raw_text = 'a barber is a person. a barber is good person. a barber is huge person. '\
           'he knew a secret! The secret he kept is huge secret. Huge secret. '\
           'His barber kept his word. a barber kept his word. His barber kept his secret. '\
           'But keeping and keeping such a huge secret to himself was driving the barber crazy.'\
           'the barber went up a huge mountain.'

sentences = sent_tokenize(raw_text)

print(sentences)

'''

['a barber is a person.', 'a barber is good person.', 'a barber is huge person.', 'he knew a secret!', 'The secret he kept is huge secret.', 'Huge secret.', 'His barber kept his word.', 'a barber kept his word.', 'His barber kept his secret.', 'But keeping and keeping such a huge secret to himself was driving the barber crazy.the barber went up a huge mountain.']

'''

vocab = {}
preprocessed_sentences = []
stop_words = set(stopwords.words('english'))

for sentence in sentences:
    tokenized_sentence = word_tokenize(sentence)
    # print(tokenized_sentence)
    result = []
    for word in tokenized_sentence:
        word = word.lower()
        # Keep words that are not stop words and are longer than 2 characters
        if word not in stop_words:
            if len(word) > 2:
                result.append(word)
                if word not in vocab:
                    vocab[word] = 0
                vocab[word] += 1
    preprocessed_sentences.append(result)

print(preprocessed_sentences)

'''

[['barber', 'person'], ['barber', 'good', 'person'], ['barber', 'huge', 'person'], ['knew', 'secret'], ['secret', 'kept', 'huge', 'secret'], ['huge', 'secret'], ['barber', 'kept', 'word'], ['barber', 'kept', 'word'], ['barber', 'kept', 'secret'], ['keeping', 'keeping', 'huge', 'secret', 'driving', 'barber', 'crazy.the', 'barber', 'went', 'huge', 'mountain']]

'''

print(vocab)

'''

{'barber': 8, 'person': 3, 'good': 1, 'huge': 5, 'knew': 1, 'secret': 6, 'kept': 4, 'word': 2, 'keeping': 2, 'driving': 1, 'crazy.the': 1, 'went': 1, 'mountain': 1}

'''

print()

sort_vocab = sorted(vocab.items(), key=lambda x : x[1], reverse=True)

print(sort_vocab)

'''

[('barber', 8), ('secret', 6), ('huge', 5), ('kept', 4), ('person', 3), ('word', 2), ('keeping', 2), ('good', 1), ('knew', 1), ('driving', 1), ('crazy.the', 1), ('went', 1), ('mountain', 1)]

'''

print()

word_to_index = {}
i = 0
# Assign indices 1, 2, ... in order of frequency, skipping words that occur only once
for word, frequency in sort_vocab:
    if frequency > 1:
        i += 1
        word_to_index[word] = i

print(word_to_index)

'''

{'barber': 1, 'secret': 2, 'huge': 3, 'kept': 4, 'person': 5, 'word': 6, 'keeping': 7}

'''

print()

vocab_size = 5

# Collect the words whose index falls outside the top vocab_size, then delete them
word_frequency = [word for word, index in word_to_index.items() if index >= vocab_size + 1]
for w in word_frequency:
    del word_to_index[w]

print(word_to_index)

'''

{'barber': 1, 'secret': 2, 'huge': 3, 'kept': 4, 'person': 5}

'''

print()

word_to_index['OOV'] = len(word_to_index) + 1 # out of vocabulary

print(word_to_index)

'''

{'barber': 1, 'secret': 2, 'huge': 3, 'kept': 4, 'person': 5, 'OOV': 6}

'''

print(preprocessed_sentences)

'''

[['barber', 'person'], ['barber', 'good', 'person'], ['barber', 'huge', 'person'], ['knew', 'secret'], ['secret', 'kept', 'huge', 'secret'], ['huge', 'secret'], ['barber', 'kept', 'word'], ['barber', 'kept', 'word'], ['barber', 'kept', 'secret'], ['keeping', 'keeping', 'huge', 'secret', 'driving', 'barber', 'crazy.the', 'barber', 'went', 'huge', 'mountain']]

'''

encoded_sentences = []
for sentence in preprocessed_sentences:
    encoded_sentence = []
    for word in sentence:
        try:
            encoded_sentence.append(word_to_index[word])
        except KeyError:
            # Words missing from the vocabulary map to the OOV index
            encoded_sentence.append(word_to_index['OOV'])
    encoded_sentences.append(encoded_sentence)

print(encoded_sentences)

'''

[[1, 5], [1, 6, 5], [1, 3, 5], [6, 2], [2, 4, 3, 2], [3, 2], [1, 4, 6], [1, 4, 6], [1, 4, 2], [6, 6, 3, 2, 6, 1, 6, 1, 6, 3, 6]]

'''
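The same integer encoding can be written more compactly with `dict.get`, which returns a fallback value for missing keys. This is only a sketch: the helper names `encode` and `decode` are hypothetical, and the vocabulary is copied from the `word_to_index` output above.

```python
def encode(sentences, word_to_index, oov_token='OOV'):
    """Map each word to its index, using the OOV index for unknown words."""
    oov_index = word_to_index[oov_token]
    return [[word_to_index.get(word, oov_index) for word in sentence]
            for sentence in sentences]

def decode(encoded, word_to_index):
    """Map indices back to words (unknown words come back as the OOV token)."""
    index_to_word = {index: word for word, index in word_to_index.items()}
    return [[index_to_word[index] for index in sentence] for sentence in encoded]

word_to_index = {'barber': 1, 'secret': 2, 'huge': 3, 'kept': 4, 'person': 5, 'OOV': 6}
sample = [['barber', 'good', 'person'], ['knew', 'secret']]

encoded = encode(sample, word_to_index)
print(encoded)                         # [[1, 6, 5], [6, 2]]
print(decode(encoded, word_to_index))  # [['barber', 'OOV', 'person'], ['OOV', 'secret']]
```

Decoding shows what the encoding loses: every out-of-vocabulary word collapses to the same token.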

print(end='\n\n\n')

from collections import Counter

print(preprocessed_sentences)

'''

[['barber', 'person'], ['barber', 'good', 'person'], ['barber', 'huge', 'person'], ['knew', 'secret'], ['secret', 'kept', 'huge', 'secret'], ['huge', 'secret'], ['barber', 'kept', 'word'], ['barber', 'kept', 'word'], ['barber', 'kept', 'secret'], ['keeping', 'keeping', 'huge', 'secret', 'driving', 'barber', 'crazy.the', 'barber', 'went', 'huge', 'mountain']]

'''

all_word_list = sum(preprocessed_sentences, [])

print(all_word_list)

'''

['barber', 'person', 'barber', 'good', 'person', 'barber', 'huge', 'person', 'knew', 'secret', 'secret', 'kept', 'huge', 'secret', 'huge', 'secret', 'barber', 'kept', 'word', 'barber', 'kept', 'word', 'barber', 'kept', 'secret', 'keeping', 'keeping', 'huge', 'secret', 'driving', 'barber', 'crazy.the', 'barber', 'went', 'huge', 'mountain']

'''

vocab = Counter(all_word_list)

print(vocab)

'''

Counter({'barber': 8, 'secret': 6, 'huge': 5, 'kept': 4, 'person': 3, 'word': 2, 'keeping': 2, 'good': 1, 'knew': 1, 'driving': 1, 'crazy.the': 1, 'went': 1, 'mountain': 1})

'''

print()

vocab_size = 5

vocab = vocab.most_common(vocab_size)

print(vocab)

'''

[('barber', 8), ('secret', 6), ('huge', 5), ('kept', 4), ('person', 3)]

'''

word_to_index = {}
i = 0 # start from 0 so the indices begin at 1
for word, frequency in vocab:
    i += 1
    word_to_index[word] = i

print(word_to_index)

'''

{'barber': 1, 'secret': 2, 'huge': 3, 'kept': 4, 'person': 5}

'''

print()

from nltk import FreqDist

print(np.hstack(preprocessed_sentences))

'''

['barber' 'person' 'barber' 'good' 'person' 'barber' 'huge' 'person'

'knew' 'secret' 'secret' 'kept' 'huge' 'secret' 'huge' 'secret' 'barber'

'kept' 'word' 'barber' 'kept' 'word' 'barber' 'kept' 'secret' 'keeping'

'keeping' 'huge' 'secret' 'driving' 'barber' 'crazy.the' 'barber' 'went'

'huge' 'mountain']

'''

vocab = FreqDist(np.hstack(preprocessed_sentences))

print(vocab.items())

'''

dict_items([('barber', 8), ('person', 3), ('good', 1), ('huge', 5), ('knew', 1), ('secret', 6), ('kept', 4), ('word', 2), ('keeping', 2), ('driving', 1), ('crazy.the', 1), ('went', 1), ('mountain', 1)])

'''

vocab = vocab.most_common(vocab_size)

print(vocab)

'''

[('barber', 8), ('secret', 6), ('huge', 5), ('kept', 4), ('person', 3)]

'''

word_to_index = {word[0] : index + 1 for index, word in enumerate(vocab)}

print(word_to_index)

'''

{'barber': 1, 'secret': 2, 'huge': 3, 'kept': 4, 'person': 5}

'''

Application exercise

import pandas as pd
from nltk.tokenize import sent_tokenize, word_tokenize
from nltk.corpus import stopwords

'''
1. Read the speech by former U.S. President Trump (trumph.txt) and print the results under the conditions below.
a. Split the text into words.
b. Remove stop words.
c. Print the frequency of each word (top 100).
d. Remove words with fewer than 2 characters or with 10 or more characters, then print.
'''

# Read the whole file; the with block closes it automatically
with open('trumph.txt', 'r', encoding='UTF8') as f:
    data = f.read()
print(data) # 1

from nltk.tokenize.regexp import RegexpTokenizer
tokenize = RegexpTokenizer(r"[\w']+")
tdata = tokenize.tokenize(data)
print(tdata) # a

# Build the stop word set once instead of reloading the list for every token
stop_words = set(stopwords.words('english'))
tdata = [each_word for each_word in tdata if each_word not in stop_words]
print(tdata) # b

from collections import Counter
cdata = Counter(tdata)
print(cdata)
oh_data = dict(cdata.most_common(100))
print(oh_data) # c

# Keep words with 2 to 9 characters, using a chained comparison rather than bitwise |
new_data = [word for word in tdata if 2 <= len(word) < 10]
print(new_data) # d

new_data2 = Counter(new_data)
new_data2 = dict(new_data2.most_common(100))
print(new_data2)
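The length condition in step d is easy to get wrong. In Python the bitwise `|` operator binds more tightly than comparison operators, so an expression such as `len(w) >= 2 | len(w) <= 10` is read as `len(w) >= (2 | len(w)) <= 10`, not as two comparisons joined by "or". The sketch below contrasts that with a chained comparison; the token list and the helper name `keep_by_length` are assumptions for illustration.

```python
tokens = ['we', 'good', 'great', 'nation', 'responsibility']  # lengths 2, 4, 5, 6, 14

def keep_by_length(words, min_len=2, max_len=10):
    """Keep words with min_len <= length < max_len."""
    return [w for w in words if min_len <= len(w) < max_len]

# Bitwise | is applied first: for 'good', 2 | 4 == 6, and 4 >= 6 is False
with_bitwise_or = [w for w in tokens if len(w) >= 2 | len(w) <= 10]

print(keep_by_length(tokens))  # ['we', 'good', 'great', 'nation']
print(with_bitwise_or)         # ['we', 'nation']
```

The bitwise version silently drops 'good' and 'great' even though both satisfy the intended length range.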

Saving the data after preprocessing

# Import the required libraries
import re # regular expressions
import numpy as np # numerical computation
import pandas as pd # DataFrame handling
import collections # container data types (defaultdict)
from argparse import Namespace # namespace for managing settings

# Hyperparameters, file paths, and other settings
args = Namespace(
    raw_train_dataset_csv = 'data/yelp/raw_train.csv', # path to the raw training data
    raw_test_dataset_csv = 'data/yelp/raw_test.csv', # path to the raw test data (unused)
    proportion_subset_of_train = 0.1, # use only part of the training data (10%)
    train_propotion = 0.7, # train: 70%
    val_propotion = 0.15, # validation: 15%
    test_propotion = 0.15, # test: 15%
    output_munged_csv = 'data/yelp/reviews_with_splits_list2.csv', # final output path
    seed = 1337 # fixed random seed (for reproducibility)
)

# Read the data from CSV (no header row, so column names are given explicitly)
train_reviews = pd.read_csv(args.raw_train_dataset_csv, header=None, names=['rating','review'])

# Dictionary for grouping reviews by rating
by_rating = collections.defaultdict(list)

# Group each review by its rating
for _, row in train_reviews.iterrows():
    by_rating[row.rating].append(row.to_dict())

# Take a subset of the reviews from each rating group (fraction: proportion_subset_of_train)
review_subset = []
for _, item_list in sorted(by_rating.items()):
    n_total = len(item_list)
    n_subset = int(args.proportion_subset_of_train * n_total) # number of items to keep
    review_subset.extend(item_list[:n_subset]) # take them from the front of the list

# Convert the list to a DataFrame
review_subset = pd.DataFrame(review_subset)

# Group by rating again (this time on the reduced sample)
by_rating = collections.defaultdict(list)
for _, row in review_subset.iterrows():
    by_rating[row.rating].append(row.to_dict())

# Split into training, validation, and test sets
final_list = []
np.random.seed(args.seed) # fix the random seed
for _, item_list in sorted(by_rating.items()):
    np.random.shuffle(item_list) # shuffle within each group
    n_total = len(item_list)
    n_train = int(args.train_propotion * n_total)
    n_val = int(args.val_propotion * n_total)
    n_test = int(args.test_propotion * n_total)

    # Assign a split label to each item
    for item in item_list[:n_train]:
        item['split'] = 'train'
    for item in item_list[n_train : n_train + n_val]:
        item['split'] = 'val'
    for item in item_list[n_train + n_val : n_train + n_val + n_test]:
        item['split'] = 'test'

    final_list.extend(item_list) # add the group to the final list

# Convert the list back to a DataFrame
final_review = pd.DataFrame(final_list)

# Function that preprocesses the review text
def preprocess_text(text):
    text = text.lower() # convert to lowercase
    # Keeps . , ! ? in place; as written this substitution replaces each mark with itself.
    # Use r' \1 ' as the replacement to pad the marks with spaces instead.
    text = re.sub(r'([.,!?])', r'\1', text)
    text = re.sub(r'[^a-zA-Z.,!?]+', r' ', text) # replace everything except letters and those marks with a space
    return text

# Apply the text preprocessing
final_review.review = final_review.review.apply(preprocess_text)

# Convert the numeric rating to a text label (1 -> negative, 2 -> positive)
final_review['rating'] = final_review.rating.apply({1: 'negative', 2: 'positive'}.get)

# Check the final result
print(final_review.head()) # first rows
print(final_review.tail()) # last rows
print(final_review.split.value_counts()) # number of rows in each split

# Save the final result as CSV
final_review.to_csv(args.output_munged_csv, index=False)

defaultdict(<class 'list'>, {})

rating review split

0 1 Terrible place to work for I just heard a stor... train

1 1 3 hours, 15 minutes-- total time for an extrem... train

2 1 My less than stellar review is for service. ... train

3 1 I'm granting one star because there's no way t... train

4 1 The food here is mediocre at best. I went afte... train

rating review split

55995 2 Great food. Wonderful, friendly service. I ... test

55996 2 Charlotte should be the new standard for moder... test

55997 2 Get the encore sandwich!! Make sure to get it ... test

55998 2 I'm a pretty big ice cream/gelato fan. Pretty ... test

55999 2 where else can you find all the parts and piec... test

split

train 39200

val 8400

test 8400

Name: count, dtype: int64
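The counts above correspond to a 70/15/15 split. As a minimal sketch of the same labeling idea using only the standard library, the function below shuffles one group and assigns a label to every item. The name `assign_splits` and the choice to give the remainder to the test set are assumptions of this sketch, not part of the script above.

```python
import random
from collections import Counter

def assign_splits(items, train=0.7, val=0.15, seed=1337):
    """Shuffle items and label each one as 'train', 'val', or 'test'."""
    rng = random.Random(seed)          # local generator, so the global state is untouched
    shuffled = list(items)
    rng.shuffle(shuffled)
    n_train = int(train * len(shuffled))
    n_val = int(val * len(shuffled))
    labeled = []
    for position, item in enumerate(shuffled):
        if position < n_train:
            split = 'train'
        elif position < n_train + n_val:
            split = 'val'
        else:
            split = 'test'             # everything left over goes to the test set
        labeled.append((item, split))
    return labeled

labeled = assign_splits(range(10))
counts = Counter(split for _, split in labeled)
print(counts['train'], counts['val'], counts['test'])  # 7 1 2
```

Giving the remainder to the last split means no item is left without a label when the proportions do not divide the group size evenly; with 10 items, truncating all three counts with `int()` would label only 9 of them.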

rating review split

0 negative terrible place to work for i just heard a stor... train

1 negative hours, minutes total time for an extremely si... train

2 negative my less than stellar review is for service. we... train

3 negative i m granting one star because there s no way t... train

4 negative the food here is mediocre at best. i went afte... train

rating review split

55995 positive great food. wonderful, friendly service. i hig... test

55996 positive charlotte should be the new standard for moder... test

55997 positive get the encore sandwich!! make sure to get it ... test

55998 positive i m a pretty big ice cream gelato fan. pretty ... test

55999 positive where else can you find all the parts and piec... test

terrible place to work for i just heard a story of them find a girl over her biological father coming in there who she hadn t seen in years she said hi to him which upset his wife and they left she finished the rest of her day working fine the next day when she went into work they fired over that situation. i for one and boycotting texas roadhouse because any place that could be that cruel to their staff does not deserve my business... yelp wants me to give them a star but i don t believe they deserve it
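To see what this kind of cleaning does to a single string, here is a small standalone sketch. It follows the same two regular expressions as the script, plus an optional step that pads punctuation with spaces so that each mark becomes its own whitespace-separated token. The `pad_punctuation` flag and the final `strip()` are assumptions added for illustration.

```python
import re

def preprocess_text(text, pad_punctuation=True):
    """Lowercase, optionally pad . , ! ? with spaces, and drop other non-letters."""
    text = text.lower()
    if pad_punctuation:
        text = re.sub(r"([.,!?])", r" \1 ", text)   # surround each mark with spaces
    text = re.sub(r"[^a-zA-Z.,!?]+", r" ", text)    # collapse everything else into one space
    return text.strip()

print(preprocess_text("Great food!! 5 stars."))                         # great food ! ! stars .
print(preprocess_text("Great food!! 5 stars.", pad_punctuation=False))  # great food!! stars.
```

Without padding, punctuation stays attached to the preceding word, as in the review text printed above.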

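Korean tokenizing libraries

KoNLPy

An open-source library designed to make Korean natural language processing simple and concise.

It gives access to several morphological analyzers that were already developed and in use in Korea.

Because KoNLPy relies on existing morphological analyzers written in Java, Java must be installed on Windows.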

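Morpheme-level tokenizing

Korean text sometimes needs to be tokenized at the morpheme level.

KoNLPy provides several morphological analyzers, and their results can differ from one analyzer to another.

Morphological analysis and part-of-speech tagging

A morpheme is the smallest unit that carries meaning; splitting it any further loses that meaning.

Morphological analysis therefore means examining a sentence in terms of its meaning-bearing units.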

List of morphological analyzers

-Hannanum

-Kkma

-Komoran

-Mecab

-Okt (Twitter)

Okt

okt.morphs() : splits text into morphemes. The stem option extracts the stem of each word.

okt.nouns() : extracts only the nouns from the text.