Ex1

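import torch
import torch.nn as nn

# Dummy batch of one 1-channel 28x28 image (random values; only the shapes matter here)
inputs = torch.randn(1, 1, 28, 28)
print(inputs.shape)

# 3x3 kernels with padding=1 and stride=1 keep the 28x28 spatial size
conv1 = nn.Conv2d(in_channels=1, out_channels=32, kernel_size=3, padding=1, stride=1)
print(conv1)
conv2 = nn.Conv2d(32, 64, 3, padding=1)
print(conv2)
# 2x2 max pooling; stride defaults to kernel_size, so height and width are halved
pool = nn.MaxPool2d(kernel_size=2)
print(pool)
print()

output = conv1(inputs)
print(output.size())   # (1, 32, 28, 28)
output = conv2(output)
print(output.size())   # (1, 64, 28, 28)
output = pool(output)
print(output.size())   # (1, 64, 14, 14)

# Flatten everything except the batch dimension: 64 * 14 * 14 = 12544 features
output = output.view(output.size(0), -1)
print(output.size())

fclayer = nn.Linear(12544, 10)
output = fclayer(output)
print(output.size())   # (1, 10): one score per class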

Output:
torch.Size([1, 1, 28, 28])
Conv2d(1, 32, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
Conv2d(32, 64, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)

torch.Size([1, 32, 28, 28])
torch.Size([1, 64, 28, 28])
torch.Size([1, 64, 14, 14])
torch.Size([1, 12544])
torch.Size([1, 10])
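The shapes printed above follow the output-size formula that `nn.Conv2d` and `nn.MaxPool2d` use when dilation is 1 and ceil_mode is off: out = floor((in + 2*padding - kernel) / stride) + 1. The following is a minimal pure-Python sketch that replays Ex1's layer sequence with that formula. The helper name `out_size` is hypothetical and is not a PyTorch function.

```python
def out_size(size, kernel, stride=1, padding=0):
    """Spatial output size of Conv2d / MaxPool2d (dilation=1, ceil_mode=False)."""
    return (size + 2 * padding - kernel) // stride + 1


# Replay Ex1: conv1 -> conv2 -> pool, starting from a 28x28 input
h = 28
h = out_size(h, kernel=3, stride=1, padding=1)   # conv1: 28 -> 28
print('after conv1:', (32, h, h))
h = out_size(h, kernel=3, stride=1, padding=1)   # conv2: 28 -> 28
print('after conv2:', (64, h, h))
h = out_size(h, kernel=2, stride=2)              # pool: stride defaults to kernel_size
print('after pool:', (64, h, h))
print('flattened features:', 64 * h * h)         # input size expected by fclayer
```

The last line gives the 12544 used as `in_features` of `fclayer`.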

Ex2

import torch
import torch.nn as nn
import torch.optim as optim
import torchvision.datasets as dset
import torchvision.transforms as transforms
from torch.utils.data import DataLoader

train_epochs = 10
batch_size = 100

# ToTensor() converts each 28x28 uint8 image to a 1x28x28 float tensor in [0, 1]
mnist_train = dset.MNIST(root='MNIST_data/',
                         train=True,
                         transform=transforms.ToTensor(),
                         download=True)
mnist_test = dset.MNIST(root='MNIST_data/',
                        train=False,
                        transform=transforms.ToTensor(),
                        download=True)

data_loader = DataLoader(dataset=mnist_train,
                         batch_size=batch_size,
                         shuffle=True,
                         drop_last=True)

class CNNet(nn.Module):
    def __init__(self):
        super().__init__()
        # 1x28x28 -> 32x28x28 -> 32x14x14
        self.layer1 = nn.Sequential(
            nn.Conv2d(1, 32, kernel_size=3, stride=1, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2, stride=2)
        )
        # 32x14x14 -> 64x14x14 -> 64x7x7
        self.layer2 = nn.Sequential(
            nn.Conv2d(32, 64, kernel_size=3, stride=1, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2, stride=2)
        )
        self.fc = nn.Linear(64 * 7 * 7, 10)

    def forward(self, x):
        out = self.layer1(x)
        out = self.layer2(out)
        out = out.view(out.size(0), -1)   # flatten to (N, 64 * 7 * 7)
        y = self.fc(out)
        return y

model = CNNet()
loss_func = nn.CrossEntropyLoss()
optimizer = optim.Adam(model.parameters(), lr=0.001)
total_batch = len(data_loader)

for epoch in range(train_epochs):
    avg_loss = 0
    for x_train, y_train in data_loader:
        optimizer.zero_grad()
        hypothesis = model(x_train)
        loss = loss_func(hypothesis, y_train)
        loss.backward()
        optimizer.step()
        # .item() takes the plain number, so the autograd graph is not kept alive across batches
        avg_loss += loss.item() / total_batch
    print(f'epoch:{epoch+1} avg_loss:{avg_loss:.4f}')

    # Evaluate on the full test set after every epoch
    with torch.no_grad():
        # .data / .targets replace the deprecated .test_data / .test_labels.
        # Dividing by 255 matches the [0, 1] range that ToTensor() gives the training images.
        x_test = mnist_test.data.view(len(mnist_test), 1, 28, 28).float() / 255
        y_test = mnist_test.targets
        prediction = model(x_test)
        correct_prediction = torch.argmax(prediction, dim=1) == y_test
        accuracy = correct_prediction.float().mean()
        print('accuracy:{:2.2f}'.format(accuracy.item() * 100))

Output:
epoch:1 avg_loss:0.2044
accuracy:97.70
epoch:2 avg_loss:0.0592
accuracy:97.13
epoch:3 avg_loss:0.0428
accuracy:98.26
epoch:4 avg_loss:0.0350
accuracy:98.67
epoch:5 avg_loss:0.0285
accuracy:98.37
epoch:6 avg_loss:0.0238
accuracy:98.35
epoch:7 avg_loss:0.0196
accuracy:98.90
epoch:8 avg_loss:0.0166
accuracy:98.38
epoch:9 avg_loss:0.0139
accuracy:98.63
epoch:10 avg_loss:0.0110
accuracy:98.56
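`transforms.ToTensor()` turns a uint8 image with values 0 to 255 into a float tensor in [0, 1] and puts the channel axis first. The raw `mnist_test.data` tensor (called `test_data` in older torchvision versions, as the renaming warning indicates) holds the unscaled uint8 pixels. Test images built by hand from it therefore need the same division by 255 to match what the model saw during training. The following is a minimal NumPy sketch of that scaling, assuming a single-channel H x W image. `to_unit_range` is a hypothetical helper, not a torchvision function.

```python
import numpy as np


def to_unit_range(img_uint8):
    """Mimic ToTensor's scaling for a uint8 grayscale image of shape (H, W).

    Returns float32 of shape (1, H, W) with values in [0, 1].
    """
    img = np.asarray(img_uint8, dtype=np.float32) / 255.0
    return img[np.newaxis, :, :]  # add the channel axis first, like a (C, H, W) tensor


raw = np.array([[0, 128], [255, 64]], dtype=np.uint8)  # toy 2x2 "image"
scaled = to_unit_range(raw)
print(scaled.shape)                 # (1, 2, 2)
print(scaled.min(), scaled.max())   # 0.0 1.0
```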

Ex3

import numpy as np
import matplotlib.pyplot as plt
import ssl

import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
from torch.utils.data import DataLoader
from torchvision import datasets, transforms

# Workaround for SSL certificate errors when downloading CIFAR-10.
# It disables HTTPS certificate verification for the whole process, so use it only if needed.
ssl._create_default_https_context = ssl._create_unverified_context

# ToTensor() scales pixels to [0, 1]; Normalize(mean=0.5, std=0.5) then maps them to [-1, 1]
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))
])

cifar10_train = datasets.CIFAR10('CIFAR10_data/', train=True, download=True, transform=transform)
cifar10_test = datasets.CIFAR10('CIFAR10_data/', train=False, download=True, transform=transform)

trainloader = DataLoader(cifar10_train, batch_size=16, shuffle=True)
testloader = DataLoader(cifar10_test, batch_size=16, shuffle=False)

class CNNet(nn.Module):
    def __init__(self):
        super().__init__()
        self.conv1 = nn.Conv2d(3, 32, 5)
        self.conv2 = nn.Conv2d(32, 64, 5)
        self.conv3 = nn.Conv2d(64, 128, 5)
        # padding=1 makes each pooled size floor(H / 2) + 1 instead of H / 2
        self.pool = nn.MaxPool2d(2, 2, padding=1)
        self.fc1 = nn.Linear(128 * 2 * 2, 256)
        self.fc2 = nn.Linear(256, 128)
        self.fc3 = nn.Linear(128, 84)
        self.fc4 = nn.Linear(84, 10)
        self.dropout = nn.Dropout(p=0.5)

    def forward(self, x):              # x: (N, 3, 32, 32)
        x = F.relu(self.conv1(x))      # (N, 32, 28, 28)
        x = self.pool(x)               # (N, 32, 15, 15)
        x = F.relu(self.conv2(x))      # (N, 64, 11, 11)
        x = self.pool(x)               # (N, 64, 6, 6)
        x = F.relu(self.conv3(x))      # (N, 128, 2, 2)
        x = self.dropout(x)
        x = self.pool(x)               # (N, 128, 2, 2)
        x = x.view(-1, 128 * 2 * 2)    # (N, 512)
        x = F.relu(self.fc1(x))
        x = self.dropout(x)
        x = F.relu(self.fc2(x))
        x = F.relu(self.fc3(x))
        y = self.fc4(x)                # (N, 10) class scores
        return y

model = CNNet()
loss_func = nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.001, momentum=0.9)

# Training loop (commented out: the weights are loaded from PATH below instead)
# for epoch in range(20):
#     running_loss = 0.
#     for idx, (x_train, y_train) in enumerate(trainloader):
#         optimizer.zero_grad()
#         hypothesis = model(x_train)
#         loss = loss_func(hypothesis, y_train)
#         loss.backward()
#         optimizer.step()
#
#         running_loss += loss.item()
#         if idx % 2000 == 1999:  # report the mean loss over the last 2000 mini-batches
#             print(f'epoch:{epoch+1} / {idx+1} loss:{running_loss/2000:.5f}')
#             running_loss = 0.

PATH = './cifar_net.pt'
# torch.save(model.state_dict(), PATH)
model.load_state_dict(torch.load(PATH))

# Switch dropout off for evaluation; in training mode it would randomly zero activations
model.eval()
correct = 0
total = 0
with torch.no_grad():
    for x_test, y_test in testloader:
        outputs = model(x_test)
        prediction = torch.argmax(outputs, dim=1)
        total += y_test.size(0)
        correct += (prediction == y_test).sum().item()

print(f'accuracy : {correct / total * 100:2.2f}%')

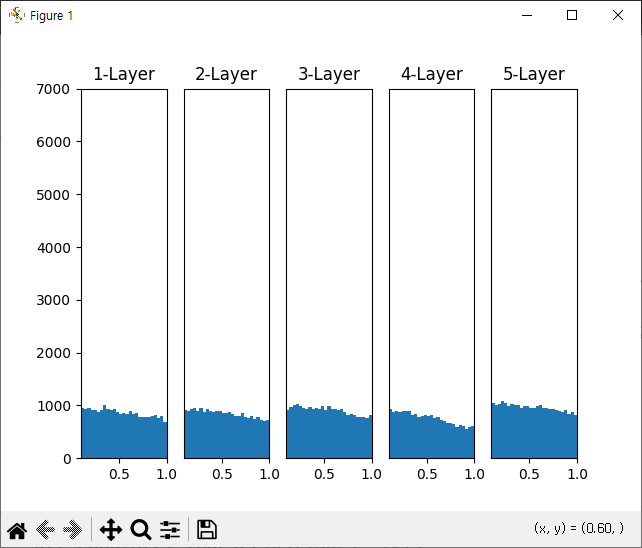

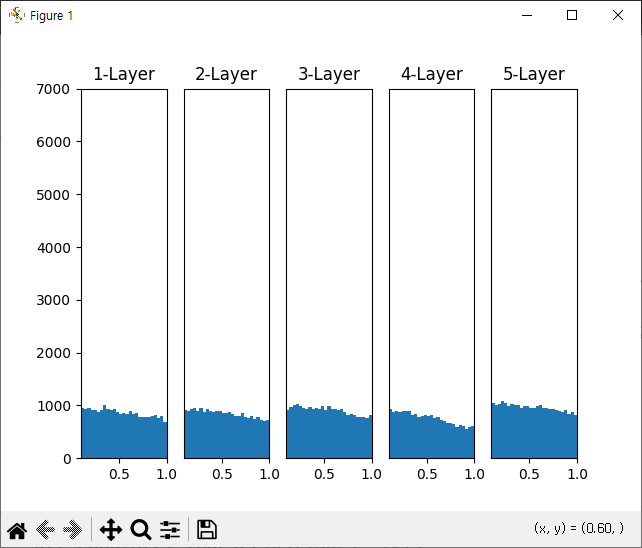
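Because `self.pool` uses `padding=1`, each pooling step does not halve the size exactly. PyTorch's size formula with dilation 1 is floor((in + 2*padding - kernel) / stride) + 1, so a 28x28 map becomes 15x15, not 14x14. The following pure-Python sketch replays the Ex3 forward pass on a 32x32 CIFAR-10 image and shows why `fc1` expects 128*2*2 inputs. The helpers `out_size` and `trace_ex3` are hypothetical. Dropout is left out because it does not change shapes.

```python
def out_size(size, kernel, stride=1, padding=0):
    """Spatial output size of Conv2d / MaxPool2d (dilation=1, ceil_mode=False)."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_ex3(h=32):
    """Return (stage, channels, height) for each step of Ex3's forward pass."""
    steps = []
    for name, channels in [('conv1', 32), ('conv2', 64), ('conv3', 128)]:
        h = out_size(h, kernel=5)                        # 5x5 conv, no padding
        steps.append((name, channels, h))
        h = out_size(h, kernel=2, stride=2, padding=1)   # MaxPool2d(2, 2, padding=1)
        steps.append(('pool', channels, h))
    return steps


for stage, c, h in trace_ex3():
    print(f'{stage:>5}: {c} x {h} x {h}')
```

The last entry, 128 x 2 x 2 = 512, matches `nn.Linear(128*2*2, 256)`.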

Ex4

import numpy as np
import matplotlib.pyplot as plt


def sigmoid(x):
    return 1 / (1 + np.exp(-x))


def relu(x):
    return np.maximum(0, x)


input_data = np.random.randn(1000, 100)  # 1000 samples, 100 features
node_num = 100                           # nodes per hidden layer
hidden_layer_size = 5                    # number of hidden layers
activations = {}

x = input_data
for i in range(hidden_layer_size):
    if i != 0:
        x = activations[i - 1]

    # Weight initialization (keep exactly one line active to compare):
    # w = np.random.randn(node_num, node_num)                                         # standard normal, std = 1
    # w = np.random.randn(node_num, node_num) * np.sqrt(2.0 / (node_num + node_num))  # Xavier/Glorot
    w = np.random.randn(node_num, node_num) * np.sqrt(2.0 * 2 / (node_num + node_num))  # He: equals sqrt(2 / node_num)

    a = np.dot(x, w)
    # output = sigmoid(a)
    output = relu(a)
    activations[i] = output

# One histogram of activation values per layer
for i, a in activations.items():
    plt.subplot(1, len(activations), i + 1)
    plt.title(str(i + 1) + '-Layer')
    if i != 0:
        plt.yticks([], [])
    plt.hist(a.flatten(), 30, range=(0, 1))
    plt.xlim(0.1, 1)   # x-axis starts at 0.1, so the bin of ReLU zeros is not shown
    plt.ylim(0, 7000)
plt.show()
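The histogram experiment can also be summarized with one number per layer. The following NumPy sketch uses the same setup: 1000 samples, 100 nodes, 5 ReLU layers, and no biases. For each of the three weight scales in Ex4, it prints the root-mean-square (RMS) activation per layer. The scales are standard normal (std 1), Xavier/Glorot (std sqrt(2 / (n_in + n_out))), and He (std sqrt(2 / n_in)). The function name `layer_rms` and the fixed seed are assumptions added for reproducibility.

```python
import numpy as np


def layer_rms(weight_std, node_num=100, n_samples=1000, num_layers=5, seed=0):
    """RMS of the ReLU activations after each layer for a given weight std."""
    rng = np.random.default_rng(seed)
    x = rng.standard_normal((n_samples, node_num))
    rms = []
    for _ in range(num_layers):
        w = rng.standard_normal((node_num, node_num)) * weight_std
        x = np.maximum(0, x @ w)                     # ReLU(x W)
        rms.append(float(np.sqrt(np.mean(x ** 2))))
    return rms


n = 100
schemes = {
    'std=1': 1.0,
    'Xavier': np.sqrt(2.0 / (n + n)),   # equals sqrt(1 / n) when fan_in == fan_out
    'He': np.sqrt(2.0 / n),             # same value as Ex4's sqrt(2*2 / (n+n))
}
for name, std in schemes.items():
    print(name, [round(v, 3) for v in layer_rms(std)])
```

With the He scale, the RMS stays near 1 from layer to layer. With std 1, it grows by roughly a factor of 7 per layer. With the Xavier scale, it shrinks by roughly a factor of 0.7 per layer under ReLU.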

Ex5

import torch.nn as nn
import torch.nn.init as init
import torch.optim as optim
import torchvision.datasets as dset
import torchvision.transforms as transforms
from torch.utils.data import DataLoader

batch_size = 100
learning_rate = 0.001
total_epochs = 10

# Training images are resized to 34x34, center-cropped back to 28x28 (a slight zoom),
# and rotated by 90 degrees. These transforms are applied to every training image,
# but not to the test set below.
fmnist_train = dset.FashionMNIST('FashionMNIST_data/',
                                 train=True,
                                 transform=transforms.Compose([
                                     transforms.Resize(34),
                                     transforms.CenterCrop(28),
                                     transforms.Lambda(lambda x: x.rotate(90)),  # PIL Image.rotate
                                     transforms.ToTensor()
                                 ]),
                                 target_transform=None,
                                 download=True)
fmnist_test = dset.FashionMNIST('FashionMNIST_data/',
                                train=False,
                                transform=transforms.ToTensor(),
                                target_transform=None,
                                download=True)

train_loader = DataLoader(fmnist_train,
                          batch_size=batch_size,
                          shuffle=True,
                          drop_last=True)
test_loader = DataLoader(fmnist_test,
                         batch_size=batch_size,
                         shuffle=False)

class CNNet2(nn.Module):
    def __init__(self):
        super().__init__()
        # 1x28x28 -> 16x28x28 -> 32x28x28 -> 32x14x14 -> 64x14x14 -> 64x7x7
        self.Clayer = nn.Sequential(
            nn.Conv2d(1, 16, 3, padding=1),
            nn.ReLU(),
            nn.Conv2d(16, 32, 3, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2, 2),
            nn.Conv2d(32, 64, 3, padding=1),
            nn.ReLU(),
            nn.MaxPool2d(2, 2)
        )
        self.fclayer = nn.Sequential(
            nn.Linear(64 * 7 * 7, 100),
            nn.ReLU(),
            nn.Linear(100, 10)
        )

        # He (Kaiming) init for the conv layers, Xavier init for the linear layers
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                init.kaiming_normal_(m.weight)
                init.zeros_(m.bias)
            elif isinstance(m, nn.Linear):
                init.xavier_normal_(m.weight)

    def forward(self, x):
        out = self.Clayer(x)
        # Flatten per sample; out.size(0) instead of the global batch_size
        # also works for a smaller final batch
        out = out.view(out.size(0), -1)
        y = self.fclayer(out)
        return y

model = CNNet2()
loss_func = nn.CrossEntropyLoss()
optimizer = optim.Adam(model.parameters(), lr=learning_rate, weight_decay=1e-6)

for i in range(total_epochs):
    for x_train, y_train in train_loader:
        optimizer.zero_grad()
        hypothesis = model(x_train)
        loss = loss_func(hypothesis, y_train)
        loss.backward()
        optimizer.step()
    # Loss of the last mini-batch of the epoch (not an epoch average), so it fluctuates
    print(f'epoch:{i+1}, loss:{loss.item():.4f}')

Output:
epoch:1, loss:0.4182
epoch:2, loss:0.2388
epoch:3, loss:0.1964
epoch:4, loss:0.1773
epoch:5, loss:0.1309
epoch:6, loss:0.1042
epoch:7, loss:0.1469
epoch:8, loss:0.0516
epoch:9, loss:0.2053
epoch:10, loss:0.1007
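`init.kaiming_normal_` and `init.xavier_normal_` draw weights from a zero-mean normal distribution whose standard deviation depends on the weight's shape. The defaults are as follows:

- `kaiming_normal_` uses `a=0`, `mode='fan_in'`, and `nonlinearity='leaky_relu'`, which gives gain sqrt(2) and std = sqrt(2) / sqrt(fan_in).
- `xavier_normal_` uses `gain=1`, which gives std = sqrt(2 / (fan_in + fan_out)).

A Conv2d weight of shape (out, in, kH, kW) has fan_in = in*kH*kW and fan_out = out*kH*kW. The following pure-Python sketch computes these values for CNNet2's layers. The helper names are hypothetical, not part of `torch.nn.init`.

```python
import math


def fans(shape):
    """fan_in, fan_out for a weight of shape (out, in, *kernel), as PyTorch computes them."""
    receptive_field = math.prod(shape[2:])  # 1 for Linear weights of shape (out, in)
    return shape[1] * receptive_field, shape[0] * receptive_field


def kaiming_normal_std(shape):
    fan_in, _ = fans(shape)
    return math.sqrt(2.0) / math.sqrt(fan_in)   # gain sqrt(2) for ReLU-like units


def xavier_normal_std(shape):
    fan_in, fan_out = fans(shape)
    return math.sqrt(2.0 / (fan_in + fan_out))  # gain 1


conv_shapes = [(16, 1, 3, 3), (32, 16, 3, 3), (64, 32, 3, 3)]
linear_shapes = [(100, 64 * 7 * 7), (10, 100)]
for s in conv_shapes:
    print('Conv2d', s, 'kaiming std =', round(kaiming_normal_std(s), 4))
for s in linear_shapes:
    print('Linear', s, 'xavier std =', round(xavier_normal_std(s), 4))
```

The first conv layer sees only 9 inputs per output (1 channel x 3 x 3), so its weights are drawn with a much larger spread than those of the deeper layers.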