AWQ: Activation-aware Weight Quantization for LLM Compression and Acceleration

📝 AWQ: Activation-aware Weight Quantization for On-Device LLM Compression and Acceleration

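One-line summary:

When quantizing the weights of large language models (LLMs) to 4 bits, this work protects the salient weight channels identified from the activation distribution, keeping accuracy close to FP16 while delivering more than 3x faster inference than the Huggingface FP16 baseline.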

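1. Introduction and Research Background (Introduction)

Why the work is needed: limits of prior approaches

Large language models (LLMs) have driven advances in chatbots, virtual assistants, autonomous vehicles, and other areas, but their sheer size has been the biggest obstacle to on-device deployment. For example:

- GPT-3 has 175B parameters and needs 350GB of memory in FP16 (2 bytes per parameter)
- Even the recent B200 GPU has only 192GB of memory, to say nothing of edge devices
- Existing quantization methods such as GPTQ can overfit to the calibration dataset, which hurts generality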
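The 350GB figure above is plain arithmetic: parameter count times bytes per parameter. A minimal sketch of that calculation follows. The helper name `weight_memory_gb` is hypothetical, 1 GB is assumed to mean 10^9 bytes, and activations and the KV cache are ignored.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Weight storage in GB (assumption: 1 GB = 1e9 bytes; weights only)."""
    return num_params * bits_per_weight / 8 / 1e9

# GPT-3 with 175B parameters, as quoted in the text.
gpt3_fp16 = weight_memory_gb(175e9, 16)  # 2 bytes per parameter
gpt3_int4 = weight_memory_gb(175e9, 4)   # 0.5 bytes per parameter, no metadata

print(f"GPT-3 FP16: {gpt3_fp16:.1f} GB")
print(f"GPT-3 INT4: {gpt3_int4:.1f} GB")
```

Under these assumptions the FP16 weights alone exceed the 192GB of a single B200, while the 4-bit weights would fit.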

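Prior Post-Training Quantization (PTQ) methods in particular ran into the following problems:

- GPTQ: performs error compensation using second-order information, but its reconstruction step can overfit to the calibration data, degrading out-of-distribution performance
- Round-to-Nearest (RTN): plain rounding, whose accuracy drops sharply at low bit widths such as INT3/INT4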

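Research goal: the need for a new approach

The authors start from the following key insight:

"Not all weights in an LLM are equally important. Protecting only a small fraction (0.1~1%) of salient weights can greatly reduce quantization error."

However, keeping the salient weights in higher precision (mixed precision) is inefficient to implement in hardware. AWQ therefore:

- Identifies salient channels from the activation distribution
- Protects salient weights with per-channel scaling while keeping every weight at the same bit width (hardware-friendly)
- Works without backpropagation or reconstruction, which helps it generalize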

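2. Proposed Method (Methodology)

Core idea: activation-aware quantization

The principle behind AWQ is that "the importance of a weight is determined not by the magnitude of the weight itself but by the magnitude of the activations passing through its channel."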

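Step 1: Identifying salient weight channels

Table 1 of the paper reports the following (perplexity, lower is better):

| Model | RTN (w3-g128) | 1% FP16, chosen by activation | 1% FP16, chosen by weight | 1% FP16, chosen at random |
|---|---|---|---|---|
| OPT-6.7B | 23.54 | 11.39 | 22.37 | 23.54 |

- Keeping only the 1% of channels selected by activation magnitude in FP16 drops perplexity from 23.54 to 11.39, a large improvement
- Selecting by weight magnitude (L2-norm) or at random has almost no effect

Interpretation: channels with large activations process more important features, so their weights should be kept precise.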
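The selection rule in Step 1 can be sketched in a few lines of NumPy. This is an illustration under stated assumptions, not the authors' code: `salient_channels` is a hypothetical helper, the activations are synthetic Gaussian data with two artificially amplified channels, and the statistic used is the mean absolute activation per input channel.

```python
import numpy as np

def salient_channels(X: np.ndarray, fraction: float = 0.01) -> np.ndarray:
    """Return indices of the input channels with the largest mean |activation|.

    X has shape (num_tokens, in_channels) and stands in for calibration data.
    """
    mean_abs = np.abs(X).mean(axis=0)              # one statistic per channel
    k = max(1, int(round(fraction * X.shape[1])))  # how many channels to keep
    return np.sort(np.argsort(mean_abs)[-k:])      # top-k, in ascending index order

rng = np.random.default_rng(0)
X = rng.normal(size=(256, 200))   # synthetic activations (assumption)
X[:, [7, 42]] *= 20.0             # two synthetic outlier channels
idx = salient_channels(X, fraction=0.01)
print(idx)                        # the two amplified channels are selected
```

Note that the weights never enter the selection, which is the point of the comparison in Table 1.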

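Step 2: Reducing quantization error through scaling

Because mixed precision is complicated to implement in hardware, AWQ instead relies on a mathematically equivalent transformation.

Quantization function:

$$Q(w) = \Delta \cdot \mathrm{Round}\left(\frac{w}{\Delta}\right), \qquad \Delta = \frac{\max(|w|)}{2^{N-1}}$$

where $N$ is the number of quantization bits and $\Delta$ is the quantization scale (step size).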
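As a concrete reading of the formula above, here is a minimal NumPy sketch of round-to-nearest quantization over a single group of weights. It follows the formula literally (no clipping and no zero point), which is a simplification; the function name and the sample values are illustrative assumptions.

```python
import numpy as np

def quantize(w: np.ndarray, n_bits: int = 4) -> np.ndarray:
    """Q(w) = delta * round(w / delta), with delta = max|w| / 2^(N-1)."""
    delta = np.abs(w).max() / 2 ** (n_bits - 1)
    if delta == 0:                      # all-zero group: nothing to quantize
        return np.zeros_like(w)
    return delta * np.round(w / delta)  # values snapped to multiples of delta

w = np.array([0.9, -0.31, 0.12, -0.05])  # made-up weights for illustration
w_q = quantize(w, n_bits=3)
max_err = float(np.abs(w_q - w).max())
print(w_q, max_err)
```

Each weight moves by at most half a step (Δ/2), and the largest-magnitude weight in the group is reproduced almost exactly because it lands on a grid point.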

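If a particular weight w is scaled up by s (s > 1) and the input activation x is scaled down by 1/s:

$$Q(w \cdot s) \cdot \frac{x}{s} = \Delta' \cdot \mathrm{Round}\left(\frac{w s}{\Delta'}\right) \cdot x \cdot \frac{1}{s}$$

where $\Delta'$ is the quantization scale after the scaling is applied.

Key finding (Table 2 experiment):

| s | Proportion with Δ' ≠ Δ | Average error ratio for salient weights, (Δ'/Δ)·(1/s) | Wiki-2 PPL |
|---|---|---|---|
| 1.0 | 0% | 1.0 | 23.54 |
| 2.0 | 8.2% | 0.519 | 11.92 |
| 4.0 | 21.2% | 0.303 | 12.36 |

- At s = 2, the relative quantization error of the salient channels falls to about half (0.519)
- When s is too large (s = 4), Δ grows in more cases (21.2%), which increases the error of the non-salient channels, and perplexity worsens from 11.92 to 12.36

Intuition:

Scaling a salient weight up by s leaves the quantization step (Δ) almost unchanged, so the absolute rounding error stays about the same; after dividing by s again, the error relative to the original weight is roughly s times smaller. It is similar to enlarging a small object before digitizing it, so that more detail survives.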
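The effect described above can be checked numerically. The sketch below uses synthetic Gaussian weights rather than OPT weights, so its numbers will not match Table 2; the function names, group size of 128, and 3-bit setting are assumptions chosen for illustration. It treats the first weight of every group as the salient one, scales it by s before quantizing, and divides by s afterwards, which is equivalent to feeding x/s.

```python
import numpy as np

def quantize(w, n_bits=3):
    """Round-to-nearest per row: Q(w) = delta * round(w / delta)."""
    delta = np.abs(w).max(axis=-1, keepdims=True) / 2 ** (n_bits - 1)
    return delta * np.round(w / delta)

def salient_weight_error(s, n_groups=2000, group_size=128, seed=0):
    """Mean |error| of the first weight in each group when it is scaled by s."""
    rng = np.random.default_rng(seed)
    W = rng.normal(size=(n_groups, group_size))  # synthetic weights (assumption)
    scale = np.ones(group_size)
    scale[0] = s                                 # scale only the salient weight
    W_eff = quantize(W * scale) / scale          # Q(w*s)/s is the effective weight
    return float(np.abs(W_eff[:, 0] - W[:, 0]).mean())

err_s1 = salient_weight_error(1.0)
err_s2 = salient_weight_error(2.0)
print(f"s=1: {err_s1:.4f}  s=2: {err_s2:.4f}  ratio: {err_s2 / err_s1:.2f}")
```

The same seed is used for both calls, so the two errors are measured on identical weights and differ only in the scaling factor.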

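Step-by-step process

1. Measure the mean activation magnitude of each channel (sₓ) on the calibration set
2. Define the search space as s = sₓ^α
3. Find the best α by grid search (20 steps) over α ∈ [0, 1]
   - Objective: minimize ||Q(W·diag(s))·(diag(s)⁻¹·X) - WX||
4. Transform the weights with the resulting scales, then quantize
5. At inference time, s⁻¹·X can be fused into the computation of the preceding layer

Advantages:

- No backpropagation needed, so the procedure is computationally cheap
- Low dependence on the calibration data, so it generalizes well
- Hardware-friendly (a single precision is kept throughout)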
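The step-by-step process above can be sketched for a single linear layer as follows. This is a simplified illustration, not the reference implementation: `quantize` and `search_scale` are hypothetical names, the data is synthetic, the group size of 32 is chosen only to keep the example small, and details such as scale normalization or clipping are left out.

```python
import numpy as np

def quantize(W, n_bits=3, group_size=32):
    """Group-wise round-to-nearest along the input dimension of W (out, in)."""
    out_f, in_f = W.shape
    G = W.reshape(out_f, in_f // group_size, group_size)
    delta = np.abs(G).max(axis=-1, keepdims=True) / 2 ** (n_bits - 1)
    delta = np.where(delta == 0, 1.0, delta)   # avoid 0/0 on all-zero groups
    return (delta * np.round(G / delta)).reshape(out_f, in_f)

def search_scale(W, X, n_grid=20, n_bits=3, group_size=32):
    """Grid-search alpha in [0, 1] for s = s_x ** alpha; X has shape (in, tokens)."""
    s_x = np.maximum(np.abs(X).mean(axis=1), 1e-8)  # step 1: per-channel mean |x|
    ref = W @ X                                     # full-precision output
    best_err, best_alpha, best_s = np.inf, 0.0, np.ones_like(s_x)
    for alpha in np.linspace(0.0, 1.0, n_grid):     # steps 2 and 3
        s = s_x ** alpha
        out = quantize(W * s, n_bits, group_size) @ (X / s[:, None])
        err = float(np.linalg.norm(out - ref))
        if err < best_err:
            best_err, best_alpha, best_s = err, float(alpha), s
    return best_err, best_alpha, best_s

rng = np.random.default_rng(0)
W = rng.normal(size=(16, 64))        # synthetic layer weights (assumption)
X = rng.normal(size=(64, 128))       # synthetic calibration activations
X[[3, 17, 40], :] *= 10.0            # a few high-activation channels
rtn_err = float(np.linalg.norm(quantize(W) @ X - W @ X))
awq_err, alpha, s = search_scale(W, X)
W_q = quantize(W * s)                # step 4: scale, then quantize
print(f"RTN error {rtn_err:.2f}, searched error {awq_err:.2f}, alpha {alpha:.2f}")
```

Because α = 0 gives s = 1, plain round-to-nearest is always one of the candidates, so the searched error can never be worse than the RTN error on the calibration data. Step 5 corresponds to the fact that `W_q @ (X / s[:, None])` equals `(W_q / s) @ X`, so the division by s can be absorbed elsewhere instead of being applied at run time.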

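3. Main Experimental Results (Experiments & Results)

LLaMA/Llama-2 model comparison

The paper's main results table (INT3 quantization):

| Model | FP16 | RTN (INT3) | GPTQ | GPTQ-R | AWQ |
|---|---|---|---|---|---|
| Llama-2 7B | 5.47 | 6.66 | 6.43 | 6.42 | 6.24 |
| Llama-2 70B | 3.32 | 3.98 | 3.88 | 3.86 | 3.74 |
| LLaMA 7B | 5.68 | 7.01 | 8.81 | 6.53 | 6.35 |

Visual content (WikiText-2 perplexity, lower is better):

- X-axis: model size (7B ~ 70B)
- Y-axis: perplexity
- AWQ (orange line) reaches consistently lower PPL than RTN and GPTQ on every model

Interpretation:

1. With INT3 quantization on Llama-2 7B, AWQ improves on RTN from 6.66 to 6.24 (a 6.3% reduction) and also beats GPTQ (6.43)
2. On LLaMA 7B in particular, GPTQ degrades to 8.81, worse than RTN (7.01), while AWQ stays stable at 6.35
3. Even on the very large 70B model, AWQ reaches 3.74 against 3.32 for FP16, keeping the loss small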

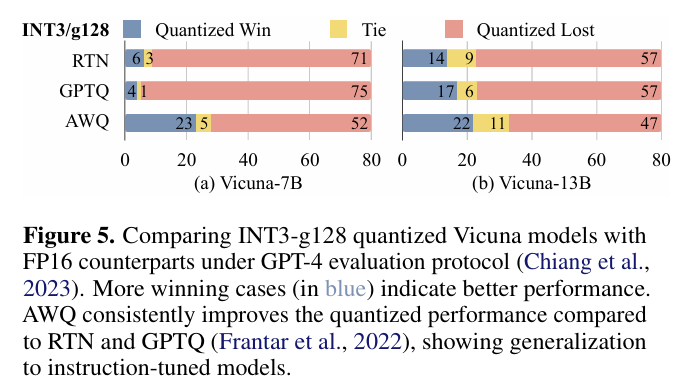

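Instruction-tuned model (Vicuna) evaluated by GPT-4

Visual content:

- GPT-4 compares the quantized model's answer with the FP16 answer on 80 sample questions
- Blue (Quantized Win): the quantized model gave the better answer
- Gray (Tie): equal
- Red (Quantized Lost): the quantized model gave the worse answer

| Model | RTN Win | GPTQ Win | AWQ Win |
|---|---|---|---|
| Vicuna-7B | 52 | 71 | 75 |
| Vicuna-13B | 47 | 57 | 57 (tied for best) |

Interpretation:

AWQ records the most wins, or ties for the most, on instruction-tuned models as well, which suggests that it generalizes well.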

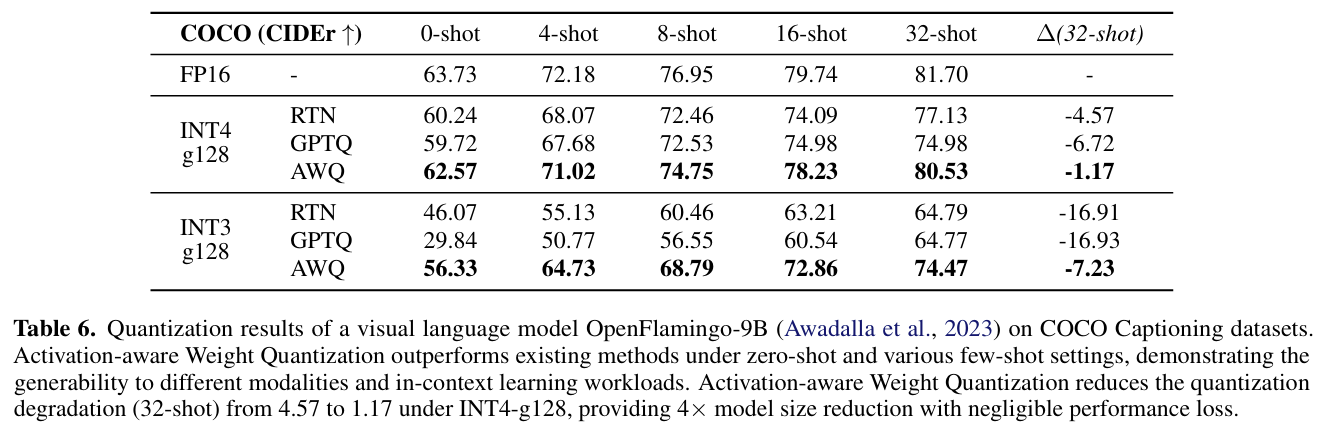

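Multimodal model OpenFlamingo-9B (COCO Captioning)

CIDEr score; values in parentheses are the change relative to FP16:

| Few-shot | FP16 | RTN (INT4) | GPTQ | AWQ |
|---|---|---|---|---|
| 32-shot | 81.70 | 77.13 (-4.57) | 74.98 (-6.72) | 80.53 (-1.17) |
| 0-shot | 63.73 | 60.24 | 59.72 | 62.57 |

Visual content:

In the graph, AWQ (orange line) stays closer to FP16 than RTN and GPTQ in every few-shot setting (0/4/8/16/32-shot)

Interpretation:

- Presented as the first successful low-bit quantization of a multimodal LLM
- At 32-shot, AWQ loses only 1.17 CIDEr against FP16 (81.70 → 80.53), close to lossless
- GPTQ drops by 6.72, which is consistent with the overfitting problem described earlier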

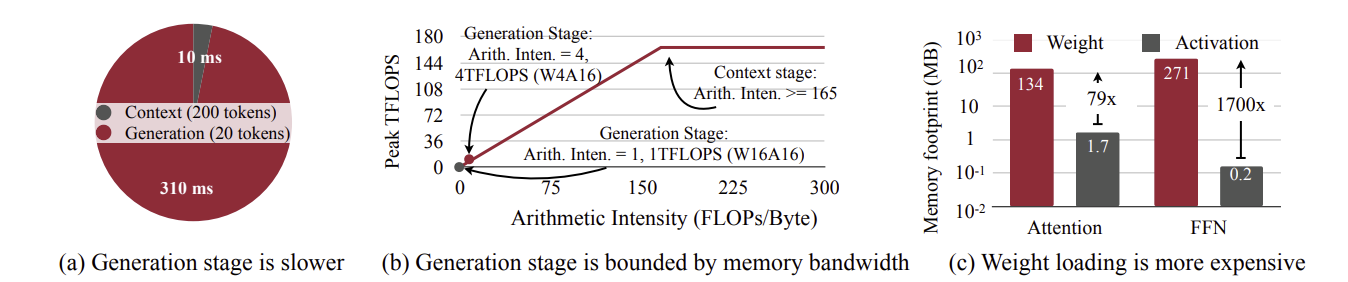

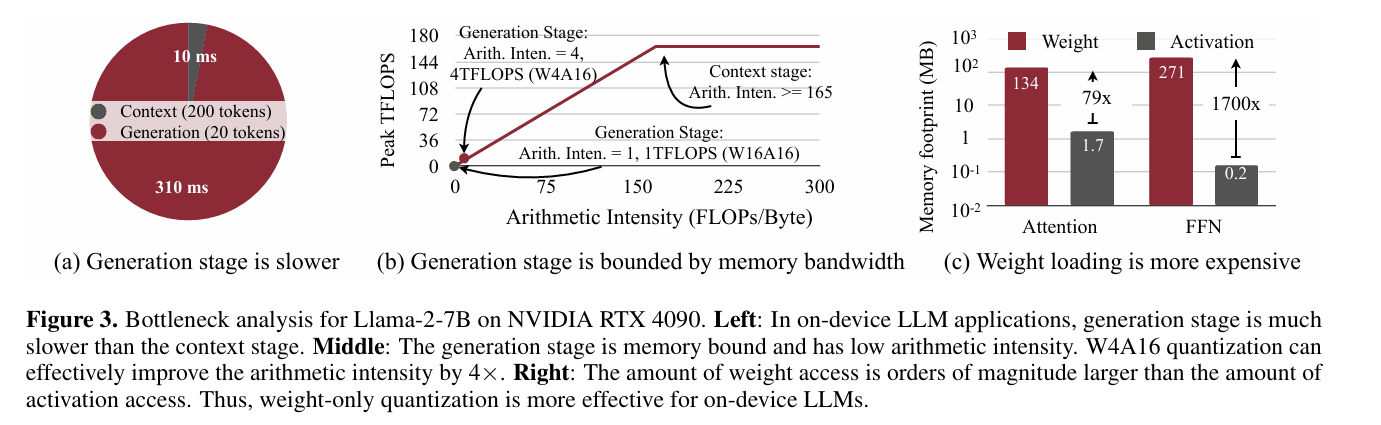

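Bottleneck analysis (RTX 4090 GPU)

Left graph: context vs. generation time

- Context stage (200 tokens): 10ms
- Generation stage (20 tokens): 310ms, so generation takes 31x longer despite handling 10x fewer tokens

Middle graph: roofline analysis

- Y-axis: attainable performance in TFLOPS (peak 165)
- X-axis: arithmetic intensity (FLOPs per byte of memory traffic)
- FP16 generation: intensity = 1, which is memory-bound
- AWQ W4A16: intensity = 4, a 4x improvement that raises the attainable throughput to 4 TFLOPS

Right graph: share of memory access

- Weight access: 134MB (dominant)
- Activation access: 1.7MB
- Weight traffic is about 79x larger, so compressing the weights is what matters

Interpretation:

On-device LLM inference is limited by memory bandwidth. By compressing weights to 4 bits, AWQ cuts weight memory traffic by 4x in theory, which can translate into a speedup of more than 3x in practice.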
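The roofline numbers above can be reproduced with a small calculation. The sketch assumes a memory bandwidth of about 1 TB/s, a value chosen here because it is consistent with "4 TFLOPS at intensity 4" and is not stated in the text; the function names are hypothetical. It also assumes that during generation each weight is read once and used for one multiply-add (2 FLOPs).

```python
def gemv_intensity(weight_bits: int) -> float:
    """FLOPs per byte when each weight is read once for one multiply-add."""
    bytes_per_weight = weight_bits / 8
    return 2.0 / bytes_per_weight

def attainable_tflops(intensity: float,
                      peak_tflops: float = 165.0,      # peak from the text
                      bandwidth_tb_s: float = 1.0) -> float:  # assumption
    """Roofline model: the lower of the compute roof and the bandwidth roof."""
    return min(peak_tflops, intensity * bandwidth_tb_s)

fp16 = attainable_tflops(gemv_intensity(16))   # FP16 weights
w4a16 = attainable_tflops(gemv_intensity(4))   # 4-bit weights, FP16 activations
print(f"FP16: {fp16} TFLOPS, W4A16: {w4a16} TFLOPS, bound on speedup: {w4a16 / fp16}x")
```

Both settings sit far below the 165 TFLOPS compute roof, which is why shrinking the bytes moved per weight raises throughput almost proportionally.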

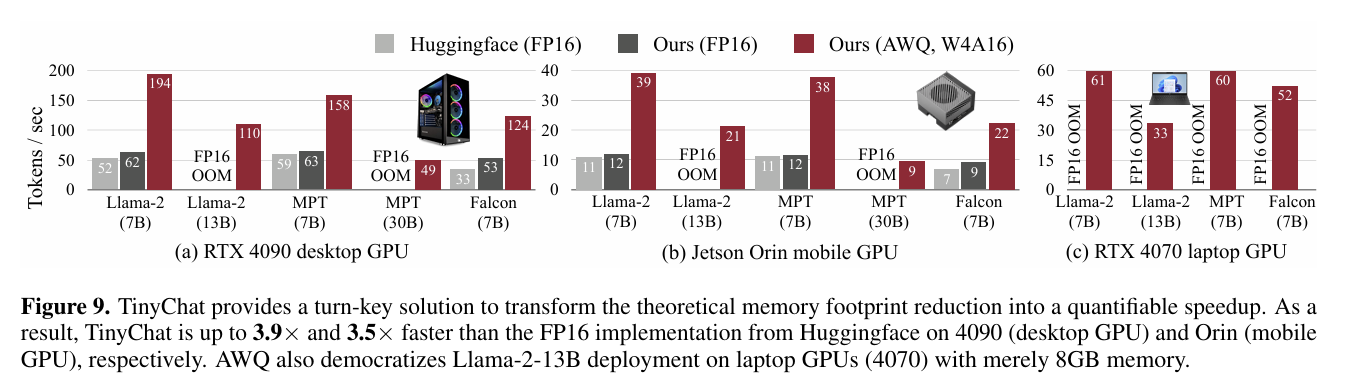

TinyChat system: measured performance

RTX 4090 desktop GPU:

| Model | Huggingface FP16 | TinyChat FP16 | TinyChat AWQ (W4A16) |
|---|---|---|---|
| Llama-2-7B | 52 tok/s | 62 tok/s | 194 tok/s |
| Llama-2-13B | 49 tok/s | - | 158 tok/s |
| Falcon-7B | 124 tok/s | - | 194 tok/s |

Jetson Orin mobile GPU:

| Model | Huggingface FP16 | TinyChat AWQ |
|---|---|---|
| Llama-2-7B | 22 tok/s | 38 tok/s |
| Llama-2-13B | OOM | 21 tok/s |

Interpretation:

1. Desktop GPU: AWQ + TinyChat is reported to be 3.1~3.9x faster than Huggingface FP16; the Llama-2 rows above work out to about 3.7x (194/52) and 3.2x (158/49)
2. Mobile GPU: the 13B model runs out of memory (OOM) in FP16 but reaches 21 tok/s with AWQ
3. Even a laptop with 8GB of memory (RTX 4070) can run Llama-2-13B at 33 tok/s

Visual content (Figure 9 bar chart):

- Blue (Huggingface FP16): shortest bars
- Gray (TinyChat FP16): mid-height bars
- Red (TinyChat AWQ): tallest bars, a lead that is visible at a glance

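4. Conclusion and Insights (Conclusion & Insight)

Three key contributions

1. Establishing the principle of activation-aware quantization

   Proposes judging weight importance from the activation distribution, and shows experimentally that this generalizes better than GPTQ's reconstruction-based approach

2. Hardware-friendly design

   Achieves an equivalent effect with single-bit-width quantization plus channel scaling, without mixed precision. This simplifies the CUDA kernel implementation and makes real deployment more practical

3. Broader applicability

   - Instruction-tuned models (Vicuna)
   - Multimodal models (OpenFlamingo, VILA, LLaVA)
   - Coding and math tasks (CodeLlama for code, GSM8K for math)

   Strong results across all of these make AWQ a broadly applicable low-bit quantization solution

Limitations and future work

Limitations noted by the authors:

- At the extreme low bit width of INT2, accuracy still degrades (Table 9: RTN fails completely, and AWQ has to be combined with GPTQ)
- Dependence on calibration data is low, but not zero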

5. References and Links (References)

Paper links:

- arXiv: https://arxiv.org/abs/2306.00978
- MLSys 2024 (Best Paper Award)

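💡 Closing comments

AWQ could be called "the JPEG compression of LLM quantization." Just as JPEG discards the high-frequency components that matter less to the human eye, AWQ uses the activation distribution to decide which weights can absorb more quantization error and which salient channels need protecting.

What stood out most is a research design that connects theory (the derivation) with practice (the TinyChat system). Many papers stop at "possible in theory," whereas AWQ measures a roughly 3x speedup on real GPUs, showing that it is ready to be adopted.

As of 2024, with on-device AI on the rise, this work looks set to become a core technology for running LLMs on mobile devices. 🚀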