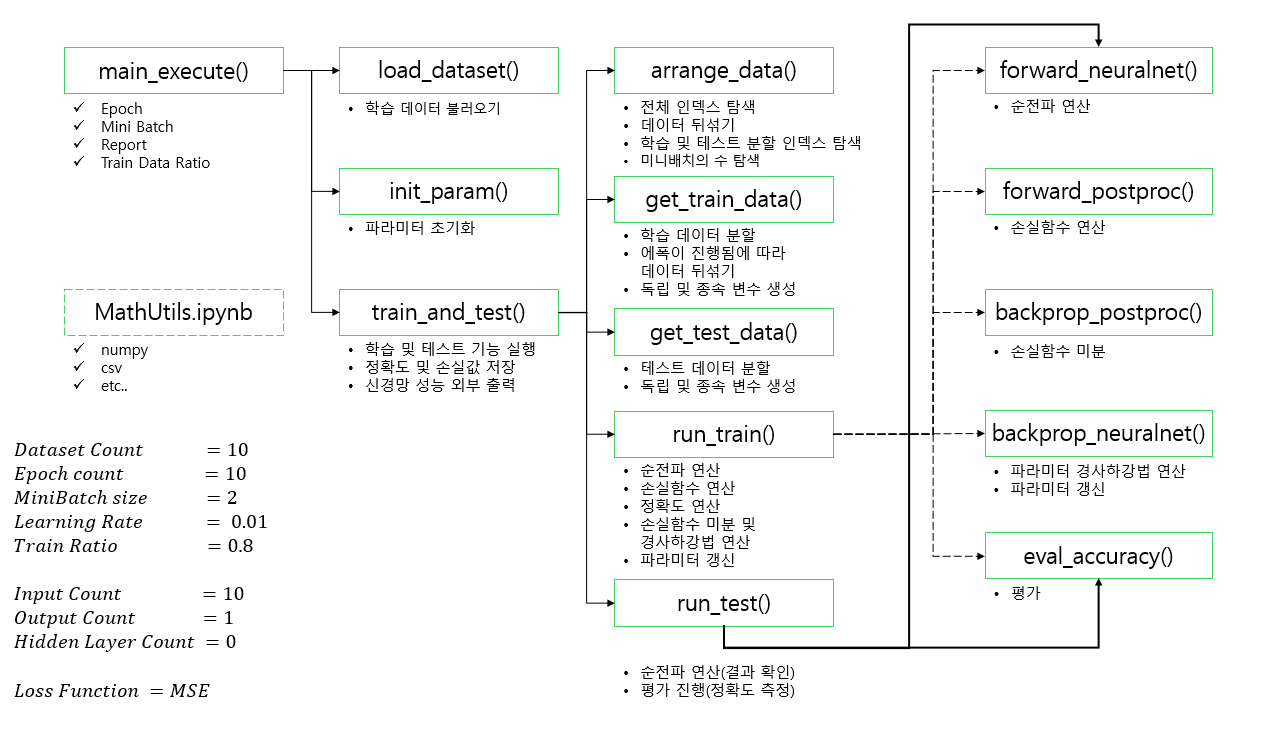

Implementing a Neural Network from Start to Finish, Part 05

- Creating the forward_neuralnet function

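```python
import numpy as np

def forward_neuralnet(x):
    # y_hat (the prediction) is the matrix product of x and the weights, plus the bias.
    # weight and bias are global parameters defined outside this function.
    y_hat = np.matmul(x, weight) + bias
    # x is returned as well because backpropagation needs it for the weight gradient.
    return y_hat, x
```

- Creating the forward_postproc function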

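```python
def forward_postproc(y_hat, y):
    # Subtract the actual value from the prediction and store it in diff
    diff = y_hat - y
    # Square each element of the matrix
    square = np.square(diff)
    # The mean over the whole matrix is the loss (mean squared error)
    loss = np.mean(square)
    # diff is returned for use in backpropagation
    return loss, diff
```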
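To make the two forward functions concrete, here is a minimal worked example on a tiny batch. The input data and the values of `weight` and `bias` are made-up assumptions for illustration, not values from this series, and the two functions are repeated so the snippet runs on its own.

```python
import numpy as np

# Illustrative parameters (assumed values): 3 input features -> 1 output
weight = np.array([[0.5], [-1.0], [2.0]])
bias = np.array([0.1])

def forward_neuralnet(x):
    # Linear layer: prediction = x @ weight + bias
    y_hat = np.matmul(x, weight) + bias
    return y_hat, x

def forward_postproc(y_hat, y):
    # Mean squared error over every element of the batch
    diff = y_hat - y
    square = np.square(diff)
    loss = np.mean(square)
    return loss, diff

# A batch of 2 samples with 3 features each, and their targets (made-up data)
x = np.array([[1.0, 2.0, 3.0],
              [0.0, 1.0, 1.0]])
y = np.array([[4.0],
              [1.0]])

y_hat, aux = forward_neuralnet(x)
loss, diff = forward_postproc(y_hat, y)
print(y_hat.ravel())  # approximately [4.6, 1.1]
print(loss)           # approximately 0.185
```

The first sample predicts 0.5·1 + (−1)·2 + 2·3 + 0.1 = 4.6 against a target of 4, and the second predicts 1.1 against a target of 1, so the loss is (0.6² + 0.1²) / 2 = 0.185.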

- Creating the eval_accuracy function

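```python
def eval_accuracy(y_hat, y):
    # Mean relative error: average of |y_hat - y| / |y| over all elements
    # (assumes no target value y is zero)
    mdiff = np.mean(np.abs((y_hat - y) / y))
    # Accuracy is defined as 1 minus the mean relative error
    return 1 - mdiff
```

- Creating the backprop_neuralnet function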

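```python
def backprop_neuralnet(G_output, x):
    # The global parameters are updated in place
    global weight, bias
    # Gradient of the loss w.r.t. the weights: x^T @ G_output
    x_transpose = x.transpose()
    G_w = np.matmul(x_transpose, G_output)
    # Gradient w.r.t. the bias: sum of G_output over the batch (axis 0)
    G_b = np.sum(G_output, axis=0)
    # Gradient descent step; LEARNING_RATE is a global hyperparameter
    weight -= LEARNING_RATE * G_w
    bias -= LEARNING_RATE * G_b
```

- Creating the backprop_postproc function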

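```python
def backprop_postproc(diff):
    # Shape of diff, e.g. (M, N) = (batch size, number of outputs)
    M_N = diff.shape
    # d(loss)/d(square): the mean spreads the gradient evenly, 1 / (M*N) per element
    g_mse_square = np.ones(M_N) / np.prod(M_N)
    # d(square)/d(diff) = 2 * diff
    g_square_diff = 2 * diff
    # d(diff)/d(output) = 1, since diff = y_hat - y
    g_diff_output = 1
    # Chain rule: gradient w.r.t. diff, then w.r.t. the output y_hat
    G_diff = g_mse_square * g_square_diff
    G_output = g_diff_output * G_diff
    return G_output
```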
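One way to sanity-check the backward pass is to compare the weight gradient it produces against a finite-difference estimate, and then chain all five functions into a training loop. For the mean squared error L = (1/(M·N)) Σ diff², the chain rule gives ∂L/∂y_hat = 2·diff/(M·N), which is what backprop_postproc computes; G_w = xᵀ·G_output then follows because y_hat = x·weight + bias. The sketch below is a self-contained illustration under stated assumptions: the synthetic dataset, `LEARNING_RATE = 0.1`, the weight initialization, the 500-step count, and the helper `numeric_grad_w` are all hypothetical choices, not values from this series. The five functions are repeated with trimmed comments so the snippet runs on its own.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic regression data (assumed for illustration, not the series' dataset):
# y = x @ true_w + 20 + small noise, so every target stays well away from zero
x = rng.normal(size=(50, 4))
true_w = np.array([[1.0], [-2.0], [0.5], [3.0]])
y = np.matmul(x, true_w) + 20.0 + 0.01 * rng.normal(size=(50, 1))

LEARNING_RATE = 0.1                          # assumed hyperparameter
weight = rng.normal(0.0, 0.03, size=(4, 1))  # assumed initialization
bias = np.zeros(1)

def forward_neuralnet(x):
    y_hat = np.matmul(x, weight) + bias
    return y_hat, x

def forward_postproc(y_hat, y):
    diff = y_hat - y
    loss = np.mean(np.square(diff))
    return loss, diff

def eval_accuracy(y_hat, y):
    mdiff = np.mean(np.abs((y_hat - y) / y))
    return 1 - mdiff

def backprop_neuralnet(G_output, x):
    global weight, bias
    G_w = np.matmul(x.transpose(), G_output)
    G_b = np.sum(G_output, axis=0)
    weight -= LEARNING_RATE * G_w
    bias -= LEARNING_RATE * G_b

def backprop_postproc(diff):
    M_N = diff.shape
    g_mse_square = np.ones(M_N) / np.prod(M_N)
    g_square_diff = 2 * diff
    g_diff_output = 1
    G_diff = g_mse_square * g_square_diff
    return g_diff_output * G_diff

def numeric_grad_w(x, y, eps=1e-5):
    # Hypothetical helper: central-difference estimate of d(loss)/d(weight).
    # It perturbs the global weight array in place and restores each entry.
    grad = np.zeros_like(weight)
    for i in range(weight.shape[0]):
        for j in range(weight.shape[1]):
            old = weight[i, j]
            weight[i, j] = old + eps
            loss_plus, _ = forward_postproc(forward_neuralnet(x)[0], y)
            weight[i, j] = old - eps
            loss_minus, _ = forward_postproc(forward_neuralnet(x)[0], y)
            weight[i, j] = old
            grad[i, j] = (loss_plus - loss_minus) / (2 * eps)
    return grad

# 1) Gradient check: the analytic weight gradient (same formula as G_w in
#    backprop_neuralnet) should match the finite-difference estimate.
y_hat, aux = forward_neuralnet(x)
_, diff = forward_postproc(y_hat, y)
analytic = np.matmul(aux.transpose(), backprop_postproc(diff))
grad_error = np.max(np.abs(analytic - numeric_grad_w(x, y)))
print("max gradient difference:", grad_error)

# 2) Training: chain the five functions for a few hundred gradient-descent steps.
for step in range(500):
    y_hat, aux = forward_neuralnet(x)
    loss, diff = forward_postproc(y_hat, y)
    backprop_neuralnet(backprop_postproc(diff), aux)

y_hat, _ = forward_neuralnet(x)
final_loss, _ = forward_postproc(y_hat, y)
accuracy = eval_accuracy(y_hat, y)
print("final loss:", final_loss, "accuracy:", accuracy)
```

Because the loss is quadratic in the weights, the central difference matches the analytic gradient up to floating-point rounding, so `grad_error` should come out very small; a large value would point to a bug in `backprop_postproc` or in the `G_w` formula. The targets are kept well away from zero because `eval_accuracy` divides by `y`.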