!pip install -U keras-tuner

import kerastuner as kt

import numpy as np

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dense, Dropout, Flatten

import keras

import tensorflow as tf

import IPython

from tensorflow.keras.wrappers.scikit_learn import KerasClassifier

from sklearn.model_selection import GridSearchCV

# 모델 만들기

tf.random.set_seed(7)

def model_builder(nodes=16, activation='relu'):

model = Sequential()

model.add(Dense(nodes, activation=activation))

model.add(Dense(nodes, activation=activation))

model.add(Dense(1, activation='sigmoid')) # 이진분류니까 노드수 1, 활성함수로는 시그모이드

model.compile(optimizer='adam',

loss='binary_crossentropy',

metrics=['accuracy'])

return model

#keras.wrapper 분류기

model = KerasClassifier(build_fn=model_builder, verbose=0)

# GridSearch 파라미터 설정

batch_size = [50, 100, 300]

epochs = [10, 20, 30]

nodes = [64, 128, 256]

activation = ['relu', 'sigmoid']

param_grid = dict(batch_size=batch_size, epochs=epochs, nodes=nodes, activation=activation)

# GridSearch CV를 만들기

grid = GridSearchCV(estimator=model, param_grid=param_grid, cv=3, verbose=1, n_jobs=-1)

grid_result = grid.fit(X_train_scaled, y_train)

# 최적의 결과값을 낸 파라미터를 출력합니다

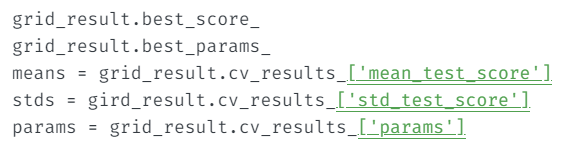

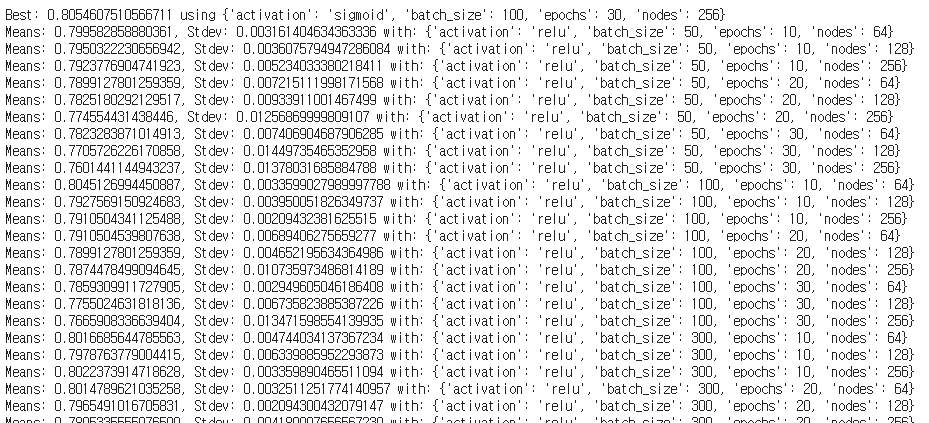

print(f"Best: {grid_result.best_score_} using {grid_result.best_params_}")

means = grid_result.cv_results_['mean_test_score']

stds = grid_result.cv_results_['std_test_score']

params = grid_result.cv_results_['params']

for mean, stdev, param in zip(means, stds, params):

print(f"Means: {mean}, Stdev: {stdev} with: {param}")

##그리드 서치에서 결괏값을 알려주는 코드