이론 정리

리소스 부족 대응 기술

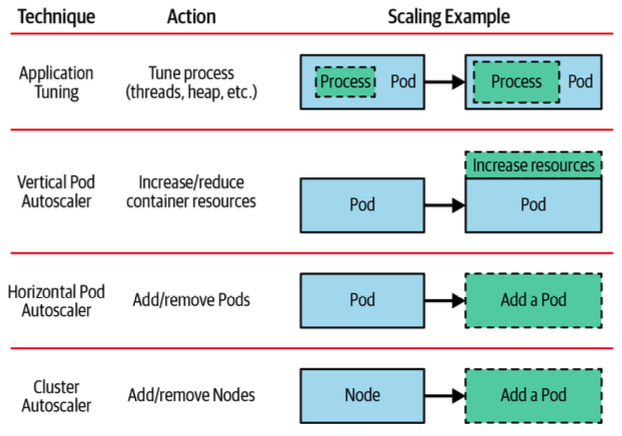

출처 : kubernetes patterns ‘2nd 발췌

출처 : kubernetes patterns ‘2nd 발췌

-

애플리케이션 튜닝: 개발자가 코드를 변경해 애플리케이션 자체를 튜닝

-

Vertical Pod Autoscaler: Pod를 scale up 하는 방법

-

Horizontal Pod Autoscaler: Pod를 scale out 하는 방법

-

Cluster Autoscaler: Pod가 아닌 Node를 추가 하는 방법

K8S Auto Scaling 정책

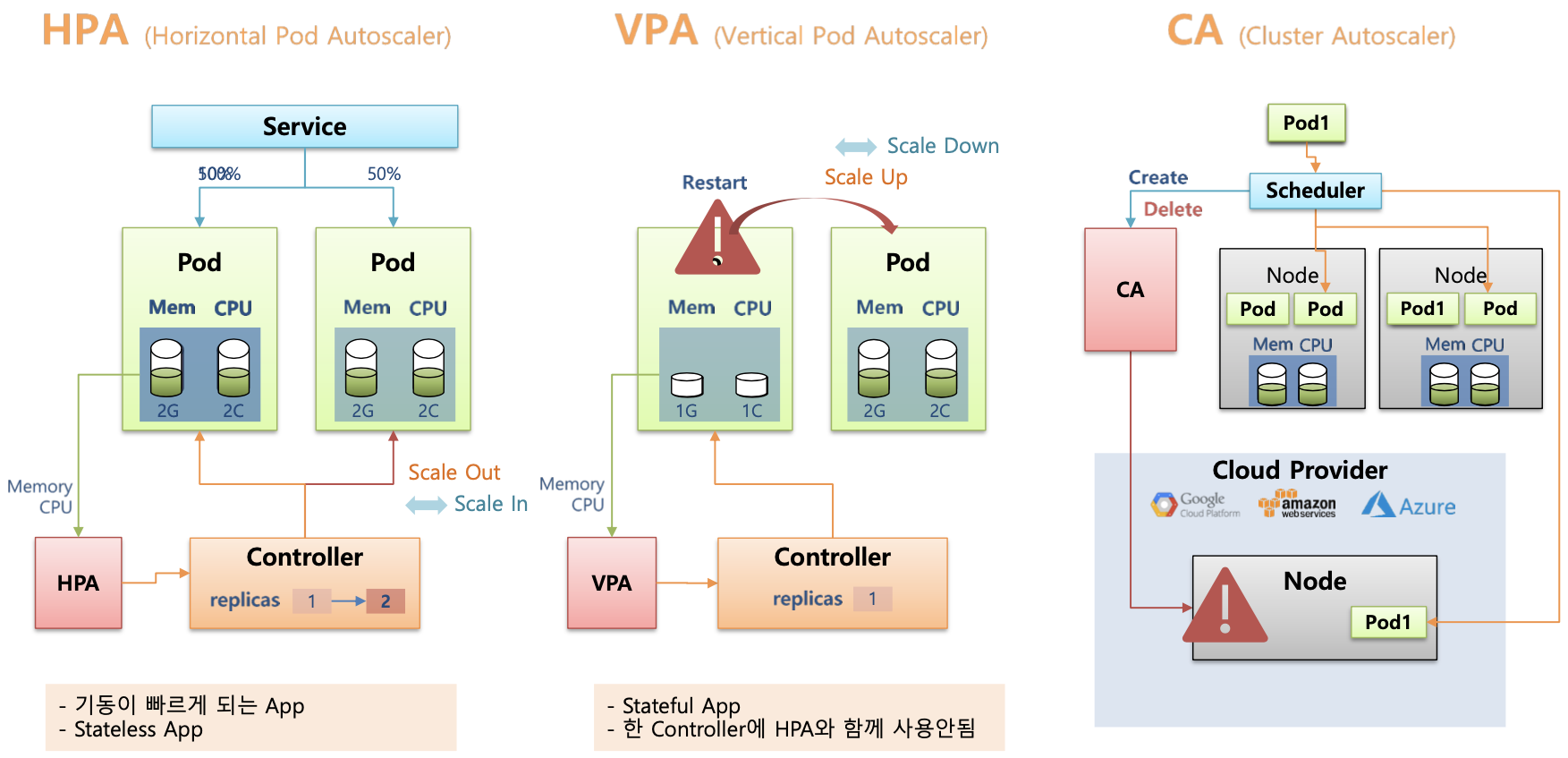

HPA (Horizontal Pod Autoscaling)

- 정의 : CPU, 메모리, 사용자가 정의한 메트릭을 기준으로 파드 개수를 자동으로 조절

- 적용 대상 : Deployment, StatefulSet, ReplicaSet 등

- 단점.

- pod의 개수만 조절. 가능

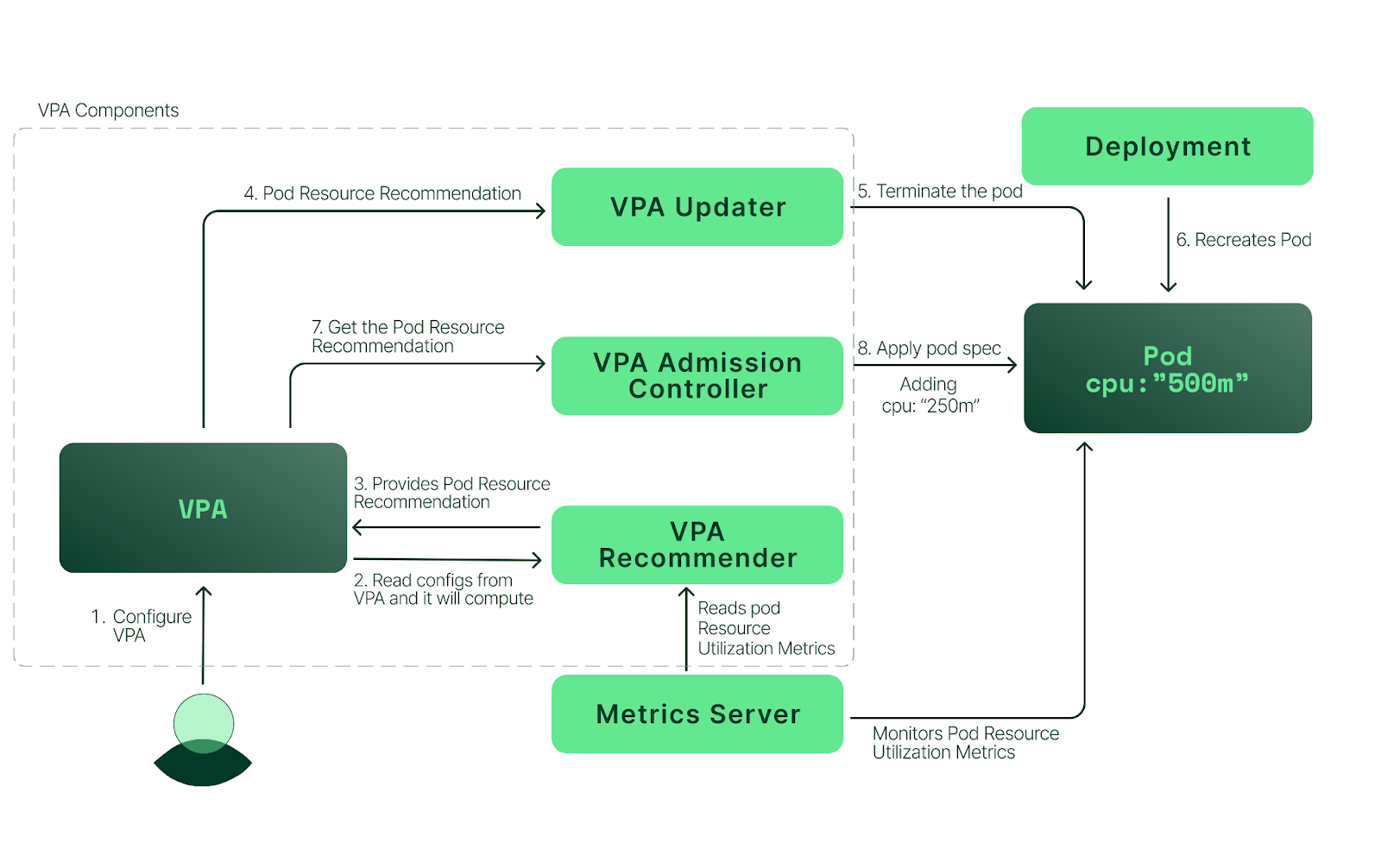

VPA (Vertical Pod Autoscaling)

- 정의 : 실행중인 파드의 CPU 및. ㅔ모리 요청값을 동적으로 조정

- 적용 대상 : Deployment, StatefulSet, ReplicaSet 등

- 단점

- 파드 재시작이 필요함 → 다운 타임 발생

- HPA과 함께 사용하는 경우 충돌 가능

CAS(Cluster Autoscaler)

- 정의 : 클러스터의 노드 수를 자동으로 조절

- 기반 매트릭 : 파드의 스케줄링 가능 여부 (리소스 부족시 확장, 불필요한 노드는 축소)

- 단점

- 클라우드 환경 에서만 기본 지원

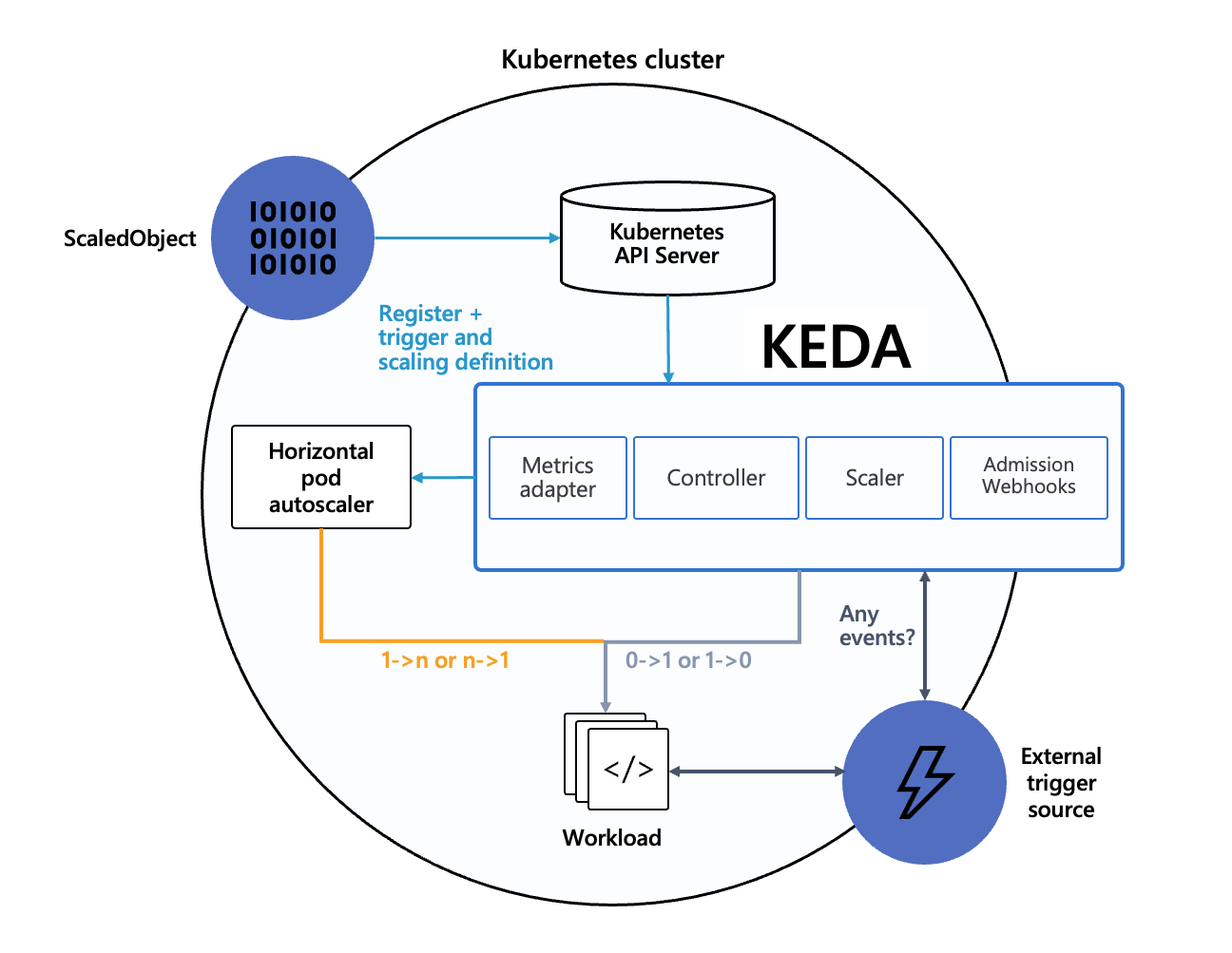

KEDA (Kubernetes Event Driven Autoscaler)

- 정의 : 이벤트 기반의 오토스케일링 으로 ,Kafka, RabbitMQ, Prometheus 등의 외부 메트릭 기준으로 Pod 개수 를 조절

- 단점 : HPA 연동이 필요함 (단독으로 사용하기 어려움)

동작원리

출처 : AEWS 3기 스터디

출처 : AEWS 3기 스터디

- 에이전트 역할 –

keda-operator가 Deployment를 이벤트 발생 여부에 따라 0까지 스케일 조정 - 메트릭 서버 역할 –

keda-operator-metrics-apiserver가 HPA에 이벤트 메트릭 제공 - 어드미션 웹훅 –

keda-admission-webhooks가 설정 검증 및 오류 방지

정리

| 오토스케일링 유형 | 대상 | 특징 | 단점 |

|---|---|---|---|

| HPA | Pod 개수 | CPU, Memory 기반 스케일링 | Pod 리소스 크기 변경 불가 |

| VPA | Pod 리소스 | CPU, Memory 설정 변경 | Pod 재기시작 필요 |

| CAS | 클러스터 너도 | 필요시 노드 추가/제거 | 클라우드에 종속적 |

| KEDA | 이벤트 기반 | Kafka, RabbitMQ 등과의 연동 | 설정이 복잡함 |

AWS Autoscaling 정책

Simple/Step scaling

- 고객이 정의한 단계에 따라 메트릭을 모니터링하고 인스턴스를 추가 또는 제거

- Manual Reactive, Dynamic scaling

Target tracking

- 고객이 정의한 목표 메트릭을 유지하기 위해 자동으로 인스턴스를 추가 또는 제거

- Automated Reactive, Dynamic scaling

Scheduled scaling

- 고객이 정의한 일정에 따라 인스턴스를 시작하거나 종료

- Manual Proactive

Predictive scaling

- 과거 트렌드를 기반으로 용량을 선제적으로 시작

- Automated Proactive

EKS Auto Scaling 정책

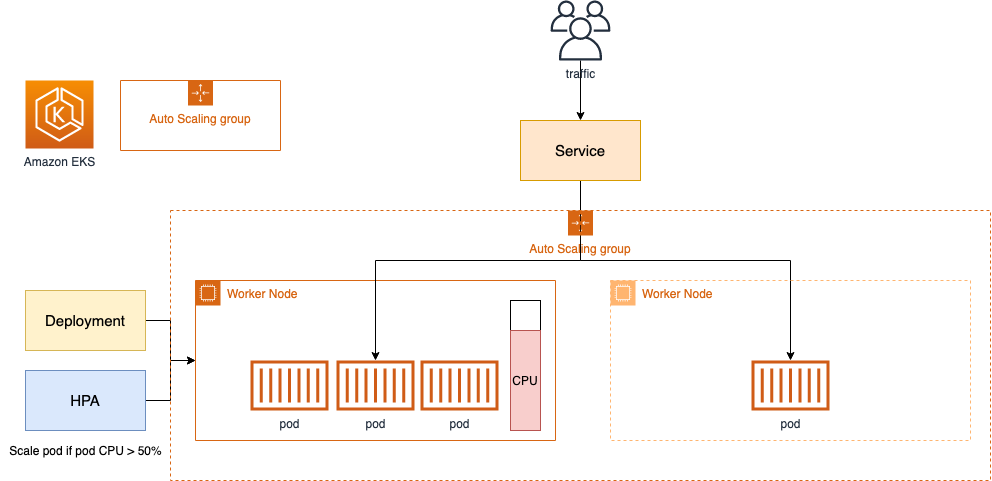

- HPA : 서비스를 처리할 파드 자원이 부족한 경우 신규 파드 Provisioning → 파드 Scale Out

- VPA : 서비스를 처리할 파드 자원이 부족한 경우 파드 교체(자동 or 수동) → 파드 Scale Up

- CAS : 파드를 배포할 노드가 부족한 경우 신규 노드 Provisioning → 노드 Scale Out

- Karpenter : Unscheduled 파드가 있는 경우 새로운 노드 및 파드 Provisioning → 노드 Scale Up/Out

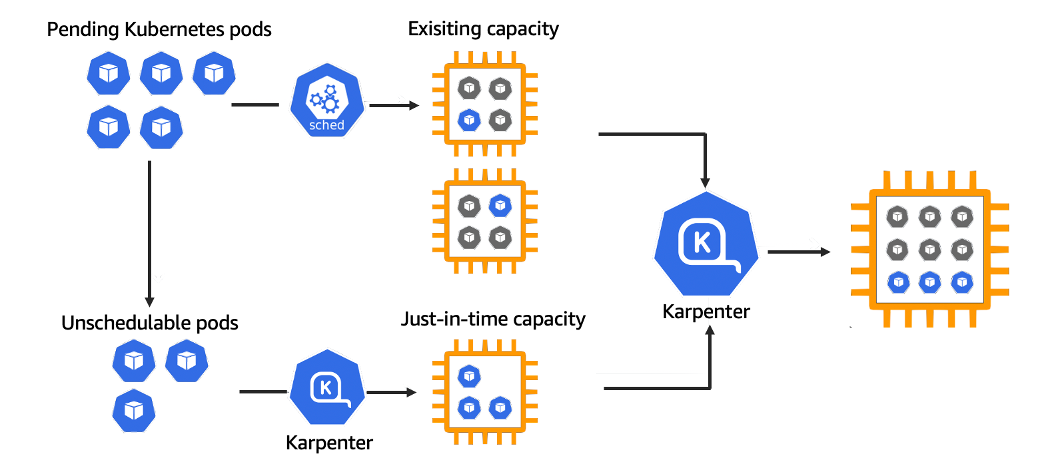

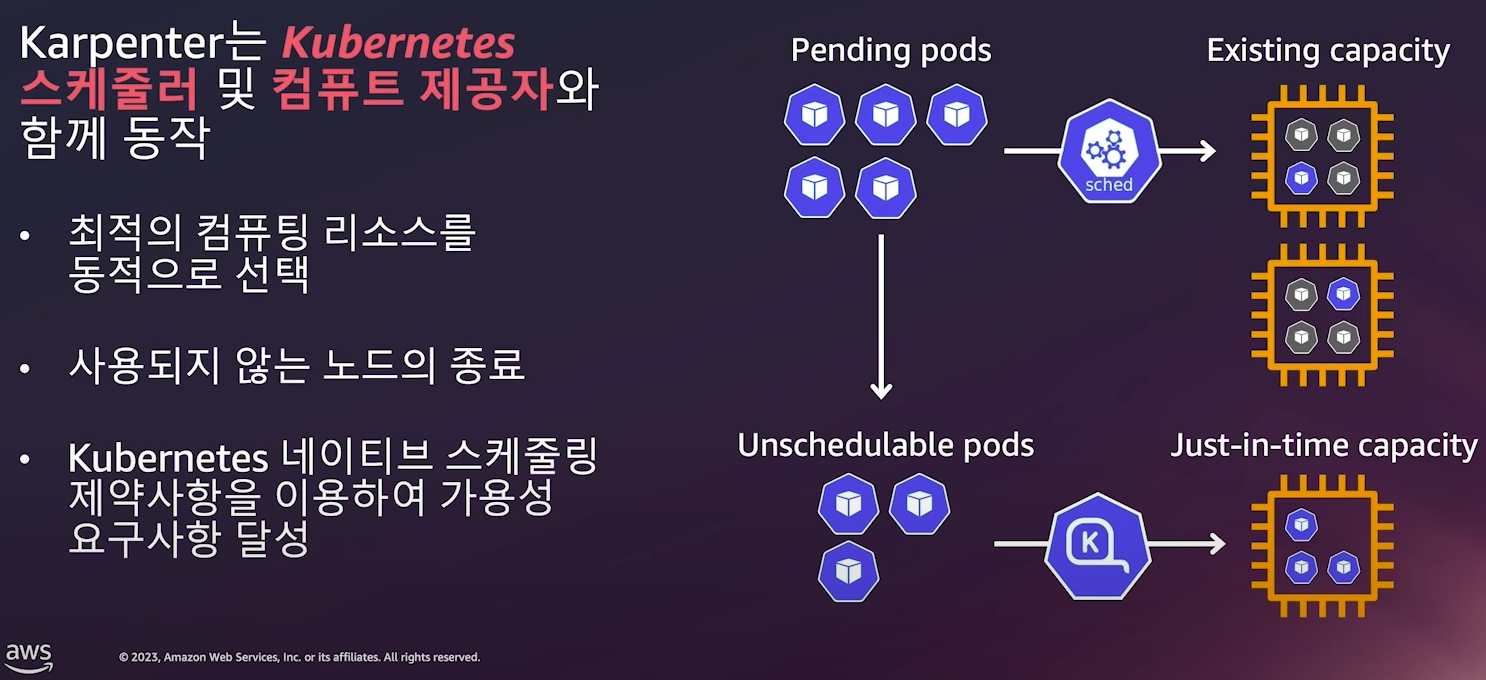

Karpenter??

Kubernetes 클러스터의 노드 프로비저닝을 자동화하는 오픈소스 자동 확장 도구로, 효율성 향상과 비용 최적화를 목표로 한다.

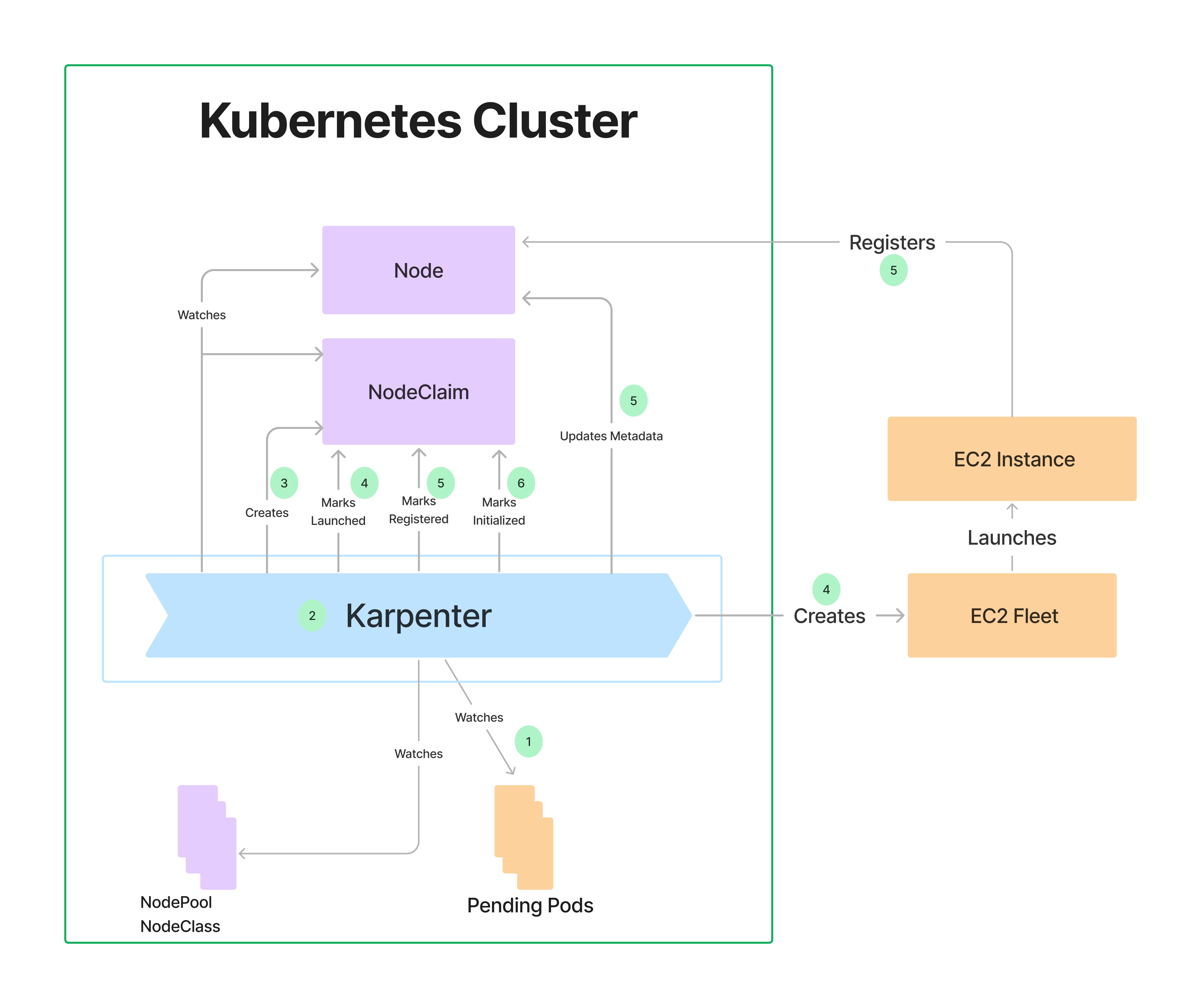

동작 원리

출처 : https://aws.amazon.com/ko/blogs/korea/introducing-karpenter-an-open-source-high-performance-kubernetes-cluster-autoscaler/

출처 : https://aws.amazon.com/ko/blogs/korea/introducing-karpenter-an-open-source-high-performance-kubernetes-cluster-autoscaler/

- HPA에 의한 Pod의 수평적 확장이 한계에 다다르면, Pod는 적절한 Node를 배정받지 못한채로 pending 상태에이른다.

- 이때 Karpenter 는 지속적으로 unscheduled 인 Pod를 관찰하고 있다가, 새로운 Worker Node를 추가를 결정하고 직접 배포한다.

- 추가된 Worker Node가 Ready 상태가 되면 Karpenter 는 kube-scheduler를 대신하여 pod의 Node binding 요청 작업을 수행한다.

실습 환경 구성 및 소개

배포

# YAML 파일 다운로드

curl -O https://s3.ap-northeast-2.amazonaws.com/cloudformation.cloudneta.net/K8S/myeks-5week.yaml

# 변수 지정

CLUSTER_NAME=myeks

SSHKEYNAME=bocopile-key

MYACCESSKEY=<IAM Uesr 액세스 키>

MYSECRETKEY=<IAM Uesr 시크릿 키>

# CloudFormation 스택 배포

aws cloudformation deploy --template-file myeks-5week.yaml --stack-name $CLUSTER_NAME --parameter-overrides KeyName=$SSHKEYNAME SgIngressSshCidr=$(curl -s ipinfo.io/ip)/32 MyIamUserAccessKeyID=$MYACCESSKEY MyIamUserSecretAccessKey=$MYSECRETKEY ClusterBaseName=$CLUSTER_NAME --region ap-northeast-2

# CloudFormation 스택 배포 완료 후 작업용 EC2 IP 출력

aws cloudformation describe-stacks --stack-name myeks --query 'Stacks[*].Outputs[0].OutputValue' --output textAWS LoadBalancer Controller 설치

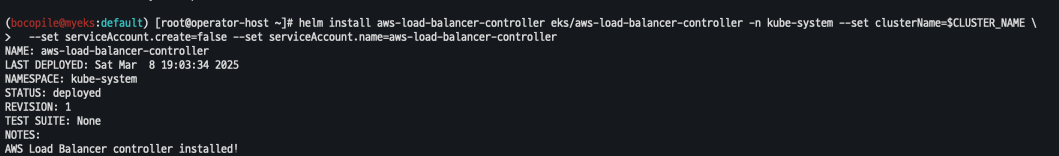

# AWS LoadBalancerController

helm repo add eks https://aws.github.io/eks-charts

helm install aws-load-balancer-controller eks/aws-load-balancer-controller -n kube-system --set clusterName=$CLUSTER_NAME \

--set serviceAccount.create=false --set serviceAccount.name=aws-load-balancer-controller

ExternalDNS 설치

# ExternalDNS

echo $MyDomain

curl -s https://raw.githubusercontent.com/gasida/PKOS/main/aews/externaldns.yaml | MyDomain=$MyDomain MyDnzHostedZoneId=$MyDnzHostedZoneId envsubst | kubectl apply -f -gp3 스토리지 클래스 생성

# gp3 스토리지 클래스 생성

cat <<EOF | kubectl apply -f -

kind: StorageClass

apiVersion: storage.k8s.io/v1

metadata:

name: gp3

annotations:

storageclass.kubernetes.io/is-default-class: "true"

allowVolumeExpansion: true

provisioner: ebs.csi.aws.com

volumeBindingMode: WaitForFirstConsumer

parameters:

type: gp3

allowAutoIOPSPerGBIncrease: 'true'

encrypted: 'true'

fsType: xfs # 기본값이 ext4

EOF

kubectl get sc

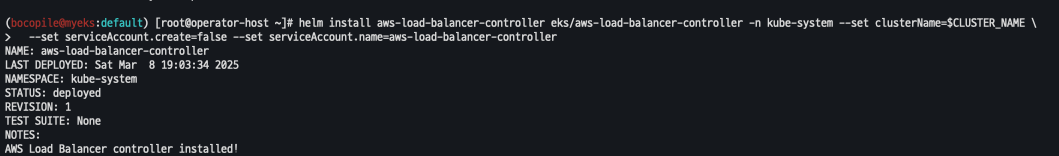

kubeopsview 설치

# kube-ops-view

helm repo add geek-cookbook https://geek-cookbook.github.io/charts/

helm install kube-ops-view geek-cookbook/kube-ops-view --version 1.2.2 --set service.main.type=ClusterIP --set env.TZ="Asia/Seoul" --namespace kube-system

# kubeopsview 용 Ingress 설정 : group 설정으로 1대의 ALB를 여러개의 ingress 에서 공용 사용

echo $CERT_ARN

cat <<EOF | kubectl apply -f -

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:

annotations:

alb.ingress.kubernetes.io/certificate-arn: $CERT_ARN

alb.ingress.kubernetes.io/group.name: study

alb.ingress.kubernetes.io/listen-ports: '[{"HTTPS":443}, {"HTTP":80}]'

alb.ingress.kubernetes.io/load-balancer-name: e-ingress-alb

alb.ingress.kubernetes.io/scheme: internet-facing

alb.ingress.kubernetes.io/ssl-redirect: "443"

alb.ingress.kubernetes.io/success-codes: 200-399

alb.ingress.kubernetes.io/target-type: ip

labels:

app.kubernetes.io/name: kubeopsview

name: kubeopsview

namespace: kube-system

spec:

ingressClassName: alb

rules:

- host: kubeopsview.e

http:

paths:

- backend:

service:

name: kube-ops-view

port:

number: 8080 # name: http

path: /

pathType: Prefix

EOF설치 확인

# 설치된 파드 정보 확인

kubectl get pods -n kube-system

# service, ep, ingress 확인

kubectl get ingress,svc,ep -n kube-system

# Kube Ops View 접속 정보 확인 : 조금 오래 기다리면 접속됨...

echo -e "Kube Ops View URL = https://kubeopsview.$MyDomain/#scale=1.5"

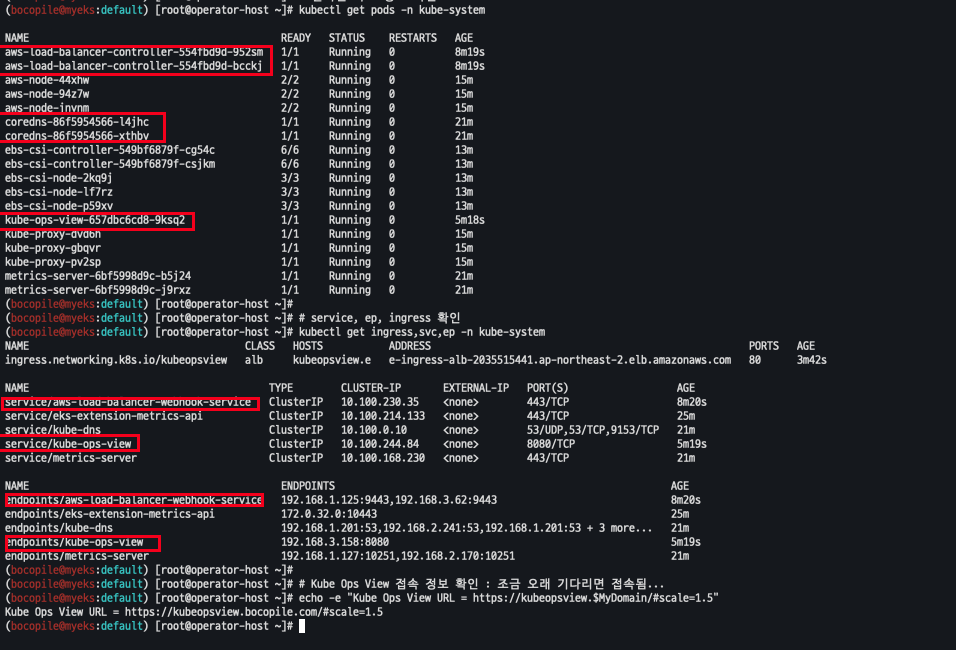

사이트 접근

open "https://kubeopsview.$MyDomain" # macOS

프로메테우스 & 그라파나

설치

# repo 추가

helm repo add prometheus-community https://prometheus-community.github.io/helm-charts

# 파라미터 파일 생성 : PV/PVC(AWS EBS) 삭제에 불편하니, 4주차 실습과 다르게 PV/PVC 미사용

cat <<EOT > monitor-values.yaml

prometheus:

prometheusSpec:

scrapeInterval: "15s"

evaluationInterval: "15s"

podMonitorSelectorNilUsesHelmValues: false

serviceMonitorSelectorNilUsesHelmValues: false

retention: 5d

retentionSize: "10GiB"

# Enable vertical pod autoscaler support for prometheus-operator

verticalPodAutoscaler:

enabled: true

ingress:

enabled: true

ingressClassName: alb

hosts:

- prometheus.$MyDomain

paths:

- /*

annotations:

alb.ingress.kubernetes.io/scheme: internet-facing

alb.ingress.kubernetes.io/target-type: ip

alb.ingress.kubernetes.io/listen-ports: '[{"HTTPS":443}, {"HTTP":80}]'

alb.ingress.kubernetes.io/certificate-arn: $CERT_ARN

alb.ingress.kubernetes.io/success-codes: 200-399

alb.ingress.kubernetes.io/load-balancer-name: myeks-ingress-alb

alb.ingress.kubernetes.io/group.name: study

alb.ingress.kubernetes.io/ssl-redirect: '443'

grafana:

defaultDashboardsTimezone: Asia/Seoul

adminPassword: prom-operator

defaultDashboardsEnabled: false

ingress:

enabled: true

ingressClassName: alb

hosts:

- grafana.$MyDomain

paths:

- /*

annotations:

alb.ingress.kubernetes.io/scheme: internet-facing

alb.ingress.kubernetes.io/target-type: ip

alb.ingress.kubernetes.io/listen-ports: '[{"HTTPS":443}, {"HTTP":80}]'

alb.ingress.kubernetes.io/certificate-arn: $CERT_ARN

alb.ingress.kubernetes.io/success-codes: 200-399

alb.ingress.kubernetes.io/load-balancer-name: myeks-ingress-alb

alb.ingress.kubernetes.io/group.name: study

alb.ingress.kubernetes.io/ssl-redirect: '443'

kube-state-metrics:

rbac:

extraRules:

- apiGroups: ["autoscaling.k8s.io"]

resources: ["verticalpodautoscalers"]

verbs: ["list", "watch"]

customResourceState:

enabled: true

config:

kind: CustomResourceStateMetrics

spec:

resources:

- groupVersionKind:

group: autoscaling.k8s.io

kind: "VerticalPodAutoscaler"

version: "v1"

labelsFromPath:

verticalpodautoscaler: [metadata, name]

namespace: [metadata, namespace]

target_api_version: [apiVersion]

target_kind: [spec, targetRef, kind]

target_name: [spec, targetRef, name]

metrics:

- name: "vpa_containerrecommendations_target"

help: "VPA container recommendations for memory."

each:

type: Gauge

gauge:

path: [status, recommendation, containerRecommendations]

valueFrom: [target, memory]

labelsFromPath:

container: [containerName]

commonLabels:

resource: "memory"

unit: "byte"

- name: "vpa_containerrecommendations_target"

help: "VPA container recommendations for cpu."

each:

type: Gauge

gauge:

path: [status, recommendation, containerRecommendations]

valueFrom: [target, cpu]

labelsFromPath:

container: [containerName]

commonLabels:

resource: "cpu"

unit: "core"

selfMonitor:

enabled: true

alertmanager:

enabled: false

defaultRules:

create: false

kubeControllerManager:

enabled: false

kubeEtcd:

enabled: false

kubeScheduler:

enabled: false

prometheus-windows-exporter:

prometheus:

monitor:

enabled: false

EOT

cat monitor-values.yaml

# helm 배포

helm install kube-prometheus-stack prometheus-community/kube-prometheus-stack --version 69.3.1 \

-f monitor-values.yaml --create-namespace --namespace monitoring

확인

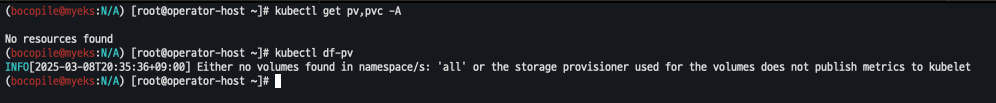

- pv 사용하지 않음 확인

# helm 확인

helm get values -n monitoring kube-prometheus-stack

kubectl get pv,pvc -A

kubectl df-pv

사이트 접근

# 프로메테우스 웹 접속

open "https://prometheus.$MyDomain" # macOS

# 그라파나 웹 접속 : admin / prom-operator

open "https://grafana.$MyDomain" # macOS

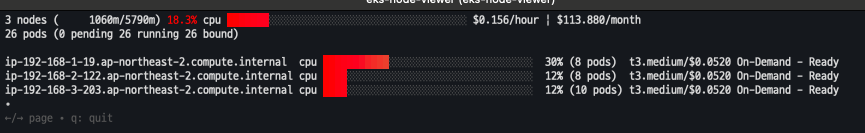

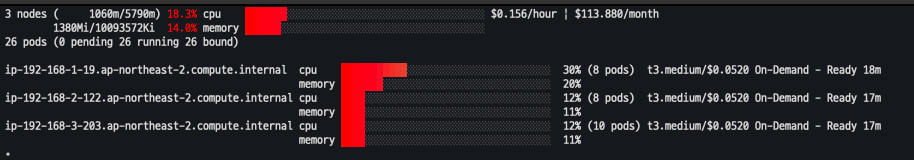

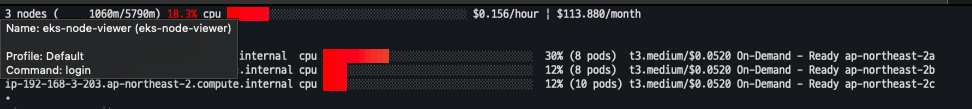

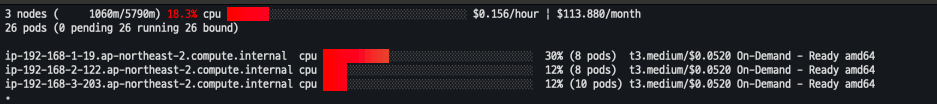

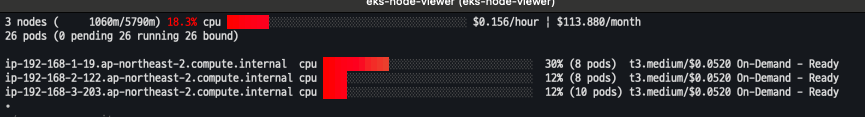

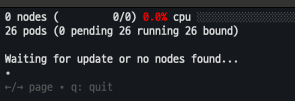

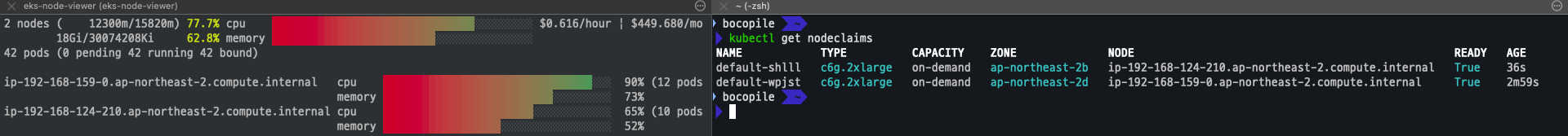

EKS Node Viewer

- 노드 할당 가능 용량과 요청 Request 리소스 표시

설치

# macOS 설치

brew tap aws/tap

brew install eks-node-viewer

명령어 사용

- cluster 확인

eks-node-viewer

- cpu , memory 사용

eks-node-viewer --resources cpu,memory --extra-labels eks-node-viewer/node-age

- extra labels

eks-node-viewer --extra-labels topology.kubernetes.io/zone

eks-node-viewer --extra-labels kubernetes.io/arch

- cpu 사용률에 따른 정렬

eks-node-viewer --node-sort=eks-node-viewer/node-cpu-usage=dsc

-

karpenter Node만 조회 (현재 Karpenter가 미설치 되어 조회 되지 않음)

# Karenter nodes only eks-node-viewer --node-selector "karpenter.sh/provisioner-name"

HPA

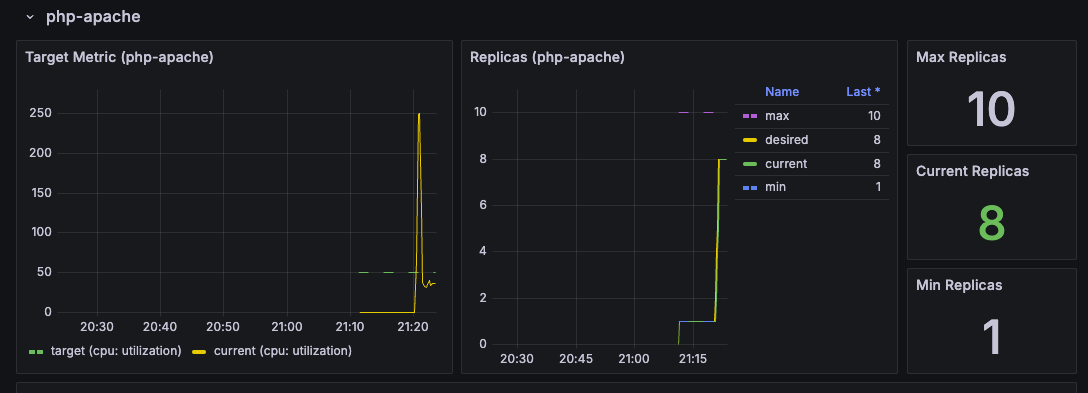

kube-ops-view와 그라파나 연동

샘플 애플리케이션 배포

배포

# Run and expose php-apache server

cat << EOF > php-apache.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: php-apache

spec:

selector:

matchLabels:

run: php-apache

template:

metadata:

labels:

run: php-apache

spec:

containers:

- name: php-apache

image: registry.k8s.io/hpa-example

ports:

- containerPort: 80

resources:

limits:

cpu: 500m

requests:

cpu: 200m

---

apiVersion: v1

kind: Service

metadata:

name: php-apache

labels:

run: php-apache

spec:

ports:

- port: 80

selector:

run: php-apache

EOF

kubectl apply -f php-apache.yaml배포 확인

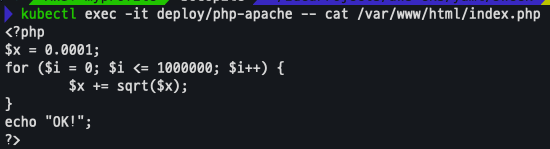

kubectl exec -it deploy/php-apache -- cat /var/www/html/index.php

HPA 정책 생성, 부하 발생후 오토스케일링 테스트

HPA 정책 생성

# 방법 1

cat <<EOF | kubectl apply -f -

apiVersion: autoscaling/v2

kind: HorizontalPodAutoscaler

metadata:

name: *hp-apache

spec:

scaleTargetRef:

apiVersion: apps/v1

kind: Deployment

name: php-apache

minReplicas: 1

maxReplicas: 10

metrics:

- type: Resource

resource:

name: cpu

target:

averageUtilization: 50

type: Utilization

EOF

# 방법 2

kubectl autoscale deployment php-apache --cpu-percent=50 --min=1 --max=10

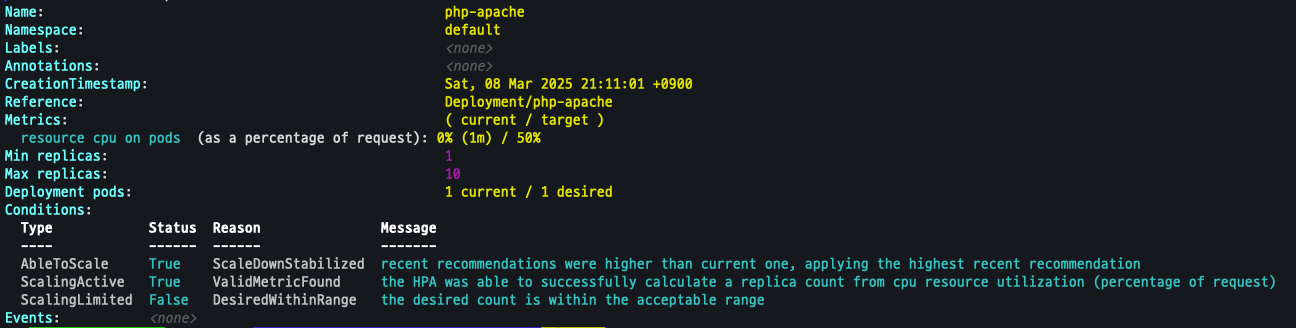

배포 확인

kubectl describe hpa

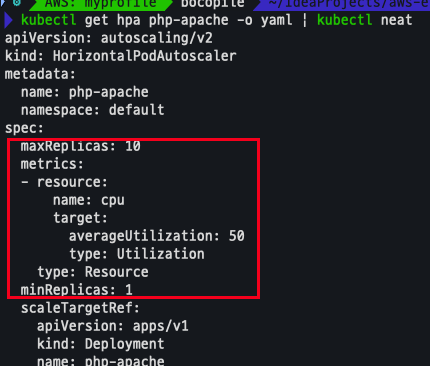

HPA 설정 확인

- MinRreplicas, MaxReplicas, Resource 설정 확인

kubectl get hpa php-apache -o yaml | kubectl neat

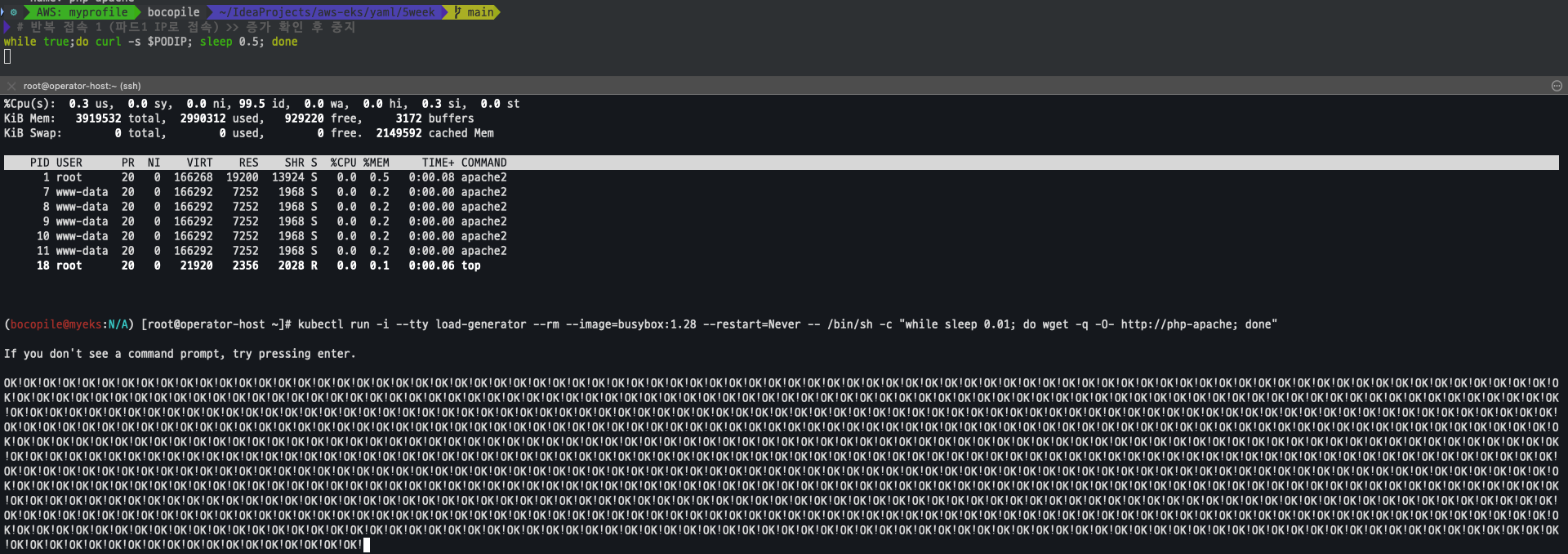

반복 접속

# 반복 접속 1 (파드1 IP로 접속) >> 증가 확인 후 중지

while true;do curl -s $PODIP; sleep 0.5; done

# 반복 접속 2 (서비스명 도메인으로 파드들 분산*접속) >> 증가 확인(몇개까지 증가되는가? 그 이유는?) 후 중지

kubectl run -i --tty load-generator --rm --image=busybox:1.28 --restart=Never -- /bin/sh -c "while sleep 0.01; do wget -q -O- http://php-apache; done"

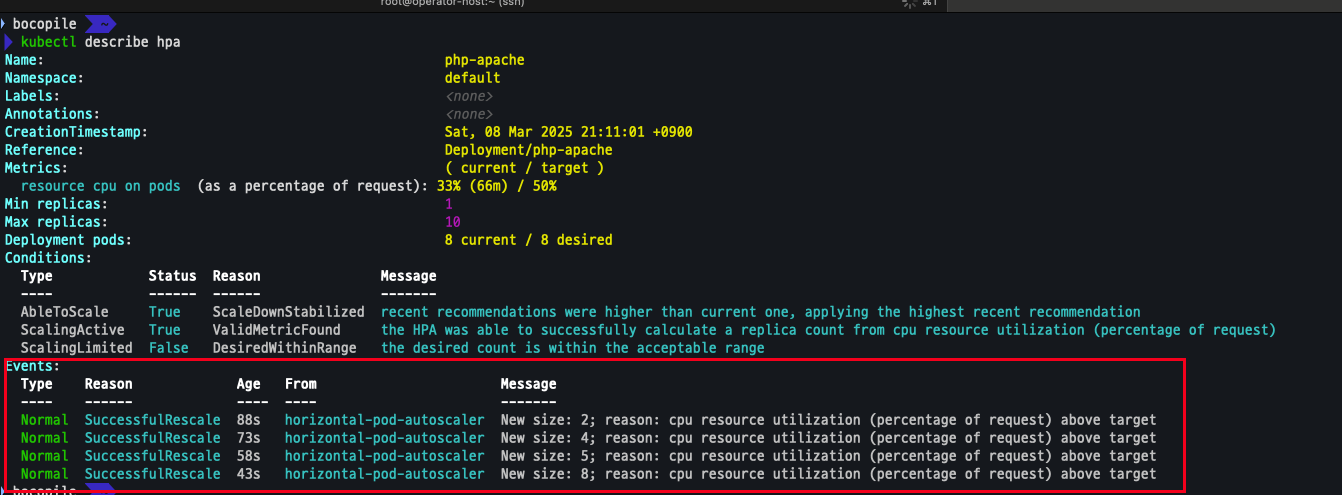

HPA Evnet 확인

- size 가 점차적으로 증가 되는 것을 확인

kubectl describe hpa

- 해당 지표는 그라파나에서도 확인이 됨

관련 오브젝트 제거

- 테스트가 완료되었다면 삭제를 진행한다.

kubectl delete deploy,svc,hpa,pod --all2. KEDA - Kubernetes based Event Driven Autoscaler

KEDA With Helm

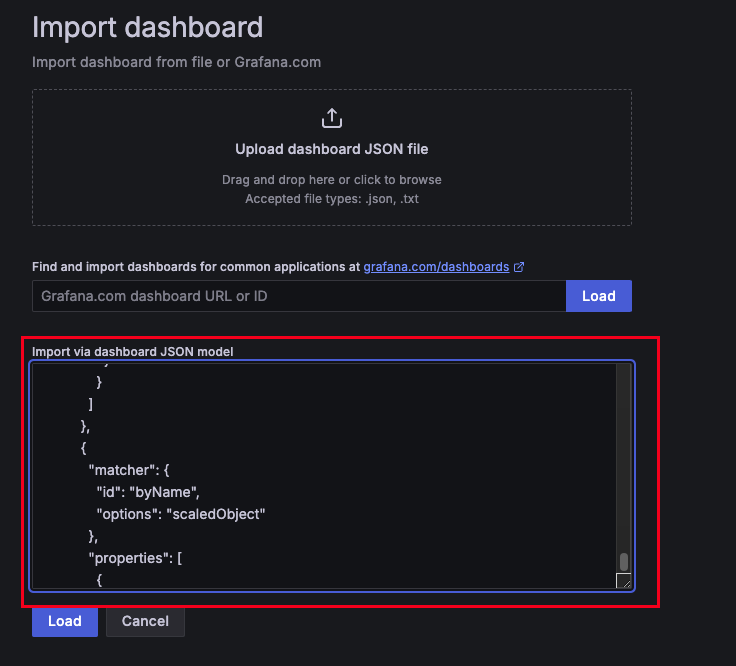

- 특정 이벤트 (cron 등) 기반의 파드 오트 스케일링

- KEDA 대시보드 import : : https://github.com/kedacore/keda/blob/main/config/grafana/keda-dashboard.json

- 위의 사이트에서 복사한 json 을그라파나에서 Import via dashboard JSON model 으로 진행

설치

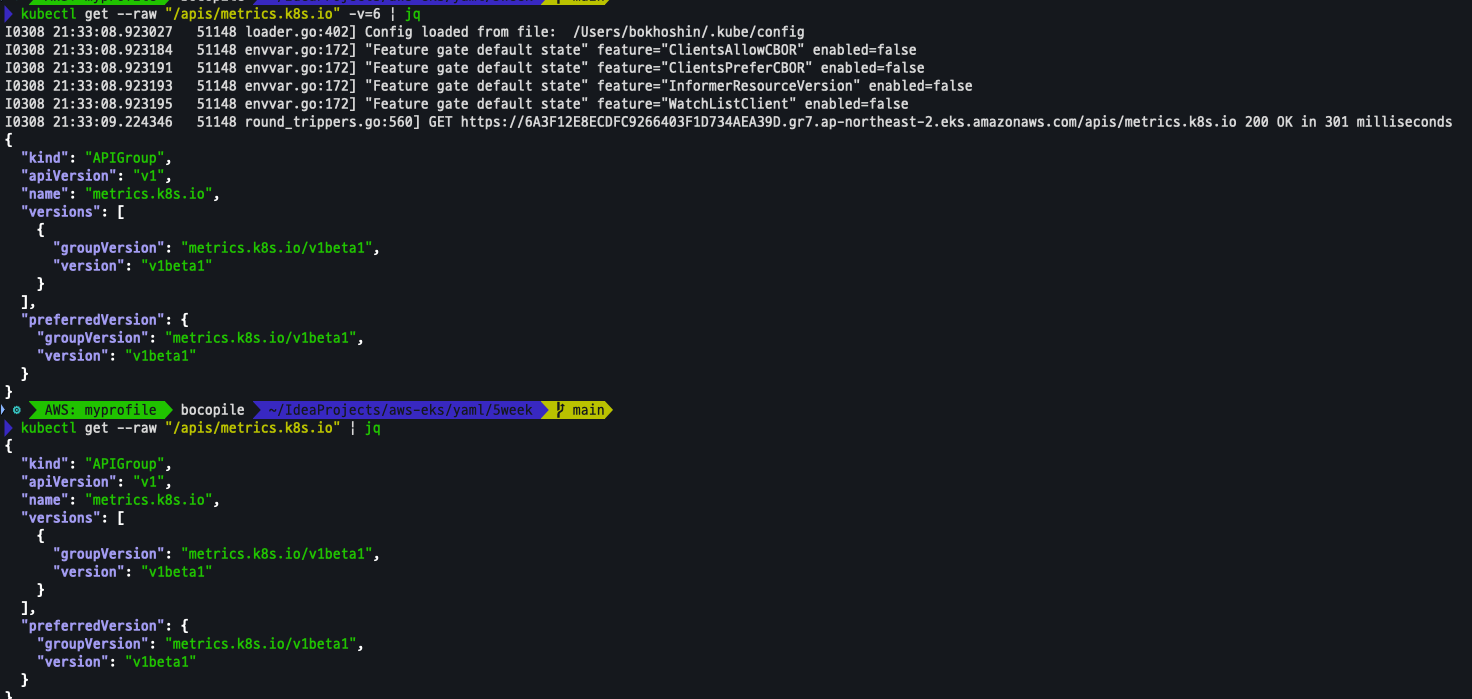

설치 전 기존 metrics-server 제공 Metris API 확인

kubectl get --raw "/apis/metrics.k8s.io" -v=6 | jq

kubectl get --raw "/apis/metrics.k8s.io" | jq

KEDA 설치

cat <<EOT > keda-values.yaml

metricsServer*

useHostNetwork: true

prometheus:

metricServer:

enabled: true

port: 9022

portName: metrics

path: /metrics

serviceMonitor:

# Enables ServiceMonitor creation for the Prometheus Operator

enabled: true

podMonitor:

# Enables PodMonitor creation for the Prometheus Operator

enabled: true

operator:

enabled: true

port: 8080

serviceMonitor:

# Enables ServiceMonitor creation for the Prometheus Operator

enabled: true

podMonitor:

# Enables PodMonitor creation for the Prometheus Operator

enabled: true

webhooks:

enabled: true

port: 8020

serviceMonitor:

# Enables ServiceMonitor creation for the Prometheus webhooks

enabled: true

EOT

helm repo add kedacore https://kedacore.github.io/charts

helm repo update

helm install keda kedacore/keda --version 2.16.0 --namespace keda --create-namespace -f keda-values.yaml

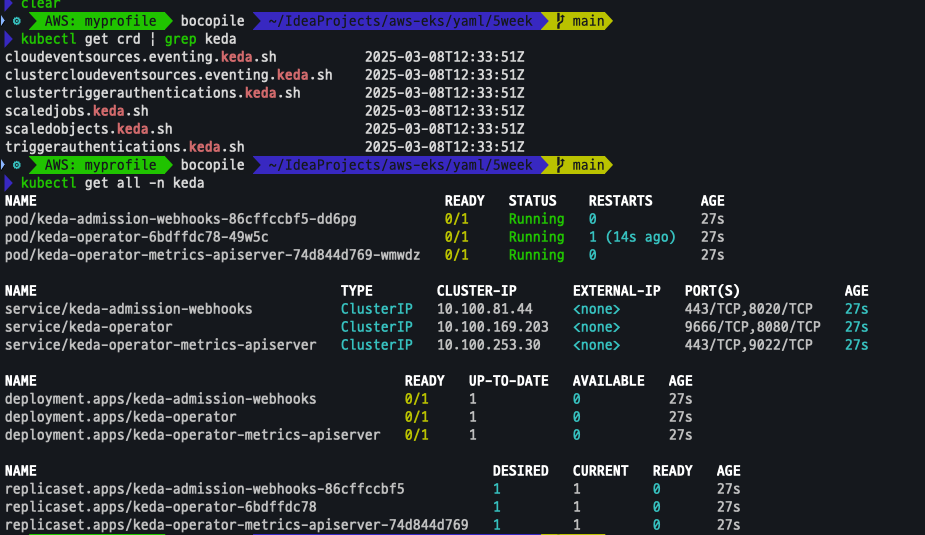

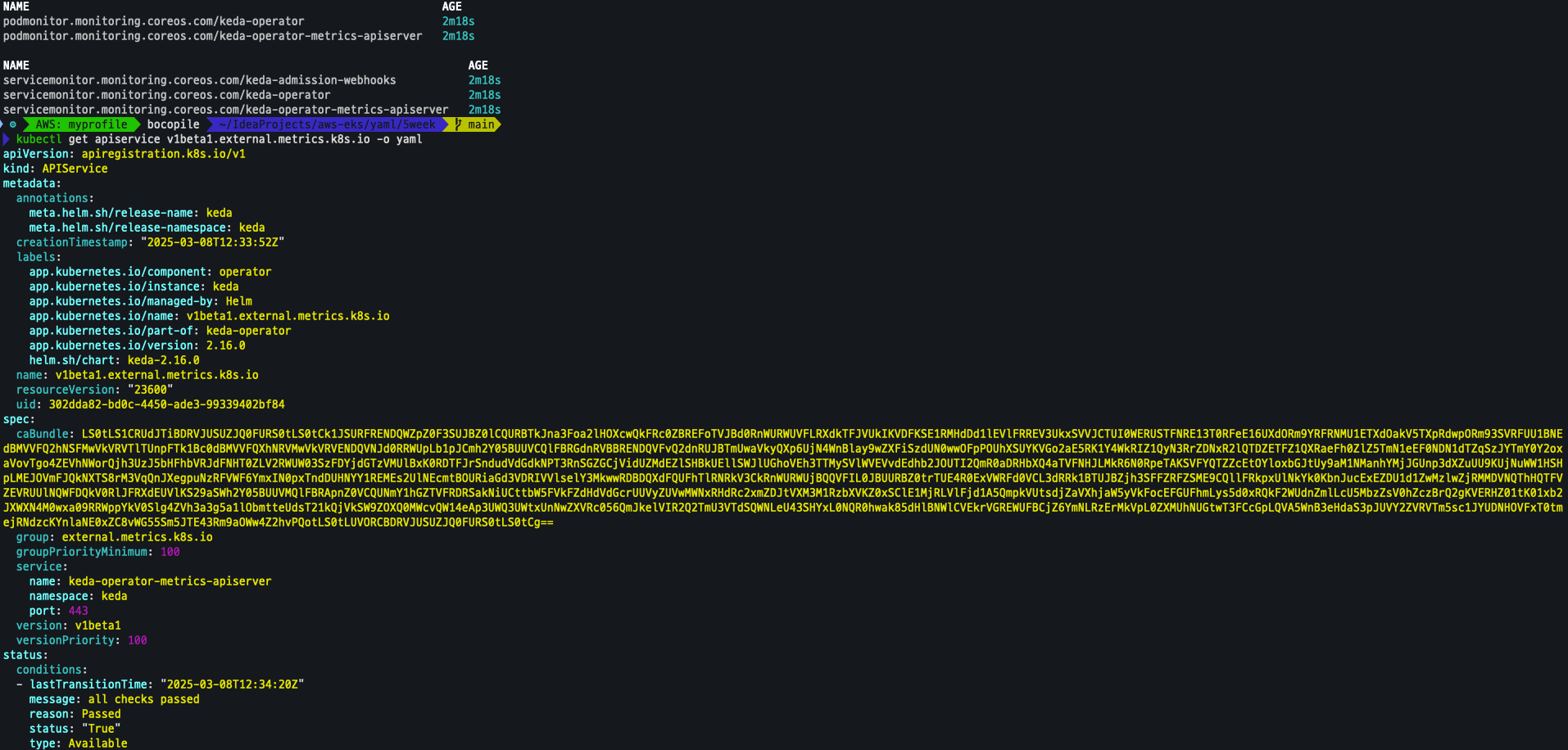

KEDA 설치 확인

kubectl get crd | grep keda

kubectl get all -n keda

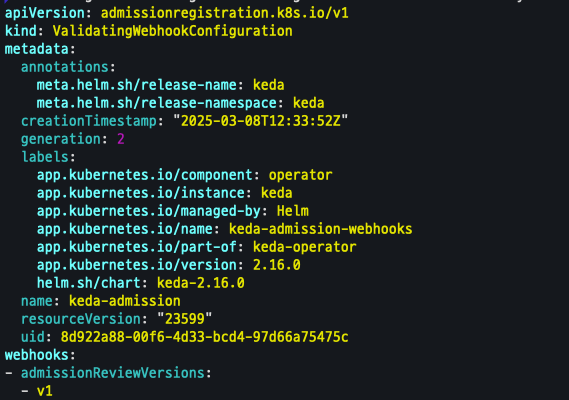

kubectl get validatingwebhookconfigurations keda-admission -o yaml

kubectl get podmonitor,servicemonitors -n keda

kubectl get apiservice v1beta1.external.metrics.k8s.io -o yaml

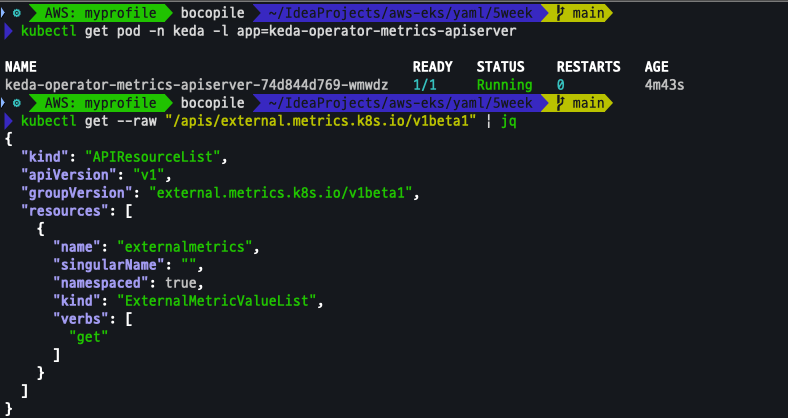

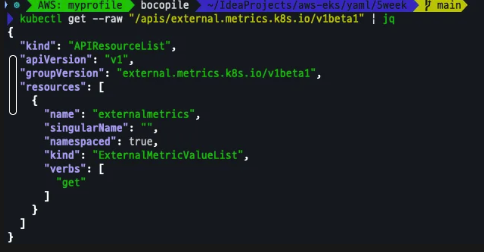

매트릭 노출

kubectl get pod -n keda -l app=keda-operator-metrics-apiserver

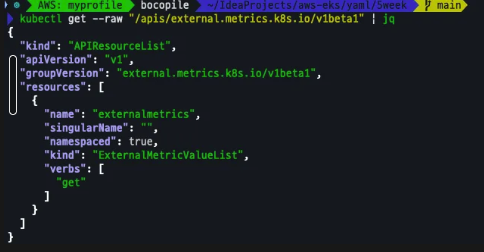

kubectl get --raw "/apis/external.metrics.k8s.io/v1beta1" | jq

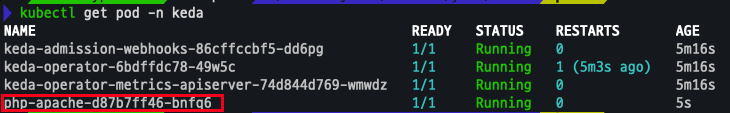

keda 네임스페이스에 디플로이먼트 생성

kubectl apply -f php-apache.yaml -n keda

kubectl get pod -n keda

ScaleObject 정책 생성

cat <<EOT > keda-cron.yaml

apiVersion: keda.sh/v1alpha1

kind: ScaledObject

metadata:

name: php-apache-cron-scaled

spec:

minReplicaCount: 0

maxReplicaCount: 2 # Specifies the maximum number of replicas to scale up to (defaults to 100).

pollingInterval: 30 # Specifies how often KEDA should check for scaling events

cooldownPeriod: 300 # Specifies the cool-down period in seconds after a scaling event

scaleTargetRef: # Identifies the Kubernetes deployment or other resource that should be scaled.

apiVersion: apps/v1

kind: Deployment

name: php-apache

triggers: # Defines the specific configuration for your chosen scaler, including any required parameters or settings

- type: cron

metadata:

timezone: Asia/Seoul

start: 00,15,30,45 * * * *

end: 05,20,35,50 * * * *

desiredReplicas: "1"

EOT

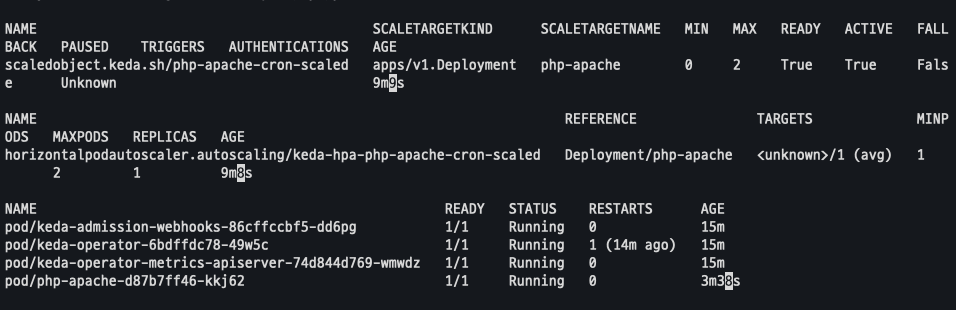

kubectl apply -f keda-cron.yaml -n keda설치 확인

kubectl get ScaledObject,hpa,pod -n keda

kubectl get hpa -o jsonpath="{.items[0].spec}" -n keda | jq

모니터링

watch -d 'kubectl get ScaledObject,hpa,pod -n keda'

kubectl get ScaledObject -w확인

kubectl get ScaledObject,hpa,pod -n keda

kubectl get hpa -o jsonpath="{.items[0].spec}" -n keda | jq

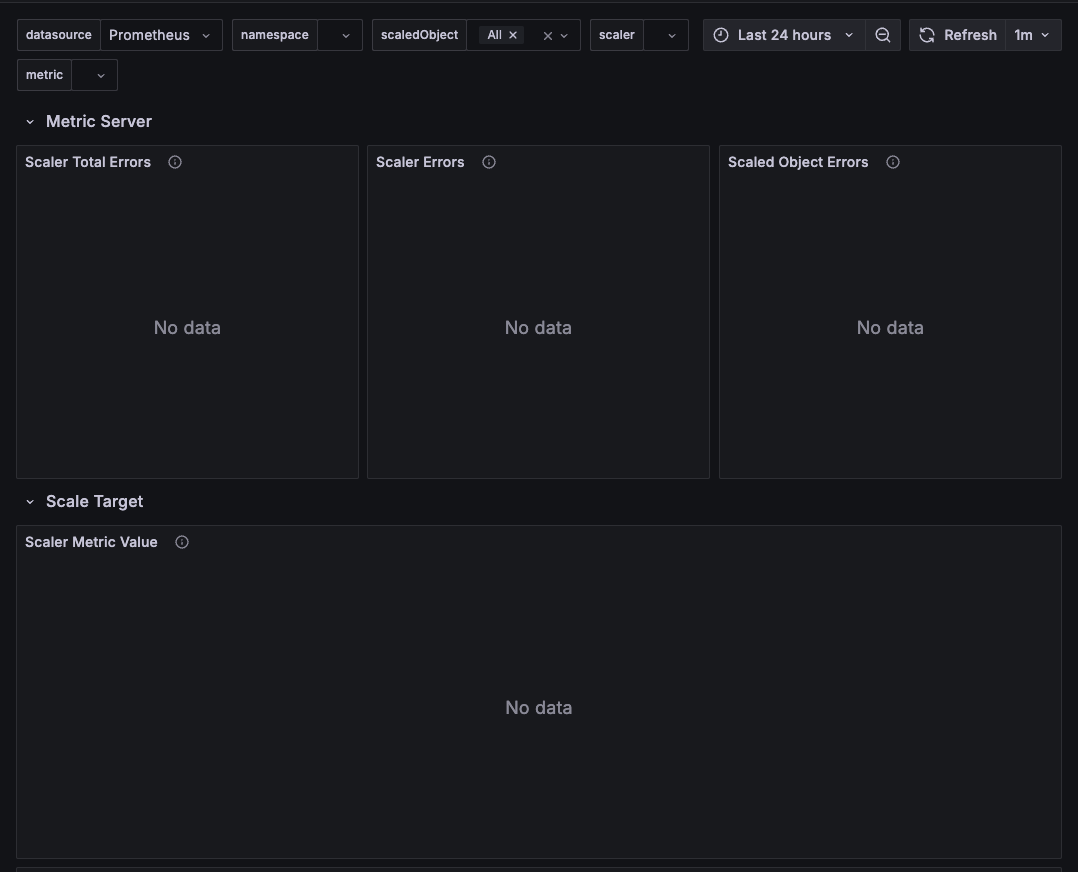

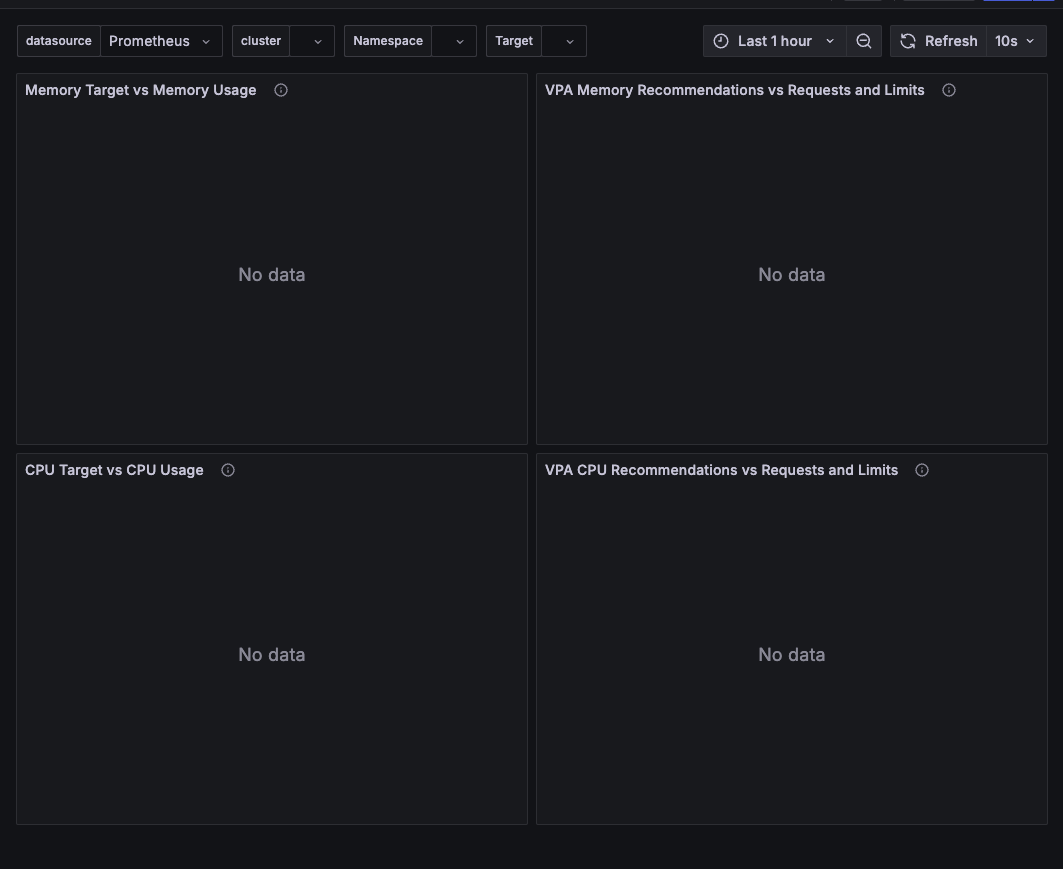

그라파나 확인

관련 오브젝트 제거

- 테스트가 완료되었다면 삭제를 진행한다.

kubectl delete ScaledObject -n keda php-apache-cron-scaled && kubectl delete deploy php-apache -n keda && helm uninstall keda -n keda

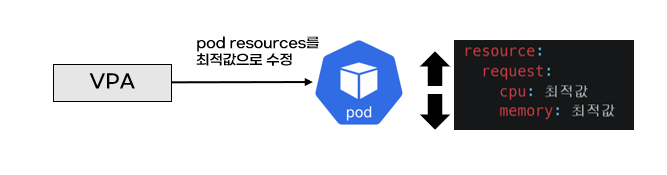

kubectl delete namespace keda3. VPA - Vertical Pod Autoscaler

pod자원을 최적값으로 수정하기 위해 pod를 재실행

최적값을 찾는 방식 : 기준값(파드가 동작하는데 필요한 최소한의 값)’ 결정

그라파나 대시보드 적용

- 14588 import 작업 진행

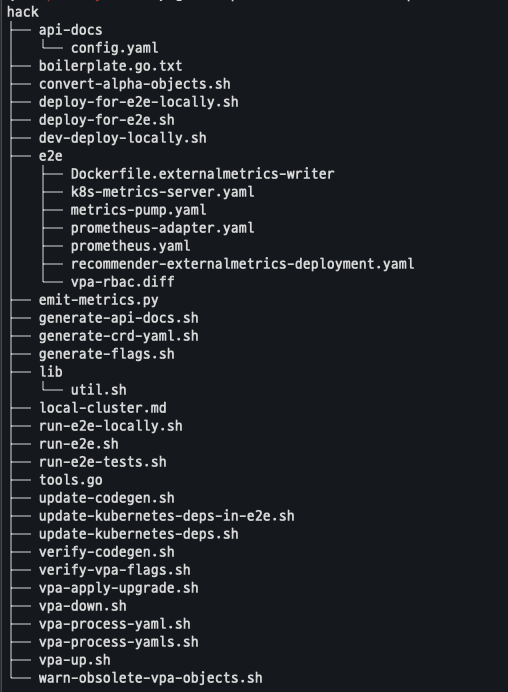

설치

VPA 설치

- EC2에 미리 설치 되어 있음

cd ~/autoscaler/vertical-pod-autoscaler/

tree hack

OpenSSL 버전 확인

- VPA 를 적용하기 위해선 openssl 1.1.1 이상의 버전이 필요함

openssl version

# 확인 결과

OpenSSL 1.0.2k-fips 26 Jan 2017OpenSSL 제거 및 설치

# 1.0 제거

yum remove openssl -y

# openssl 1.1.1 이상 버전 확인

yum install openssl11 -y

openssl11 version

# 확인 결과

OpenSSL 1.1.1zb 11 Feb 2025스크립트 파일 수정

sed -i 's/openssl/openssl11/g' ~/autoscaler/vertical-pod-autoscaler/pkg/admission-controller/gencerts.sh

git status

git config --global user.email "you@example.com"

git config --global user.name "Your Name"

git add .

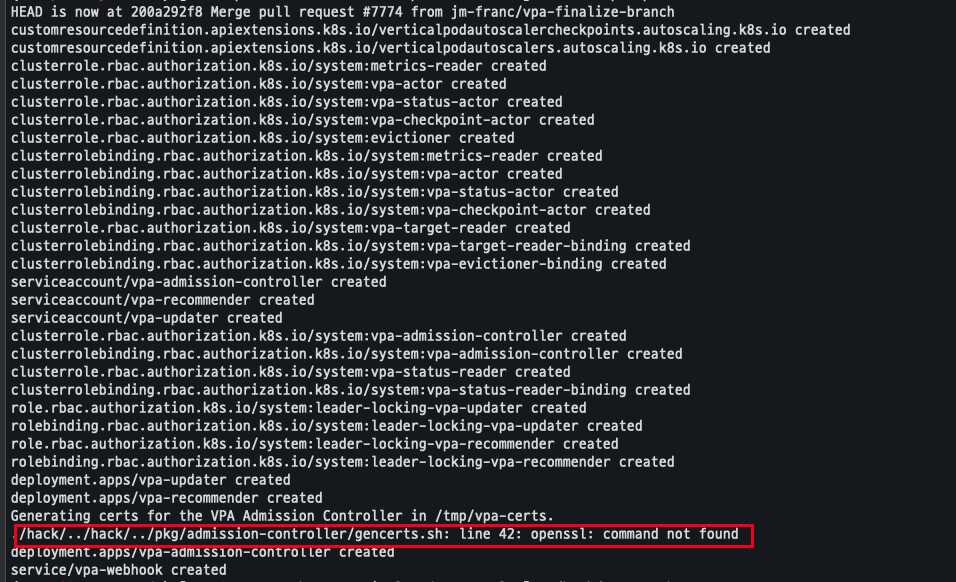

git commit -m "openssl version modify"VPA 적용

- 해당 스크립트 실행시 설치 되지 않는 것이 존재함

./hack/vpa-up.sh

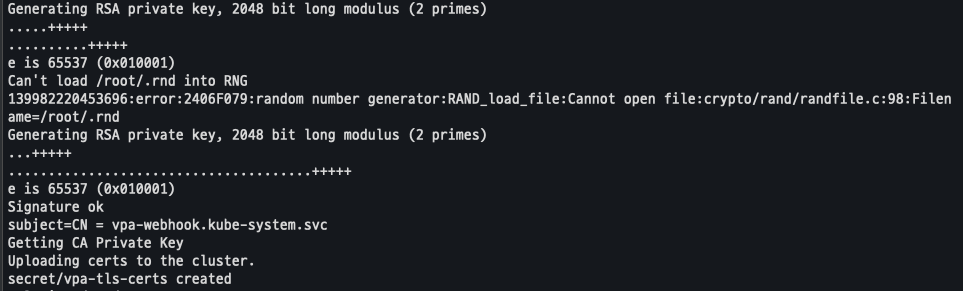

shell 파일 변경 및 재실행

sed -i 's/openssl/openssl11/g' ~/autoscaler/vertical-pod-autoscaler/pkg/admission-controller/gencerts.sh

./hack/vpa-up.sh

배포 확인

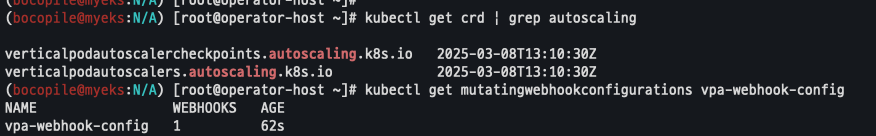

kubectl get crd | grep autoscaling

kubectl get mutatingwebhookconfigurations vpa-webhook-config

kubectl get mutatingwebhookconfigurations vpa-webhook-config -o json | jq

Pod 테스트 배포 진행

모니터링

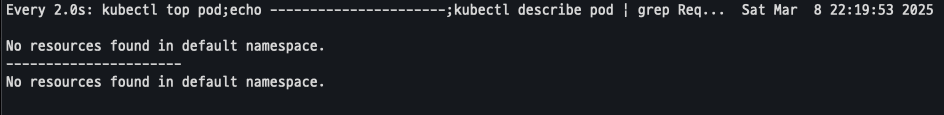

watch -d "kubectl top pod;echo "----------------------";kubectl describe pod | grep Requests: -A2"

배포 진행

cat examples/hamster.yaml

kubectl apply -f examples/hamster.yaml && kubectl get vpa -w배포 확인

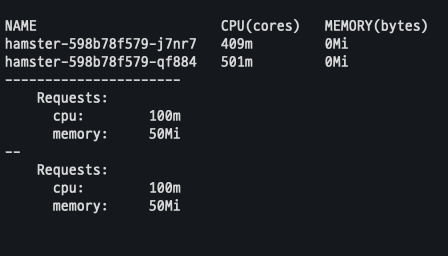

- 파드 리소스 Requests 확인

# 파드 리소스 Requestes 확인

kubectl describe pod | grep Requests: -A2

-

VPA에 의해 기존 파드 삭제 되고 신규 파드가 생성됨

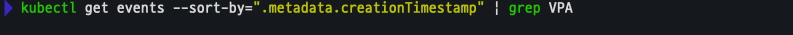

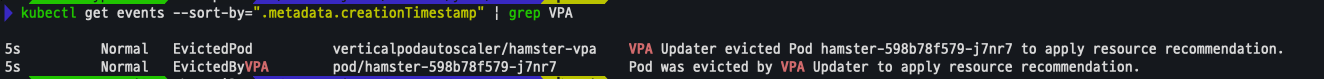

kubectl get events --sort-by=".metadata.creationTimestamp" | grep VPA- 기존

- 기존

- 변경

-

CPU Recommendation과 Requests and Limits 지표는 그라파나에서도 확인이 가능함

관련 오브젝트 제거

- 테스트가 완료되었다면 삭제를 진행한다.

kubectl delete -f examples/hamster.yaml && cd ~/autoscaler/vertical-pod-autoscaler/ && ./hack/vpa-down.sh4. CAS - Cluster Autoscaler

Kubernetes 클러스터의 크기를 자동으로 조정하여 모든 파드가 실행될 수 있도록 하고 불필요한 노드가 없도록 관리

출처 : https://catalog.us-east-1.prod.workshops.aws/workshops/9c0aa9ab-90a9-44a6-abe1-8dff360ae428/ko-KR/100-scaling/200-cluster-scaling

출처 : https://catalog.us-east-1.prod.workshops.aws/workshops/9c0aa9ab-90a9-44a6-abe1-8dff360ae428/ko-KR/100-scaling/200-cluster-scaling

- cluster-autoscaler 파드(디플로이먼트)를 배포하여 클러스터 자동 확장을 수행

- Pending 상태의 파드가 있을 경우, 워커 노드를 자동으로 스케일 아웃 진행

- 일정한 간격으로 노드 사용률을 확인하여 스케일 인/아웃을 조정

- AWS에서는 Auto Scaling Group(ASG)을 활용하여 Cluster Autoscaler를 적용

CAS 설정 진행

설정 전 확인 사항

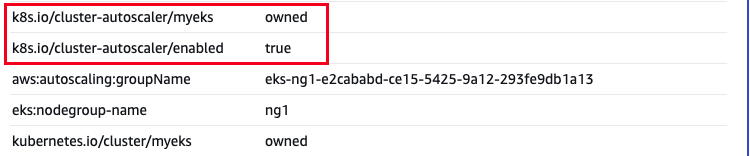

Tags 확인

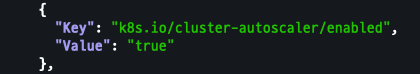

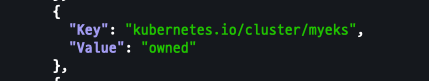

- k8s.io/cluster-autoscaler/enabled : true

- k8s.io/cluster-autoscaler/myeks : owned

aws ec2 describe-instances --filters Name=tag:Name,Values=$CLUSTER_NAME-ng1-Node --query "Reservations[*].Instances[*].Tags[*]" --output json | jq

-

해당 tags는 AWS Console에서도 확인이 가능

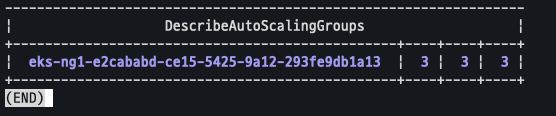

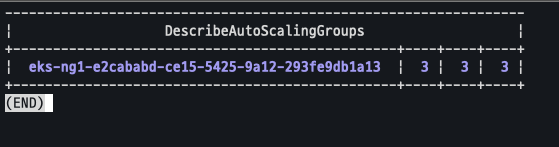

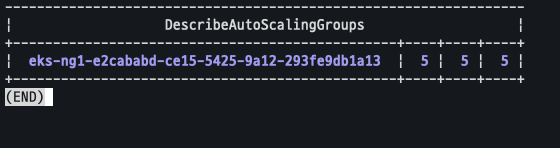

현재 autoscaling 정책 확인

aws autoscaling describe-auto-scaling-groups \

--query "AutoScalingGroups[? Tags[? (Key=='eks:cluster-name') && Value=='myeks']].[AutoScalingGroupName, MinSize, MaxSize,DesiredCapacity]" \

--output table

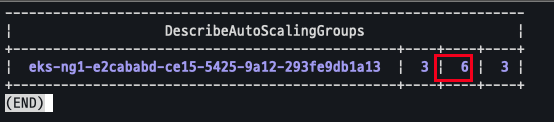

MaxSize 6개로 변경

export ASG_NAME=$(aws autoscaling describe-auto-scaling-groups --query "AutoScalingGroups[? Tags[? (Key=='eks:cluster-name') && Value=='myeks']].AutoScalingGroupName" --output text)

aws autoscaling update-auto-scaling-group --auto-scaling-group-name ${ASG_NAME} --min-size 3 --desired-capacity 3 --max-size 6

변경 후 확인

- maxsize가 6으로 변경됨을 확인

aws autoscaling describe-auto-scaling-groups \

--query "AutoScalingGroups[? Tags[? (Key=='eks:cluster-name') && Value=='myeks']].[AutoScalingGroupName, MinSize, MaxSize,DesiredCapacity]" \

--output table

CAS 배포

yaml 파일 다운로드

curl -s -O https://raw.githubusercontent.com/kubernetes/autoscaler/master/cluster-autoscaler/cloudprovider/aws/examples/cluster-autoscaler-autodiscover.yaml파일 확인

yaml 파일을 분석하다 보면 ‘’ 가 존재함을 확인

```bash

cat cluster-autoscaler-autodiscover.yaml

```

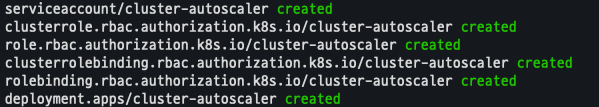

파일 수정 및 배포

sed -i -e "s|<YOUR CLUSTER NAME>|$CLUSTER_NAME|g" cluster-autoscaler-autodiscover.yaml

kubectl apply -f cluster-autoscaler-autodiscover.yaml

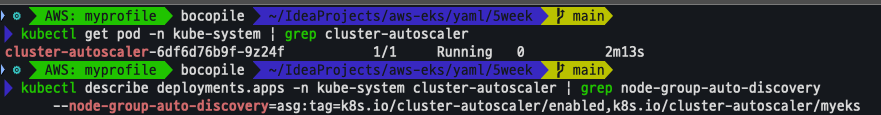

배포 확인

kubectl get pod -n kube-system | grep cluster-autoscaler

kubectl describe deployments.apps -n kube-system cluster-autoscaler

kubectl describe deployments.apps -n kube-system cluster-autoscaler | grep node-group-auto-discovery

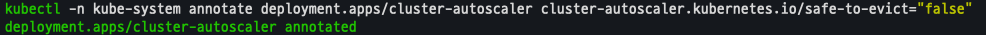

추가 옵션

- cluster-autoscaler 파드가 동작하는 워커 노드가 퇴출(evict) 되지 않게 설정

Scale A Cluster With CA

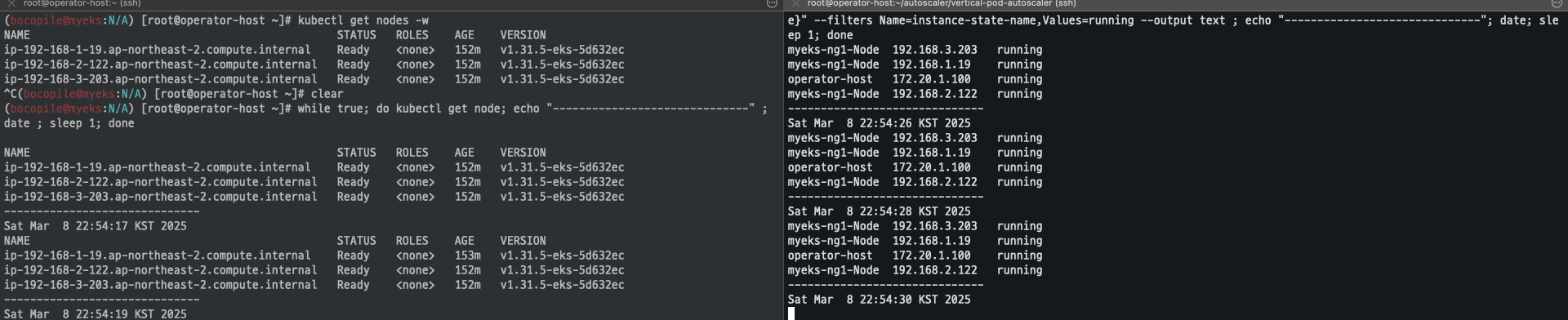

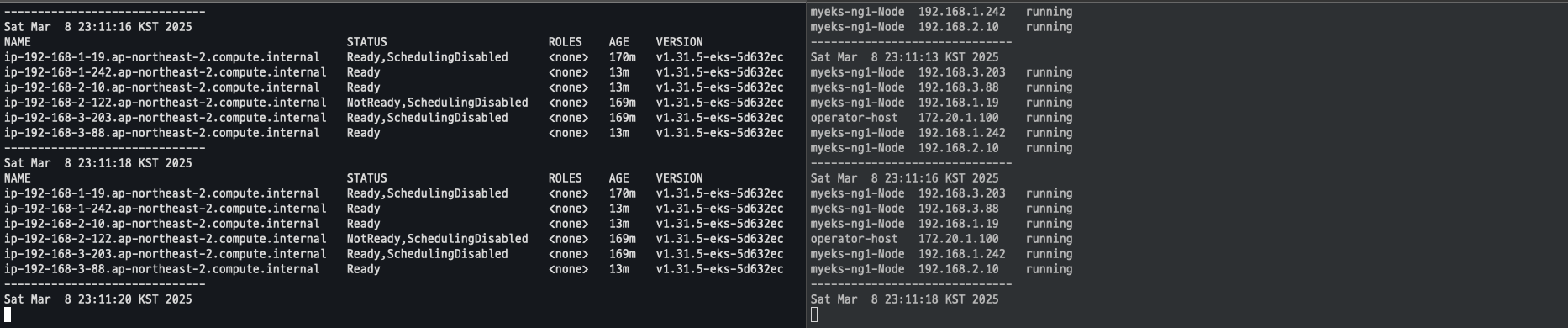

모니터링

while true; do kubectl get node; echo "------------------------------" ; date ; sleep 1; done

while true; do aws ec2 describe-instances --query "Reservations[*].Instances[*].{PrivateIPAdd:PrivateIpAddress,InstanceName:Tags[?Key=='Name']|[0].Value,Status:State.Name}" --filters Name=instance-state-name,Values=running --output text ; echo "------------------------------"; date; sleep 1; done

Sample 애플리케이션 배포

cat << EOF > nginx.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: nginx-to-scaleout

spec:

replicas: 1

selector:

matchLabels:

app: nginx

template:

metadata:

labels:

service: nginx

app: nginx

spec:

containers:

- image: nginx

name: nginx-to-scaleout

resources:

limits:

cpu: 500m

memory: 512Mi

requests:

cpu: 500m

memory: 512Mi

EOF

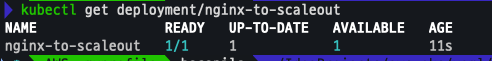

kubectl apply -f nginx.yaml

kubectl get deployment/nginx-to-scaleout

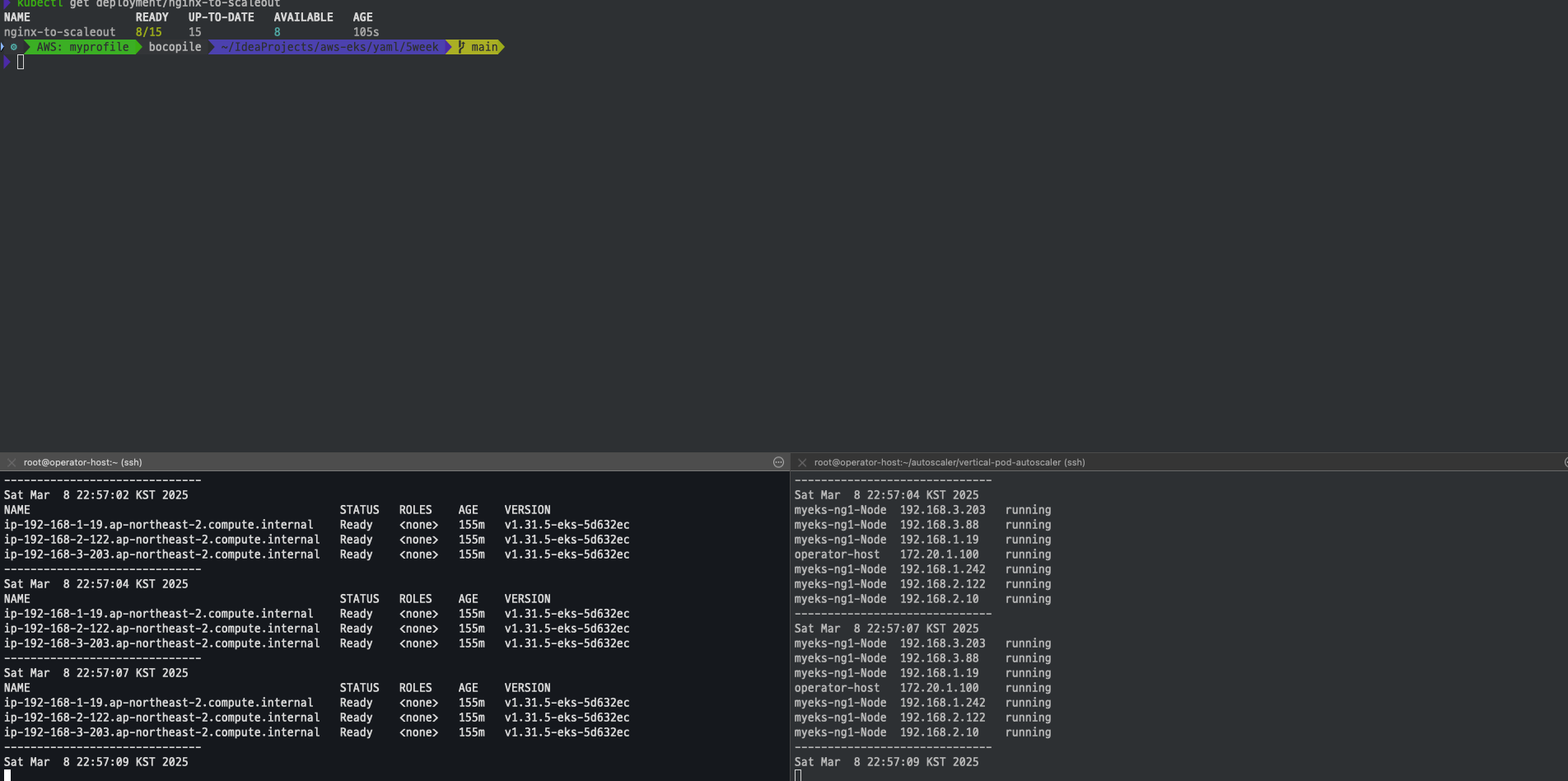

Scale 15로 변경

kubectl scale --replicas=15 deployment/nginx-to-scaleout && date

확인

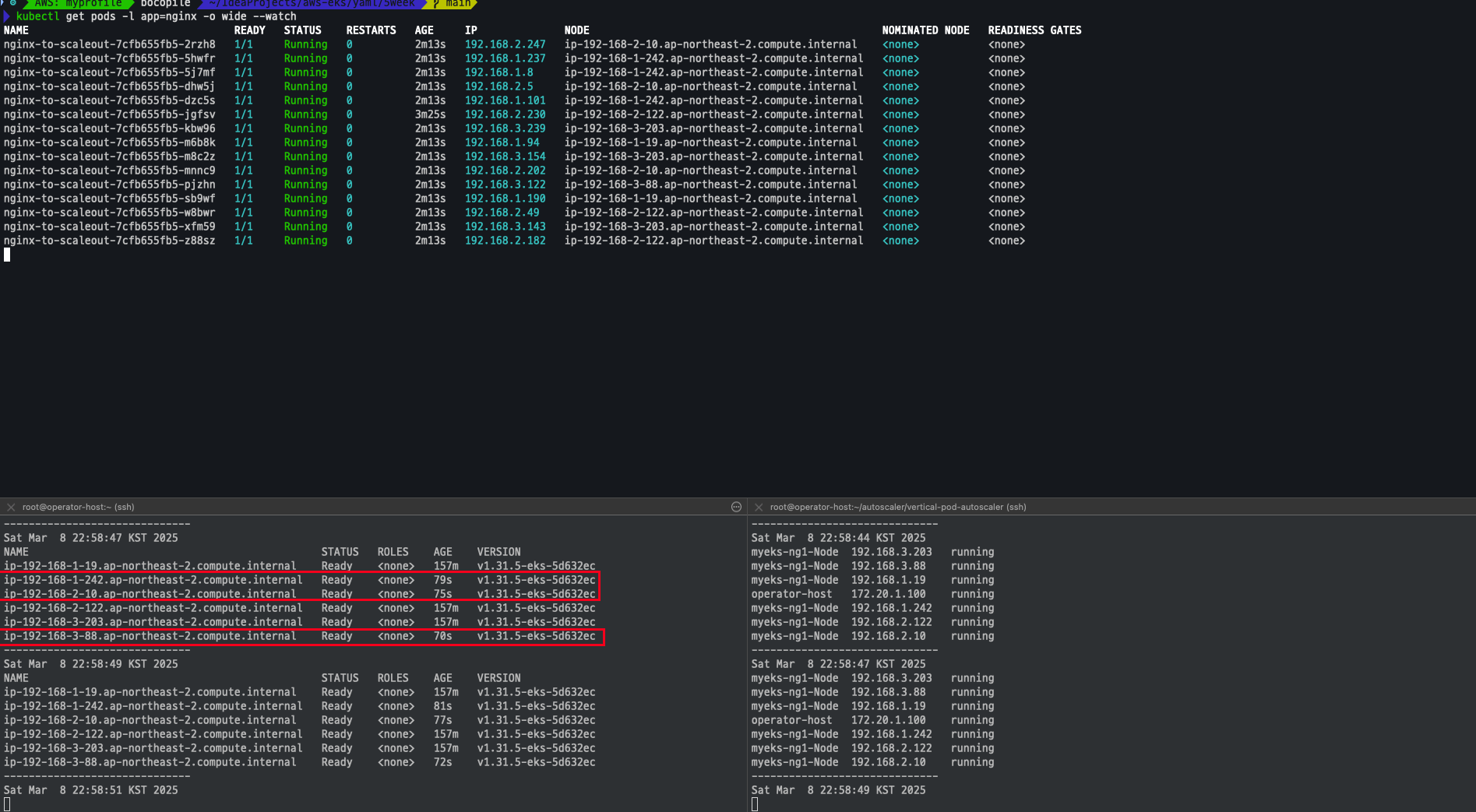

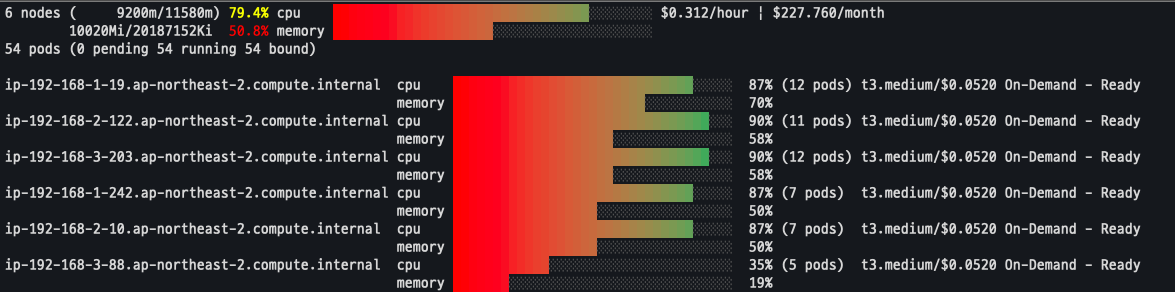

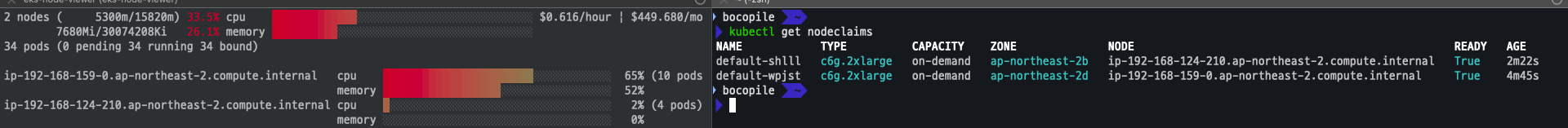

- Node 리소스가 부족함에 따라서 3개의 Node가 추가 되고 파드가 생성 되는 것을 확인

kubectl get pods -l app=nginx -o wide --watch

kubectl -n kube-system logs -f deployment/cluster-autoscaler

노드 자동 증가 확인

eks-node-viewer --resources cpu,memory

디플로이먼트 삭제 진행

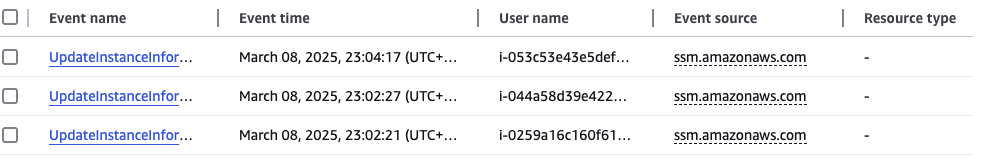

kubectl delete -f nginx.yaml && dateCloudTrail에 CreateFleet 이벤트 확인

Node 자동으로 생성된것은 CloudTrail에서도 확인이 가능

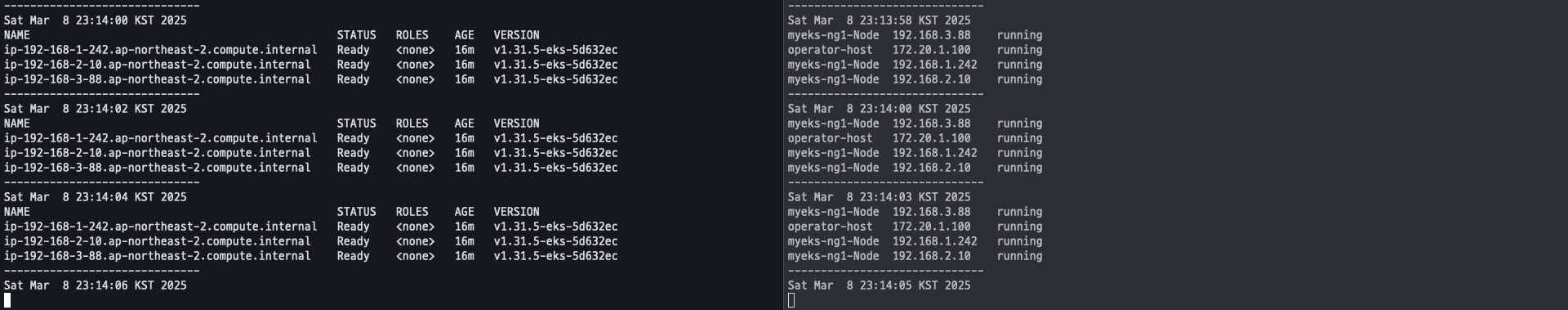

삭제

디플로이먼트 삭제

kubectl delete -f nginx.yamlNode Size 변경

aws autoscaling update-auto-scaling-group --auto-scaling-group-name ${ASG_NAME} --min-size 3 --desired-capacity 3 --max-size 3

aws autoscaling describe-auto-scaling-groups --query "AutoScalingGroups[? Tags[? (Key=='eks:cluster-name') && Value=='myeks']].[AutoScalingGroupName, MinSize, MaxSize,DesiredCapacity]" --output table

-

삭제 까지 약 10분 정도 소요됨

-

삭제 진행중

-

- 삭제 완료

CA 삭제

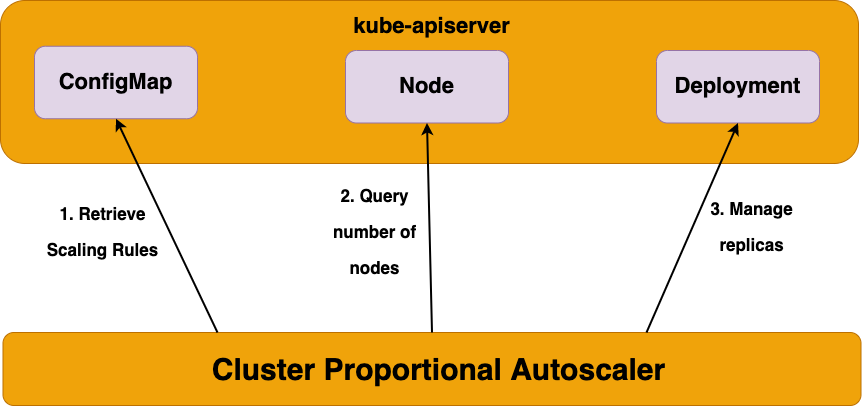

kubectl delete -f cluster-autoscaler-autodiscover.yaml5. CPA - Cluster Proportional Autoscaler

노드수 증가에 비례하여 성능 처리가 필요한 애플리케이션 (파드)를 수평으로 자동확장하는 기능

배포 진행

Helm Repo 추가 및 install 진행

helm repo add cluster-proportional-autoscaler https://kubernetes-sigs.github.io/cluster-proportional-autoscaler

# CPA규칙을 설정하고 helm차트를 릴리즈 필요

helm upgrade --install cluster-proportional-autoscaler cluster-proportional-autoscaler/cluster-proportional-autoscalerNginx 디플로이먼트 배포

cat <<EOT > cpa-nginx.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

name: nginx-deployment

spec:

replicas: 1

selector:

matchLabels:

app: nginx

template:

metadata:

labels:

app: nginx

spec:

containers:

- name: nginx

image: nginx:latest

resources:

limits:

cpu: "100m"

memory: "64Mi"

requests:

cpu: "100m"

memory: "64Mi"

ports:

- containerPort: 80

EOT

kubectl apply -f cpa-nginx.yamlCPA 규칙 설정

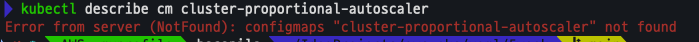

- 현재 CPA 규칙이 적용되지 않음을 확인

cat <<EOF > cpa-values.yam

config:

ladder:

nodesToReplicas:

- [1, 1]

- [2, 2]

- [3, 3]

- [4, 3]

- [5, 5]

options:

namespace: default

target: "deployment/nginx-deployment"

EOF

kubectl describe cm cluster-proportional-autoscaler

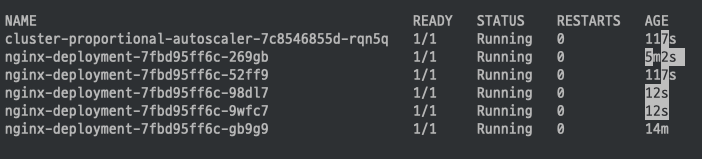

모니터링

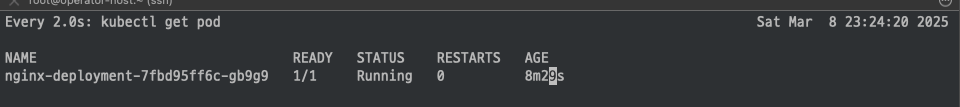

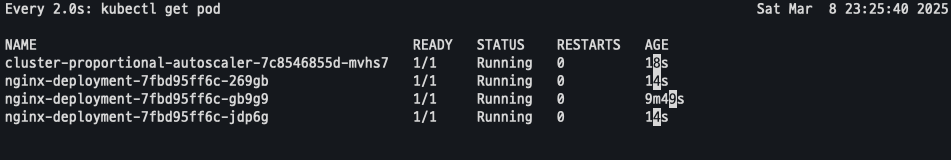

watch -d kubectl get pod

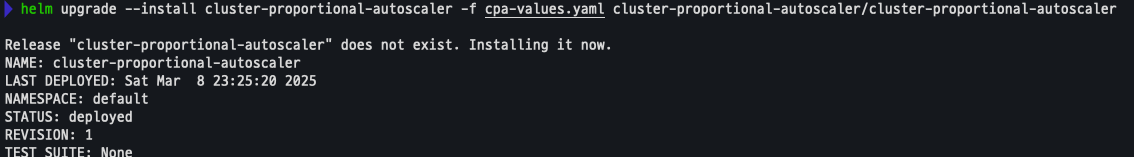

helm 업그레이드

helm upgrade --install cluster-proportional-autoscaler -f cpa-values.yaml cluster-proportional-autoscaler/cluster-proportional-autoscaler

모니터링 결과CPA 및 Nginx pod 2개 추가 된것을 확인 할수 있다.

Node 5개로 증가

export ASG_NAME=$(aws autoscaling describe-auto-scaling-groups --query "AutoScalingGroups[? Tags[? (Key=='eks:cluster-name') && Value=='myeks']].AutoScalingGroupName" --output text)

aws autoscaling update-auto-scaling-group --auto-scaling-group-name ${ASG_NAME} --min-size 5 --desired-capacity 5 --max-size 5

aws autoscaling describe-auto-scaling-groups --query "AutoScalingGroups[? Tags[? (Key=='eks:cluster-name') && Value=='myeks']].[AutoScalingGroupName, MinSize, MaxSize,DesiredCapacity]" --output table

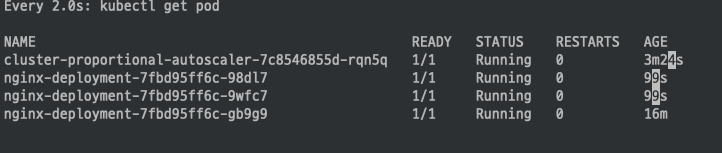

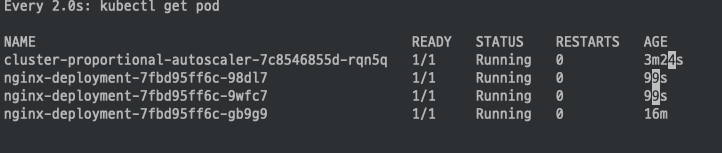

Node가 증가 됨에 따라 Nginx pod도 5개로 변경 된것을 확인

Node 4개로 축소

aws autoscaling update-auto-scaling-group --auto-scaling-group-name ${ASG_NAME} --min-size 4 --desired-capacity 4 --max-size 4

aws autoscaling describe-auto-scaling-groups --query "AutoScalingGroups[? Tags[? (Key=='eks:cluster-name') && Value=='myeks']].[AutoScalingGroupName, MinSize, MaxSize,DesiredCapacity]" --output table

Node개 4개로 축소 됨에 따라 Nginx Pod도 4개로 변경 된것을 확인

삭제

helm uninstall cluster-proportional-autoscaler && kubectl delete -f cpa-nginx.yaml6.Karpenter

kubernetes 클러스터에서 노드를 자동으로 프로비저닝하고 관리하기 위한 오픈 소스 도구.

특징

- 지능형의 동적인 인스턴스 유형 선택 - Spot, AWS Graviton 등

- 자동 워크로드 Consolidation 기능

- 일관성 있는 더 빠른 노드 구동시간을 통해 시간/비용 낭비 최소화

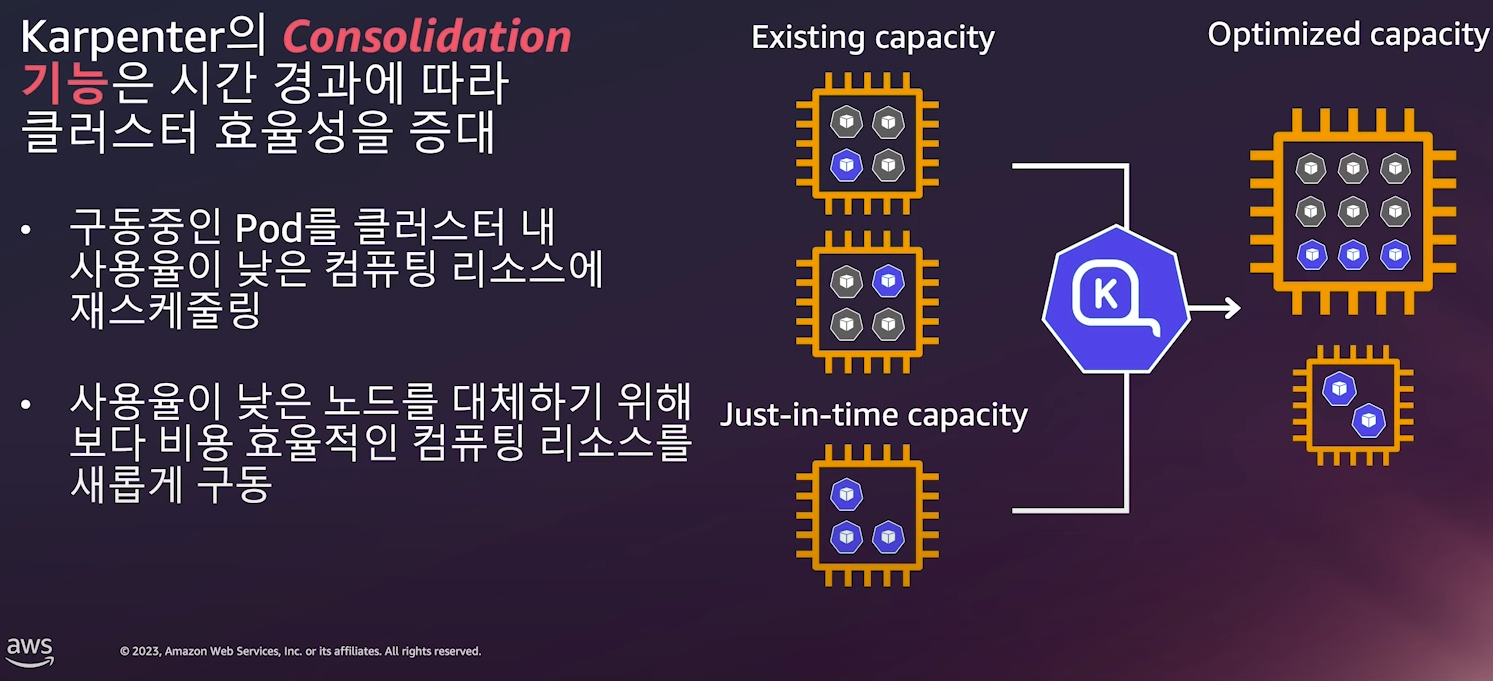

동작

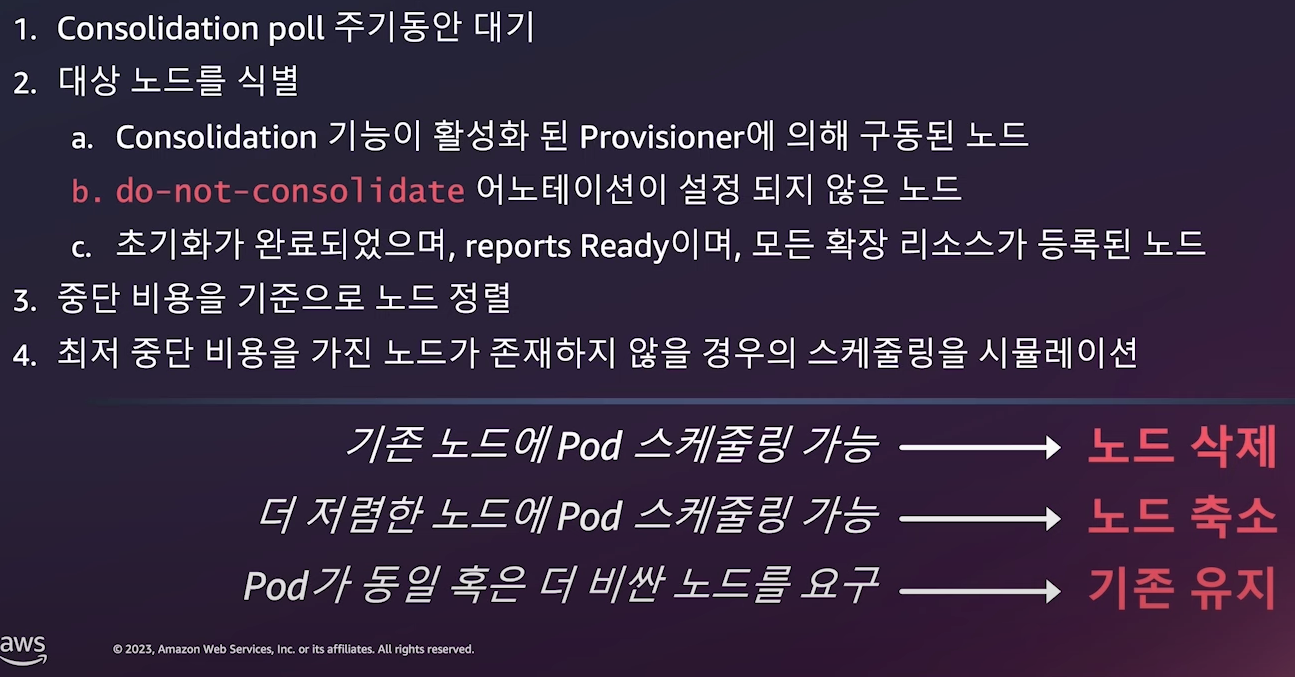

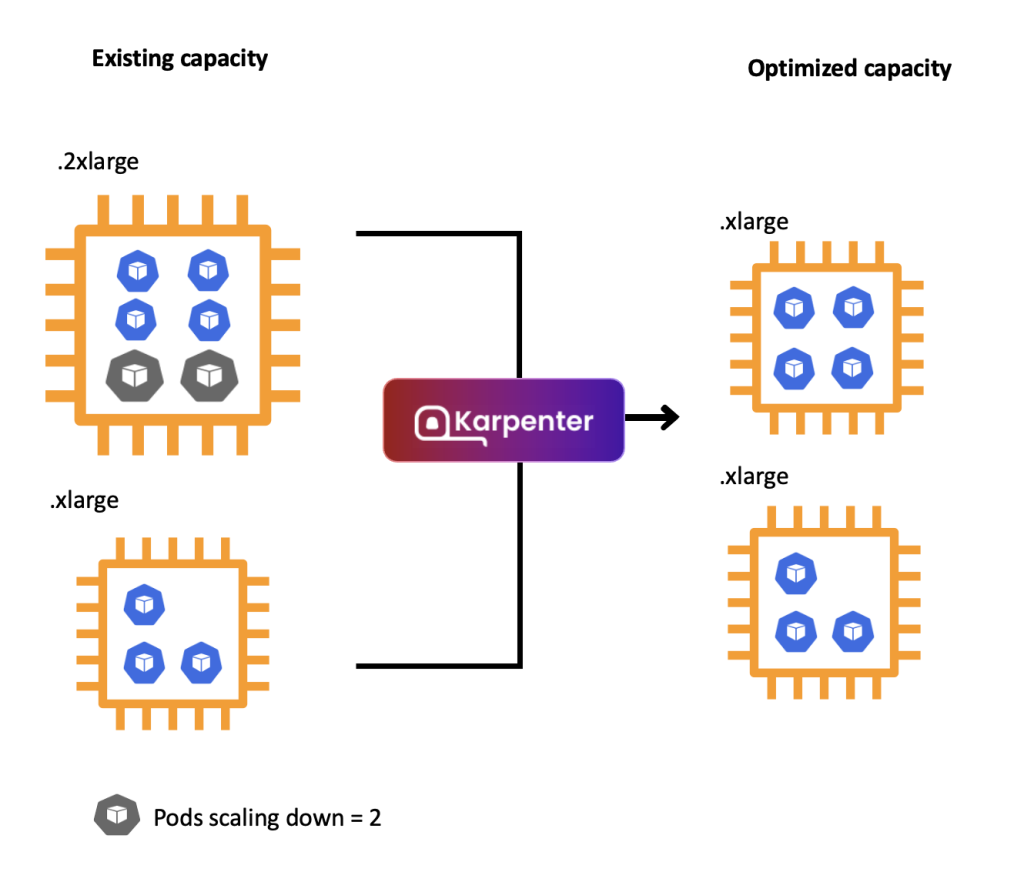

Consolidation

Consolidation 동작 방식

기본 환경 설치

변수 선언

# 변수 설정

export KARPENTER_NAMESPACE="kube-system"

export KARPENTER_VERSION="1.2.1"

export K8S_VERSION="1.32"

export AWS_PARTITION="aws" # if you are not using standard partitions, you may need to configure to aws-cn / aws-us-gov

export CLUSTER_NAME="bocopile-karpenter-demo" # ${USER}-karpenter-demo

export AWS_DEFAULT_REGION="ap-northeast-2"

export AWS_ACCOUNT_ID="$(aws sts get-caller-identity --query Account --output text)"

export TEMPOUT="$(mktemp)"

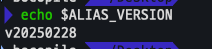

export ALIAS_VERSION="$(aws ssm get-parameter --name "/aws/service/eks/optimized-ami/${K8S_VERSION}/amazon-linux-2023/x86_64/standard/recommended/image_id" --query Parameter.Value | xargs aws ec2 describe-images --query 'Images[0].Name' --image-ids | sed -r 's/^.*(v[[:digit:]]+).*$/\1/')"

# 확인

echo "${KARPENTER_NAMESPACE}" "${KARPENTER_VERSION}" "${K8S_VERSION}" "${CLUSTER_NAME}" "${AWS_DEFAULT_REGION}" "${AWS_ACCOUNT_ID}" "${TEMPOUT}" "${ALIAS_VERSION}"

Cluster 생성

CloudFormation 스택으로 IAM Policy/Role, SQS, Event/Rule 생성

## IAM Policy : KarpenterControllerPolicy-gasida-karpenter-demo

## IAM Role : KarpenterNodeRole-gasida-karpenter-demo

curl -fsSL https://raw.githubusercontent.com/aws/karpenter-provider-aws/v"${KARPENTER_VERSION}"/website/content/en/preview/getting-started/getting-started-with-karpenter/cloudformation.yaml > "${TEMPOUT}" \

&& aws cloudformation deploy \

--stack-name "Karpenter-${CLUSTER_NAME}" \

--template-file "${TEMPOUT}" \

--capabilities CAPABILITY_NAMED_IAM \

--parameter-overrides "ClusterName=${CLUSTER_NAME}"

cluster 생성

eksctl create cluster -f - <<EOF

---

apiVersion: eksctl.io/v1alpha5

kind: ClusterConfig

metadata:

name: ${CLUSTER_NAME}

region: ${AWS_DEFAULT_REGION}

version: "${K8S_VERSION}"

tags:

karpenter.sh/discovery: ${CLUSTER_NAME}

iam:

withOIDC: true

podIdentityAssociations:

- namespace: "${KARPENTER_NAMESPACE}"

serviceAccountName: karpenter

roleName: ${CLUSTER_NAME}-karpenter

permissionPolicyARNs:

- arn:${AWS_PARTITION}:iam::${AWS_ACCOUNT_ID}:policy/KarpenterControllerPolicy-${CLUSTER_NAME}

iamIdentityMappings:

- arn: "arn:${AWS_PARTITION}:iam::${AWS_ACCOUNT_ID}:role/KarpenterNodeRole-${CLUSTER_NAME}"

username: system:node:{{EC2PrivateDNSName}}

groups:

- system:bootstrappers

- system:nodes

## If you intend to run Windows workloads, the kube-proxy group should be specified.

# For more information, see https://github.com/aws/karpenter/issues/5099.

# - eks:kube-proxy-windows

managedNodeGroups:

- instanceType: m5.large

amiFamily: AmazonLinux2023

name: ${CLUSTER_NAME}-ng

desiredCapacity: 2

minSize: 1

maxSize: 10

iam:

withAddonPolicies:

externalDNS: true

addons:

- name: eks-pod-identity-agent

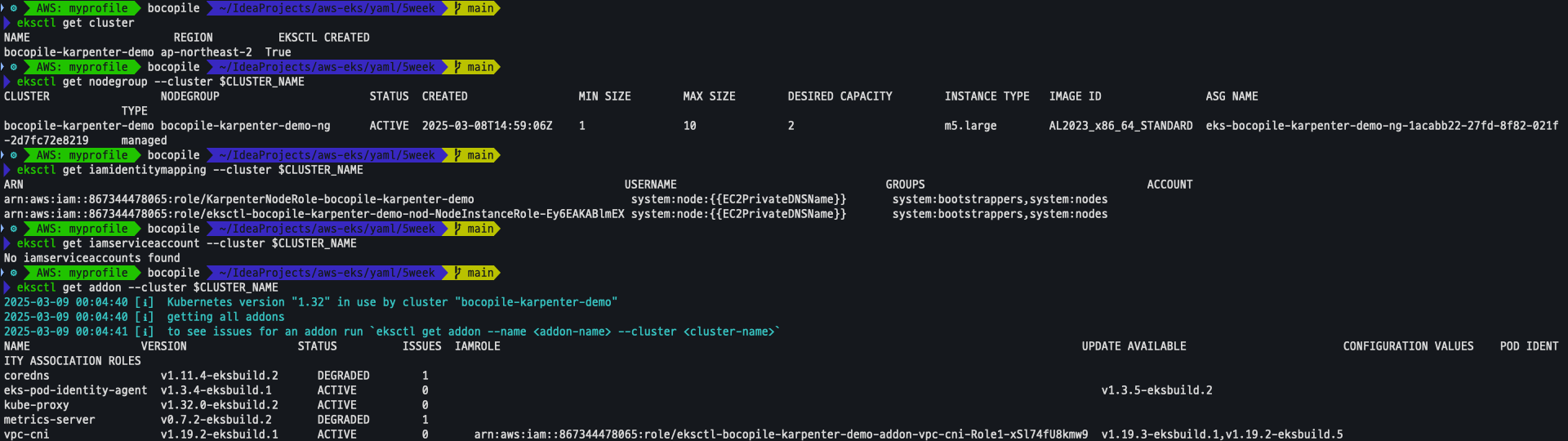

EOFEKS 배포 확인

eksctl get cluster

eksctl get nodegroup --cluster $CLUSTER_NAME

eksctl get iamidentitymapping --cluster $CLUSTER_NAME

eksctl get iamserviceaccount --cluster $CLUSTER_NAME

eksctl get addon --cluster $CLUSTER_NAME

기타 설정 확인

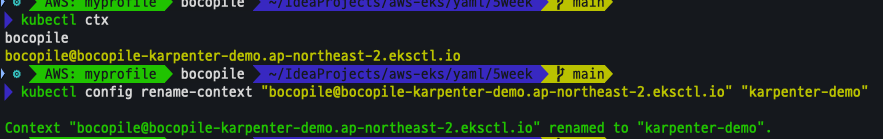

kubectl ctx

kubectl config rename-context "<각자 자신의 IAM User>@<자신의 Nickname>-karpenter-demo.ap-northeast-2.eksctl.io" "karpenter-demo"

kubectl config rename-context "bocopile@bocopile-karpenter-demo.ap-northeast-2.eksctl.io" "karpenter-demo"

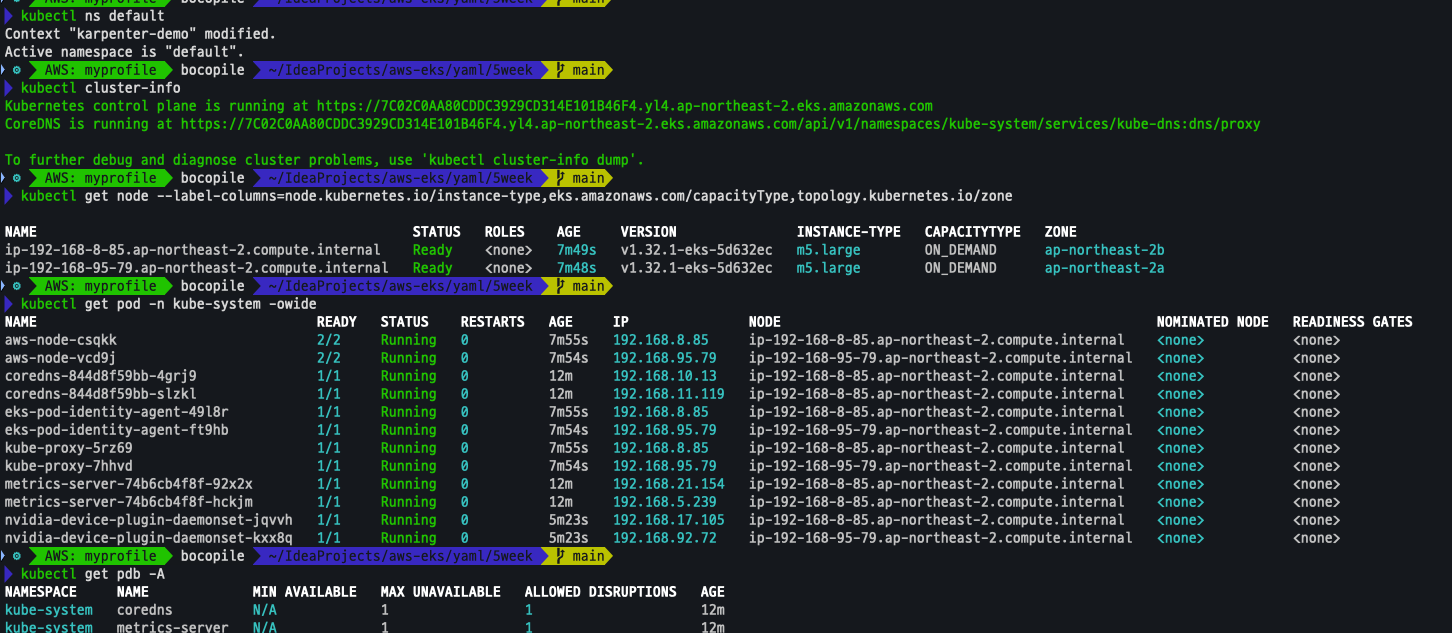

k8s 확인

kubectl ns default

kubectl cluster-info

kubectl get node --label-columns=node.kubernetes.io/instance-type,eks.amazonaws.com/capacityType,topology.kubernetes.io/zone

kubectl get pod -n kube-system -owide

kubectl get pdb -A

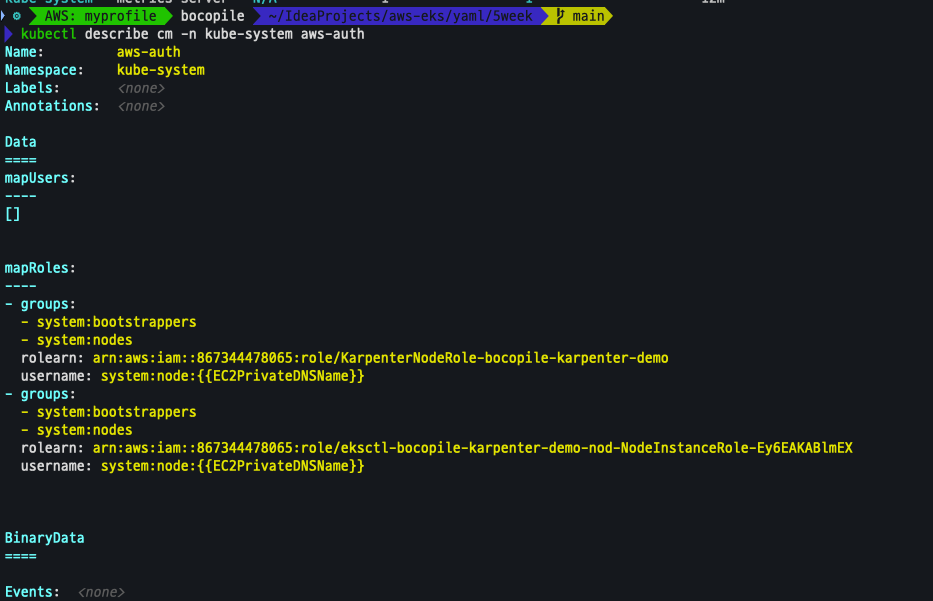

kubectl describe cm -n kube-system aws-auth

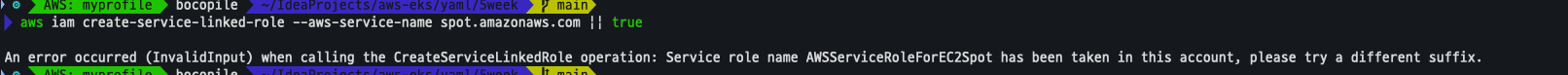

EC2 Spot Fleet의 service-linked-role 생성 확인

-

생성되지 않음을 확인

aws iam create-service-linked-role --aws-service-name spot.amazonaws.com || true

Install Karpenter

- 기존 로그인 되어 있는 helm 로그아웃

helm registry logout public.ecr.aws

- Karpenter 변수 선언

export CLUSTER_ENDPOINT="$(aws eks describe-cluster --name "${CLUSTER_NAME}" --query "cluster.endpoint" --output text)" export KARPENTER_IAM_ROLE_ARN="arn:${AWS_PARTITION}:iam::${AWS_ACCOUNT_ID}:role/${CLUSTER_NAME}-karpenter" echo "${CLUSTER_ENDPOINT} ${KARPENTER_IAM_ROLE_ARN}"

- Karpenter 설치 진행

helm upgrade --install karpenter oci://public.ecr.aws/karpenter/karpenter --version "${KARPENTER_VERSION}" --namespace "${KARPENTER_NAMESPACE}" --create-namespace \ --set "settings.clusterName=${CLUSTER_NAME}" \ --set "settings.interruptionQueue=${CLUSTER_NAME}" \ --set controller.resources.requests.cpu=1 \ --set controller.resources.requests.memory=1Gi \ --set controller.resources.limits.cpu=1 \ --set controller.resources.limits.memory=1Gi \ --wait

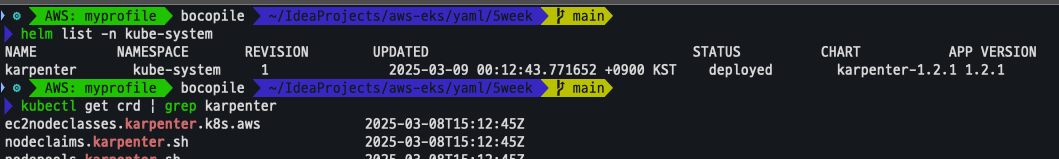

- 설치 확인

helm list -n kube-system kubectl get-all -n $KARPENTER_NAMESPACE kubectl get all -n $KARPENTER_NAMESPACE kubectl get crd | grep karpenter

프로메테우스 / 그라파나 설치

-

사전 작업

helm repo add grafana-charts https://grafana.github.io/helm-charts helm repo add prometheus-community https://prometheus-community.github.io/helm-charts helm repo update kubectl create namespace monitoring -

프로메테우스 설치

curl -fsSL https://raw.githubusercontent.com/aws/karpenter-provider-aws/v"${KARPENTER_VERSION}"/website/content/en/preview/getting-started/getting-started-with-karpenter/prometheus-values.yaml | envsubst | tee prometheus-values.yaml helm install --namespace monitoring prometheus prometheus-community/prometheus --values prometheus-values.yaml

-

알럿 매니저 삭제 (사용하지 않음)

kubectl delete sts -n monitoring prometheus-alertmanager

-

프로메테우스 접속 설정

export POD_NAME=$(kubectl get pods --namespace monitoring -l "app.kubernetes.io/name=prometheus,app.kubernetes.io/instance=prometheus" -o jsonpath="{.items[0].metadata.name}") kubectl --namespace monitoring port-forward $POD_NAME 9090 & open http://127.0.0.1:9090

-

그라파나 설치

curl -fsSL https://raw.githubusercontent.com/aws/karpenter-provider-aws/v"${KARPENTER_VERSION}"/website/content/en/preview/getting-started/getting-started-with-karpenter/grafana-values.yaml | tee grafana-values.yaml helm install --namespace monitoring grafana grafana-charts/grafana --values grafana-values.yaml

-

admin 암호 확인

kubectl get secret --namespace monitoring grafana -o jsonpath="{.data.admin-password}" | base64 --decode ; echo

-

그라파나 접속

kubectl port-forward --namespace monitoring svc/grafana 3000:80 & open http://127.0.0.1:3000

NodePool 정의

- 관리 리소스 선택:

securityGroupSelector및subnetSelector를 사용해 리소스를 검색함. - 노드 정리 정책 (

consolidationPolicy): 미사용 노드를 제거하여 비용을 절감하되, 데몬셋은 제외됨.consolidateAfter=Never로 설정하면 통합 비활성화 가능. - 단일 NodePool의 역할: 여러 유형의 파드를 지원하며, 레이블 및 친화성을 기반으로 자동 스케줄링 및 프로비저닝 수행 → 개별 노드 그룹 관리 필요 없음.

- 기본 NodePool 생성:

securityGroupSelectorTerms및subnetSelectorTerms를 사용해 노드 시작 시 필요한 리소스를 검색함. 리소스 공유 방식에 따라 태그 지정 방식이 달라질 수 있음. - 용량 제한: NodePool에서 생성된 모든 용량의 합이 지정된 한도를 초과하지 않는 범위에서 용량을 확장함.

NoodPool 테스트

버전 확인

echo $ALIAS_VERSION

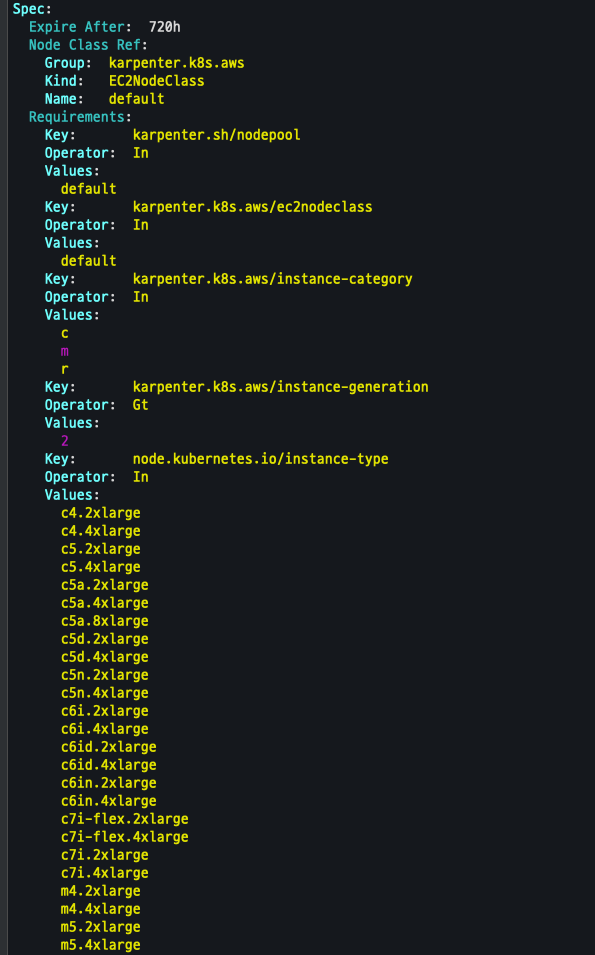

NodePool 생성

cat <<EOF | envsubst | kubectl apply -f -

apiVersion: karpenter.sh/v1

kind: NodePool

metadata:

name: default

spec:

template:

spec:

requirements:

- key: kubernetes.io/arch

operator: In

values: ["amd64"]

- key: kubernetes.io/os

operator: In

values: ["linux"]

- key: karpenter.sh/capacity-type

operator: In

values: ["on-demand"]

- key: karpenter.k8s.aws/instance-category

operator: In

values: ["c", "m", "r"]

- key: karpenter.k8s.aws/instance-generation

operator: Gt

values: ["2"]

nodeClassRef:

group: karpenter.k8s.aws

kind: EC2NodeClass

name: default

expireAfter: 720h # 30 * 24h = 720h

limits:

cpu: 1000

disruption:

consolidationPolicy: WhenEmptyOrUnderutilized

consolidateAfter: 1m

---

apiVersion: karpenter.k8s.aws/v1

kind: EC2NodeClass

metadata:

name: default

spec:

role: "KarpenterNodeRole-${CLUSTER_NAME}" # replace with your cluster name

amiSelectorTerms:

- alias: "al2023@${ALIAS_VERSION}" # ex) al2023@latest

subnetSelectorTerms:

- tags:

karpenter.sh/discovery: "${CLUSTER_NAME}" # replace with your cluster name

securityGroupSelectorTerms:

- tags:

karpenter.sh/discovery: "${CLUSTER_NAME}" # replace with your cluster name

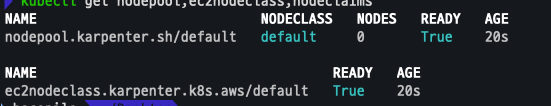

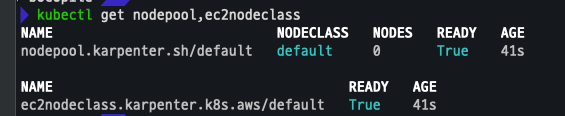

EOF확인

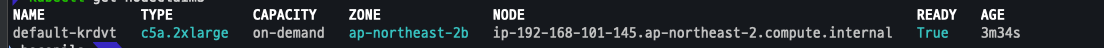

kubectl get nodepool,ec2nodeclass,nodeclaims

Scale up deployment

-

deployment 배포

# pause 파드 1개에 CPU 1개 최소 보장 할당할 수 있게 디플로이먼트 배포 cat <<EOF | kubectl apply -f - apiVersion: apps/v1 kind: Deployment metadata: name: inflate spec: replicas: 0 selector: matchLabels: app: inflate template: metadata: labels: app: inflate spec: terminationGracePeriodSeconds: 0 securityContext: runAsUser: 1000 runAsGroup: 3000 fsGroup: 2000 containers: - name: inflate image: public.ecr.aws/eks-distro/kubernetes/pause:3.7 resources: requests: cpu: 1 securityContext: allowPrivilegeEscalation: false EOF

-

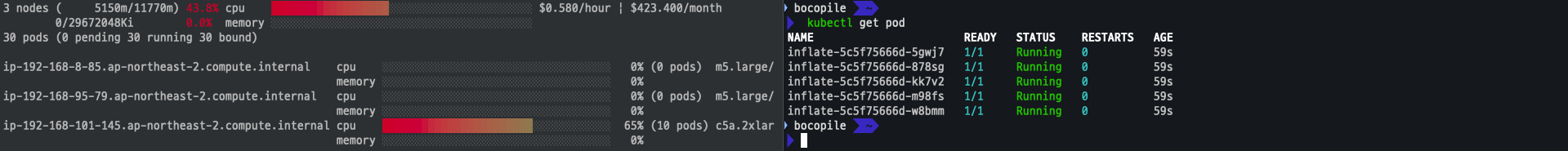

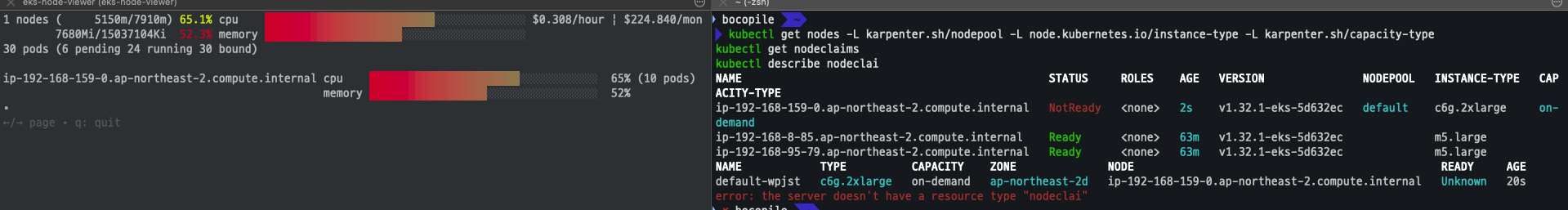

eks 모니터링

eks-node-viewer --resources cpu,memory

-

scale up

kubectl scale deployment inflate --replicas 5

-

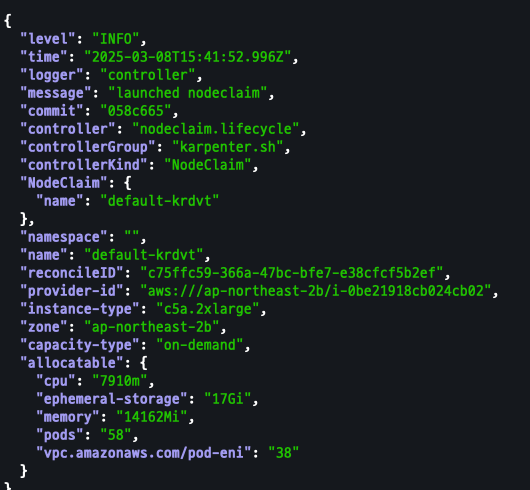

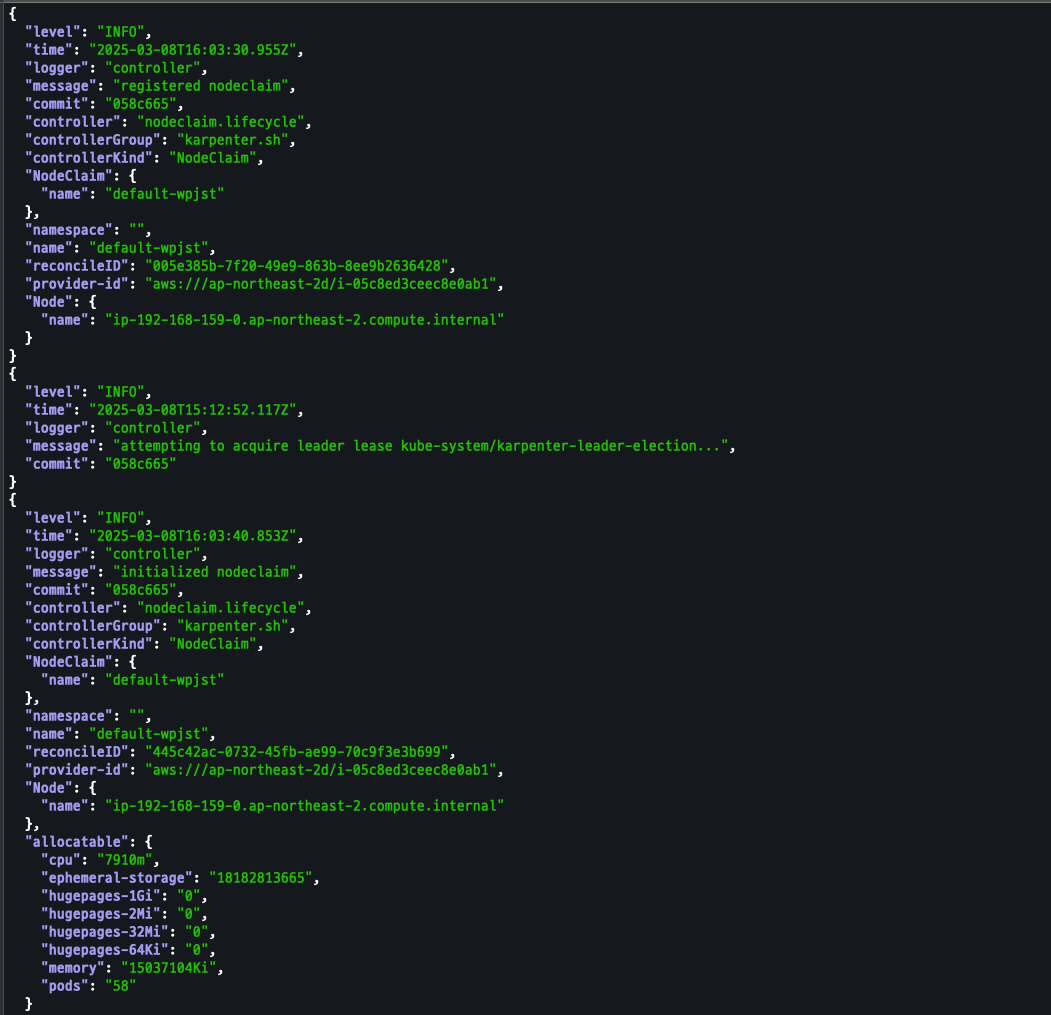

출력 로그 분석

kubectl logs -f -n "${KARPENTER_NAMESPACE}" -l app.kubernetes.io/name=karpenter -c controller kubectl logs -f -n "${KARPENTER_NAMESPACE}" -l app.kubernetes.io/name=karpenter -c controller | jq '.' kubectl logs -n "${KARPENTER_NAMESPACE}" -l app.kubernetes.io/name=karpenter -c controller | grep 'launched nodeclaim' | jq '.'

-

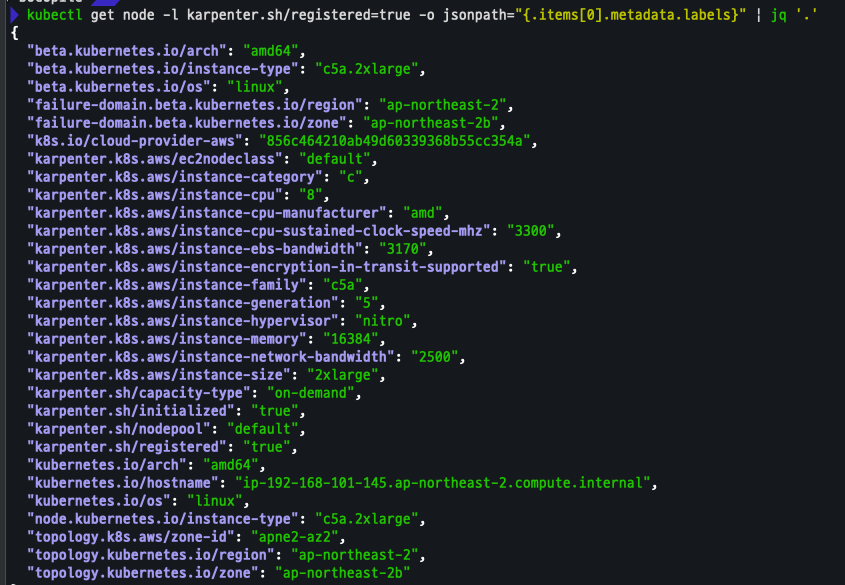

NodeClaim 확인

kubectl get nodeclaims kubectl describe nodeclaims kubectl get node -l karpenter.sh/registered=true -o jsonpath="{.items[0].metadata.labels}" | jq '.'

위의 스팩 중에서 현재 상태에서 최적인 스팩을 선정한다.

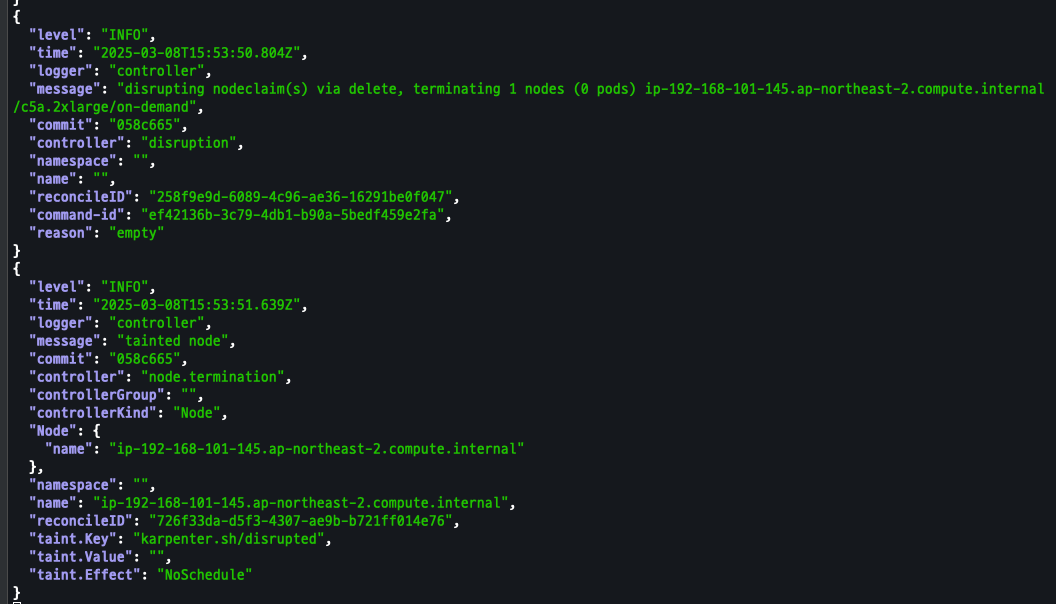

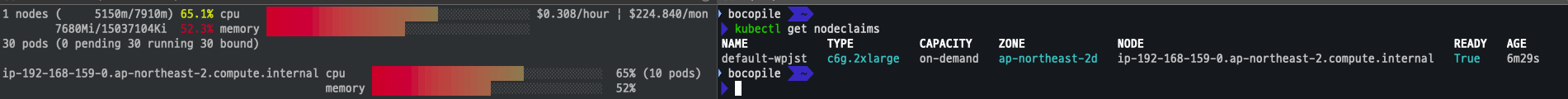

Scale Down Deployment

-

deployment 삭제

# Now, delete the deployment. After a short amount of time, Karpenter should terminate the empty nodes due to consolidation. kubectl delete deployment inflate && date

-

출력 로그 확인 => 삭제 된 것을 확인

# 출력 로그 분석해보자! kubectl logs -f -n "${KARPENTER_NAMESPACE}" -l app.kubernetes.io/name=karpenter -c controller | jq '.'

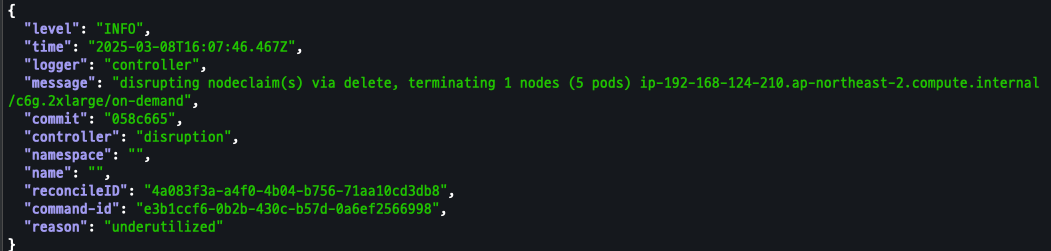

Disruption (구 Consolidation)

- Expiration (만료): 기본 30일(720시간) 후 인스턴스를 자동 만료하여 노드를 최신 상태로 유지

- Drift (드리프트): NodePool 및 EC2NodeClass의 구성 변경을 감지하고 필요한 수정 사항 적용

- Consolidation (통합): 저활용 인스턴스를 통합하여 비용 효율적인 컴퓨팅 환경 유지

- 스팟 인스턴스 운영:

- Karpenter는 AWS EC2 Fleet Instance API를 호출해 NodePool 기반으로 적절한 인스턴스 유형 선택

- API는 시작 성공 및 실패한 인스턴스 목록을 반환하며, 실패 시 대체 용량 요청 또는 일정 제약 조건 완화

spot-to-Spot Consolidation

- 온디맨드 통합과 달리 스팟 간 통합은 다른 접근 방식 필요 (온디맨드는 규모 조정 및 최저 가격이 주요 지표)

- 스팟 통합 최적화를 위해 Karpenter에는 최소 15개 이상의 인스턴스 유형이 포함된 다양한 인스턴스 구성이 필요

- 다양한 인스턴스 구성이 없으면 가용성이 낮고 중단 빈도가 높은 인스턴스를 선택할 위험이 존재

배포 진행

-

기존 NodePool 제거

kubectl delete nodepool,ec2nodeclass default

-

모니터링

kubectl logs -f -n "${KARPENTER_NAMESPACE}" -l app.kubernetes.io/name=karpenter -c controller | jq '.' eks-node-viewer --resources cpu,memory --node-selector "karpenter.sh/registered=true" --extra-labels eks-node-viewer/node-age watch -d "kubectl get nodes -L karpenter.sh/nodepool -L node.kubernetes.io/instance-type -L karpenter.sh/capacity-type"

-

Karpenter Node Pool 및 EC2 Node Class 생성

cat <<EOF | envsubst | kubectl apply -f - apiVersion: karpenter.sh/v1 kind: NodePool metadata: name: default spec: template: spec: nodeClassRef: group: karpenter.k8s.aws kind: EC2NodeClass name: default requirements: - key: kubernetes.io/os operator: In values: ["linux"] - key: karpenter.sh/capacity-type operator: In values: ["on-demand"] - key: karpenter.k8s.aws/instance-category operator: In values: ["c", "m", "r"] - key: karpenter.k8s.aws/instance-size operator: NotIn values: ["nano","micro","small","medium"] - key: karpenter.k8s.aws/instance-hypervisor operator: In values: ["nitro"] expireAfter: 1h # nodes are terminated automatically after 1 hour limits: cpu: "1000" memory: 1000Gi disruption: consolidationPolicy: WhenEmptyOrUnderutilized # policy enables Karpenter to replace nodes when they are either empty or underutilized consolidateAfter: 1m --- apiVersion: karpenter.k8s.aws/v1 kind: EC2NodeClass metadata: name: default spec: role: "KarpenterNodeRole-${CLUSTER_NAME}" # replace with your cluster name amiSelectorTerms: - alias: "al2023@latest" subnetSelectorTerms: - tags: karpenter.sh/discovery: "${CLUSTER_NAME}" # replace with your cluster name securityGroupSelectorTerms: - tags: karpenter.sh/discovery: "${CLUSTER_NAME}" # replace with your cluster name EOF

-

확인

kubectl get nodepool,ec2nodeclass

-

샘플 워크로드 배포

cat <<EOF | kubectl apply -f - apiVersion: apps/v1 kind: Deployment metadata: name: inflate spec: replicas: 5 selector: matchLabels: app: inflate template: metadata: labels: app: inflate spec: terminationGracePeriodSeconds: 0 securityContext: runAsUser: 1000 runAsGroup: 3000 fsGroup: 2000 containers: - name: inflate image: public.ecr.aws/eks-distro/kubernetes/pause:3.7 resources: requests: cpu: 1 memory: 1.5Gi securityContext: allowPrivilegeEscalation: false EOF

-

확인

kubectl get nodes -L karpenter.sh/nodepool -L node.kubernetes.io/instance-type -L karpenter.sh/capacity-type kubectl get nodeclaims kubectl describe nodeclai kubectl logs -f -n "${KARPENTER_NAMESPACE}" -l app.kubernetes.io/name=karpenter -c controller | jq '.' kubectl logs -n "${KARPENTER_NAMESPACE}" -l app.kubernetes.io/name=karpenter -c controller | grep 'launched nodeclaim' | jq '.'

-

scale 12 로 변경 및 확인

kubectl scale deployment/inflate --replicas 12 kubectl get nodeclaims

-

scale 5로 변경 및 확인

-

바로 변경되지 않음 (일정 시간이 소요)

kubectl scale deployment/inflate --replicas 5 kubectl get nodeclaims -

변경 직후

-

일정 시간 경과 후

-

-

로그 확인

kubectl logs -f -n "${KARPENTER_NAMESPACE}" -l app.kubernetes.io/name=karpenter -c controller | jq '.'

g)

-

삭제

kubectl delete deployment inflate kubectl delete nodepool,ec2nodeclass default

실습 완료 후 삭제 진행

# Karpenter helm 삭제

helm uninstall karpenter --namespace "${KARPENTER_NAMESPACE}"

# Karpenter IAM Role 등 생성한 CloudFormation 삭제

aws cloudformation delete-stack --stack-name "Karpenter-${CLUSTER_NAME}"

# EC2 Launch Template 삭제

aws ec2 describe-launch-templates --filters "Name=tag:karpenter.k8s.aws/cluster,Values=${CLUSTER_NAME}" |

jq -r ".LaunchTemplates[].LaunchTemplateName" |

xargs -I{} aws ec2 delete-launch-template --launch-template-name {}

# 클러스터 삭제

eksctl delete cluster --name "${CLUSTER_NAME}"