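Transfer learning: reusing a neural network trained in one domain to train a model for a different domain.

Characteristics of transfer learning

- Fine-tuning a convolutional neural network: the network is initialized from pretrained weights instead of random values, and all of its layers continue to train on the new data.

- Replacing the final layer: the last fully connected layer is swapped for one with as many nodes as there are new classes, which serves as the new classification layer. Its outputs are turned into class probabilities by a softmax layer, and the prediction is made from those probabilities.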
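To make the softmax step concrete, here is a minimal pure-Python sketch of how raw scores from a two-node final layer become probabilities. The logit values are made up for illustration. Note that in the PyTorch code in these notes the model itself outputs raw scores, and `nn.CrossEntropyLoss` applies the (log-)softmax internally during training.

```python
import math

def softmax(logits):
    """Convert raw scores (logits) into probabilities that sum to 1."""
    # Subtracting the maximum keeps exp() numerically stable
    # and does not change the result.
    largest = max(logits)
    exps = [math.exp(z - largest) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical logits from a final layer with two nodes (two classes).
logits = [2.0, 0.5]
probs = softmax(logits)

# The predicted class is the index with the highest probability,
# which is also the index of the largest logit.
predicted = probs.index(max(probs))
print(probs, predicted)
```

Because softmax preserves the ordering of the scores, taking the largest logit directly gives the same predicted class as taking the largest probability.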

    from __future__ import print_function, division

    import torch
    import torch.nn as nn
    import torch.optim as optim
    from torch.optim import lr_scheduler
    import torch.backends.cudnn as cudnn
    import numpy as np
    import torchvision
    from torchvision import datasets, models, transforms
    import matplotlib.pyplot as plt
    import time
    import os
    import copy

    # Let cuDNN pick the fastest convolution algorithms for fixed-size inputs.
    cudnn.benchmark = True

Loading the data

    # Data augmentation and normalization for training;
    # normalization only for validation.
    data_transforms = {
        'train': transforms.Compose([
            transforms.RandomResizedCrop(224),
            transforms.RandomHorizontalFlip(),
            transforms.ToTensor(),
            transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
        ]),
        'val': transforms.Compose([
            transforms.Resize(256),
            transforms.CenterCrop(224),
            transforms.ToTensor(),
            transforms.Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225])
        ]),
    }

    data_dir = '/content/drive/MyDrive/hymenoptera_data'

    # One ImageFolder dataset and one DataLoader per split.
    image_datasets = {x: datasets.ImageFolder(os.path.join(data_dir, x),
                                              data_transforms[x])
                      for x in ['train', 'val']}
    dataloaders = {x: torch.utils.data.DataLoader(image_datasets[x], batch_size=4,
                                                  shuffle=True, num_workers=4)
                   for x in ['train', 'val']}
    dataset_sizes = {x: len(image_datasets[x]) for x in ['train', 'val']}
    class_names = image_datasets['train'].classes

    device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

Visualizing a few images

    def imshow(inp, title=None):
        """Imshow for Tensor."""
        # Convert from (C, H, W) to (H, W, C) for matplotlib.
        inp = inp.numpy().transpose((1, 2, 0))
        # Undo the normalization applied in data_transforms.
        mean = np.array([0.485, 0.456, 0.406])
        std = np.array([0.229, 0.224, 0.225])
        inp = std * inp + mean
        inp = np.clip(inp, 0, 1)
        plt.imshow(inp)
        if title is not None:
            plt.title(title)
        plt.pause(0.001)  # pause briefly so the plot is updated


    # Get one batch of training data.
    inputs, classes = next(iter(dataloaders['train']))

    # Make a grid from the batch.
    out = torchvision.utils.make_grid(inputs)

    imshow(out, title=[class_names[x] for x in classes])
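The `imshow` helper reverses the `Normalize` transform before plotting: normalization computes `(x - mean) / std` per channel, so `std * x + mean` restores the original value. The following pure-Python sketch checks that round trip on one made-up RGB pixel; the pixel value and the helper names `normalize` and `denormalize` are illustrative, not part of the tutorial code.

```python
# Per-channel statistics used by the transforms in these notes.
MEAN = [0.485, 0.456, 0.406]
STD = [0.229, 0.224, 0.225]

def normalize(pixel):
    """Apply (x - mean) / std to each channel, as transforms.Normalize does."""
    return [(c - m) / s for c, m, s in zip(pixel, MEAN, STD)]

def denormalize(pixel):
    """Apply std * x + mean to each channel, as imshow does before plotting."""
    return [s * c + m for c, m, s in zip(pixel, MEAN, STD)]

# Hypothetical RGB pixel with channel values in [0, 1].
pixel = [0.5, 0.25, 1.0]
restored = denormalize(normalize(pixel))
print(restored)
```

Up to floating-point rounding, `restored` matches `pixel`. The `np.clip(inp, 0, 1)` call in `imshow` guards against small excursions outside the displayable range.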

Training the model
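The training function in this section multiplies each batch loss by the batch size before accumulating it, then divides by the dataset size. Since `nn.CrossEntropyLoss` returns the mean loss over a batch by default, this yields a per-sample average even when the last batch is smaller than the rest. A small sketch with made-up batch losses and sizes (the function name `epoch_average` is hypothetical):

```python
def epoch_average(batch_means, batch_sizes):
    """Per-sample average loss from per-batch mean losses."""
    running = 0.0
    for mean_loss, size in zip(batch_means, batch_sizes):
        # Mean loss times batch size gives the summed loss for that batch.
        running += mean_loss * size
    return running / sum(batch_sizes)

# Hypothetical epoch: two full batches of 4 and a final batch of 1.
batch_means = [0.50, 0.30, 0.90]
batch_sizes = [4, 4, 1]

weighted = epoch_average(batch_means, batch_sizes)
naive = sum(batch_means) / len(batch_means)
print(weighted, naive)
```

The naive mean of batch means gives the single-sample final batch the same weight as a full batch, so it overstates the loss in this example; the weighted version does not.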

def train_model(model, criterion, optimizer, scheduler, num_epochs=25):

since = time.time()

best_model_wts = copy.deepcopy(model.state_dict())

best_acc = 0.0

for epoch in range(num_epochs):

print(f'Epoch {epoch}/{num_epochs - 1}')

print('-' * 10)

for phase in ['train', 'val']:

if phase == 'train':

model.train()

else:

model.eval()

running_loss = 0.0

running_corrects = 0

for inputs, labels in dataloaders[phase]:

inputs = inputs.to(device)

labels = labels.to(device)

optimizer.zero_grad()

with torch.set_grad_enabled(phase == 'train'):

outputs = model(inputs)

_, preds = torch.max(outputs, 1)

loss = criterion(outputs, labels)

if phase == 'train':

loss.backward()

optimizer.step()

running_loss += loss.item() * inputs.size(0)

running_corrects += torch.sum(preds == labels.data)

if phase == 'train':

scheduler.step()

epoch_loss = running_loss / dataset_sizes[phase]

epoch_acc = running_corrects.double() / dataset_sizes[phase]

print(f'{phase} Loss: {epoch_loss:.4f} Acc: {epoch_acc:.4f}')

if phase == 'val' and epoch_acc > best_acc:

best_acc = epoch_acc

best_model_wts = copy.deepcopy(model.state_dict())

print()

time_elapsed = time.time() - since

print(f'Training complete in {time_elapsed // 60:.0f}m {time_elapsed % 60:.0f}s')

print(f'Best val Acc: {best_acc:4f}')

model.load_state_dict(best_model_wts)

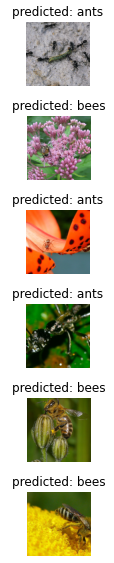

return model모델 예측값 시각화 함수 정의

    def visualize_model(model, num_images=6):
        # Remember the current mode so it can be restored afterwards.
        was_training = model.training
        model.eval()
        images_so_far = 0
        fig = plt.figure()

        with torch.no_grad():
            for i, (inputs, labels) in enumerate(dataloaders['val']):
                inputs = inputs.to(device)
                labels = labels.to(device)

                outputs = model(inputs)
                _, preds = torch.max(outputs, 1)

                for j in range(inputs.size()[0]):
                    images_so_far += 1
                    ax = plt.subplot(num_images//2, 2, images_so_far)
                    ax.axis('off')
                    ax.set_title(f'predicted: {class_names[preds[j]]}')
                    imshow(inputs.cpu().data[j])

                    # Stop once the requested number of images is shown.
                    if images_so_far == num_images:
                        model.train(mode=was_training)
                        return
            model.train(mode=was_training)

Fine-tuning the convolutional neural network for transfer learning (finetuning)

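    # Load a ResNet-18 pretrained on ImageNet.
    # Note: pretrained=True is deprecated in torchvision 0.13 and later,
    # where the weights= argument is preferred.
    model_ft = models.resnet18(pretrained=True)

    # Replace the final fully connected layer with one that has 2 outputs,
    # one per class in the new dataset.
    num_ftrs = model_ft.fc.in_features
    model_ft.fc = nn.Linear(num_ftrs, 2)

    model_ft = model_ft.to(device)

    criterion = nn.CrossEntropyLoss()

    # All parameters are optimized.
    optimizer_ft = optim.SGD(model_ft.parameters(), lr=0.001, momentum=0.9)

    # Decay the learning rate by a factor of 0.1 every 7 epochs.
    exp_lr_scheduler = lr_scheduler.StepLR(optimizer_ft, step_size=7, gamma=0.1)

Training and evaluation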

    model_ft = train_model(model_ft, criterion, optimizer_ft, exp_lr_scheduler,
                           num_epochs=25)

Epoch 0/24

----------

train Loss: 0.5073 Acc: 0.7582

val Loss: 0.2956 Acc: 0.8431

Epoch 1/24

----------

train Loss: 0.5482 Acc: 0.7910

val Loss: 0.4578 Acc: 0.8497

Epoch 2/24

----------

train Loss: 0.5924 Acc: 0.7295

val Loss: 0.8167 Acc: 0.7320

Epoch 3/24

----------

train Loss: 0.6617 Acc: 0.7418

val Loss: 0.3200 Acc: 0.8758

Epoch 4/24

----------

train Loss: 0.4940 Acc: 0.7869

val Loss: 0.3306 Acc: 0.8954

Epoch 5/24

----------

train Loss: 0.3569 Acc: 0.8484

val Loss: 1.0382 Acc: 0.6471

Epoch 6/24

----------

train Loss: 0.4264 Acc: 0.8443

val Loss: 0.3347 Acc: 0.9085

Epoch 7/24

----------

train Loss: 0.3745 Acc: 0.8484

val Loss: 0.2260 Acc: 0.9150

Epoch 8/24

----------

train Loss: 0.4113 Acc: 0.8402

val Loss: 0.2062 Acc: 0.9412

Epoch 9/24

----------

train Loss: 0.2836 Acc: 0.8607

val Loss: 0.2039 Acc: 0.9412

Epoch 10/24

----------

train Loss: 0.2826 Acc: 0.8811

val Loss: 0.1899 Acc: 0.9412

Epoch 11/24

----------

train Loss: 0.2928 Acc: 0.8934

val Loss: 0.1776 Acc: 0.9542

Epoch 12/24

----------

train Loss: 0.2210 Acc: 0.8934

val Loss: 0.1576 Acc: 0.9608

Epoch 13/24

----------

train Loss: 0.3341 Acc: 0.8566

val Loss: 0.1616 Acc: 0.9542

Epoch 14/24

----------

train Loss: 0.2615 Acc: 0.8689

val Loss: 0.1892 Acc: 0.9477

Epoch 15/24

----------

train Loss: 0.3531 Acc: 0.8484

val Loss: 0.1612 Acc: 0.9412

Epoch 16/24

----------

train Loss: 0.3057 Acc: 0.8443

val Loss: 0.1468 Acc: 0.9542

Epoch 17/24

----------

train Loss: 0.3125 Acc: 0.8525

val Loss: 0.1663 Acc: 0.9477

Epoch 18/24

----------

train Loss: 0.3196 Acc: 0.8525

val Loss: 0.1656 Acc: 0.9608

Epoch 19/24

----------

train Loss: 0.3579 Acc: 0.8770

val Loss: 0.1603 Acc: 0.9346

Epoch 20/24

----------

train Loss: 0.2483 Acc: 0.9180

val Loss: 0.1700 Acc: 0.9412

Epoch 21/24

----------

train Loss: 0.3053 Acc: 0.8648

val Loss: 0.1628 Acc: 0.9412

Epoch 22/24

----------

train Loss: 0.2553 Acc: 0.8893

val Loss: 0.1716 Acc: 0.9412

Epoch 23/24

----------

train Loss: 0.2478 Acc: 0.9057

val Loss: 0.1663 Acc: 0.9412

Epoch 24/24

----------

train Loss: 0.2874 Acc: 0.8607

val Loss: 0.1571 Acc: 0.9346

Training complete in 2m 27s

Best val Acc: 0.960784

Visualizing model predictions

    visualize_model(model_ft)

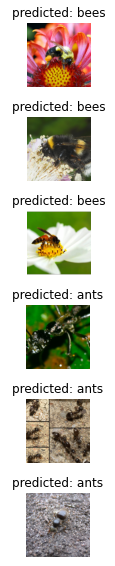
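The run above uses `StepLR` with `step_size=7` and `gamma=0.1`, starting from `lr=0.001`. Since `scheduler.step()` is called once per epoch, the learning rate used in epoch `e` should be `0.001 * 0.1 ** (e // 7)`. The sketch below tabulates that formula in plain Python; it mirrors the schedule rule and does not call PyTorch, and the function name `step_lr` is made up for illustration.

```python
def step_lr(initial_lr, step_size, gamma, epoch):
    """Learning rate for a given epoch under a step-decay schedule."""
    # The rate is multiplied by gamma once every step_size epochs.
    return initial_lr * gamma ** (epoch // step_size)

# Same settings as the optimizer and scheduler in these notes.
schedule = [step_lr(0.001, 7, 0.1, epoch) for epoch in range(25)]

for epoch, lr in enumerate(schedule):
    # Print only the epochs where the rate changes.
    if epoch % 7 == 0:
        print(f'epoch {epoch:2d}: lr = {lr:.0e}')
```

Under this rule the rate drops at epochs 7, 14, and 21. In the log above, validation accuracy swings widely during the first seven epochs and stays at or above 0.93 from epoch 8 onward. That is consistent with the smaller steps, although a single run is not conclusive.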

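Freezing everything except the final layer for transfer learning

    # Note: pretrained=True is deprecated in torchvision 0.13 and later,
    # where the weights= argument is preferred.
    model_conv = torchvision.models.resnet18(pretrained=True)

    # Freeze all pretrained parameters so no gradients are computed for them.
    for param in model_conv.parameters():
        param.requires_grad = False

    # Parameters of a newly constructed layer have requires_grad=True by default,
    # so only the new final layer will be trained.
    num_ftrs = model_conv.fc.in_features
    model_conv.fc = nn.Linear(num_ftrs, 2)

    model_conv = model_conv.to(device)

    criterion = nn.CrossEntropyLoss()

    # Only the parameters of the final layer are optimized.
    optimizer_conv = optim.SGD(model_conv.fc.parameters(), lr=0.001, momentum=0.9)

    # Decay the learning rate by a factor of 0.1 every 7 epochs.
    exp_lr_scheduler = lr_scheduler.StepLR(optimizer_conv, step_size=7, gamma=0.1)

Training and evaluation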

    model_conv = train_model(model_conv, criterion, optimizer_conv,
                             exp_lr_scheduler, num_epochs=25)

Epoch 0/24

----------

train Loss: 0.6325 Acc: 0.6762

val Loss: 0.2050 Acc: 0.9477

Epoch 1/24

----------

train Loss: 0.5001 Acc: 0.7664

val Loss: 0.2253 Acc: 0.9150

Epoch 2/24

----------

train Loss: 0.6085 Acc: 0.7295

val Loss: 0.1986 Acc: 0.9542

Epoch 3/24

----------

train Loss: 0.4103 Acc: 0.7910

val Loss: 0.2520 Acc: 0.8954

Epoch 4/24

----------

train Loss: 0.4319 Acc: 0.8156

val Loss: 0.2173 Acc: 0.9346

Epoch 5/24

----------

train Loss: 0.4228 Acc: 0.8361

val Loss: 0.1651 Acc: 0.9477

Epoch 6/24

----------

train Loss: 0.3106 Acc: 0.8525

val Loss: 0.2060 Acc: 0.9281

Epoch 7/24

----------

train Loss: 0.4058 Acc: 0.7910

val Loss: 0.1622 Acc: 0.9542

Epoch 8/24

----------

train Loss: 0.3217 Acc: 0.8484

val Loss: 0.1744 Acc: 0.9477

Epoch 9/24

----------

train Loss: 0.3235 Acc: 0.8607

val Loss: 0.1812 Acc: 0.9477

Epoch 10/24

----------

train Loss: 0.3116 Acc: 0.8770

val Loss: 0.1952 Acc: 0.9346

Epoch 11/24

----------

train Loss: 0.3638 Acc: 0.8361

val Loss: 0.1824 Acc: 0.9346

Epoch 12/24

----------

train Loss: 0.3677 Acc: 0.8238

val Loss: 0.1784 Acc: 0.9477

Epoch 13/24

----------

train Loss: 0.3347 Acc: 0.8525

val Loss: 0.2028 Acc: 0.9412

Epoch 14/24

----------

train Loss: 0.3159 Acc: 0.8811

val Loss: 0.1937 Acc: 0.9412

Epoch 15/24

----------

train Loss: 0.3403 Acc: 0.8443

val Loss: 0.1860 Acc: 0.9412

Epoch 16/24

----------

train Loss: 0.2895 Acc: 0.8770

val Loss: 0.1743 Acc: 0.9542

Epoch 17/24

----------

train Loss: 0.4108 Acc: 0.8320

val Loss: 0.1992 Acc: 0.9346

Epoch 18/24

----------

train Loss: 0.2579 Acc: 0.8934

val Loss: 0.1907 Acc: 0.9412

Epoch 19/24

----------

train Loss: 0.2699 Acc: 0.8607

val Loss: 0.1755 Acc: 0.9477

Epoch 20/24

----------

train Loss: 0.4246 Acc: 0.7992

val Loss: 0.1685 Acc: 0.9477

Epoch 21/24

----------

train Loss: 0.3424 Acc: 0.8402

val Loss: 0.1993 Acc: 0.9412

Epoch 22/24

----------

train Loss: 0.3436 Acc: 0.8361

val Loss: 0.1669 Acc: 0.9412

Epoch 23/24

----------

train Loss: 0.3777 Acc: 0.8279

val Loss: 0.1694 Acc: 0.9477

Epoch 24/24

----------

train Loss: 0.3828 Acc: 0.8361

val Loss: 0.1712 Acc: 0.9412

Training complete in 1m 37s

Best val Acc: 0.954248

Visualizing model predictions

    visualize_model(model_conv)

    plt.ioff()
    plt.show()
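In the frozen setup, only the new `fc` layer receives gradient updates, which is one plausible reason that run finished faster (1m 37s versus 2m 27s) while reaching a similar best validation accuracy. As a rough sketch of how small the trained part is, the count of trainable parameters in a linear layer is `in_features * out_features + out_features`. The value 512 below is an assumption for `model_conv.fc.in_features` on ResNet-18, and `linear_param_count` is a hypothetical helper; check `num_ftrs` in your own run.

```python
def linear_param_count(in_features, out_features, bias=True):
    """Number of parameters in a fully connected layer."""
    # One weight per (input, output) pair, plus one bias per output.
    return in_features * out_features + (out_features if bias else 0)

IN_FEATURES = 512  # assumed value of model_conv.fc.in_features for ResNet-18
NUM_CLASSES = 2    # the new final layer has two outputs

trainable = linear_param_count(IN_FEATURES, NUM_CLASSES)
print(trainable)
```

With PyTorch available, the same number can be checked directly with `sum(p.numel() for p in model_conv.parameters() if p.requires_grad)`.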

Source: https://pytorch.org/tutorials/beginner/transfer_learning_tutorial.html