Machine Learning_2

Titanic Survivor Analysis - Overview


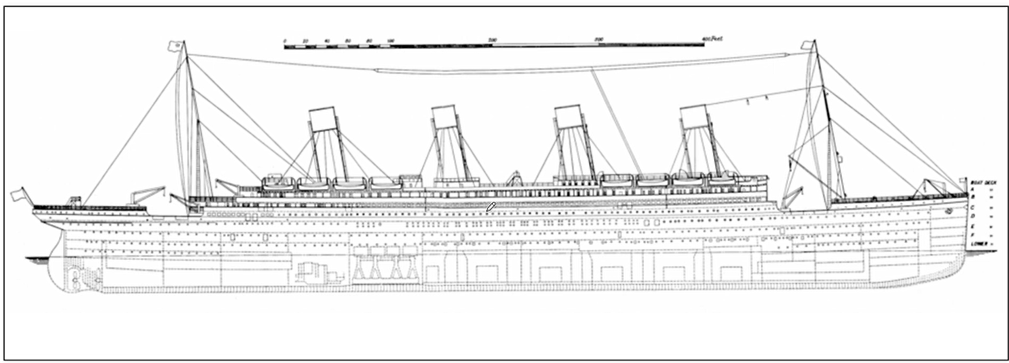

The Titanic?

- The Titanic was the largest passenger ship of the 1910s.

- It was an international liner sailing from England to New York, USA.

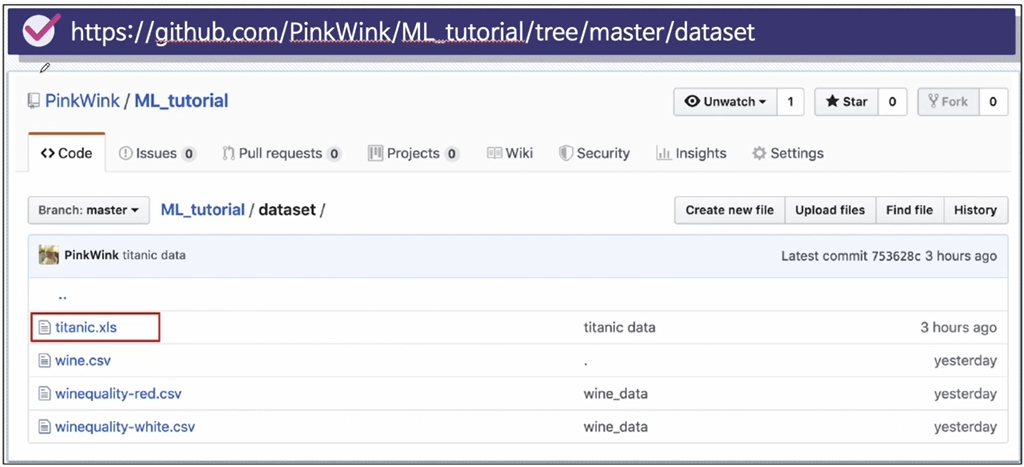

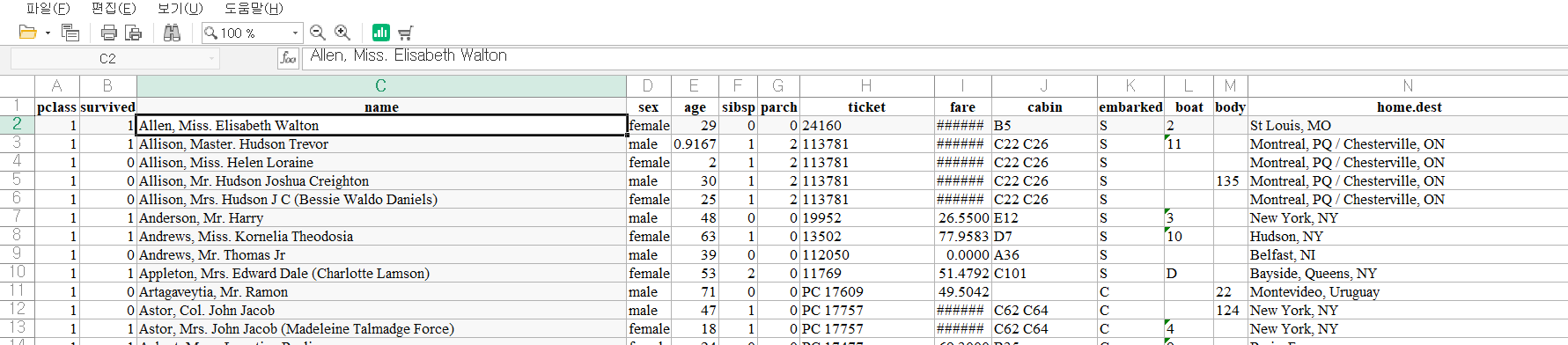

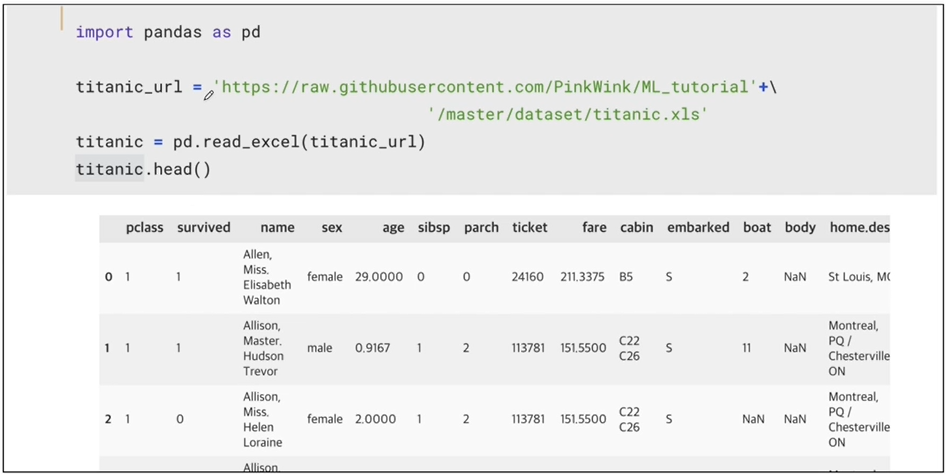

Data

- Meaning of the data columns

Exploratory Data Analysis - EDA

- Before starting, install the Plotly (plotly_express) module used for visualization.

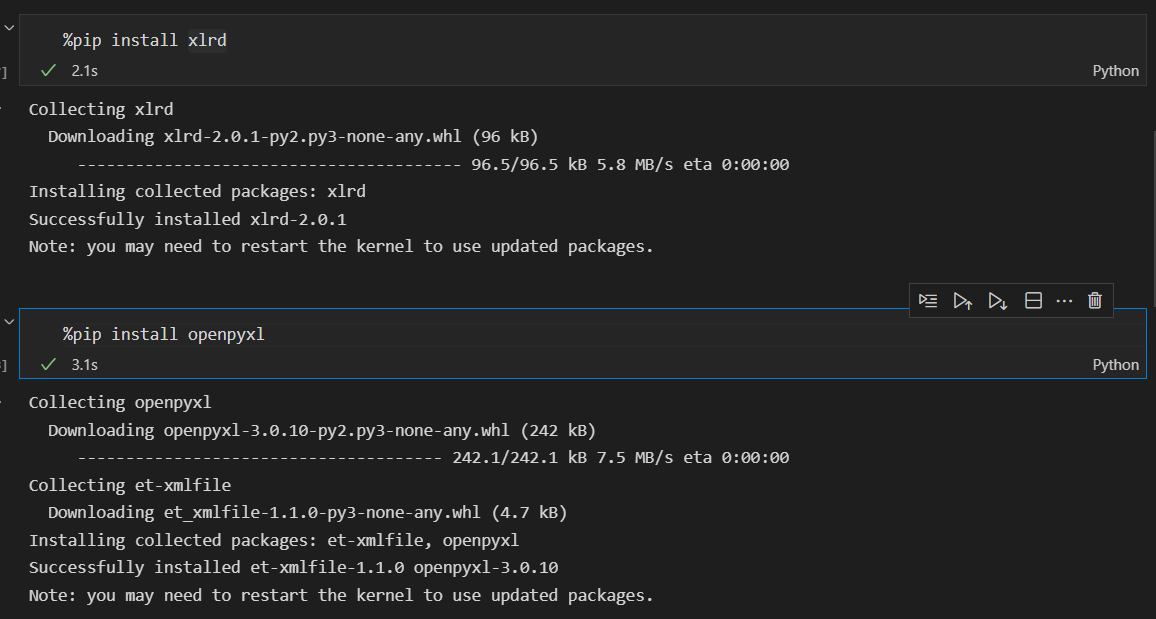

pip install plotly_express

Reading the data

- An import error may occur when reading the Excel file.

- Installing xlrd and openpyxl resolves it, after which the file loads successfully.
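To see in advance whether the Excel reader dependencies mentioned above are present, a small check like the following may help. This is only a sketch: the helper name `missing_excel_engines` is made up for illustration, and it only tests whether the packages can be found, not whether their versions are compatible with your pandas.

```python
import importlib.util

def missing_excel_engines(packages=("xlrd", "openpyxl")):
    """Return the names of packages that cannot be found in this environment."""
    # find_spec returns None when a top-level package is not installed.
    return [name for name in packages if importlib.util.find_spec(name) is None]

missing = missing_excel_engines()
if missing:
    print("Install with: pip install " + " ".join(missing))
else:
    print("xlrd and openpyxl are both available.")
```

Since the file used here is a `.xls` file, xlrd is the package pandas is most likely to ask for.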

import pandas as pd

titanic_url = 'https://raw.githubusercontent.com/PinkWink/ML_tutorial/master/dataset/titanic.xls'

titanic = pd.read_excel(titanic_url)

titanic.head()

Survival overview

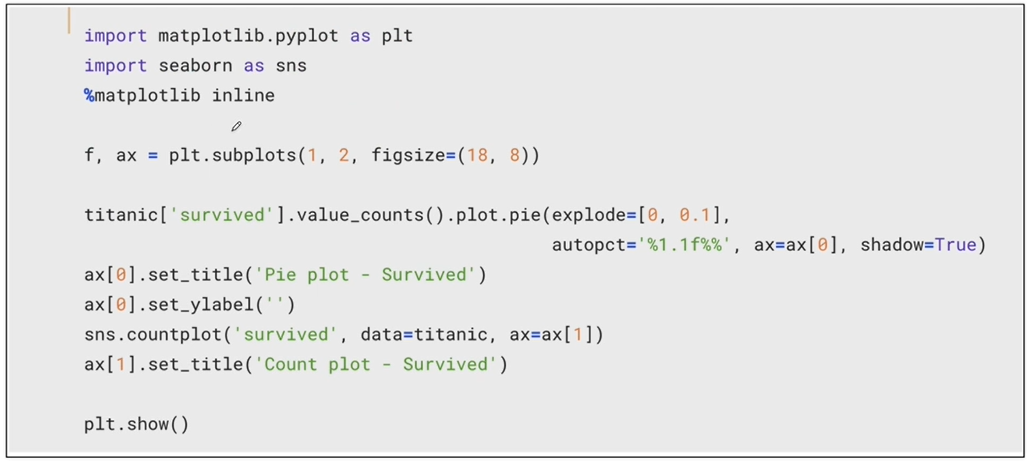

import matplotlib.pyplot as plt

import seaborn as sns

f, ax = plt.subplots(1, 2, figsize=(18, 8))

titanic['survived'].value_counts().plot.pie(explode=[0,0.05],autopct='%1.1f%%', ax = ax[0], shadow=True)

ax[0].set_title('Pie plot - Survived')

ax[0].set_ylabel('')

sns.countplot(x='survived', data=titanic, ax=ax[1])

# Depending on the seaborn version, 'survived' must be passed explicitly as the x data.

ax[1].set_title('Count plot - Survived')

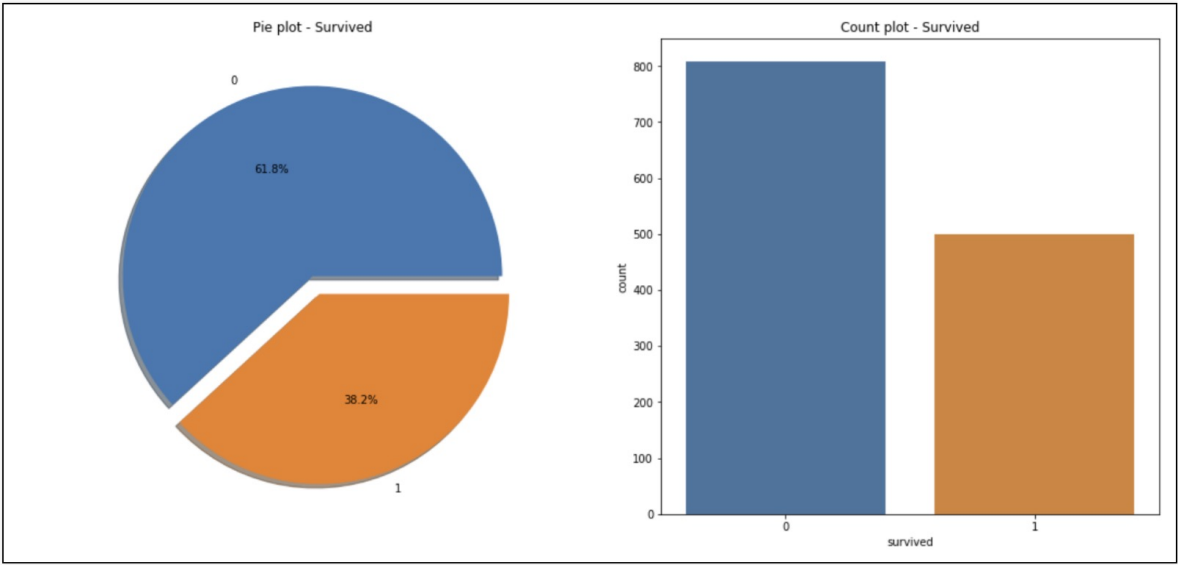

plt.show()

- Survival rate: 38.2%
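The percentage in the pie chart can also be computed directly. The sketch below uses a small made-up Series in place of `titanic['survived']`, so its numbers are illustrative only; on the real data the same expression should reproduce the rate shown above.

```python
import pandas as pd

# Toy stand-in for titanic['survived'] (1 = survived, 0 = did not survive).
survived = pd.Series([0, 1, 0, 0, 1, 0, 1, 0, 0, 0])

# normalize=True returns fractions instead of raw counts.
rates = survived.value_counts(normalize=True)
survival_rate = rates.loc[1]

print(f"Survival rate: {survival_rate:.1%}")
```

Because the column only holds 0 and 1, `survived.mean()` gives the same value.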

How does survival differ by sex?

f, ax = plt.subplots(1, 2, figsize=(18, 8))

sns.countplot(x='sex', data=titanic, ax=ax[0])

ax[0].set_title('Count of Passengers of Sex')

ax[0].set_ylabel('')

sns.countplot(x='sex', hue='survived', data=titanic, ax=ax[1])

ax[1].set_title('Sex:Survived and Unsurvived')

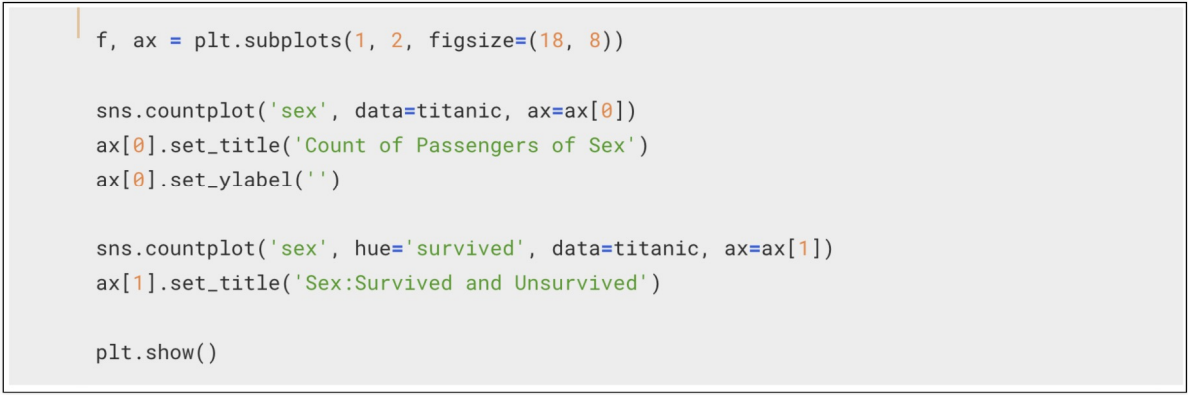

plt.show()

- Men were less likely to survive than women.
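The same comparison can be expressed as a number per group. This is a minimal sketch on a made-up table (the column names match the dataset, the values do not), showing how a grouped mean of the 0/1 `survived` column yields a survival rate for each sex.

```python
import pandas as pd

# Toy data: column names follow the Titanic dataset, values are invented.
toy = pd.DataFrame({
    "sex": ["male", "male", "male", "male", "female", "female", "female", "female"],
    "survived": [0, 0, 0, 1, 1, 1, 1, 0],
})

# The mean of a 0/1 column is the fraction of ones, i.e. the survival rate.
rate_by_sex = toy.groupby("sex")["survived"].mean()
print(rate_by_sex)
```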


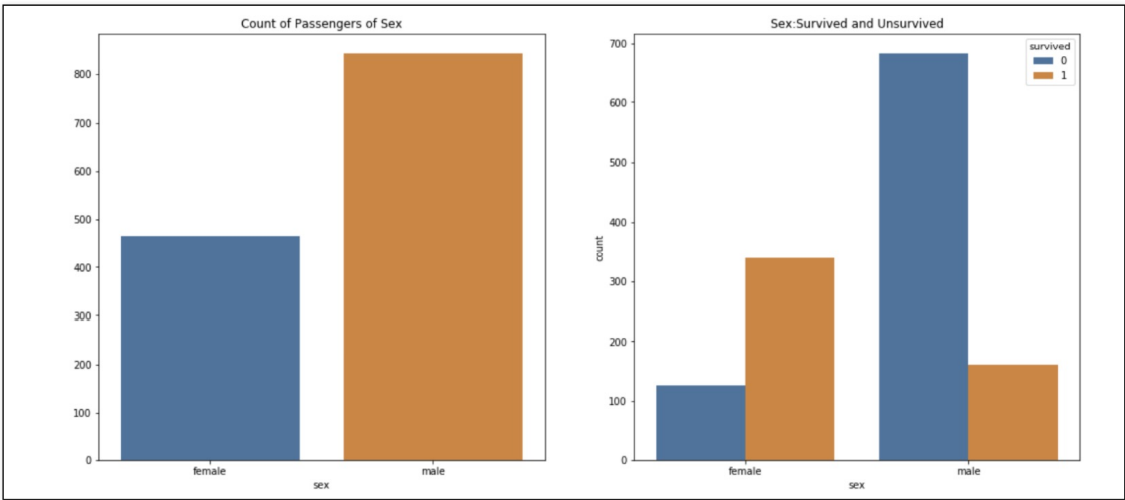

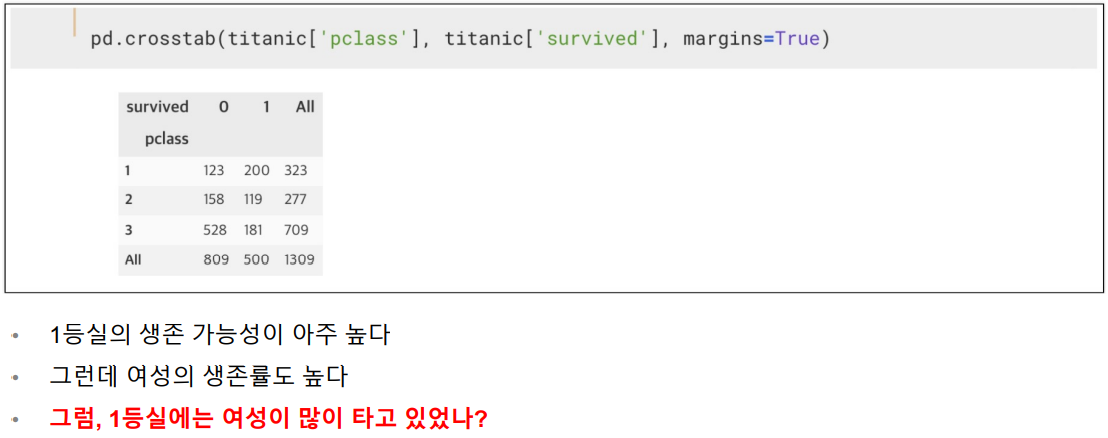

Survival rate by economic status

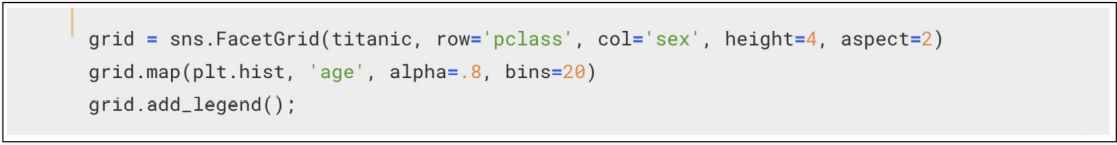

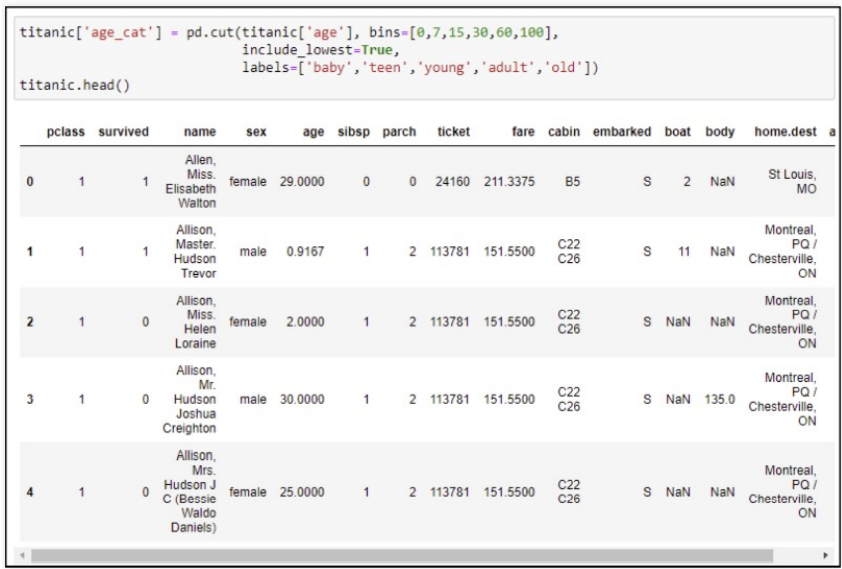
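Only the output images remain for this step. As a hedged sketch, assuming cabin class (`pclass`) serves as the proxy for economic status, a cross-tabulation like the following would produce a comparable table. The data here is invented for illustration.

```python
import pandas as pd

# Toy data: 1 = first class ... 3 = third class; values are invented.
toy = pd.DataFrame({
    "pclass":   [1, 1, 1, 2, 2, 3, 3, 3, 3, 3],
    "survived": [1, 1, 0, 1, 0, 0, 0, 0, 1, 0],
})

# Counts per class and outcome; margins=True adds row and column totals ("All").
counts = pd.crosstab(toy["pclass"], toy["survived"], margins=True)

# Survival rate per class.
rate_by_class = toy.groupby("pclass")["survived"].mean()

print(counts)
print(rate_by_class)
```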

Sex breakdown by cabin class

grid = sns.FacetGrid(titanic, row='pclass', col='sex', height=4, aspect=2)

grid.map(plt.hist, 'age', alpha=.8, bins=20)

grid.add_legend()

- Third class had many men, especially men in their twenties.

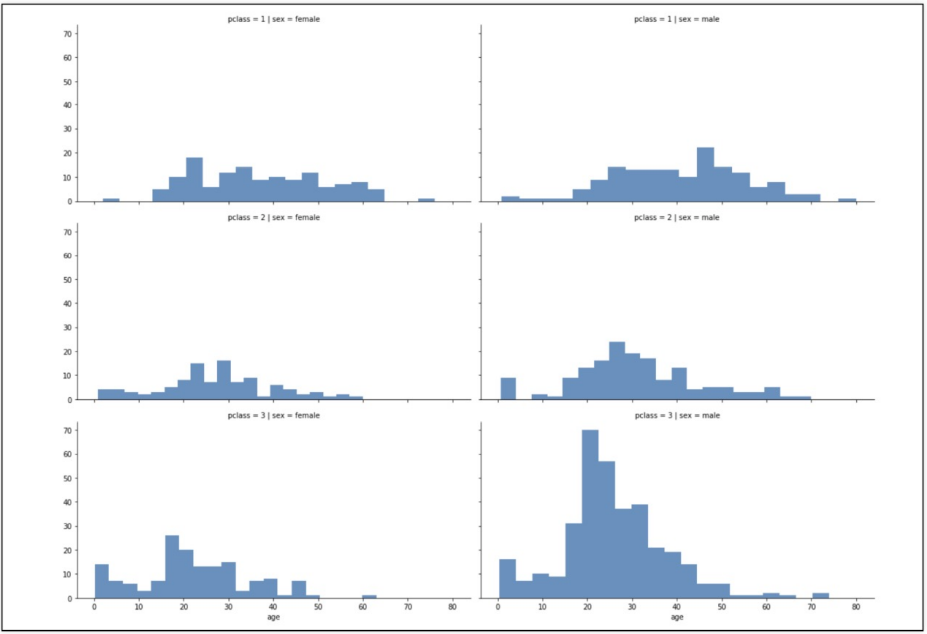

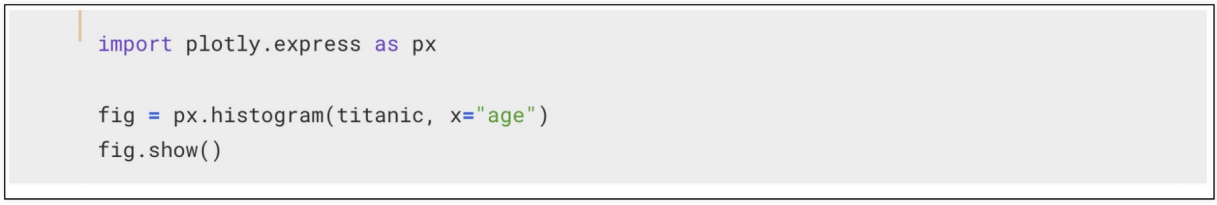

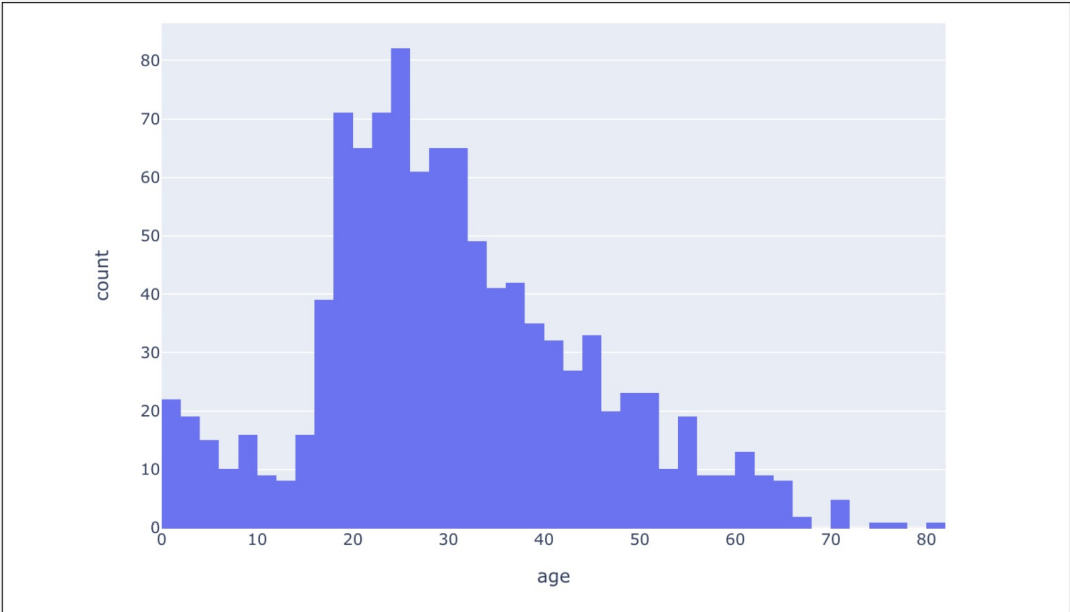

Passengers by age

- Let's also check how passengers are distributed by age.

- If you see "ValueError: Mime type rendering requires nbformat>=4.2.0 but it is not installed", install the nbformat module, restart the kernel, and run the cell again.

import plotly.express as px

fig = px.histogram(titanic, x='age')

fig.show()

- The histogram shows that there were many children and many passengers in their 20s and 30s.

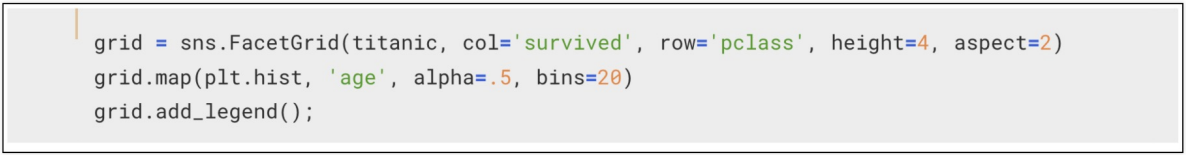

Let's look at survival by cabin class across ages

grid = sns.FacetGrid(titanic, col='survived', row='pclass', height=4, aspect=2)

grid.map(plt.hist, 'age', alpha=.5, bins=20)

grid.add_legend()

- Passengers in higher cabin classes do appear to have had a higher survival rate.

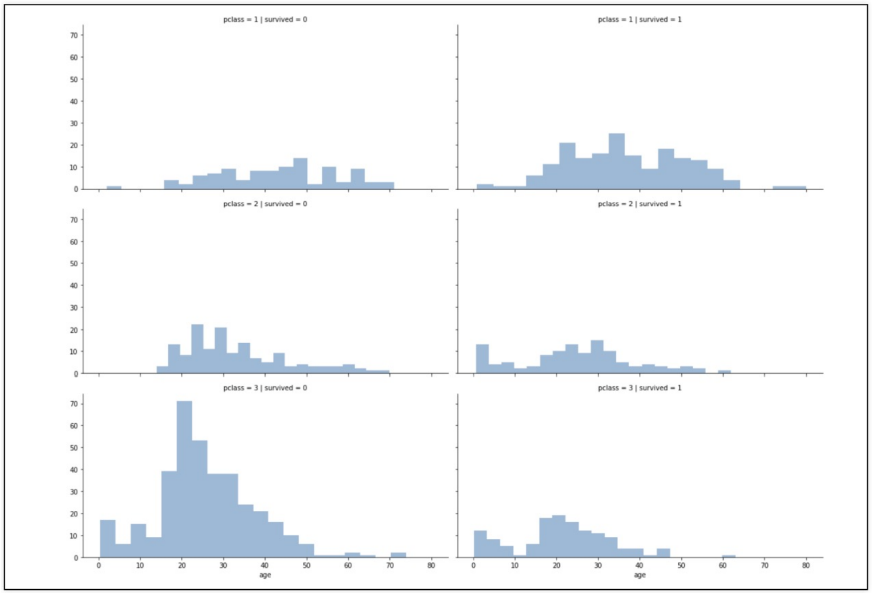

Binning age into 5 categories
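Before applying the binning to the dataset, it may help to see where the bin edges fall. The sketch below uses the same `bins` and `labels` as this section on a handful of made-up ages chosen to sit on the boundaries. Each bin includes its right edge, and `include_lowest=True` additionally lets age 0 fall into the first bin.

```python
import pandas as pd

# Made-up ages that sit on or next to the bin edges.
ages = pd.Series([0, 7, 8, 15, 30, 31, 60, 61])

age_cat = pd.cut(
    ages,
    bins=[0, 7, 15, 30, 60, 100],
    include_lowest=True,  # without this, age 0 would become NaN
    labels=["baby", "teen", "young", "adult", "old"],
)

print(pd.DataFrame({"age": ages, "age_cat": age_cat}))
```

Missing ages stay missing after `pd.cut`, so rows without an age get no category.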

titanic['age_cat'] = pd.cut(titanic['age'], bins=[0,7,15,30,60,100], include_lowest=True,
                            labels=['baby', 'teen', 'young', 'adult', 'old'])

titanic.head()

Can we see survival by age, sex, and cabin class all at once?

plt.figure(figsize=(12,4))

plt.subplot(131)

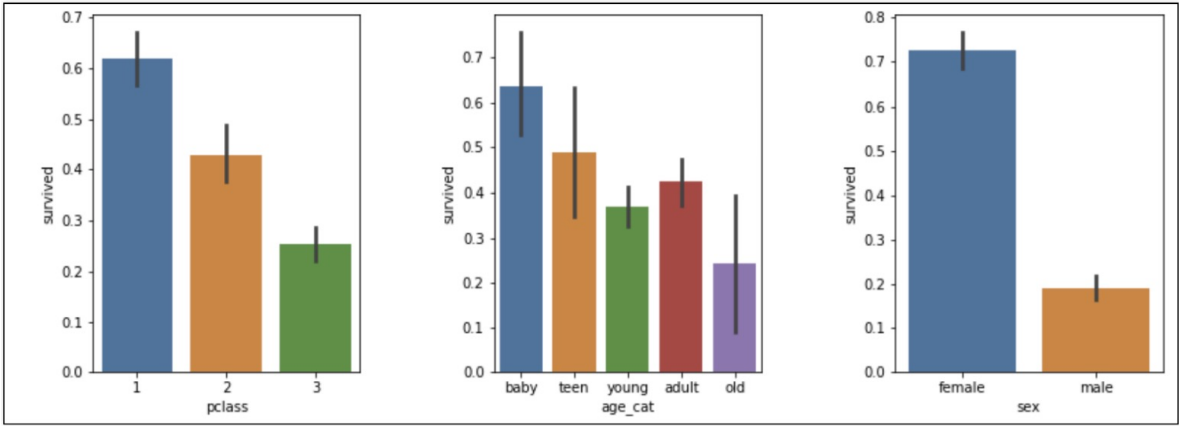

sns.barplot(x="pclass", y='survived', data=titanic)

plt.subplot(132)

sns.barplot(x='age_cat', y='survived', data=titanic)

plt.subplot(133)

sns.barplot(x='sex', y='survived', data=titanic)

plt.subplots_adjust(top=1, bottom=0.1, left=0.1, right=1, hspace=0.5, wspace=0.5)

- Was survival more likely for passengers who were younger, female, and in first class?
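The bar plots look at one factor at a time. To combine factors in a single table, a pivot table is one option. This is a sketch on invented data, not the author's method; the column names match the dataset but the values do not.

```python
import pandas as pd

# Toy data with two of the factors discussed above.
toy = pd.DataFrame({
    "pclass":   [1, 1, 1, 1, 3, 3, 3, 3],
    "sex":      ["female", "female", "male", "male", "female", "female", "male", "male"],
    "survived": [1, 1, 1, 0, 1, 0, 0, 0],
})

# Mean of the 0/1 survived column for every (pclass, sex) combination.
table = toy.pivot_table(index="pclass", columns="sex", values="survived", aggfunc="mean")
print(table)
```

On the real DataFrame, `age_cat` could be added to `index` to break the table down further.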

Let's take a closer look at survival by age for men and women

fig, axes = plt.subplots(nrows=1, ncols=2, figsize=(14,6))

women = titanic[titanic['sex']=='female']

men = titanic[titanic['sex']=='male']

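# Note: sns.distplot is deprecated since seaborn 0.11; sns.histplot is its replacement for histograms.

ax = sns.distplot(women[women['survived']==1]['age'], bins=20, label='survived', ax=axes[0], kde=False)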

ax = sns.distplot(women[women['survived']==0]['age'], bins=40, label='not_survived', ax=axes[0], kde=False)

ax.legend()

ax.set_title('Female')

ax = sns.distplot(men[men['survived']==1]['age'], bins=18, label='survived', ax=axes[1], kde=False)

ax = sns.distplot(men[men['survived']==0]['age'], bins=40, label='not_survived', ax=axes[1], kde=False)

ax.legend()

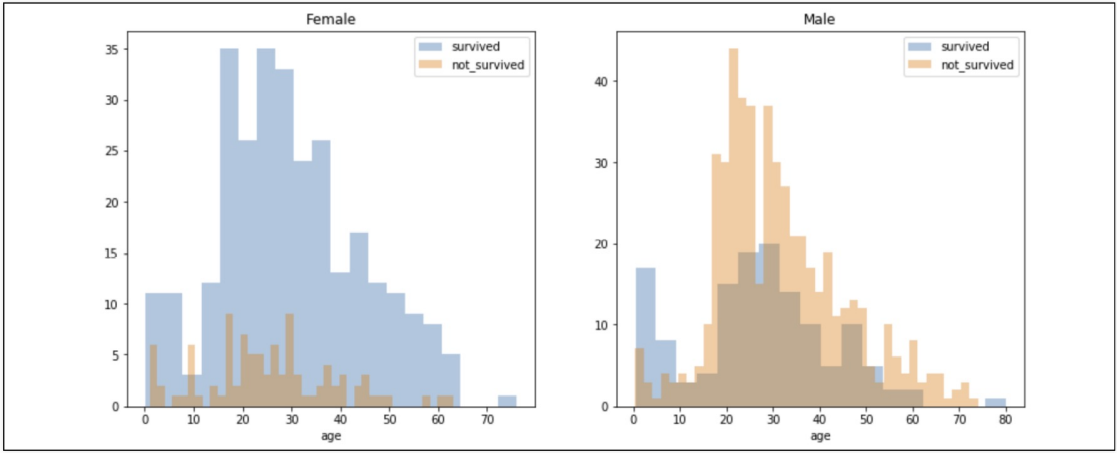

ax.set_title('Male')

- What does this result mean?

- Women survived in large numbers across all ages, whereas among men survivors become scarce beyond early childhood.

Social status can be read from passenger names

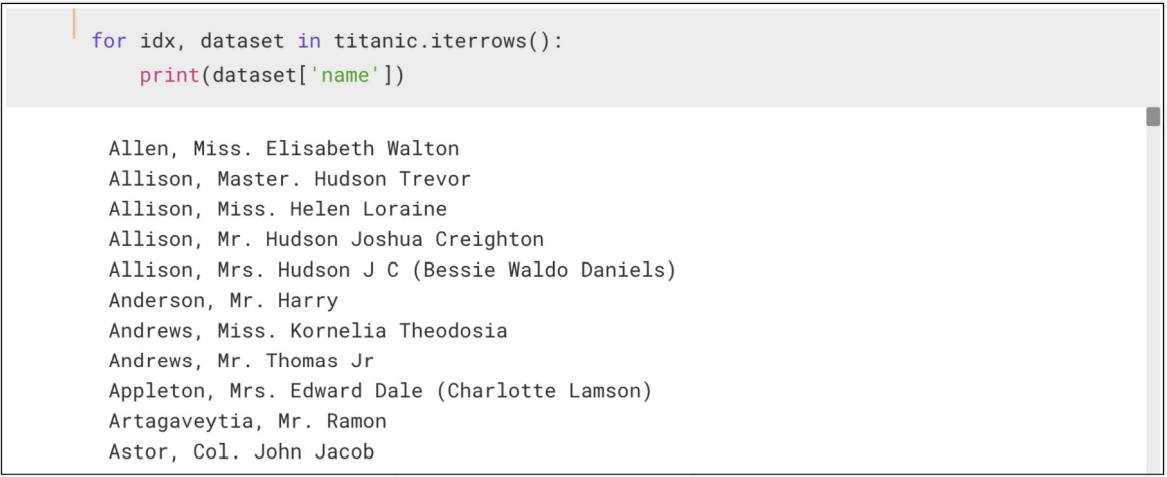

for idx, dataset in titanic.iterrows():

    print(dataset['name'])

- A regular expression can pull the title, which sits in the middle of each name, out of the string.

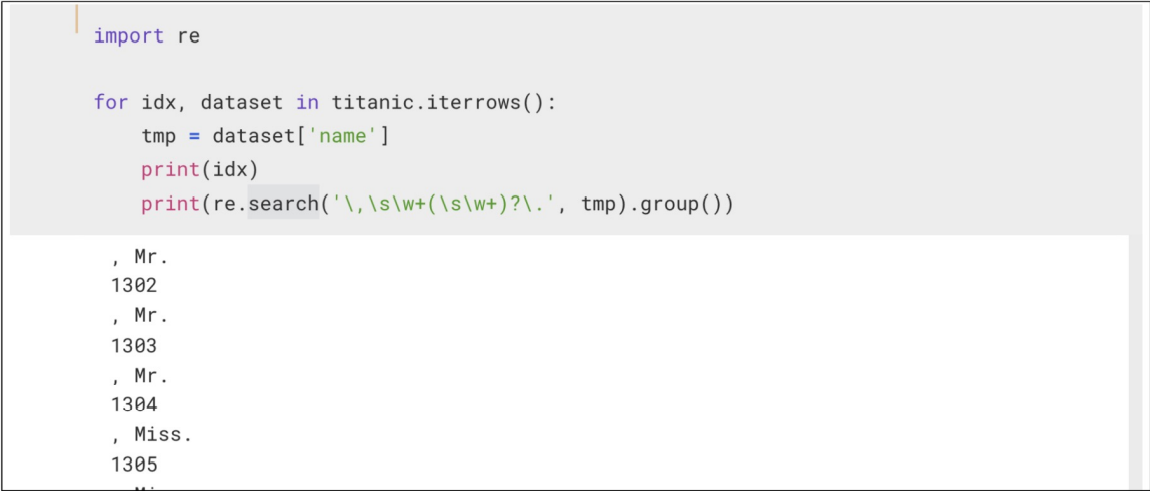

- '\,\s\w+(\s\w+)?\.' : \, (starts with a comma), \s (one whitespace character), \w+ (one or more word characters), ? (the preceding group is optional, so the title may be one or two words), \. (ends with a literal period)
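The pattern described above can be tried on a few names before running it over the whole column. In this sketch the helper `extract_title` and the `None` fallback for names without a title are additions for illustration; the sample strings follow the "Surname, Title. Given names" layout of the `name` column.

```python
import re

# Same pattern as described above, written as a raw string.
TITLE_PATTERN = re.compile(r"\,\s\w+(\s\w+)?\.")

def extract_title(name):
    """Return the title between the comma and the period, or None if absent."""
    match = TITLE_PATTERN.search(name)
    if match is None:
        return None
    # The match looks like ", Miss." so drop the leading ", " and the trailing ".".
    return match.group()[2:-1]

samples = [
    "Allen, Miss. Elisabeth Walton",
    "Braund, Mr. Owen Harris",
    "Rothes, the Countess. of (Lucy Noel Martha Dyer-Edwards)",
]
for name in samples:
    print(name, "->", extract_title(name))
```

The optional group is what lets a two-word title such as "the Countess" match as a whole.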

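import re

for idx, dataset in titanic.iterrows():

    tmp = dataset['name']

    print(idx)

    # A raw string (r'...') keeps Python from treating the backslashes as string escapes.

    print(re.search(r'\,\s\w+(\s\w+)?\.', tmp).group())

- With a small tweak: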

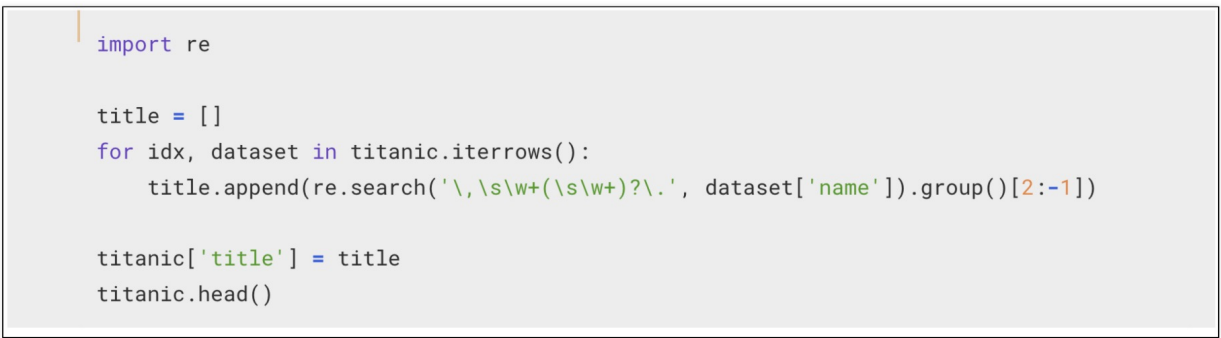

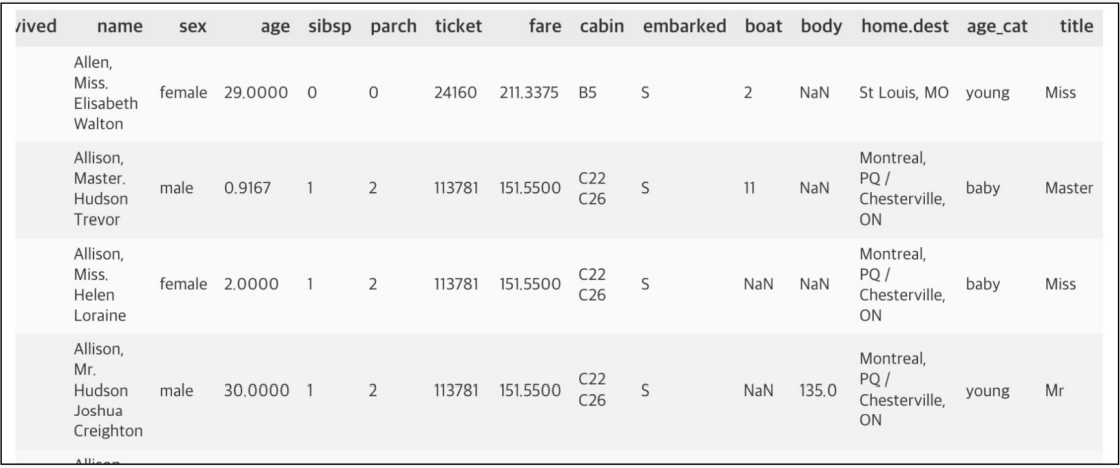

import re

title = []

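for idx, dataset in titanic.iterrows():

    # [2:-1] strips the leading ", " and the trailing "." from the match.

    title.append(re.search(r'\,\s\w+(\s\w+)?\.', dataset['name']).group()[2:-1])

titanic['title'] = title

titanic.head()

- This reveals each passenger's social title.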

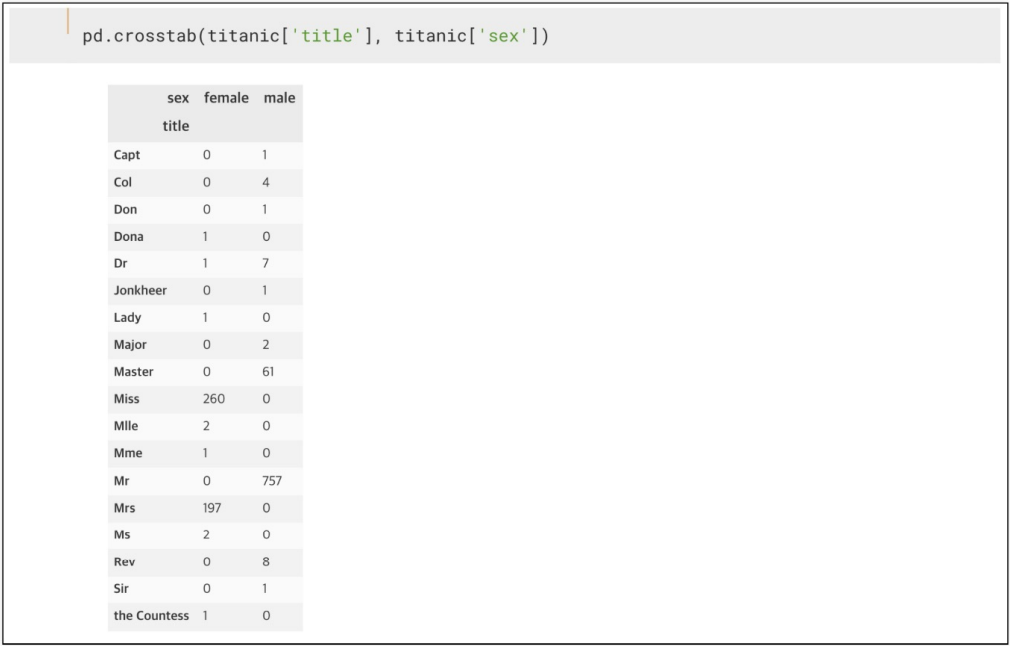

Titles of nobility by sex

pd.crosstab(titanic['title'], titanic['sex'])

- Let's tidy up the social titles a bit more.

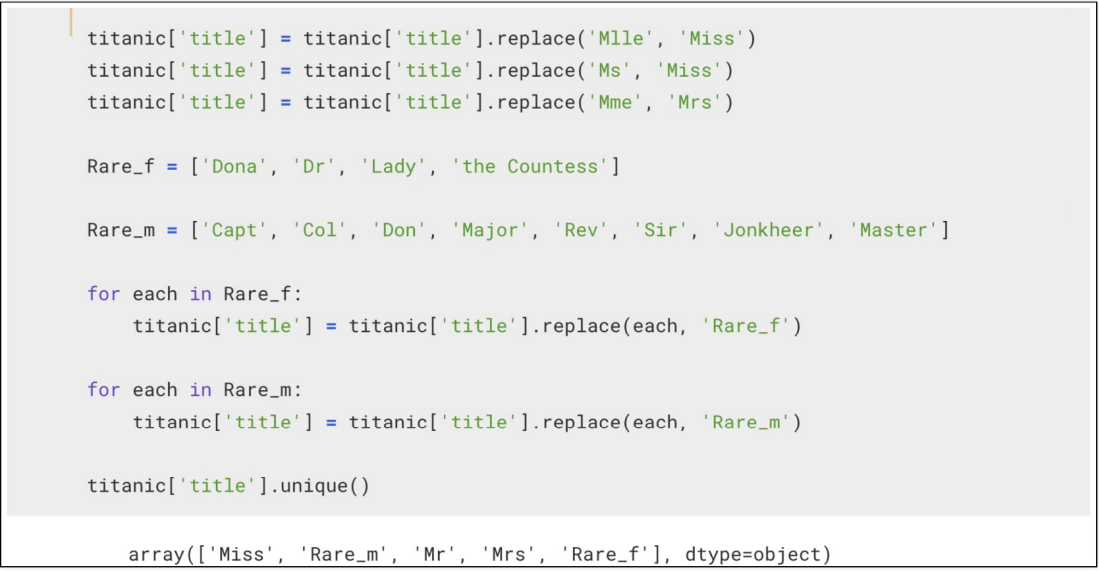

titanic['title'] = titanic['title'].replace('Mlle', 'Miss')

titanic['title'] = titanic['title'].replace('Ms', 'Miss')

titanic['title'] = titanic['title'].replace('Mme', 'Mrs')

Rare_f = ['Dona', 'Dr', 'Lady', 'the Countess']

Rare_m = ['Capt', 'Col', 'Don', 'Major', 'Rev', 'Sir', 'Jonkheer', 'Master']

for each in Rare_f:

    titanic['title'] = titanic['title'].replace(each, 'Rare_f')

for each in Rare_m:

    titanic['title'] = titanic['title'].replace(each, 'Rare_m')

titanic['title'].unique()

- Result

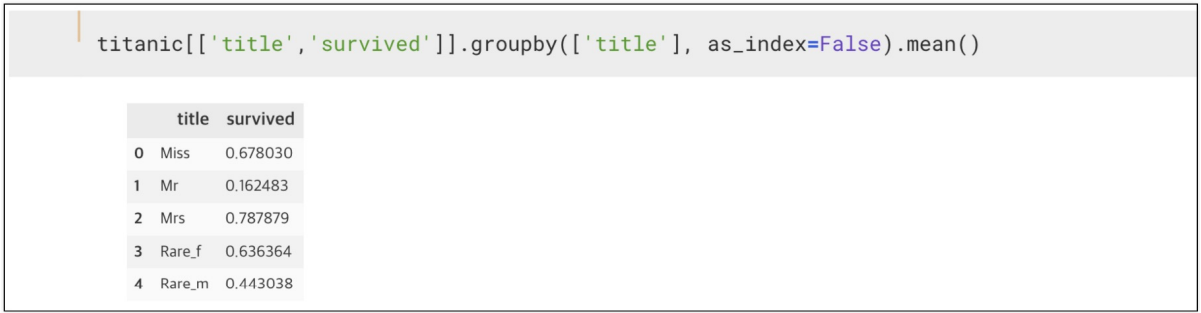
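The step-by-step replacements above could also be written as a single mapping passed to `Series.replace`. The sketch below is an alternative formulation on a small made-up Series, reusing the same title groups; it is not the approach taken in these notes.

```python
import pandas as pd

# Toy stand-in for titanic['title'].
titles = pd.Series(["Mr", "Mlle", "Ms", "Mme", "Lady", "Col", "Miss"])

# One dictionary that holds every old -> new title pair.
mapping = {"Mlle": "Miss", "Ms": "Miss", "Mme": "Mrs"}
mapping.update({t: "Rare_f" for t in ["Dona", "Dr", "Lady", "the Countess"]})
mapping.update({t: "Rare_m" for t in
                ["Capt", "Col", "Don", "Major", "Rev", "Sir", "Jonkheer", "Master"]})

# Values that are not keys of the mapping (e.g. "Mr") are left unchanged.
cleaned = titles.replace(mapping)
print(cleaned.tolist())
```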

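titanic[['title','survived']].groupby(['title'], as_index=False).mean()

The captain of the Titanic

The evacuation rule on the Titanic after it struck the iceberg

The Titanic's final moments

Predicting survivors with machine learning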

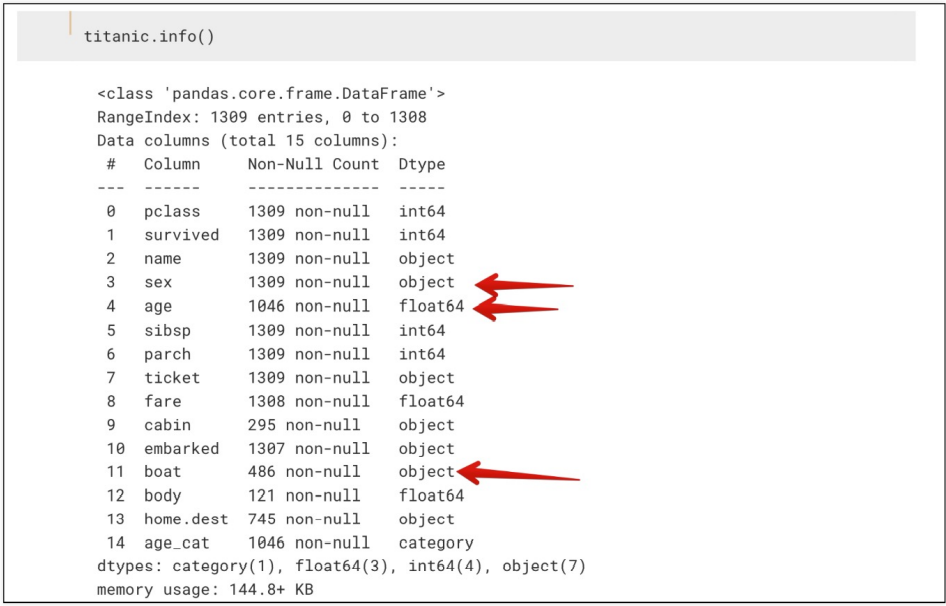

A quick look at the structure

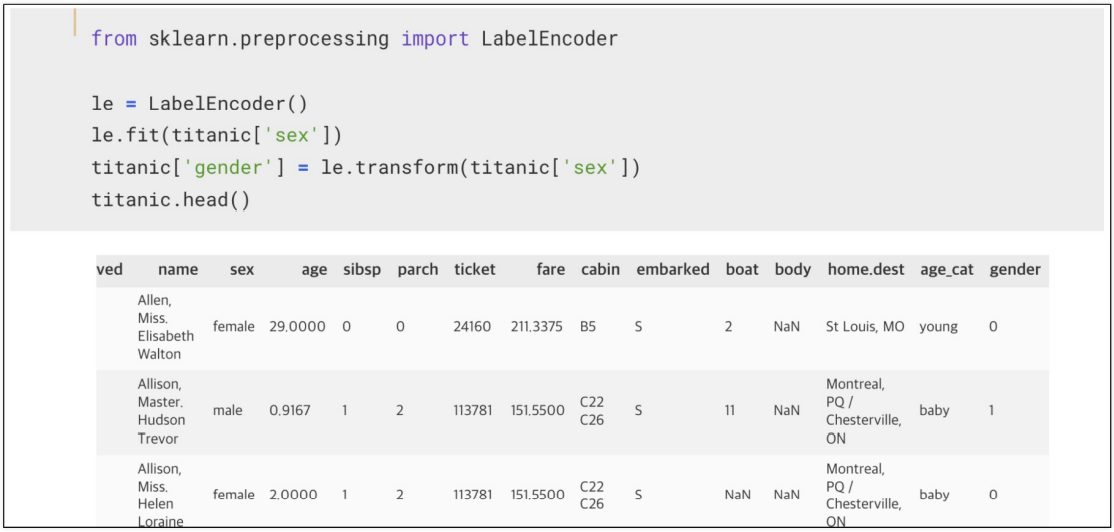

titanic.info()

Converting the sex column to numbers for machine learning

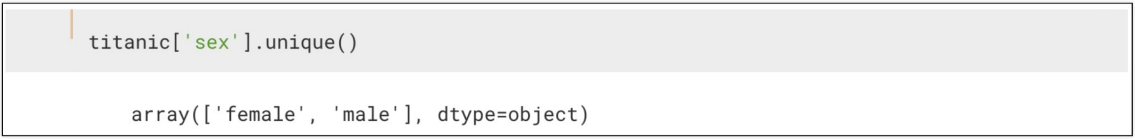

titanic['sex'].unique()

LabelEncoder makes this easy

- If the module is not installed, install it with pip install -U scikit-learn
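To make the encoding concrete, here is a minimal sketch on a made-up list of labels. `LabelEncoder` sorts the distinct values and numbers them from 0, so with these two labels "female" becomes 0 and "male" becomes 1.

```python
from sklearn.preprocessing import LabelEncoder

le = LabelEncoder()

# fit_transform learns the classes and encodes the labels in one call.
codes = le.fit_transform(["male", "female", "female", "male"])

print(list(le.classes_))                    # the learned classes, in sorted order
print(list(codes))                          # one integer per input label
print(list(le.inverse_transform([0, 1])))   # map integers back to labels
```

This ordering matters later: a hand-built passenger row has to use the same integer for each sex.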

from sklearn.preprocessing import LabelEncoder

le = LabelEncoder()

le.fit(titanic['sex'])

titanic['gender'] = le.transform(titanic['sex'])

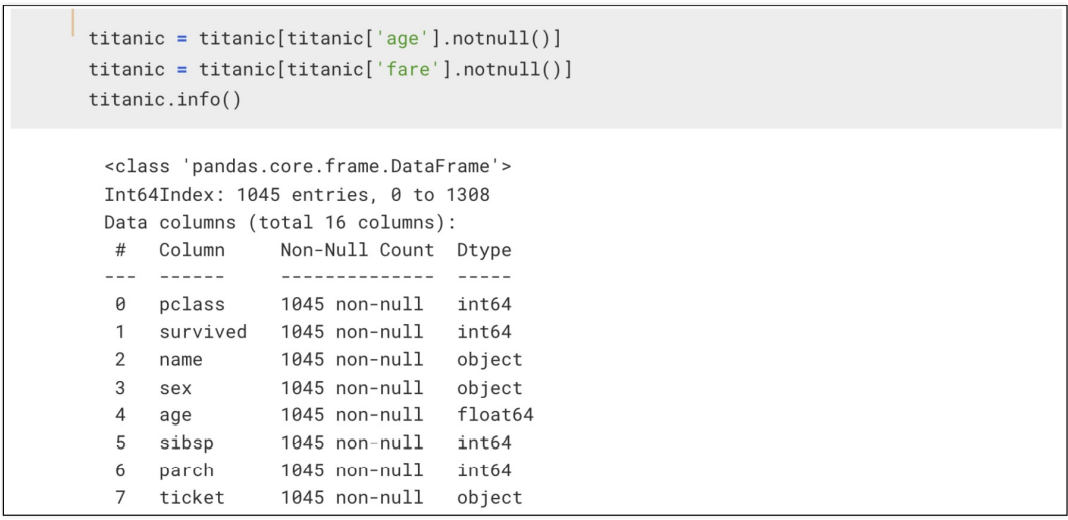

titanic.head()

- Rows with missing values are simply dropped, for lack of a better option.

titanic = titanic[titanic['age'].notnull()]

titanic = titanic[titanic['fare'].notnull()]

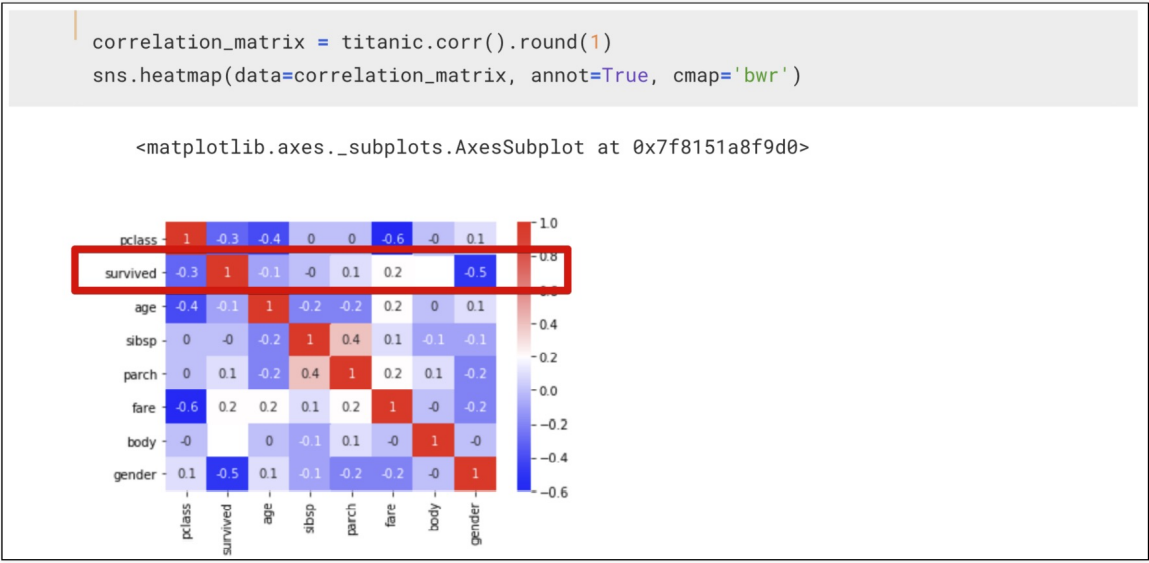

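titanic.info()

Correlation

# numeric_only=True skips text columns such as name and sex (required on pandas 2.0+, available since 1.5).

correlation_matrix = titanic.corr(numeric_only=True).round(1)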

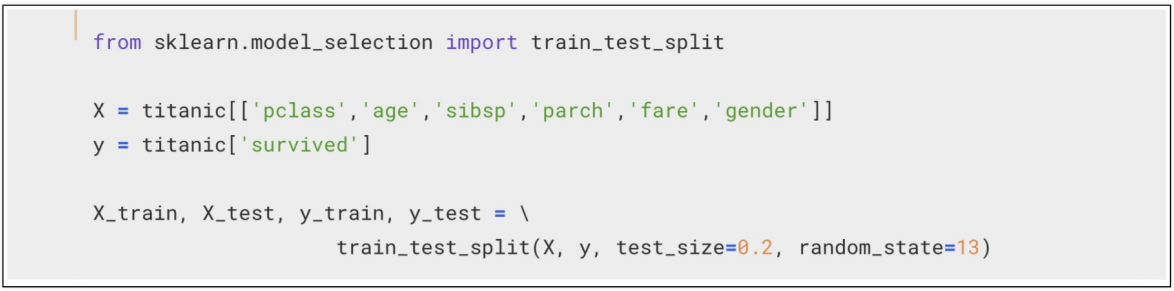

sns.heatmap(data=correlation_matrix, annot=True, cmap='bwr')

- First choose the features, then split the data.

- The selected features are pclass, age, sibsp, parch, fare, and gender.
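As a small illustration of what the split does, the sketch below applies `train_test_split` with the same `test_size` and `random_state` as this section to ten made-up rows. The arrays are placeholders, not the Titanic features.

```python
import numpy as np
from sklearn.model_selection import train_test_split

# Ten toy samples with two features each, and alternating labels.
X = np.arange(20).reshape(10, 2)
y = np.array([0, 1] * 5)

# test_size=0.2 holds out 20% of the rows; random_state makes the shuffle repeatable.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=13
)

print(X_train.shape, X_test.shape)
```

Running the split again with the same `random_state` returns the same rows, which keeps accuracy figures comparable between runs.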

from sklearn.model_selection import train_test_split

x = titanic[['pclass', 'age', 'sibsp', 'parch', 'fare', 'gender']]

y = titanic['survived']

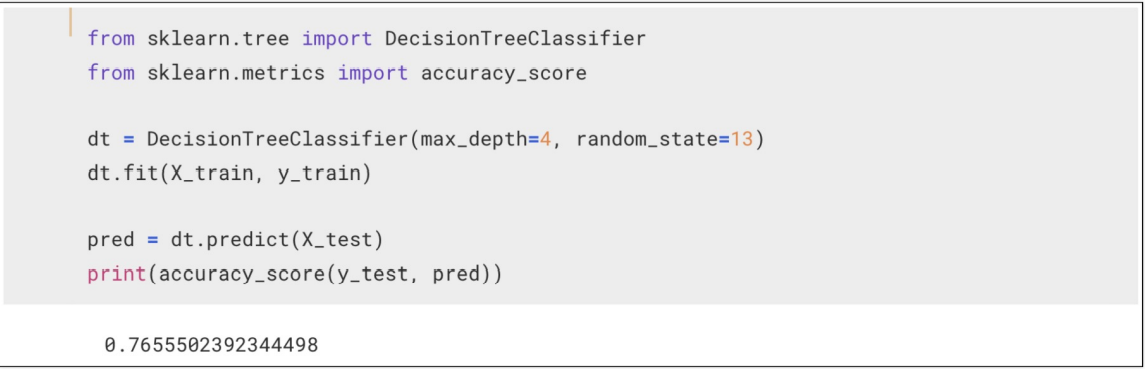

x_train, x_test, y_train, y_test = train_test_split(x, y, test_size=0.2, random_state=13)

- Start with a DecisionTree.

from sklearn.tree import DecisionTreeClassifier

from sklearn.metrics import accuracy_score

dt = DecisionTreeClassifier(max_depth=4, random_state=13)

dt.fit(x_train, y_train)

pred = dt.predict(x_test)

print(accuracy_score(y_test, pred))

Let's estimate the survival probability of the film's main characters

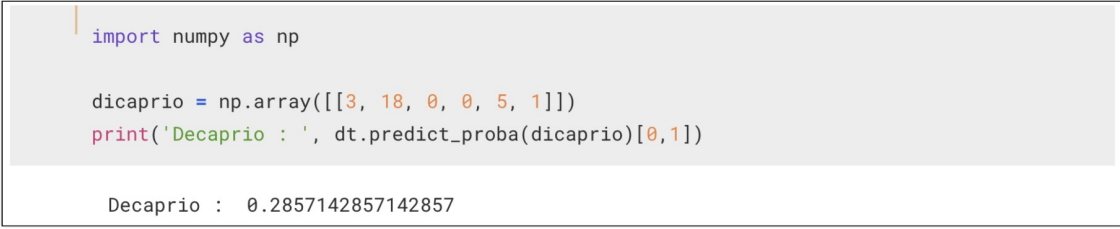

- DiCaprio's survival probability

import numpy as np

# Feature order: pclass=3, age=18, sibsp=0, parch=0, fare=5, gender=1 (male)

dicaprio = np.array([[3,18,0,0,5,1]])

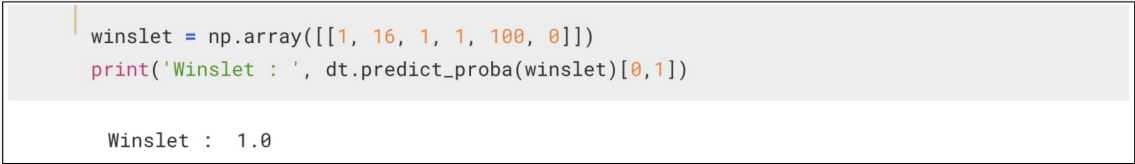

print('Dicaprio', dt.predict_proba(dicaprio)[0,1])

- Winslet's survival probability

# Feature order: pclass=1, age=16, sibsp=1, parch=1, fare=100, gender=0 (female)

winslet = np.array([[1, 16, 1, 1, 100, 0]])

print('Winslet', dt.predict_proba(winslet)[0,1])
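The `[0,1]` index used above picks the first (and only) row and the second column of `predict_proba`, which corresponds to class 1 (survived) because the columns follow `classes_`. The sketch below shows this on four invented training rows; it also passes the query as a DataFrame with the same column names, which is one way to avoid scikit-learn's feature-name warning. The toy model is an assumption for illustration and says nothing about the real prediction.

```python
import pandas as pd
from sklearn.tree import DecisionTreeClassifier

features = ["pclass", "age", "sibsp", "parch", "fare", "gender"]

# Four invented passengers: two first-class women who survived,
# two third-class men who did not.
toy_X = pd.DataFrame(
    [[1, 30, 0, 0, 80, 0],
     [1, 40, 1, 0, 90, 0],
     [3, 25, 0, 0, 8, 1],
     [3, 35, 0, 0, 7, 1]],
    columns=features,
)
toy_y = [1, 1, 0, 0]

clf = DecisionTreeClassifier(max_depth=4, random_state=13).fit(toy_X, toy_y)

# Query row built with the same column names the model was trained on.
passenger = pd.DataFrame([[3, 18, 0, 0, 5, 1]], columns=features)
proba = clf.predict_proba(passenger)

print(list(clf.classes_))  # column order of predict_proba
print(proba[0, 1])         # probability of class 1 (survived) for the first row
```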