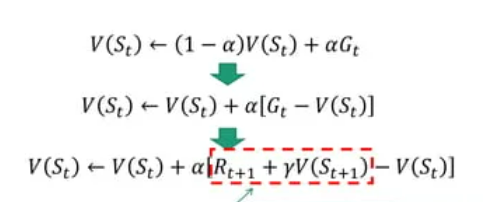

1. Temporal-Difference(TD) Learning

-

다음 State의 추정치(V(S_t+1))로 현재 State의 추정값(V(S_t))을 업데이트

-

TD(0): 주어진 Policy 평가

-

MC vs TD

- MC: full sequence of state 요구

- TD: only a step으로만 Learning 가능

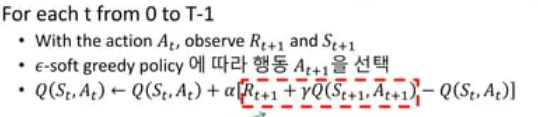

2. SARSA

-

value function 대신에 Q function(action-value fun)을 바로 학습

-

알고리즘

- 첫 state(S_0) 임의로 샘플링

- epsilon-soft greedy policy에 따라 행동 A0 선택

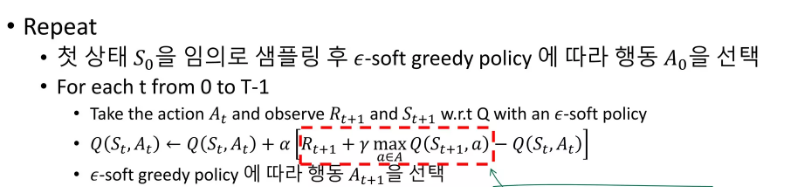

3. Q-Learning

-> SARSA와 Target이 다름

4. Gym

- 강화학습 알고리즘을 개발, 비교하기 위한 오픈 소스 Python 라이브러리

- 고전 게임을 기반으로 강화학습을 사용할 수 있는 기본적인 환경과 기본적인 강화학습 알고리즘들이 패키지로 준비되어 있는 Toolkit

-

API

1) env.reset() : 초기화

2) env.step(action): 주어진 action 실행, 리턴

3) env.render(): render ANSI text, image -

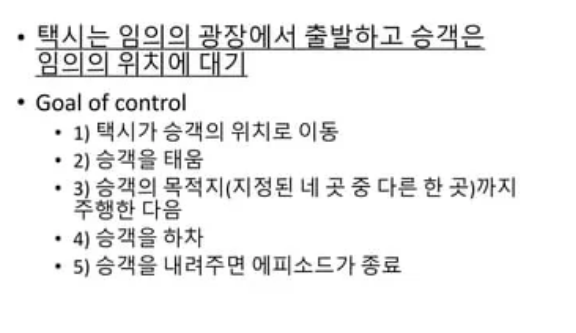

실습: Taxi Problem

# gym 설치

!pip install gym[atari,toy_text,accept_rom_license]

import numpy as np

import random

import gym

env = gym.make('Taxi-v3', new_step_api=True)

# step types

STEPTYPE_FIRST = 0

STEPTYPE_MID = 1

STEPTYPE_LAST = 2

Q = np.random.uniform(size=(500, 6))

# 환경의 첫 상태로 초기화

env.reset()

# action 0 수행

env.step(0)# wrapper for gym's blackjack environment

def generate_start_step():

return { 'observation': env.reset(), 'reward': 0., 'step_type': STEPTYPE_FIRST }

def generate_next_step(step, action):

obs, reward, done, _, info = env.step(action)

step_type = STEPTYPE_LAST if done else STEPTYPE_MID

return { 'observation': obs, 'reward': reward, 'step_type': step_type }

epsilon = 0.1

def get_eps_soft_action(step):

# epsilon-soft greedy policy

if random.random() < epsilon:

return np.random.choice(6, 1)[0]

else:

observ = step['observation']

return np.argmax(Q[observ])

def get_greedy_action(step):

observ = step['observation']

return np.argmax(Q[observ])

def get_random_action(step):

return random.randint(0, env.action_space.n-1)

behavior_prob_hit = 1. / float(env.action_space.n)

# return true if (observ, action) exists in epi

def in_episode(epi, observ, action):

for s, a in zip(*epi):

if s['observation'] == observ and a == action:

return True

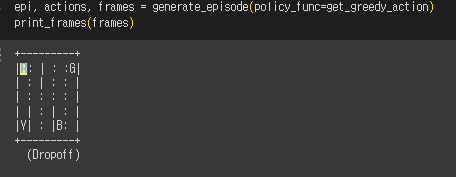

return False- episode 생성

def generate_episode(policy_func=get_random_action):

episode = list()

actions = list()

frames = list()

step = generate_start_step()

frames.append(env.render(mode='ansi'))

episode.append(step)

while step['step_type'] != STEPTYPE_LAST:

action = policy_func(step)

step = generate_next_step(step, action)

frames.append(env.render(mode='ansi'))

episode.append(step)

actions.append(action)

return episode, actions, frames- 학습

from IPython.display import clear_output

from time import sleep

def print_frames(frames):

for i, frame in enumerate(frames):

clear_output(wait=True)

print(frame)

sleep(.2)

maxiter = 100000

gamma = 1

epsilon = 0.3

lr_rate = 0.8

Q = np.random.uniform(size=(env.observation_space.n, env.action_space.n))

for _ in range(maxiter):

# starting step

step = generate_start_step()

action = get_random_action(step)

done = False

while not done:

next_step = generate_next_step(step, action)

if next_step['step_type'] == STEPTYPE_LAST:

state = step['observation']

idx1 = (state, action)

Q[idx1] = Q[idx1] + lr_rate * (next_step['reward'] - Q[idx1])

done = True

else:

best_action = get_greedy_action(next_step)

state = step['observation']

next_state = next_step['observation']

idx1 = (state, action)

idx2 = (next_state, best_action)

Q[idx1] = Q[idx1] + lr_rate * ((next_step['reward'] + gamma * Q[idx2]) - Q[idx1])

next_action = get_eps_soft_action(step)

step = next_step

action = next_action

5. Custom Gym Environments

- 실습: shooting