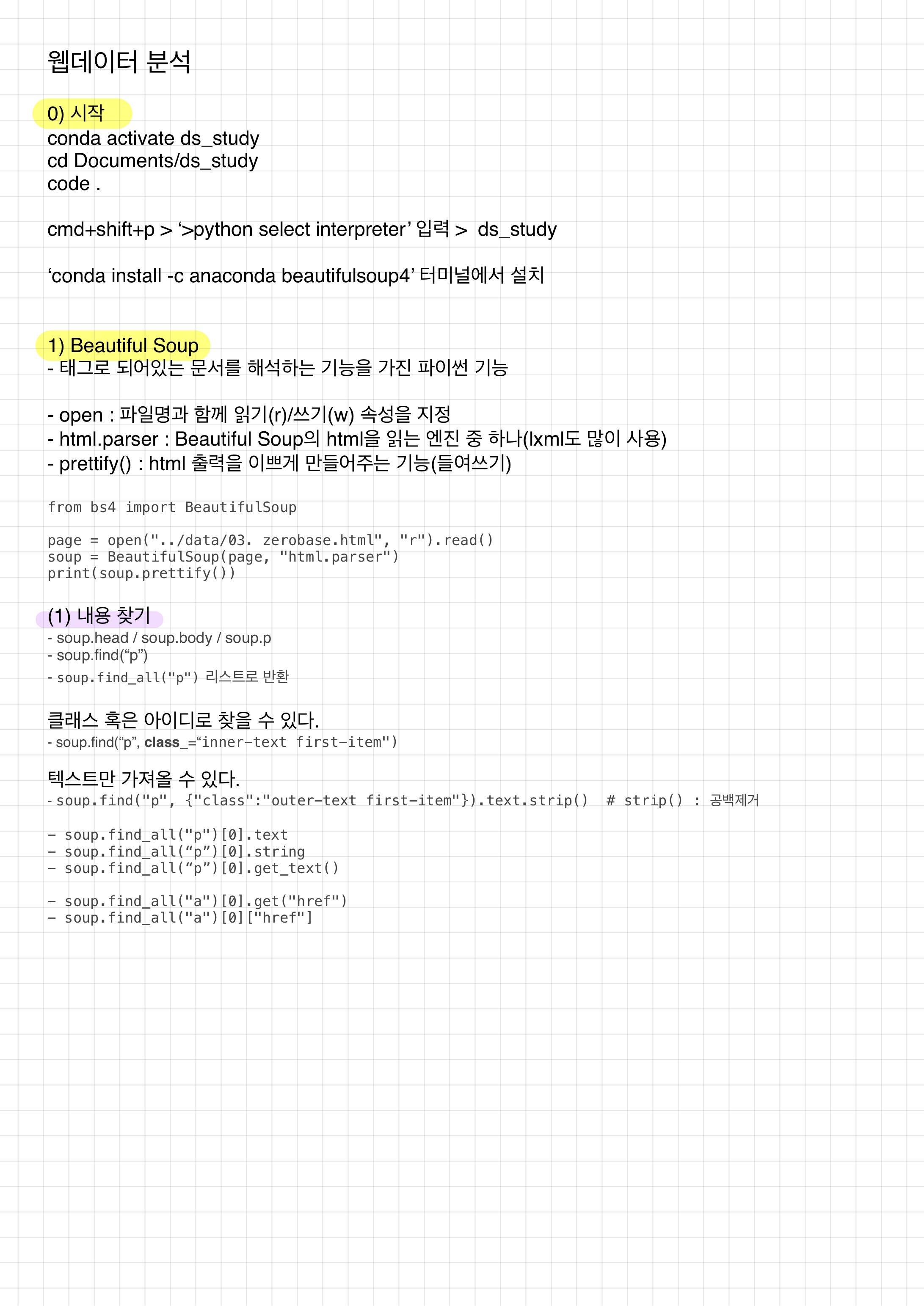

-

사용법 다르다.

-find, findall : soup.find("p", class="inner-text first-item")

-select_one, select : soup.select("#exchangeList > li") -

두 가지 모듈

-from urllib.request import urlopen

-import requests

네이버 금융데이터 가져오기

from urllib.request import urlopen

from bs4 import BeautifulSoup

url = "https://finance.naver.com/marketindex/"

page = urlopen(url)

soup = BeautifulSoup(page, "html.parser")

print(soup.prettify())

soup.find_all("span", "value")

# span 태그의 value 클래스

#- find, select_one : 단일선택

#- find_all, select : 다중선택

import requests

from bs4 import BeautifulSoup

url = "https://finance.naver.com/marketindex/"

response = requests.get(url)

soup = BeautifulSoup(response.text, "html.parser")

print(soup.prettify())

# id => #, class => .

exchangeList = soup.select("#exchangeList > li")

exchange_datas = []

baseUrl = "https://finance.naver.com"

for item in exchangeList:

data = {

"title" : item.select_one(".h_lst").text,

"exchange" : item.select_one(".value").text,

"change" : item.select_one(".change").text,

"updown" : item.select_one("div.head_info.point_up > .blind").text,

"link" : baseUrl + item.select_one("a").get("href")

}

exchange_datas.append(data)

df = pd.DataFrame(exchange_datas)

df.to_excel("./naverfinance.xlsx", encoding="utf-8")위키백과 문서정보 가져오기

import urllib

from urllib.request import urlopen, Request

html = "https://ko.wikipedia.org/wiki/{search_words}"

req = Request(html.format(search_words=urllib.parse.quote("여명의_눈동자"))) # 글자를 URL로 인코딩

response = urlopen(req)

soup = BeautifulSoup(response, "html.parser")

soup.find_all("ul")[32].text.strip().replace("\xa0", "").replace("\n", "")