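Automatic Differentiation

- torch.autograd automatically computes the derivatives needed for optimization by gradient descent.

- The gradient vector at a point x gives the direction in which the function f(x) changes fastest, together with the magnitude of that change.

- torch builds a computational graph and applies the chain rule along it to compute derivatives automatically.

- Derivatives can be computed with torch.autograd.grad() or tensor.backward(). In particular, tensor.backward() computes them by traversing the graph in reverse, as backpropagation requires.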

Tensor.backward()

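- backward() computes the gradient of the current tensor with respect to the leaf tensors of the graph.

- Compute the loss between the targets and the predictions, then call backward() on the loss tensor to compute, in the reverse direction, the rate of change with respect to each model parameter.

- Gradients accumulate in the grad attribute, so when a tensor is used in several backward passes, reset grad to 0 with grad.zero_().

- If gradient = None, the tensor must be a scalar, and its derivative is computed directly.

- For a 1-D tensor, pass as gradient a tensor of ones with the same shape as that tensor.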
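The points above about accumulation and the gradient argument can be sketched as follows. This is a minimal illustration, not part of the original notes; the variable names (accumulated, fresh, raised) are chosen here for the example only.

```python
import torch

x = torch.tensor(2.0, requires_grad=True)

# Two backward passes without a reset: the gradients add up in x.grad.
(x ** 2).backward()            # d(x^2)/dx = 4 at x = 2
(x ** 2).backward()
accumulated = x.grad.clone()   # 4 + 4

# Resetting first leaves only the gradient of the latest pass.
x.grad.zero_()
(x ** 2).backward()
fresh = x.grad.clone()

# A non-scalar output needs an explicit `gradient` argument.
v = torch.tensor([1.0, 2.0, 3.0], requires_grad=True)
out = v * 2
try:
    out.backward()             # gradient=None on a 1-D tensor
    raised = False
except RuntimeError:
    raised = True
out.backward(gradient=torch.ones_like(out))

print(accumulated, fresh, raised, v.grad)
```

Cloning x.grad before the reset matters because zero_() works in place on the same tensor.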

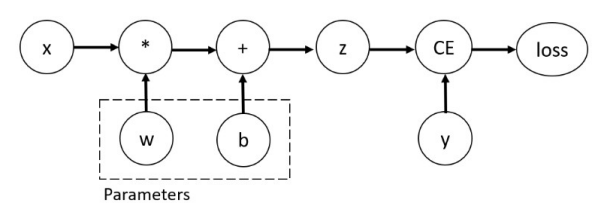

Computational graph example

Differentiation using a computational graph

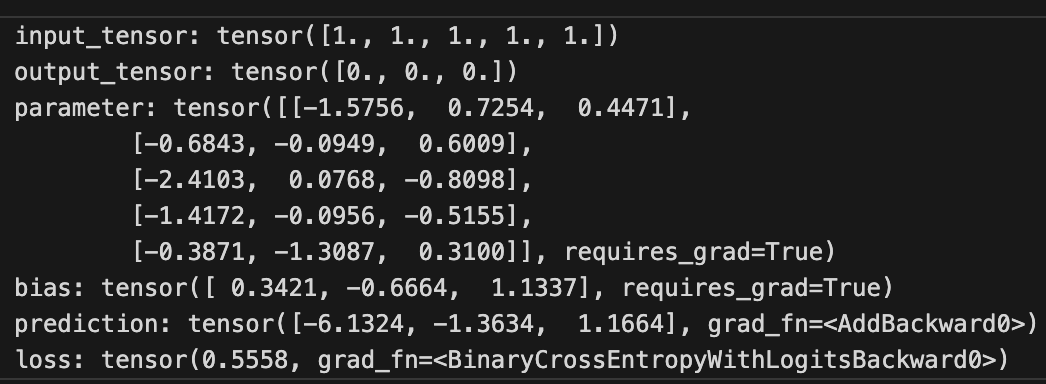

import torch

x = torch.ones(5)

y = torch.zeros(3)

w = torch.randn(5, 3, requires_grad = True)

b = torch.randn(3, requires_grad = True)

z = torch.matmul(x, w) + b

loss = torch.nn.functional.binary_cross_entropy_with_logits(z, y)

print('input_tensor:', x)

print('output_tensor:', y)

print('parameter:', w)

print('bias:', b)

print('prediction:', z)

print('loss:', loss)

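- Automatic differentiation is available only for tensors of floating-point or complex dtype.

- Set requires_grad = True when creating a tensor whose gradient is needed.

- requires_grad can also be enabled later, after the tensor has been created.

- To separate a tensor from the computational graph, use detach().

- The code above corresponds to the computational graph in the figure above.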
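As a rough sketch of the last few points, the same graph can be built with tracking enabled after creation, followed by the backward pass that the example above stops short of. The use of requires_grad_() and the name z_det are choices made for this illustration.

```python
import torch

x = torch.ones(5)
y = torch.zeros(3)
w = torch.randn(5, 3)          # created without gradient tracking
b = torch.randn(3)
w.requires_grad_(True)         # enable tracking after creation
b.requires_grad_(True)

z = torch.matmul(x, w) + b
loss = torch.nn.functional.binary_cross_entropy_with_logits(z, y)
loss.backward()                # fills w.grad and b.grad

z_det = z.detach()             # same values, no link to the graph

print(w.grad.shape, b.grad.shape)
print(z.requires_grad, z_det.requires_grad)
```

After backward(), w.grad and b.grad have the same shapes as w and b, which is what an optimizer step would consume.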

Computing numerical derivatives

def f1(x):

    return x**2

def diff(f, x):

    # central difference approximation of df/dx

    h = 0.001

    return (f(x+h) - f(x-h))/(2*h)

x = torch.tensor(2.0)

print('x=:', x)

dx = diff(f1, x)

print('dx=:', dx)

Output:

x=: tensor(2.)

dx=: tensor(3.9999)

- x is a real-valued input tensor.

- The diff function approximates the derivative of f with a central difference around x.

- The derivative of f1 at x is assigned to dx and printed.
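The printed 3.9999 differs from the exact value 4 presumably because of float32 rounding. The sketch below repeats the calculation in float64 and compares it with a one-sided (forward) difference. The helper names central_diff and forward_diff are hypothetical and not part of the original notes.

```python
import torch

def f1(x):
    return x ** 2

def central_diff(f, x, h=1e-3):
    # symmetric difference around x
    return (f(x + h) - f(x - h)) / (2 * h)

def forward_diff(f, x, h=1e-3):
    # one-sided difference, for comparison
    return (f(x + h) - f(x)) / h

x64 = torch.tensor(2.0, dtype=torch.float64)
err_central = abs(central_diff(f1, x64) - 4.0)
err_forward = abs(forward_diff(f1, x64) - 4.0)
print(err_central.item(), err_forward.item())
```

For a quadratic such as x**2 the central difference is exact up to rounding, while the forward difference carries an error of about h.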

def f2(x):

    return torch.sum(x**2)

def gradient(f, x):

    # central difference for each component of x

    h = 0.001

    grad = torch.zeros_like(x)

    for i in range(x.shape[0]):

        tmp = float(x[i])

        x[i] = tmp + h

        f_plus = f(x)

        x[i] = tmp - h

        f_minus = f(x)

        x[i] = tmp  # restore the original value

        grad[i] = (f_plus - f_minus)/(2*h)

    return grad

x = torch.tensor([2.0])

grad1 = gradient(f2, x)

print('grad1:', grad1)

Output:

grad1: tensor([3.9999])

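- x is a real-valued input tensor.

- The gradient function applies the same central difference as diff in a loop, one component at a time, and returns the resulting derivative values.

- The result is the same value that diff returned.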

x2 = torch.tensor([2.0, 3.0])

grad2 = gradient(f2, x2)

print('grad2:', grad2)

Output (the last digits reflect float32 rounding):

grad2: tensor([4.0002, 5.9996])

x3 = torch.tensor([2.0, 3.0, 4.0, 5.0])

grad3 = gradient(f2, x3)

print('grad3:', grad3)

Output (the last digits reflect float32 rounding):

grad3: tensor([ 3.9997,  5.9986,  7.9994, 10.0002])

- Because the gradient function differentiates one component at a time, it returns the partial derivative for every component even when the input tensor has more than one element.
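One way to check a numerical gradient like this is to compare it with what autograd returns for the same function. The sketch below does that in float64. The function numerical_gradient is a hypothetical variant written for this example; it works on a copy so the input stays untouched.

```python
import torch

def f2(x):
    return torch.sum(x ** 2)

def numerical_gradient(f, x, h=1e-3):
    # Central difference per component, computed on a detached copy of x.
    x = x.detach().clone()
    grad = torch.zeros_like(x)
    for i in range(x.shape[0]):
        orig = x[i].item()
        x[i] = orig + h
        f_plus = f(x)
        x[i] = orig - h
        f_minus = f(x)
        x[i] = orig                      # restore before the next component
        grad[i] = (f_plus - f_minus) / (2 * h)
    return grad

x = torch.tensor([2.0, 3.0, 4.0, 5.0], dtype=torch.float64, requires_grad=True)
f2(x).backward()                         # autograd gradient, stored in x.grad
num = numerical_gradient(f2, x)
print(torch.allclose(num, x.grad, atol=1e-6))
```

The numerical version needs two function evaluations per component, whereas backward() obtains all components in a single pass.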

Computing automatic derivatives

x = torch.tensor(2.0, requires_grad = True)

y = x**2

print('x=:', x)

print('y=:', y)

print('detached x:', x.detach().numpy())

print('detached y:', y.detach().numpy())

y.retain_grad()

y.backward()

print('x.grad=:', x.grad)

print('y.grad:', y.grad)

Output:

x=: tensor(2., requires_grad=True)

y=: tensor(4., grad_fn=<PowBackward0>)

detached x: 2.0

detached y: 4.0

x.grad=: tensor(4.)

y.grad: tensor(1.)

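- With requires_grad = True, the tensor is tracked in the computational graph, which makes automatic differentiation possible.

- retain_grad() is normally used on intermediate nodes; it keeps the gradient of such a tensor, which would otherwise not be stored.

- backward() computes the rates of change in the reverse direction.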
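The effect of retain_grad() can be seen by running the same computation with and without it. This is a small sketch with names chosen for the illustration. The warning filter is there only because reading .grad on a non-leaf tensor may emit a warning.

```python
import warnings
import torch

x = torch.tensor(2.0, requires_grad=True)

# Without retain_grad(): the intermediate tensor does not keep its gradient.
y = x ** 2
z = 3 * y
z.backward()
with warnings.catch_warnings():
    warnings.simplefilter("ignore")
    grad_without = y.grad          # None for a non-leaf tensor

# With retain_grad(): dz/dy is stored on the intermediate tensor.
x.grad.zero_()
y2 = x ** 2
y2.retain_grad()
z2 = 3 * y2
z2.backward()

print(y.is_leaf, grad_without, y2.grad, x.grad)
```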

x = torch.tensor(2.0, requires_grad = True)

y = x**2

y.backward()

print('x=:', x)

print('x.grad=:', x.grad)

x.grad.zero_()

print('x.grad=:', x.grad)

z = x**3

z.backward()

print('x.grad=:', x.grad)

Output:

x=: tensor(2., requires_grad=True)

x.grad=: tensor(4.)

x.grad=: tensor(0.)

x.grad=: tensor(12.)

- x.grad holds the derivative evaluated at x = 2.0.

- x.grad.zero_() resets the previously computed gradient tensor to 0.

- The final x.grad is 12.0, since d(x^3)/dx = 3x^2 = 12 at x = 2.

x = torch.tensor(2.0, requires_grad = True)

y = torch.tensor(3.0, requires_grad = True)

z = x**2 + y **2

z.backward()

print('x=:', x)

print('y=:', y)

print('z=:', z)

print('x.grad=:', x.grad)

print('y.grad=:', y.grad)

Output:

x=: tensor(2., requires_grad=True)

y=: tensor(3., requires_grad=True)

z=: tensor(13., grad_fn=<AddBackward0>)

x.grad=: tensor(4.)

y.grad=: tensor(6.)

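- Since x and y are the tensors 2.0 and 3.0, z = 2^2 + 3^2 = 13.0.

- x.grad and y.grad hold the partial derivatives of z with respect to x and y, that is 2x = 4 and 2y = 6.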

z = torch.tensor(4.0, requires_grad=True)

f = (x-y)*z

x.grad.zero_()

y.grad.zero_()

f.backward()

print('x.grad:', x.grad)

print('y.grad:', y.grad)

print('z.grad:', z.grad)

Output:

x.grad: tensor(4.)

y.grad: tensor(-4.)

z.grad: tensor(-1.)

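- grad.zero_() resets the gradients of x and y to 0.

- Calling backward() on f computes the partial derivatives with respect to x, y, and z: z = 4, -z = -4, and x - y = -1, as the output shows.

Automatic differentiation of sums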

import torch

x = torch.tensor([2.0, 3.0, 4.0], requires_grad=True)

print('x=:', x)

y = x.sum()

print('y=:', y)

y.backward()

print('x.grad=:', x.grad)

z = x.mean()

print('z=:', z)

x.grad.zero_()

z.backward()

print('x.grad=:', x.grad)

x.grad.zero_()

w = (x**2).mean()

print('w=:', w)

w.backward()

print('x.grad=:', x.grad)

Output:

x=: tensor([2., 3., 4.], requires_grad=True)

y=: tensor(9., grad_fn=<SumBackward0>)

x.grad=: tensor([1., 1., 1.])

z=: tensor(3., grad_fn=<MeanBackward0>)

x.grad=: tensor([0.3333, 0.3333, 0.3333])

w=: tensor(9.6667, grad_fn=<MeanBackward0>)

x.grad=: tensor([1.3333, 2.0000, 2.6667])

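- y is the sum of the elements of x; backward() gives a gradient of 1 for every element.

- z is the mean of x; after the gradient is reset to 0, backward() gives 1/3 for every element.

- w is the mean of the squares of x, computed after the gradient is reset again; its gradient is 2x/3.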

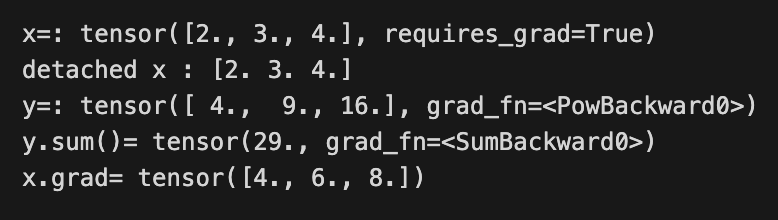

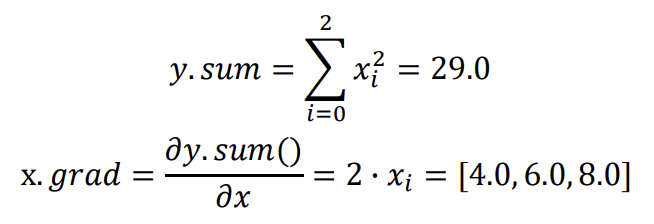

x = torch.tensor([2.0, 3.0, 4.0], requires_grad = True)

print('x=:', x)

print('detached x :', x.detach().numpy())

y = x**2

y.sum().backward(retain_graph = True)

print('y=:', y)

print('y.sum()=', y.sum())

print('x.grad=', x.grad)

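- x is created with graph tracking enabled (requires_grad = True) and then printed.

- y is the element-wise square of x; backward() is called on y.sum(), the sum of squares, so x.grad is 2x.

Computing automatic derivatives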

x.grad.zero_()

y = x**2

y.backward(gradient = torch.ones_like(y))

print('x.grad=:', x.grad)

x.grad.zero_()

y = torch.tensor([-1.0, -2.0, -3.0], requires_grad = True)

z = x**2 + y**2

z.backward(gradient = torch.ones_like(z))

print('x.grad=:', x.grad)

print('y.grad=:', y.grad)

Output:

x.grad=: tensor([4., 6., 8.])

x.grad=: tensor([4., 6., 8.])

y.grad=: tensor([-2., -4., -6.])
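The gradient argument does not have to be a tensor of ones. As a rough illustration, passing other weights scales each element's contribution, so x.grad becomes v * 2x. The weight vector v below is a hypothetical choice for this example.

```python
import torch

x = torch.tensor([2.0, 3.0, 4.0], requires_grad=True)
y = x ** 2

v = torch.tensor([1.0, 0.5, 0.0])   # hypothetical weights, one per element of y
y.backward(gradient=v)

expected = v * 2 * x.detach()       # element-wise: v_i * dy_i/dx_i
print(x.grad, expected)
```

With all weights equal to 1 this reduces to the call used above, which is equivalent to y.sum().backward().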

Computing with autograd.grad()

x = torch.tensor(2.0, requires_grad = True)

y = torch.tensor(3.0, requires_grad = True)

z = x**2 + y**2

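# retain_graph=True keeps the graph so that z can be differentiated again for dz/dy

dz_dx = torch.autograd.grad(z, x, retain_graph=True)

print('dz_dx=:', dz_dx)

print('dz_dx[0]=:', dz_dx[0])

print('x.grad=:', x.grad)

Output:

dz_dx=: (tensor(4.),)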

dz_dx[0]=: tensor(4.)

x.grad=: None

dz_dy = torch.autograd.grad(z, y)

print('dz_dy=:', dz_dy)

print('dz_dy[0]=:', dz_dy[0])

print('y.grad=:', y.grad)

Output:

dz_dy=: (tensor(6.),)

dz_dy[0]=: tensor(6.)

y.grad=: None

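- These are the results of using autograd.grad() to differentiate the scalar tensor z with respect to the scalar tensors x and y. The gradients are returned as a tuple, and x.grad and y.grad remain None.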
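Both partial derivatives can also be requested in a single call by passing several inputs. This is a minimal sketch of that variant, using the same x, y, and z as above.

```python
import torch

x = torch.tensor(2.0, requires_grad=True)
y = torch.tensor(3.0, requires_grad=True)
z = x ** 2 + y ** 2

# One call, one gradient per input, returned in the same order as the inputs.
dz_dx, dz_dy = torch.autograd.grad(z, (x, y))

print(dz_dx, dz_dy)
print(x.grad, y.grad)   # autograd.grad() does not fill the .grad attributes
```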

import torch

x = torch.tensor([2.0, 3.0, 4.0], requires_grad=True)

y = torch.tensor([-1.0, -2.0, -3.0], requires_grad=True)

z = x**2 + y**2

print('x=:', x)

print('y=:', y)

print('z=:', z)

print('detached x:', x.detach().numpy())

print('detached y:', y.detach().numpy())

print('detached z:', z.detach().numpy())

dz_dx = torch.autograd.grad(outputs = z,

                            inputs = x,

                            grad_outputs=torch.ones_like(z),

                            retain_graph=True)

print('dz_dx=:', dz_dx)

print('x.grad=:', x.grad)

Output:

x=: tensor([2., 3., 4.], requires_grad=True)

y=: tensor([-1., -2., -3.], requires_grad=True)

z=: tensor([ 5., 13., 25.], grad_fn=<AddBackward0>)

detached x: [2. 3. 4.]

detached y: [-1. -2. -3.]

detached z: [ 5. 13. 25.]

dz_dx=: (tensor([4., 6., 8.]),)

x.grad=: None

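- x, y, and z are created as 1-D tensors.

- autograd.grad() computes the gradient and returns it. With retain_graph set to True the graph is kept, so z can be differentiated again with respect to x or y.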
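What retain_graph changes can be seen by differentiating the same output repeatedly. In the sketch below (names chosen for the illustration), the second call does not retain the graph, so a third call is expected to fail with a RuntimeError.

```python
import torch

x = torch.tensor([2.0, 3.0, 4.0], requires_grad=True)
ones = torch.ones(3)
z = x ** 2

# The graph is kept after the first call and freed after the second.
first = torch.autograd.grad(z, x, grad_outputs=ones, retain_graph=True)[0]
second = torch.autograd.grad(z, x, grad_outputs=ones)[0]

try:
    torch.autograd.grad(z, x, grad_outputs=ones)   # graph buffers are gone
    failed = False
except RuntimeError:
    failed = True

print(first, second, failed)
```

Retaining the graph costs memory, so it is usually set only when another pass over the same graph is actually needed.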

dz_dy = torch.autograd.grad(outputs = z,

                            inputs = y,

                            grad_outputs=torch.ones_like(z),

                            retain_graph=True)

print('dz_dy=:', dz_dy)

print('y.grad=:', y.grad)

Output:

dz_dy=: (tensor([-2., -4., -6.]),)

y.grad=: None

# Cell [43]

v = torch.ones_like(z)

w = 2*x + 3*y

# gradients of z and w, summed, with respect to x and y

dzw_dxy = torch.autograd.grad(outputs= [z,w],

                              inputs = [x,y],

                              grad_outputs = [v,v])

print('dzw_dxy[0]=:', dzw_dxy[0])

print('dzw_dxy[1]=:', dzw_dxy[1])

Output:

dzw_dxy[0]=: tensor([ 6.,  8., 10.])

dzw_dxy[1]=: tensor([ 1., -1., -3.])

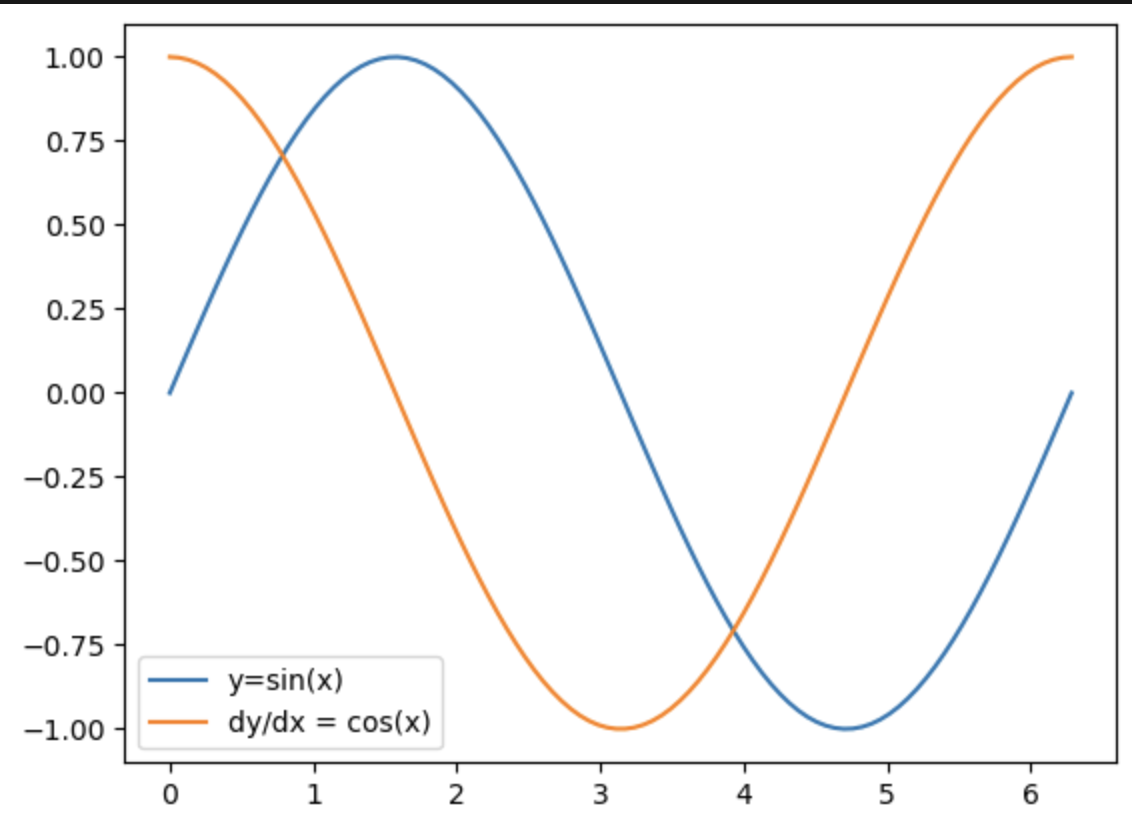

Automatic differentiation of sin(x) and cos(x)

x = torch.linspace(0, 2*torch.pi, 100, requires_grad = True)

y = x.sin()

y.sum().backward(retain_graph = True)

dydx = x.grad.clone()  # copy, because x.grad is zeroed in place below

print(torch.allclose(x.cos(), dydx))

x.grad.zero_()

y.backward(torch.ones_like(y), retain_graph = True)

dydx2 = x.grad.clone()

print(torch.allclose(dydx, dydx2))

dydx3 = torch.autograd.grad(outputs=y, inputs = x, grad_outputs = torch.ones_like(y))[0]

print(torch.allclose(dydx, dydx3))

Output:

True

True

True

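- The derivative of a trigonometric function can be computed this way and then plotted.

- retain_graph = True is set because the graph of y is differentiated several times; without it the graph would be freed after the first backward pass.

- dydx, dydx2, and dydx3 are all equal to x.cos().

Computing with autograd.grad(): plotting the result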

import matplotlib.pyplot as plt

xa = x.detach().numpy()

ya = y.detach().numpy()

plt.plot(xa, ya, label = 'y=sin(x)')

plt.plot(xa, dydx.numpy(), label = 'dy/dx = cos(x)')

plt.legend()

plt.show()

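- To plot the values, first detach the tensors from the computational graph and then convert them to NumPy arrays.

- The curves can then be visualized with plot from matplotlib.pyplot.

Computing the Jacobian with jacobian()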

from torch.autograd.functional import jacobian

def f(x1, x2, x3):

    return (x1**2, x2**2, x3**2)

x1 = torch.tensor(2.0)

x2 = torch.tensor(3.0)

x3 = torch.tensor(4.0)

J1 = jacobian(f, (x1, x2, x3))

print('J1=:', J1)

Output:

J1=: ((tensor(4.), tensor(0.), tensor(0.)), (tensor(0.), tensor(6.), tensor(0.)), (tensor(0.), tensor(0.), tensor(8.)))

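- Derivatives can also be computed directly with the jacobian function.

- J1 holds the derivative of each output of f with respect to x1, x2, and x3. The off-diagonal entries are 0 because each output depends on only one input.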

def f(x,y):

    return x**2 + y**2

x = torch.tensor([2.0, 3.0, 4.0])

y = torch.tensor([-1.0, -2.0, -3.0])

J = jacobian(f, (x,y))

print('Jx =:', J[0])

print('Jy =:', J[1])

Output:

Jx =: tensor([[4., 0., 0.],

        [0., 6., 0.],

        [0., 0., 8.]])

Jy =: tensor([[-2., -0., -0.],

        [-0., -4., -0.],

        [-0., -0., -6.]])

- As before, the jacobian function can also be applied directly to 1-D tensors.

- Jx and Jy are the Jacobians of f with respect to the 1-D tensors x and y. They are diagonal, with 2x and 2y on the diagonal.
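The Jacobian ties back to the earlier autograd.grad() calls: passing grad_outputs = v computes the product of v with the Jacobian. The following sketch checks this for a single-input version of f, which is an assumption made here to keep the example short.

```python
import torch
from torch.autograd.functional import jacobian

def f(x):
    return x ** 2

x = torch.tensor([2.0, 3.0, 4.0], requires_grad=True)

J = jacobian(f, x)                   # full 3 x 3 Jacobian
v = torch.ones(3)
vjp = torch.autograd.grad(f(x), x, grad_outputs=v)[0]

print(J)
print(torch.allclose(v @ J, vjp))    # v times J matches the grad() result
```

The full Jacobian has one row per output element, so for large tensors the vector-Jacobian product is usually the cheaper quantity to compute.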