This post summarizes what I learned while taking part in the first cohort of the Istio Hands-on Study led by gasida (가시다). Week 2 covered Envoy and the Istio Gateway.

1. Istio's Data Plane (Envoy Proxy)

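1.1 Envoy Overview and Key Features

Envoy is a language-independent, extensible, high-performance proxy that has become a core building block for traffic control, security, monitoring, and service-to-service communication in microservice environments. In service mesh implementations such as Istio, Envoy is deployed as a sidecar next to each service, which makes the network transparent to applications and simplifies operations.

1.1.1 What Is Envoy?

Envoy is an open-source L7 (application-layer) proxy and communication bus designed for large microservice architectures. Its goal is to make the network transparent and to make the source of problems easy to identify. It runs as a standalone process and relays and controls traffic between services written in different languages.

1.1.2 Key Features

- Out-of-process architecture
  - Envoy runs as an independent process, separate from the application, and is deployed alongside each service instance as a sidecar.
  - Services written in different languages (Java, Go, Python, and so on) can communicate through it, and it can be deployed and upgraded without changing application code.
- L3/L4 filter architecture
  - Provides a pluggable filter chain for handling TCP/UDP network traffic.
  - Beyond generic TCP proxying, it supports protocols such as Redis, MongoDB, and Postgres.
- HTTP (L7) filter architecture
  - Provides an additional filter layer for handling HTTP traffic.
  - HTTP filters can perform buffering, routing, rate limiting, traffic sniffing, and other HTTP-related tasks.
- HTTP/2 and HTTP/3 support
  - Supports both HTTP/1.1 and HTTP/2 and can bridge between them.
  - HTTP/3 is supported at the alpha stage, and translation between the protocols is supported as well.
- Advanced routing
  - Performs fine-grained routing and redirection based on the request path, host (authority), content type, and other conditions.
- gRPC support
  - Fully compatible with gRPC, Google's HTTP/2-based framework, and usable as its routing and load-balancing proxy.
- Dynamic configuration and service discovery
  - Through the xDS APIs, Envoy can configure clusters, routes, listeners, certificates, and more at runtime. For simple setups, static configuration with DNS-based discovery is also possible.
- Health checking
  - Provides active and passive health checking, so that failed instances are automatically excluded when traffic is forwarded.
- Advanced load balancing
  - Supports automatic retries, circuit breaking, request shadowing, outlier detection, global rate limiting, and other advanced load-balancing features.
- Edge proxy support
  - TLS termination, support for multiple HTTP protocols, and advanced routing make Envoy usable as a front (edge) proxy for external traffic.
- Strong observability
  - Built-in statistics with StatsD integration, an admin API (admin port), and distributed tracing make it possible to monitor the state of the network and applications effectively.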
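To make the health-checking and load-balancing items above more concrete, here is a minimal sketch of round-robin selection combined with passive health checking (outlier detection). It is a simplified model and not Envoy's actual algorithm: the `Host` and `Cluster` classes and the `max_consecutive_failures` threshold are assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Host:
    address: str
    consecutive_failures: int = 0
    ejected: bool = False


@dataclass
class Cluster:
    hosts: list
    # Hypothetical threshold for this sketch; not read from any Envoy config.
    max_consecutive_failures: int = 3
    _next: int = 0

    def pick(self):
        """Round-robin over the hosts that have not been ejected."""
        healthy = [h for h in self.hosts if not h.ejected]
        if not healthy:
            raise RuntimeError("no healthy upstream")
        host = healthy[self._next % len(healthy)]
        self._next += 1
        return host

    def report(self, host, ok):
        """Passive health check: eject a host after repeated failures."""
        if ok:
            host.consecutive_failures = 0
            return
        host.consecutive_failures += 1
        if host.consecutive_failures >= self.max_consecutive_failures:
            host.ejected = True


if __name__ == "__main__":
    cluster = Cluster([Host("10.0.0.1"), Host("10.0.0.2")])
    for _ in range(3):
        cluster.report(cluster.hosts[1], ok=False)
    # After three consecutive failures only the first host is selected.
    print([cluster.pick().address for _ in range(4)])
```

The point of the sketch is that the caller never needs to know a host failed: the proxy observes responses, removes the bad instance from rotation, and keeps balancing across the rest.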

1.2 Envoy Proxy Configuration

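Envoy has a flexible configuration system that supports both static and dynamic configuration. Static configuration suits initial learning and simple labs, while production environments generally prefer dynamic configuration managed centrally through ADS. In service mesh environments such as Istio in particular, ADS-based configuration has become the standard.

Envoy is driven by a configuration file written in YAML or JSON. Besides listener, route, and cluster definitions, this file also covers server-level settings such as whether the Admin API is enabled, where access logs are written, and which tracing engine is used.

Envoy has shipped several configuration API versions, and v3 is now the standard. This post looks at Envoy's static and dynamic configuration based on the v3 API, which Istio also uses.

1.2.1 Envoy Static Configuration

- Configuration structure overview

Envoy's static configuration is defined under the static_resources field. The following is a simple static configuration example.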

    static_resources:
      listeners:
      - name: httpbin-demo
        address:
          socket_address: { address: 0.0.0.0, port_value: 15001 }
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              stat_prefix: ingress_http
              http_filters:
              - name: envoy.filters.http.router
              route_config:
                name: httpbin_local_route
                virtual_hosts:
                - name: httpbin_local_service
                  domains: ["*"]
                  routes:
                  - match: { prefix: "/" }
                    route:
                      auto_host_rewrite: true
                      cluster: httpbin_service
      clusters:
      - name: httpbin_service
        connect_timeout: 5s
        type: LOGICAL_DNS
        dns_lookup_family: V4_ONLY
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: httpbin
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address:
                    address: httpbin
                    port_value: 8000

- Configuration elements
  - Listener: defines the address and port on which client requests are accepted (0.0.0.0:15001).
  - Filter chain: uses the http_connection_manager filter to handle HTTP requests.
  - Route: routes every request to the httpbin_service cluster.
  - Cluster: defines how to reach the upstream service (httpbin), including the load-balancing policy (ROUND_ROBIN) and the DNS discovery type (LOGICAL_DNS).

This example is a fully static configuration: every component is defined explicitly in the file.
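Because Envoy accepts JSON as well as YAML, the same structure can also be generated from code. The sketch below builds a dictionary that mirrors the static example and prints it as JSON. The function name `static_config` and its parameters are hypothetical helpers for illustration; the field names are the ones used in the example above.

```python
import json


def static_config(listener_port, upstream_host, upstream_port):
    """Return a dict that mirrors the static_resources example."""
    route_config = {
        "name": "httpbin_local_route",
        "virtual_hosts": [{
            "name": "httpbin_local_service",
            "domains": ["*"],
            "routes": [{
                "match": {"prefix": "/"},
                "route": {"auto_host_rewrite": True,
                          "cluster": "httpbin_service"},
            }],
        }],
    }
    hcm = {
        "@type": ("type.googleapis.com/envoy.extensions.filters.network."
                  "http_connection_manager.v3.HttpConnectionManager"),
        "stat_prefix": "ingress_http",
        "http_filters": [{"name": "envoy.filters.http.router"}],
        "route_config": route_config,
    }
    listener = {
        "name": "httpbin-demo",
        "address": {"socket_address": {"address": "0.0.0.0",
                                       "port_value": listener_port}},
        "filter_chains": [{"filters": [{
            "name": "envoy.filters.network.http_connection_manager",
            "typed_config": hcm,
        }]}],
    }
    cluster = {
        "name": "httpbin_service",
        "connect_timeout": "5s",
        "type": "LOGICAL_DNS",
        "dns_lookup_family": "V4_ONLY",
        "lb_policy": "ROUND_ROBIN",
        "load_assignment": {
            "cluster_name": "httpbin",
            "endpoints": [{"lb_endpoints": [{"endpoint": {"address": {
                "socket_address": {"address": upstream_host,
                                   "port_value": upstream_port}}}}]}],
        },
    }
    return {"static_resources": {"listeners": [listener],
                                 "clusters": [cluster]}}


if __name__ == "__main__":
    # Same values as the YAML example: listen on 15001, forward to httpbin:8000.
    print(json.dumps(static_config(15001, "httpbin", 8000), indent=2))
```

Generating the file this way is only a convenience for parameterizing ports and hostnames; the resulting document is still a fully static configuration.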

1.2.2 Envoy Dynamic Configuration

- Overview

Through the xDS APIs, Envoy can update its configuration at runtime without downtime. All it needs is a small bootstrap configuration file; the rest of the configuration is received dynamically.

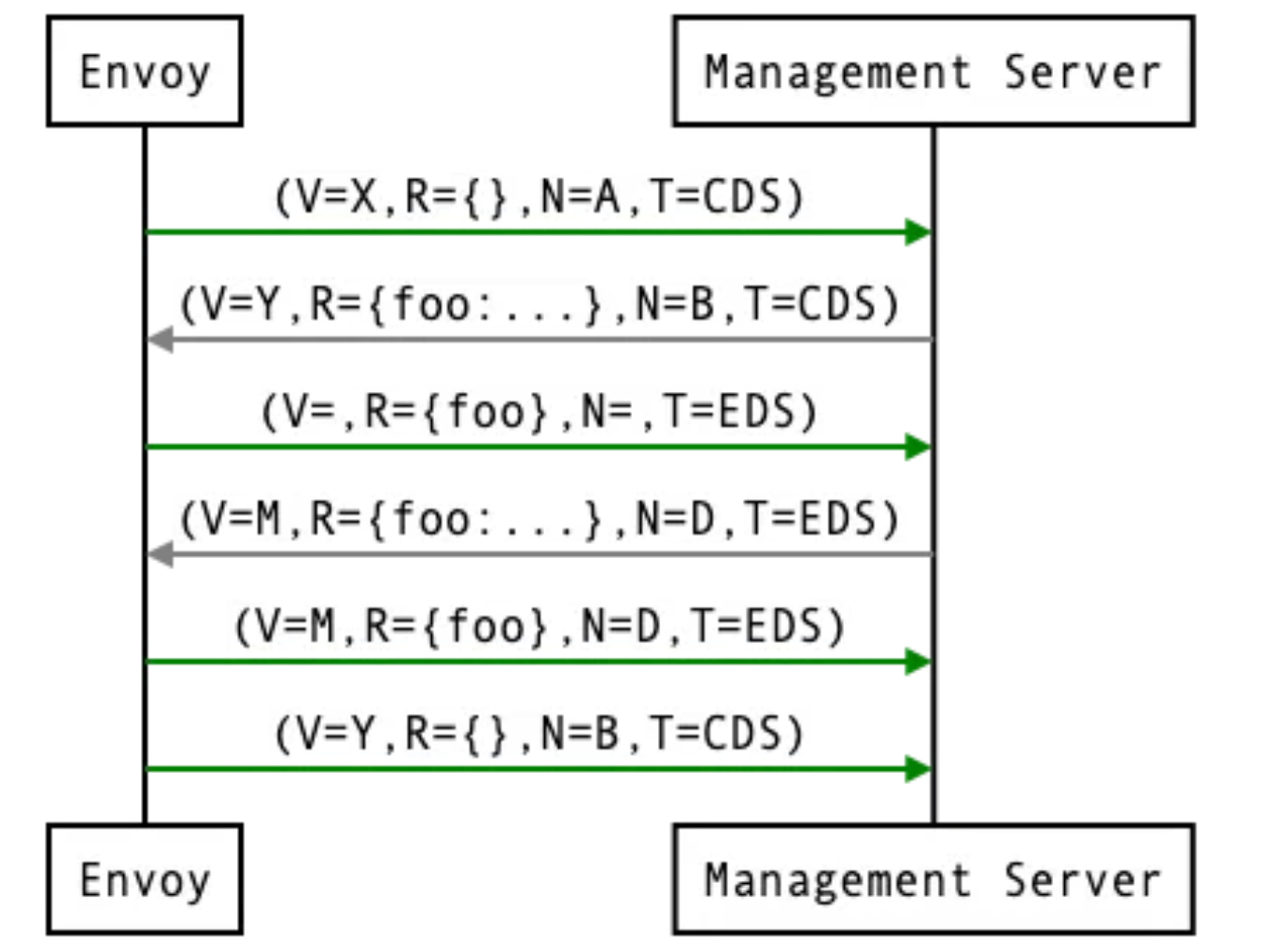

2 주요 Discovery API

| API 명칭 | 설명 |

|---|---|

| LDS (Listener Discovery Service) | 리스너 구성을 동적으로 가져옴 |

| RDS (Route Discovery Service) | 라우팅 규칙을 동적으로 가져옴 |

| CDS (Cluster Discovery Service) | 클러스터 구성을 동적으로 가져옴 |

| EDS (Endpoint Discovery Service) | 각 클러스터의 엔드포인트 목록을 제공 |

| SDS (Secret Discovery Service) | 인증서 정보 등을 제공 |

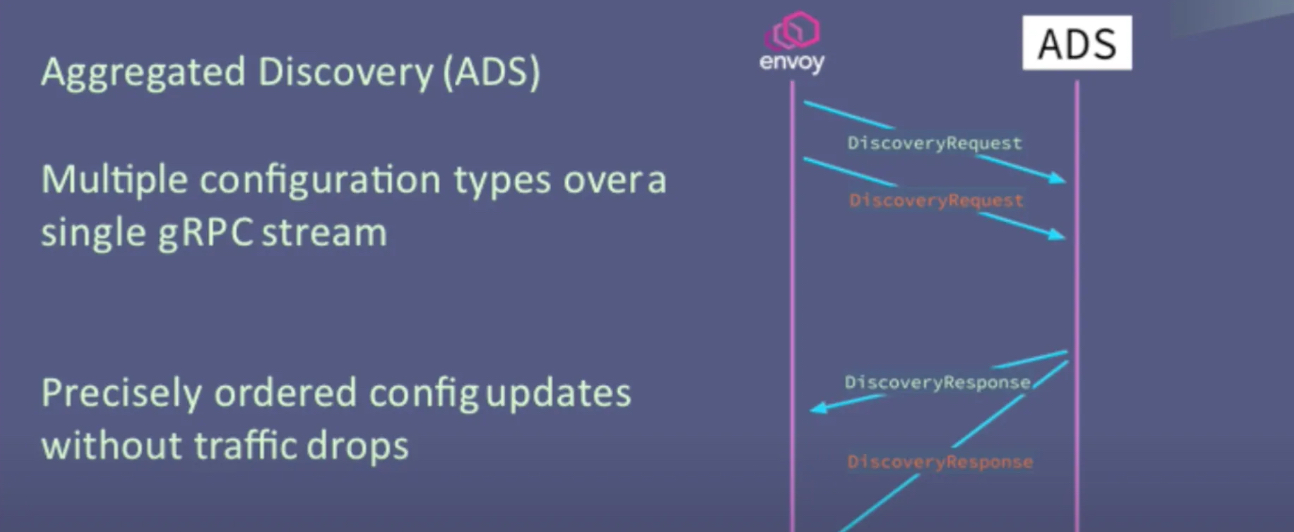

| ADS (Aggregated Discovery Service) | 위의 모든 설정을 통합하여 순차적으로 제공 |
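Each discovery API in the table serves one kind of resource, which an xDS request identifies by a type URL. The following sketch maps the APIs to their v3 type URLs and builds a dictionary shaped like the main fields of a discovery request. It is a simplified illustration: `discovery_request` is a hypothetical helper, and a real request also carries fields such as the node identity, version, and nonce, which are omitted here.

```python
# Resource type URLs used by the v3 xDS APIs.
# ADS has no type of its own: it multiplexes the types below.
TYPE_URLS = {
    "LDS": "type.googleapis.com/envoy.config.listener.v3.Listener",
    "RDS": "type.googleapis.com/envoy.config.route.v3.RouteConfiguration",
    "CDS": "type.googleapis.com/envoy.config.cluster.v3.Cluster",
    "EDS": "type.googleapis.com/envoy.config.endpoint.v3.ClusterLoadAssignment",
    "SDS": "type.googleapis.com/envoy.extensions.transport_sockets.tls.v3.Secret",
}


def discovery_request(api, resource_names):
    """Build a dict shaped like the core fields of an xDS DiscoveryRequest."""
    return {"type_url": TYPE_URLS[api], "resource_names": list(resource_names)}


if __name__ == "__main__":
    # Ask for the endpoints of one cluster (the cluster name is an example).
    print(discovery_request("EDS", ["httpbin_service"]))
```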

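- Example: using LDS

    dynamic_resources:
      lds_config:
        api_config_source:
          api_type: GRPC
          grpc_services:
          - envoy_grpc:
              cluster_name: xds_cluster
    clusters:
    - name: xds_cluster
      connect_timeout: 0.25s
      type: STATIC
      lb_policy: ROUND_ROBIN
      http2_protocol_options: {}
      hosts:
      - socket_address:
          address: 127.0.0.3
          port_value: 5678

This configuration receives its listener configuration dynamically through a gRPC cluster named xds_cluster. Only the cluster is defined explicitly; listener information is fetched at runtime. Note that hosts is the older v2-style field; v3 configurations declare endpoints under load_assignment, as in the static example.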

- Example bootstrap configuration used by Istio

Istio uses ADS to manage the configuration of its Envoy proxies. ADS is an aggregation API that reduces dependency-ordering problems and race conditions between configuration resources.

    bootstrap:
      dynamicResources:
        ldsConfig:
          ads: {}
        cdsConfig:
          ads: {}
        adsConfig:
          apiType: GRPC
          grpcServices:
          - envoyGrpc:
              clusterName: xds-grpc
          refreshDelay: 1.000s
      staticResources:
        clusters:
        - name: xds-grpc
          type: STRICT_DNS
          connectTimeout: 10.000s
          hosts:
          - socketAddress:
              address: istio-pilot.istio-system
              portValue: 15010
          circuitBreakers:
            thresholds:
            - maxConnections: 100000
              maxPendingRequests: 100000
              maxRequests: 100000
            - priority: HIGH
              maxConnections: 100000
              maxPendingRequests: 100000
              maxRequests: 100000
          http2ProtocolOptions: {}

With this configuration, the proxy receives listener, cluster, and other settings over ADS from istio-pilot. The only thing defined statically is the xds-grpc cluster used to communicate with the ADS server.
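The ordering problem that ADS addresses can be sketched in a few lines. As I understand the xDS protocol documentation, updates should follow a make-before-break order to avoid dropping traffic: clusters first, then their endpoints, then listeners, then routes. The helper below sorts a batch of pending updates into that order; `order_updates` and the resource names are hypothetical and exist only for this illustration.

```python
# Make-before-break order: a listener or route should never reference
# a cluster that the proxy has not received yet.
APPLY_ORDER = ["CDS", "EDS", "LDS", "RDS"]


def order_updates(updates):
    """Sort (api, resource_name) pairs so that dependencies arrive first."""
    rank = {api: i for i, api in enumerate(APPLY_ORDER)}
    # sorted() is stable, so updates of the same API keep their input order.
    return sorted(updates, key=lambda update: rank[update[0]])


if __name__ == "__main__":
    pending = [
        ("RDS", "httpbin_local_route"),
        ("LDS", "httpbin-demo"),
        ("EDS", "httpbin_service"),
        ("CDS", "httpbin_service"),
    ]
    for api, name in order_updates(pending):
        print(api, name)
```

With separate streams per API, nothing guarantees this order across streams; delivering everything over one aggregated stream is what lets the management server enforce it.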

Source: https://blog.naver.com/alice_k106/222000680202


Source: https://www.envoyproxy.io/docs/envoy/latest/api-docs/xds_protocol#aggregated-discovery-service


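1.3 Envoy in Action (Hands-on)

1.3.1 Basic Lab

- Envoy is written in C++ and compiled natively for each platform. The easiest way to get started with Envoy is to run it in a Docker container.
- Pull the Docker images. citizenstig/httpbin does not support ARM CPUs, so this lab uses mccutchen/go-httpbin instead.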

    # Pull the Docker images
    docker pull envoyproxy/envoy:v1.19.0
    v1.19.0: Pulling from envoyproxy/envoy
    5ab476899135: Pull complete
    ac0191b92803: Pull complete
    103feb2666f8: Pull complete
    b3aac349f16a: Pull complete
    1bf3a194e2b3: Pull complete
    0af831276ac1: Pull complete
    4b52eda99c5b: Pull complete
    b9bb7af7248f: Pull complete
    Digest: sha256:ec7228053c7e99bf481901960b9074528be407ede2363b6152fb93a1eee872cf
    Status: Downloaded newer image for envoyproxy/envoy:v1.19.0
    docker.io/envoyproxy/envoy:v1.19.0

    docker pull curlimages/curl
    docker pull mccutchen/go-httpbin

    # Verify
    docker images
    REPOSITORY             TAG       IMAGE ID       CREATED        SIZE
    curlimages/curl        latest    d43bdb28bae0   12 days ago    34.8MB
    mccutchen/go-httpbin   latest    ff73c96c1445   2 weeks ago    67.8MB
    envoyproxy/envoy       v1.19.0   ec7228053c7e   3 years ago    178MB

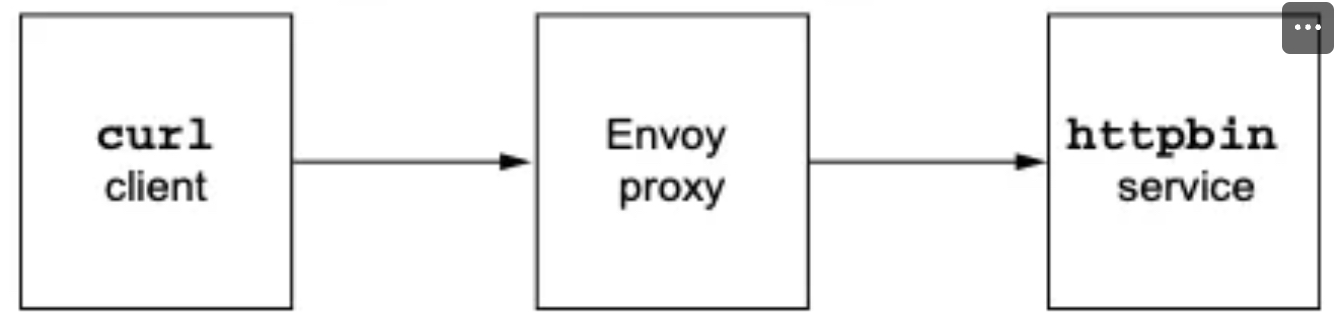

Summary of the httpbin-Envoy example architecture

- Start the httpbin service
  - httpbin is a test web service that can echo headers, add delay, return errors, and more in response to various HTTP requests.
  - Example: http://httpbin.org/headers returns the request headers as they were received.
- Configure and start Envoy
  - Run the Envoy proxy and configure it to route all client requests to the httpbin service.
- Run the client app
  - The client app sends its requests to httpbin through Envoy.

- Run the httpbin service

    # mccutchen/go-httpbin listens on port 8080 by default, so change it to 8000 to match the book's lab
    # docker run -d -e PORT=8000 --name httpbin mccutchen/go-httpbin -p 8000:8000
    docker run -d -e PORT=8000 --name httpbin mccutchen/go-httpbin
    docker ps
    CONTAINER ID   IMAGE                  COMMAND             CREATED          STATUS          PORTS      NAMES
    11f27618ecfe   mccutchen/go-httpbin   "/bin/go-httpbin"   13 seconds ago   Up 12 seconds   8080/tcp   httpbin

    # Call httpbin from a curl container
    docker run -it --rm --link httpbin curlimages/curl curl -X GET http://httpbin:8000/headers
    {
      "headers": {
        "Accept": [
          "*/*"
        ],
        "Host": [
          "httpbin:8000"
        ],
        "User-Agent": [
          "curl/8.13.0"
        ]
      }
    }

✍🏿 The response contains the headers that were used to call the /headers endpoint.

- Now run the Envoy proxy with --help to look at some of its flags and command-line parameters.

    docker run -it --rm envoyproxy/envoy:v1.19.0 envoy --help
    ...
    --service-zone <string>                # availability zone the proxy is deployed in
      Zone name
    --service-node <string>                # unique name given to the proxy
      Node name
    ...
    -c <string>, --config-path <string>    # passes the configuration file
      Path to configuration file

- Run Envoy: this attempt fails because no valid configuration file is passed.

    docker run -it --rm envoyproxy/envoy:v1.19.0 envoy

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:338] initializing epoch 0 (base id=0, hot restart version=11.120)

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:340] statically linked extensions:

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.compression.compressor: envoy.compression.brotli.compressor, envoy.compression.gzip.compressor

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.stats_sinks: envoy.dog_statsd, envoy.graphite_statsd, envoy.metrics_service, envoy.stat_sinks.dog_statsd, envoy.stat_sinks.graphite_statsd, envoy.stat_sinks.hystrix, envoy.stat_sinks.metrics_service, envoy.stat_sinks.statsd, envoy.stat_sinks.wasm, envoy.statsd

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.wasm.runtime: envoy.wasm.runtime.null, envoy.wasm.runtime.v8

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.formatter: envoy.formatter.req_without_query

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.matching.http.input: request-headers, request-trailers, response-headers, response-trailers

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.thrift_proxy.transports: auto, framed, header, unframed

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.quic.server.crypto_stream: envoy.quic.crypto_stream.server.quiche

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.compression.decompressor: envoy.compression.brotli.decompressor, envoy.compression.gzip.decompressor

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.thrift_proxy.protocols: auto, binary, binary/non-strict, compact, twitter

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.thrift_proxy.filters: envoy.filters.thrift.rate_limit, envoy.filters.thrift.router

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.tls.cert_validator: envoy.tls.cert_validator.default, envoy.tls.cert_validator.spiffe

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.resource_monitors: envoy.resource_monitors.fixed_heap, envoy.resource_monitors.injected_resource

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.request_id: envoy.request_id.uuid

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.http.cache: envoy.extensions.http.cache.simple

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.filters.network: envoy.client_ssl_auth, envoy.echo, envoy.ext_authz, envoy.filters.network.client_ssl_auth, envoy.filters.network.connection_limit, envoy.filters.network.direct_response, envoy.filters.network.dubbo_proxy, envoy.filters.network.echo, envoy.filters.network.ext_authz, envoy.filters.network.http_connection_manager, envoy.filters.network.kafka_broker, envoy.filters.network.local_ratelimit, envoy.filters.network.mongo_proxy, envoy.filters.network.mysql_proxy, envoy.filters.network.postgres_proxy, envoy.filters.network.ratelimit, envoy.filters.network.rbac, envoy.filters.network.redis_proxy, envoy.filters.network.rocketmq_proxy, envoy.filters.network.sni_cluster, envoy.filters.network.sni_dynamic_forward_proxy, envoy.filters.network.tcp_proxy, envoy.filters.network.thrift_proxy, envoy.filters.network.wasm, envoy.filters.network.zookeeper_proxy, envoy.http_connection_manager, envoy.mongo_proxy, envoy.ratelimit, envoy.redis_proxy, envoy.tcp_proxy

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.dubbo_proxy.serializers: dubbo.hessian2

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.http.original_ip_detection: envoy.http.original_ip_detection.custom_header, envoy.http.original_ip_detection.xff

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.upstreams: envoy.filters.connection_pools.tcp.generic

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.matching.input_matchers: envoy.matching.matchers.consistent_hashing, envoy.matching.matchers.ip

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.filters.udp_listener: envoy.filters.udp.dns_filter, envoy.filters.udp_listener.udp_proxy

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.tracers: envoy.dynamic.ot, envoy.lightstep, envoy.tracers.datadog, envoy.tracers.dynamic_ot, envoy.tracers.lightstep, envoy.tracers.opencensus, envoy.tracers.skywalking, envoy.tracers.xray, envoy.tracers.zipkin, envoy.zipkin

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.access_loggers: envoy.access_loggers.file, envoy.access_loggers.http_grpc, envoy.access_loggers.open_telemetry, envoy.access_loggers.stderr, envoy.access_loggers.stdout, envoy.access_loggers.tcp_grpc, envoy.access_loggers.wasm, envoy.file_access_log, envoy.http_grpc_access_log, envoy.open_telemetry_access_log, envoy.stderr_access_log, envoy.stdout_access_log, envoy.tcp_grpc_access_log, envoy.wasm_access_log

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.bootstrap: envoy.bootstrap.wasm, envoy.extensions.network.socket_interface.default_socket_interface

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.matching.common_inputs: envoy.matching.common_inputs.environment_variable

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.internal_redirect_predicates: envoy.internal_redirect_predicates.allow_listed_routes, envoy.internal_redirect_predicates.previous_routes, envoy.internal_redirect_predicates.safe_cross_scheme

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.guarddog_actions: envoy.watchdog.abort_action, envoy.watchdog.profile_action

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.matching.action: composite-action, skip

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.transport_sockets.downstream: envoy.transport_sockets.alts, envoy.transport_sockets.quic, envoy.transport_sockets.raw_buffer, envoy.transport_sockets.starttls, envoy.transport_sockets.tap, envoy.transport_sockets.tls, raw_buffer, starttls, tls

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.rate_limit_descriptors: envoy.rate_limit_descriptors.expr

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.resolvers: envoy.ip

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.filters.listener: envoy.filters.listener.http_inspector, envoy.filters.listener.original_dst, envoy.filters.listener.original_src, envoy.filters.listener.proxy_protocol, envoy.filters.listener.tls_inspector, envoy.listener.http_inspector, envoy.listener.original_dst, envoy.listener.original_src, envoy.listener.proxy_protocol, envoy.listener.tls_inspector

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.upstream_options: envoy.extensions.upstreams.http.v3.HttpProtocolOptions, envoy.upstreams.http.http_protocol_options

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.dubbo_proxy.protocols: dubbo

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.quic.proof_source: envoy.quic.proof_source.filter_chain

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.transport_sockets.upstream: envoy.transport_sockets.alts, envoy.transport_sockets.quic, envoy.transport_sockets.raw_buffer, envoy.transport_sockets.starttls, envoy.transport_sockets.tap, envoy.transport_sockets.tls, envoy.transport_sockets.upstream_proxy_protocol, raw_buffer, starttls, tls

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.retry_priorities: envoy.retry_priorities.previous_priorities

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.dubbo_proxy.filters: envoy.filters.dubbo.router

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.retry_host_predicates: envoy.retry_host_predicates.omit_canary_hosts, envoy.retry_host_predicates.omit_host_metadata, envoy.retry_host_predicates.previous_hosts

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.grpc_credentials: envoy.grpc_credentials.aws_iam, envoy.grpc_credentials.default, envoy.grpc_credentials.file_based_metadata

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.clusters: envoy.cluster.eds, envoy.cluster.logical_dns, envoy.cluster.original_dst, envoy.cluster.static, envoy.cluster.strict_dns, envoy.clusters.aggregate, envoy.clusters.dynamic_forward_proxy, envoy.clusters.redis

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.dubbo_proxy.route_matchers: default

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.http.stateful_header_formatters: preserve_case

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.health_checkers: envoy.health_checkers.redis

[2025-04-19 01:59:31.556][1][info][main] [source/server/server.cc:342] envoy.filters.http: envoy.bandwidth_limit, envoy.buffer, envoy.cors, envoy.csrf, envoy.ext_authz, envoy.ext_proc, envoy.fault, envoy.filters.http.adaptive_concurrency, envoy.filters.http.admission_control, envoy.filters.http.alternate_protocols_cache, envoy.filters.http.aws_lambda, envoy.filters.http.aws_request_signing, envoy.filters.http.bandwidth_limit, envoy.filters.http.buffer, envoy.filters.http.cache, envoy.filters.http.cdn_loop, envoy.filters.http.composite, envoy.filters.http.compressor, envoy.filters.http.cors, envoy.filters.http.csrf, envoy.filters.http.decompressor, envoy.filters.http.dynamic_forward_proxy, envoy.filters.http.dynamo, envoy.filters.http.ext_authz, envoy.filters.http.ext_proc, envoy.filters.http.fault, envoy.filters.http.grpc_http1_bridge, envoy.filters.http.grpc_http1_reverse_bridge, envoy.filters.http.grpc_json_transcoder, envoy.filters.http.grpc_stats, envoy.filters.http.grpc_web, envoy.filters.http.header_to_metadata, envoy.filters.http.health_check, envoy.filters.http.ip_tagging, envoy.filters.http.jwt_authn, envoy.filters.http.local_ratelimit, envoy.filters.http.lua, envoy.filters.http.oauth2, envoy.filters.http.on_demand, envoy.filters.http.original_src, envoy.filters.http.ratelimit, envoy.filters.http.rbac, envoy.filters.http.router, envoy.filters.http.set_metadata, envoy.filters.http.squash, envoy.filters.http.tap, envoy.filters.http.wasm, envoy.grpc_http1_bridge, envoy.grpc_json_transcoder, envoy.grpc_web, envoy.health_check, envoy.http_dynamo_filter, envoy.ip_tagging, envoy.local_rate_limit, envoy.lua, envoy.rate_limit, envoy.router, envoy.squash, match-wrapper

[2025-04-19 01:59:31.558][1][critical][main] [source/server/server.cc:112] error initializing configuration '': At least one of --config-path or --config-yaml or Options::configProto() should be non-empty

[2025-04-19 01:59:31.558][1][info][main] [source/server/server.cc:855] exiting

At least one of --config-path or --config-yaml or Options::configProto() should be non-empty

- Let's fix this by passing in the example configuration file we saw earlier.

    admin:
      address:
        socket_address: { address: 0.0.0.0, port_value: 15000 }
    static_resources:
      listeners:
      - name: httpbin-demo
        address:
          socket_address: { address: 0.0.0.0, port_value: 15001 }
        filter_chains:
        - filters:
          - name: envoy.filters.network.http_connection_manager
            typed_config:
              "@type": type.googleapis.com/envoy.extensions.filters.network.http_connection_manager.v3.HttpConnectionManager
              stat_prefix: ingress_http
              http_filters:
              - name: envoy.filters.http.router
              route_config:
                name: httpbin_local_route
                virtual_hosts:
                - name: httpbin_local_service
                  domains: ["*"]
                  routes:
                  - match: { prefix: "/" }
                    route:
                      auto_host_rewrite: true
                      cluster: httpbin_service
      clusters:
      - name: httpbin_service
        connect_timeout: 5s
        type: LOGICAL_DNS
        dns_lookup_family: V4_ONLY
        lb_policy: ROUND_ROBIN
        load_assignment:
          cluster_name: httpbin
          endpoints:
          - lb_endpoints:
            - endpoint:
                address:
                  socket_address:
                    address: httpbin
                    port_value: 8000

🤔 In short, this configuration exposes a single listener on port 15001 and routes all traffic to the httpbin cluster.

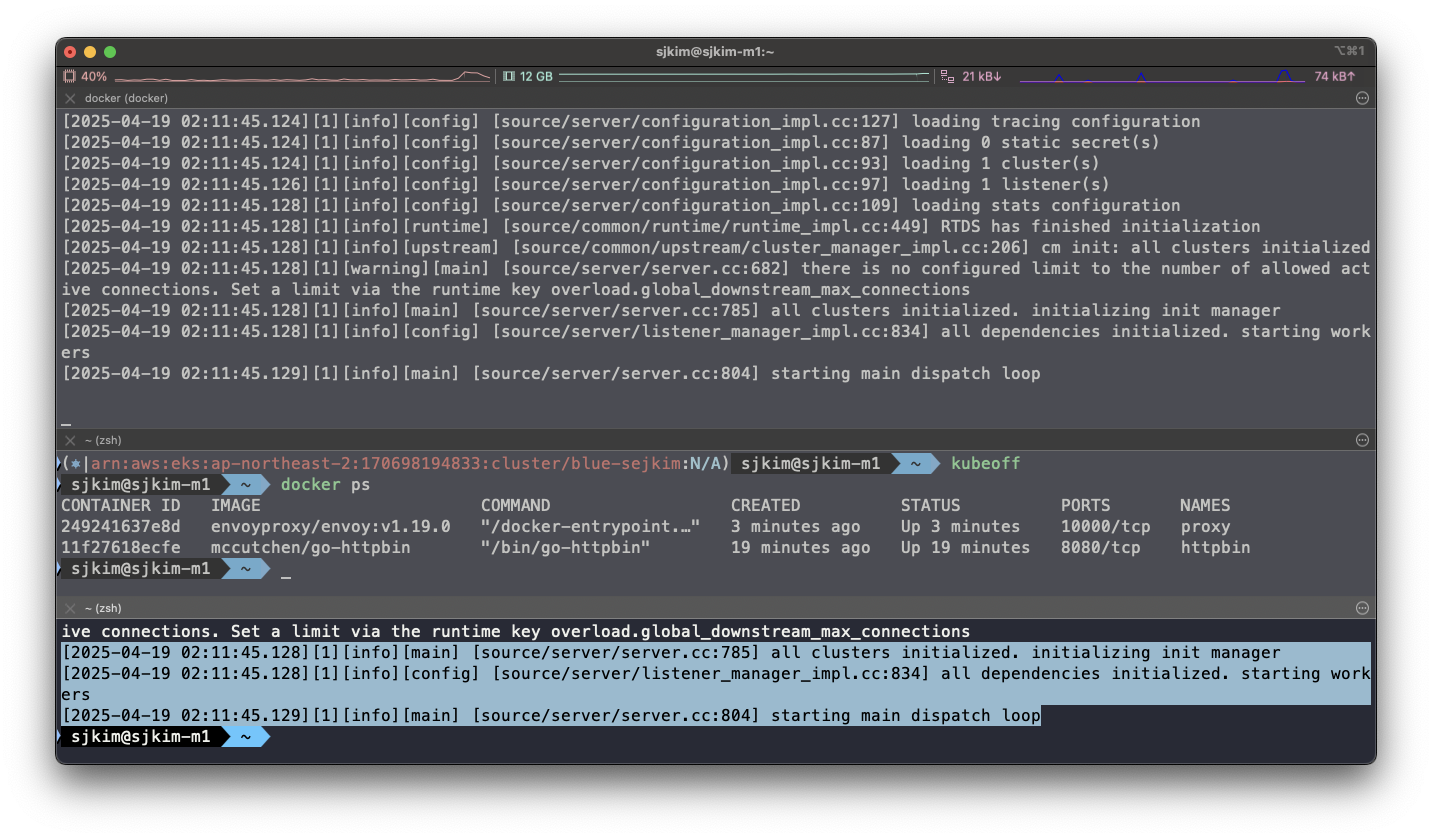

- Start Envoy again

    cat ch3/simple.yaml

    # Terminal 1
    docker run --name proxy --link httpbin envoyproxy/envoy:v1.19.0 --config-yaml "$(cat ch3/simple.yaml)"

    # Terminal 2
    docker logs proxy
    [2025-04-19 02:11:45.128][1][info][main] [source/server/server.cc:785] all clusters initialized. initializing init manager
    [2025-04-19 02:11:45.128][1][info][config] [source/server/listener_manager_impl.cc:834] all dependencies initialized. starting workers
    [2025-04-19 02:11:45.129][1][info][main] [source/server/server.cc:804] starting main dispatch loop

🎉 The proxy started successfully and is listening on port 15001.

- Call the proxy with curl

    # Terminal 2
    docker run -it --rm --link proxy curlimages/curl curl -X GET http://proxy:15001/headers
    {
      "headers": {
        "Accept": [
          "*/*"
        ],
        "Host": [
          "httpbin"
        ],
        "User-Agent": [
          "curl/8.13.0"
        ],
        "X-Envoy-Expected-Rq-Timeout-Ms": [
          "15000"
        ],
        "X-Forwarded-Proto": [
          "http"
        ],
        "X-Request-Id": [
          "c96ff161-35ad-4556-b2b7-1b098beb1ace"
        ]
      }
    }

🎯 Even though we called the proxy, the traffic was delivered correctly to the httpbin service. The following new headers were also added:

- X-Envoy-Expected-Rq-Timeout-Ms
- X-Request-Id

This may look trivial, but Envoy is already doing a lot of work for us.

- Envoy generated a new X-Request-Id. It can be used to correlate related requests within the cluster and to trace a request across the multiple services (hops) it passes through while being handled.
- The second header, X-Envoy-Expected-Rq-Timeout-Ms, is a hint to the upstream service that the request is expected to time out after 15,000 ms.
- The upstream system, and every hop the request passes through, can use this hint to implement a deadline. A deadline communicates the timeout intent to upstream systems so that they can stop processing once it has passed.
- This releases resources that would otherwise stay tied up after the timeout.
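As a rough illustration of how an upstream service might act on this hint, the sketch below turns the X-Envoy-Expected-Rq-Timeout-Ms value into a deadline and stops working once the deadline has passed. The `handle` function, the idea of splitting work into steps, and the injectable `clock` are assumptions made for this example; httpbin itself does not do this.

```python
import time

TIMEOUT_HEADER = "X-Envoy-Expected-Rq-Timeout-Ms"


def deadline_from_headers(headers, now=None):
    """Return an absolute deadline in seconds, or None without a hint.

    The lookup is case-sensitive; a real server would normalize header names.
    """
    raw = headers.get(TIMEOUT_HEADER)
    if raw is None:
        return None
    now = time.monotonic() if now is None else now
    return now + int(raw) / 1000.0


def handle(headers, steps, clock=time.monotonic):
    """Run the work steps in order, stopping once the deadline has passed."""
    deadline = deadline_from_headers(headers, now=clock())
    done = []
    for step in steps:
        if deadline is not None and clock() >= deadline:
            # The caller has already given up, so release resources early.
            return done, "deadline exceeded"
        done.append(step())
    return done, "ok"


if __name__ == "__main__":
    def slow_step():
        time.sleep(0.02)
        return "chunk"

    # A 30 ms budget with 20 ms steps: the third step is never started.
    print(handle({TIMEOUT_HEADER: "30"}, [slow_step] * 3))
```

The check happens between steps, so work that is already running is not interrupted; the benefit is that no new work starts for a request nobody is waiting for.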

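- Stop Envoy before the next exercise

    docker rm -f proxy

- Now let's change this configuration slightly and set the expected request timeout to 1 second.
- Update the routing rule in the configuration file.

    - match: { prefix: "/" }
      route:
        auto_host_rewrite: true
        cluster: httpbin_service
        timeout: 1s

- Run it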

    # docker run -p 15000:15000 --name proxy --link httpbin envoyproxy/envoy:v1.19.0 --config-yaml "$(cat ch3/simple_change_timeout.yaml)"
    cat ch3/simple_change_timeout.yaml
    docker run --name proxy --link httpbin envoyproxy/envoy:v1.19.0 --config-yaml "$(cat ch3/simple_change_timeout.yaml)"
    docker ps
    CONTAINER ID   IMAGE                      COMMAND                  CREATED          STATUS          PORTS       NAMES
    e20c923154e8   envoyproxy/envoy:v1.19.0   "/docker-entrypoint.…"   16 seconds ago   Up 15 seconds   10000/tcp   proxy
    11f27618ecfe   mccutchen/go-httpbin       "/bin/go-httpbin"        28 minutes ago   Up 28 minutes   8080/tcp    httpbin

    # Verify the changed timeout setting
    docker run -it --rm --link proxy curlimages/curl curl -X GET http://proxy:15001/headers
    {
      "headers": {
        "Accept": [
          "*/*"
        ],
        "Host": [
          "httpbin"
        ],
        "User-Agent": [
          "curl/8.13.0"
        ],
        "X-Envoy-Expected-Rq-Timeout-Ms": [
          "1000"    ✅ 1000 ms = 1 second
        ],
        "X-Forwarded-Proto": [
          "http"
        ],
        "X-Request-Id": [
          "b1ba5664-dc66-4b43-b25e-15c31ff022a5"
        ]
      }
    }

    # Additional test: enable debug logging through the Envoy Admin API (TCP 15000), then test delayed responses
    docker run -it --rm --link proxy curlimages/curl curl -X POST http://proxy:15000/logging
    active loggers:
      admin: info
      aws: info
      assert: info
      backtrace: info
      cache_filter: info
      client: info
      config: info
      connection: info
      conn_handler: info
      decompression: info
      dubbo: info
      envoy_bug: info
      ext_authz: info
      rocketmq: info
      file: info
      filter: info
      forward_proxy: info
      grpc: info
      hc: info
      health_checker: info
      http: info
      http2: info
      hystrix: info
      init: info
      io: info
      jwt: info
      kafka: info
      lua: info
      main: info
      matcher: info
      misc: info
      mongo: info
      quic: info
      quic_stream: info
      pool: info
      rbac: info
      redis: info
      router: info
      runtime: info
      stats: info
      secret: info
      tap: info
      testing: info
      thrift: info
      tracing: info
      upstream: info
      udp: info
      wasm: info

    docker run -it --rm --link proxy curlimages/curl curl -X POST "http://proxy:15000/logging?http=debug"
    http: debug

    [2025-04-19 02:28:04.049][1][debug][http] [source/common/http/conn_manager_impl.cc:1456] [C5][S7246239971882766351] encoding headers via codec (end_stream=false):
    ':status', '200'
    'content-type', 'text/plain; charset=UTF-8'
    'cache-control', 'no-cache, max-age=0'
    'x-content-type-options', 'nosniff'
    'date', 'Sat, 19 Apr 2025 02:28:04 GMT'
    'server', 'envoy'

    docker run -it --rm --link proxy curlimages/curl curl -X GET http://proxy:15001/delay/0.5
    {
      "args": {},
      "headers": {
        "Accept": [
          "*/*"
        ],
        "Host": [
          "httpbin"
        ],
        "User-Agent": [
          "curl/8.13.0"
        ],
        "X-Envoy-Expected-Rq-Timeout-Ms": [
          "1000"
        ],
        "X-Forwarded-Proto": [
          "http"
        ],
        "X-Request-Id": [
          "52de6634-be18-4c61-8f4f-7f064317fd36"
        ]
      },
      "method": "GET",
      "origin": "172.17.0.3:38458",
      "url": "http://httpbin/delay/0.5",
      "data": "",
      "files": {},
      "form": {},
      "json": null
    }

    docker run -it --rm --link proxy curlimages/curl curl -X GET http://proxy:15001/delay/1
    upstream request timeout

    docker run -it --rm --link proxy curlimages/curl curl -X GET http://proxy:15001/delay/2
    upstream request timeout

1.3.2 Envoy’s Admin API

- Envoy's Admin API gives better insight into how the proxy is behaving and provides access to its metrics and configuration.
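The /stats endpoint used in this section returns plain text with one `name: value` pair per line, which makes it easy to post-process. The sketch below parses such text into a dictionary and filters it by prefix. `parse_stats` and `with_prefix` are hypothetical helpers for illustration; lines whose value is not an integer (histogram summaries, for example) are skipped rather than interpreted.

```python
def parse_stats(text):
    """Parse 'name: value' lines into a dict, skipping non-integer values."""
    stats = {}
    for line in text.splitlines():
        name, sep, value = line.partition(": ")
        if not sep:
            continue  # blank line or a line without a 'name: value' shape
        try:
            stats[name.strip()] = int(value)
        except ValueError:
            continue  # not a plain counter or gauge
    return stats


def with_prefix(stats, prefix):
    """Keep only the stats whose name starts with the given prefix."""
    return {k: v for k, v in stats.items() if k.startswith(prefix)}


if __name__ == "__main__":
    # A few lines in the same format as the /stats response.
    sample = (
        "cluster.httpbin_service.membership_healthy: 1\n"
        "cluster.httpbin_service.upstream_rq_timeout: 0\n"
        "http.admin.downstream_rq_total: 1\n"
    )
    print(with_prefix(parse_stats(sample), "cluster.httpbin_service."))
```

In practice the text would come from the admin port (15000 in this lab) instead of a literal string.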

    docker run --name proxy --link httpbin envoyproxy/envoy:v1.19.0 --config-yaml "$(cat ch3/simple_change_timeout.yaml)"

    # Check Envoy stats through the Admin API: the response contains statistics and metrics for listeners, clusters, and the server
    docker run -it --rm --link proxy curlimages/curl curl -X GET http://proxy:15000/stats

cluster.httpbin_service.assignment_stale: 0

cluster.httpbin_service.assignment_timeout_received: 0

cluster.httpbin_service.bind_errors: 0

cluster.httpbin_service.circuit_breakers.default.cx_open: 0

cluster.httpbin_service.circuit_breakers.default.cx_pool_open: 0

cluster.httpbin_service.circuit_breakers.default.rq_open: 0

cluster.httpbin_service.circuit_breakers.default.rq_pending_open: 0

cluster.httpbin_service.circuit_breakers.default.rq_retry_open: 0

cluster.httpbin_service.circuit_breakers.high.cx_open: 0

cluster.httpbin_service.circuit_breakers.high.cx_pool_open: 0

cluster.httpbin_service.circuit_breakers.high.rq_open: 0

cluster.httpbin_service.circuit_breakers.high.rq_pending_open: 0

cluster.httpbin_service.circuit_breakers.high.rq_retry_open: 0

cluster.httpbin_service.default.total_match_count: 1

cluster.httpbin_service.lb_healthy_panic: 0

cluster.httpbin_service.lb_local_cluster_not_ok: 0

cluster.httpbin_service.lb_recalculate_zone_structures: 0

cluster.httpbin_service.lb_subsets_active: 0

cluster.httpbin_service.lb_subsets_created: 0

cluster.httpbin_service.lb_subsets_fallback: 0

cluster.httpbin_service.lb_subsets_fallback_panic: 0

cluster.httpbin_service.lb_subsets_removed: 0

cluster.httpbin_service.lb_subsets_selected: 0

cluster.httpbin_service.lb_zone_cluster_too_small: 0

cluster.httpbin_service.lb_zone_no_capacity_left: 0

cluster.httpbin_service.lb_zone_number_differs: 0

cluster.httpbin_service.lb_zone_routing_all_directly: 0

cluster.httpbin_service.lb_zone_routing_cross_zone: 0

cluster.httpbin_service.lb_zone_routing_sampled: 0

cluster.httpbin_service.max_host_weight: 0

cluster.httpbin_service.membership_change: 1

cluster.httpbin_service.membership_degraded: 0

cluster.httpbin_service.membership_excluded: 0

cluster.httpbin_service.membership_healthy: 1

cluster.httpbin_service.membership_total: 1

cluster.httpbin_service.original_dst_host_invalid: 0

cluster.httpbin_service.retry_or_shadow_abandoned: 0

cluster.httpbin_service.update_attempt: 4

cluster.httpbin_service.update_empty: 0

cluster.httpbin_service.update_failure: 0

cluster.httpbin_service.update_no_rebuild: 0

cluster.httpbin_service.update_success: 4

cluster.httpbin_service.upstream_cx_active: 0

cluster.httpbin_service.upstream_cx_close_notify: 0

cluster.httpbin_service.upstream_cx_connect_attempts_exceeded: 0

cluster.httpbin_service.upstream_cx_connect_fail: 0

cluster.httpbin_service.upstream_cx_connect_timeout: 0

cluster.httpbin_service.upstream_cx_destroy: 0

cluster.httpbin_service.upstream_cx_destroy_local: 0

cluster.httpbin_service.upstream_cx_destroy_local_with_active_rq: 0

cluster.httpbin_service.upstream_cx_destroy_remote: 0

cluster.httpbin_service.upstream_cx_destroy_remote_with_active_rq: 0

cluster.httpbin_service.upstream_cx_destroy_with_active_rq: 0

cluster.httpbin_service.upstream_cx_http1_total: 0

cluster.httpbin_service.upstream_cx_http2_total: 0

cluster.httpbin_service.upstream_cx_http3_total: 0

cluster.httpbin_service.upstream_cx_idle_timeout: 0

cluster.httpbin_service.upstream_cx_max_requests: 0

cluster.httpbin_service.upstream_cx_none_healthy: 0

cluster.httpbin_service.upstream_cx_overflow: 0

cluster.httpbin_service.upstream_cx_pool_overflow: 0

cluster.httpbin_service.upstream_cx_protocol_error: 0

cluster.httpbin_service.upstream_cx_rx_bytes_buffered: 0

cluster.httpbin_service.upstream_cx_rx_bytes_total: 0

cluster.httpbin_service.upstream_cx_total: 0

cluster.httpbin_service.upstream_cx_tx_bytes_buffered: 0

cluster.httpbin_service.upstream_cx_tx_bytes_total: 0

cluster.httpbin_service.upstream_flow_control_backed_up_total: 0

cluster.httpbin_service.upstream_flow_control_drained_total: 0

cluster.httpbin_service.upstream_flow_control_paused_reading_total: 0

cluster.httpbin_service.upstream_flow_control_resumed_reading_total: 0

cluster.httpbin_service.upstream_internal_redirect_failed_total: 0

cluster.httpbin_service.upstream_internal_redirect_succeeded_total: 0

cluster.httpbin_service.upstream_rq_active: 0

cluster.httpbin_service.upstream_rq_cancelled: 0

cluster.httpbin_service.upstream_rq_completed: 0

cluster.httpbin_service.upstream_rq_maintenance_mode: 0

cluster.httpbin_service.upstream_rq_max_duration_reached: 0

cluster.httpbin_service.upstream_rq_pending_active: 0

cluster.httpbin_service.upstream_rq_pending_failure_eject: 0

cluster.httpbin_service.upstream_rq_pending_overflow: 0

cluster.httpbin_service.upstream_rq_pending_total: 0

cluster.httpbin_service.upstream_rq_per_try_timeout: 0

cluster.httpbin_service.upstream_rq_retry: 0

cluster.httpbin_service.upstream_rq_retry_backoff_exponential: 0

cluster.httpbin_service.upstream_rq_retry_backoff_ratelimited: 0

cluster.httpbin_service.upstream_rq_retry_limit_exceeded: 0

cluster.httpbin_service.upstream_rq_retry_overflow: 0

cluster.httpbin_service.upstream_rq_retry_success: 0

cluster.httpbin_service.upstream_rq_rx_reset: 0

cluster.httpbin_service.upstream_rq_timeout: 0

cluster.httpbin_service.upstream_rq_total: 0

cluster.httpbin_service.upstream_rq_tx_reset: 0

cluster.httpbin_service.version: 0

cluster_manager.active_clusters: 1

cluster_manager.cluster_added: 1

cluster_manager.cluster_modified: 0

cluster_manager.cluster_removed: 0

cluster_manager.cluster_updated: 0

cluster_manager.cluster_updated_via_merge: 0

cluster_manager.update_merge_cancelled: 0

cluster_manager.update_out_of_merge_window: 0

cluster_manager.warming_clusters: 0

filesystem.flushed_by_timer: 0

filesystem.reopen_failed: 0

filesystem.write_buffered: 0

filesystem.write_completed: 0

filesystem.write_failed: 0

filesystem.write_total_buffered: 0

http.admin.downstream_cx_active: 1

http.admin.downstream_cx_delayed_close_timeout: 0

http.admin.downstream_cx_destroy: 0

http.admin.downstream_cx_destroy_active_rq: 0

http.admin.downstream_cx_destroy_local: 0

http.admin.downstream_cx_destroy_local_active_rq: 0

http.admin.downstream_cx_destroy_remote: 0

http.admin.downstream_cx_destroy_remote_active_rq: 0

http.admin.downstream_cx_drain_close: 0

http.admin.downstream_cx_http1_active: 1

http.admin.downstream_cx_http1_total: 1

http.admin.downstream_cx_http2_active: 0

http.admin.downstream_cx_http2_total: 0

http.admin.downstream_cx_http3_active: 0

http.admin.downstream_cx_http3_total: 0

http.admin.downstream_cx_idle_timeout: 0

http.admin.downstream_cx_max_duration_reached: 0

http.admin.downstream_cx_overload_disable_keepalive: 0

http.admin.downstream_cx_protocol_error: 0

http.admin.downstream_cx_rx_bytes_buffered: 80

http.admin.downstream_cx_rx_bytes_total: 80

http.admin.downstream_cx_ssl_active: 0

http.admin.downstream_cx_ssl_total: 0

http.admin.downstream_cx_total: 1

http.admin.downstream_cx_tx_bytes_buffered: 0

http.admin.downstream_cx_tx_bytes_total: 0

http.admin.downstream_cx_upgrades_active: 0

http.admin.downstream_cx_upgrades_total: 0

http.admin.downstream_flow_control_paused_reading_total: 0

http.admin.downstream_flow_control_resumed_reading_total: 0

http.admin.downstream_rq_1xx: 0

http.admin.downstream_rq_2xx: 0

http.admin.downstream_rq_3xx: 0

http.admin.downstream_rq_4xx: 0

http.admin.downstream_rq_5xx: 0

http.admin.downstream_rq_active: 1

http.admin.downstream_rq_completed: 0

http.admin.downstream_rq_failed_path_normalization: 0

http.admin.downstream_rq_header_timeout: 0

http.admin.downstream_rq_http1_total: 1

http.admin.downstream_rq_http2_total: 0

http.admin.downstream_rq_http3_total: 0

http.admin.downstream_rq_idle_timeout: 0

http.admin.downstream_rq_max_duration_reached: 0

http.admin.downstream_rq_non_relative_path: 0

http.admin.downstream_rq_overload_close: 0

http.admin.downstream_rq_redirected_with_normalized_path: 0

http.admin.downstream_rq_rejected_via_ip_detection: 0

http.admin.downstream_rq_response_before_rq_complete: 0

http.admin.downstream_rq_rx_reset: 0

http.admin.downstream_rq_timeout: 0

http.admin.downstream_rq_too_large: 0

http.admin.downstream_rq_total: 1

http.admin.downstream_rq_tx_reset: 0

http.admin.downstream_rq_ws_on_non_ws_route: 0

http.admin.rs_too_large: 0

http.async-client.no_cluster: 0

http.async-client.no_route: 0

http.async-client.passthrough_internal_redirect_bad_location: 0

http.async-client.passthrough_internal_redirect_no_route: 0

http.async-client.passthrough_internal_redirect_predicate: 0

http.async-client.passthrough_internal_redirect_too_many_redirects: 0

http.async-client.passthrough_internal_redirect_unsafe_scheme: 0

http.async-client.rq_direct_response: 0

http.async-client.rq_redirect: 0

http.async-client.rq_reset_after_downstream_response_started: 0

http.async-client.rq_total: 0

http.ingress_http.downstream_cx_active: 0

http.ingress_http.downstream_cx_delayed_close_timeout: 0

http.ingress_http.downstream_cx_destroy: 0

http.ingress_http.downstream_cx_destroy_active_rq: 0

http.ingress_http.downstream_cx_destroy_local: 0

http.ingress_http.downstream_cx_destroy_local_active_rq: 0

http.ingress_http.downstream_cx_destroy_remote: 0

http.ingress_http.downstream_cx_destroy_remote_active_rq: 0

http.ingress_http.downstream_cx_drain_close: 0

http.ingress_http.downstream_cx_http1_active: 0

http.ingress_http.downstream_cx_http1_total: 0

http.ingress_http.downstream_cx_http2_active: 0

http.ingress_http.downstream_cx_http2_total: 0

http.ingress_http.downstream_cx_http3_active: 0

http.ingress_http.downstream_cx_http3_total: 0

http.ingress_http.downstream_cx_idle_timeout: 0

http.ingress_http.downstream_cx_max_duration_reached: 0

http.ingress_http.downstream_cx_overload_disable_keepalive: 0

http.ingress_http.downstream_cx_protocol_error: 0

http.ingress_http.downstream_cx_rx_bytes_buffered: 0

http.ingress_http.downstream_cx_rx_bytes_total: 0

http.ingress_http.downstream_cx_ssl_active: 0

http.ingress_http.downstream_cx_ssl_total: 0

http.ingress_http.downstream_cx_total: 0

http.ingress_http.downstream_cx_tx_bytes_buffered: 0

http.ingress_http.downstream_cx_tx_bytes_total: 0

http.ingress_http.downstream_cx_upgrades_active: 0

http.ingress_http.downstream_cx_upgrades_total: 0

http.ingress_http.downstream_flow_control_paused_reading_total: 0

http.ingress_http.downstream_flow_control_resumed_reading_total: 0

http.ingress_http.downstream_rq_1xx: 0

http.ingress_http.downstream_rq_2xx: 0

http.ingress_http.downstream_rq_3xx: 0

http.ingress_http.downstream_rq_4xx: 0

http.ingress_http.downstream_rq_5xx: 0

http.ingress_http.downstream_rq_active: 0

http.ingress_http.downstream_rq_completed: 0

http.ingress_http.downstream_rq_failed_path_normalization: 0

http.ingress_http.downstream_rq_header_timeout: 0

http.ingress_http.downstream_rq_http1_total: 0

http.ingress_http.downstream_rq_http2_total: 0

http.ingress_http.downstream_rq_http3_total: 0

http.ingress_http.downstream_rq_idle_timeout: 0

http.ingress_http.downstream_rq_max_duration_reached: 0

http.ingress_http.downstream_rq_non_relative_path: 0

http.ingress_http.downstream_rq_overload_close: 0

http.ingress_http.downstream_rq_redirected_with_normalized_path: 0

http.ingress_http.downstream_rq_rejected_via_ip_detection: 0

http.ingress_http.downstream_rq_response_before_rq_complete: 0

http.ingress_http.downstream_rq_rx_reset: 0

http.ingress_http.downstream_rq_timeout: 0

http.ingress_http.downstream_rq_too_large: 0

http.ingress_http.downstream_rq_total: 0

http.ingress_http.downstream_rq_tx_reset: 0

http.ingress_http.downstream_rq_ws_on_non_ws_route: 0

http.ingress_http.no_cluster: 0

http.ingress_http.no_route: 0

http.ingress_http.passthrough_internal_redirect_bad_location: 0

http.ingress_http.passthrough_internal_redirect_no_route: 0

http.ingress_http.passthrough_internal_redirect_predicate: 0

http.ingress_http.passthrough_internal_redirect_too_many_redirects: 0

http.ingress_http.passthrough_internal_redirect_unsafe_scheme: 0

http.ingress_http.rq_direct_response: 0

http.ingress_http.rq_redirect: 0

http.ingress_http.rq_reset_after_downstream_response_started: 0

http.ingress_http.rq_total: 0

http.ingress_http.rs_too_large: 0

http.ingress_http.tracing.client_enabled: 0

http.ingress_http.tracing.health_check: 0

http.ingress_http.tracing.not_traceable: 0

http.ingress_http.tracing.random_sampling: 0

http.ingress_http.tracing.service_forced: 0

http1.dropped_headers_with_underscores: 0

http1.metadata_not_supported_error: 0

http1.requests_rejected_with_underscores_in_headers: 0

http1.response_flood: 0

listener.0.0.0.0_15001.downstream_cx_active: 0

listener.0.0.0.0_15001.downstream_cx_destroy: 0

listener.0.0.0.0_15001.downstream_cx_overflow: 0

listener.0.0.0.0_15001.downstream_cx_overload_reject: 0

listener.0.0.0.0_15001.downstream_cx_total: 0

listener.0.0.0.0_15001.downstream_global_cx_overflow: 0

listener.0.0.0.0_15001.downstream_pre_cx_active: 0

listener.0.0.0.0_15001.downstream_pre_cx_timeout: 0

listener.0.0.0.0_15001.http.ingress_http.downstream_rq_1xx: 0

listener.0.0.0.0_15001.http.ingress_http.downstream_rq_2xx: 0

listener.0.0.0.0_15001.http.ingress_http.downstream_rq_3xx: 0

listener.0.0.0.0_15001.http.ingress_http.downstream_rq_4xx: 0

listener.0.0.0.0_15001.http.ingress_http.downstream_rq_5xx: 0

listener.0.0.0.0_15001.http.ingress_http.downstream_rq_completed: 0

listener.0.0.0.0_15001.no_filter_chain_match: 0

listener.0.0.0.0_15001.worker_0.downstream_cx_active: 0

listener.0.0.0.0_15001.worker_0.downstream_cx_total: 0

listener.0.0.0.0_15001.worker_1.downstream_cx_active: 0

listener.0.0.0.0_15001.worker_1.downstream_cx_total: 0

listener.0.0.0.0_15001.worker_2.downstream_cx_active: 0

listener.0.0.0.0_15001.worker_2.downstream_cx_total: 0

listener.0.0.0.0_15001.worker_3.downstream_cx_active: 0

listener.0.0.0.0_15001.worker_3.downstream_cx_total: 0

listener.0.0.0.0_15001.worker_4.downstream_cx_active: 0

listener.0.0.0.0_15001.worker_4.downstream_cx_total: 0

listener.0.0.0.0_15001.worker_5.downstream_cx_active: 0

listener.0.0.0.0_15001.worker_5.downstream_cx_total: 0

listener.admin.downstream_cx_active: 1

listener.admin.downstream_cx_destroy: 0

listener.admin.downstream_cx_overflow: 0

listener.admin.downstream_cx_overload_reject: 0

listener.admin.downstream_cx_total: 1

listener.admin.downstream_global_cx_overflow: 0

listener.admin.downstream_pre_cx_active: 0

listener.admin.downstream_pre_cx_timeout: 0

listener.admin.http.admin.downstream_rq_1xx: 0

listener.admin.http.admin.downstream_rq_2xx: 0

listener.admin.http.admin.downstream_rq_3xx: 0

listener.admin.http.admin.downstream_rq_4xx: 0

listener.admin.http.admin.downstream_rq_5xx: 0

listener.admin.http.admin.downstream_rq_completed: 0

listener.admin.main_thread.downstream_cx_active: 1

listener.admin.main_thread.downstream_cx_total: 1

listener.admin.no_filter_chain_match: 0

listener_manager.listener_added: 1

listener_manager.listener_create_failure: 0

listener_manager.listener_create_success: 6

listener_manager.listener_in_place_updated: 0

listener_manager.listener_modified: 0

listener_manager.listener_removed: 0

listener_manager.listener_stopped: 0

listener_manager.total_filter_chains_draining: 0

listener_manager.total_listeners_active: 1

listener_manager.total_listeners_draining: 0

listener_manager.total_listeners_warming: 0

listener_manager.workers_started: 1

main_thread.watchdog_mega_miss: 0

main_thread.watchdog_miss: 0

runtime.admin_overrides_active: 0

runtime.deprecated_feature_seen_since_process_start: 0

runtime.deprecated_feature_use: 0

runtime.load_error: 0

runtime.load_success: 1

runtime.num_keys: 0

runtime.num_layers: 0

runtime.override_dir_exists: 0

runtime.override_dir_not_exists: 1

server.compilation_settings.fips_mode: 0

server.concurrency: 6

server.days_until_first_cert_expiring: 2147483647

server.debug_assertion_failures: 0

server.dropped_stat_flushes: 0

server.dynamic_unknown_fields: 0

server.envoy_bug_failures: 0

server.hot_restart_epoch: 0

server.hot_restart_generation: 1

server.live: 1

server.main_thread.watchdog_mega_miss: 0

server.main_thread.watchdog_miss: 0

server.memory_allocated: 7612680

server.memory_heap_size: 12582912

server.memory_physical_size: 17900694

server.parent_connections: 0

server.seconds_until_first_ocsp_response_expiring: 0

server.state: 0

server.static_unknown_fields: 0

server.stats_recent_lookups: 1303

server.total_connections: 0

server.uptime: 15

server.version: 6880851

server.worker_0.watchdog_mega_miss: 0

server.worker_0.watchdog_miss: 0

server.worker_1.watchdog_mega_miss: 0

server.worker_1.watchdog_miss: 0

server.worker_2.watchdog_mega_miss: 0

server.worker_2.watchdog_miss: 0

server.worker_3.watchdog_mega_miss: 0

server.worker_3.watchdog_miss: 0

server.worker_4.watchdog_mega_miss: 0

server.worker_4.watchdog_miss: 0

server.worker_5.watchdog_mega_miss: 0

server.worker_5.watchdog_miss: 0

vhost.httpbin_local_service.vcluster.other.upstream_rq_retry: 0

vhost.httpbin_local_service.vcluster.other.upstream_rq_retry_limit_exceeded: 0

vhost.httpbin_local_service.vcluster.other.upstream_rq_retry_overflow: 0

vhost.httpbin_local_service.vcluster.other.upstream_rq_retry_success: 0

vhost.httpbin_local_service.vcluster.other.upstream_rq_timeout: 0

vhost.httpbin_local_service.vcluster.other.upstream_rq_total: 0

workers.watchdog_mega_miss: 0

workers.watchdog_miss: 0

cluster.httpbin_service.upstream_cx_connect_ms: No recorded values

cluster.httpbin_service.upstream_cx_length_ms: No recorded values

http.admin.downstream_cx_length_ms: No recorded values

http.admin.downstream_rq_time: No recorded values

http.ingress_http.downstream_cx_length_ms: No recorded values

http.ingress_http.downstream_rq_time: No recorded values

listener.0.0.0.0_15001.downstream_cx_length_ms: No recorded values

listener.admin.downstream_cx_length_ms: No recorded values

server.initialization_time_ms: P0(nan,3.0) P25(nan,3.025) P50(nan,3.05) P75(nan,3.075) P90(nan,3.09) P95(nan,3.095) P99(nan,3.099) P99.5(nan,3.0995) P99.9(nan,3.0999) P100(nan,3.1)

# Check only the retry statistics

docker run -it --rm --link proxy curlimages/curl curl -X GET http://proxy:15000/stats | grep retry

cluster.httpbin_service.circuit_breakers.default.rq_retry_open: 0

cluster.httpbin_service.circuit_breakers.high.rq_retry_open: 0

cluster.httpbin_service.retry_or_shadow_abandoned: 0

cluster.httpbin_service.upstream_rq_retry: 0

cluster.httpbin_service.upstream_rq_retry_backoff_exponential: 0

cluster.httpbin_service.upstream_rq_retry_backoff_ratelimited: 0

cluster.httpbin_service.upstream_rq_retry_limit_exceeded: 0

cluster.httpbin_service.upstream_rq_retry_overflow: 0

cluster.httpbin_service.upstream_rq_retry_success: 0

vhost.httpbin_local_service.vcluster.other.upstream_rq_retry: 0

vhost.httpbin_local_service.vcluster.other.upstream_rq_retry_limit_exceeded: 0

vhost.httpbin_local_service.vcluster.other.upstream_rq_retry_overflow: 0

vhost.httpbin_local_service.vcluster.other.upstream_rq_retry_success: 0

...

# Check a few of the other endpoints as well

docker run -it --rm --link proxy curlimages/curl curl -X GET http://proxy:15000/certs # certificates on the machine

{

"certificates": []

}

docker run -it --rm --link proxy curlimages/curl curl -X GET http://proxy:15000/clusters # clusters configured in Envoy

httpbin_service::observability_name::httpbin_service

httpbin_service::default_priority::max_connections::1024

httpbin_service::default_priority::max_pending_requests::1024

httpbin_service::default_priority::max_requests::1024

httpbin_service::default_priority::max_retries::3

httpbin_service::high_priority::max_connections::1024

httpbin_service::high_priority::max_pending_requests::1024

httpbin_service::high_priority::max_requests::1024

httpbin_service::high_priority::max_retries::3

httpbin_service::added_via_api::false

httpbin_service::172.17.0.2:8000::cx_active::0

httpbin_service::172.17.0.2:8000::cx_connect_fail::0

httpbin_service::172.17.0.2:8000::cx_total::0

httpbin_service::172.17.0.2:8000::rq_active::0

httpbin_service::172.17.0.2:8000::rq_error::0

httpbin_service::172.17.0.2:8000::rq_success::0

httpbin_service::172.17.0.2:8000::rq_timeout::0

httpbin_service::172.17.0.2:8000::rq_total::0

httpbin_service::172.17.0.2:8000::hostname::httpbin

httpbin_service::172.17.0.2:8000::health_flags::healthy

httpbin_service::172.17.0.2:8000::weight::1

httpbin_service::172.17.0.2:8000::region::

httpbin_service::172.17.0.2:8000::zone::

httpbin_service::172.17.0.2:8000::sub_zone::

httpbin_service::172.17.0.2:8000::canary::false

httpbin_service::172.17.0.2:8000::priority::0

httpbin_service::172.17.0.2:8000::success_rate::-1.0

httpbin_service::172.17.0.2:8000::local_origin_success_rate::-1.0

docker run -it --rm --link proxy curlimages/curl curl -X GET http://proxy:15000/config_dump # Envoy configuration dump

{

"configs": [

{

"@type": "type.googleapis.com/envoy.admin.v3.BootstrapConfigDump",

"bootstrap": {

"node": {

"hidden_envoy_deprecated_build_version": "68fe53a889416fd8570506232052b06f5a531541/1.19.0/Clean/RELEASE/BoringSSL",

"user_agent_name": "envoy",

"user_agent_build_version": {

"version": {

"major_number": 1,

"minor_number": 19

},

"metadata": {

"build.type": "RELEASE",

"revision.sha": "68fe53a889416fd8570506232052b06f5a531541",

"revision.status": "Clean",

"ssl.version": "BoringSSL"

}

},

...

...

"type.googleapis.com/envoy.admin.v3.RoutesConfigDump",

"static_route_configs": [

{

"route_config": {

"@type": "type.googleapis.com/envoy.config.route.v3.RouteConfiguration",

"name": "httpbin_local_route",

"virtual_hosts": [

{

"name": "httpbin_local_service",

"domains": [

"*"

],

"routes": [

{

"match": {

"prefix": "/"

},

"route": {

"cluster": "httpbin_service",

"auto_host_rewrite": true,

"timeout": "1s"

}

}

]

}

]

},

"last_updated": "2025-04-19T02:43:16.053Z"

}

]

},

{

"@type": "type.googleapis.com/envoy.admin.v3.SecretsConfigDump"

}

]

}

docker run -it --rm --link proxy curlimages/curl curl -X GET http://proxy:15000/listeners # listeners configured in Envoy

httpbin-demo::0.0.0.0:15001

docker run -it --rm --link proxy curlimages/curl curl -X POST http://proxy:15000/logging # shows the current logging levels

docker run -it --rm --link proxy curlimages/curl curl -X POST "http://proxy:15000/logging?http=debug" # changes a logging level

docker run -it --rm --link proxy curlimages/curl curl -X GET http://proxy:15000/stats # Envoy statistics

docker run -it --rm --link proxy curlimages/curl curl -X GET http://proxy:15000/stats/prometheus # Envoy statistics (Prometheus record format)

# TYPE envoy_cluster_assignment_stale counter

envoy_cluster_assignment_stale{envoy_cluster_name="httpbin_service"} 0

# TYPE envoy_cluster_assignment_timeout_received counter

envoy_cluster_assignment_timeout_received{envoy_cluster_name="httpbin_service"} 0

# TYPE envoy_cluster_bind_errors counter

envoy_cluster_bind_errors{envoy_cluster_name="httpbin_service"} 0

# TYPE envoy_cluster_default_total_match_count counter

envoy_cluster_default_total_match_count{envoy_cluster_name="httpbin_service"} 1

...

envoy_server_initialization_time_ms_bucket{le="300000"} 1

envoy_server_initialization_time_ms_bucket{le="600000"} 1

envoy_server_initialization_time_ms_bucket{le="1800000"} 1

envoy_server_initialization_time_ms_bucket{le="3600000"} 1

envoy_server_initialization_time_ms_bucket{le="+Inf"} 1

envoy_server_initialization_time_ms_sum{} 3.049999999999999822364316059975

envoy_server_initialization_time_ms_count{} 1

1.3.3 Envoy request retries

- Let's deliberately make requests to httpbin fail and see how Envoy automatically retries them.

- First, update the configuration file to use retry_policy.

- match: { prefix: "/" }

  route:

    auto_host_rewrite: true

    cluster: httpbin_service

    retry_policy:

      retry_on: 5xx # retry on 5xx responses

      num_retries: 3 # number of retries

- Run the lab

#

docker rm -f proxy

#

cat ch3/simple_retry.yaml

docker run -p 15000:15000 --name proxy --link httpbin envoyproxy/envoy:v1.19.0 --config-yaml "$(cat ch3/simple_retry.yaml)"

docker run -it --rm --link proxy curlimages/curl curl -X POST "http://proxy:15000/logging?http=debug"

# Call the proxy on the /status/500 path: calling httpbin on this path returns an error

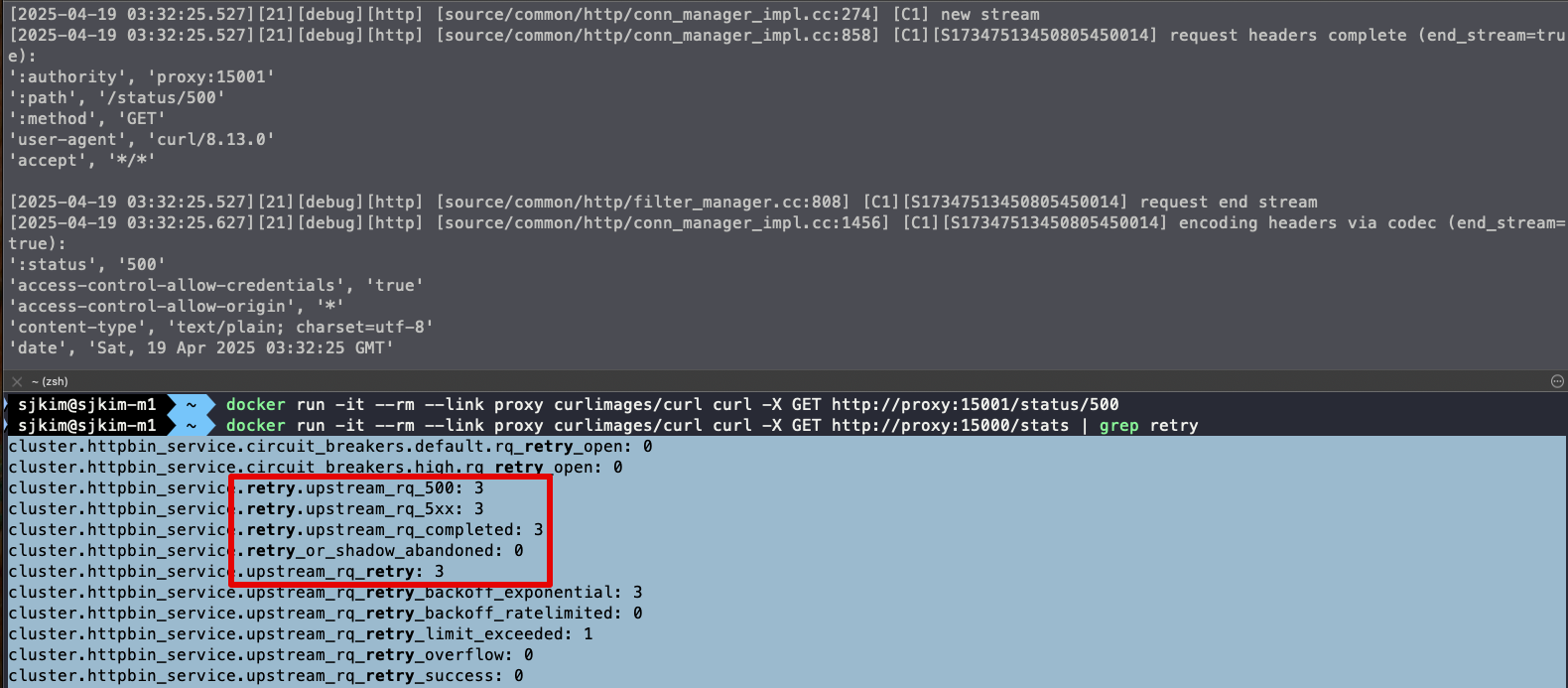

docker run -it --rm --link proxy curlimages/curl curl -X GET http://proxy:15001/status/500

# The call finishes without showing any response. Check the Envoy Admin API

docker run -it --rm --link proxy curlimages/curl curl -X GET http://proxy:15000/stats | grep retry

...

cluster.httpbin_service.retry.upstream_rq_500: 3

cluster.httpbin_service.retry.upstream_rq_5xx: 3

cluster.httpbin_service.retry.upstream_rq_completed: 3

cluster.httpbin_service.retry_or_shadow_abandoned: 0

cluster.httpbin_service.upstream_rq_retry: 3

...

🤔 Envoy received an HTTP 500 response when it called the upstream cluster httpbin.

👏 Envoy retried the request, which shows up in the statistics as cluster.httpbin_service.upstream_rq_retry: 3.
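When a lab step hinges on a single counter, it can be easier to pull that value out of the /stats text than to scan the grep output by eye. The sketch below is a hypothetical helper and not part of the original lab: `stat_value` is an illustrative name, and the sample lines are copied from the output above. It strips carriage returns because `docker run -t` allocates a TTY, which typically turns line endings into CRLF.

```shell
#!/usr/bin/env bash

# Hypothetical helper: print the value of one stat from Envoy's
# plain-text /stats output, read from stdin.
stat_value() {
  local name="$1"
  # Drop CRs (TTY output), then match the exact stat name before ": ".
  tr -d '\r' | awk -F': ' -v key="$name" '$1 == key { print $2 }'
}

# Sample lines copied from the lab output above.
sample_stats='cluster.httpbin_service.retry.upstream_rq_500: 3
cluster.httpbin_service.retry.upstream_rq_5xx: 3
cluster.httpbin_service.retry.upstream_rq_completed: 3
cluster.httpbin_service.retry_or_shadow_abandoned: 0
cluster.httpbin_service.upstream_rq_retry: 3'

retries="$(printf '%s\n' "$sample_stats" | stat_value cluster.httpbin_service.upstream_rq_retry)"
echo "retries=$retries"
```

Against a running proxy, the same function could presumably be fed from the lab's curl command in place of the sample text, for example `... curl -s http://proxy:15000/stats | stat_value cluster.httpbin_service.upstream_rq_retry`.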

-

이번 실습에서는 Envoy 프록시의 기본 기능을 직접 시연해보았다. Envoy는 애플리케이션 네트워크에 자동으로 신뢰성(Reliability)을 부여하며, 기본 설정만으로도 프록시, 라우팅, 필터링 등 다양한 기능을 수행할 수 있다.

-

실습에서는 Envoy의 기능을 추론하고 동작을 확인하기 위해 정적(static) 설정 파일을 활용했다. 이 정적 설정 방식은 설정 내용을 명확히 이해하고 기능을 세부적으로 조정하는 데 효과적이다. 다만, 규모가 커질수록 관리와 운영에 어려움이 있다.

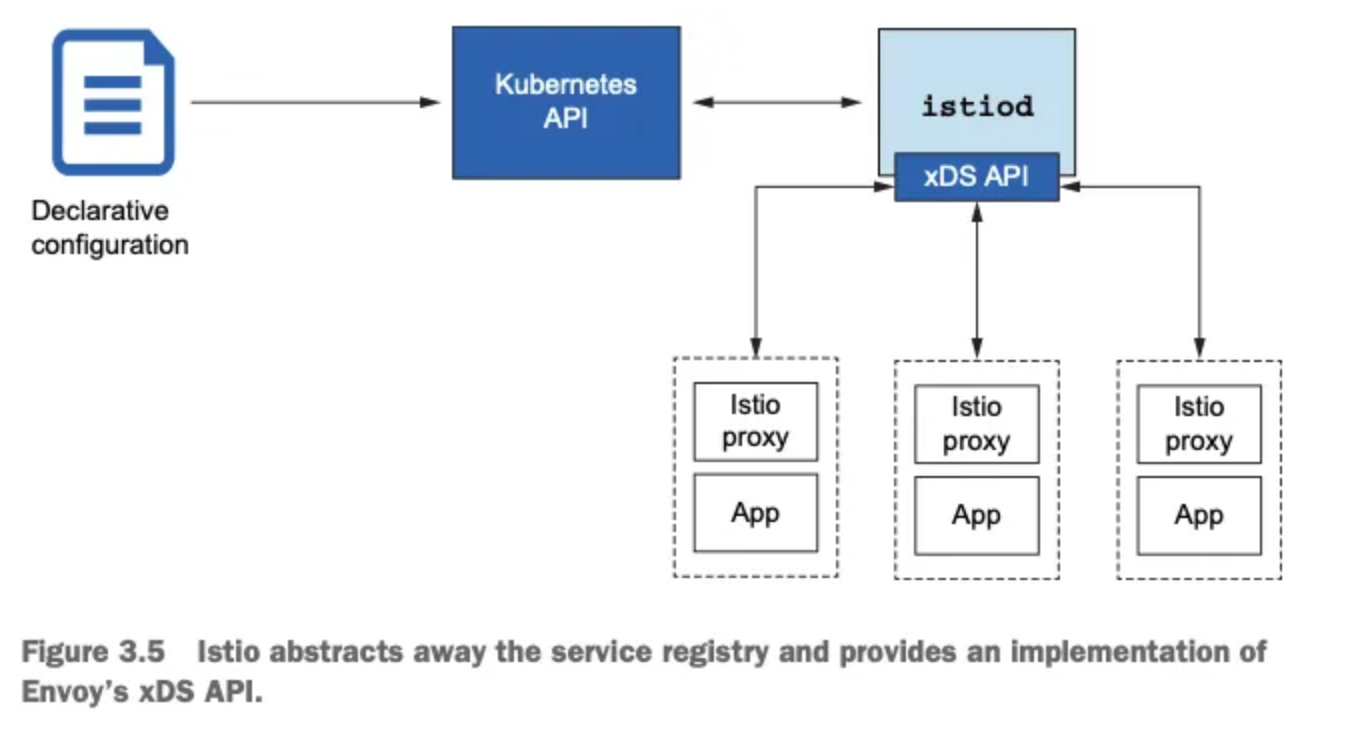

-

반면, Istio는 동적 설정(dynamic configuration) 기능을 통해 이러한 문제를 해결한다. Istio는 Envoy가 사용하는 설정들을 중앙에서 관리하며, xDS API 기반의 동적 구성을 활용하여 수십, 수백 개에 이르는 Envoy 프록시의 설정을 실시간으로 제어하고 자동화한다.

-

이러한 구조 덕분에 Istio는 복잡하고 규모가 큰 서비스 메시 환경에서도 유연하고 안정적인 네트워크 구성을 가능하게 하며, 운영자 개입 없이도 실시간으로 설정을 전파할 수 있다.

⛔️ 다음 실습을 위해 Envoy 종료 docker rm -f proxy && docker rm -f httpbin

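1.3.4 How does Envoy fit into Istio?

- Envoy fills the proxy role in Istio, and Istio complements it with a control plane and supporting features. From here on, Envoy is referred to as the "Istio proxy," and its capabilities are driven through the Istio API. Keep in mind, though, that most of those capabilities are actually implemented by Envoy.

- When building a service mesh, Envoy sits at the center as the proxy and handles the core functions. Envoy does not do everything on its own, however. Istio provides control plane components that complement and extend it.

- Envoy, the service mesh proxy

- Envoy is a high-performance L7 proxy that provides a wide range of features for routing, filtering, and observing traffic inside the service mesh. As the book also covers, most of Istio's core features run on top of Envoy.

- Envoy can be configured from a static file for basic setups, but through the xDS API its listeners, clusters, endpoints, and so on can be configured dynamically at runtime.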

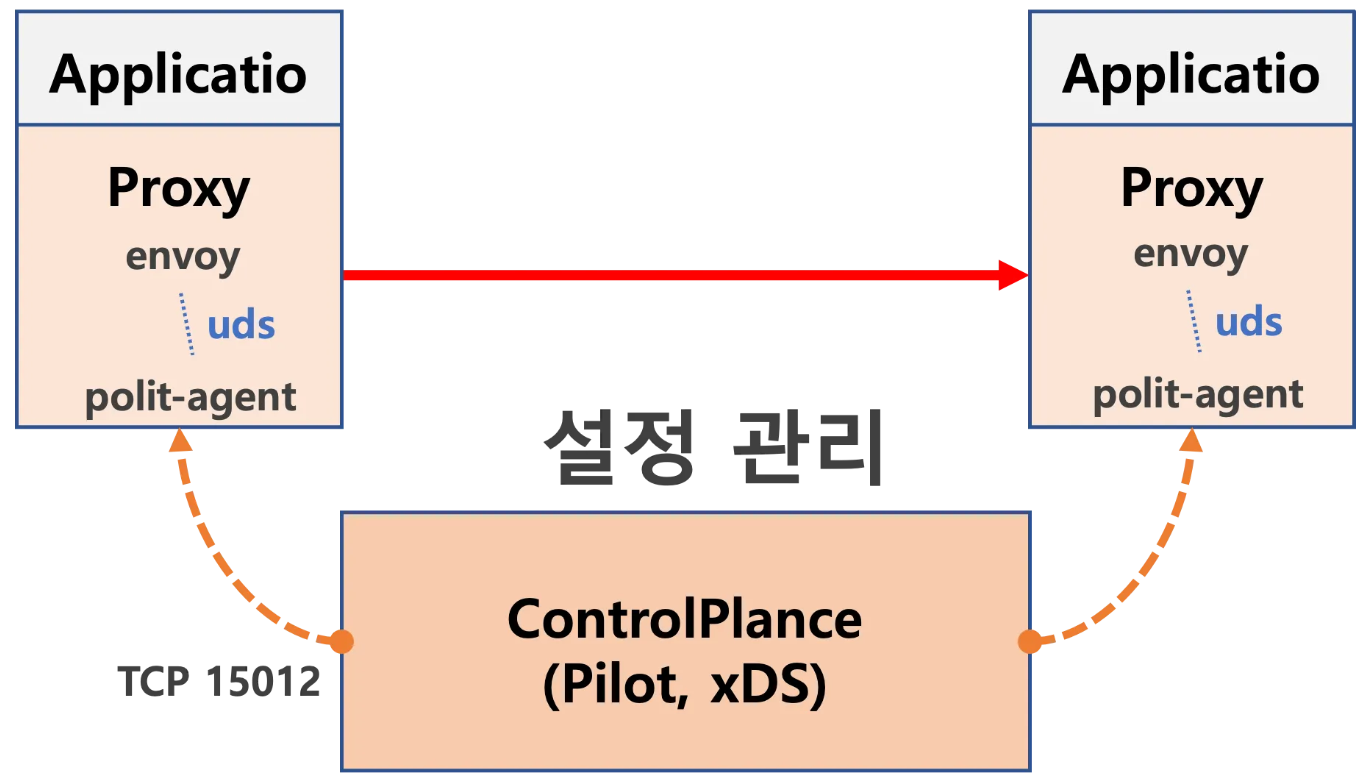

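- The role of the Istio control plane

- What makes this dynamic configuration possible is Istio's control plane, specifically istiod. istiod reads Istio resources such as VirtualService through the Kubernetes API and, based on them, pushes the appropriate configuration to the Envoy proxies dynamically.

- In other words, the user only has to define Kubernetes resources, and Istio interprets them and builds the matching Envoy configuration automatically.

- Service discovery: an abstracted implementation

- Envoy relies on a service registry for service discovery. In a Kubernetes environment Istio uses Kubernetes' own service registry, and this implementation detail is completely abstracted away from Envoy.

- Developers do not need to manipulate Envoy configuration directly, because Istio handles all of the translation and propagation in between.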

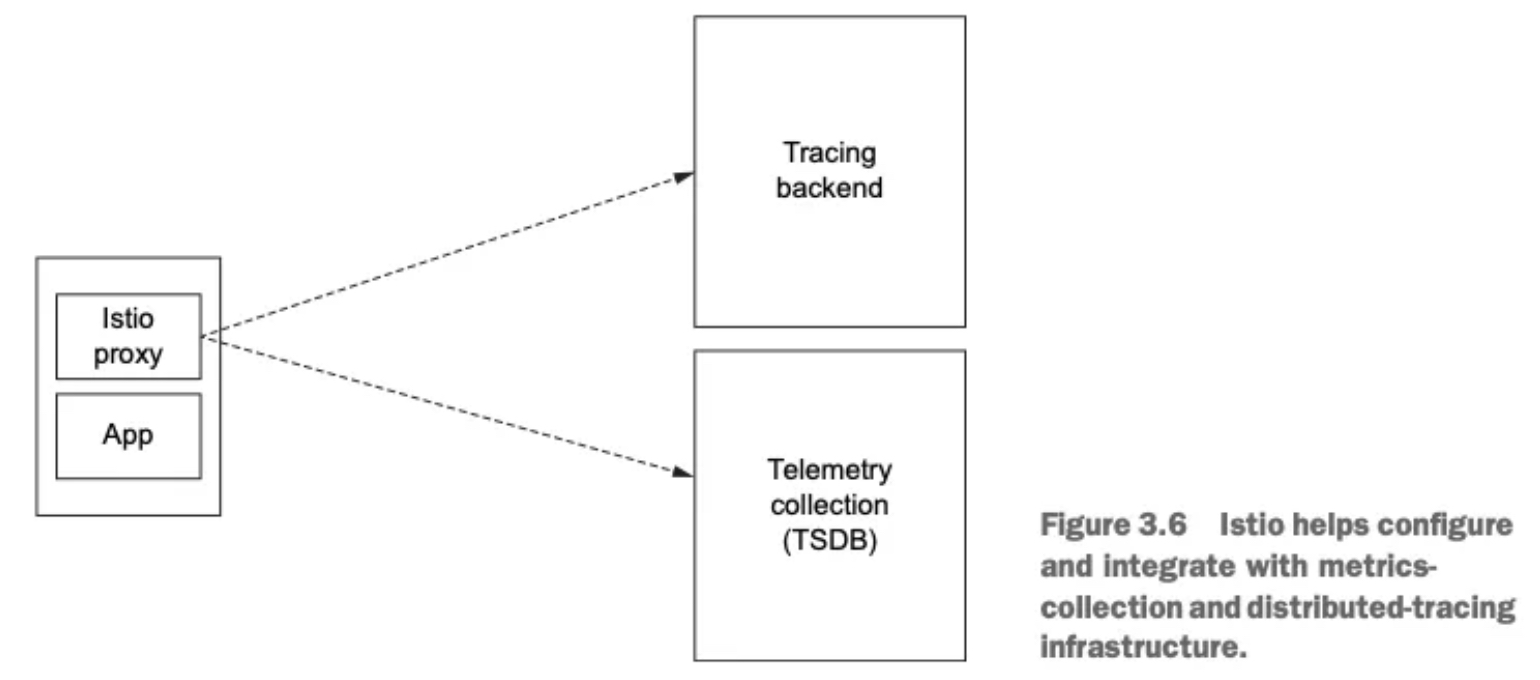

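- Telemetry and tracing

- Envoy can emit rich metrics and tracing data, but a receiving system is needed to collect and analyze that data. Istio provides configuration that makes it easy to integrate this telemetry with tools such as Prometheus, Jaeger, and Zipkin.

- Distributed tracing, which follows the flow of traffic across services, can also be set up easily through Istio, and it is a great help for operations and debugging.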

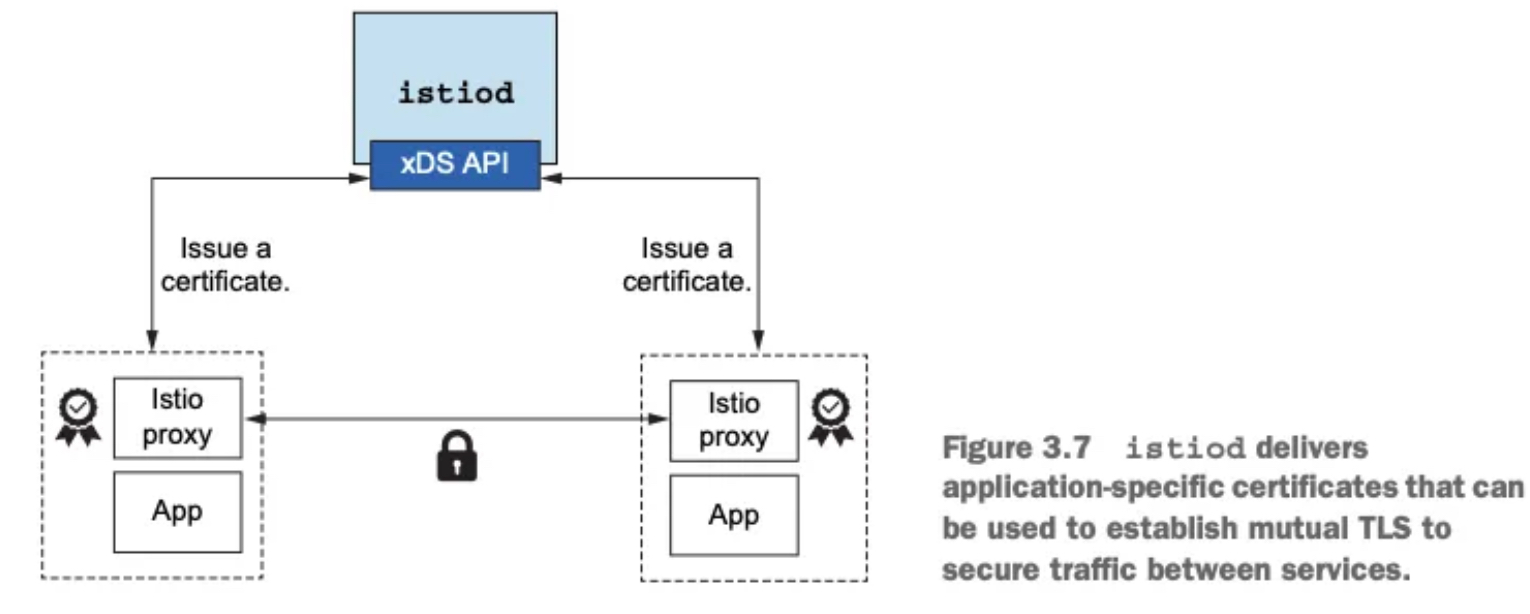

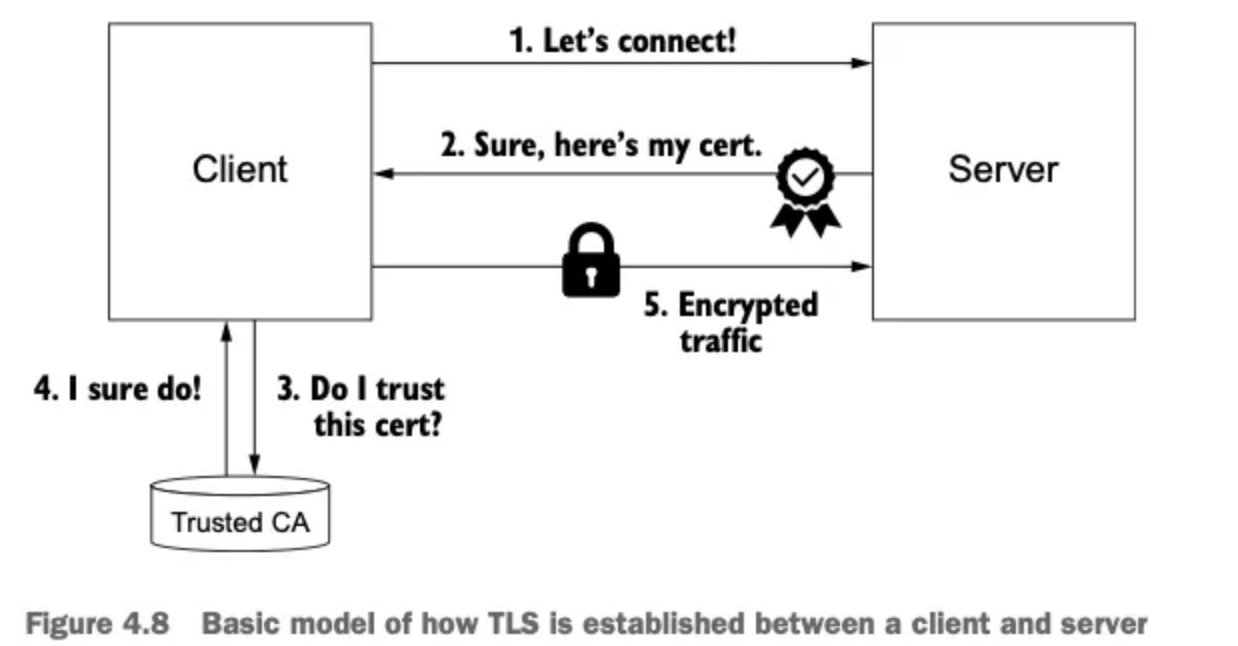

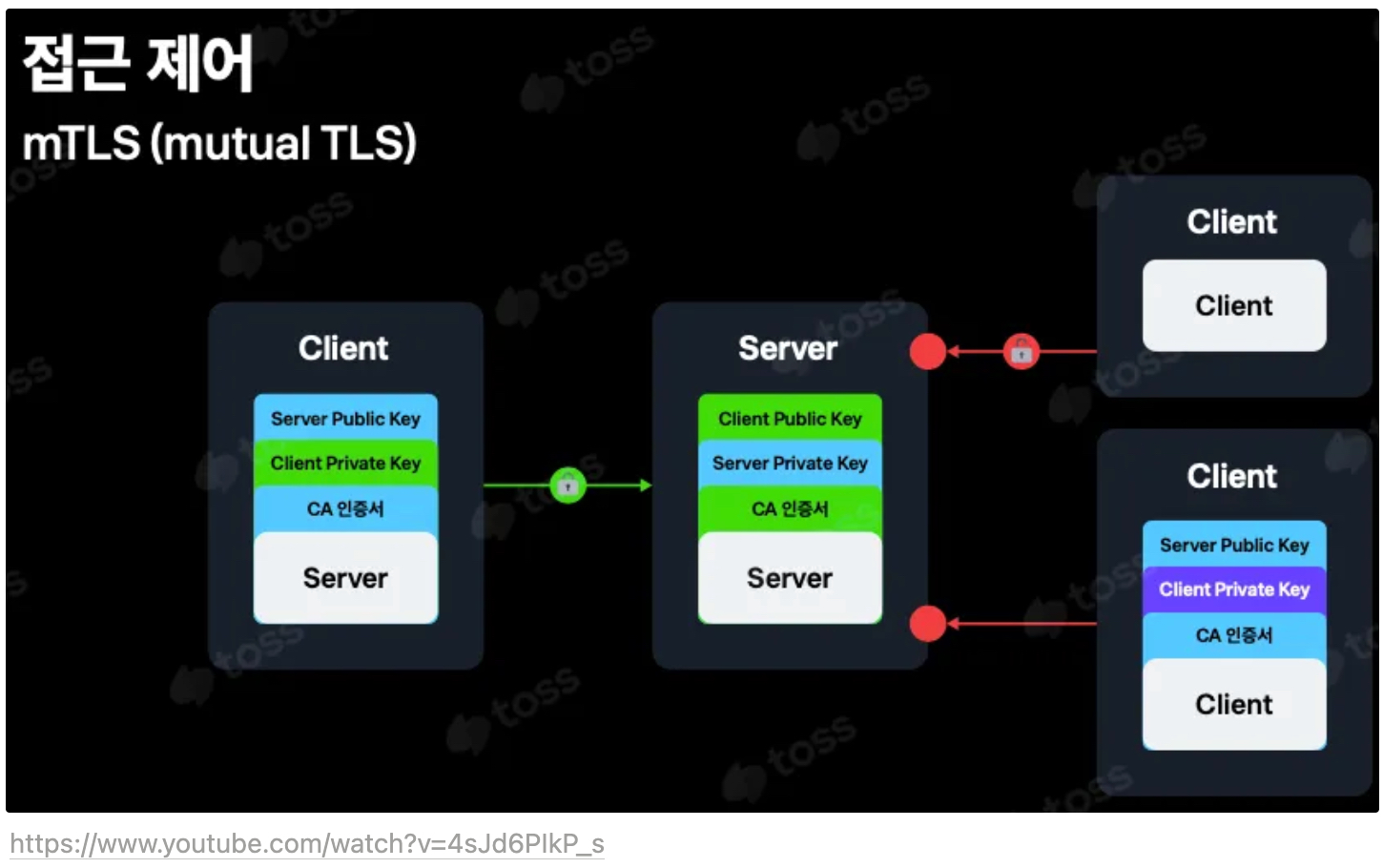

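- TLS traffic and certificate management

- Istio is also responsible for secure communication inside the service mesh. Envoy can terminate and originate TLS traffic, but doing so requires certificates to be issued, signed, and rotated. Istio ships with certificate management infrastructure that automates this process.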
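To make this division of labor concrete: the retry behavior that was configured by hand in the static Envoy file (retry_on: 5xx, num_retries: 3) would, in Istio, typically be declared on a VirtualService, which istiod then translates into Envoy route configuration. The following is a minimal sketch under assumptions: the resource name `httpbin-retry` and a Service called `httpbin` in the `istioinaction` namespace are hypothetical, and the script only writes and prints the manifest. Applying it is left as a commented command.

```shell
#!/usr/bin/env bash

# Write a VirtualService manifest to a temporary file.
# Names below (httpbin-retry, httpbin, istioinaction) are assumptions
# made for illustration only.
manifest="$(mktemp)"
cat > "$manifest" <<'EOF'
apiVersion: networking.istio.io/v1alpha3
kind: VirtualService
metadata:
  name: httpbin-retry
  namespace: istioinaction
spec:
  hosts:
  - httpbin
  http:
  - route:
    - destination:
        host: httpbin
    retries:
      attempts: 3      # counterpart of Envoy's num_retries: 3
      retryOn: 5xx     # counterpart of Envoy's retry_on: 5xx
EOF

cat "$manifest"

# To try it in a cluster with Istio installed (not run here):
#   kubectl apply -f "$manifest"
```

The point of the sketch is only the shape of the workflow: the user edits a Kubernetes resource, and no Envoy file is touched directly.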

2. Istio Gateway

- Getting traffic into a cluster

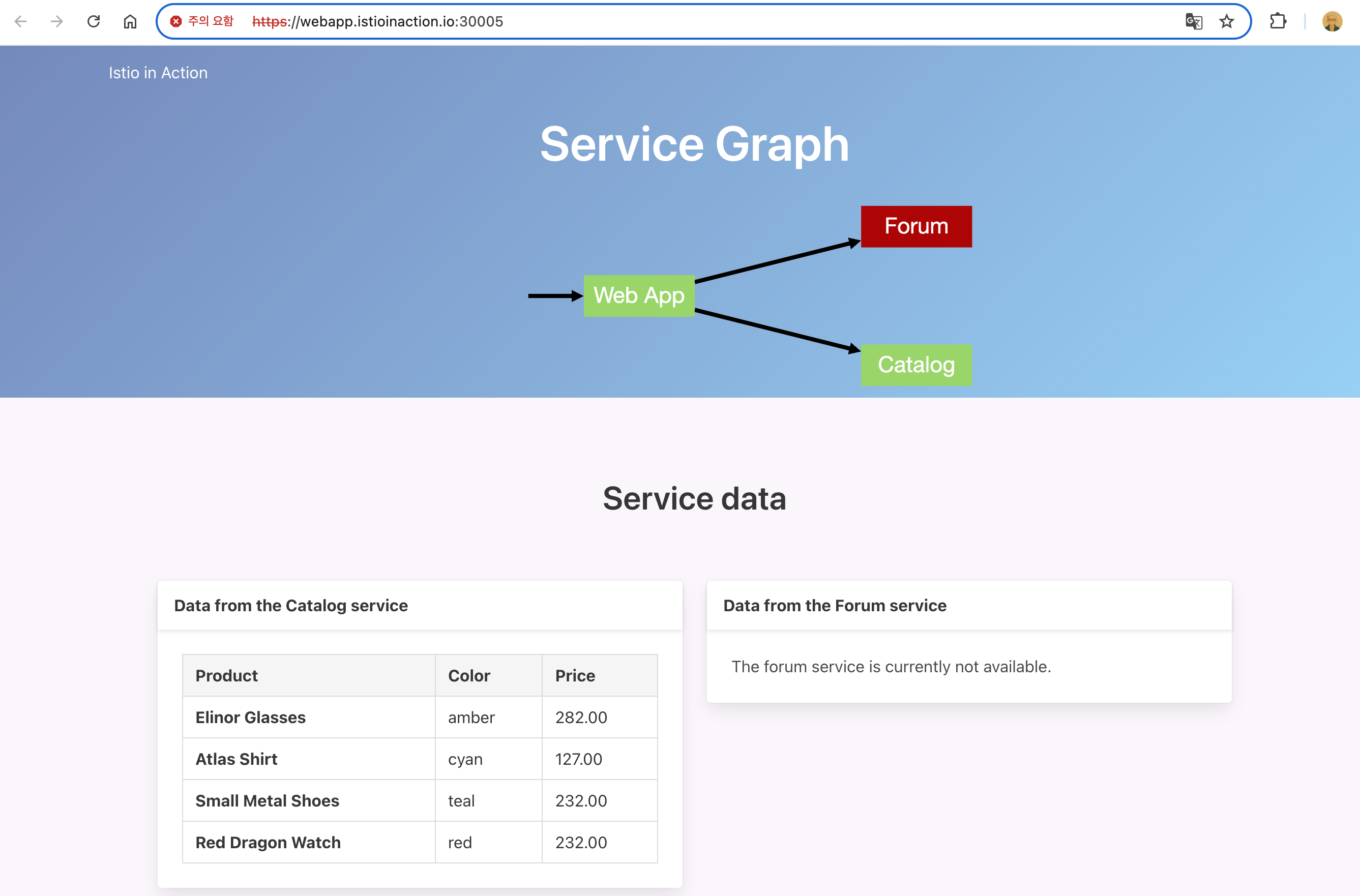

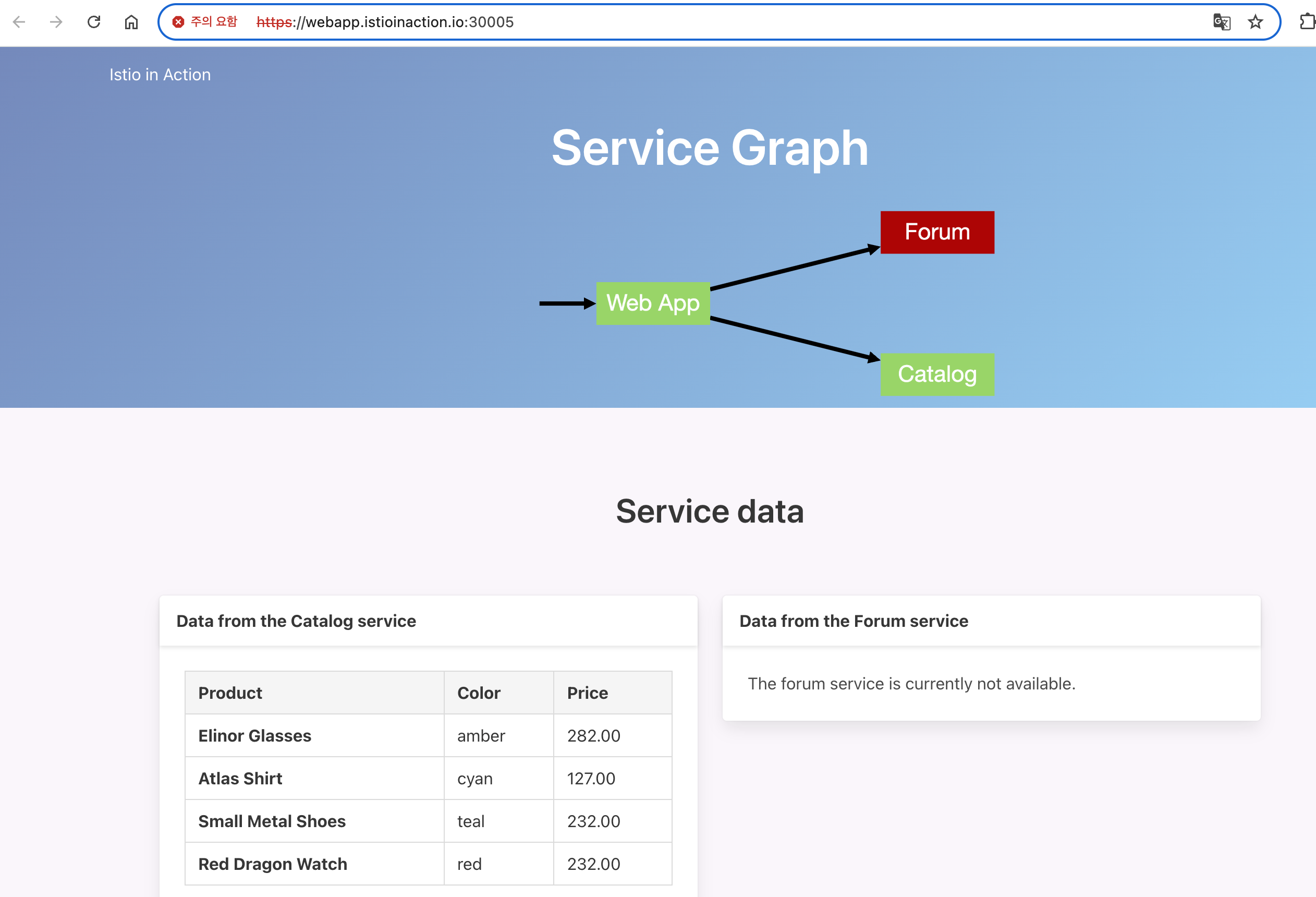

- Study lab environment: docker (kind - k8s 1.23.17, '23.2.28), istio 1.17.8 ('23.10.11)

2.1 Lab environment setup

- Deploy k8s (1.23.17): NodePort (30000 HTTP, 30005 HTTPS)

#

git clone https://github.com/AcornPublishing/istio-in-action

cd istio-in-action/book-source-code-master

pwd # note your own pwd path

code .

# If you omit extraMounts below, you can enter the myk8s-control-plane container (sh/bash) and run git clone there instead

kind create cluster --name myk8s --image kindest/node:v1.23.17 --config - <<EOF

kind: Cluster

apiVersion: kind.x-k8s.io/v1alpha4

nodes:

- role: control-plane

  extraPortMappings:

  - containerPort: 30000 # Sample Application (istio-ingressgateway) HTTP

    hostPort: 30000

  - containerPort: 30001 # Prometheus

    hostPort: 30001

  - containerPort: 30002 # Grafana

    hostPort: 30002

  - containerPort: 30003 # Kiali

    hostPort: 30003

  - containerPort: 30004 # Tracing

    hostPort: 30004

  - containerPort: 30005 # Sample Application (istio-ingressgateway) HTTPS

    hostPort: 30005

  - containerPort: 30006 # TCP Route

    hostPort: 30006

  - containerPort: 30007 # New Gateway

    hostPort: 30007

  extraMounts: # this part can be omitted

  - hostPath: /Users/sjkim/Labs/CloudNeta/istio/istio-in-action/book-source-code-master # set this to your own pwd path

    containerPath: /istiobook

networking:

  podSubnet: 10.10.0.0/16

  serviceSubnet: 10.200.1.0/24

EOF

Creating cluster "myk8s" ...

✓ Ensuring node image (kindest/node:v1.23.17) 🖼

✓ Preparing nodes 📦

✓ Writing configuration 📜

✓ Starting control-plane 🕹️

✓ Installing CNI 🔌

✓ Installing StorageClass 💾

Set kubectl context to "kind-myk8s"

You can now use your cluster with:

kubectl cluster-info --context kind-myk8s

Thanks for using kind! 😊

# Verify the installation

docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

681db4ee7249 kindest/node:v1.23.17 "/usr/local/bin/entr…" 53 seconds ago Up 51 seconds 0.0.0.0:30000-30007->30000-30007/tcp, 127.0.0.1:52121->6443/tcp myk8s-control-plane

# Install basic tools on the node

docker exec -it myk8s-control-plane sh -c 'apt update && apt install tree psmisc lsof wget bridge-utils net-tools dnsutils tcpdump ngrep iputils-ping git vim -y'

# (Optional) metrics-server

helm repo add metrics-server https://kubernetes-sigs.github.io/metrics-server/

helm install metrics-server metrics-server/metrics-server --set 'args[0]=--kubelet-insecure-tls' -n kube-system

LAST DEPLOYED: Sat Apr 19 15:17:01 2025

NAMESPACE: kube-system

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

***********************************************************************

* Metrics Server *

***********************************************************************

Chart version: 3.12.2

App version: 0.7.2

Image tag: registry.k8s.io/metrics-server/metrics-server:v0.7.2

***********************************************************************

kubectl get all -n kube-system -l app.kubernetes.io/instance=metrics-server

NAME READY STATUS RESTARTS AGE

pod/metrics-server-65bb6f47b6-c8bmp 1/1 Running 0 30s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/metrics-server ClusterIP 10.200.1.115 <none> 443/TCP 30s

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/metrics-server 1/1 1 1 30s

NAME DESIRED CURRENT READY AGE

replicaset.apps/metrics-server-65bb6f47b6 1 1 1 30s

# Enter myk8s-control-plane and continue the installation there

docker exec -it myk8s-control-plane bash

-----------------------------------

# (Optional) Check that the code files are mounted

tree /istiobook/ -L 1

root@myk8s-control-plane:/# tree /istiobook/ -L 1

/istiobook/

|-- README.md

|-- appendices

|-- bin

|-- ch10

|-- ch11

|-- ch12

|-- ch13

|-- ch14

|-- ch2

|-- ch3

|-- ch4

|-- ch5

|-- ch6

|-- ch7

|-- ch8

|-- ch9

`-- services

17 directories, 1 file

or

git clone ... /istiobook

# Install istioctl

export ISTIOV=1.17.8

echo 'export ISTIOV=1.17.8' >> /root/.bashrc

curl -s -L https://istio.io/downloadIstio | ISTIO_VERSION=$ISTIOV sh -

Downloading istio-1.17.8 from https://github.com/istio/istio/releases/download/1.17.8/istio-1.17.8-linux-arm64.tar.gz ...

Istio 1.17.8 download complete!

The Istio release archive has been downloaded to the istio-1.17.8 directory.

To configure the istioctl client tool for your workstation,

add the /istio-1.17.8/bin directory to your environment path variable with:

export PATH="$PATH:/istio-1.17.8/bin"

Begin the Istio pre-installation check by running:

istioctl x precheck

Try Istio in ambient mode

https://istio.io/latest/docs/ambient/getting-started/

Try Istio in sidecar mode

https://istio.io/latest/docs/setup/getting-started/

Install guides for ambient mode

https://istio.io/latest/docs/ambient/install/

Install guides for sidecar mode

https://istio.io/latest/docs/setup/install/

Need more information? Visit https://istio.io/latest/docs/

cp istio-$ISTIOV/bin/istioctl /usr/local/bin/istioctl

istioctl version --remote=false

1.17.8

# Deploy the control plane with the default profile

istioctl install --set profile=default -y

✔ Istio core installed

✔ Istiod installed

✔ Ingress gateways installed

✔ Installation complete Making this installation the default for injection and validation.

Thank you for installing Istio 1.17. Please take a few minutes to tell us about your install/upgrade experience! https://forms.gle/hMHGiwZHPU7UQRWe9

# Verify the installation: istiod, istio-ingressgateway, CRDs, etc.

kubectl get istiooperators -n istio-system -o yaml

kubectl get all,svc,ep,sa,cm,secret,pdb -n istio-system

root@myk8s-control-plane:/# kubectl get all,svc,ep,sa,cm,secret,pdb -n istio-system

NAME READY STATUS RESTARTS AGE

pod/istio-ingressgateway-996bc6bb6-248ws 1/1 Running 0 77s

pod/istiod-7df6ffc78d-tg88q 1/1 Running 0 93s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/istio-ingressgateway LoadBalancer 10.200.1.202 <pending> 15021:31057/TCP,80:30122/TCP,443:30553/TCP 77s

service/istiod ClusterIP 10.200.1.203 <none> 15010/TCP,15012/TCP,443/TCP,15014/TCP 93s

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/istio-ingressgateway 1/1 1 1 77s

deployment.apps/istiod 1/1 1 1 93s

NAME DESIRED CURRENT READY AGE

replicaset.apps/istio-ingressgateway-996bc6bb6 1 1 1 77s

replicaset.apps/istiod-7df6ffc78d 1 1 1 93s

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE

horizontalpodautoscaler.autoscaling/istio-ingressgateway Deployment/istio-ingressgateway 8%/80% 1 5 1 77s

horizontalpodautoscaler.autoscaling/istiod Deployment/istiod 0%/80% 1 5 1 93s

NAME ENDPOINTS AGE

endpoints/istio-ingressgateway 10.10.0.7:15021,10.10.0.7:8080,10.10.0.7:8443 77s

endpoints/istiod 10.10.0.6:15012,10.10.0.6:15010,10.10.0.6:15017 + 1 more... 93s

NAME SECRETS AGE

serviceaccount/default 1 94s

serviceaccount/istio-ingressgateway-service-account 1 77s

serviceaccount/istio-reader-service-account 1 94s

serviceaccount/istiod 1 93s

serviceaccount/istiod-service-account 1 94s

NAME DATA AGE

configmap/istio 2 93s

configmap/istio-ca-root-cert 1 79s

configmap/istio-gateway-deployment-leader 0 79s

configmap/istio-gateway-status-leader 0 79s

configmap/istio-leader 0 79s

configmap/istio-namespace-controller-election 0 79s

configmap/istio-sidecar-injector 2 93s

configmap/kube-root-ca.crt 1 94s

NAME TYPE DATA AGE

secret/default-token-9fzjf kubernetes.io/service-account-token 3 94s

secret/istio-ca-secret istio.io/ca-root 5 80s

secret/istio-ingressgateway-service-account-token-xwcvj kubernetes.io/service-account-token 3 77s

secret/istio-reader-service-account-token-d2tbv kubernetes.io/service-account-token 3 94s

secret/istiod-service-account-token-p7s96 kubernetes.io/service-account-token 3 94s

secret/istiod-token-gtpmr kubernetes.io/service-account-token 3 93s

NAME MIN AVAILABLE MAX UNAVAILABLE ALLOWED DISRUPTIONS AGE

poddisruptionbudget.policy/istio-ingressgateway 1 N/A 0 77s

poddisruptionbudget.policy/istiod 1 N/A 0 93s

kubectl get cm -n istio-system istio -o yaml

apiVersion: v1

data:

mesh: |-

defaultConfig:

discoveryAddress: istiod.istio-system.svc:15012

proxyMetadata: {}

tracing:

zipkin:

address: zipkin.istio-system:9411

enablePrometheusMerge: true

rootNamespace: istio-system

trustDomain: cluster.local

meshNetworks: 'networks: {}'

kind: ConfigMap

metadata:

annotations:

kubectl.kubernetes.io/last-applied-configuration: |

labels:

install.operator.istio.io/owning-resource: unknown

install.operator.istio.io/owning-resource-namespace: istio-system

istio.io/rev: default

operator.istio.io/component: Pilot

operator.istio.io/managed: Reconcile

operator.istio.io/version: 1.17.8

release: istio

name: istio

namespace: istio-system

kubectl get crd | grep istio.io | sort

authorizationpolicies.security.istio.io 2025-04-19T06:24:33Z

destinationrules.networking.istio.io 2025-04-19T06:24:33Z

envoyfilters.networking.istio.io 2025-04-19T06:24:33Z

gateways.networking.istio.io 2025-04-19T06:24:33Z

istiooperators.install.istio.io 2025-04-19T06:24:33Z

peerauthentications.security.istio.io 2025-04-19T06:24:33Z

proxyconfigs.networking.istio.io 2025-04-19T06:24:33Z

requestauthentications.security.istio.io 2025-04-19T06:24:33Z

serviceentries.networking.istio.io 2025-04-19T06:24:33Z

sidecars.networking.istio.io 2025-04-19T06:24:33Z

telemetries.telemetry.istio.io 2025-04-19T06:24:33Z

virtualservices.networking.istio.io 2025-04-19T06:24:33Z

wasmplugins.extensions.istio.io 2025-04-19T06:24:33Z

workloadentries.networking.istio.io 2025-04-19T06:24:33Z

workloadgroups.networking.istio.io 2025-04-19T06:24:33Z

# Install the add-on tools

kubectl apply -f istio-$ISTIOV/samples/addons

kubectl get pod -n istio-system

NAME READY STATUS RESTARTS AGE

grafana-b854c6c8-wfnqf 1/1 Running 0 65s

istio-ingressgateway-996bc6bb6-248ws 1/1 Running 0 4m52s

istiod-7df6ffc78d-tg88q 1/1 Running 0 5m8s

jaeger-5556cd8fcf-7mwbr 1/1 Running 0 65s

kiali-648847c8c4-sv7mz 1/1 Running 0 65s

prometheus-7b8b9dd44c-jvhnj 2/2 Running 0 65s

# Exit the container

exit

-----------------------------------

# Set up the namespace for the lab

kubectl create ns istioinaction

kubectl label namespace istioinaction istio-injection=enabled

kubectl get ns --show-labels

NAME STATUS AGE LABELS

default Active 17m kubernetes.io/metadata.name=default

istio-system Active 6m52s kubernetes.io/metadata.name=istio-system

istioinaction Active 18s istio-injection=enabled,kubernetes.io/metadata.name=istioinaction

kube-node-lease Active 17m kubernetes.io/metadata.name=kube-node-lease

kube-public Active 17m kubernetes.io/metadata.name=kube-public

kube-system Active 17m kubernetes.io/metadata.name=kube-system

local-path-storage Active 17m kubernetes.io/metadata.name=local-path-storage

# istio-ingressgateway Service: change the type to NodePort, pin the nodePort values, and set externalTrafficPolicy (to preserve the client IP)

kubectl get svc -n istio-system | grep ingress

istio-ingressgateway LoadBalancer 10.200.1.202 <pending> 15021:31057/TCP,80:30122/TCP,443:30553/TCP 10m

kubectl patch svc -n istio-system istio-ingressgateway -p '{"spec": {"type": "NodePort", "ports": [{"port": 80, "targetPort": 8080, "nodePort": 30000}]}}'

kubectl patch svc -n istio-system istio-ingressgateway -p '{"spec": {"type": "NodePort", "ports": [{"port": 443, "targetPort": 8443, "nodePort": 30005}]}}'

kubectl patch svc -n istio-system istio-ingressgateway -p '{"spec":{"externalTrafficPolicy": "Local"}}'

kubectl describe svc -n istio-system istio-ingressgateway

service/istio-ingressgateway patched

Name: istio-ingressgateway

Namespace: istio-system

Labels: app=istio-ingressgateway

install.operator.istio.io/owning-resource=unknown

install.operator.istio.io/owning-resource-namespace=istio-system

istio=ingressgateway

istio.io/rev=default

operator.istio.io/component=IngressGateways

operator.istio.io/managed=Reconcile

operator.istio.io/version=1.17.8

release=istio

Annotations: <none>

Selector: app=istio-ingressgateway,istio=ingressgateway

Type: NodePort

IP Family Policy: SingleStack

IP Families: IPv4

IP: 10.200.1.202

IPs: 10.200.1.202

Port: status-port 15021/TCP

TargetPort: 15021/TCP

NodePort: status-port 31057/TCP

Endpoints: 10.10.0.7:15021

Port: http2 80/TCP

TargetPort: 8080/TCP

NodePort: http2 30000/TCP

Endpoints: 10.10.0.7:8080

Port: https 443/TCP

TargetPort: 8443/TCP

NodePort: https 30005/TCP

Endpoints: 10.10.0.7:8443

Session Affinity: None

External Traffic Policy: Local

Internal Traffic Policy: Cluster

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Type 95s service-controller LoadBalancer -> NodePort
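The describe output shows that patching only port 80 or 443 left the other port entries (for example status-port 15021) untouched. That is because `kubectl patch` defaults to a strategic merge patch, and Service ports are merged by their `port` key rather than replaced as a whole list. The sketch below is a simplified Python model of that merge, not the real Kubernetes implementation. The function name `merge_ports` is hypothetical. The port values come from the output above.

```python
from typing import Dict, List


def merge_ports(existing: List[Dict], patch: List[Dict]) -> List[Dict]:
    """Merge patch entries into existing ones, matching on the 'port' key."""
    merged = {entry["port"]: dict(entry) for entry in existing}
    for entry in patch:
        # Same 'port' -> update that entry in place; new 'port' -> append.
        merged.setdefault(entry["port"], {}).update(entry)
    return list(merged.values())


# Ports of istio-ingressgateway before the patch (from `kubectl get svc`).
existing = [
    {"name": "status-port", "port": 15021, "targetPort": 15021, "nodePort": 31057},
    {"name": "http2", "port": 80, "targetPort": 8080, "nodePort": 30122},
    {"name": "https", "port": 443, "targetPort": 8443, "nodePort": 30553},
]
# The ports list sent by the first patch command.
patch = [{"port": 80, "targetPort": 8080, "nodePort": 30000}]

result = merge_ports(existing, patch)
for p in result:
    print(p["name"], p["port"], p["nodePort"])
# status-port 15021 31057
# http2 80 30000
# https 443 30553
```

With a plain JSON merge patch (`--type merge`) the ports list would be replaced entirely, so the per-port behavior shown here depends on the default patch type.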


# Change the add-on Services to NodePort and pin nodePorts 30001-30004: prometheus(30001), grafana(30002), kiali(30003), tracing(30004)

kubectl patch svc -n istio-system prometheus -p '{"spec": {"type": "NodePort", "ports": [{"port": 9090, "targetPort": 9090, "nodePort": 30001}]}}'

kubectl patch svc -n istio-system grafana -p '{"spec": {"type": "NodePort", "ports": [{"port": 3000, "targetPort": 3000, "nodePort": 30002}]}}'

kubectl patch svc -n istio-system kiali -p '{"spec": {"type": "NodePort", "ports": [{"port": 20001, "targetPort": 20001, "nodePort": 30003}]}}'

kubectl patch svc -n istio-system tracing -p '{"spec": {"type": "NodePort", "ports": [{"port": 80, "targetPort": 16686, "nodePort": 30004}]}}'
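The four patch commands differ only in the Service name and port numbers, so they can be generated from a small table. This is a minimal sketch that only builds the command strings and does not run them. The names `ADDONS` and `nodeport_patch_command` are hypothetical. The port values are the ones used above.

```python
import json
import shlex

# service name -> (port, targetPort, nodePort), taken from the commands above
ADDONS = {
    "prometheus": (9090, 9090, 30001),
    "grafana": (3000, 3000, 30002),
    "kiali": (20001, 20001, 30003),
    "tracing": (80, 16686, 30004),
}


def nodeport_patch_command(service, port, target_port, node_port,
                           namespace="istio-system"):
    """Build the kubectl command that switches a Service to a fixed NodePort."""
    patch = {
        "spec": {
            "type": "NodePort",
            "ports": [
                {"port": port, "targetPort": target_port, "nodePort": node_port}
            ],
        }
    }
    # shlex.quote wraps the JSON in single quotes so the shell passes it intact.
    return "kubectl patch svc -n {} {} -p {}".format(
        namespace, service, shlex.quote(json.dumps(patch))
    )


for name, ports in ADDONS.items():
    print(nodeport_patch_command(name, *ports))
```

Keeping the mapping in one place makes it easier to spot a port that is assigned twice.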

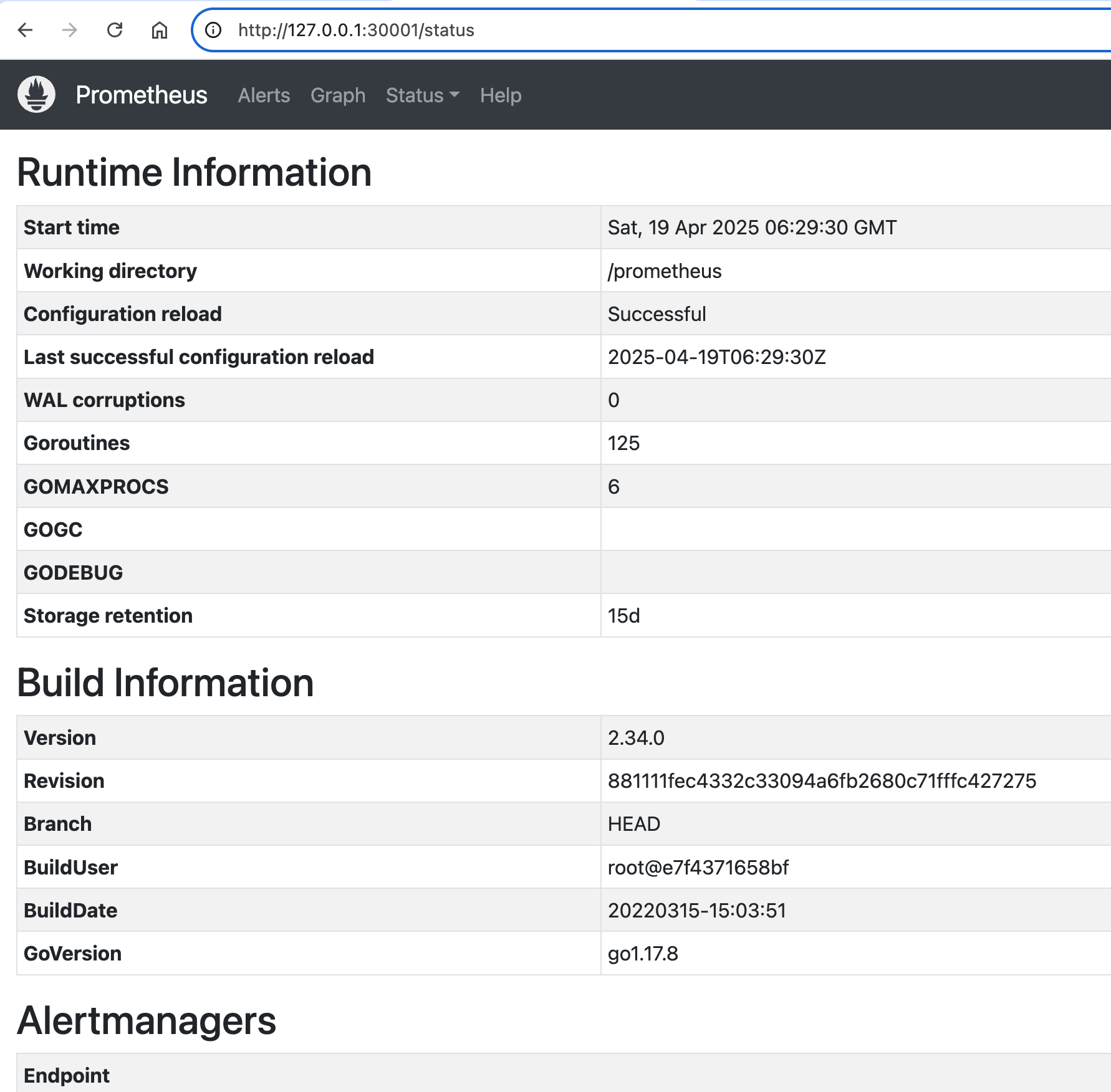

# Open Prometheus: check the Envoy and Istio metrics

open http://127.0.0.1:30001

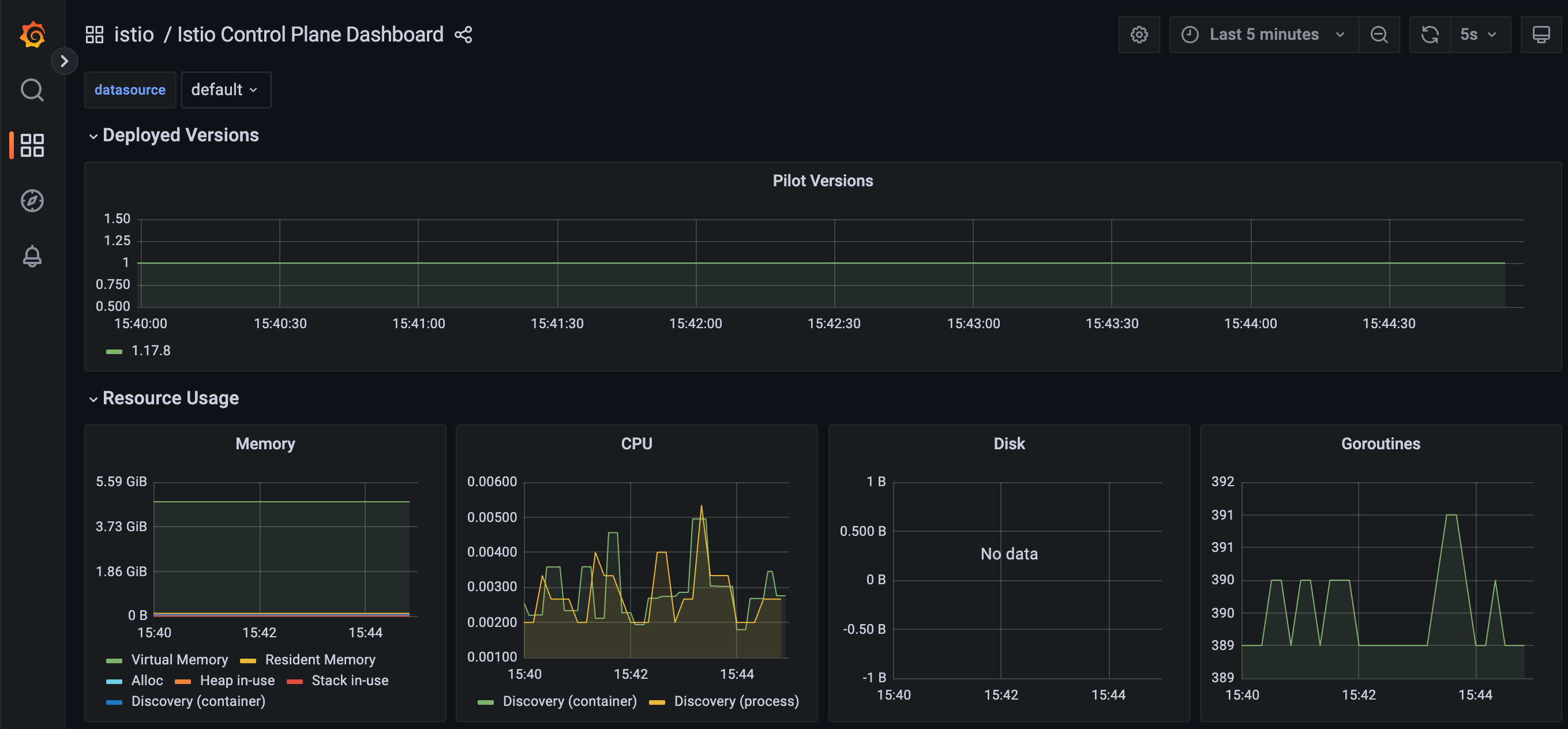

# Open Grafana

open http://127.0.0.1:30002

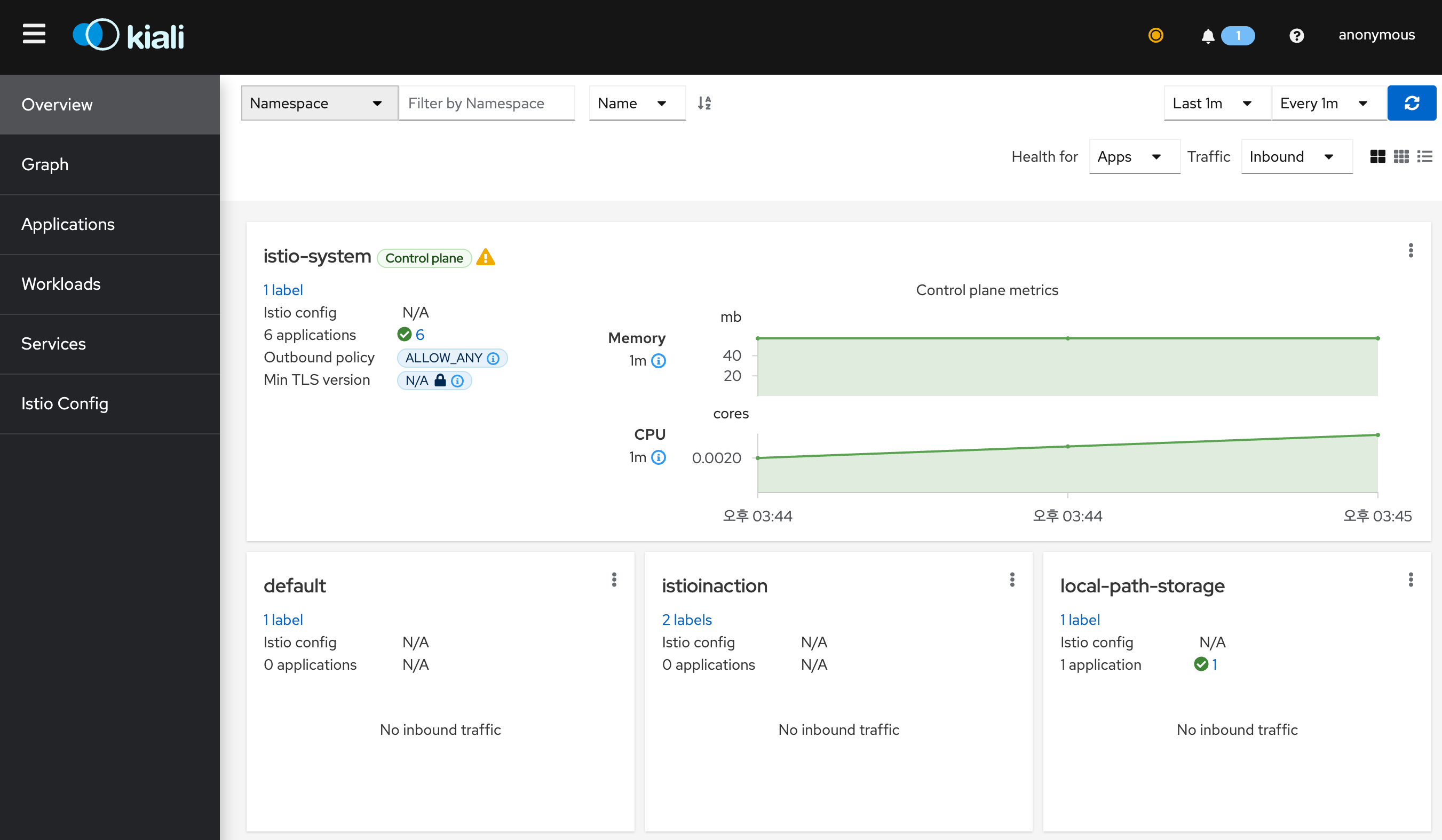

# Open Kiali, option 1: NodePort

open http://127.0.0.1:30003

# (Optional) Open Kiali, option 2: port-forward

kubectl port-forward deployment/kiali -n istio-system 20001:20001 &

open http://127.0.0.1:20001

# Open tracing: the Jaeger tracing dashboard

open http://127.0.0.1:30004

# Create a netshoot Pod for connectivity tests

cat <<EOF | kubectl apply -f -

apiVersion: v1

kind: Pod

metadata:

name: netshoot

spec:

containers:

- name: netshoot

image: nicolaka/netshoot

command: ["tail"]

args: ["-f", "/dev/null"]

terminationGracePeriodSeconds: 0

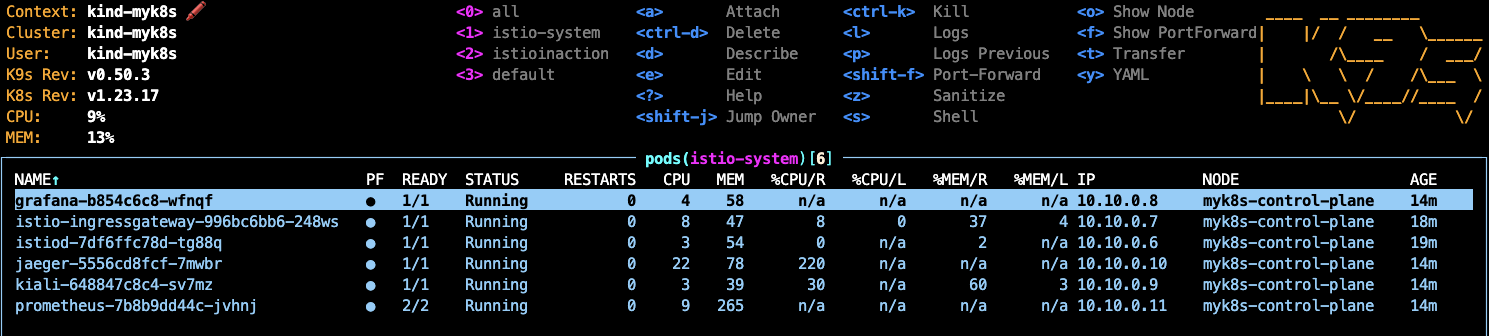

EOF- K9S

- Prometheus

- Grafana

- Kiali

- Jaeger UI

2.2 What the Istio Gateway covers

- Defining entry points into a cluster

- Routing ingress traffic to deployments in your cluster

- Securing ingress traffic (HTTPS, X.509)

- Routing non-HTTP/S traffic (TCP)

2.3 Traffic Ingress Concepts

2.3.1 Ingress and Ingress Points

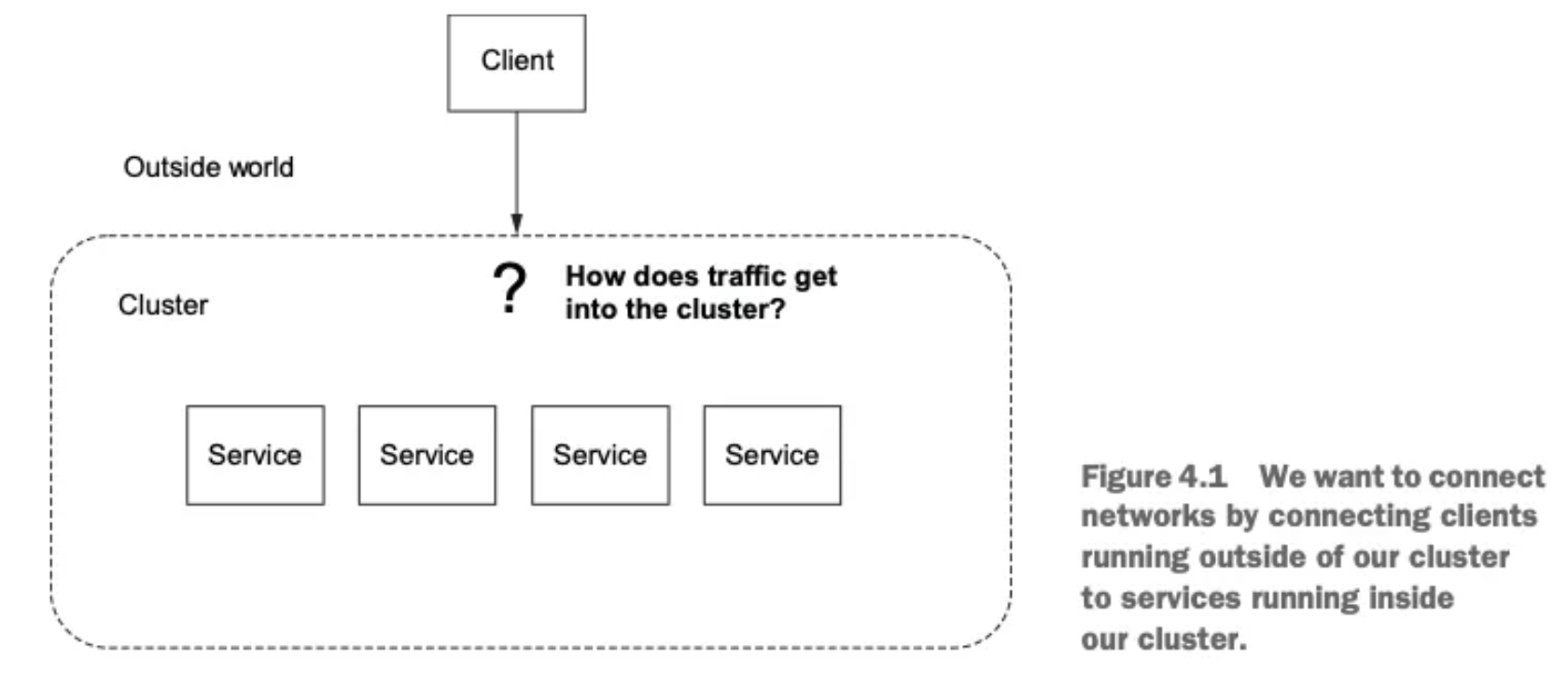

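- Ingress is traffic that originates outside a network and enters it.

- This traffic must first pass through an ingress point, which acts as the network's gatekeeper.

- The ingress point applies access policies and rules, and proxies only permitted traffic to internal endpoints.

- Traffic that is not permitted is blocked.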
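The gatekeeper behavior can be sketched in a few lines of Python. This is only an illustrative model, assuming a policy made of an allowed-port set and a hostname-to-endpoint table. The class names, the internal endpoint `10.10.0.20:8080`, and the rule set are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Set


@dataclass(frozen=True)
class Request:
    host: str  # hostname the client asked for
    port: int  # port the request arrived on


class IngressPoint:
    """Gatekeeper: proxy permitted traffic, block everything else."""

    def __init__(self, allowed_ports: Set[int], routes: Dict[str, str]) -> None:
        self.allowed_ports = allowed_ports
        self.routes = routes  # hostname -> internal endpoint

    def handle(self, request: Request) -> Optional[str]:
        """Return the internal endpoint to proxy to, or None if blocked."""
        if request.port not in self.allowed_ports:
            return None  # the port is not exposed
        return self.routes.get(request.host)  # None when the host is unknown


# Hypothetical policy: only ports 80 and 443 are open, one known hostname.
ingress = IngressPoint(
    allowed_ports={80, 443},
    routes={"api.istioinaction.io": "10.10.0.20:8080"},
)

print(ingress.handle(Request("api.istioinaction.io", 80)))  # 10.10.0.20:8080
print(ingress.handle(Request("api.istioinaction.io", 22)))  # None
print(ingress.handle(Request("unknown.example.com", 80)))   # None
```

A real ingress point evaluates far richer rules (TLS, paths, source addresses), but the decision has the same shape: match a policy, then proxy or drop.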

2.3.2 Virtual IPs

Simplifying services access 가상IP: 서비스 접근 단순화

-

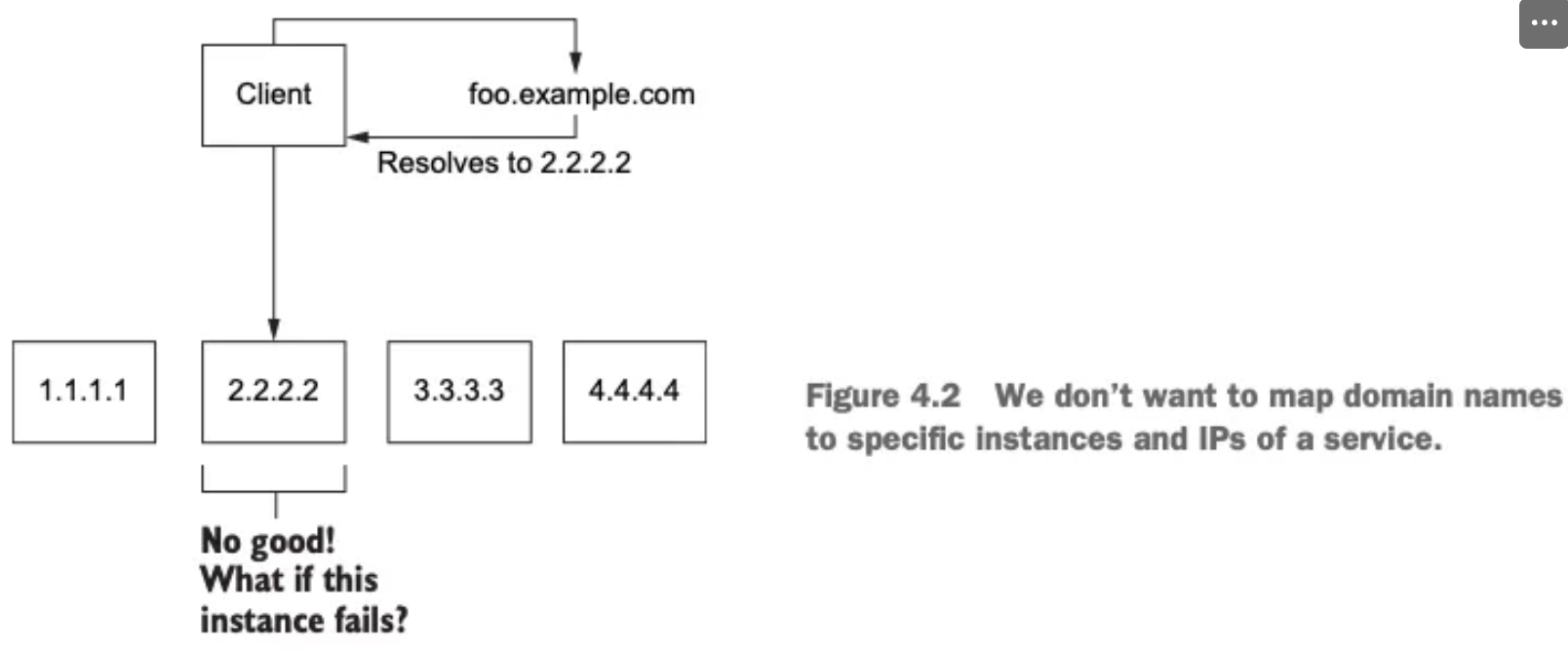

DNS와 서비스 노출의 첫걸음

- 외부 시스템이 api.istioinaction.io/v1/products 같은 엔드포인트에 접근하려면, 먼저 도메인 이름을 IP 주소로 해석(DNS 쿼리) 해야 한다.

- 이 과정은 DNS 서버를 통해 이루어지며, 도메인 이름은 적절한 서비스 IP 주소에 매핑돼야 한다.

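- The problem: what if the domain maps directly to a single IP?

  - If the domain is mapped to one specific IP address (a single endpoint), the whole service goes down when that instance fails.

  - Clients keep seeing errors until DNS is updated to point to a new IP.

  - This design severely reduces availability and is unsuitable for production use.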

-

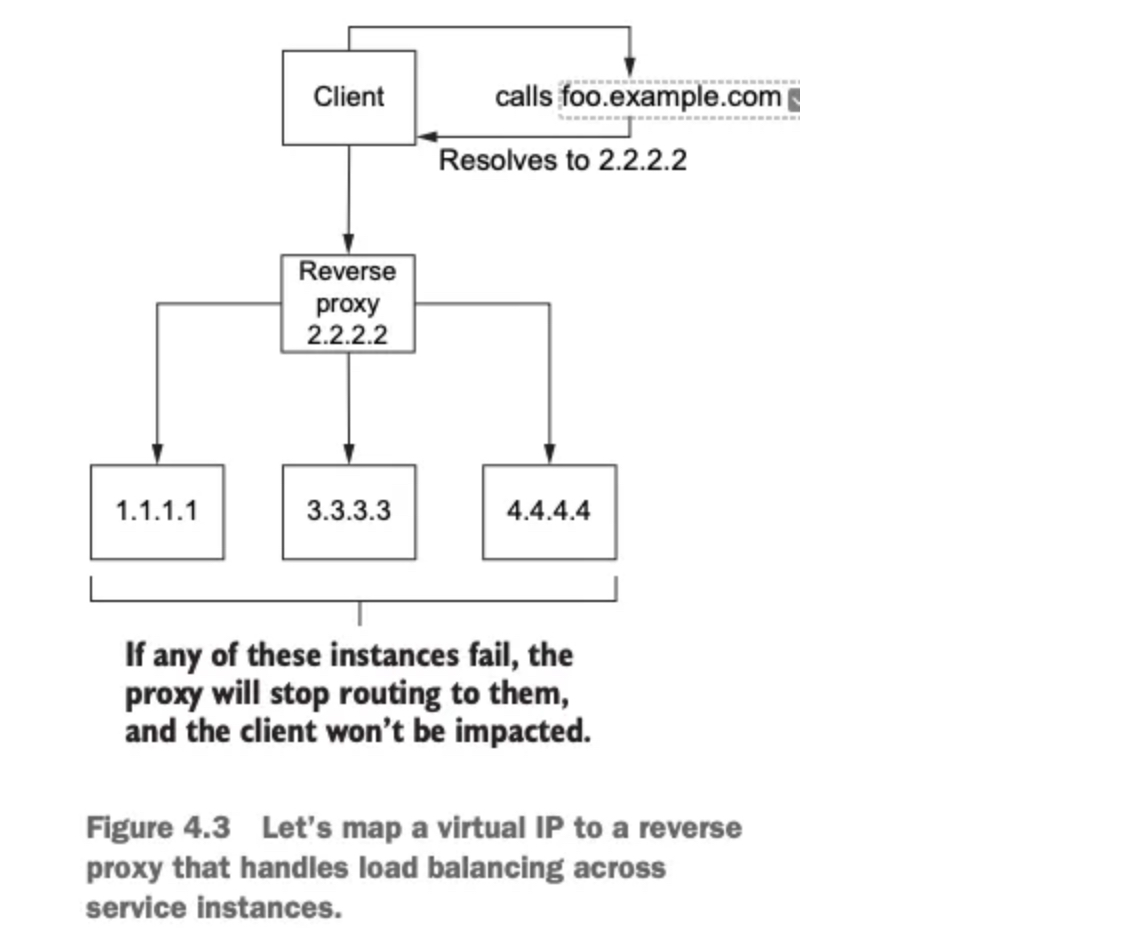

도메인 이름을 가상 IP에 매핑하는 이유

- 도메인 이름을 가상 IP 주소에 대응시키면, 이 IP가 여러 서비스 인스턴스로 트래픽을 분산시킬 수 있어 가용성과 유연성이 높아진다.

- 이 가상 IP는 리버스 프록시(인그레스 포인트의 한 유형) 에 바인딩된다.

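- The role of the reverse proxy

  - It is an intermediate component that distributes traffic to backend services.

  - Load balancing keeps any single service instance from being overloaded.

  - A single fixed IP (the virtual IP) provides a stable and flexible entry point to the service.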
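The difference between mapping a domain to a single instance and mapping it to a virtual IP can be shown with a small round-robin model. This is a sketch under assumptions: the class `ReverseProxy`, the virtual IP `203.0.113.10`, and the backend addresses are all hypothetical, and real proxies use more sophisticated health checking and balancing.

```python
from itertools import cycle
from typing import List


class ReverseProxy:
    """Owns one virtual IP and spreads requests over healthy backends."""

    def __init__(self, virtual_ip: str, backends: List[str]) -> None:
        self.virtual_ip = virtual_ip
        self.backends = list(backends)
        self.healthy = set(self.backends)
        self._ring = cycle(self.backends)  # round-robin order

    def mark_down(self, backend: str) -> None:
        self.healthy.discard(backend)

    def pick_backend(self) -> str:
        # Try each backend at most once per request, skipping unhealthy ones.
        for _ in range(len(self.backends)):
            candidate = next(self._ring)
            if candidate in self.healthy:
                return candidate
        raise RuntimeError("no healthy backend behind " + self.virtual_ip)


proxy = ReverseProxy(
    "203.0.113.10",
    ["10.0.0.1:8080", "10.0.0.2:8080", "10.0.0.3:8080"],
)
first = [proxy.pick_backend() for _ in range(3)]
print(first)  # ['10.0.0.1:8080', '10.0.0.2:8080', '10.0.0.3:8080']

# One instance fails; clients still use the same virtual IP and keep working.
proxy.mark_down("10.0.0.2:8080")
after_failure = [proxy.pick_backend() for _ in range(3)]
print(after_failure)  # ['10.0.0.1:8080', '10.0.0.3:8080', '10.0.0.1:8080']
```

The client-facing address never changes, so no DNS update is needed when an instance goes down.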

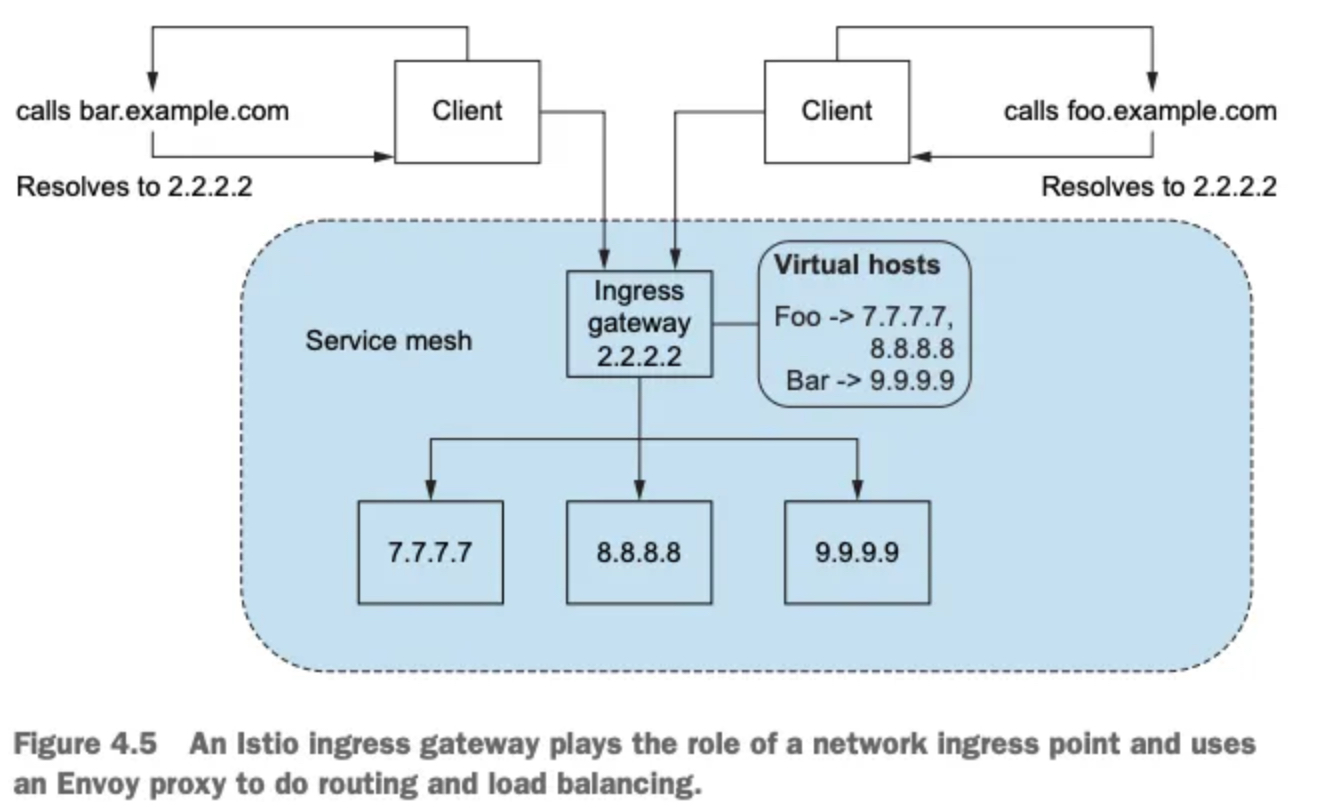

2.3.3 Virtual hosting

Virtual hosting: multiple services from a single access point

- The relationship between virtual IPs and hostnames

  - There are many service instances, but clients use only one virtual IP.

  - Multiple hostnames (prod.istioinaction.io, api.istioinaction.io, and so on) can map to one virtual IP.

  - As a result, every request comes in through the same ingress reverse proxy.

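- How the reverse proxy routes

  - The reverse proxy inspects the Host header of each incoming request and routes it to the correct group of services.

  - In other words, a single IP can serve multiple services while still telling them apart.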

- Virtual hosting means hosting multiple services at the same time behind a single entry point (IP).

-

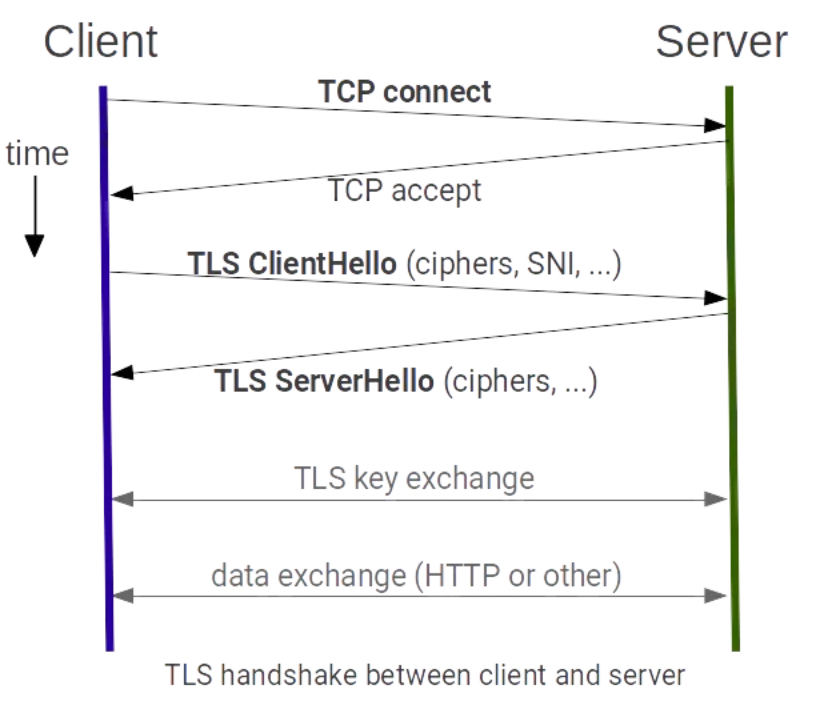

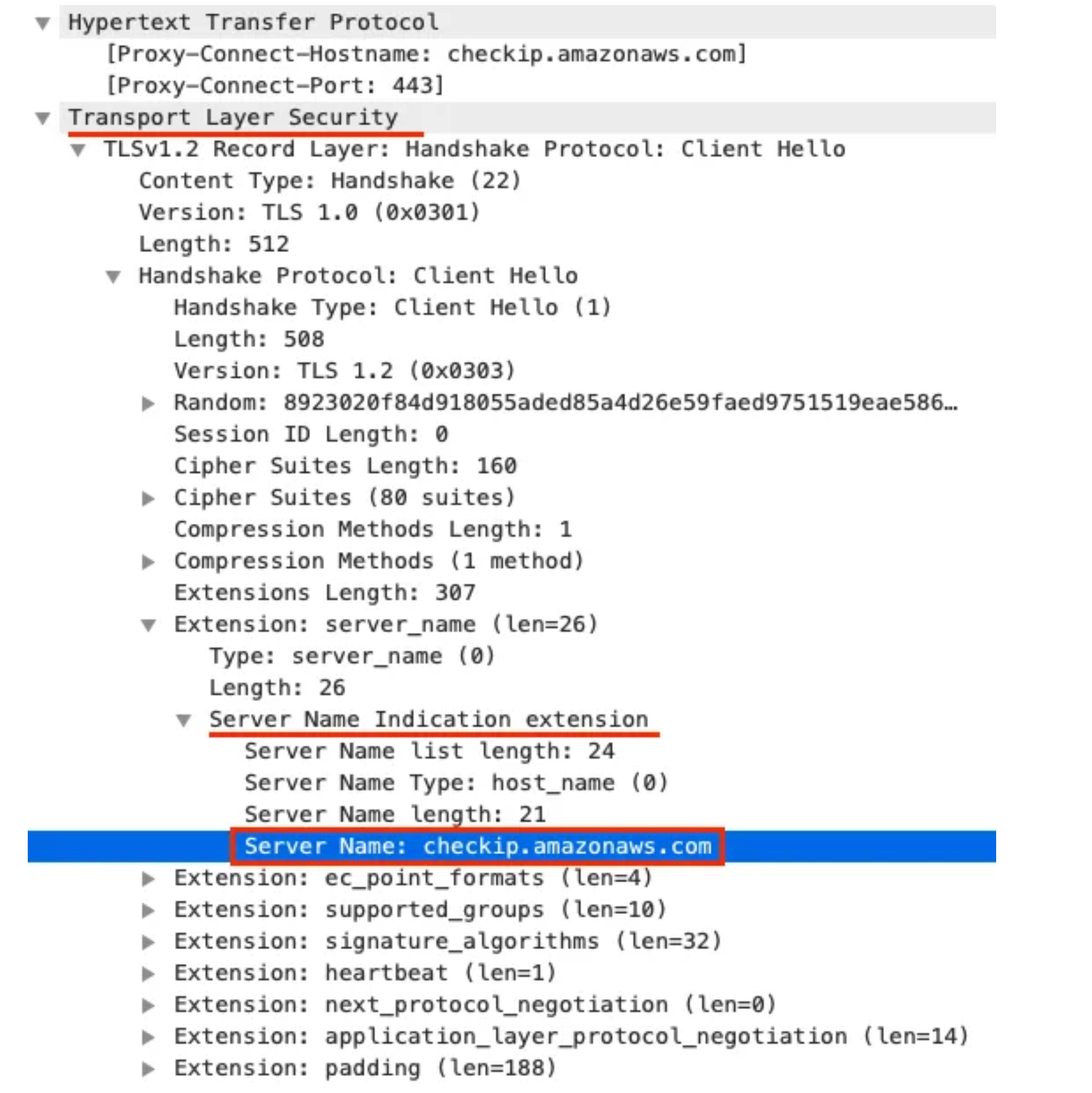

요청 구분 방법:

- HTTP/1.1: Host 헤더 사용

- HTTP/2: :authority 헤더 사용

- TLS 기반 TCP: SNI (Server Name Indication) 사용
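Virtual hosting can be sketched as a lookup keyed by the name the client asked for, where the field that carries the name depends on the protocol. The following is a simplified illustration, not how Envoy implements it. The function names, the `metadata` dictionary layout, and the service group names `prod-services` and `api-services` are hypothetical.

```python
from typing import Dict, Optional

# hostname -> service group (group names are made up for this example)
ROUTES: Dict[str, str] = {
    "prod.istioinaction.io": "prod-services",
    "api.istioinaction.io": "api-services",
}


def virtual_host_name(protocol: str, metadata: Dict[str, str]) -> Optional[str]:
    """Extract the requested hostname from the protocol-specific field."""
    if protocol == "HTTP/1.1":
        value = metadata.get("Host")
    elif protocol == "HTTP/2":
        value = metadata.get(":authority")
    elif protocol == "TLS":
        value = metadata.get("sni")  # from the TLS ClientHello
    else:
        value = None
    if value is None:
        return None
    # Drop an optional ":port" suffix and normalize case.
    # (IPv6 literals are not handled in this sketch.)
    return value.partition(":")[0].lower()


def route(protocol: str, metadata: Dict[str, str]) -> Optional[str]:
    """Return the service group for a request, or None if no host matches."""
    name = virtual_host_name(protocol, metadata)
    return ROUTES.get(name) if name else None


print(route("HTTP/1.1", {"Host": "api.istioinaction.io:80"}))    # api-services
print(route("HTTP/2", {":authority": "prod.istioinaction.io"}))  # prod-services
print(route("TLS", {"sni": "api.istioinaction.io"}))             # api-services
print(route("HTTP/1.1", {"Host": "other.example.com"}))          # None
```

All four requests arrive at the same IP. Only the hostname decides which group of services receives them.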

- How Istio uses this: Istio's edge ingress gateway combines a virtual IP with virtual hosting to route incoming traffic to the correct service inside the cluster.

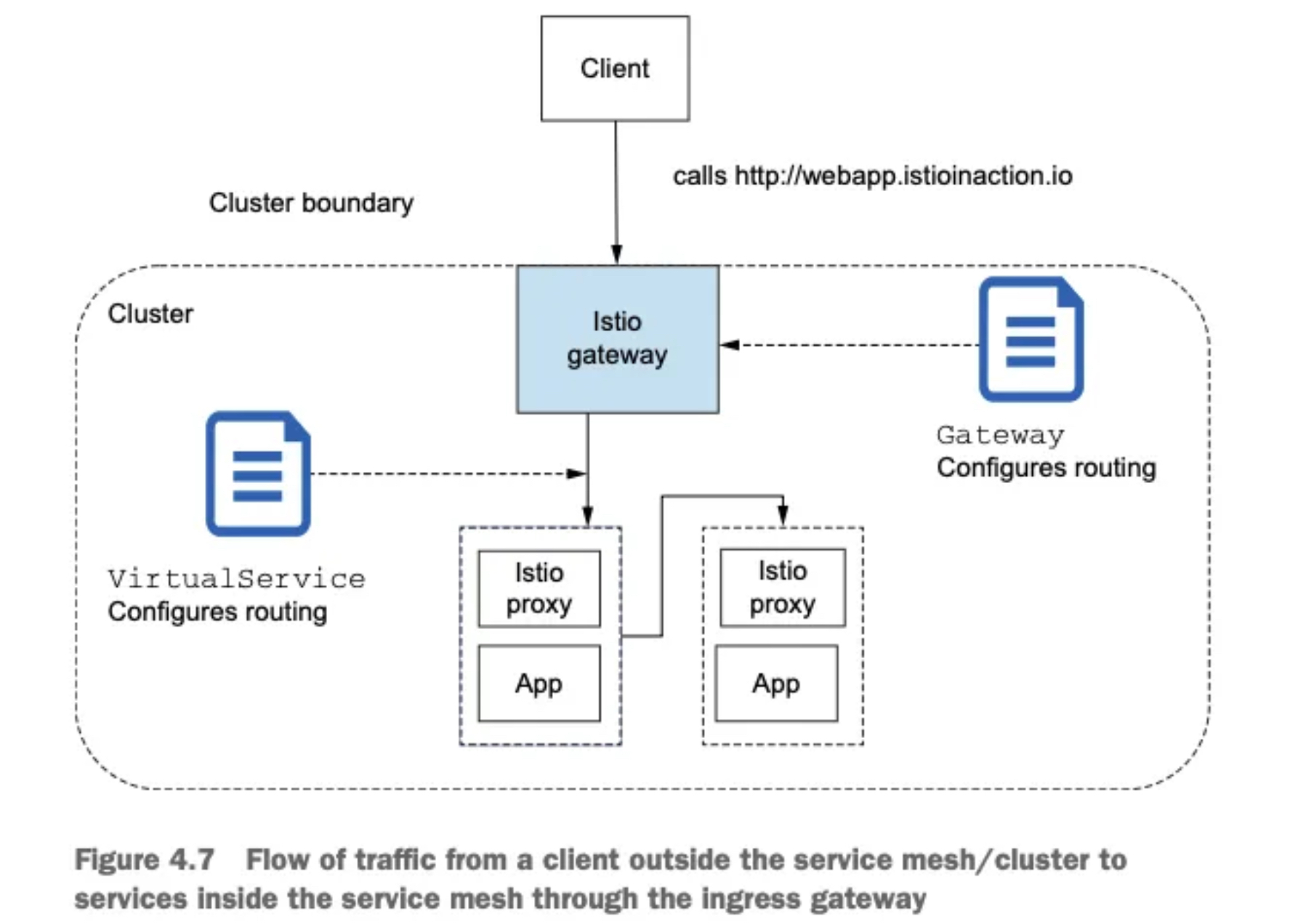

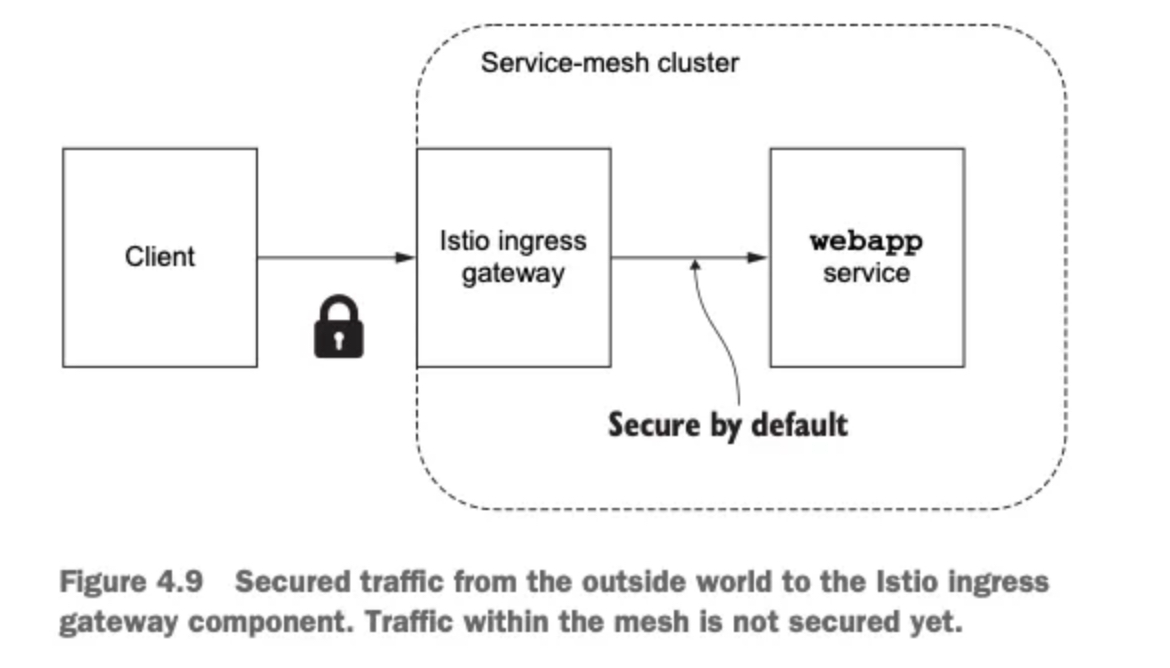

2.4 Istio ingress gateways

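2.4.1 Introduction (hands-on): Gateway (L4/L5), VirtualService (L7)

- Role: controls how traffic that originates outside the cluster reaches services inside the cluster.

- Functions: acts as a firewall, load balancer, virtual-host-based router, and reverse proxy.

- Implementation: Istio uses a single Envoy proxy as the ingress gateway.

✅ Envoy's existing capabilities (TLS termination, traffic control, monitoring, and so on) are equally available on the ingress gateway.

- Let's look in detail at how Istio uses Envoy to implement the ingress gateway.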

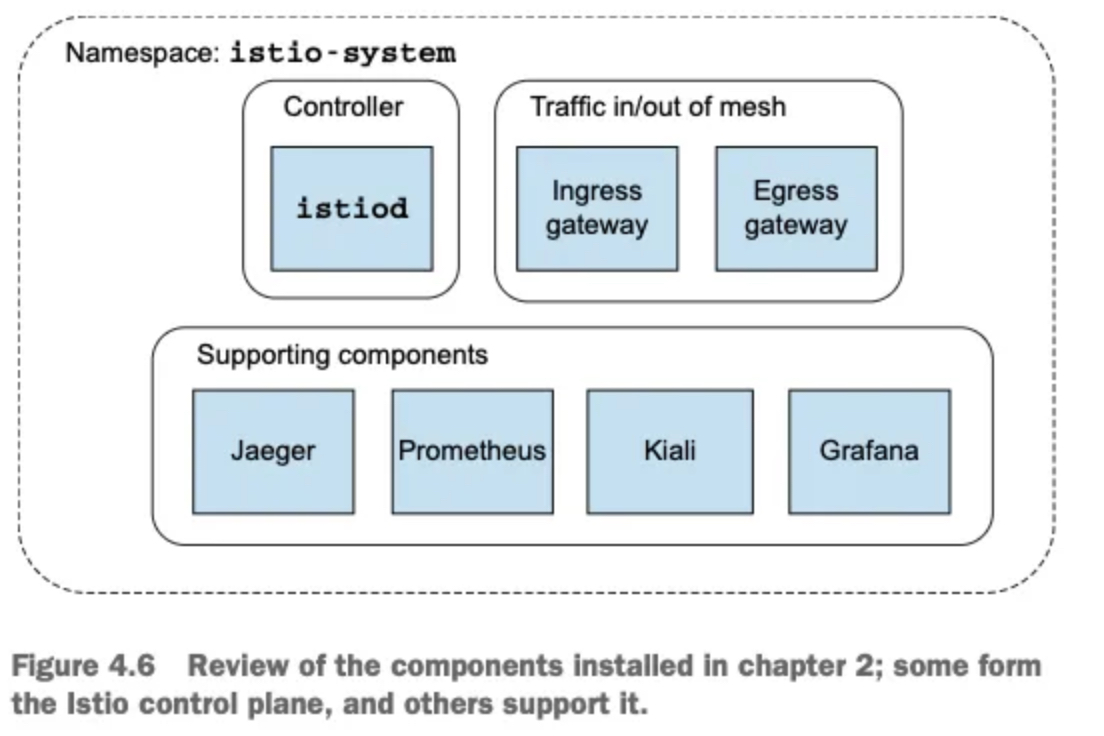

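- The figure shows the components that make up the control plane and the additional components that support it.

- Let's verify that the Istio service proxy (Envoy proxy) is actually running in the Istio ingress gateway.

# The Pod runs a single container: no separate application container is needed.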

kubectl get pod -n istio-system -l app=istio-ingressgateway

NAME READY STATUS RESTARTS AGE

istio-ingressgateway-996bc6bb6-248ws 1/1 Running 0 77m

# Check the proxy status

docker exec -it myk8s-control-plane istioctl proxy-status

NAME CLUSTER CDS LDS EDS RDS ECDS ISTIOD VERSION

istio-ingressgateway-996bc6bb6-248ws.istio-system Kubernetes SYNCED SYNCED SYNCED NOT SENT NOT SENT istiod-7df6ffc78d-tg88q 1.17.8
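In this output, SYNCED means Envoy has acknowledged the latest configuration from istiod, and NOT SENT means istiod has nothing to send for that xDS type yet (no routes exist for the gateway at this point). STALE would indicate an update that was sent but not acknowledged. The sketch below parses one row of this table and flags any column that needs attention. The helper names are hypothetical, and the column list is assumed from the header printed above.

```python
from typing import Dict, List

COLUMNS = ["NAME", "CLUSTER", "CDS", "LDS", "EDS", "RDS", "ECDS", "ISTIOD", "VERSION"]
XDS_COLUMNS = ["CDS", "LDS", "EDS", "RDS", "ECDS"]


def parse_proxy_status_row(row: str) -> Dict[str, str]:
    """Split one data row of `istioctl proxy-status` into named columns."""
    # "NOT SENT" contains a space, so protect it before splitting on whitespace.
    tokens = row.replace("NOT SENT", "NOT_SENT").split()
    if len(tokens) != len(COLUMNS):
        raise ValueError("unexpected number of columns: %d" % len(tokens))
    return {
        col: tok.replace("NOT_SENT", "NOT SENT")
        for col, tok in zip(COLUMNS, tokens)
    }


def columns_needing_attention(status: Dict[str, str]) -> List[str]:
    """Return xDS columns that are neither SYNCED nor NOT SENT (e.g. STALE)."""
    return [c for c in XDS_COLUMNS if status[c] not in ("SYNCED", "NOT SENT")]


ROW = (
    "istio-ingressgateway-996bc6bb6-248ws.istio-system Kubernetes "
    "SYNCED SYNCED SYNCED NOT SENT NOT SENT istiod-7df6ffc78d-tg88q 1.17.8"
)
status = parse_proxy_status_row(ROW)
print(status["RDS"])                      # NOT SENT
print(columns_needing_attention(status))  # []
```

The column layout of `istioctl proxy-status` differs between Istio versions, so the `COLUMNS` list would need adjusting for releases other than the 1.17.8 shown here.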

# Check the proxy configuration

docker exec -it myk8s-control-plane istioctl proxy-config all deploy/istio-ingressgateway.istio-system

SERVICE FQDN PORT SUBSET DIRECTION TYPE DESTINATION RULE

BlackHoleCluster - - - STATIC

agent - - - STATIC

grafana.istio-system.svc.cluster.local 3000 - outbound EDS

istio-ingressgateway.istio-system.svc.cluster.local 80 - outbound EDS

istio-ingressgateway.istio-system.svc.cluster.local 443 - outbound EDS

istio-ingressgateway.istio-system.svc.cluster.local 15021 - outbound EDS

istiod.istio-system.svc.cluster.local 443 - outbound EDS

istiod.istio-system.svc.cluster.local 15010 - outbound EDS

istiod.istio-system.svc.cluster.local 15012 - outbound EDS

istiod.istio-system.svc.cluster.local 15014 - outbound EDS

jaeger-collector.istio-system.svc.cluster.local 9411 - outbound EDS

jaeger-collector.istio-system.svc.cluster.local 14250 - outbound EDS

jaeger-collector.istio-system.svc.cluster.local 14268 - outbound EDS

kiali.istio-system.svc.cluster.local 9090 - outbound EDS

kiali.istio-system.svc.cluster.local 20001 - outbound EDS

kube-dns.kube-system.svc.cluster.local 53 - outbound EDS

kube-dns.kube-system.svc.cluster.local 9153 - outbound EDS

kubernetes.default.svc.cluster.local 443 - outbound EDS

metrics-server.kube-system.svc.cluster.local 443 - outbound EDS

prometheus.istio-system.svc.cluster.local 9090 - outbound EDS

prometheus_stats - - - STATIC

sds-grpc - - - STATIC

tracing.istio-system.svc.cluster.local 80 - outbound EDS

tracing.istio-system.svc.cluster.local 16685 - outbound EDS

xds-grpc - - - STATIC

zipkin - - - STRICT_DNS

zipkin.istio-system.svc.cluster.local 9411 - outbound EDS

ADDRESS PORT MATCH DESTINATION

0.0.0.0 15021 ALL Inline Route: /healthz/ready*

0.0.0.0 15090 ALL Inline Route: /stats/prometheus*