Let's try a range of approaches to improve model performance!

1. Examining the data

1-1. Imbalance in the Class (fraud or not) column

# Share of fraud rows (Class == 1) in the whole dataset, in percent
frauds_rate = round(raw_data['Class'].value_counts()[1] / len(raw_data) * 100, 2)
print(f'frauds = {frauds_rate}% of the dataset')

>>>

frauds = 0.17% of the dataset

1-2. Splitting the data

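# Features: every column except the first and the last (Class)
X = raw_data.iloc[:, 1:-1]
# Label: the last column (Class)
y = raw_data.iloc[:, -1]
X.shape, y.shape

>>>

((284807, 29), (284807,))

Next, check how imbalanced the split data is.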
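The call that produces `X_train`, `X_test`, `y_train` and `y_test` is not shown at this point. A minimal sketch of a stratified split could look like the following; the `test_size`, `random_state` and `stratify` settings are assumptions, and a small synthetic frame (`demo`) stands in for `raw_data`.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split

# Synthetic stand-in for raw_data: 1,000 rows, 2% positives (hypothetical data).
rng = np.random.default_rng(13)
demo = pd.DataFrame(rng.normal(size=(1000, 3)), columns=['V1', 'V2', 'Amount'])
demo['Class'] = 0
demo.loc[:19, 'Class'] = 1  # label slicing is inclusive, so this marks 20 rows

X_demo = demo.iloc[:, :-1]
y_demo = demo.iloc[:, -1]

# stratify=y_demo keeps the class ratio (almost) identical in both splits.
# test_size and random_state are assumed values, not taken from the source.
X_tr, X_te, y_tr, y_te = train_test_split(
    X_demo, y_demo, test_size=0.3, random_state=13, stratify=y_demo)

print(y_tr.mean(), y_te.mean())
```

With a class as rare as fraud, stratifying is a common choice because a plain random split can leave the test set with very few positive rows.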

import numpy as np

# Counts per class in the training labels; index 1 is the fraud class
tmp = np.unique(y_train, return_counts=True)[1]
print(f'frauds = {round(tmp[1] / len(y_train) * 100, 2)}% of the dataset')

>>>

frauds = 0.17% of the dataset

2. Building helper functions

A function that returns a classifier's metrics

from sklearn.metrics import (accuracy_score, precision_score, recall_score,
                             f1_score, roc_auc_score, confusion_matrix)

def get_clf_eval(y_test, pred):
    acc = accuracy_score(y_test, pred)
    pre = precision_score(y_test, pred)
    re = recall_score(y_test, pred)
    f1 = f1_score(y_test, pred)
    # AUC is computed from the predicted labels, not from probabilities
    auc = roc_auc_score(y_test, pred)
    return acc, pre, re, f1, auc
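One detail worth noting: `roc_auc_score` receives hard 0/1 predictions here, so the reported AUC reduces to the mean of recall and specificity (balanced accuracy) rather than a threshold-free score. The sketch below contrasts it with the AUC computed from `predict_proba`; the synthetic data and the model settings are assumptions made only for illustration.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import recall_score, roc_auc_score
from sklearn.model_selection import train_test_split

# Imbalanced synthetic data (hypothetical stand-in for the fraud dataset).
X_demo, y_demo = make_classification(n_samples=5000, n_features=10,
                                     weights=[0.95], random_state=13)
X_tr, X_te, y_tr, y_te = train_test_split(
    X_demo, y_demo, test_size=0.3, random_state=13, stratify=y_demo)

clf = LogisticRegression(solver='liblinear', random_state=13).fit(X_tr, y_tr)
pred = clf.predict(X_te)               # hard 0/1 labels
proba = clf.predict_proba(X_te)[:, 1]  # probability of the positive class

auc_from_labels = roc_auc_score(y_te, pred)
auc_from_proba = roc_auc_score(y_te, proba)

# With hard labels the ROC curve has a single interior point, so its area
# equals (recall + specificity) / 2.
recall = recall_score(y_te, pred)
specificity = recall_score(y_te, pred, pos_label=0)
balanced = (recall + specificity) / 2

print(auc_from_labels, balanced, auc_from_proba)
```

Passing probabilities is the usual way to obtain a ranking-based AUC; the label-based value is still a valid summary, but it describes one threshold only.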

def print_clf_eval(y_test, pred):
    confusion = confusion_matrix(y_test, pred)
    acc, pre, re, f1, auc = get_clf_eval(y_test, pred)

    print('[ confusion matrix ]')
    print(confusion)
    print('-----------------')
    print('Accuracy = {0:.4f}, Precision = {1:.4f}'.format(acc, pre))
    print('Recall = {0:.4f}, F1 = {1:.4f}, AUC = {2:.4f}'.format(re, f1, auc))

A function that takes a model and data and returns the model's metrics

def get_result(model, X_train, y_train, X_test, y_test):
    # Fit on the training set, then evaluate on the test set
    model.fit(X_train, y_train)
    pred = model.predict(X_test)
    return get_clf_eval(y_test, pred)

A function that collects the metrics of several models into a DataFrame

def get_result_pd(models, model_names, X_train, y_train, X_test, y_test):

col_names = ['accuarcy', 'precision', 'recall', 'F1', 'roc_auc']

tmp = []

for model in models:

tmp.append(get_result(model, X_train, y_train, X_test, y_test))

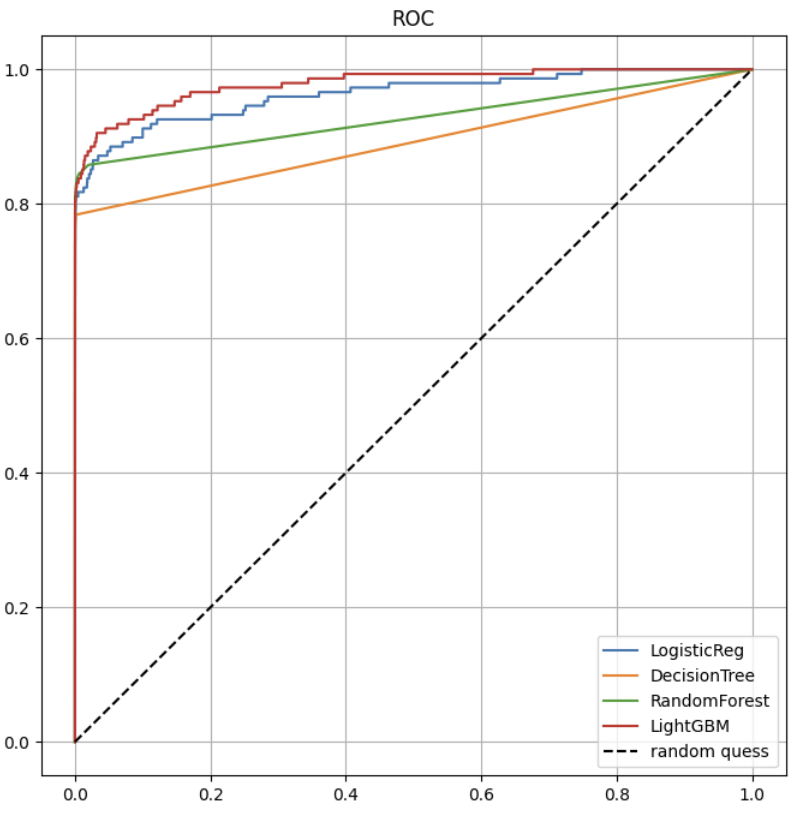

return pd.DataFrame(tmp, columns=col_names, index=model_names)모델별 ROC curve 그리는 함수

from sklearn.metrics import roc_curve
import matplotlib.pyplot as plt

def draw_roc_curve(models, model_names, X_test, y_test):
    plt.figure(figsize=(8, 8))
    for i in range(len(models)):
        # Probability of the positive (fraud) class
        pred = models[i].predict_proba(X_test)[:, 1]
        fpr, tpr, thresholds = roc_curve(y_test, pred)
        plt.plot(fpr, tpr, label=model_names[i])
    # Diagonal reference line for a random classifier
    plt.plot([0, 1], [0, 1], 'k--', label='random guess')
    plt.title('ROC')
    plt.legend()
    plt.grid()
    plt.show()

3. 1st Trial

np.unique(y_test, return_counts=True)

>>>

(array([0, 1]), array([85295, 148]))

LogisticRegression

from sklearn.linear_model import LogisticRegression

lr_clf = LogisticRegression(random_state=13, solver='liblinear')
lr_clf.fit(X_train, y_train)
lr_pred = lr_clf.predict(X_test)
print_clf_eval(y_test, lr_pred)

>>>

[ confusion matrix ]
[[85284    11]
 [   60    88]]
-----------------
Accuracy = 0.9992, Precision = 0.8889
Recall = 0.5946, F1 = 0.7126, AUC = 0.7972

DecisionTreeClassifier

from sklearn.tree import DecisionTreeClassifier

dt_clf = DecisionTreeClassifier(random_state=13, max_depth=4)
dt_clf.fit(X_train, y_train)
dt_pred = dt_clf.predict(X_test)
print_clf_eval(y_test, dt_pred)

>>>

[ confusion matrix ]
[[85281    14]
 [   42   106]]
-----------------
Accuracy = 0.9993, Precision = 0.8833
Recall = 0.7162, F1 = 0.7910, AUC = 0.8580

RandomForestClassifier

from sklearn.ensemble import RandomForestClassifier

rf_clf = RandomForestClassifier(random_state=13, n_jobs=-1, n_estimators=100)
rf_clf.fit(X_train, y_train)
rf_pred = rf_clf.predict(X_test)
print_clf_eval(y_test, rf_pred)

>>>

[ confusion matrix ]
[[85290     5]
 [   38   110]]
-----------------
Accuracy = 0.9995, Precision = 0.9565
Recall = 0.7432, F1 = 0.8365, AUC = 0.8716

LGBMClassifier

from lightgbm import LGBMClassifier

lgbm_clf = LGBMClassifier(random_state=13, n_jobs=-1,

n_estimators=1000, num_leaves=64,

boost_from_average=False)

lgbm_clf.fit(X_train, y_train)

lgbm_pred = lgbm_clf.predict(X_test)

print_clf_eval(y_test, lgbm_pred)

>>>

[ confusion matrix ]

[[85289 6]

[ 34 114]]

-----------------

Accuracy = 0.9995, Precision = 0.9500

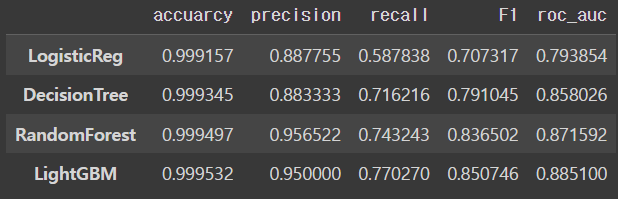

Recall = 0.7703, F1 = 0.8507, AUC = 0.88514개의 분류 모델을 한 번에 표로 정리하기

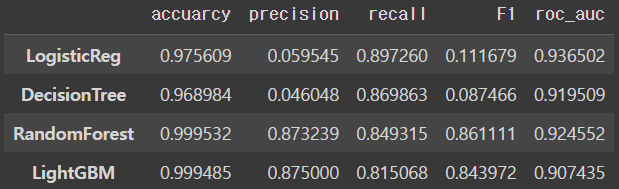

import time

models = [lr_clf, dt_clf, rf_clf, lgbm_clf]

model_names = ['LogisticReg', 'DecisionTree', 'RandomForest', 'LightGBM']

start_time = time.time()

results = get_result_pd(models, model_names, X_train, y_train, X_test, y_test)

print('FIT TIME :', time.time() - start_time)

results4. 2nd trial - StandardScaler 적용

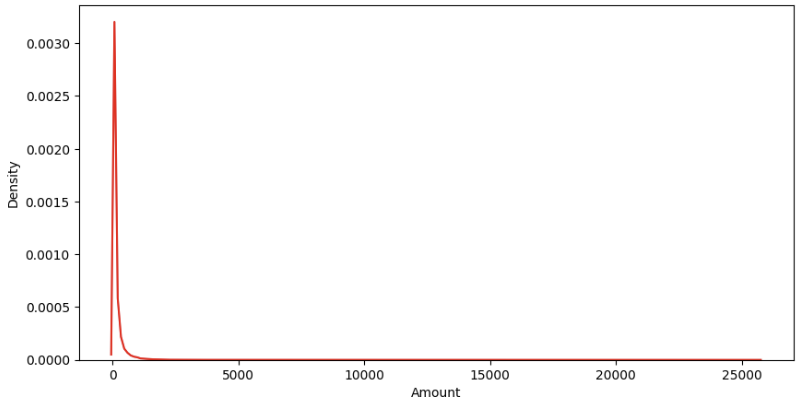

plt.figure(figsize=(10, 5))

sns.kdeplot(data=raw_data, x='Amount', color='r')

plt.show()

Amount 컬럼의 분포 ➡ 특정 대역이 아주 많음(신용카드 사용 금액은 대부분 비슷함)

Amount 컬럼이 중요한 역할을 한다면 문제가 될 수 있기 때문에 스케일러를 적용한다.

from sklearn.preprocessing import StandardScaler

scaler = StandardScaler()

amount_n = scaler.fit_transform(raw_data['Amount'].values.reshape(-1, 1))

raw_data_copy = raw_data.iloc[:, 1:-2]

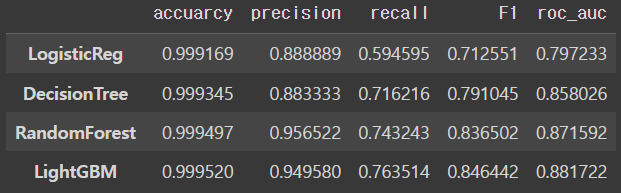

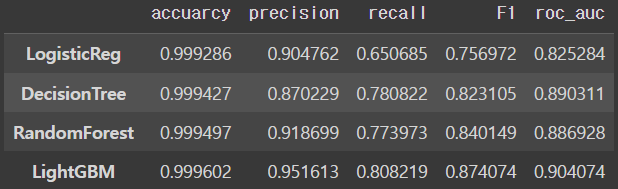

raw_data_copy['Amount_Scaled'] = amount_n다시! 4개의 분류 모델을 한 번에 표로 정리하기

The results barely change.

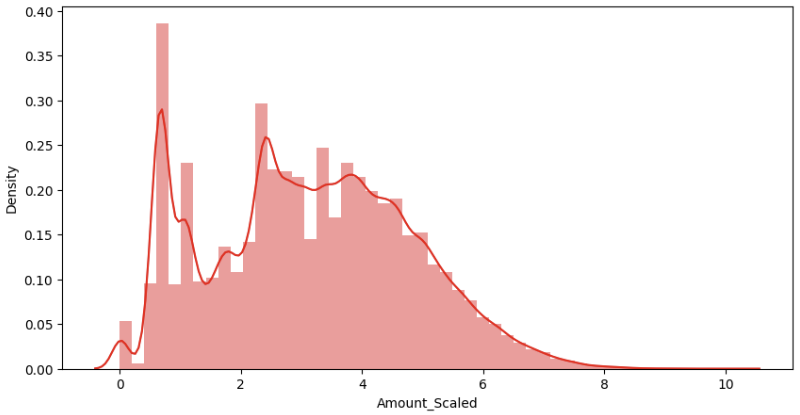

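5. 2nd Trial - Applying a log scale

# log1p(x) = log(1 + x), so an Amount of 0 is handled safely
amount_log = np.log1p(raw_data['Amount'])
raw_data_copy['Amount_Scaled'] = amount_log

plt.figure(figsize=(10, 5))
# histplot with kde=True replaces the deprecated sns.distplot
sns.histplot(raw_data_copy['Amount_Scaled'], kde=True, color='r')
plt.show()

Applying a log changes the shape of the distribution (the larger x is, the more the growth of y is suppressed).

The model results do not change much this time either.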
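To make the compression effect concrete, here is a small sketch with made-up amounts (the values are illustrative and not taken from the dataset). `np.log1p` computes log(1 + x), so zero maps to zero and large amounts are pulled in strongly.

```python
import numpy as np

# Hypothetical payment amounts spanning several orders of magnitude.
amounts = np.array([0.0, 1.0, 10.0, 100.0, 1000.0, 25000.0])
logged = np.log1p(amounts)

for a, l in zip(amounts, logged):
    print(f'{a:>8.1f} -> {l:.3f}')

# np.expm1 is the inverse of np.log1p, so the original scale can be recovered.
restored = np.expm1(logged)
```

The raw values differ by a factor of thousands, while the transformed values all fall within a range of about ten units.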

6. 3rd Trial - Removing outliers

# Function that finds outliers
def get_outlier(df=None, column=None, weight=1.5):
    # Look for outliers among the fraud rows only
    fraud = df[df['Class']==1][column]
    quantile_25 = np.percentile(fraud.values, 25)
    quantile_75 = np.percentile(fraud.values, 75)

    iqr = quantile_75 - quantile_25
    iqr_weight = iqr * weight
    lowest_val = quantile_25 - iqr_weight
    # The upper bound is Q3 + weight * IQR
    highest_val = quantile_75 + iqr_weight

    # Return the row labels of the outliers so they can be passed to drop()
    outlier_index = fraud[(fraud < lowest_val) | (fraud > highest_val)].index
    return outlier_index

outlier_index = get_outlier(df=raw_data, column='V14')
raw_data_copy.drop(outlier_index, axis=0, inplace=True)

X = raw_data_copy
raw_data.drop(outlier_index, axis=0, inplace=True)
y = raw_data.iloc[:, -1]
X_train, X_test, y_train, y_test = train_test_split ...

This time the results change slightly.
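As a worked example of the IQR rule (lower bound Q1 - 1.5 * IQR, upper bound Q3 + 1.5 * IQR), the sketch below applies it to a small made-up series. The values are hypothetical and only chosen so that one point falls outside each bound.

```python
import numpy as np
import pandas as pd

# Hypothetical feature values for a handful of rows.
values = pd.Series([-19.0, -9.0, -8.0, -7.0, -6.0, -5.0, -4.0, 3.0])

q25, q75 = np.percentile(values.values, [25, 75])
iqr = q75 - q25
lowest_val = q25 - 1.5 * iqr
highest_val = q75 + 1.5 * iqr

# Keep the row labels (not the values) so they can be passed to DataFrame.drop.
outlier_index = values[(values < lowest_val) | (values > highest_val)].index

print(lowest_val, highest_val, list(outlier_index))
```

Returning the index rather than the values matters because `drop(..., axis=0)` removes rows by label.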

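7. 4th Trial - SMOTE Oversampling

What if the class imbalance is severe? We can force the two class distributions to match.

- Undersampling: reduce the majority class down to the size of the minority class

- Oversampling: grow the minority class by slightly altering the feature values of the original data

- SMOTE (Synthetic Minority Oversampling Technique): for each sample in the minority class, find its neighbours with kNN and insert new samples between them

- imbalanced-learn package

pip install imbalanced-learn

❗❗ Resampling of this kind must be applied to the train set only. (Scaling is treated as an exception here.)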

from imblearn.over_sampling import SMOTE

smote = SMOTE(random_state=13)
X_train_over, y_train_over = smote.fit_resample(X_train, y_train)

print(np.unique(y_train, return_counts=True))
print(np.unique(y_train_over, return_counts=True))

>>>

(array([0, 1]), array([199020, 342]))
(array([0, 1]), array([199020, 199020]))
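The interpolation idea behind SMOTE described above can be sketched in plain NumPy. This is a simplified illustration, not the `imblearn` implementation: the helper name `smote_sketch` and its parameters are hypothetical, the minority samples are random, and neighbours are found with brute-force distances.

```python
import numpy as np

def smote_sketch(X_min, n_new, k=5, seed=13):
    """Create n_new synthetic points, each lying on the segment between a
    sampled minority point and one of its k nearest minority neighbours."""
    rng = np.random.default_rng(seed)
    # Pairwise Euclidean distances within the minority class.
    dist = np.linalg.norm(X_min[:, None, :] - X_min[None, :, :], axis=2)
    np.fill_diagonal(dist, np.inf)  # a point is not its own neighbour
    neighbours = np.argsort(dist, axis=1)[:, :k]

    base = rng.integers(0, len(X_min), size=n_new)             # sampled points
    picked = neighbours[base, rng.integers(0, k, size=n_new)]  # one neighbour each
    gap = rng.random((n_new, 1))                               # position on the segment
    return X_min[base] + gap * (X_min[picked] - X_min[base])

rng = np.random.default_rng(0)
X_minority = rng.normal(size=(20, 2))  # hypothetical minority-class samples
X_new = smote_sketch(X_minority, n_new=30)
print(X_new.shape)
```

Because every synthetic point is a convex combination of two existing minority samples, the new data stays inside the region the minority class already occupies.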