Natural Language Processing

1. [NLP] 1. Intro - Tokens

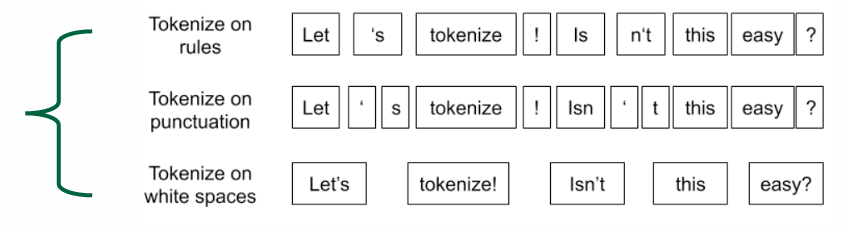

1. Introduction. Tokens & Tokenization. Tokenization: the process of splitting raw text into smaller units called tokens. Tokens: Basic units created

November 5, 2024

2. [NLP] 2. Word Embedding

2. Word Embedding: Embedding -> Vector, Feature, Representation. The representation of words as real-valued vectors. Good word embedding e

December 4, 2024

3. [NLP] Final Exam Prep

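Final exam prep: table of contents and terminology summary. 3. Word Embedding. Word2Vec. skip-gram: predicts the context from the center word. CBoW: predicts the center word from the context. Negative log-likelihood -> objective function (loss function). $v_w$: w is the center word, $u_w$: w is contex...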

December 4, 2024