Sentiment Classification

Task

- Classify Naver movie reviews as positive or negative.

- The goal is to implement the entire deep learning training process yourself, everything except loading the data.

Dataset

- Naver sentiment movie corpus v1.0

Base code

- Dataset: split into train, val, and test

- Input data shape: (batch_size, max_sequence_length)

- Output data shape: (batch_size, 1)

- Training

- Evaluation

Try some techniques

- Adjust the number of training epochs

- Change model architectures (Custom model)

- Use other cell types (LSTM, GRU, etc.)

- Use dropout layers

- Adjust the embedding size

- Change the number of words in the vocabulary

- Change the padding options

- Data augmentation (if possible)
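For the last item, one cheap text-augmentation option is EDA-style random deletion and random swap of tokens. The sketch below works on any list of tokens, such as the SentencePiece pieces produced later in this notebook. The function names and default probabilities are illustrative choices, not part of the original notebook. Whether these operations help on NSMC would still have to be checked on the validation set.

```python
import random
from typing import List, Optional


def random_deletion(tokens: List[str], p: float = 0.1,
                    rng: Optional[random.Random] = None) -> List[str]:
    """Drop each token independently with probability p (always keeps at least one)."""
    rng = rng or random.Random()
    if len(tokens) <= 1:
        return list(tokens)
    kept = [t for t in tokens if rng.random() >= p]
    # never return an empty review: fall back to one random original token
    return kept if kept else [rng.choice(tokens)]


def random_swap(tokens: List[str], n_swaps: int = 1,
                rng: Optional[random.Random] = None) -> List[str]:
    """Swap the tokens at two random positions, n_swaps times."""
    rng = rng or random.Random()
    out = list(tokens)
    if len(out) < 2:
        return out
    for _ in range(n_swaps):
        i, j = rng.sample(range(len(out)), 2)
        out[i], out[j] = out[j], out[i]
    return out


# pieces of the first training review, taken from the tokenizer output in this notebook
pieces = ['▁아', '▁더빙', '..', '▁진짜', '▁짜증나', '네요', '▁목소리']
rng = random.Random(0)
print(random_deletion(pieces, p=0.2, rng=rng))
print(random_swap(pieces, n_swaps=2, rng=rng))
```

Augmented copies keep the original review's label. Deletion preserves token order, while swap changes only the order.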

Import modules

from google.colab import drive

drive.mount('/content/drive')

!pip install sentencepiece

Collecting sentencepiece

Downloading sentencepiece-0.1.99-cp310-cp310-manylinux_2_17_x86_64.manylinux2014_x86_64.whl (1.3 MB)

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 1.3/1.3 MB 9.0 MB/s eta 0:00:00

Installing collected packages: sentencepiece

Successfully installed sentencepiece-0.1.99

from __future__ import absolute_import

from __future__ import division

from __future__ import print_function

from __future__ import unicode_literals

import os

import time

import shutil

import tarfile

import numpy as np

import matplotlib.pyplot as plt

%matplotlib inline

from IPython.display import clear_output

import urllib.request

import pandas as pd

import tensorflow as tf

from tensorflow.keras.preprocessing.sequence import pad_sequences

import sentencepiece as spm

from collections import Counter, defaultdict

Load Data

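- ratings_train.txt: 150K reviews used for training

- ratings_test.txt: 50K reviews held out for testing

- All reviews are at most 140 characters long

- Each sentiment class is sampled equally (i.e., random guessing yields 50% accuracy)

- 100K negative reviews (originally rated 1-4)

- 100K positive reviews (originally rated 9-10)

- Neutral reviews (originally rated 5-8) are excluded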

urllib.request.urlretrieve("https://raw.githubusercontent.com/e9t/nsmc/master/ratings_train.txt", filename="ratings_train.txt")

urllib.request.urlretrieve("https://raw.githubusercontent.com/e9t/nsmc/master/ratings_test.txt", filename="ratings_test.txt")

train_data = pd.read_table('ratings_train.txt')

train_data = train_data.dropna()

test_data = pd.read_table('ratings_test.txt')

test_data = test_data.dropna()
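The NSMC files are tab-separated with `id`, `document` and `label` columns, where label 1 is positive and 0 is negative. A row whose review text is empty is read as NaN, and `dropna()` removes it. A quick way to confirm that the classes stay roughly balanced after dropping those rows is `value_counts`. In the sketch below, the tiny in-memory table and its placeholder reviews are made up so the snippet runs on its own; they stand in for `ratings_train.txt`.

```python
import io

import pandas as pd

# Made-up stand-in for ratings_train.txt with the same tab-separated layout.
sample = io.StringIO(
    "id\tdocument\tlabel\n"
    "1\tgreat movie\t1\n"
    "2\t\t0\n"
    "3\ttoo boring\t0\n"
    "4\tloved it\t1\n"
)
df = pd.read_table(sample)
print(df['document'].isna().sum())               # rows that dropna() will remove
df = df.dropna()
print(df['label'].value_counts(normalize=True))  # share of each class
```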

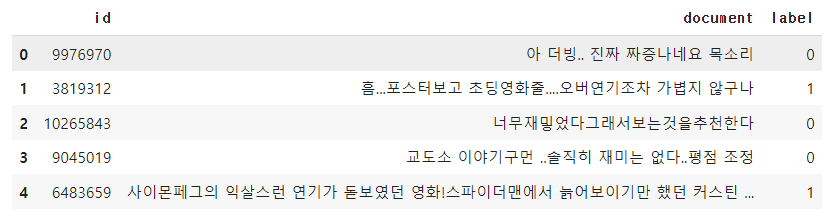

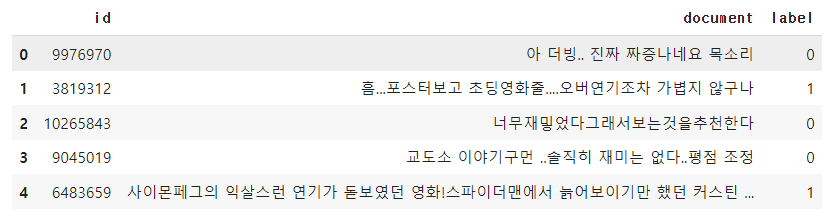

train_data.head()

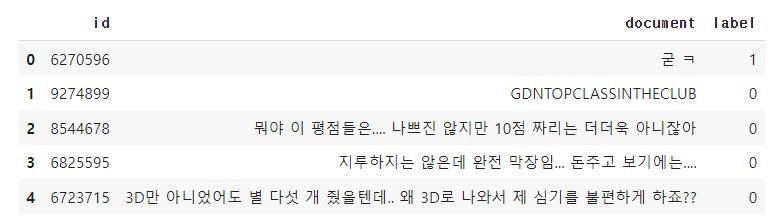

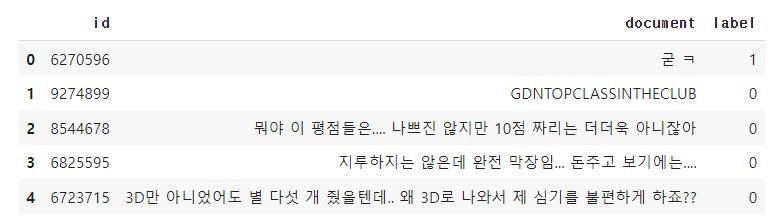

test_data.head()

Tokenizing

sp = spm.SentencePieceProcessor()

sp.load('/content/drive/MyDrive/dataset/naver_review/naver_review.model')

def tokenizer(text):
    return sp.encode_as_pieces(text)

# show the first six reviews with their pieces and ids
for i, line in enumerate(train_data['document']):
    print(line)
    print(sp.encode_as_pieces(line))
    print(sp.encode_as_ids(line))
    if i == 5:
        break

아 더빙.. 진짜 짜증나네요 목소리

['▁아', '▁더빙', '..', '▁진짜', '▁짜증나', '네요', '▁목소리']

[14, 1226, 7, 88, 2990, 55, 2393]

흠...포스터보고 초딩영화줄....오버연기조차 가볍지 않구나

['▁흠', '...', '포스터', '보고', '▁초딩', '영화', '줄', '....', '오', '버', '연기', '조차', '▁가볍', '지', '▁않', '구나']

[1949, 16, 5829, 233, 1469, 10, 6601, 47, 6454, 6564, 355, 2103, 2338, 6387, 108, 508]

너무재밓었다그래서보는것을추천한다

['▁너무', '재', '밓', '었다', '그래서', '보는', '것을', '추천', '한다']

[39, 6416, 1, 164, 4556, 515, 1409, 2176, 367]

교도소 이야기구먼 ..솔직히 재미는 없다..평점 조정

['▁교', '도', '소', '▁이야기', '구', '먼', '▁..', '솔직히', '▁재미는', '▁없다', '..', '평점', '▁조', '정']

[729, 6392, 6487, 372, 6478, 6879, 516, 5346, 1686, 309, 7, 1187, 188, 6424]

사이몬페그의 익살스런 연기가 돋보였던 영화!스파이더맨에서 늙어보이기만 했던 커스틴 던스트가 너무나도 이뻐보였다

['▁사이', '몬', '페', '그', '의', '▁익', '살', '스런', '▁연기가', '▁돋보', '였던', '▁영화', '!', '스', '파이', '더', '맨', '에서', '▁늙', '어', '보이', '기만', '▁했던', '▁커', '스', '틴', '▁던', '스트', '가', '▁너무나도', '▁이뻐', '보', '였다']

[2855, 7223, 6958, 6415, 6400, 3665, 6598, 2275, 776, 1967, 2639, 11, 6409, 6418, 2182, 6470, 6720, 62, 2895, 6399, 2780, 1375, 3042, 1299, 6418, 7124, 3527, 692, 6391, 2677, 3827, 6398, 475]

막 걸음마 뗀 3세부터 초등학교 1학년생인 8살용영화.ㅋㅋㅋ...별반개도 아까움.

['▁막', '▁걸', '음', '마', '▁', '뗀', '▁3', '세', '부터', '▁초등', '학교', '▁1', '학년', '생', '인', '▁8', '살', '용', '영화', '.', 'ᄏᄏᄏ', '...', '별', '반', '개도', '▁아까움', '.']

[419, 519, 6436, 6427, 6371, 1, 34, 6512, 340, 2325, 979, 8, 3847, 6460, 6414, 15, 6598, 6510, 10, 6372, 472, 16, 6551, 6534, 1521, 2060, 6372]
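In the output above, '밓' and '뗀' both map to id 1. This suggests that id 1 is this model's unknown token. That is an inference from the printout, not something the notebook states, and `sp.unk_id()` would confirm it. A rough unknown-token rate over the corpus helps decide whether the "number of words in the vocabulary" knob is worth turning. The helper below is hypothetical, and it is run on ids copied from the printout above.

```python
from typing import Iterable, List


def unk_rate(id_lists: Iterable[List[int]], unk_id: int = 1) -> float:
    """Fraction of tokens equal to unk_id across all sequences.

    unk_id=1 is an assumption based on the printout; in the notebook use sp.unk_id().
    """
    total = unk = 0
    for ids in id_lists:
        total += len(ids)
        unk += sum(1 for t in ids if t == unk_id)
    return unk / total if total else 0.0


# ids of the 3rd and 6th review, copied from the tokenizer output above
samples = [
    [39, 6416, 1, 164, 4556, 515, 1409, 2176, 367],
    [419, 519, 6436, 6427, 6371, 1, 34, 6512, 340, 2325, 979, 8, 3847, 6460,
     6414, 15, 6598, 6510, 10, 6372, 472, 16, 6551, 6534, 1521, 2060, 6372],
]
print(f"{unk_rate(samples):.3f}")  # 0.056 (2 of 36 tokens)
```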

eos_token = '[EOS]'

eos_id = sp.piece_to_id(eos_token)

print(f"ID of token '{eos_token}': {eos_id}")

sp.encode_as_ids(['[EOS]'])

BOS_id = sp.piece_to_id('[BOS]')

EOS_id = sp.piece_to_id('[EOS]')

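lengths = []
input_train_text, target_train_text = [], []

for line in train_data['document']:
    ids = sp.encode_as_ids(line)
    # assumed language-model pairing: input = [BOS] + pieces, target = pieces + [EOS]
    input_line = [BOS_id] + ids
    target_line = ids + [EOS_id]
    input_train_text.append(tf.convert_to_tensor(input_line, dtype=tf.int32))
    target_train_text.append(tf.convert_to_tensor(target_line, dtype=tf.int32))
    lengths.append(len(line))  # character length of the raw review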

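input_test_text, target_test_text = [], []

for line in test_data['document']:
    ids = sp.encode_as_ids(line)
    # same assumed pairing as for the training set
    input_line = [BOS_id] + ids
    target_line = ids + [EOS_id]
    input_test_text.append(tf.convert_to_tensor(input_line, dtype=tf.int32))
    target_test_text.append(tf.convert_to_tensor(target_line, dtype=tf.int32))
    lengths.append(len(line))  # character length of the raw review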

print(max(lengths))
print(len(input_train_text), len(target_train_text))
print(input_train_text[0], target_train_text[0])
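A GPT-style language model is trained on input and target versions of the same token sequence, offset by one position, so that at every step the model predicts the next piece. Below is a minimal sketch of that pairing, assuming the `[BOS]` + tokens / tokens + `[EOS]` convention. The BOS and EOS ids used here (2 and 3) are placeholders; the notebook reads the real values with `sp.piece_to_id`.

```python
from typing import List, Tuple


def make_lm_pair(ids: List[int], bos_id: int, eos_id: int) -> Tuple[List[int], List[int]]:
    """Return (input, target) where target[t] is the token that follows input[t]."""
    return [bos_id] + ids, ids + [eos_id]


BOS, EOS = 2, 3  # placeholder ids for illustration only
ids = [14, 1226, 7, 88, 2990, 55, 2393]  # '아 더빙.. 진짜 짜증나네요 목소리' from the output above
inp, tgt = make_lm_pair(ids, BOS, EOS)
print(inp)  # [2, 14, 1226, 7, 88, 2990, 55, 2393]
print(tgt)  # [14, 1226, 7, 88, 2990, 55, 2393, 3]
```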

Padding and truncating data with pad_sequences

batch_size = 128
max_seq_length = 147

# 'post' padding appends 0s after the tokens; `truncating` keeps its default ('pre'),
# so a sequence longer than max_seq_length would lose tokens from its start
input_train_data_pad = pad_sequences(input_train_text,
                                     maxlen=max_seq_length,
                                     padding='post',
                                     value=0)

target_train_data_pad = pad_sequences(target_train_text,
                                      maxlen=max_seq_length,
                                      padding='post',
                                      value=0)

input_test_data_pad = pad_sequences(input_test_text,
                                    maxlen=max_seq_length,
                                    padding='post',
                                    value=0)

target_test_data_pad = pad_sequences(target_test_text,
                                     maxlen=max_seq_length,
                                     padding='post',
                                     value=0)

print(input_train_data_pad.shape, target_train_data_pad.shape)
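`pad_sequences` handles padding and truncation independently. `padding` chooses which side receives the fill value. `truncating`, left at its default `'pre'` above, chooses which side loses tokens when a sequence exceeds `maxlen`. Under `'pre'` truncation, an over-long review would lose its leading `[BOS]` token, which makes this one of the "pad options" worth experimenting with. The pure-Python helper below is a hypothetical single-sequence re-implementation, written only to make the four combinations concrete. It is not the Keras source.

```python
from typing import List


def pad_one(seq: List[int], maxlen: int, padding: str = 'pre',
            truncating: str = 'pre', value: int = 0) -> List[int]:
    """Mimic pad_sequences for one sequence (illustrative only)."""
    if maxlen < 1:
        raise ValueError("maxlen must be at least 1")
    if padding not in ('pre', 'post') or truncating not in ('pre', 'post'):
        raise ValueError("padding and truncating must be 'pre' or 'post'")
    if len(seq) > maxlen:
        # 'pre' drops tokens from the start, 'post' drops them from the end
        seq = seq[-maxlen:] if truncating == 'pre' else seq[:maxlen]
    fill = [value] * (maxlen - len(seq))
    return fill + list(seq) if padding == 'pre' else list(seq) + fill


print(pad_one([5, 6, 7], 5, padding='post'))              # [5, 6, 7, 0, 0]
print(pad_one([5, 6, 7], 5, padding='pre'))               # [0, 0, 5, 6, 7]
print(pad_one([1, 2, 3, 4, 5, 6], 4))                     # [3, 4, 5, 6]
print(pad_one([1, 2, 3, 4, 5, 6], 4, truncating='post'))  # [1, 2, 3, 4]
```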

Building the datasets

train_dataset = tf.data.Dataset.from_tensor_slices((input_train_data_pad, target_train_data_pad))
train_dataset = train_dataset.shuffle(10000).repeat().batch(batch_size=batch_size)
print(train_dataset)

test_dataset = tf.data.Dataset.from_tensor_slices((input_test_data_pad, target_test_data_pad))
test_dataset = test_dataset.batch(batch_size=batch_size)
print(test_dataset)
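Because `train_dataset` is `repeat()`-ed it never ends, so `model.fit` needs an explicit `steps_per_epoch`. `test_dataset` is finite and, since `batch()` keeps the last partial batch by default, it yields one extra, smaller batch. This explains why training logs 1171 steps per epoch (the floor division in the `fit` call) while `evaluate` runs 391 batches (every test example, rounded up). The arithmetic is shown below as a small hypothetical helper.

```python
import math


def num_batches(n_examples: int, batch_size: int, drop_remainder: bool) -> int:
    """Batches in one pass over n_examples."""
    if drop_remainder:
        return n_examples // batch_size
    return math.ceil(n_examples / batch_size)


# generic example: 1000 examples, batch size 128
print(num_batches(1000, 128, drop_remainder=True))   # 7 full batches
print(num_batches(1000, 128, drop_remainder=False))  # 8 (the last batch holds 104 examples)
```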

Build the model

Set up hyper-parameters

kargs = {'model_name': 'GPT',

'num_layers': 12,

'd_model': 768,

'num_heads': 12,

'dff': 768 * 4,

'input_vocab_size': sp.get_piece_size(),

'target_vocab_size': sp.get_piece_size(),

'maximum_position_encoding': max_seq_length,

'segment_encoding': 2,

'end_token_idx': sp.piece_to_id('[EOS]'),

'rate': 0.1

}

def get_angles(pos, i, d_model):
    # dimensions 2k and 2k+1 share one frequency, hence 2 * (i // 2)
    angle_rates = 1 / np.power(10000, (2 * (i // 2)) / np.float32(d_model))
    return pos * angle_rates

def positional_encoding(position, d_model):
    angle_rads = get_angles(np.arange(position)[:, np.newaxis],
                            np.arange(d_model)[np.newaxis, :],
                            d_model)
    # sine on even indices, cosine on odd indices
    angle_rads[:, 0::2] = np.sin(angle_rads[:, 0::2])
    angle_rads[:, 1::2] = np.cos(angle_rads[:, 1::2])
    pos_encoding = angle_rads[np.newaxis, ...]  # (1, position, d_model)
    return tf.cast(pos_encoding, dtype=tf.float32)
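The sinusoidal encoding sets PE(pos, 2k) = sin(pos / 10000^(2k/d_model)) and PE(pos, 2k+1) = cos(pos / 10000^(2k/d_model)), so each sine/cosine pair shares one frequency. That pairing is why the exponent needs `2 * (i // 2)`. Python evaluates `2 * i // 2` left to right as `(2 * i) // 2`, which is simply `i`. The standalone NumPy sketch below checks the parenthesized formula against those definitions.

```python
import numpy as np


def sinusoidal_pe(max_len: int, d_model: int) -> np.ndarray:
    """Return a (max_len, d_model) array of sinusoidal position encodings."""
    pos = np.arange(max_len)[:, np.newaxis]      # (max_len, 1)
    i = np.arange(d_model)[np.newaxis, :]        # (1, d_model)
    angle_rates = 1 / np.power(10000, (2 * (i // 2)) / np.float32(d_model))
    angles = pos * angle_rates
    pe = np.empty_like(angles)
    pe[:, 0::2] = np.sin(angles[:, 0::2])        # even dims: sine
    pe[:, 1::2] = np.cos(angles[:, 1::2])        # odd dims: cosine
    return pe


pe = sinusoidal_pe(max_len=147, d_model=8)
print(pe.shape)                   # (147, 8)
print(pe[0])                      # [0. 1. 0. 1. 0. 1. 0. 1.]
print(2 * 3 // 2, 2 * (3 // 2))   # 3 2  (why the parentheses matter)
```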

def scaled_dot_product_attention(q, k, v, mask):
    """Calculate the attention weights.
    q, k, v must have matching leading dimensions.
    k, v must have matching penultimate dimension, i.e.: seq_len_k = seq_len_v.
    The mask has different shapes depending on its type (padding or look ahead),
    but it must be broadcastable for addition.

    Args:
        q: query shape == (..., seq_len_q, depth)
        k: key shape == (..., seq_len_k, depth)
        v: value shape == (..., seq_len_v, depth_v)
        mask: Float tensor with shape broadcastable
              to (..., seq_len_q, seq_len_k), or None.

    Returns:
        output, attention_weights
    """
    matmul_qk = tf.matmul(q, k, transpose_b=True)  # (..., seq_len_q, seq_len_k)
    # scale by sqrt(depth) so the logits do not grow with the head size
    dk = tf.cast(tf.shape(k)[-1], tf.float32)
    scaled_attention_logits = matmul_qk / tf.math.sqrt(dk)
    # masked positions get a large negative logit, i.e. ~0 weight after softmax
    if mask is not None:
        scaled_attention_logits += (mask * -1e9)
    attention_weights = tf.nn.softmax(scaled_attention_logits, axis=-1)
    output = tf.matmul(attention_weights, v)  # (..., seq_len_q, depth_v)
    return output, attention_weights
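The function computes softmax(QKᵀ/√d_k + M·(−1e9))·V, where M holds 1 at the key positions that must be hidden. Adding −1e9 before the softmax drives those weights to zero numerically. Each row of weights is therefore a distribution over the visible keys only. Below is a NumPy sketch of the same computation, independent of TensorFlow and using random inputs.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)   # subtract the max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def attention(q, k, v, mask=None):
    """q: (..., Lq, d), k: (..., Lk, d), v: (..., Lk, dv); mask broadcastable to (..., Lq, Lk)."""
    logits = q @ np.swapaxes(k, -1, -2) / np.sqrt(k.shape[-1])
    if mask is not None:
        logits = logits + mask * -1e9          # 1 in the mask = hide this key
    weights = softmax(logits, axis=-1)
    return weights @ v, weights


rng = np.random.default_rng(0)
q = rng.normal(size=(1, 3, 4))
k = rng.normal(size=(1, 3, 4))
v = rng.normal(size=(1, 3, 4))
mask = np.array([0.0, 0.0, 1.0])               # hide the 3rd key (e.g. a padding token)
out, w = attention(q, k, v, mask)
print(w.round(3))                              # the last column is 0 in every row
```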

class MultiHeadAttention(tf.keras.layers.Layer):
    def __init__(self, **kargs):
        super(MultiHeadAttention, self).__init__()
        self.num_heads = kargs['num_heads']
        self.d_model = kargs['d_model']

        assert self.d_model % self.num_heads == 0

        self.depth = self.d_model // self.num_heads

        self.wq = tf.keras.layers.Dense(kargs['d_model'])
        self.wk = tf.keras.layers.Dense(kargs['d_model'])
        self.wv = tf.keras.layers.Dense(kargs['d_model'])

        self.dense = tf.keras.layers.Dense(kargs['d_model'])

    def split_heads(self, x, batch_size):
        """Split the last dimension into (num_heads, depth).
        Transpose the result such that the shape is (batch_size, num_heads, seq_len, depth)
        """
        x = tf.reshape(x, (batch_size, -1, self.num_heads, self.depth))
        return tf.transpose(x, perm=[0, 2, 1, 3])

    def call(self, v, k, q, mask):
        batch_size = tf.shape(q)[0]

        q = self.wq(q)  # (batch_size, seq_len, d_model)
        k = self.wk(k)
        v = self.wv(v)

        q = self.split_heads(q, batch_size)  # (batch_size, num_heads, seq_len, depth)
        k = self.split_heads(k, batch_size)
        v = self.split_heads(v, batch_size)

        scaled_attention, attention_weights = scaled_dot_product_attention(
            q, k, v, mask)

        scaled_attention = tf.transpose(scaled_attention, perm=[0, 2, 1, 3])
        concat_attention = tf.reshape(scaled_attention,
                                      (batch_size, -1, self.d_model))

        output = self.dense(concat_attention)

        return output, attention_weights
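`split_heads` is only a reshape followed by a transpose. It turns (batch, seq, d_model) into (batch, num_heads, seq, depth) with depth = d_model / num_heads, which is 768 / 12 = 64 for the hyper-parameters above. `call` undoes the split before the final `Dense`. Below is a NumPy sketch of both directions that checks they round-trip.

```python
import numpy as np


def split_heads(x: np.ndarray, num_heads: int) -> np.ndarray:
    batch, seq, d_model = x.shape
    depth = d_model // num_heads
    x = x.reshape(batch, seq, num_heads, depth)
    return x.transpose(0, 2, 1, 3)             # (batch, heads, seq, depth)


def merge_heads(x: np.ndarray) -> np.ndarray:
    batch, heads, seq, depth = x.shape
    return x.transpose(0, 2, 1, 3).reshape(batch, seq, heads * depth)


x = np.arange(2 * 5 * 768, dtype=np.float32).reshape(2, 5, 768)
h = split_heads(x, num_heads=12)
print(h.shape)                                 # (2, 12, 5, 64)
print(np.array_equal(merge_heads(h), x))       # True
```

Head `n` sees feature slice `[n * depth, (n + 1) * depth)` of every position.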

def point_wise_feed_forward_network(**kargs):
    return tf.keras.Sequential([
        tf.keras.layers.Conv1D(kargs['dff'], 1, activation='gelu'),  # (batch_size, seq_len, dff)
        tf.keras.layers.Conv1D(kargs['d_model'], 1)                   # (batch_size, seq_len, d_model)
    ])
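A `Conv1D` with kernel size 1 applies the same weights at every position independently. This block is therefore equivalent to two position-wise `Dense` layers, GELU(xW₁ + b₁)W₂ + b₂. The NumPy sketch below uses the exact, erf-based GELU and small made-up sizes, and checks the defining property: permuting the positions of the input only permutes the output.

```python
import math

import numpy as np

_erf = np.vectorize(math.erf)


def gelu(x: np.ndarray) -> np.ndarray:
    """Exact GELU: x * Phi(x), with Phi the standard normal CDF."""
    return 0.5 * x * (1.0 + _erf(x / math.sqrt(2.0)))


def position_wise_ffn(x, w1, b1, w2, b2):
    """x: (batch, seq, d_model) -> (batch, seq, d_model); same weights at every position."""
    return gelu(x @ w1 + b1) @ w2 + b2


rng = np.random.default_rng(0)
d_model, dff = 8, 32                 # small demo sizes (the notebook uses 768 and 3072)
w1, b1 = rng.normal(size=(d_model, dff)), np.zeros(dff)
w2, b2 = rng.normal(size=(dff, d_model)), np.zeros(d_model)
x = rng.normal(size=(1, 5, d_model))
perm = [4, 2, 0, 1, 3]
y = position_wise_ffn(x, w1, b1, w2, b2)
y_perm = position_wise_ffn(x[:, perm], w1, b1, w2, b2)
print(np.allclose(y[:, perm], y_perm))  # True
```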

class DecoderLayer(tf.keras.layers.Layer):
    def __init__(self, **kargs):
        super(DecoderLayer, self).__init__()

        self.mha = MultiHeadAttention(**kargs)
        self.ffn = point_wise_feed_forward_network(**kargs)

        self.layernorm1 = tf.keras.layers.LayerNormalization(epsilon=1e-6)
        self.layernorm2 = tf.keras.layers.LayerNormalization(epsilon=1e-6)
        self.layernorm3 = tf.keras.layers.LayerNormalization(epsilon=1e-6)

        self.dropout1 = tf.keras.layers.Dropout(kargs['rate'])
        self.dropout2 = tf.keras.layers.Dropout(kargs['rate'])
        self.dropout3 = tf.keras.layers.Dropout(kargs['rate'])

    def call(self, x, look_ahead_mask, padding_mask):
        # masked self-attention, then residual connection and layer norm
        attn1, attn_weights_block1 = self.mha(x, x, x, look_ahead_mask)
        attn1 = self.dropout1(attn1)
        out1 = self.layernorm1(attn1 + x)

        # position-wise feed-forward, then residual connection and layer norm
        ffn_output = self.ffn(out1)
        ffn_output = self.dropout3(ffn_output)
        out2 = self.layernorm3(ffn_output + out1)

        return out2, attn_weights_block1
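Each sub-layer follows the post-norm pattern of the original Transformer, `LayerNorm(x + Dropout(sublayer(x)))`: the residual is added first and the sum is normalized over the feature axis. `layernorm2` and `dropout2` are created but never called, because this decoder block has no encoder-decoder attention. Below is a NumPy sketch of the normalization step. It uses gamma = 1 and beta = 0, the values Keras initializes them to, and omits dropout, which is how the layer behaves at inference.

```python
import numpy as np


def layer_norm(x: np.ndarray, eps: float = 1e-6) -> np.ndarray:
    """Normalize over the last axis (gamma=1, beta=0)."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)


def post_ln_residual(x: np.ndarray, sublayer_out: np.ndarray) -> np.ndarray:
    """out = LayerNorm(x + sublayer(x)), dropout omitted."""
    return layer_norm(x + sublayer_out)


rng = np.random.default_rng(0)
x = rng.normal(size=(2, 5, 16))
out = post_ln_residual(x, rng.normal(size=x.shape))
print(out.shape)  # (2, 5, 16)
```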

class Decoder(tf.keras.layers.Layer):
    def __init__(self, **kargs):
        super(Decoder, self).__init__()

        self.d_model = kargs['d_model']
        self.num_layers = kargs['num_layers']

        self.embedding = tf.keras.layers.Embedding(kargs['target_vocab_size'], self.d_model)
        self.pos_encoding = positional_encoding(kargs['maximum_position_encoding'], self.d_model)

        self.dec_layers = [DecoderLayer(**kargs)
                           for _ in range(self.num_layers)]
        self.dropout = tf.keras.layers.Dropout(kargs['rate'])

    def call(self, x, look_ahead_mask, padding_mask):
        seq_len = tf.shape(x)[1]
        attention_weights = {}

        x = self.embedding(x)  # (batch_size, seq_len, d_model)
        x *= tf.math.sqrt(tf.cast(self.d_model, tf.float32))
        x += self.pos_encoding[:, :seq_len, :]

        x = self.dropout(x)

        for i in range(self.num_layers):
            x, block1 = self.dec_layers[i](x, look_ahead_mask, padding_mask)
            attention_weights['decoder_layer{}_block1'.format(i+1)] = block1

        return x, attention_weights

def create_padding_mask(seq):
    seq = tf.cast(tf.math.equal(seq, 0), tf.float32)
    return seq[:, tf.newaxis, tf.newaxis, :]  # (batch_size, 1, 1, seq_len)

def create_look_ahead_mask(size):
    mask = 1 - tf.linalg.band_part(tf.ones((size, size)), -1, 0)
    return mask  # (seq_len, seq_len)

def create_masks(input):
    dec_padding_mask = create_padding_mask(input)
    look_ahead_mask = create_look_ahead_mask(tf.shape(input)[1])
    dec_target_padding_mask = create_padding_mask(input)
    look_ahead_mask = tf.maximum(dec_target_padding_mask, look_ahead_mask)
    return look_ahead_mask, dec_padding_mask
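`create_masks` merges two masks with `tf.maximum`. The look-ahead mask hides future positions (1 above the diagonal), and the padding mask hides keys whose token id is 0. Broadcasting (batch, 1, 1, seq) against (seq, seq) gives one (seq, seq) mask per sequence. Below is a NumPy sketch of the combination for one short, post-padded sequence.

```python
import numpy as np


def look_ahead_mask(size: int) -> np.ndarray:
    """1 where key j lies in the future of query i (j > i)."""
    return 1.0 - np.tril(np.ones((size, size)))


def padding_mask(seq: np.ndarray) -> np.ndarray:
    """1 where the token id is 0; shape (batch, 1, 1, seq_len) for broadcasting."""
    return (seq == 0).astype(np.float32)[:, np.newaxis, np.newaxis, :]


seq = np.array([[5, 7, 0]])                  # one sequence whose last position is padding
combined = np.maximum(padding_mask(seq), look_ahead_mask(seq.shape[1]))
print(combined.shape)                        # (1, 1, 3, 3)
print(combined[0, 0])
# [[0. 1. 1.]
#  [0. 0. 1.]
#  [0. 0. 1.]]
```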

class GPT(tf.keras.Model):
    def __init__(self, **kargs):
        super(GPT, self).__init__(name=kargs['model_name'])
        self.end_token_idx = kargs['end_token_idx']

        self.decoder = Decoder(**kargs)

        self.outputs_layer = tf.keras.layers.Dense(kargs['d_model'],
                                                   activation='gelu')
        # project onto the vocabulary size from kargs rather than the global `sp`
        self.final_layer = tf.keras.layers.Dense(kargs['target_vocab_size'])

    def call(self, x):
        look_ahead_mask, mask = create_masks(x)
        dec_output, attn = self.decoder(x, look_ahead_mask, mask)
        dec_output = self.outputs_layer(dec_output)
        final_output = self.final_layer(dec_output)  # (batch_size, seq_len, vocab_size)
        return final_output

model = GPT(**kargs)

Train the model

loss_object = tf.keras.losses.SparseCategoricalCrossentropy(

from_logits=True, reduction='none')

train_accuracy = tf.keras.metrics.SparseCategoricalAccuracy(name='accuracy')

def loss(real, pred):
    # zero out the per-token loss at padding positions (token id 0)
    mask = tf.math.logical_not(tf.math.equal(real, 0))
    loss_ = loss_object(real, pred)
    mask = tf.cast(mask, dtype=loss_.dtype)
    loss_ *= mask
    return tf.reduce_mean(loss_)

def accuracy(real, pred):
    # zero the logits at padding positions before computing accuracy
    mask = tf.math.logical_not(tf.math.equal(real, 0))
    mask = tf.expand_dims(tf.cast(mask, dtype=pred.dtype), axis=-1)
    pred *= mask
    acc = train_accuracy(real, pred)
    return tf.reduce_mean(acc)

model.compile(optimizer=tf.keras.optimizers.Adam(1e-4),

loss=loss,

metrics=[accuracy])
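Two details of these metrics shape the logged numbers. First, `loss` zeroes the padded positions but then applies `reduce_mean` over all positions, so padding dilutes the average. Second, `accuracy` zeroes the logits at padded positions. The argmax of an all-zero row is index 0, which is the padding id itself, so every padded position counts as correct. In addition, the same stateful `train_accuracy` object is reused without ever being reset, so the reported value is effectively a running average. Together these effects push accuracy toward the share of padding in each sequence, which is consistent with the almost constant ~0.883 in the log below. Below is a NumPy sketch of metrics that count real tokens only.

```python
import numpy as np


def masked_token_metrics(real: np.ndarray, logits: np.ndarray, pad_id: int = 0):
    """real: (batch, seq) int ids; logits: (batch, seq, vocab).

    Returns (mean cross-entropy, accuracy) over non-padding positions only.
    """
    shifted = logits - logits.max(axis=-1, keepdims=True)          # stable log-softmax
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))
    nll = -np.take_along_axis(log_probs, real[..., None], axis=-1)[..., 0]
    mask = (real != pad_id).astype(np.float64)
    n_real = max(mask.sum(), 1.0)                                   # avoid division by zero
    loss = (nll * mask).sum() / n_real
    correct = (logits.argmax(axis=-1) == real).astype(np.float64)
    acc = (correct * mask).sum() / n_real
    return loss, acc


# toy batch: 2 real tokens followed by 3 padding positions
real = np.array([[4, 2, 0, 0, 0]])
logits = np.zeros((1, 5, 6))
logits[0, 0, 4] = 5.0   # first real token predicted correctly
logits[0, 1, 3] = 5.0   # second real token predicted wrongly
loss, acc = masked_token_metrics(real, logits)
naive = (logits.argmax(axis=-1) == real).mean()
print(acc)    # 0.5 (padding excluded)
print(naive)  # 0.8 (padding counted as correct)
```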

early_stopping_cb = tf.keras.callbacks.EarlyStopping(patience=10,

monitor='val_loss',

restore_best_weights=True,

verbose=1)

history = model.fit(train_dataset,

epochs=100,

validation_data=test_dataset,

steps_per_epoch=len(input_train_text) // batch_size,

validation_steps=len(input_test_text) // batch_size,

callbacks=[early_stopping_cb]

)

Epoch 1/100

1171/1171 [==============================] - 890s 731ms/step - loss: 0.9125 - accuracy: 0.8834 - val_loss: 1.3276 - val_accuracy: 0.8834

Epoch 2/100

1171/1171 [==============================] - 851s 727ms/step - loss: 0.9123 - accuracy: 0.8833 - val_loss: 1.4063 - val_accuracy: 0.8833

Epoch 3/100

1171/1171 [==============================] - 851s 727ms/step - loss: 0.9120 - accuracy: 0.8832 - val_loss: 1.3818 - val_accuracy: 0.8832

Epoch 4/100

1171/1171 [==============================] - 852s 727ms/step - loss: 0.9119 - accuracy: 0.8832 - val_loss: 1.4354 - val_accuracy: 0.8832

Epoch 5/100

1171/1171 [==============================] - 852s 728ms/step - loss: 0.9117 - accuracy: 0.8832 - val_loss: 1.4488 - val_accuracy: 0.8832

Epoch 6/100

1171/1171 [==============================] - 851s 727ms/step - loss: 0.9118 - accuracy: 0.8831 - val_loss: 1.4032 - val_accuracy: 0.8831

Epoch 7/100

1171/1171 [==============================] - 851s 727ms/step - loss: 0.9115 - accuracy: 0.8831 - val_loss: 1.4648 - val_accuracy: 0.8831

Epoch 8/100

1171/1171 [==============================] - 852s 727ms/step - loss: 0.9113 - accuracy: 0.8831 - val_loss: 1.4896 - val_accuracy: 0.8831

Epoch 9/100

1171/1171 [==============================] - 852s 727ms/step - loss: 0.9118 - accuracy: 0.8831 - val_loss: 1.4475 - val_accuracy: 0.8831

Epoch 10/100

1171/1171 [==============================] - 851s 727ms/step - loss: 0.9113 - accuracy: 0.8830 - val_loss: 1.4846 - val_accuracy: 0.8831

Epoch 11/100

1171/1171 [==============================] - ETA: 0s - loss: 0.9114 - accuracy: 0.8830Restoring model weights from the end of the best epoch: 1.

1171/1171 [==============================] - 851s 727ms/step - loss: 0.9114 - accuracy: 0.8830 - val_loss: 1.4495 - val_accuracy: 0.8830

Epoch 11: early stopping
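The log shows that `val_loss` was lowest after the first epoch and never improved afterwards. With `patience=10`, training therefore stopped at epoch 11, and `restore_best_weights=True` rolled the weights back to epoch 1. This is why the final evaluation closely matches epoch 1's `val_loss` (1.3274 vs 1.3276). Below is a small re-enactment of that rule on the logged values. It is a hypothetical helper that mirrors the callback's behavior with the default `min_delta=0`.

```python
from typing import List, Tuple


def early_stopping(val_losses: List[float], patience: int) -> Tuple[int, int]:
    """Return (best_epoch, stop_epoch), 1-indexed, for a 'minimize val_loss' rule."""
    best, best_epoch, wait = float('inf'), 0, 0
    for epoch, loss in enumerate(val_losses, start=1):
        if loss < best:
            best, best_epoch, wait = loss, epoch, 0
        else:
            wait += 1
            if wait >= patience:
                return best_epoch, epoch
    return best_epoch, len(val_losses)


# val_loss values copied from the training log above
val_losses = [1.3276, 1.4063, 1.3818, 1.4354, 1.4488, 1.4032,
              1.4648, 1.4896, 1.4475, 1.4846, 1.4495]
print(early_stopping(val_losses, patience=10))  # (1, 11)
```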

Test the model

results = model.evaluate(test_dataset)

print("loss value: {:.3f}".format(results[0]))

print("accuracy value: {:.3f}".format(results[1]))

391/391 [==============================] - 117s 298ms/step - loss: 1.3274 - accuracy: 0.8830

loss value: 1.327

accuracy value: 0.883
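These numbers measure next-token prediction over padded sequences, not the positive/negative decision the task asks for. As far as the code above shows, the `label` column is never read. One possible route to the `(batch_size, 1)` output in the task description, which this notebook does not implement, is to take the decoder's hidden state at each review's last real token. Under a causal mask, that is the only position that has seen the whole review. That state would feed a single sigmoid unit trained on `label`. The NumPy sketch below shows only the pooling step for post-padded input, with random stand-ins for the decoder output and head weights. The function names are hypothetical.

```python
import numpy as np


def last_token_pool(hidden: np.ndarray, ids: np.ndarray, pad_id: int = 0) -> np.ndarray:
    """hidden: (batch, seq, d_model); ids: (batch, seq) post-padded token ids.

    Returns (batch, d_model): the hidden state at each sequence's last non-pad position.
    """
    lengths = (ids != pad_id).sum(axis=1)          # real tokens per sequence
    last = np.maximum(lengths - 1, 0)              # index of the last real token
    return hidden[np.arange(hidden.shape[0]), last]


def sigmoid(z: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-z))


rng = np.random.default_rng(0)
ids = np.array([[2, 14, 1226, 0, 0],
                [2, 39, 6416, 1, 164]])
hidden = rng.normal(size=(2, 5, 8))                # stand-in for the decoder output
pooled = last_token_pool(hidden, ids)              # (2, 8)
w, b = rng.normal(size=(8, 1)), 0.0                # untrained head, illustration only
probs = sigmoid(pooled @ w + b)                    # (batch_size, 1), P(positive)
print(pooled.shape, probs.shape)                   # (2, 8) (2, 1)
```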