〰️ Contents

[1] Backpropagation and NN

How does backpropagation work?

Let's look at computational graphs and a simple implementation.

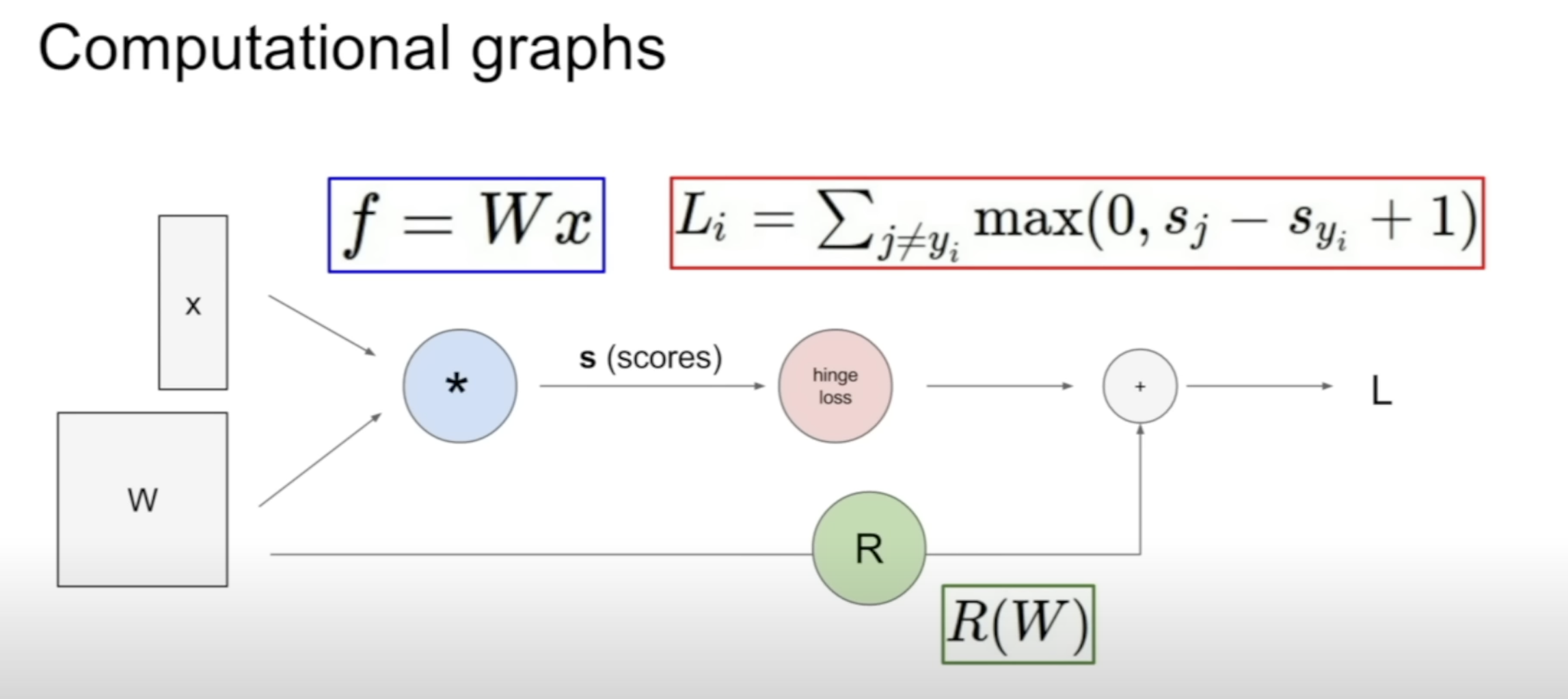

(1) Computational Graphs

Calculating the loss as a hinge loss plus a regularization term

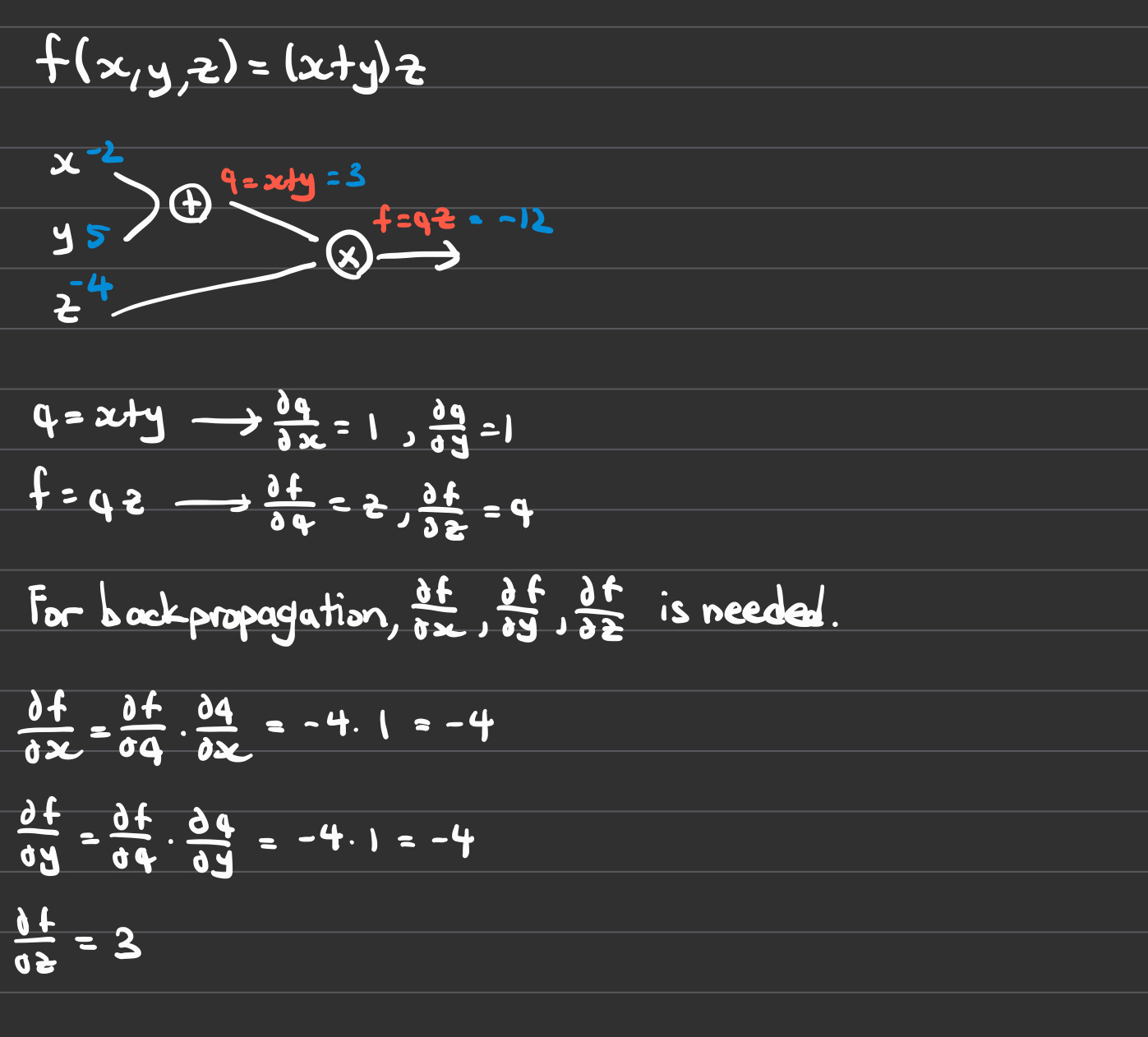
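As a rough sketch of the loss above, the snippet below computes a multiclass hinge loss with an L2 regularization term for a linear score function. The function name `svm_loss`, the margin of 1, the regularization strength `reg`, and the toy data are all assumptions made for illustration; the figure may use a different form.

```python
import numpy as np

def svm_loss(W, X, y, reg=0.1, margin=1.0):
    """Mean multiclass hinge loss plus L2 regularization.

    W: (C, D) weights, X: (N, D) inputs, y: (N,) integer class labels.
    """
    n = len(y)
    scores = X @ W.T                                # (N, C) linear scores s = W x
    correct = scores[np.arange(n), y][:, None]      # (N, 1) score of the true class
    margins = np.maximum(0.0, scores - correct + margin)
    margins[np.arange(n), y] = 0.0                  # the true class adds no loss
    data_loss = margins.sum() / n
    reg_loss = reg * np.sum(W * W)                  # R(W) = sum of squared weights
    return data_loss + reg_loss

# Hypothetical toy data: 3 classes, 2 features, 2 examples.
W = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
X = np.array([[1.0, 2.0], [3.0, 1.0]])
y = np.array([1, 0])
loss = svm_loss(W, X, y)
```

With these toy numbers each example violates the margin for one wrong class, so the data loss is 2.0 and the regularization term adds 0.4.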

Let's simplify it and try calculating with an easy example below

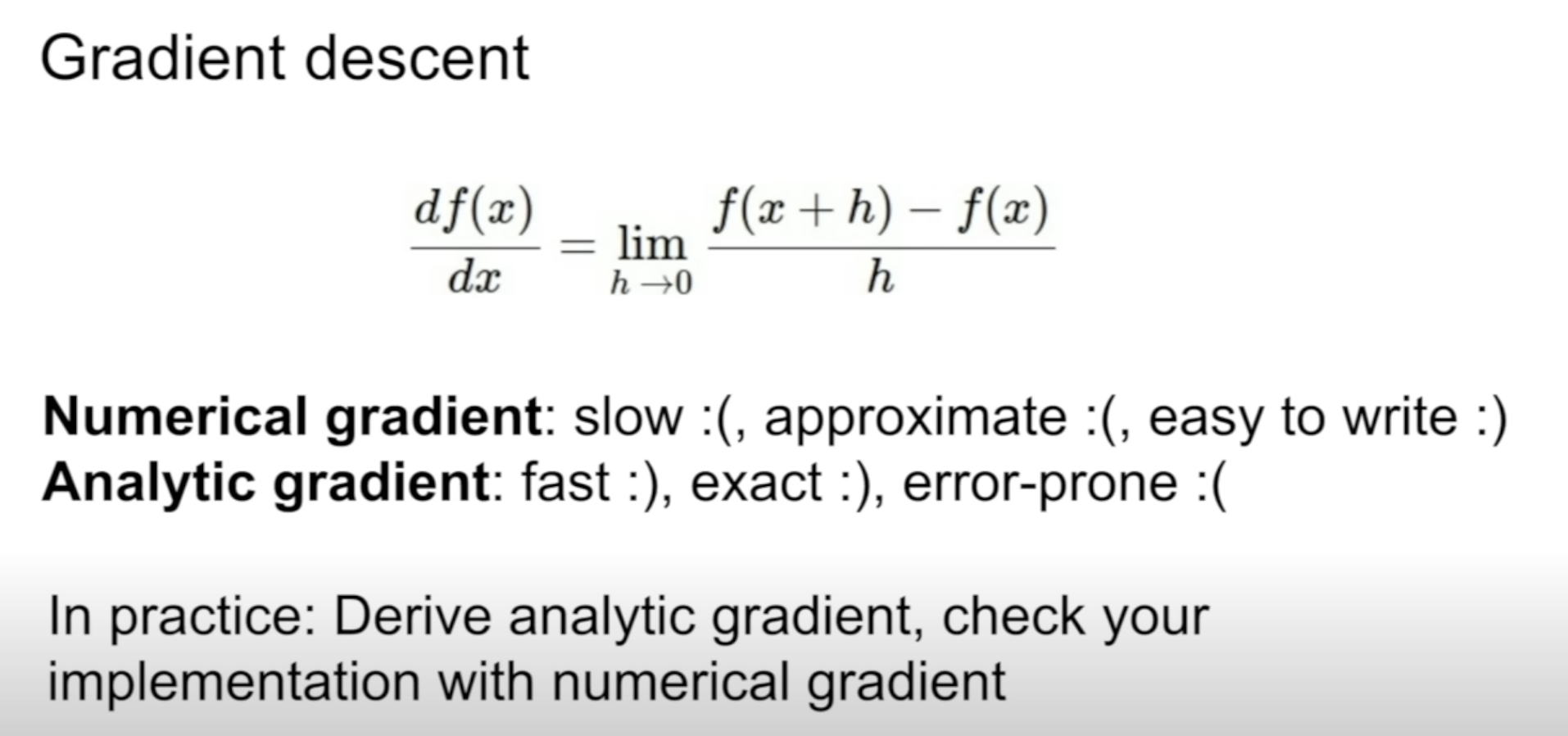

First we compute the gradients.

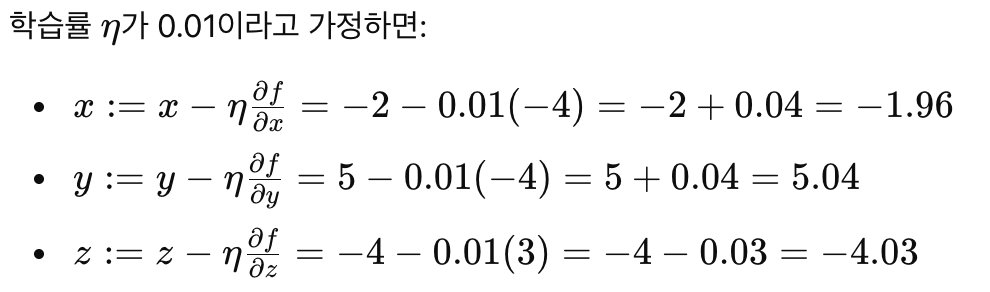
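The gradient computation can be sketched in code as a forward pass followed by a backward pass. This assumes the common textbook graph f(x, y, z) = (x + y) * z with x = -2, y = 5, z = -4; the figures above may use a different function or values.

```python
# Assumed example: f(x, y, z) = (x + y) * z at x = -2, y = 5, z = -4.
x, y, z = -2.0, 5.0, -4.0

# Forward pass: evaluate each node of the graph.
q = x + y            # add gate, q = 3
f = q * z            # multiply gate, f = -12

# Backward pass: apply the chain rule from the output back to the inputs.
df_df = 1.0          # gradient of the output with respect to itself
df_dq = z * df_df    # multiply gate: local gradient is the other input
df_dz = q * df_df
df_dx = 1.0 * df_dq  # add gate: local gradient is 1 for both inputs
df_dy = 1.0 * df_dq
```

Each gate only needs its own local gradient and the gradient arriving from upstream, which is what makes the graph view convenient.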

Next, we update x, y, and z with gradient descent, using the gradients obtained from the chain rule.

x_new = x - (step_size) × df/dx

y_new = y - (step_size) × df/dy

z_new = z - (step_size) × df/dz

Below is how the values change after one iteration with a step_size of 0.01.

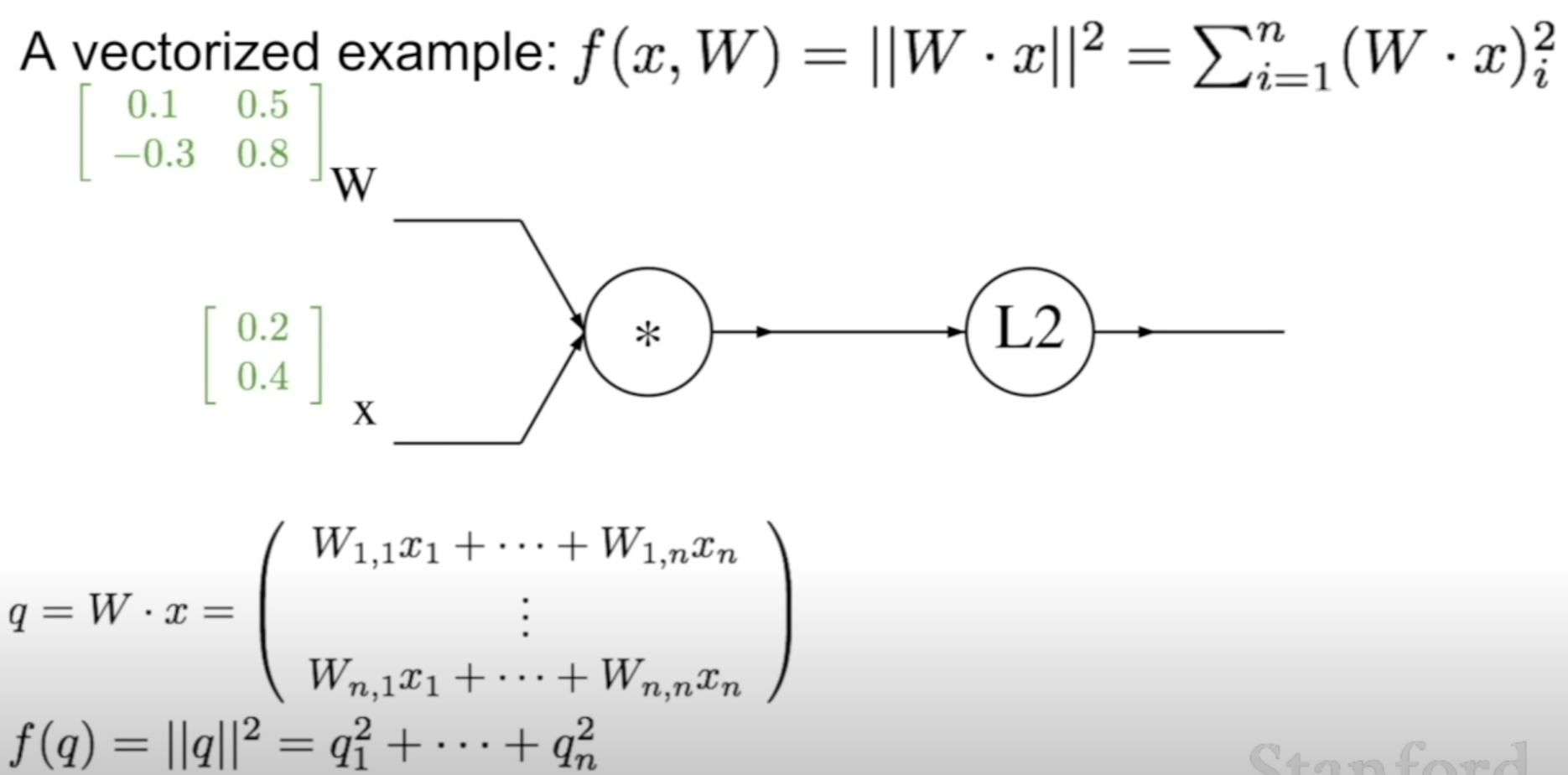

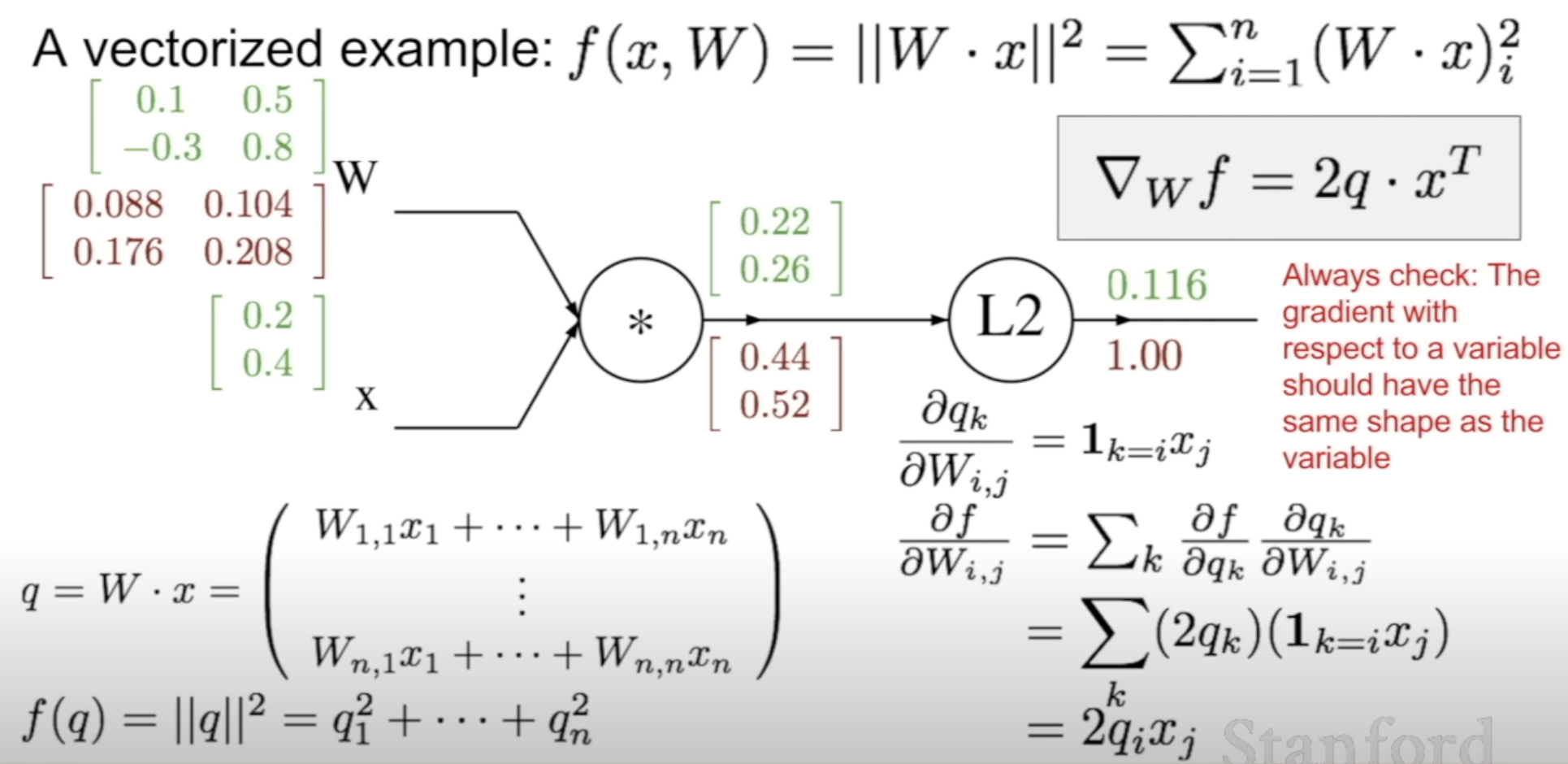
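A minimal sketch of one gradient descent step, again assuming f(x, y, z) = (x + y) * z at x = -2, y = 5, z = -4 (the figures may use other values). The helper `gradient_step` is a hypothetical name introduced here.

```python
def gradient_step(params, grads, step_size):
    """Return each parameter moved one step against its gradient."""
    return [p - step_size * g for p, g in zip(params, grads)]

# Assumed starting point and analytic gradients of f = (x + y) * z.
x, y, z = -2.0, 5.0, -4.0
grads = [z, z, x + y]            # df/dx, df/dy, df/dz

x_new, y_new, z_new = gradient_step([x, y, z], grads, step_size=0.01)

f_old = (x + y) * z
f_new = (x_new + y_new) * z_new  # should be smaller than f_old
```

Because every parameter moves against its own gradient, f decreases slightly after the step.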

(2) Vectorized Example

Let's look at this example

This is how W would be updated

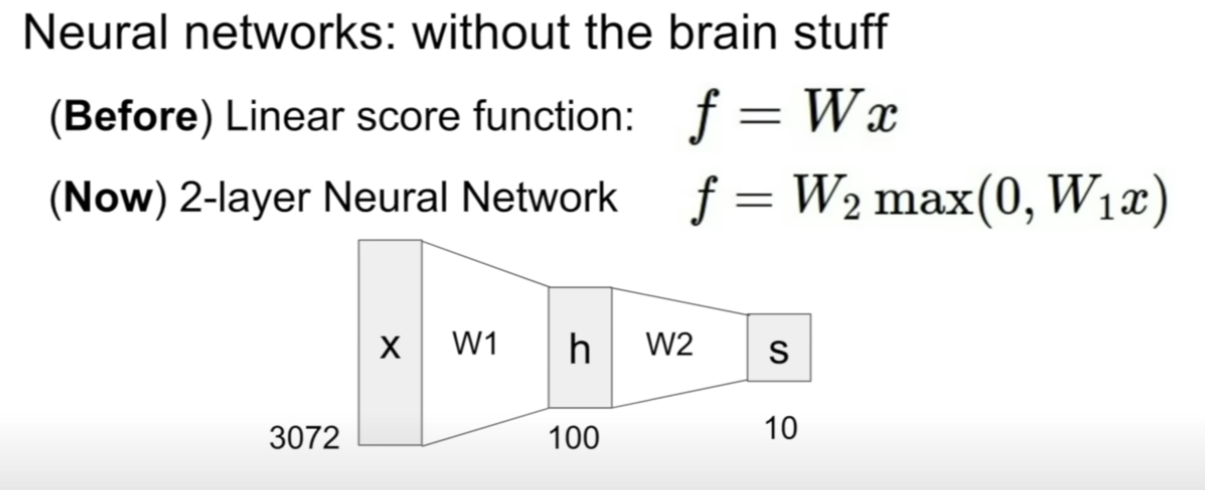

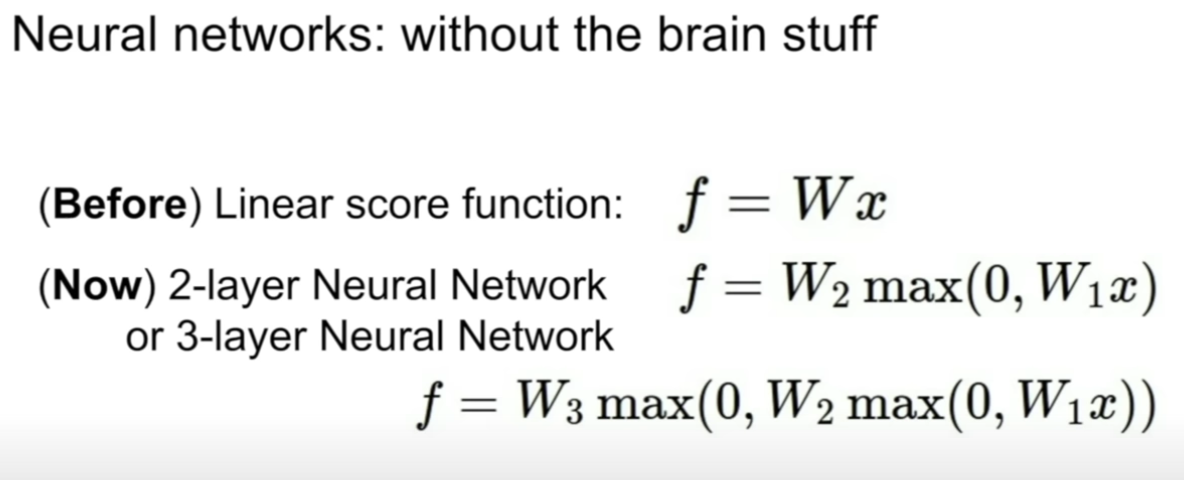

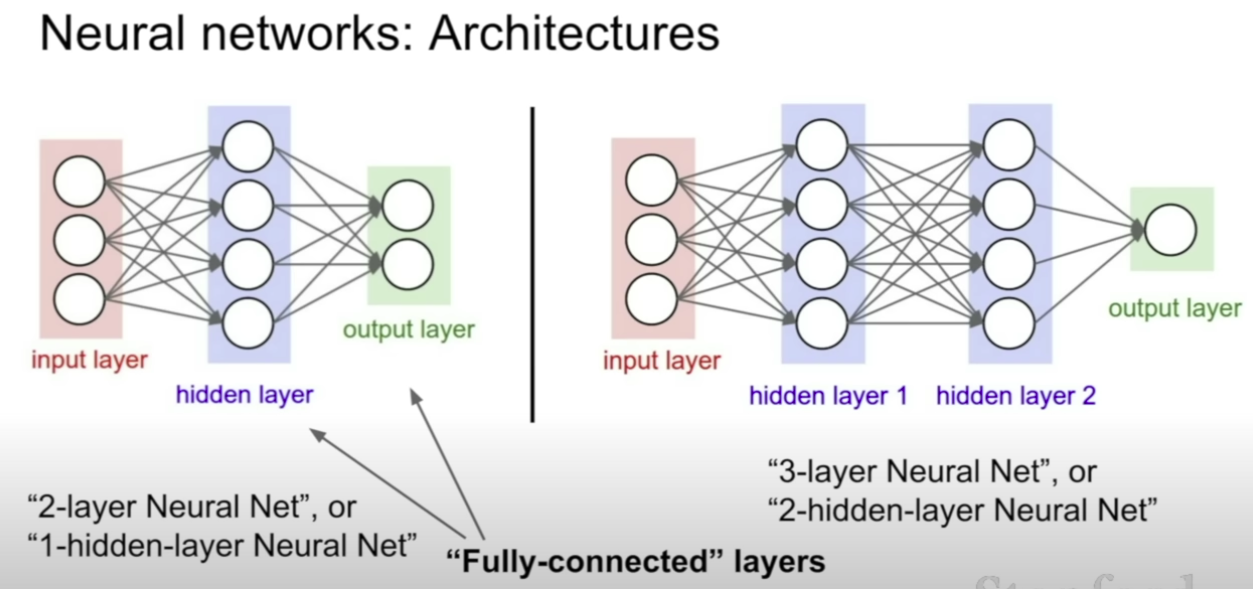
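The vectorized case can be sketched with NumPy. This assumes the example is f(x, W) = ||W x||^2 with a 2x2 matrix W and a 2-vector x; the specific numbers and the step size below are illustrative and may differ from the figures.

```python
import numpy as np

# Assumed example: f(x, W) = sum((W @ x) ** 2).
W = np.array([[0.1, 0.5], [-0.3, 0.8]])
x = np.array([0.2, 0.4])

# Forward pass
q = W @ x                  # intermediate vector
f = np.sum(q ** 2)         # scalar output

# Backward pass
dq = 2.0 * q               # df/dq
dW = np.outer(dq, x)       # df/dW, same shape as W
dx = W.T @ dq              # df/dx, same shape as x

# One gradient descent step on W (step size chosen for illustration).
step_size = 0.01
W_new = W - step_size * dW
```

A useful sanity check is that each gradient has the same shape as the variable it belongs to.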

(3) Expanding to Neural Networks

Up to now, we've discussed a Linear score function.

Let's stack one layer on it.

The 2-layer Neural Network works like this : Linear -> ReLU -> Linear -> score

Add one more Linear -> ReLU stage and it becomes a 3-layer Neural Network.

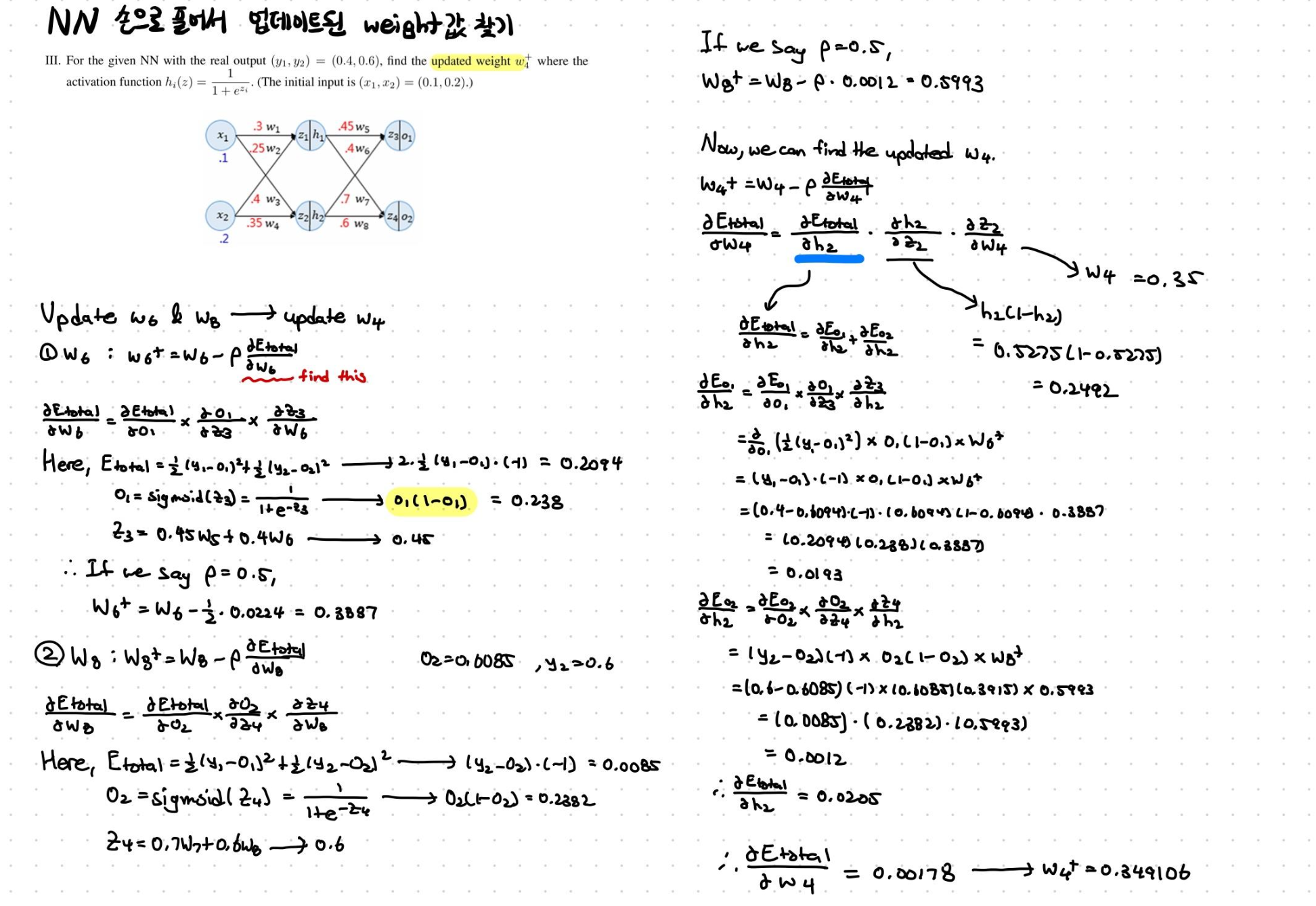
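The stacking idea can be sketched as a forward pass where every layer except the last is Linear followed by ReLU. Biases are omitted, and the layer sizes and random weights are assumptions made only for this example.

```python
import numpy as np

def relu(a):
    return np.maximum(0.0, a)

def forward(x, weights):
    """Linear -> ReLU for every layer except the last, which is Linear only."""
    h = x
    for W in weights[:-1]:
        h = relu(W @ h)
    return weights[-1] @ h       # class scores

rng = np.random.default_rng(0)
x = rng.standard_normal(4)       # hypothetical 4-feature input

# 2-layer network: Linear -> ReLU -> Linear -> score
two_layer = [rng.standard_normal((5, 4)), rng.standard_normal((3, 5))]
scores_2layer = forward(x, two_layer)

# 3-layer network: one more Linear -> ReLU stage in the middle
three_layer = [rng.standard_normal((5, 4)),
               rng.standard_normal((6, 5)),
               rng.standard_normal((3, 6))]
scores_3layer = forward(x, three_layer)
```

Both networks return one score per class; only the number of weight matrices changes.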

[2] Let's calculate the simplest NN!

Very simple FFNN

Input layer : x1 & x2

Hidden layer : z1 & z2

Output layer : z3 & z4

Ex) Image classification using only two features
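A small sketch of this 2-2-2 network, with a forward pass and a backward pass for the gradient of z3. The notes give no concrete numbers, so the weights, the input, the ReLU on the hidden layer, and the omission of biases are all assumptions.

```python
import numpy as np

# Hypothetical values; the notes do not specify any numbers.
x = np.array([1.0, 2.0])                      # x1, x2
W1 = np.array([[0.5, -1.0], [0.25, 0.75]])    # input -> hidden
W2 = np.array([[1.0, -2.0], [0.5, 1.0]])      # hidden -> output

# Forward pass
a = W1 @ x                        # hidden pre-activations
z_hidden = np.maximum(0.0, a)     # z1, z2 (ReLU assumed)
z_out = W2 @ z_hidden             # z3, z4 (class scores)

# Backward pass: gradient of z3 with respect to every weight.
d_out = np.array([1.0, 0.0])      # dz3/dz3 = 1, dz3/dz4 = 0
dW2 = np.outer(d_out, z_hidden)   # dz3/dW2
d_hidden = W2.T @ d_out           # dz3/dz1, dz3/dz2
d_a = d_hidden * (a > 0)          # ReLU passes gradient only where a > 0
dW1 = np.outer(d_a, x)            # dz3/dW1
```

Here z1 is zeroed by the ReLU, so no gradient reaches the first row of W1.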

〰️ Questions

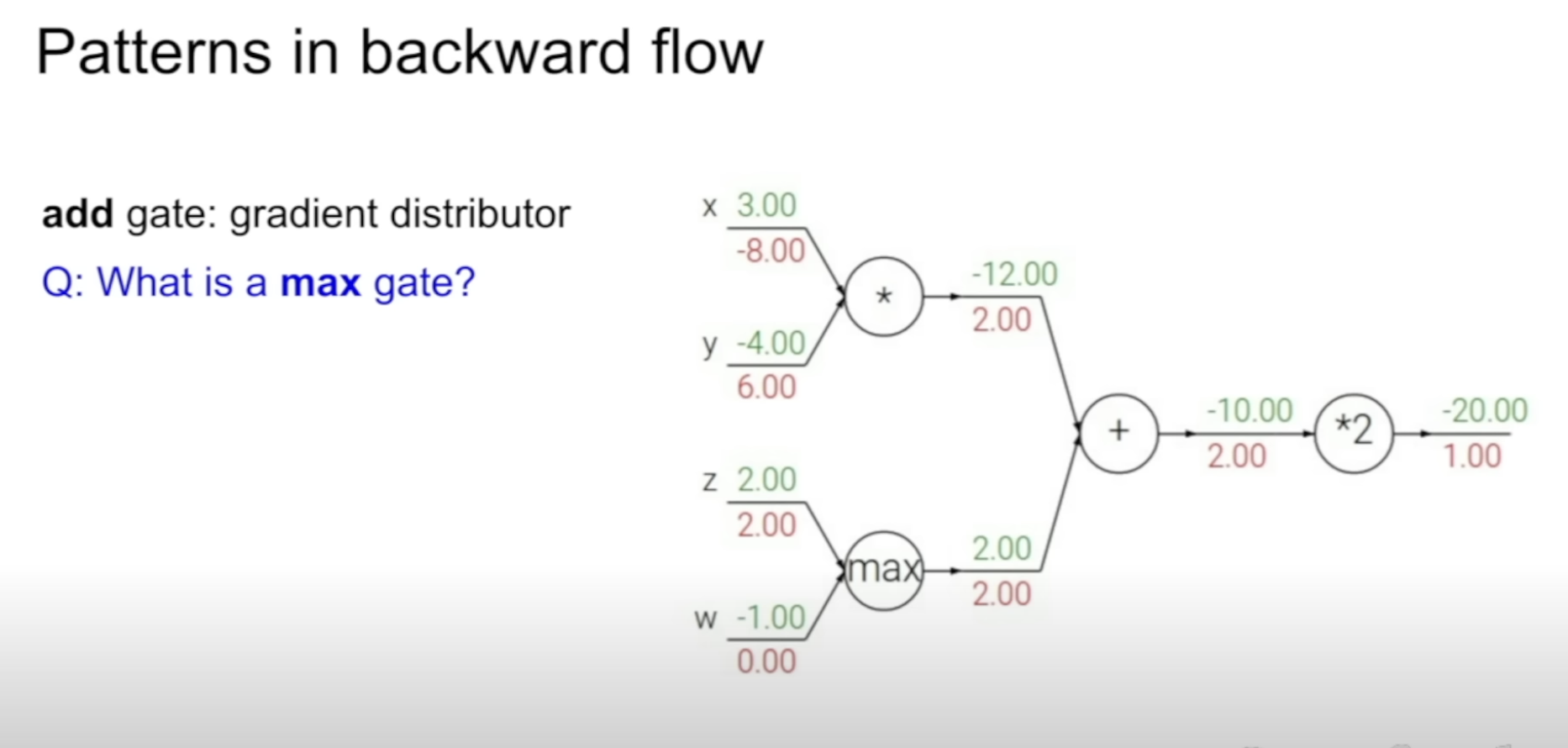

add gate : gradient distributor

max gate : gradient router

mul gate : gradient switcher
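The three gate behaviors can be sketched as backward functions for two-input gates. The function names and the sample inputs are hypothetical; each function returns the gradients for its inputs x and y given the upstream gradient.

```python
def add_backward(x, y, upstream):
    """Add gate: both inputs receive the upstream gradient unchanged."""
    return upstream, upstream

def max_backward(x, y, upstream):
    """Max gate: the larger input receives the whole gradient, the other gets 0."""
    return (upstream, 0.0) if x >= y else (0.0, upstream)

def mul_backward(x, y, upstream):
    """Multiply gate: each input receives upstream times the other input."""
    return y * upstream, x * upstream

upstream = 2.0
add_grads = add_backward(3.0, -1.0, upstream)
max_grads = max_backward(3.0, -1.0, upstream)
mul_grads = mul_backward(3.0, -1.0, upstream)
```

The multiply gate is called a switcher because the input values swap roles: the gradient for x is scaled by y, and the gradient for y is scaled by x.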