이제 드디어 YOLO 를 분석할 시기가 온듯..

- ultralytics

- ultralytics 의 코드 너무 깔끔하다.

- 내가 원하는 구조.

YOLOWorld라니..

tasks

task_map architecture

-

model, trainer, validator, predictor 를 task 별로 정해서 가져온다.

"classify": { "model": ClassificationModel, "trainer": yolo.classify.ClassificationTrainer, "validator": yolo.classify.ClassificationValidator, "predictor": yolo.classify.ClassificationPredictor, }, "detect": { "model": DetectionModel, "trainer": yolo.detect.DetectionTrainer, "validator": yolo.detect.DetectionValidator, "predictor": yolo.detect.DetectionPredictor, }, "segment": { "model": SegmentationModel, "trainer": yolo.segment.SegmentationTrainer, "validator": yolo.segment.SegmentationValidator, "predictor": yolo.segment.SegmentationPredictor, }, "pose": { "model": PoseModel, "trainer": yolo.pose.PoseTrainer, "validator": yolo.pose.PoseValidator, "predictor": yolo.pose.PosePredictor, }, "obb": { "model": OBBModel, "trainer": yolo.obb.OBBTrainer, "validator": yolo.obb.OBBValidator, "predictor": yolo.obb.OBBPredictor, },- 구조 깔끔하게 나왔다.

start yolo v8

model = YOLO("yolov8x.pt")-

mapping architecture

yolov8.yaml# Ultralytics YOLO 🚀, AGPL-3.0 license # YOLOv8 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect # Parameters nc: 80 # number of classes scales: # model compound scaling constants, i.e. 'model=yolov8n.yaml' will call yolov8.yaml with scale 'n' # [depth, width, max_channels] n: [0.33, 0.25, 1024] # YOLOv8n summary: 225 layers, 3157200 parameters, 3157184 gradients, 8.9 GFLOPs s: [0.33, 0.50, 1024] # YOLOv8s summary: 225 layers, 11166560 parameters, 11166544 gradients, 28.8 GFLOPs m: [0.67, 0.75, 768] # YOLOv8m summary: 295 layers, 25902640 parameters, 25902624 gradients, 79.3 GFLOPs l: [1.00, 1.00, 512] # YOLOv8l summary: 365 layers, 43691520 parameters, 43691504 gradients, 165.7 GFLOPs x: [1.00, 1.25, 512] # YOLOv8x summary: 365 layers, 68229648 parameters, 68229632 gradients, 258.5 GFLOPs # YOLOv8.0n backbone backbone: # [from, repeats, module, args] - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2 - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4 - [-1, 3, C2f, [128, True]] - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8 - [-1, 6, C2f, [256, True]] - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16 - [-1, 6, C2f, [512, True]] - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32 - [-1, 3, C2f, [1024, True]] - [-1, 1, SPPF, [1024, 5]] # 9 # YOLOv8.0n head head: - [-1, 1, nn.Upsample, [None, 2, "nearest"]] - [[-1, 6], 1, Concat, [1]] # cat backbone P4 - [-1, 3, C2f, [512]] # 12 - [-1, 1, nn.Upsample, [None, 2, "nearest"]] - [[-1, 4], 1, Concat, [1]] # cat backbone P3 - [-1, 3, C2f, [256]] # 15 (P3/8-small) - [-1, 1, Conv, [256, 3, 2]] - [[-1, 12], 1, Concat, [1]] # cat head P4 - [-1, 3, C2f, [512]] # 18 (P4/16-medium) - [-1, 1, Conv, [512, 3, 2]] - [[-1, 9], 1, Concat, [1]] # cat head P5 - [-1, 3, C2f, [1024]] # 21 (P5/32-large) - [[15, 18, 21], 1, Detect, [nc]] # Detect(P3, P4, P5) -

Segmentation, Pose, OBB model 은

DetectionModel을 상속받는다. -

Classification 과 Detection 모델은

BaseModel을 상속 받고, -

load model 은

parse_model이 메인 인 것 같다.

loss functions of each tasks

non-linear equation 을 back propagation 할 때,

- 내가 모르는 space 의 모르는 distribution 으로 fitting 하기 위해

- gradient discent 를 하면서

- optimal minima 를 찾기 위해

loss function 이 난 제일 중요하다고 생각하는데..

1. classify

- ML task 의 가장 기본이 되는 task.

- 역시 loss func 는 cross_entropy.

2. detect ( 3 losses )

loss[0], loss[2]: bbox loss (iou = box loss, dfl loss)# IoU iou <= inter / union- dfl loss 는 https://arxiv.org/pdf/2006.04388 참고.

loss[1]: cls loss (varifocal_loss -> BCEWithLogitsLoss)- 아무래도,

True / False로 구분하기 위한 loss.

return loss.sum() * batch_size, loss.detach() # loss(box, cls, dfl)- 아무래도,

3. segment ( 4 losses )

class TaskAlignedAssigner(nn.Module):- 이 클래스는 gt 를 가지고 분류와 box 정보를 결합하여 target 에 대한 information 을 뽑는다.

usingclass TaskAlignedAssigner(nn.Module): ... def forward(self, pd_scores, pd_bboxes, anc_points, gt_labels, gt_bboxes, mask_gt): ... return target_labels, target_bboxes, target_scores, fg_mask.bool(), target_gt_idxassigner... 와_, target_bboxes, target_scores, fg_mask, _ = self.assigner( pred_scores.detach().sigmoid(), (pred_bboxes.detach() * stride_tensor).type(gt_bboxes.dtype), anchor_points * stride_tensor, gt_labels, gt_bboxes, mask_gt, ) - gt 와 구해진 target 을 가지고

loss[2]: BCEWithLogitsLoss 로 cls loss.loss[0], loss[3]: bbox loss ( iou = box loss , dfl loss )loss[1]: segmentation loss ( anchor box )

4. pose ( 5 losses )

pose loss 라니.. 설레이는데..

- segment tasks 와 같이 gt 를 가지고 box 정보를 결합, target 에 대한 information 을 뽑는다.

loss[3]: BCEWithLogitsLoss 로 cls loss.loss[0], loss[4]: bbox loss (iou = box loss, dfl loss )loss[1], loss[2]: keypoints loss ( keypoints, keypoints object )

source : LearnOpenCV

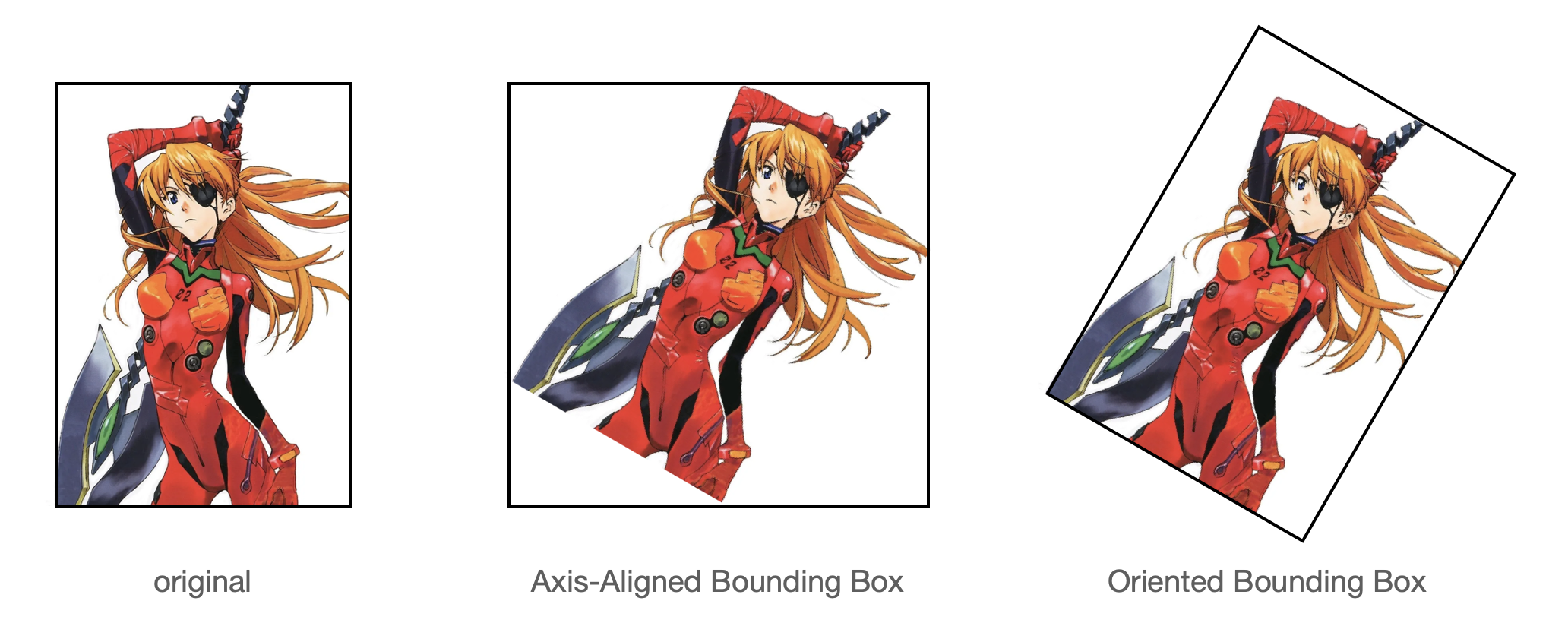

5. obb ( 3 losses )

Oriented Bounding Box

- 그림에서 보면, OBB 는 rotate 가 들어가므로, gt 와 box 정보를 결합하는데, rotate 를 사용

- assigner 는 TaskAlignedAssigner 를 상속 받아서 적용.

- bbox loss 또한 BboxLoss 를 상속 받아서 적용.

- probiou 에서 pred 와 target obb 의 probabilistic IoU 를 계산.

- OBB 들 사이의 prob IoU 를 계산 : https://arxiv.org/pdf/2106.06072v1

- detect 와 같은 형식의 loss

loss[1]: cls loss (varifocal_loss -> BCEWithLogitsLoss)loss[0], loss[2]: bbox loss (iou = box loss, dfl loss)

result

이 코드 구조, 내가 원했던 구조. 내 코드의 간략 리뷰를 하자면..

- base class 상속은 가져왔지만, task 까지 분기로 쳐서 될 줄 알았다.

- 사실 loss function 이나 그런 것만 바꾸면 되는 걸로 했지만.. 하다 보니 섞이는 문제..

- 역시, task 별로 predict, train, valid 로 가져가는게 맞다.

이젠 너무 task 에 대한 weight 이 커져서, scratch 학습은 사실 ImageNet 1k 정도가 maximum 이지 않을 까 한다. ImageNet 21k 를 해봤지만.. 그 비용과 효율보다는 weight 을 가져다 사용하면서, 필요한 새로운 데이터를 튜닝하는것이 ..

Ref

- ultralytics

https://github.com/ultralytics/ultralytics