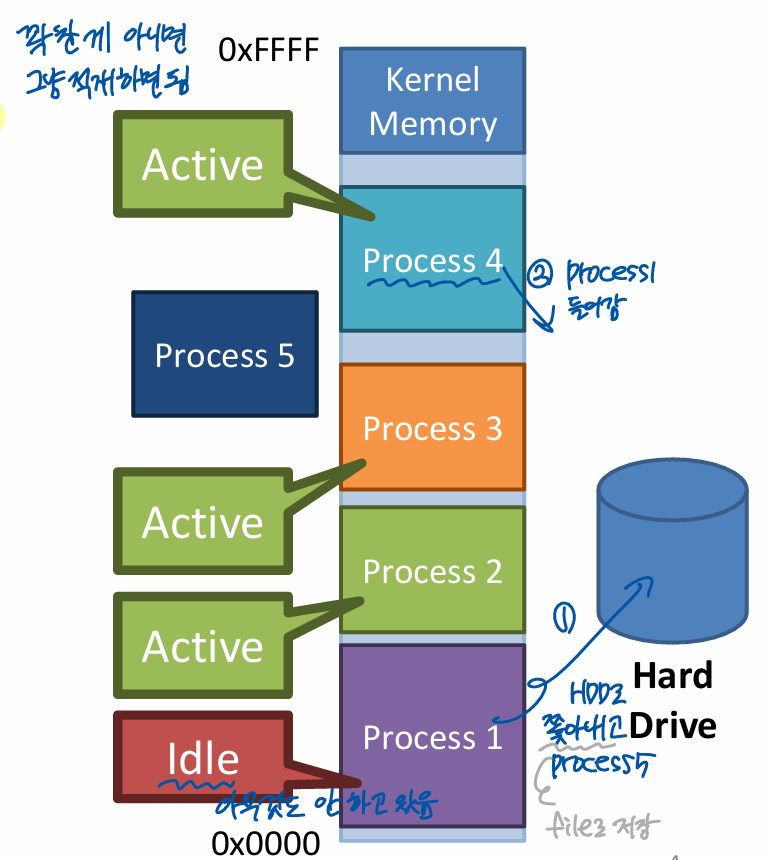

Swapping

Swap frames between physical memory and disk

Load data from swap back into memory on-demand

- If a process access a page that has been swapped out, page fault occurs

- A page-fault occurs and the instruction pauses

- 접근하려는 게 DRAM에 없으면 오류

- The OS can swap the frame back in, insert it into the page table, and restart the instruction

옮겨서 저장 = swap write

Naive E.g.

- Suppose memory is full

- The user opens a new program

- Swap out idle pages to disk

- If the idle pages are accessed, page them back in

Implementing Swap

- Data strctures are needed to track the mapping between pages in memory and pages on disk

- Meta-data about memory pages must be kept

- When should pages be evicted?

- Which page should be evicted?

- OS's page fault handler must be modified

- 기존에는 없는 page에 대해서만 처리했으나, swap을 위해 수정

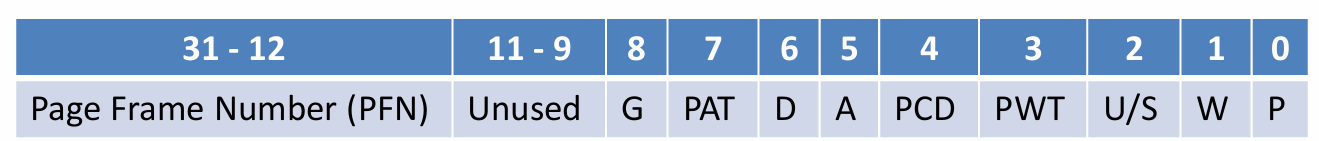

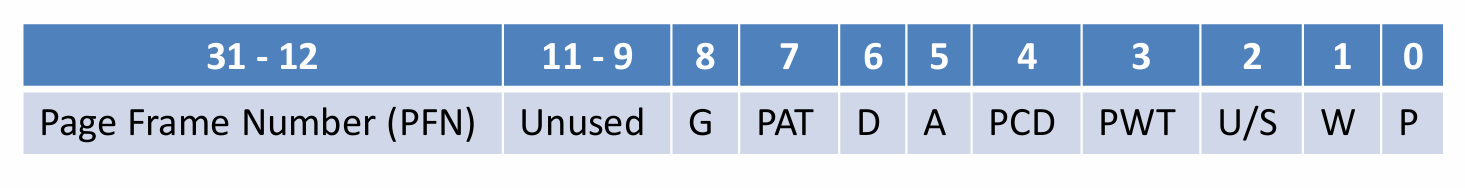

x86 Page Table Entry

- P - Present bit - is in physical memory?

- 1 means the page is in physical memory

- 0 means the page is valid, but swapped to disk

- Attempts to access an invalid page or a page isn't present trigger a page fault

Present bit = 0 or invalid page이면 page fault 발생

둘 다 page fault이지만 present=0이면 memory로 다시 읽어오고, invalid이면 즉시 종료

Handling Page Fault

If the PTE is invalid, the OS still kills the process

If the PTE is valid, but present = 0

- OS swaps the page back into memory

- OS updates the PTE

- TLB도 update

- OS instructs the CPU to retry the instruction

When should the OS evict pages?

On-demand approach

- If a page needs to be created and no free pages exist,

swap a page to disk

Proactive approach

- Most OSes try to maintain a small pool of free pages

- High watermark = threshold(e.g. 8 ~ 90)

- Once memory utilization crosses the high watermark, a background process starts swapping out pages

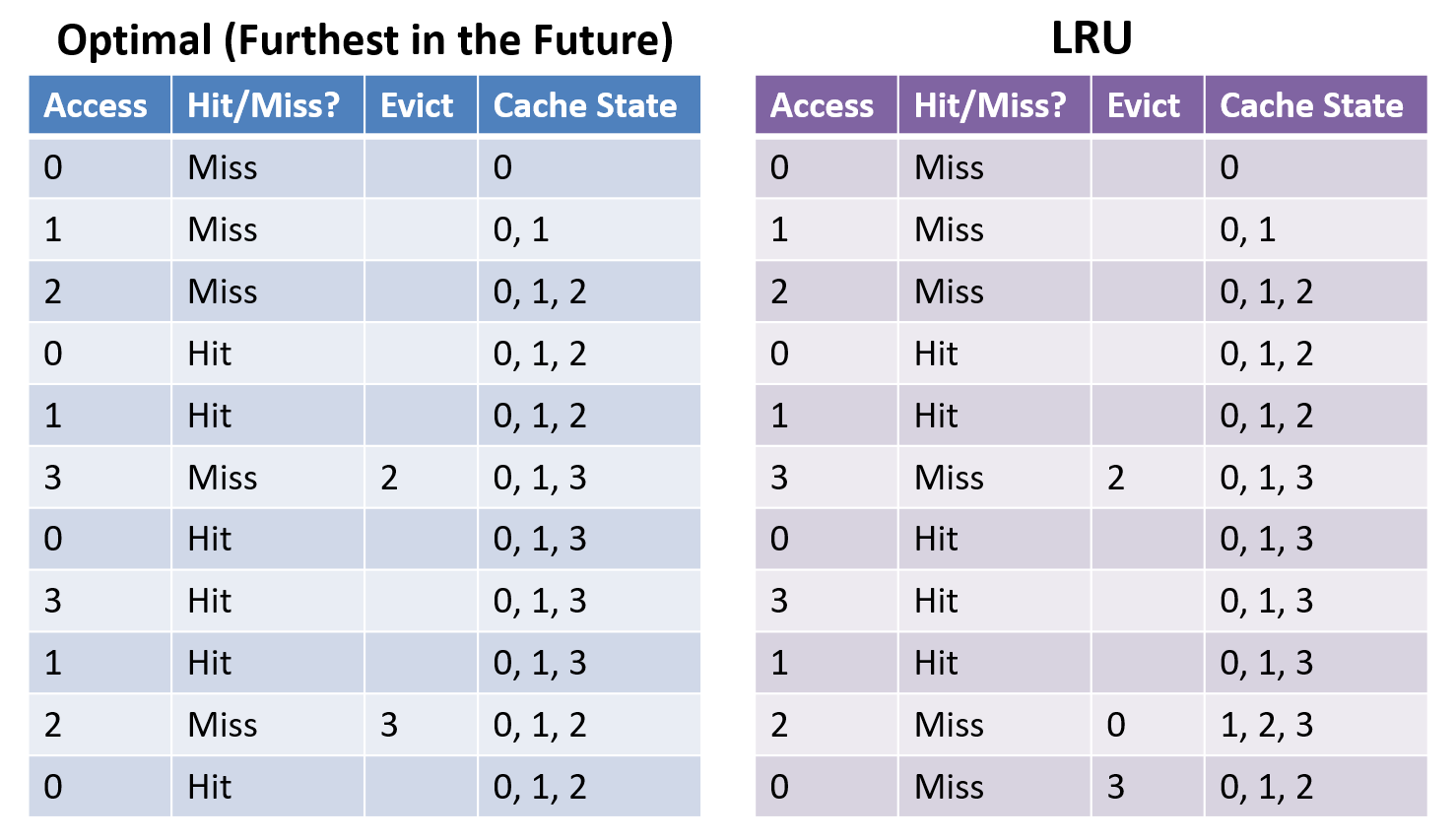

What pages should be evicted?

Page Replacement Policy

- The optimal eviction stragy

- Evict the page that will be accessed furthest in the future

- Unfortunately, impossible to implement (we don't know about the future)

LRU가 optimal의 2배를 넘지 않는 것이 수학적으로 증명됨

cold miss 빼면 1.5배 차이

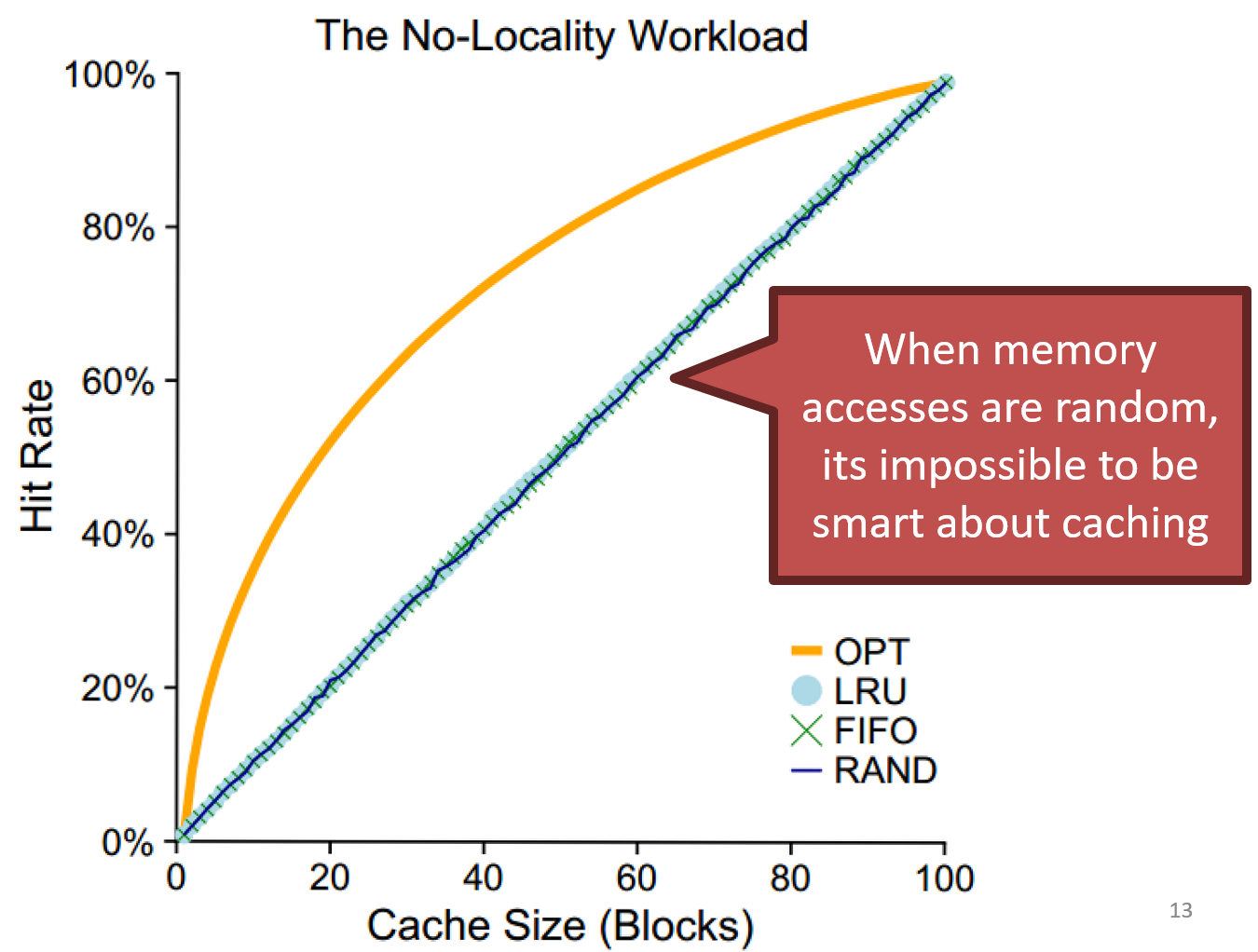

All memory accesses are to 100% random pages

- random => no locality

- only cache size dependent

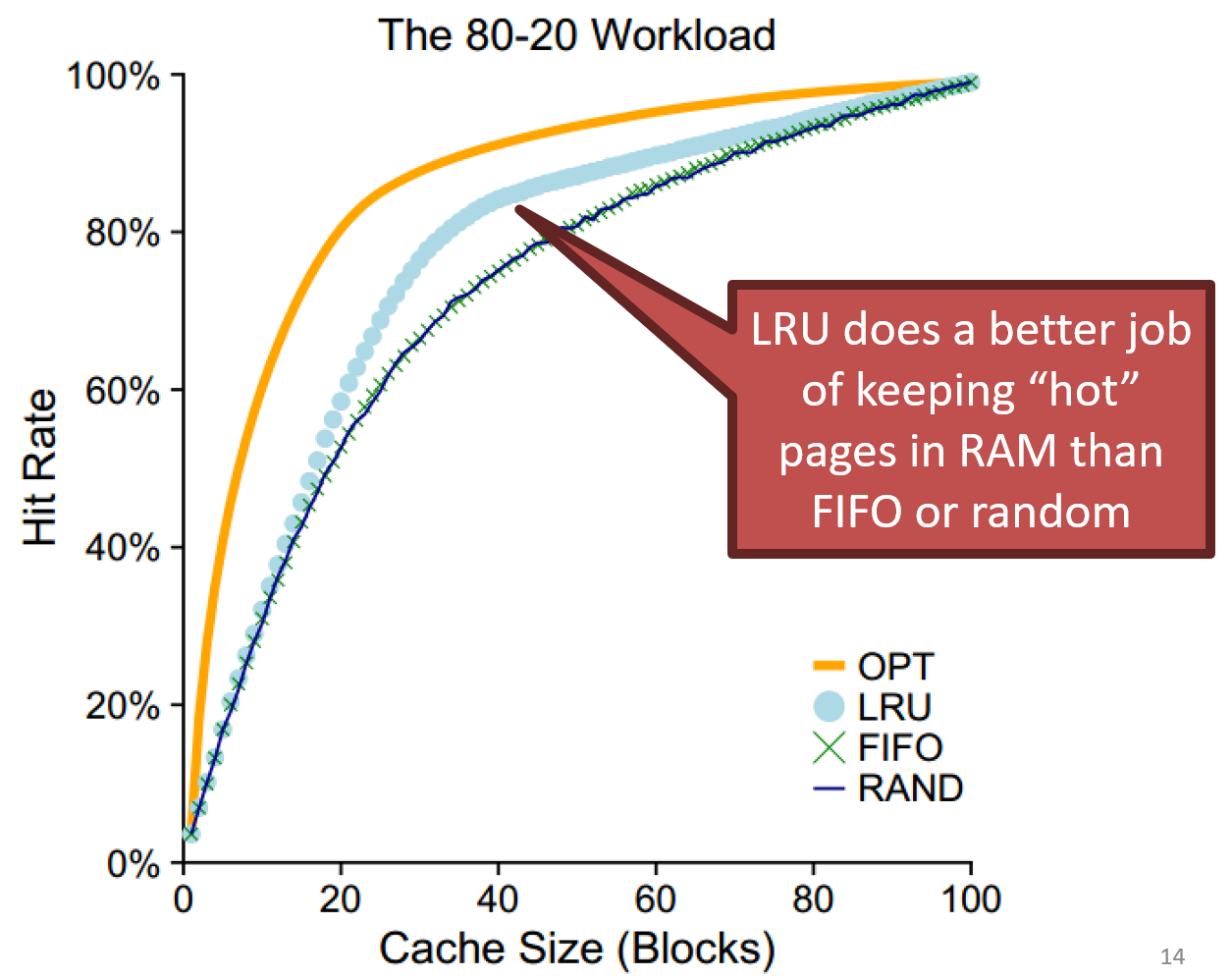

80% memory accesses are for 20% of pages

- LRU가 optimal에 가까움

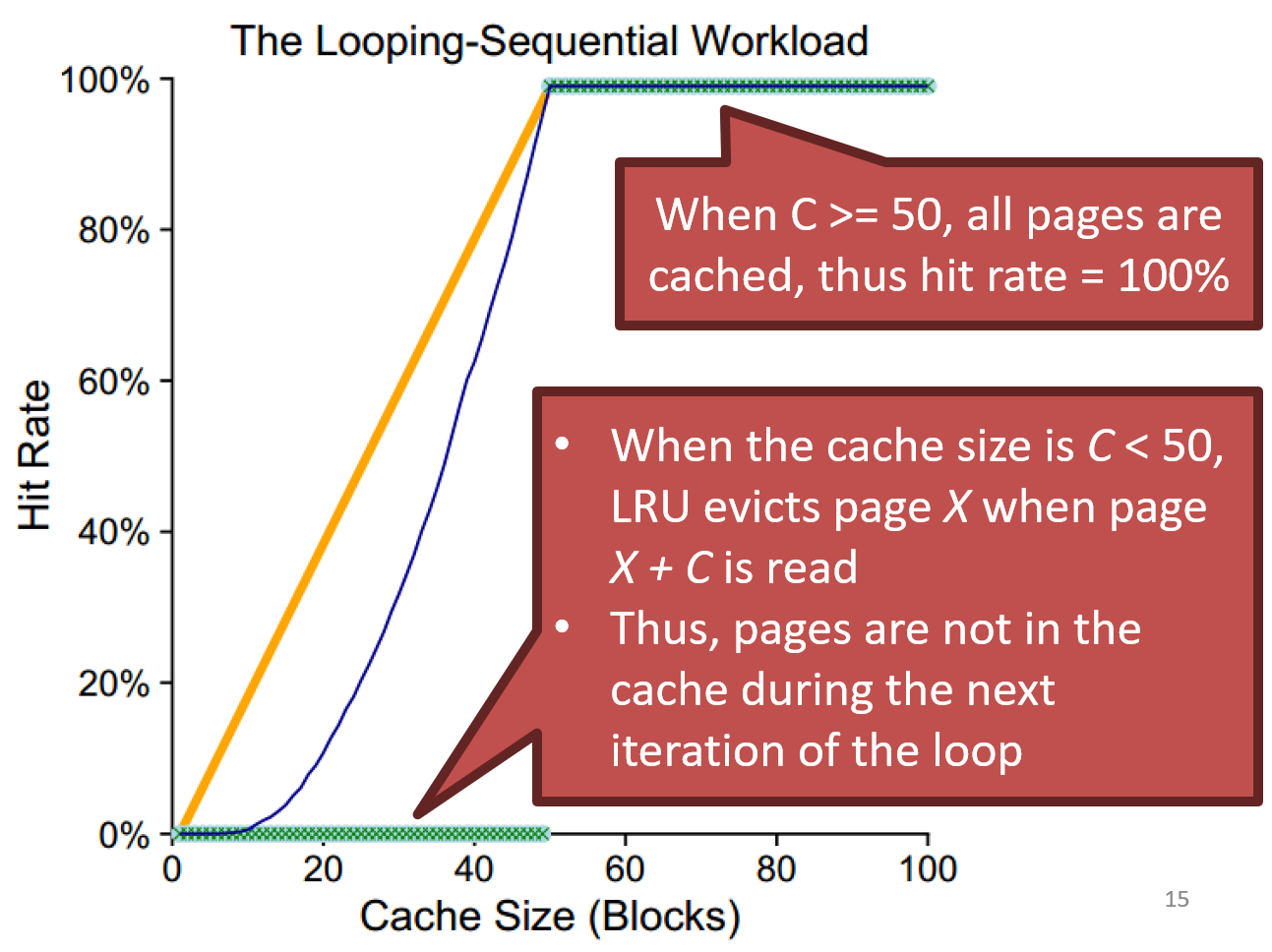

Sequential access in 50 pages, then loops

Implementing historical algorithms

- Record each access to the page table

- Additional overhead to page table lookups

- Approximate LRU with help from the hardware

- A - accessed bit - has been read recently?

- D - dirty bit - has been written recently?

- MMU sets the accessed bit when it reads a PTE

- MMU sets the dirty bit when it writes to the page

- OS may clear these flags as it wishes

Approximating LRU

On eviction, LRU needs to scan all PTEs to determine which have not been used

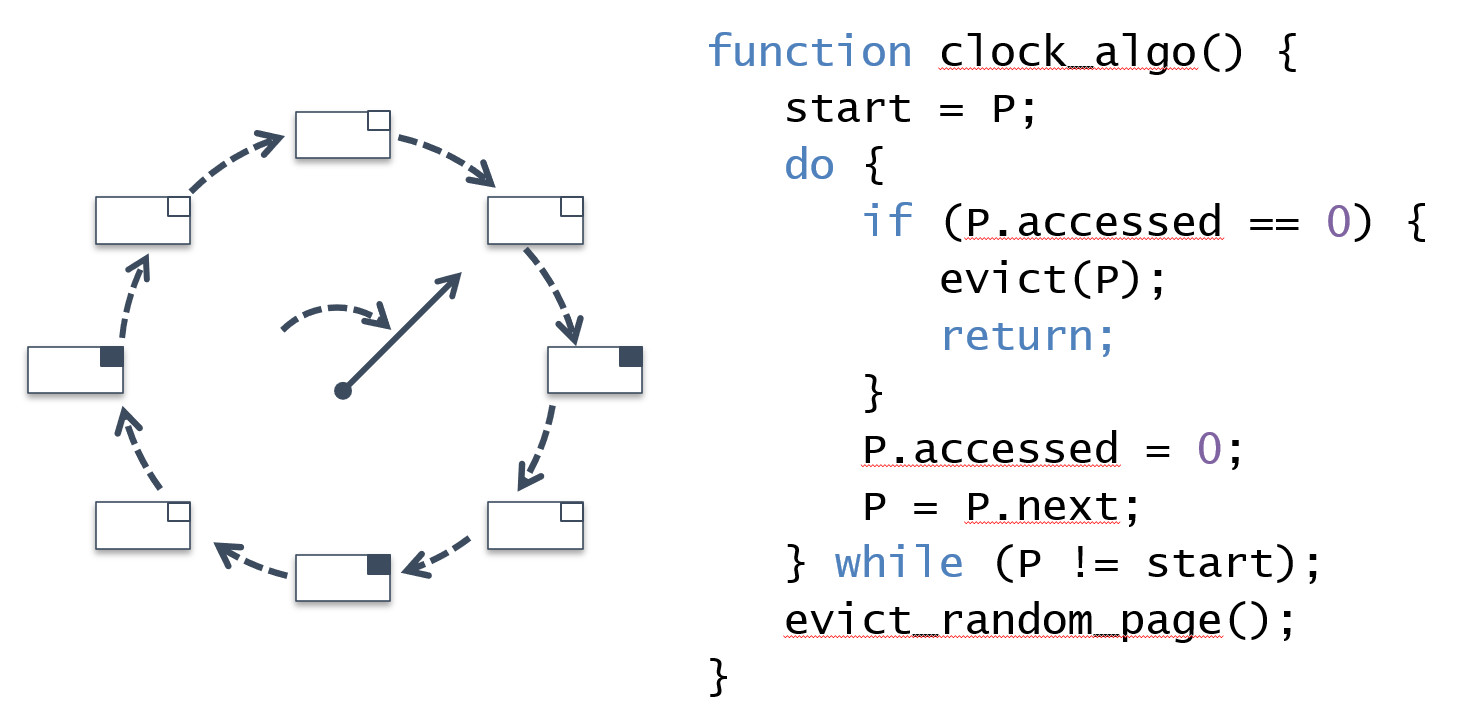

The Clock Algorithm

- If the access bit is 0, evict

- else set the access bit to 0 and pass

한 바퀴 돌면서 os가 accessed bit 0으로 만드는데, 이때 1이 되어있는 건 MMU(hw)가 access로 판단한 것으로 쫓아낼 수 없음

Incorporating the Dirty Bit

- Evict the non-dirty pages first

- When modified pages(Dirty bit is 1) are evicted, Dirty pages must always be written to disk

dirty bit가 다르다는 건 변동사항이 존재한다는 것, disk에 기록해야 함

RAM as cache

- RAM can be viewed as a high-speed cache for your large-but-slow spinning disk storage

- Ideally, you want the most important things to be resident in the cache (RAM)

RAM은 느리지만 HDD에 비하면 high-speed-cache