Introducing MobileNetV2, a DNN for mobile devices with a small model size but strong performance!

https://arxiv.org/abs/1801.04381

https://github.com/tonylins/pytorch-mobilenet-v2

Features of MobileNetV2

Depthwise Separable Convolution

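- A convolution is applied to each channel separately (depthwise), followed by a pointwise (1x1) convolution that combines the channels

- Reduces the amount of computation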
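
To make the saving concrete, here is a small sketch that counts the weights of a standard convolution and of a depthwise separable one. The layer sizes (32 input channels, 64 output channels, 3x3 kernel) and the function names are illustrative assumptions, not values from the post, and bias terms are left out.

```python
def standard_conv_params(c_in, c_out, k):
    """Weights in a k x k standard convolution (bias omitted)."""
    return k * k * c_in * c_out


def depthwise_separable_params(c_in, c_out, k):
    """Weights in a k x k depthwise conv followed by a 1 x 1 pointwise conv."""
    depthwise = k * k * c_in   # one k x k filter per input channel
    pointwise = c_in * c_out   # 1 x 1 conv that mixes the channels
    return depthwise + pointwise


# Illustrative sizes only (assumed for this example).
c_in, c_out, k = 32, 64, 3
standard = standard_conv_params(c_in, c_out, k)
separable = depthwise_separable_params(c_in, c_out, k)
ratio = round(standard / separable, 2)

print(standard, separable, ratio)  # 18432 2336 7.89
```

For these sizes the separable version needs roughly 8x fewer weights, and the multiply-add count shrinks by the same factor because both are applied at every spatial position.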

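Linear Bottleneck

- In the narrow - wide - narrow structure, no ReLU is applied on the narrow layers that connect one block to the next, so the bottleneck output is linear (this is separate from the ReLU6 used inside the block)

- More importantly, the narrow layer is treated as the one that holds the important information, and it is what the skip connection carries

- Because the skip connection runs between narrow layers, it costs less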

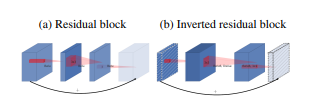

Inverted Residual

- Unlike the original ResNet block (wide - narrow - wide), the block is narrow - wide - narrow

- Uses ReLU6 as the activation
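
As a rough illustration of the two points above, the sketch below writes ReLU6 as a plain clamp to [0, 6] and computes the channel widths of one block. The helper names `relu6` and `block_widths` are hypothetical. The expansion factor 6 and the 24-channel width match one row of the configuration table in the code further down.

```python
def relu6(x):
    """ReLU6 clamps its input to the range [0, 6]."""
    return min(max(x, 0.0), 6.0)


def block_widths(inp, oup, expand_ratio):
    """Channel counts (input, expanded, output) of one inverted residual block."""
    hidden = round(inp * expand_ratio)
    return inp, hidden, oup


clamped = [relu6(v) for v in (-2.0, 3.0, 9.0)]
widths = block_widths(24, 24, 6)

print(clamped)  # [0.0, 3.0, 6.0]
print(widths)   # (24, 144, 24): narrow - wide - narrow
```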

Code analysis

"""

Creates a MobileNetV2 Model as defined in:

Mark Sandler, Andrew Howard, Menglong Zhu, Andrey Zhmoginov, Liang-Chieh Chen. (2018).

MobileNetV2: Inverted Residuals and Linear Bottlenecks

arXiv preprint arXiv:1801.04381.

import from https://github.com/tonylins/pytorch-mobilenet-v2

"""

import torch.nn as nn

import math

__all__ = ['mobilenetv2']

# Make every channel count divisible by `divisor`
# (8 in most of the calls below, 4 when width_mult == 0.1)
def _make_divisible(v, divisor, min_value=None):
    """
    This function is taken from the original tf repo.
    It ensures that all layers have a channel number that is divisible by 8
    It can be seen here:
    https://github.com/tensorflow/models/blob/master/research/slim/nets/mobilenet/mobilenet.py
    :param v: requested channel count (may be fractional after scaling by width_mult)
    :param divisor: the result is a multiple of this value
    :param min_value: lower bound for the result (defaults to divisor)
    :return: adjusted channel count
    """
    if min_value is None:
        min_value = divisor
    new_v = max(min_value, int(v + divisor / 2) // divisor * divisor)
    # Make sure that round down does not go down by more than 10%.
    if new_v < 0.9 * v:
        new_v += divisor
    return new_v

# 3x3 conv -> BatchNorm -> ReLU6
def conv_3x3_bn(inp, oup, stride):
    return nn.Sequential(
        nn.Conv2d(inp, oup, 3, stride, 1, bias=False),
        nn.BatchNorm2d(oup),
        nn.ReLU6(inplace=True)
    )

# 1x1 conv -> BatchNorm -> ReLU6
def conv_1x1_bn(inp, oup):
    return nn.Sequential(
        nn.Conv2d(inp, oup, 1, 1, 0, bias=False),
        nn.BatchNorm2d(oup),
        nn.ReLU6(inplace=True)
    )

# InvertedResidual block in narrow - wide - narrow form
class InvertedResidual(nn.Module):
    def __init__(self, inp, oup, stride, expand_ratio):
        super(InvertedResidual, self).__init__()
        # stride must be 1 or 2
        assert stride in [1, 2]

        # expand the channels by expand_ratio: narrow - wide - narrow
        hidden_dim = round(inp * expand_ratio)
        # the skip connection is used only when the spatial size (stride == 1)
        # and the channel count (inp == oup) are both unchanged
        self.identity = stride == 1 and inp == oup

        # expand_ratio == 1: no channel expansion, so the first pointwise conv is skipped
        if expand_ratio == 1:
            self.conv = nn.Sequential(
                # depthwise: groups == number of channels, so each channel is convolved
                # on its own; the pointwise conv that follows mixes the channels
                nn.Conv2d(hidden_dim, hidden_dim, 3, stride, 1, groups=hidden_dim, bias=False),
                nn.BatchNorm2d(hidden_dim),
                nn.ReLU6(inplace=True),
                # pointwise-linear (no activation after it)
                nn.Conv2d(hidden_dim, oup, 1, 1, 0, bias=False),
                nn.BatchNorm2d(oup),
            )
        # inverted residual conv
        else:
            self.conv = nn.Sequential(
                # pointwise (expansion)
                nn.Conv2d(inp, hidden_dim, 1, 1, 0, bias=False),
                nn.BatchNorm2d(hidden_dim),
                nn.ReLU6(inplace=True),
                # depthwise
                nn.Conv2d(hidden_dim, hidden_dim, 3, stride, 1, groups=hidden_dim, bias=False),
                nn.BatchNorm2d(hidden_dim),
                nn.ReLU6(inplace=True),
                # pointwise-linear (no activation after it)
                nn.Conv2d(hidden_dim, oup, 1, 1, 0, bias=False),
                nn.BatchNorm2d(oup),
            )

    # add the skip connection or not
    def forward(self, x):
        if self.identity:
            return x + self.conv(x)
        else:
            return self.conv(x)
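
To get a feel for where the computation in one block goes, the sketch below counts multiply-adds for the three convolutions of a stride-1 block. It is an estimate under stated assumptions: BatchNorm and activations are ignored, the stride is 1, and the function name `inverted_residual_macs` and the 14x14 feature map are made up for the example.

```python
def inverted_residual_macs(h, w, inp, oup, expand_ratio, k=3):
    """Multiply-adds of one stride-1 inverted residual block on an h x w feature map."""
    hidden = round(inp * expand_ratio)
    # The listing skips the expansion conv when expand_ratio == 1.
    expand = 0 if expand_ratio == 1 else h * w * inp * hidden
    depthwise = h * w * hidden * k * k   # k x k depthwise conv, one filter per channel
    project = h * w * hidden * oup       # 1x1 linear projection back to a narrow layer
    return expand + depthwise + project


# 64 -> 384 -> 64 channels on an assumed 14 x 14 feature map.
macs = inverted_residual_macs(14, 14, 64, 64, 6)
print(macs)  # 10311168
```

Most of the cost sits in the two 1x1 convolutions. The 3x3 depthwise convolution on the wide layer is comparatively cheap, which is what makes the expansion affordable.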

class MobileNetV2(nn.Module):
    def __init__(self, num_classes=1000, width_mult=1.):
        super(MobileNetV2, self).__init__()
        # setting of inverted residual blocks
        self.cfgs = [
            # t, c, n, s => expansion factor, number of output channels, number of repeats, stride
            [1,  16, 1, 1],
            [6,  24, 2, 2],
            [6,  32, 3, 2],
            [6,  64, 4, 2],
            [6,  96, 3, 1],
            [6, 160, 3, 2],
            [6, 320, 1, 1],
        ]

        # building first layer
        # channel count is made divisible by 4 (width_mult == 0.1) or 8 (otherwise)
        input_channel = _make_divisible(32 * width_mult, 4 if width_mult == 0.1 else 8)
        # halves H and W; the number of output channels is input_channel
        layers = [conv_3x3_bn(3, input_channel, 2)]
        # building inverted residual blocks
        block = InvertedResidual
        for t, c, n, s in self.cfgs:
            output_channel = _make_divisible(c * width_mult, 4 if width_mult == 0.1 else 8)
            for i in range(n):
                # only the first block of each stage uses stride s (downsampling);
                # the remaining blocks use stride 1
                layers.append(block(input_channel, output_channel, s if i == 0 else 1, t))
                input_channel = output_channel
        # assemble all layers into one module
        self.features = nn.Sequential(*layers)
        # building last several layers
        output_channel = _make_divisible(1280 * width_mult, 4 if width_mult == 0.1 else 8) if width_mult > 1.0 else 1280
        self.conv = conv_1x1_bn(input_channel, output_channel)
        # pool each channel down to 1x1, leaving one value per channel
        self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
        # fully connected classifier
        self.classifier = nn.Linear(output_channel, num_classes)

        self._initialize_weights()

    def forward(self, x):
        x = self.features(x)
        x = self.conv(x)
        x = self.avgpool(x)
        x = x.view(x.size(0), -1)
        x = self.classifier(x)
        return x

    def _initialize_weights(self):
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                n = m.kernel_size[0] * m.kernel_size[1] * m.out_channels
                m.weight.data.normal_(0, math.sqrt(2. / n))
                if m.bias is not None:
                    m.bias.data.zero_()
            elif isinstance(m, nn.BatchNorm2d):
                m.weight.data.fill_(1)
                m.bias.data.zero_()
            elif isinstance(m, nn.Linear):
                m.weight.data.normal_(0, 0.01)
                m.bias.data.zero_()

def mobilenetv2(**kwargs):
    """
    Constructs a MobileNet V2 model
    """
    return MobileNetV2(**kwargs)
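
To see how the `cfgs` table plays out, the following sketch traces the spatial size through the network without building the model. It assumes a 224x224 input and `width_mult=1.0`, neither of which is fixed by the listing, and the helper `conv_out_size` is a hypothetical name for the usual output-size formula of a 3x3 convolution with padding 1.

```python
# Same (t, c, n, s) rows as self.cfgs in the listing.
cfgs = [
    [1, 16, 1, 1],
    [6, 24, 2, 2],
    [6, 32, 3, 2],
    [6, 64, 4, 2],
    [6, 96, 3, 1],
    [6, 160, 3, 2],
    [6, 320, 1, 1],
]


def conv_out_size(size, stride, kernel=3, padding=1):
    """Output height/width of a convolution for a square input."""
    return (size + 2 * padding - kernel) // stride + 1


size = conv_out_size(224, 2)  # first conv_3x3_bn layer has stride 2
schedule = []
for t, c, n, s in cfgs:
    # Only the first block of a stage uses stride s; the rest keep the size.
    size = conv_out_size(size, s)
    schedule.append((c, n, size))

total_blocks = sum(n for _, _, n, _ in cfgs)

for channels, repeats, out_size in schedule:
    print(f"{repeats} block(s) -> {channels} channels at {out_size}x{out_size}")
print(total_blocks)  # 17
```

Under these assumptions the feature map shrinks from 112x112 after the first layer to 7x7 at the end of the 17 inverted residual blocks, before the final 1x1 convolution and the average pooling.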