Signatures of Criticality in Efficient Coding Networks

This paper studies two big ideas in neuroscience: criticality (the hypothesis that the brain operates near a critical state) and efficient coding (the hypothesis that neurons encode their inputs optimally). Using a network of leaky integrate-and-fire (LIF) neurons, the authors test whether optimizing for efficient coding naturally produces signatures of criticality, such as power-law distributions of neuronal avalanche sizes and durations.
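To make the efficient-coding side concrete, here is a minimal Python sketch of a toy spike-coding network. It assumes a scheme the summary does not spell out: a one-dimensional signal is read out as a leaky sum of spikes, each neuron's "voltage" is its decoding weight times the current coding error, and, without noise, a neuron fires only when its spike would reduce the squared error. The function name `run_spike_coding_net`, the 1 Hz test signal, and every parameter value are hypothetical. Because it allows at most one spike per time step, this toy cannot over-synchronize, so it should not be expected to reproduce the paper's noise results; it only shows how a readout, a noise knob, and the MSE fit together.

```python
import math
import random


def run_spike_coding_net(noise_sd: float, n_neurons: int = 20, weight: float = 0.1,
                         leak: float = 10.0, dt: float = 1e-3, t_end: float = 2.0,
                         seed: int = 0) -> float:
    """Simulate a toy greedy spike-coding network and return the readout MSE."""
    rng = random.Random(seed)
    # Hypothetical decoding weights: half the neurons push the readout up, half down
    weights = [weight if i % 2 == 0 else -weight for i in range(n_neurons)]
    # Without noise, a spike of weight w lowers (x - x_hat)**2 only if w * err > w**2 / 2
    threshold = weight ** 2 / 2.0
    x_hat = 0.0
    sq_err = 0.0
    n_steps = int(round(t_end / dt))
    for step in range(n_steps):
        x = math.sin(2.0 * math.pi * step * dt)  # hypothetical 1 Hz input signal
        x_hat -= dt * leak * x_hat  # the readout leaks toward zero between spikes
        err = x - x_hat
        best_i, best_margin = -1, 0.0
        for i, w in enumerate(weights):
            # "Voltage" = coding error projected onto the neuron's weight, plus noise
            v = w * err + (rng.gauss(0.0, noise_sd) if noise_sd > 0 else 0.0)
            if v - threshold > best_margin:
                best_i, best_margin = i, v - threshold
        if best_i >= 0:
            x_hat += weights[best_i]  # at most one spike per time step
        sq_err += (x - x_hat) ** 2
    return sq_err / n_steps


if __name__ == "__main__":
    for sd in (0.0, 0.01, 0.1, 1.0):
        print(f"noise_sd={sd}: MSE={run_spike_coding_net(sd):.4f}")
```

The threshold `weight**2 / 2` is the point at which firing starts to lower the squared error; adding noise to the voltages lets neurons fire when they should not (or stay silent when they should fire), which is the knob the paper varies.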

Why Avalanches?

- Avalanches: cascades of spikes that spread through a network, like a chain reaction.

- Avalanches are a hallmark of criticality: if their sizes and durations follow power-law distributions, this suggests the network is in a critical state, balanced between order (over-synchronization) and chaos (random firing).
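The avalanche definition above can be turned into a short procedure: bin the population's spike times, then treat each run of consecutive non-empty bins bounded by empty bins as one avalanche, whose size is its total spike count and whose duration is its number of bins. The sketch below assumes that common convention; the bin width is a free parameter (it is often tied to the mean interval between successive population spikes), and the summary does not say which width the paper uses. The function names are hypothetical.

```python
import math
from typing import List, Sequence, Tuple


def bin_spikes(spike_times: Sequence[float], bin_width: float, t_max: float) -> List[int]:
    """Count population spikes in consecutive bins of width bin_width on [0, t_max)."""
    n_bins = int(math.ceil(t_max / bin_width))
    counts = [0] * n_bins
    for t in spike_times:
        idx = int(t // bin_width)
        if 0 <= idx < n_bins:
            counts[idx] += 1
    return counts


def find_avalanches(counts: Sequence[int]) -> List[Tuple[int, int]]:
    """Return (size, duration) for each avalanche in a binned spike-count series.

    An avalanche is a maximal run of non-empty bins bounded by empty bins on
    both sides. Runs that touch the start or end of the recording are dropped
    because they may be truncated.
    """
    avalanches = []
    size = duration = 0
    seen_empty = False  # becomes True once an empty bin has been seen
    for c in counts:
        if c > 0:
            size += c
            duration += 1
        else:
            if duration > 0 and seen_empty:
                avalanches.append((size, duration))
            size = duration = 0
            seen_empty = True
    return avalanches


if __name__ == "__main__":
    counts = bin_spikes([0.5, 1.2, 1.7, 3.0, 5.1, 5.4, 6.2], bin_width=1.0, t_max=8.0)
    print(counts)                   # [1, 2, 0, 1, 0, 2, 1, 0]
    print(find_avalanches(counts))  # [(1, 1), (3, 2)]
```

In the example, the leading run `[1, 2]` is discarded because it starts at the first bin, so only the two fully bounded runs are reported.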

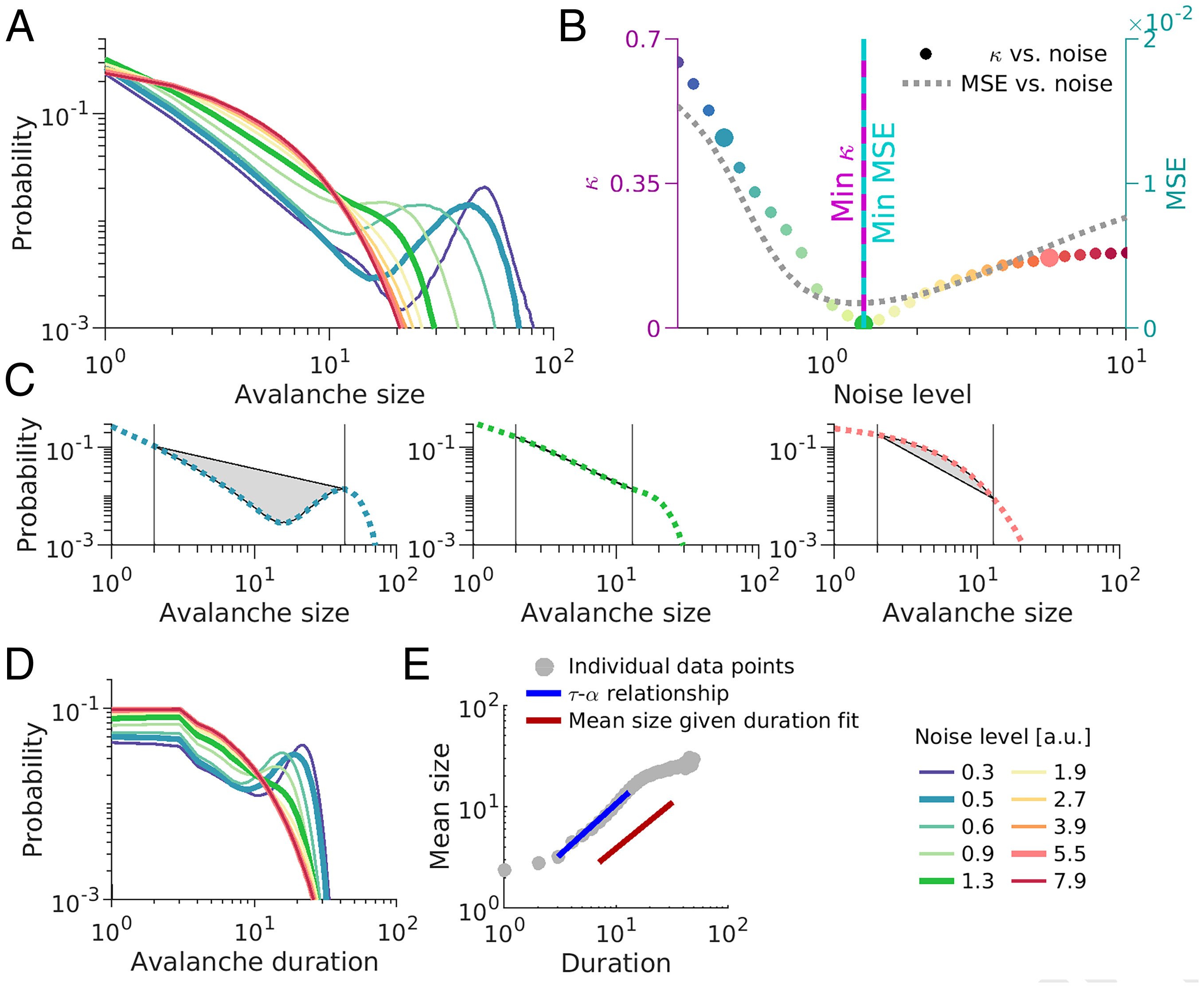

Noise Tunes Criticality and Coding

- This section examines how the noise level affects avalanche size distributions and coding performance (mean squared error, MSE).

- Low noise (blue line): neurons over-synchronize, producing an excess of large avalanches (a "bump" in the tail of the distribution). This is a supercritical state.

- High noise (red line): activity fragments into small avalanches whose size distribution decays exponentially. This is a subcritical state.

- Moderate noise (green line): avalanche sizes follow a power law (a straight line on log-log axes). This is a critical state.

- The noise level at which avalanches are most scale-free (i.e., where the deviation from a power law is lowest) is also the noise level at which coding error (MSE) is minimized.

- In other words, criticality and efficient coding align: the network codes best when it is closest to the critical state.
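One way to quantify "most scale-free" is to fit a power law to the avalanche sizes and measure how far the data are from that fit. The sketch below uses the continuous maximum-likelihood estimate of the exponent, alpha = 1 + n / sum(ln(x_i / x_min)), together with a Kolmogorov-Smirnov (KS) distance between the empirical and fitted cumulative distributions. This is an assumed, generic measure, not necessarily the one the paper uses; avalanche sizes are integers, so a careful analysis would use a discrete estimator and choose `x_min` from the data. The function names are hypothetical.

```python
import math
import random
from typing import List, Sequence, Tuple


def fit_power_law(samples: Sequence[float], x_min: float) -> Tuple[float, float]:
    """Fit p(x) ~ x**(-alpha) for x >= x_min by continuous maximum likelihood.

    Returns (alpha, ks), where ks is the largest gap between the empirical CDF
    of the tail and the fitted power-law CDF.
    """
    tail = sorted(x for x in samples if x >= x_min)
    n = len(tail)
    log_sum = sum(math.log(x / x_min) for x in tail)
    if n < 2 or log_sum == 0.0:
        raise ValueError("need at least two distinct samples >= x_min")
    alpha = 1.0 + n / log_sum
    ks = 0.0
    for i, x in enumerate(tail):
        # Fitted CDF of a continuous power law with lower cutoff x_min
        fitted_cdf = 1.0 - (x / x_min) ** (1.0 - alpha)
        # The empirical CDF jumps from i/n to (i+1)/n at x; check both sides
        ks = max(ks, abs((i + 1) / n - fitted_cdf), abs(i / n - fitted_cdf))
    return alpha, ks


def sample_power_law(n: int, alpha: float, x_min: float, rng: random.Random) -> List[float]:
    """Draw n continuous power-law samples by inverse-transform sampling."""
    return [x_min * (1.0 - rng.random()) ** (-1.0 / (alpha - 1.0)) for _ in range(n)]


if __name__ == "__main__":
    rng = random.Random(1)
    power_law_data = sample_power_law(20000, 1.5, 1.0, rng)
    # Exponential tail above x_min, loosely mimicking the subcritical case
    exponential_data = [1.0 + rng.expovariate(1.0) for _ in range(20000)]
    for label, data in (("power law", power_law_data), ("exponential", exponential_data)):
        alpha, ks = fit_power_law(data, x_min=1.0)
        print(f"{label}: alpha = {alpha:.3f}, KS distance = {ks:.3f}")
```

A power-law sample should recover an exponent close to the one it was drawn with and a small KS distance, while exponentially decaying data gives a noticeably larger KS distance, which is the sense in which the moderate-noise curve is "more scale-free" than the high-noise one.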

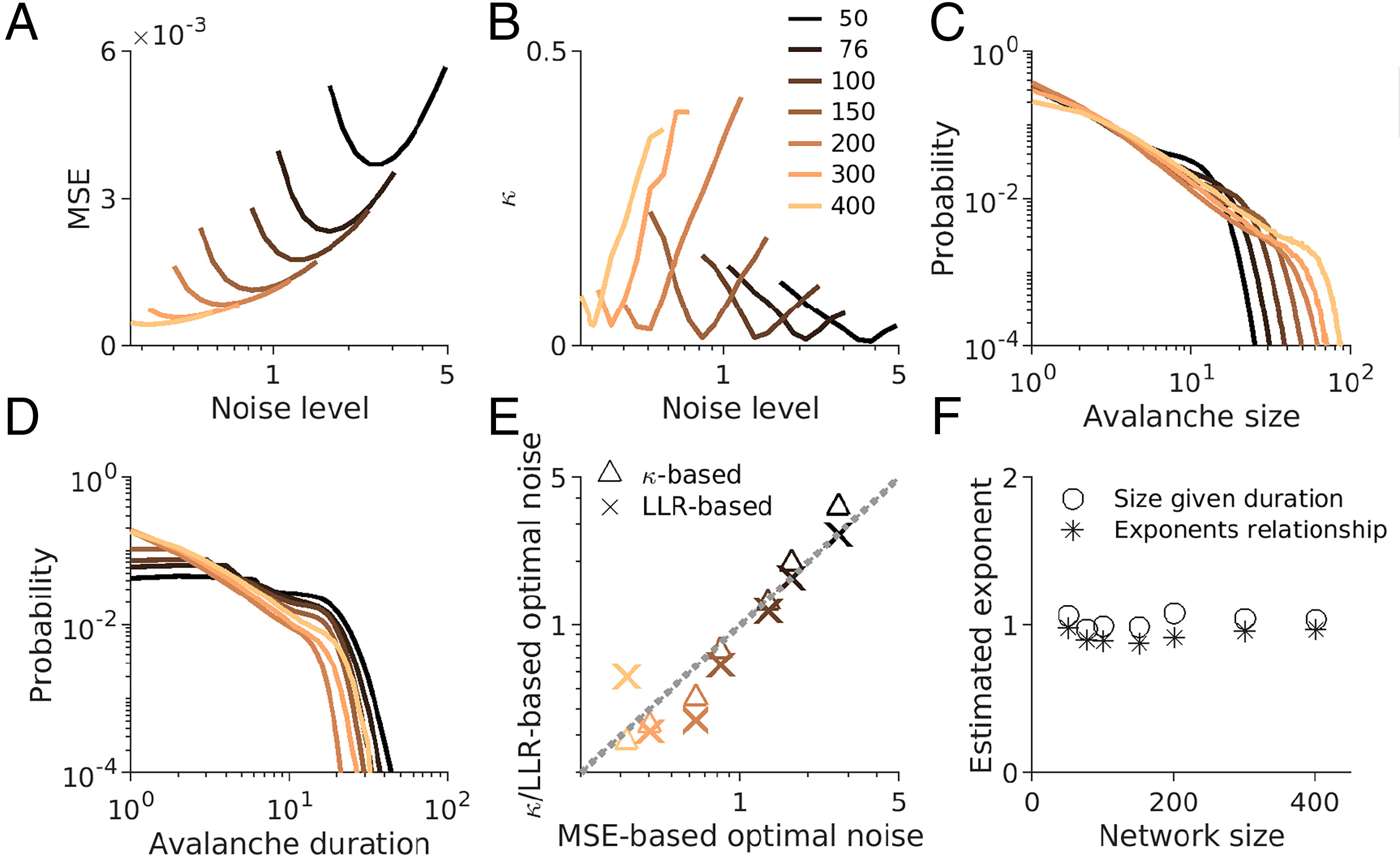

Robust Across Network Sizes

- Tests whether the results of Fig. 1 hold across network sizes from 50 to 400 neurons.

- MSE (Fig. 2A) and the measure of deviation from a power law (Fig. 2B) show similar nonmonotonic dependence on noise, regardless of network size.

Discussion

- The study suggests that criticality and efficient coding aren’t separate theories but deeply connected.

- Excessive synchronization reduces the diversity of firing patterns; this could be interpreted as the network becoming trapped in a single attractor, although that explanation remains speculative.