Image classification: a core computer vision task

input: an image → output: a label assigning the image to one of a fixed set of categories

fine-grained categories

an image classifier

no obvious way to hard-code the algorithm for recognizing a cat, or other classes.

machine learning: data-driven approach

- collect a dataset of images and labels

- use machine learning to train a classifier

- evaluate the classifier on new images

def train(images, labels):
    # Machine Learning
    return model

def predict(model, test_images):
    # Use model to predict labels
    return test_labels

Dataset

MNIST, CIFAR-10, CIFAR-100, ImageNet

ImageNet: the performance metric is top-5 accuracy: the algorithm predicts 5 labels for each image, and one of them needs to be right
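A minimal sketch of how top-k accuracy could be computed. It assumes the classifier outputs one score per class for each image; the function name `top_k_accuracy` and the toy scores are made up for illustration.

```python
def top_k_accuracy(scores, labels, k=5):
    """Fraction of images whose true label is among the k highest-scoring classes.

    scores: one list of per-class scores per image (hypothetical model output)
    labels: true class index for each image
    """
    correct = 0
    for class_scores, label in zip(scores, labels):
        # Rank class indices from highest to lowest score and keep the top k.
        ranked = sorted(range(len(class_scores)),
                        key=lambda c: class_scores[c], reverse=True)
        if label in ranked[:k]:
            correct += 1
    return correct / len(labels)


# Toy example: 3 images, 4 classes, evaluated with k=2.
scores = [
    [0.10, 0.60, 0.25, 0.05],  # top-2 classes: 1, 2
    [0.50, 0.10, 0.30, 0.05],  # top-2 classes: 0, 2
    [0.30, 0.15, 0.05, 0.50],  # top-2 classes: 3, 0
]
labels = [2, 1, 3]
print(top_k_accuracy(scores, labels, k=2))  # 2 of 3 images count as correct
```

With k=1 this reduces to ordinary accuracy.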

Omniglot

1623 categories: characters from 50 different alphabets

20 images per category

Meant to test few-shot learning

Nearest Neighbor

def train(images, labels):
    # Memorize all data and labels
    return model

def predict(model, test_images):
    # Predict the label of the most similar training image
    return test_labels
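The two steps above could look roughly like this in plain Python. This is a sketch, not a reference implementation: it assumes each image is a flat list of pixel values and uses L1 distance as the similarity measure; the class name `NearestNeighbor` and the toy data are hypothetical.

```python
class NearestNeighbor:
    """1-nearest-neighbor classifier over flattened images."""

    def train(self, images, labels):
        # "Training" only memorizes the data and labels.
        self.images = images
        self.labels = labels

    def predict(self, test_images):
        predictions = []
        for test in test_images:
            # L1 distance from this test image to every stored training image.
            distances = [
                sum(abs(a - b) for a, b in zip(test, train))
                for train in self.images
            ]
            # Copy the label of the closest training image.
            nearest = distances.index(min(distances))
            predictions.append(self.labels[nearest])
        return predictions


# Toy 4-pixel "images" (made up for illustration).
train_images = [[0, 0, 0, 0], [255, 255, 255, 255], [0, 255, 0, 255]]
train_labels = ["dark", "bright", "striped"]

model = NearestNeighbor()
model.train(train_images, train_labels)
print(model.predict([[10, 5, 0, 20], [250, 240, 255, 230]]))  # ['dark', 'bright']
```

Training is cheap, but every prediction scans the whole training set, so the cost sits at test time.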

Distance Metric to compare images

L1 distance (Manhattan)

L2 distance (Euclidean)

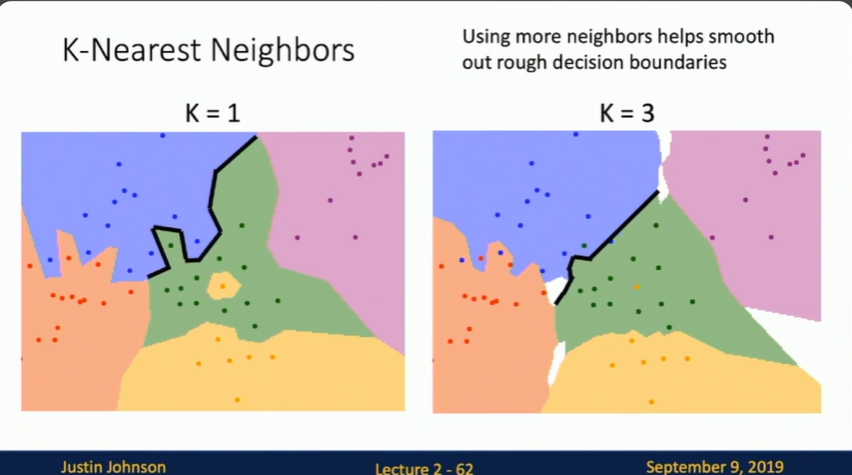
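For concreteness, a small sketch of both metrics, assuming images are flattened into equal-length lists of pixel values (the function names are illustrative). L1 sums the absolute per-pixel differences; L2 is the square root of the summed squared differences.

```python
import math


def l1_distance(a, b):
    # Manhattan distance: sum of absolute per-pixel differences.
    return sum(abs(x - y) for x, y in zip(a, b))


def l2_distance(a, b):
    # Euclidean distance: square root of the sum of squared differences.
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


image_a = [0, 3, 0, 0]
image_b = [4, 0, 0, 0]
print(l1_distance(image_a, image_b))  # 7
print(l2_distance(image_a, image_b))  # 5.0
```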

k-Nearest Neighbors

tf-idf similarity

robust, and can be applied to various types of data
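A sketch of k-nearest neighbors with a majority vote, under the same assumption that inputs are flat lists of numbers. The function name `knn_predict`, the choice of squared L2 distance, and the toy data are illustrative, not taken from the notes.

```python
from collections import Counter


def knn_predict(train_images, train_labels, test_image, k=3):
    """Predict a label by majority vote among the k closest training images."""
    # Squared L2 distance gives the same neighbor ranking as L2 itself.
    distances = [
        sum((a - b) ** 2 for a, b in zip(test_image, train))
        for train in train_images
    ]
    # Indices of the k smallest distances.
    nearest = sorted(range(len(distances)), key=lambda i: distances[i])[:k]
    votes = Counter(train_labels[i] for i in nearest)
    return votes.most_common(1)[0][0]


train_images = [[0, 0], [1, 1], [2, 2], [9, 9], [10, 10]]
train_labels = ["cat", "dog", "dog", "cat", "cat"]
query = [0.4, 0.4]

print(knn_predict(train_images, train_labels, query, k=1))  # cat
print(knn_predict(train_images, train_labels, query, k=3))  # dog
```

The same query gets a different label for k=1 and k=3, which is why k has to be chosen rather than assumed.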

hyperparameters

choices about our learning algorithm that we don’t learn from the training data; instead we set them at the start of the learning process

very problem-dependent.

how to set hyperparameters

- choose hyperparameters that work best on the data → K=1 always works perfectly on training data

- split data into train and test, choose hyperparameters that work best on test data → no idea how the algorithm will perform on new data

- split data into train, val, and test; choose hyperparameters on val and evaluate on test → Better!

- cross-validation: split data into folds, try each fold as validation and average the results.
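The cross-validation option could be sketched as follows. Everything here is an assumption made for illustration: the 1-D toy data, the helper names (`knn_predict`, `accuracy`, `cross_validate`), the candidate values of k, and the interleaved way folds are formed.

```python
from collections import Counter


def knn_predict(train_x, train_y, x, k):
    # 1-D toy features, so distance is just the absolute difference.
    nearest = sorted(range(len(train_x)), key=lambda i: abs(train_x[i] - x))[:k]
    return Counter(train_y[i] for i in nearest).most_common(1)[0][0]


def accuracy(train_x, train_y, eval_x, eval_y, k):
    hits = sum(knn_predict(train_x, train_y, x, k) == y
               for x, y in zip(eval_x, eval_y))
    return hits / len(eval_x)


def cross_validate(xs, ys, k, num_folds=3):
    """Average validation accuracy, using each fold once as validation."""
    scores = []
    for fold in range(num_folds):
        # Hold out every num_folds-th example, starting at index `fold`.
        val_idx = set(range(fold, len(xs), num_folds))
        train_x = [x for i, x in enumerate(xs) if i not in val_idx]
        train_y = [y for i, y in enumerate(ys) if i not in val_idx]
        val_x = [x for i, x in enumerate(xs) if i in val_idx]
        val_y = [y for i, y in enumerate(ys) if i in val_idx]
        scores.append(accuracy(train_x, train_y, val_x, val_y, k))
    return sum(scores) / num_folds


# Made-up data. The test split is set aside and used only once, at the end.
dev_x = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 6.0, 6.5, 7.0, 7.5, 8.0, 8.5]
dev_y = ["a", "a", "a", "a", "a", "b", "b", "b", "b", "a", "b", "b"]
test_x = [1.2, 2.8, 6.2, 8.1]
test_y = ["a", "a", "b", "b"]

candidates = [1, 3, 5]
best_k = max(candidates, key=lambda k: cross_validate(dev_x, dev_y, k))
test_accuracy = accuracy(dev_x, dev_y, test_x, test_y, best_k)

# K=1 scored on its own training data: each point is its own nearest neighbor.
train_accuracy_k1 = accuracy(dev_x, dev_y, dev_x, dev_y, 1)
print(best_k, test_accuracy, train_accuracy_k1)
```

The last line of the computation illustrates the first bullet: scoring K=1 on the data it memorized says nothing about new data, so selection happens on held-out folds and the test set is touched once.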

curse of dimensionality

curse of dimensionality: for uniform coverage of space, the number of training points needed grows exponentially with dimension
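A rough illustration of the growth, assuming "uniform coverage" means a regular grid with the same number of points along every axis (an assumption for this sketch; the function name is made up):

```python
def points_for_uniform_coverage(points_per_axis, dimensions):
    # A regular grid with the same resolution along every axis.
    return points_per_axis ** dimensions


for d in (1, 2, 3, 10):
    print(d, points_for_uniform_coverage(4, d))
# 1 4
# 2 16
# 3 64
# 10 1048576

# Even with only 2 values per pixel, a 32x32 image has 1024 dimensions.
binary_32x32 = points_for_uniform_coverage(2, 32 * 32)
print(len(str(binary_32x32)))  # number of decimal digits in 2**1024
```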

K-Nearest Neighbor on raw pixels is seldom used

- very slow at test time

- distance metrics on pixels are not informative

nearest neighbor with ConvNet features works well!

image captioning with nearest neighbor approach works well