import torch
import torchvision.datasets as dsets
import torchvision.transforms as transforms
import matplotlib.pyplot as plt
import random

# Use the GPU when one is available.
device = 'cuda' if torch.cuda.is_available() else 'cpu'

# Fix the random seeds so that repeated runs behave the same way.
random.seed(777)
torch.manual_seed(777)
if device == 'cuda':
    torch.cuda.manual_seed_all(777)

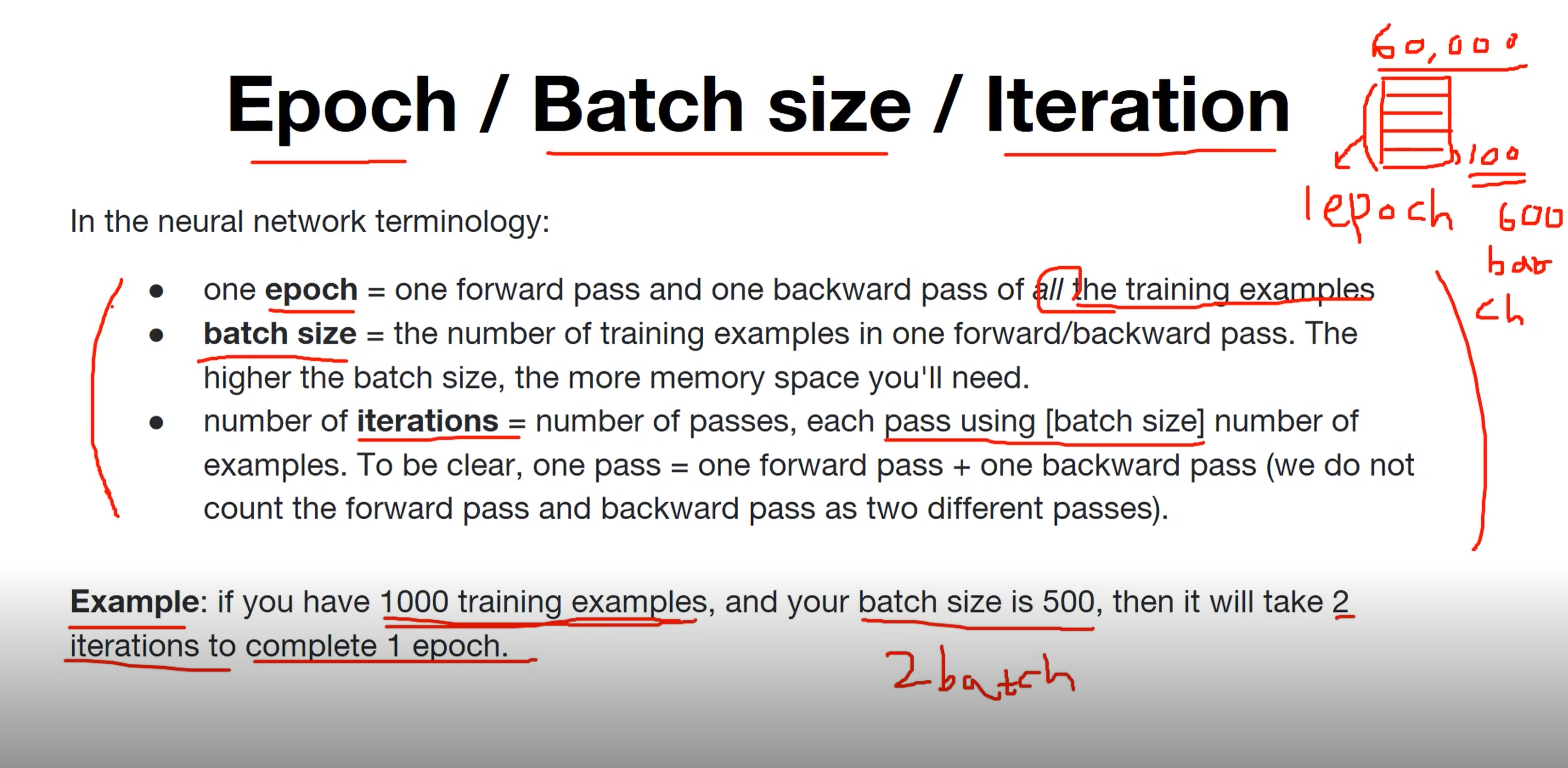

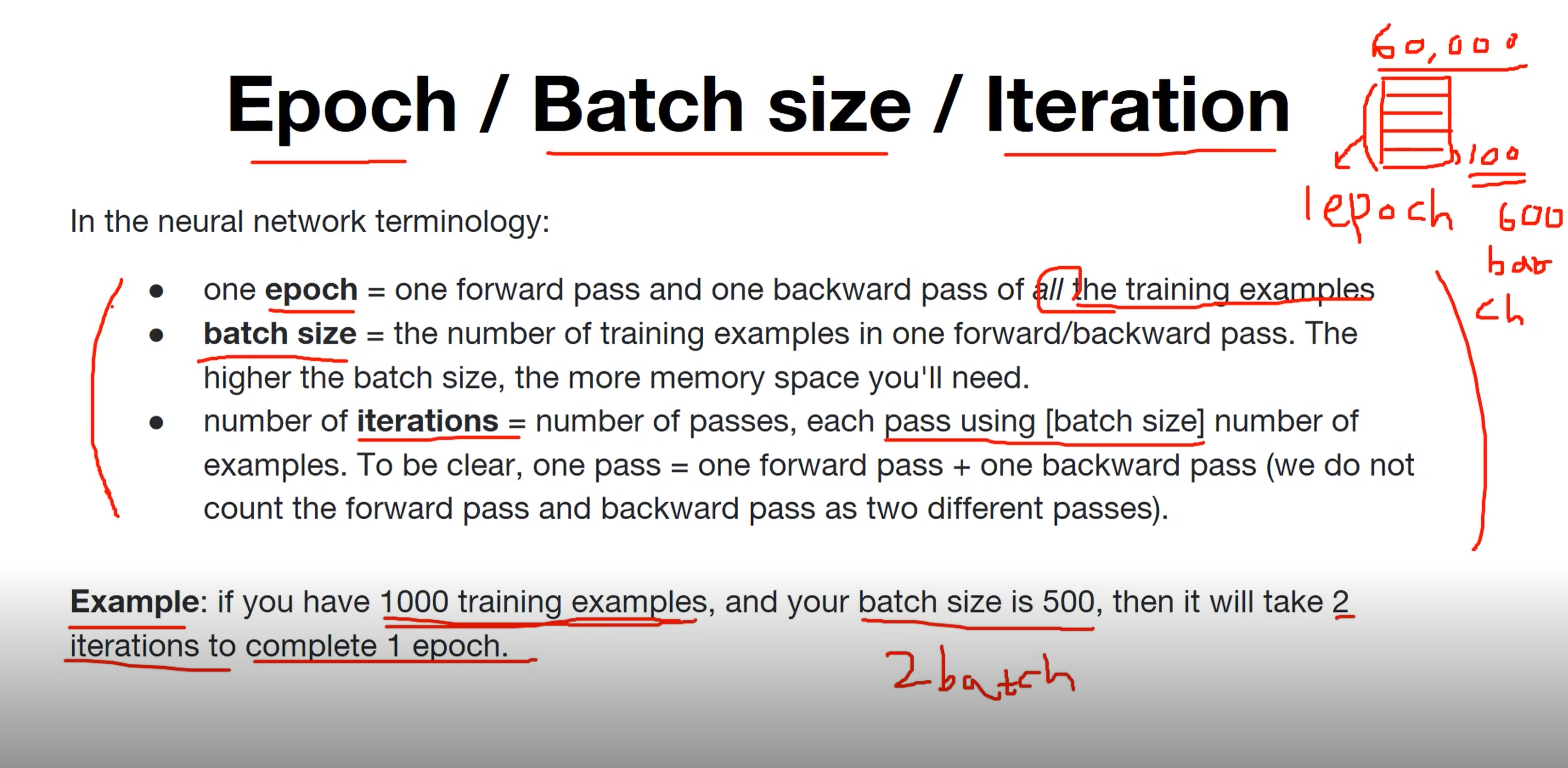

# Hyperparameters
training_epochs = 15
batch_size = 100

# MNIST dataset; ToTensor() converts each image to a float tensor in [0, 1]
mnist_train = dsets.MNIST(root='MNIST_data/',
                          train=True,
                          transform=transforms.ToTensor(),
                          download=True)

mnist_test = dsets.MNIST(root='MNIST_data/',
                         train=False,
                         transform=transforms.ToTensor(),
                         download=True)

# Data loader: reshuffle every epoch and drop the final incomplete batch
data_loader = torch.utils.data.DataLoader(dataset=mnist_train,
                                          batch_size=batch_size,
                                          shuffle=True,
                                          drop_last=True)
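
The effect of `drop_last` on the number of batches, and therefore on `len(data_loader)`, can be checked on a small synthetic dataset. This is a minimal sketch: the 1050-sample `TensorDataset` and the names `keep_loader` and `drop_loader` are stand-ins chosen for illustration, not part of the original code.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

# Synthetic stand-in for MNIST: 1050 fake "images" of 784 values with integer labels.
features = torch.zeros(1050, 784)
labels = torch.zeros(1050, dtype=torch.long)
dataset = TensorDataset(features, labels)

keep_loader = DataLoader(dataset, batch_size=100, shuffle=True, drop_last=False)
drop_loader = DataLoader(dataset, batch_size=100, shuffle=True, drop_last=True)

num_keep = len(keep_loader)  # 11 batches, the last one holding only 50 samples
num_drop = len(drop_loader)  # 10 batches, each holding exactly 100 samples
last_keep_size = [x.size(0) for x, _ in keep_loader][-1]

print(num_keep, num_drop, last_keep_size)  # 11 10 50
```

With the 60,000 MNIST training images and a batch size of 100, every batch is full, so `drop_last=True` discards nothing in this particular setup.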

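# A single linear layer: 784 input pixels -> 10 class scores
linear = torch.nn.Linear(784, 10, bias=True).to(device)

# CrossEntropyLoss applies log-softmax internally, so the model outputs raw scores
criterion = torch.nn.CrossEntropyLoss().to(device)
optimizer = torch.optim.SGD(linear.parameters(), lr=0.1)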
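
The model has no softmax layer because `torch.nn.CrossEntropyLoss` expects raw scores (logits) and integer class labels. The sketch below checks this on random data by comparing the built-in loss with a manual log-softmax followed by the mean negative log-likelihood. The tensors `logits` and `targets` are made up for illustration.

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
logits = torch.randn(4, 10)           # raw scores for 4 samples and 10 classes
targets = torch.tensor([3, 0, 9, 1])  # integer class labels, not one-hot vectors

# Built-in loss, applied directly to the logits.
builtin = torch.nn.CrossEntropyLoss()(logits, targets)

# Manual version: log-softmax, pick the log-probability of the true class, average.
log_probs = F.log_softmax(logits, dim=1)
manual = -log_probs[torch.arange(4), targets].mean()

print(torch.allclose(builtin, manual))  # True
```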

for epoch in range(training_epochs):
    avg_cost = 0
    total_batch = len(data_loader)

    for X, Y in data_loader:
        # Flatten each 28x28 image into a 784-dimensional vector
        X = X.view(-1, 28 * 28).to(device)
        # Labels are integer class indices, not one-hot vectors
        Y = Y.to(device)

        optimizer.zero_grad()
        hypothesis = linear(X)
        cost = criterion(hypothesis, Y)
        cost.backward()
        optimizer.step()

        avg_cost += cost / total_batch

    print('Epoch:', '%04d' % (epoch + 1), 'cost =', '{:.9f}'.format(avg_cost))

print('Learning finished')

Epoch: 0001 cost = 0.535468459
Epoch: 0002 cost = 0.359274179
Epoch: 0003 cost = 0.331187546
Epoch: 0004 cost = 0.316578031
Epoch: 0005 cost = 0.307158172
Epoch: 0006 cost = 0.300180733
Epoch: 0007 cost = 0.295130193
Epoch: 0008 cost = 0.290851533
Epoch: 0009 cost = 0.287417084
Epoch: 0010 cost = 0.284379542
Epoch: 0011 cost = 0.281825215
Epoch: 0012 cost = 0.279800713
Epoch: 0013 cost = 0.277808994
Epoch: 0014 cost = 0.276154280
Epoch: 0015 cost = 0.274440825
Learning finished
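
The training loop adds the loss tensor itself to `avg_cost`, so the running average remains a tensor connected to autograd. A common alternative is to accumulate `cost.item()`, which is a plain Python float. The sketch below shows that variant on a tiny synthetic problem. The 4-feature model and the random `batches` are assumptions made for illustration, and the printed costs above were not produced this way.

```python
import torch

torch.manual_seed(0)
model = torch.nn.Linear(4, 3)
criterion = torch.nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

# Five synthetic mini-batches of 8 samples each (stand-ins for real data).
batches = [(torch.randn(8, 4), torch.randint(0, 3, (8,))) for _ in range(5)]

avg_cost = 0.0
for X, Y in batches:
    optimizer.zero_grad()
    cost = criterion(model(X), Y)
    cost.backward()
    optimizer.step()
    # .item() copies the scalar out of the tensor, so nothing from the graph is kept.
    avg_cost += cost.item() / len(batches)

print(type(avg_cost).__name__, cost.requires_grad)  # float True
```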

# Evaluate on the test set; gradients are not needed here
with torch.no_grad():
    # .data and .targets replace the deprecated test_data and test_labels attributes.
    # Note: .data holds raw pixel values in [0, 255]; the ToTensor() transform is not applied to it.
    X_test = mnist_test.data.view(-1, 28 * 28).float().to(device)
    Y_test = mnist_test.targets.to(device)

    prediction = linear(X_test)
    correct_prediction = torch.argmax(prediction, 1) == Y_test
    accuracy = correct_prediction.float().mean()
    print('Accuracy:', accuracy.item())

    # Pick one random test image and compare its label with the prediction
    r = random.randint(0, len(mnist_test) - 1)
    X_single_data = mnist_test.data[r:r + 1].view(-1, 28 * 28).float().to(device)
    Y_single_data = mnist_test.targets[r:r + 1].to(device)

    print('Label: ', Y_single_data.item())
    single_prediction = linear(X_single_data)
    print('Prediction: ', torch.argmax(single_prediction, 1).item())

    plt.imshow(mnist_test.data[r:r + 1].view(28, 28), cmap='Greys', interpolation='nearest')
    plt.show()

Accuracy: 0.8862999677658081
Label: 8
Prediction: 3
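
One detail of the evaluation is worth noting. The model was trained on inputs scaled to [0, 1] by `transforms.ToTensor()`, while the evaluation reads the raw test-set tensor, whose pixel values lie in [0, 255]. For a linear layer with a bias this changes the logits and can change the predicted class. The sketch below illustrates the effect with a hand-built two-pixel model. The helper `predict` and the `toy` weights are hypothetical, and no claim is made here about how much the accuracy reported above would change.

```python
import torch

def predict(model, images_uint8, scale=True):
    """Return predicted class indices for a batch of uint8 images."""
    x = images_uint8.view(images_uint8.size(0), -1).float()
    if scale:
        x = x / 255.0  # match the [0, 1] range that transforms.ToTensor() produces
    with torch.no_grad():
        return torch.argmax(model(x), dim=1)

# Toy 2-pixel, 2-class model with hand-set weights (illustration only).
toy = torch.nn.Linear(2, 2)
with torch.no_grad():
    toy.weight.copy_(torch.tensor([[1.0, 0.0], [0.0, 0.0]]))
    toy.bias.copy_(torch.tensor([0.0, 10.0]))

pixels = torch.tensor([[255, 0]], dtype=torch.uint8)
pred_scaled = predict(toy, pixels, scale=True)   # logits are [1, 10]
pred_raw = predict(toy, pixels, scale=False)     # logits are [255, 10]

print(pred_scaled.item(), pred_raw.item())  # 1 0
```

A helper of this kind could be applied to the test images so that evaluation sees the same input range as training. Iterating over a `DataLoader` built on `mnist_test` would have the same effect, since the dataset's transform is applied on that path.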