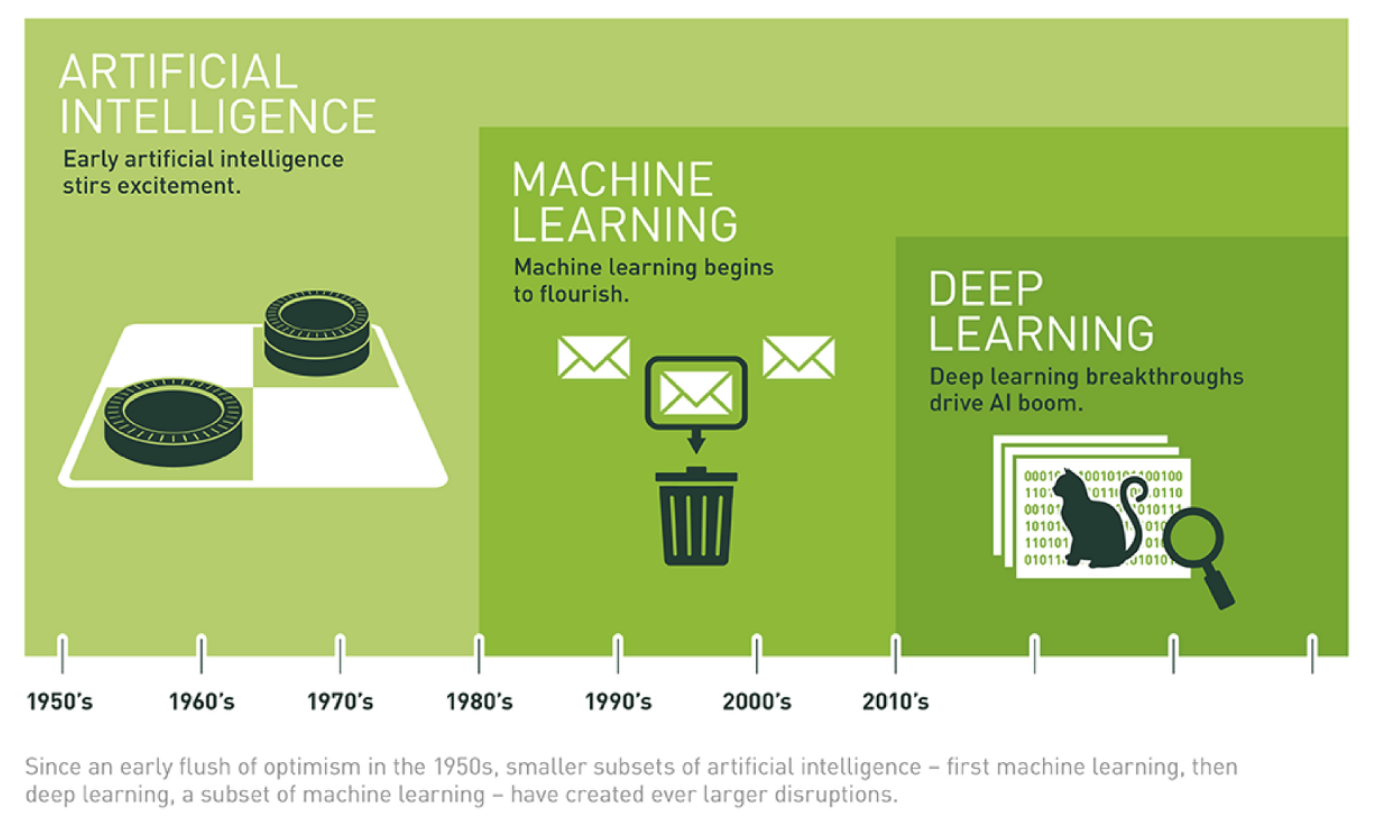

AI, MACHINE LEARNING, DEEP LEARNING: WHAT'S THE DIFFERENCE?

AI: imparting cognitive abilities to a machine.

Machine Learning: instead of following rules programmed by humans, the machine builds its own rule system. It analyzes data on its own, learns patterns from the data, and makes judgments based on what it has learned.

Deep Learning: machine learning in which the learning model takes the form of a neural network.

"Deep learning is inspired by neural networks of the brain to build learning machines which discover rich and useful internal representations, computed as a composition of learned features and functions." - Yoshua Bengio

Representation Learning

There are several ways to "express" (represent) data:

- Molecular representation: the data itself

- Image: a visual form of the data, usually stored as an array

- Table: lists the features of each data point

- Category: the class the data belongs to; choosing the right category for the data is important

These four ways of representing data form a hierarchy.

Molecular and image representations are raw, sensory representations: no artificial processing is involved.

Table and category representations, on the other hand, are more abstract, internal representations that require relatively more human intervention.

The ultimate goal of deep learning is to represent data in an abstract, internal way without human intervention.
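To make the hierarchy concrete, here is a minimal sketch that takes a tiny hypothetical 4x4 grayscale "image" (an array, the raw representation) and turns it by hand into a table row of features and then a category. The feature names (`mean_brightness`, `bright_pixels`) and the 0.5 threshold are assumptions made up for illustration; the point is that every step toward the more abstract representation is designed by a human here, which is exactly the intervention deep learning aims to replace with learning.

```python
import numpy as np

# Raw, sensory representation: a hypothetical 4x4 grayscale image (values in [0, 1]).
image = np.array([
    [0.9, 0.8, 0.9, 0.7],
    [0.8, 1.0, 0.9, 0.8],
    [0.7, 0.6, 0.8, 0.7],
    [0.1, 0.0, 0.2, 0.1],
])

# Table representation: hand-crafted features (chosen by a human, not learned).
table_row = {
    "mean_brightness": float(image.mean()),
    "bright_pixels": int((image > 0.5).sum()),
}

# Category representation: a human-written rule maps the features to a label.
category = "bright" if table_row["mean_brightness"] > 0.5 else "dark"

print(table_row)
print(category)
```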

Connectionism and Deep Learning

Connectionism: information is processed through the connections among the brain's numerous neurons.

Connectionism holds that, between a stimulus and the reaction to it, information is processed by a connected, neuron-like model.

Accordingly, intelligence starts out 'blank' and gradually 'learns' by being given many examples, that is, 'experience'.

Deep learning follows exactly this connectionist view.

A neural network receives a stimulus (input), processes the information internally through a series of steps, and then produces an output.
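The idea of starting "blank" and learning from examples can be sketched with the classic perceptron learning rule, one simple connectionist learning procedure (modern deep networks are trained with gradient descent instead). In this minimal sketch the weights start at zero, and each training case nudges them until the unit reproduces logical AND. The learning rate of 1.0 and the 10 passes over the data are arbitrary choices.

```python
import numpy as np

# Training cases ("experience"): inputs and target outputs for logical AND.
X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]])
targets = np.array([0, 0, 0, 1])

# The unit starts out "blank": weights and bias are all zero.
w = np.zeros(2)
b = 0.0
learning_rate = 1.0

def predict(x):
    # Output 1 if the weighted sum of the inputs plus the bias is positive.
    return 1 if np.dot(w, x) + b > 0 else 0

for epoch in range(10):
    for x, t in zip(X, targets):
        error = t - predict(x)
        # Perceptron learning rule: nudge weights and bias toward the target.
        w += learning_rate * error * x
        b += learning_rate * error

print([predict(x) for x in X])  # [0, 0, 0, 1]
```

Nothing about AND is written into the code; the behavior comes entirely from the examples.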

Functions in Programming and Mathematics

A function in mathematics and a function in programming are not so different.

- Mathematical function

y = f(x):

takes x as input and produces y as output through the function f

- Programming function

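```python
def f(x):
    y = 3 * x + 2  # a linear function of x
    return y
```

```python
import math

def f(x):
    y = x**2 + math.exp(x + 1)  # x squared plus e raised to the power (x + 1)
    return y

f(5)  # evaluates 5**2 + e**6
```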

What Does a Function Do?

- Relation: a function indicates the relationship between x and y

- y depends on changes in x

- the function determines how much y changes when x changes

- Transformation: transforming x into the form we want

A transformation is just a fancy word for a function: it takes in an input and spits out an output for each one.

- In the context of linear algebra, a transformation takes in a vector and spits out another vector.

- Each input vector moves over to its corresponding output vector.

- A transformation in 2 dimensions essentially moves the axes: depending on the transformation, the originally vertical and horizontal axes can be moved, bent, or twisted.

- Linear algebra limits itself to a special type of transformation, the linear transformation, in which:

- all lines remain lines

- the origin remains fixed

```python
import numpy as np

def linear_transformation(X, A):
    # X is treated as a row vector, so this computes X @ A (not A @ X).
    transformed_vector = np.matmul(X, A)
    return transformed_vector

X = np.array([-1, 2])
A = np.array([[1, -2], [3, 0]])
linear_transformation(X, A)  # result: array([5, 2])
```
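The two defining properties of a linear transformation (lines remain lines, the origin remains fixed) can be checked numerically. This sketch reuses the matrix `A` from the example above with the same row-vector convention; the vectors `u`, `v` and the scalars `a`, `b` are arbitrary values picked for illustration. Both properties follow from linearity, T(a·u + b·v) = a·T(u) + b·T(v).

```python
import numpy as np

# Same matrix as above; vectors are treated as row vectors, so T(x) = x @ A.
A = np.array([[1, -2], [3, 0]])

def T(x):
    return np.matmul(x, A)

# 1) The origin stays fixed: T(0) = 0.
origin = np.zeros(2)
print(np.allclose(T(origin), 0))

# 2) Linearity: T(a*u + b*v) equals a*T(u) + b*T(v).
u = np.array([-1.0, 2.0])
v = np.array([4.0, 1.0])
a, b = 2.0, -3.0
print(np.allclose(T(a * u + b * v), a * T(u) + b * T(v)))

# 3) Evenly spaced points on the segment from u to v map to evenly spaced
#    points on a single line: every step between neighbours is the same vector.
ts = np.linspace(0.0, 1.0, 5)
points = np.array([u + t * (v - u) for t in ts])
steps = np.diff(T(points), axis=0)
print(np.allclose(steps, steps[0]))
```

All three checks print `True`.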

- Mapping: a function maps space X to space Y

- One-to-one:

- both the input and the output are scalars

f(x) = wx + b = y

- enter one value, get one value

- this is the case of univariate regression

- Many-to-one:

- receives several pieces of information (a vector) and outputs one value (a scalar)

- this is the case of multiple regression, i.e. regression with several input variables

- Many-to-many:

- mainly used in classification problems

- outputs a vector with one value per category
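The three kinds of mapping differ only in the shapes of the input and output. Below is a minimal NumPy sketch with made-up parameter values (a trained model would learn them). The softmax in the many-to-many case is a common way to turn per-category scores into probabilities, used here as an assumption rather than something the text prescribes.

```python
import numpy as np

# Hypothetical parameters, chosen only for illustration; a trained model would learn them.
w_scalar, b_scalar = 2.0, 1.0
w_vector, b_vector = np.array([0.5, -1.0, 2.0]), 0.0
W_matrix = np.array([[1.0, 0.0],
                     [0.0, 1.0],
                     [1.0, 1.0]])  # 3 categories x 2 input features

def one_to_one(x):
    # Scalar in, scalar out: f(x) = w*x + b (univariate regression).
    return w_scalar * x + b_scalar

def many_to_one(x):
    # Vector in, scalar out: f(x) = w . x + b (regression on several inputs).
    return float(np.dot(w_vector, x) + b_vector)

def many_to_many(x):
    # Vector in, vector out: one score per category, turned into probabilities
    # with a softmax (subtracting the max keeps exp() numerically stable).
    scores = W_matrix @ x
    exp_scores = np.exp(scores - scores.max())
    return exp_scores / exp_scores.sum()

print(one_to_one(3.0))                         # 7.0
print(many_to_one(np.array([1.0, 2.0, 3.0])))  # 4.5
probs = many_to_many(np.array([0.2, 0.7]))
print(probs.shape)                             # (3,)
```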

Difference Between a Function and a Model

What is a model?

Any function that receives data and outputs data in the desired form is called a 'model'.

Machine learning and deep learning are not about finding a 'perfect function', but about getting as close as possible to 'the function that best approximates' the data.

How Do We Find 'That Function'?

- Determine the function space, i.e. which form of function will represent the model.

- Inductive bias arises in this step.

- Find the optimal function within that space by training the model.

- For neural networks, gradient descent is used to train the model toward the optimal function.
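As a minimal sketch of the last step, here is plain gradient descent on the mean squared error, searching the simple function space f(x) = wx + b. The data are synthetic (generated from y = 3x + 2, the function used earlier), and the learning rate and step count are arbitrary choices; a real neural network has far more parameters, but each update has the same form.

```python
import numpy as np

# Synthetic data from y = 3x + 2 (the "true" function the model should approximate).
x = np.linspace(-1, 1, 20)
y = 3 * x + 2

# Function space: f(x) = w*x + b. Start from an arbitrary point in that space.
w, b = 0.0, 0.0
learning_rate = 0.1

for step in range(1000):
    error = (w * x + b) - y
    # Gradients of the mean squared error with respect to w and b.
    grad_w = 2 * np.mean(error * x)
    grad_b = 2 * np.mean(error)
    # Move each parameter a small step against its gradient.
    w -= learning_rate * grad_w
    b -= learning_rate * grad_b

print(round(w, 3), round(b, 3))  # approximately 3.0 and 2.0
```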

Inductive Bias: the hypothesis that the optimal function explaining the data exists within a specific function space.

The optimal form of the function differs depending on what information the data contains.

Using domain knowledge about the data, you can hypothesize what form the function that best represents the data will take.

To build a better model, we need a good inductive bias.
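A small sketch of why the choice of function space matters, assuming synthetic data that follow a parabola. Restricting the search to straight lines (degree 1) is a poor inductive bias for this data, while allowing parabolas (degree 2) matches how the data were generated, so the best function found there fits far better. The generating formula, noise level, and random seed are made up for illustration.

```python
import numpy as np

# Hypothetical data whose true relationship is quadratic, plus a little noise.
rng = np.random.default_rng(0)
x = np.linspace(-3, 3, 50)
y = 0.5 * x**2 - x + 1 + rng.normal(scale=0.1, size=x.shape)

def fit_error(degree):
    # Choose a function space (polynomials of the given degree), then find the
    # best function in it by least squares and report its mean squared error.
    coeffs = np.polyfit(x, y, deg=degree)
    predictions = np.polyval(coeffs, x)
    return np.mean((predictions - y) ** 2)

linear_mse = fit_error(1)     # inductive bias: "the relationship is a straight line"
quadratic_mse = fit_error(2)  # inductive bias: "the relationship is a parabola"
print(linear_mse, quadratic_mse)
```

The same optimizer is used in both cases; only the hypothesized function space changes.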

Deep Learning

In the lower layers, simple features such as points, lines, and surfaces are extracted; in higher layers, more complex features are extracted, until finally the image is recognized.

Perceptron?

A composite function: the input first goes through a linear function, and the result is then passed through a nonlinear activation function.
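This composition (a linear function followed by a nonlinear activation) fits in a few lines. The sketch assumes a step function as the activation, the classic perceptron choice (modern networks usually use smooth activations such as the sigmoid or ReLU), and hand-picked weights that happen to implement logical OR.

```python
import numpy as np

def step(z):
    # Nonlinear activation: 1 if z > 0, else 0.
    return np.where(z > 0, 1, 0)

def perceptron(x, w, b):
    z = np.dot(w, x) + b   # linear function
    return step(z)         # followed by a nonlinear activation

# Hypothetical weights that make the perceptron compute logical OR.
w = np.array([1.0, 1.0])
b = -0.5
for x in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x, int(perceptron(np.array(x), w, b)))
```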

A neural network built from perceptrons consists of three kinds of layers: the input layer, the output layer, and the hidden layers, which are all the layers located between the input and output layers.

With the perceptron as the basic building block, a neural network whose layers are connected to each other in this pattern is referred to as a multi-layer perceptron (MLP).

As the number of hidden layers increases, the neural network is called 'deep',

and such a 'deep enough' neural network is used as the learning model in deep learning.

This sufficiently deep neural network is called a deep neural network (DNN).

e.g. Fully connected, convolutional, and recurrent layers are commonly used in DNNs.
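Stacking perceptrons into layers lets an MLP compute things a single perceptron cannot. A classic example is XOR: no single linear function followed by a step can separate its outputs, but one hidden layer is enough. In this sketch the weights are set by hand rather than learned, and the step activation is an assumption carried over from the single-perceptron case. It also shows that the whole network is just a composition of simple functions.

```python
import numpy as np

def step(z):
    return np.where(z > 0, 1, 0)

def layer(x, W, b):
    # One fully connected layer: linear function followed by an activation.
    return step(W @ x + b)

# Hand-set (not learned) weights for a 2-2-1 MLP that computes XOR.
W1 = np.array([[1.0, 1.0],    # hidden unit 1 behaves like OR
               [1.0, 1.0]])   # hidden unit 2 behaves like AND
b1 = np.array([-0.5, -1.5])
W2 = np.array([[1.0, -1.0]])  # output fires for "OR but not AND"
b2 = np.array([-0.5])

def mlp(x):
    # The network is a composition of layers: output = f2(f1(x)).
    return layer(layer(x, W1, b1), W2, b2)

for x in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(x, int(mlp(np.array(x))[0]))
```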

Strengths and Weaknesses of Deep Learning

- Strengths:

- Deep learning is capable of "feature representation learning,"

- which means it can learn on its own, directly from the raw data, how to represent the features needed for optimal performance, and can find the weights that achieve that performance more effectively.

- Weaknesses:

- Deep learning models require a lot of data to learn effectively, and training is computationally very expensive, so it takes a long time.

Is Deep Learning a Black Box?

No: because we know exactly how it works!

A neural network is a composition of several functions.

Each of these functions consists of simple calculations, and we know how they are computed when composed and how they work.

Yes: because we can't explain why or how a given output was produced!

We cannot predict in advance what results a trained deep learning model will produce, nor can we explain on what basis the model made its judgments.

Interpretable Machine Learning / Explainable AI

If you can explain the process by which the model made its prediction, you can trust the results.

LIME library: a tool for interpretable machine learning

- The main idea of LIME:

"If the model's prediction changes significantly when an input value is changed only slightly, that variable is an important variable."

- For images, slice the image into very small pieces and check the output when each piece is erased. If the output changes significantly, that piece played an important role in the prediction.

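The "erase a piece and watch the output" idea can be sketched as follows. This is a simplified occlusion-style illustration, not the LIME library's API: LIME itself perturbs many superpixels at once and fits a simple, interpretable surrogate model around the prediction. The `toy_model` here is hypothetical and deliberately looks only at the top-left corner, so the importance map should single out that patch.

```python
import numpy as np

def toy_model(image):
    # Hypothetical "model": its score depends only on the top-left 2x2 corner.
    return float(image[:2, :2].sum())

def occlusion_importance(model, image, patch=2):
    # Erase (zero out) one patch at a time and record how much the output changes.
    # Assumes the image height and width are multiples of `patch`.
    base = model(image)
    h, w = image.shape
    importance = np.zeros((h // patch, w // patch))
    for i in range(0, h, patch):
        for j in range(0, w, patch):
            erased = image.copy()
            erased[i:i + patch, j:j + patch] = 0.0
            importance[i // patch, j // patch] = abs(base - model(erased))
    return importance

image = np.ones((4, 4))
print(occlusion_importance(toy_model, image))
```

Only the top-left cell of the printed 2x2 importance map is nonzero, because erasing any other patch leaves the output unchanged.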