OSTEP) 28. Locks

이 포스팅은 Operating Systems: Three Easy Pieces, Remzi H. Arpaci-Dusseau & Andrea C. Arpaci-Dusseau을 읽고 개인 학습용으로 정리한 글입니다.

➖ 22-01-19

-

we would like to execute a series of instructions atomically, but due to the presence of interrupts on a single processor (or multiple threads executing on multiple processors concurrently), we couldn’t.

-

Programmers annotate source code with locks, putting them around critical sections, and thus ensure that any such critical section executes as if it were a single atomic instruction.

28.1 Locks: The Basic Idea

-

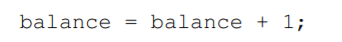

Assume our critical section looks like this:

-

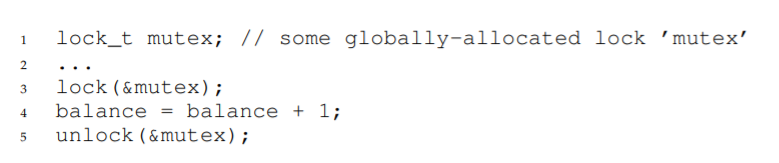

To use a lock, we add some code around the critical section like this:

-

A lock is just a variable.

-> to use one, you must declare a lock variable of some kind (such as mutex above). -

This lock variable holds the state of the lock at any instant in time.

- available (= unlocked = free)

-> no thread holds the lock - acquired (= locked o= held)

-> exactly one thread holds the lock and presumably is in a critical section.

- We could store other information in the data type as well

-> ex. which thread holds the lock, a queue for ordering lock acquisition, ...

-> but information like that is hidden from the user of the lock.

➕

-

semantics of the lock() and unlock() routines are simple.

-

Calling the routine lock() tries to acquire the lock.

-> if no other thread holds the lock, the thread will acquire the lock and enter the critical section (this thread is the owner of the lock). -

If another thread then calls lock() on that same lock variable, it will not return while the lock is held by another thread.

-> in this way, other threads are prevented from entering the critical section

while the first thread that holds the lock is in there. -

Once the owner of the lock calls unlock(), the lock is now available again. -> If no other threads are waiting for the lock, the state of the lock is

simply changed to free.

-> If there are waiting threads (stuck in lock()), one of them will notice be informed of this change of the lock’s state, acquire the lock, and enter the critical section.

28.2 Pthread Locks

-

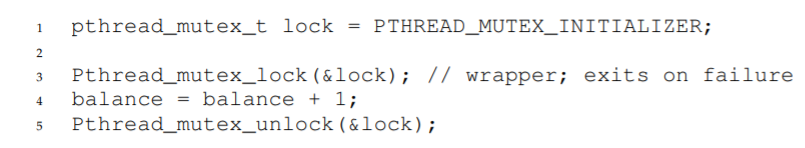

The name that the POSIX library uses for a lock is a mutex, as it is used to provide mutual exclusion between threads.

-

The following POSIX threads code is doing the same thing as above (we use wrappers that check for errors upon lock and unlock):

-

The POSIX version passes a variable to lock and unlock, as we may be using different locks to protect different variables.

-

a coarse-grained locking strategy: using one big lock that is used any time any critical section is accessed.

-

a more fine-grained approach: protect different data and data structures with different locks, allowing more threads to be in locked code at once.

28.3 Building A Lock

-

But how should we build a lock? What hardware support is needed? What OS support?

-

To build a working lock, we will need some help from the hardware, as well as the OS.

28.4 Evaluating Locks

- how to evaluate the efficacy of a particular lock implementation?

-

whether the lock provides mutual exclusion.

-

fairness.

-> Does each thread contending for the lock get a fair shot at acquiring it once it is free?

-> does any thread contending for the lock starve while doing so, thus never obtaining it? -

performance (the time overheads added by using the lock).

-> In case of no contention: when a single thread is running and grabs and releases the lock, what is the overhead of doing so?

-> In case where multiple threads are contending for the lock on a single CPU, are there performance concerns?

-> how does the lock perform when there are multiple CPUs involved, and threads on each contending for the lock?

28.5 Controlling Interrupts

-

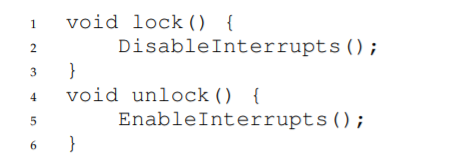

One of the earliest solutions used to provide mutual exclusion was to disable interrupts for critical sections.

-> this solution was invented for single-processor systems. -

The code would look like this:

-

By turning off interrupts (via a hardware instruction) before entering a critical section, we ensure that the code inside the critical section will not be interrupted, and thus will execute as if it were atomic.

-

When we are finished, we re-enable interrupts (again, via a hardware instruction) and thus the program proceeds as usual.

➕

- The positives: simplicity.

- The negatives: unfortunately, are many.

1.

-

Requires us to allow any calling thread to perform a privileged operation (turning interrupts on and off), and thus trust that this facility is not abused.

-

Any time we are required to trust an arbitrary program, we are probably in trouble.

-

ex. a greedy program could call lock() at the beginning of its execution and thus monopolize the processor

-

ex. an errant or malicious program could call lock() and go into an endless loop.

-> the OS never regains control of the system, and there is only one recourse: restart the system.

2.

-

Does not work on multiprocessors.

-

If multiple threads are running on different CPUs, and each try to enter the same critical section, it does not matter whether interrupts are disabled.

-> threads will be able to run on other processors and thus, could enter the critical section.

3.

-

Turning off interrupts for extended periods of time can lead to interrupts becoming lost, which can lead to serious systems problems.

-

ex. If the CPU missed the fact that a disk device has finished a read request, how will the OS know to wake the process waiting for said read?

4.

-

Can be inefficient.

-

Compared to normal instruction execution, code that masks or unmasks interrupts tends to be executed slowly by modern CPUs.

➕

-

For these reasons, turning off interrupts is only used in limited contexts as a mutual-exclusion primitive.

-

ex. An operating system itself will use interrupt masking to guarantee atomicity when accessing its own data structures.

-> This usage makes sense, as the trust issue disappears inside the OS.

28.6 A Failed Attempt: Just Using Loads/Stores

- To move beyond interrupt-based techniques, we will have to rely on CPU hardware and the instructions it provides us to build a proper lock.

➕

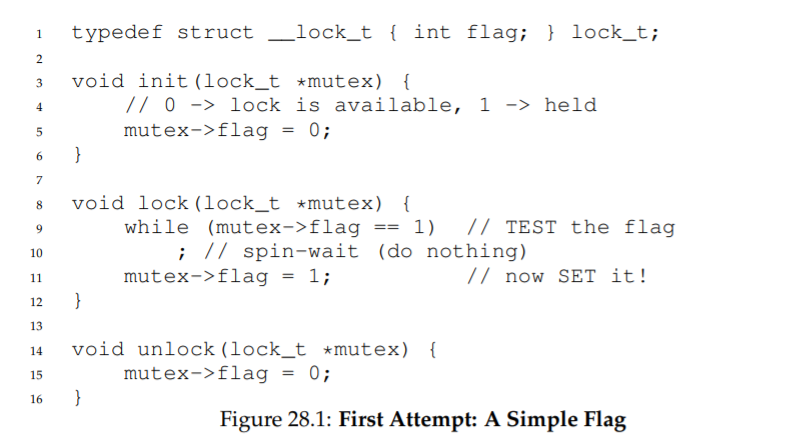

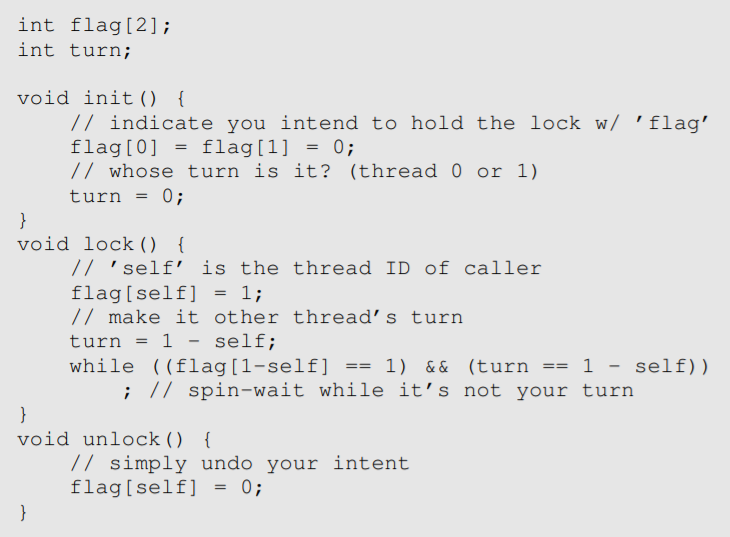

- Let’s first try to build a simple lock by using a single flag variable

-> a simple variable (flag) indicates whether some thread has possession of a lock.

-

(Figure 28.1)

-

The first thread that enters the critical section will call lock(), which

tests whether the flag is equal to 1 (in this case, it is not), and then sets

the flag to 1 to indicate that the thread now holds the lock. -

When finished with the critical section, the thread calls unlock() and clears the flag, thus indicating that the lock is no longer held.

-

If another thread happens to call lock() while that first thread is in

the critical section, it will simply spin-wait in the while loop for that

thread to call unlock() and clear the flag. -

Once that first thread does so, the waiting thread will fall out of the while loop, set the flag to 1 for itself, and proceed into the critical section.

➕

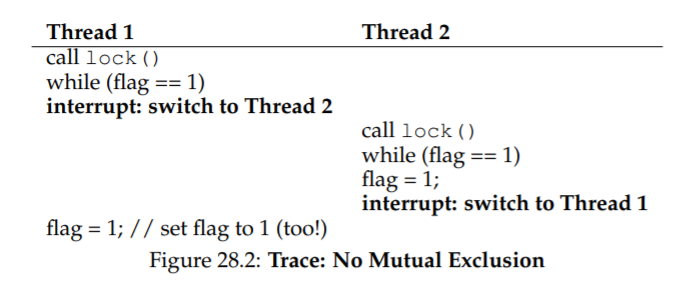

- Unfortunately, the code has two problems: correctness, performance.

correctness

-

Imagine the code interleaving (assume flag=0 to begin):

-

(Figure 28.2) with timely interrupts, we can easily produce a case where both threads set the flag to 1 and thus, both threads are able to enter the critical section.

-

we have obviously failed to provide the most basic requirement: providing mutual exclusion.

performance

-

the way a thread waits to acquire a lock that is already held: it endlessly checks the value of flag, a technique known as spin-waiting.

-

Spin-waiting wastes time waiting for another thread to release a lock.

-

The waste is exceptionally high on a uniprocessor, where the thread that the waiter is waiting for cannot even run until a context switch occurs!

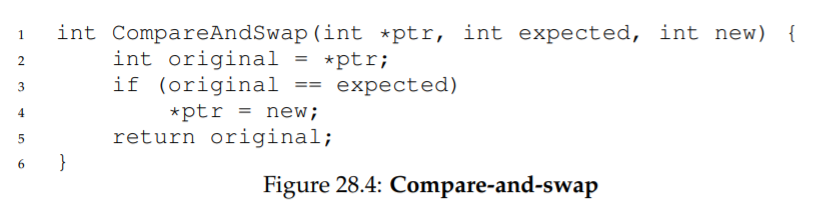

⚡ASIDE: DEKKER’S AND PETERSON’S ALGORITHMS

-

Theodorus Jozef Dekker came up with a solution to the concurrency problem.

-

Dekker’s algorithm uses just loads and stores assuming they are atomic with respect to each other, which was true on early hardware) without special hardware support.

-

Dekker’s approach was later refined by Peterson.

➖ 22-01-20

28.7 Building Working Spin Locks with Test-And-Set

-

Because disabling interrupts does not work on multiple processors, and because simple approaches using loads and stores don’t work, system designers started to invent hardware support for locking.

-

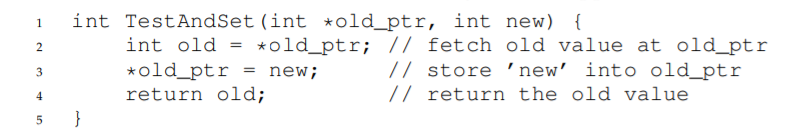

The simplest bit of hardware support to understand is known as a test-and-set (or atomic exchange) instruction.

-

We define what the testand-set instruction does via the following C code snippet:

-

the test-and-set instruction returns the old value pointed to by the old ptr, and simultaneously updates said value to new.

-

This sequence of operations is performed atomically.

-

It enables you to “test” the old value (which is what is returned) while simultaneously “setting” the memory location to a new value.

➕

- the test-and-set instruction is enough to build a simple spin lock(Figure 28.3):

case 1

-

Imagine first the case where a thread calls lock() and no other thread currently holds the lock.

-> thus, flag should be 0. -

When the thread calls TestAndSet(flag, 1), the routine will return the old value of flag, which is 0.

-> thus, the calling thread, which is testing the value of flag, will not get caught spinning in the while loop and will acquire the lock.

-> The thread will also atomically set the value to 1, thus indicating that the lock is now held. -

When the thread is finished with its critical section, it calls unlock() to set the flag back to zero.

case 2

-

The second case we can imagine arises when one thread already has

the lock held.

-> thus, flag is 1. -

In this case, this thread will call lock() and then call TestAndSet(flag, 1) as well.

-

This time, TestAndSet() will return the old value at flag, which is 1 (because the lock is held), while simultaneously setting it to 1 again.

-> As long as the lock is held by another thread, TestAndSet() will repeatedly return 1.

-> thus, this thread will spin and spin until the lock is finally released. -

When the flag is finally set to 0 by some other thread, this thread will call TestAndSet() again, which will now return 0 while atomically setting the value to 1 and thus acquire the lock and enter the critical section.

➕

-

By making both the test (of the old lock value) and set (of the new value) a single atomic operation, we ensure that only one thread acquires the lock.

-

This type of lock is usually referred to as a spin lock.

-> It is the simplest type of lock to build, and simply spins, using CPU cycles, until the lock becomes available. -

To work correctly on a single processor, it requires a preemptive scheduler (i.e., one that will interrupt a thread via a timer, in order to run a different thread, from time to time).

-> Without preemption, spin locks don’t make much sense on a single CPU, as a thread spinning on a CPU will never relinquish it.

Evaluating Spin Locks

- Given our basic spin lock, we can now evaluate how effective it is along our previously described axes.

correctness

-

Does it provide mutual exclusion?

-> The answer here is yes. -

the spin lock only allows a single thread to enter the critical section at a time.

fairness.

-

How fair is a spin lock to a waiting thread? Can you guarantee that a waiting thread will ever enter the critical section?

-> The answer is, spin locks don’t provide any fairness guarantees. -

Indeed, a thread spinning may spin forever, under contention.

-> Simple spin locks may lead to starvation.

performance.

- What are the costs of using a spin lock?

case 1

-

consider threads competing for the lock on a single processor.

-> On the single CPU, spin locks performance overheads can be quite painful. -

imagine the case where the thread holding the lock is preempted within a critical section.

-

The scheduler might then run every other thread (imagine there are N − 1 others), each of which tries to acquire the lock.

-

In this case, each of those threads will spin for the duration of a time slice before giving up the CPU, a waste of CPU cycles.

case 2

-

consider threads spread out across many CPUs.

-> On multiple CPUs, spin locks work reasonably well (if the number of threads roughly equals the number of CPUs). -

imagine Thread A on CPU 1 and Thread B on CPU 2, both contending for a lock.

-

If Thread A (CPU 1) grabs the lock, and then Thread B tries to, B will spin (on CPU 2).

-

However, presumably the critical section is short, and thus soon the lock becomes available, and is acquired by Thread B.

-> Spinning to wait for a lock held on another processor doesn’t waste many cycles in this case, and thus can be effective.

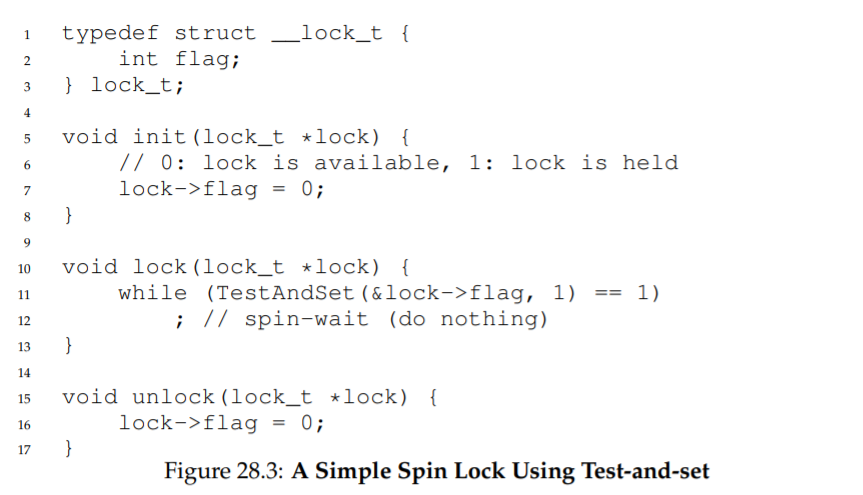

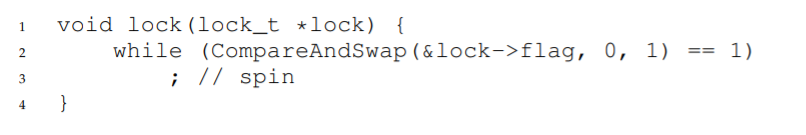

28.9 Compare-And-Swap

-

Another hardware primitive that some systems provide is known as

the compare-and-swap instruction (as it is called on SPARC, for example), or compare-and-exchange (as it called on x86). -

The C pseudocode for this single instruction (Figure 28.4):

-

The basic idea is for compare-and-swap to test whether the value at the

address specified by ptr is equal to expected.

-> if so, update the memory location pointed to by ptr with the new value.

-> If not, do nothing. -

In either case, return the original value at that memory location, thus allowing the code calling compare-and-swap to know whether it succeeded or

not.

➕

-

With the compare-and-swap instruction, we can build a lock in a manner similar to that with test-and-set.

-

For example, we could just replace the lock() routine with the following:

-> The rest of the code is the same as the test-and-set example.

-

This code simply checks if the flag is 0 and if so, atomically swaps in a 1 thus acquiring the lock.

-

Threads that try to acquire the lock while it is held will get stuck spinning until the lock is finally released.

➕

-

compare-and-swap is a more powerful instruction than test-and-set.

-

However, if we just build a simple spin lock with it, its behavior is identical to the spin lock we analyzed above.

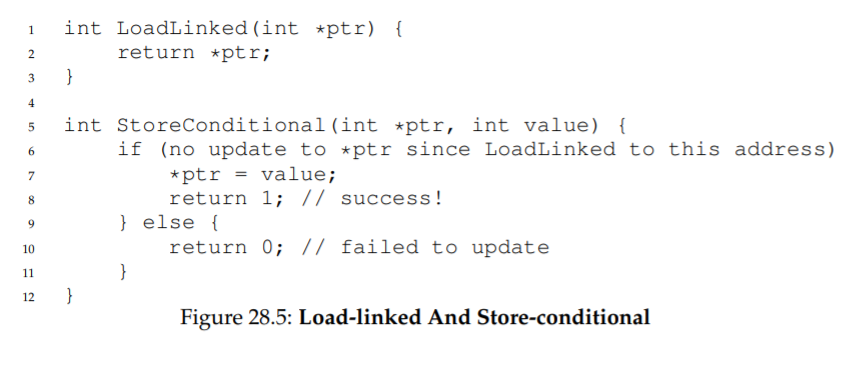

28.10 Load-Linked and Store-Conditional

- Some platforms provide a pair of instructions that work in concert to help build critical sections.

-For example, the load-linked and store-conditional instructions can be used together to build locks and other concurrent structures.

-

The C pseudocode for these instructions (Figure 28.5):

-

The load-linked operates much like a typical load instruction, and simply fetches a value from memory and places it in a register.

-

The key difference comes with the store-conditional, which only succeeds (and updates the value stored at the address just load-linked from) if no intervening store to the address has taken place.

-> in the case of success, the store-conditional returns 1 and updates the value at ptr to value.

-> if it fails, the value at ptr is not updated and 0 is returned.

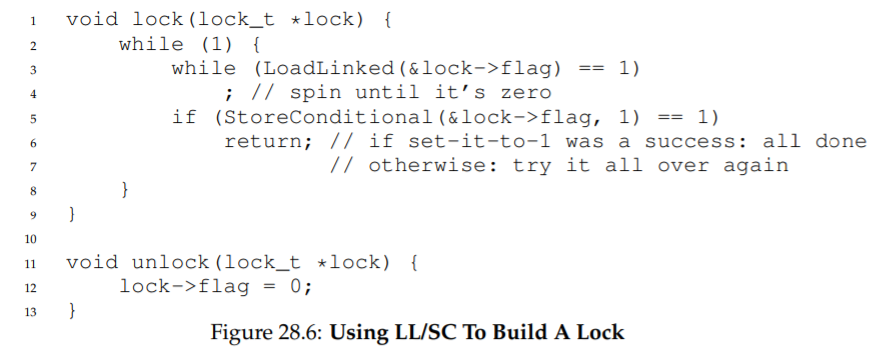

➕

-

(Figure 28.6)

-

First, a thread spins waiting for the flag to be set to 0 (and thus indicate the lock is not held).

-

Once so, the thread tries to acquire the lock via the store-conditional.

-> if it succeeds, the thread has atomically changed the flag’s value to 1 and thus can proceed into the critical section.

➕

-

Note how failure of the store-conditional might arise.

-

One thread calls lock() and executes the load-linked, returning 0 as the lock is not held.

-

Before it can attempt the store-conditional, it is interrupted and another thread enters the lock code, also executing the load-linked instruction,and also getting a 0 and continuing.

-

At this point, two threads have each executed the load-linked and each are about to attempt the storeconditional.

-

The key feature of these instructions is that only one of these threads will succeed in updating the flag to 1 and thus acquire the lock.

-> the second thread to attempt the store-conditional will fail (because the

other thread updated the value of flag between its load-linked and storeconditional) and thus have to try to acquire the lock again.

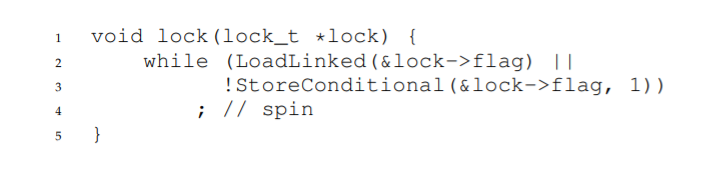

➕

- a more concise form of the above:

TIP: LESS CODE IS BETTER CODE (LAUER’S LAW)

-

Short, concise code is always preferred.

-> It is likely easier to understand and has fewer bugs. -

As Hugh Lauer said, when discussing the construction of the Pilot operating system: “If the same people had twice as much time, they could produce as good of a system in half the code.”

-> Lauer’s Law.

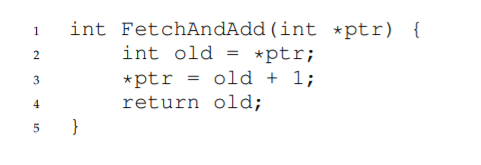

28.11 Fetch-And-Add

-

One final hardware primitive is the fetch-and-add instruction, which atomically increments a value while returning the old value at a particular address.

-

The C pseudocode for the fetch-and-add instruction:

➕

-

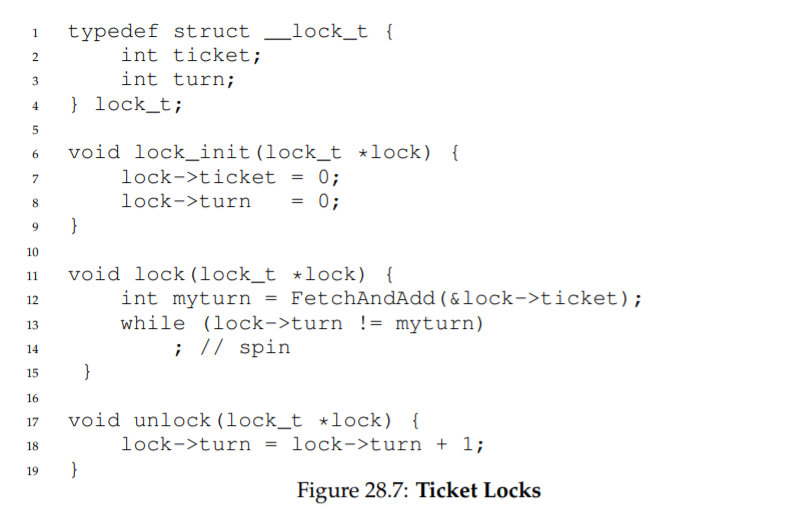

We’ll use fetch-and-add to build a ticket lock, as introduced by Mellor-Crummey and Scott.

-

The lock and unlock code (Figure 28.7):

-> Instead of a single value, this solution uses a ticket and turn variable in combination to build a lock.

-

When a thread wishes to acquire a lock, it first does an atomic fetch-and-add on the ticket value.

-> that value is now considered this thread’s “turn” (myturn). -

The globally shared lock->turn is then used to determine which thread’s turn it is.

-> when (myturn == turn) for a given thread, it is that thread’s turn to enter the critical section. -

Unlock is accomplished simply by incrementing the turn such that the next waiting thread (if there is one) can now enter the critical section.

➕

-

Note one important difference with this solution versus our previous

attempts: it ensures progress for all threads. -

Once a thread is assigned its ticket value, it will be scheduled at some point in the future (once those in front of it have passed through the critical section and released the lock).

-

In our previous attempts, no such guarantee existed; a thread spinning

on test-and-set (for example) could spin forever even as other threads

acquire and release the lock.

28.12 Too Much Spinning: What Now?

-

Our simple hardware-based locks are simple and they work.

-> However, in some cases, these solutions can be quite inefficient. -

Imagine you are running two threads on a single processor.

-

Now imagine that one thread (thread 0) is in a critical section and thus has a lock held, and unfortunately gets interrupted.

-

The second thread (thread 1) now tries to acquire the lock, but finds that it is held.

-> Thus, it begins to spin. And spin. Then it spins some more. -

And finally, a timer interrupt goes off, thread 0 is run again, which releases the lock, and finally, thread 1 won’t have to spin so much and will be able to acquire the lock.

-

Thus, any time a thread gets caught spinning in a situation like this, it wastes an entire time slice doing nothing but checking a value that isn’t

going to change! -

The problem gets worse with N threads contending for a lock.

-> N − 1 time slices may be wasted in a similar manner, simply spinning and waiting for a single thread to release the lock.

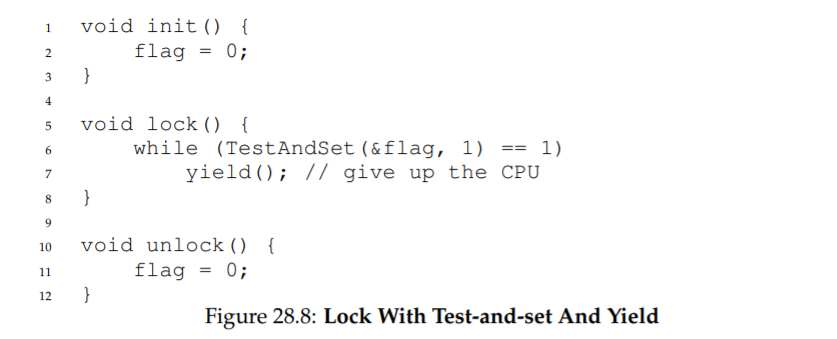

28.13 A Simple Approach: Just Yield, Baby

-

Hardware support got us pretty far: working locks, and even (as with

the case of the ticket lock) fairness in lock acquisition. -

However, we still have a problem.

-> what to do when a context switch occurs in a critical section, and threads start to spin endlessly, waiting for the interrupted (lock-holding) thread to be run again?

➕

- Our try: when you are going to spin, instead give up the CPU to another thread (Figure 28.8):

-

We assume an operating system primitive yield() which a thread can call when it wants to give up the CPU and let another thread run.

-

A thread can be in one of three states (running, ready, or blocked).

-> yield is simply a system call that moves the caller from the running state to the ready state.

-> thus, promotes another thread to running (the yielding thread essentially deschedules itself).

1.

-

Think about the example with two threads on one CPU*.

-> in this case, our yield-based approach works quite well. -

If a thread happens to call lock() and find a lock held, it will simply yield the CPU, and thus the other thread will run and finish its critical section.

2.

-

Let us now consider the case where there are many threads (say 100) contending for a lock repeatedly.

-

In this case, if one thread acquires the lock and is preempted before releasing it, the other 99 will each call lock(), find the lock held, and yield the CPU.

-

Assuming some kind of round-robin scheduler, each of the 99 will execute this run-and-yield pattern before the thread holding the lock gets to run again.

-

While better than our spinning approach (which would waste 99 time slices spinning), this approach is still costly.

-> the cost of a context switch is plenty of waste.

case 3

- Worse, we have not tackled the starvation problem at all.

-> A thread may get caught in an endless yield loop while other threads repeatedly enter and exit the critical section.

➖22-01-21

ASIDE: MORE REASON TO AVOID SPINNING: PRIORITY INVERSION

-

One good reason to avoid spin locks is performance.

-> if a thread is interrupted while holding a lock, other threads that use spin locks will spend a large amount of CPU time just waiting for the lock to become available. -

Another reason to avoid spin locks is correctness.

-> The problem to be wary of is known as priority inversion

➕

-

Let’s assume there are two threads in a system.

-

Thread 2 (T2) has a high scheduling priority, and Thread 1 (T1) has lower priority.

-> the CPU scheduler will always run T2 over T1, if indeed both are runnable.

-> T1 only runs when T2 is not able to do so (ex. when T2 is blocked on I/O). -

Assume T2 is blocked for some reason.

-> So T1 runs, grabs a spin lock, and enters a critical section. -

T2 now becomes unblocked (ex. because an I/O completed), and the CPU scheduler immediately schedules it (thus descheduling T1).

-

T2 now tries to acquire the lock, and because it can’t (T1 holds the lock), it just keeps spinning.

-> Because the lock is a spin lock, T2 spins forever, and the system is hung.

➕

-

Just avoiding the use of spin locks, unfortunately, does not avoid the

problem of inversion. -

Imagine three threads, T1, T2, and T3, with T3 at the highest priority, and T1 the lowest.

-

Imagine now that T1 grabs a lock.

-

T3 then starts, and because it is higher priority than T1, runs immediately (preempting T1).

-

T3 tries to acquire the lock that T1 holds, but gets stuck waiting, because T1 still holds it.

-

If T2 starts to run, it will have higher priority than T1, and thus it will run.

-

T3, which is higher priority than T2, is stuck waiting for T1, which may never run now that T2 is running.

➕

-

You can address the priority inversion problem in a number of ways.

-

- In the specific case where spin locks cause the problem, you can avoid using spin locks.

-

- More generally, a higher-priority thread waiting for a lower-priority thread can temporarily boost the lower thread’s priority, thus enabling it to run and overcoming the inversion.

-> priority inheritance.

- More generally, a higher-priority thread waiting for a lower-priority thread can temporarily boost the lower thread’s priority, thus enabling it to run and overcoming the inversion.

-

- ensure all threads have the same priority.

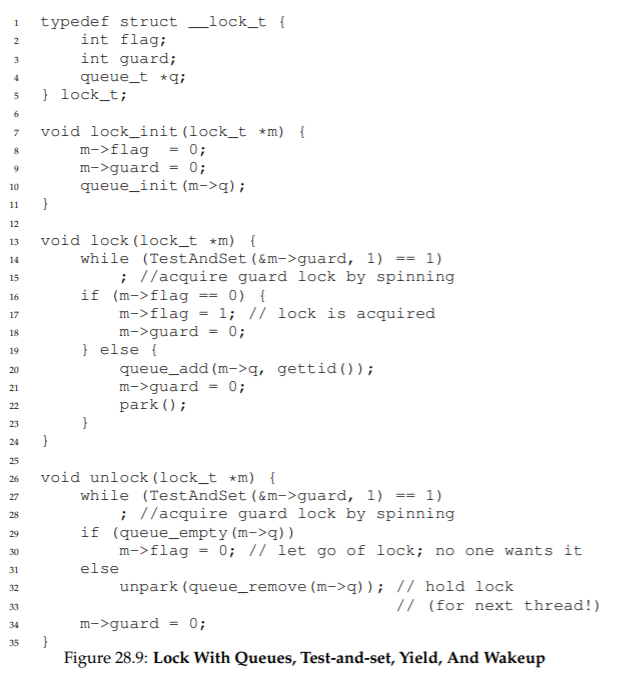

28.14 Using Queues: Sleeping Instead Of Spinning

- The scheduler determines which thread runs next.

-> if the scheduler makes a bad choice, a thread runs that must either spin

waiting for the lock (our first approach), or yield the CPU immediately (our second approach).

-> Either way, there is potential for waste and no prevention of starvation.

➕

-

Thus, we must explicitly exert some control over which thread next gets to acquire the lock after the current holder releases it.

-> we will need more OS support & a queue to keep track of which threads are waiting to acquire the lock. -

For simplicity, we will use the support provided by Solaris, in terms of two calls.

-> park() to put a calling thread to sleep.

-> unpark(threadID) to wake a particular thread as designated by threadID. -

These two routines can be used together to build a lock (Figure 28.9):

-> Lock puts a caller to sleep if it tries to acquire a held lock and wakes it when the lock is free

1.

-

The guard is used as a spin-lock around the flag and queue manipulations the lock is using.

-> This approach thus doesn’t avoid spin-waiting entirely. -

a thread might be interrupted while acquiring or releasing the lock, and thus cause other threads to spin-wait for this one to run again.

-

However, the time spent spinning is quite limited (just a few instructions inside the lock and unlock code, instead of the user-defined critical section).

-> thus, this approach may be reasonable.

2.

- In lock(), when a thread can not acquire the lock (it is already held), we are careful to add ourselves to a queue (bycalling the gettid() function to get the thread ID of the current thread), set guard to 0, and yield the CPU.

3.

-

The flag does not get set back to 0 when another thread gets woken up.

-

When a thread is woken up, it will be as if it is returning from park(); however, it does not hold the guard at that point in the code and thus cannot even try to set the flag to 1.

-

Thus, we just pass the lock directly from the thread releasing the lock to the next thread acquiring it.

-> flag is not set to 0 in-between.

4.

-

You might notice the perceived race condition in the solution,

just before the call to park(). -

With just the wrong timing, a thread will be about to park, assuming that it should sleep until the lock is no longer held.

-> A switch at that time to another thread (say, a thread holding the lock) could lead to trouble, for example, if that thread then released the lock. -

The subsequent park by the first thread would then sleep forever (potentially).

-> a problem sometimes called the wakeup/waiting race.

➕

-

Solaris solves this problem by adding a third system call: setpark().

-> By calling this routine, a thread can indicate it is about to park. -

If it then happens to be interrupted and another thread calls unpark before park is actually called, the subsequent park returns immediately instead of sleeping.

-

The code modification, inside of lock():

28.15 Different OS, Different Support

-

We have thus far seen one type of support that an OS can provide in order to build a more efficient lock in a thread library.

-

Other OS’s provide similar support; the details vary.

-

For example, Linux provides a futex which is similar to the Solaris interface but provides more in-kernel functionality.

c

➕

-

Specifically, each futex has associated with it a specific physical memory location, as well as a per-futex in-kernel queue.

-

Callers can use futex calls to sleep and wake as need be.

-

The call to futex_wait(address, expected) puts the calling thread to sleep, assuming the value at address is equal to expected.

-> If it is not equal, the call returns immediately. -

The call to the routine_futex wake(address) wakes one thread that is waiting on the queue.

➕

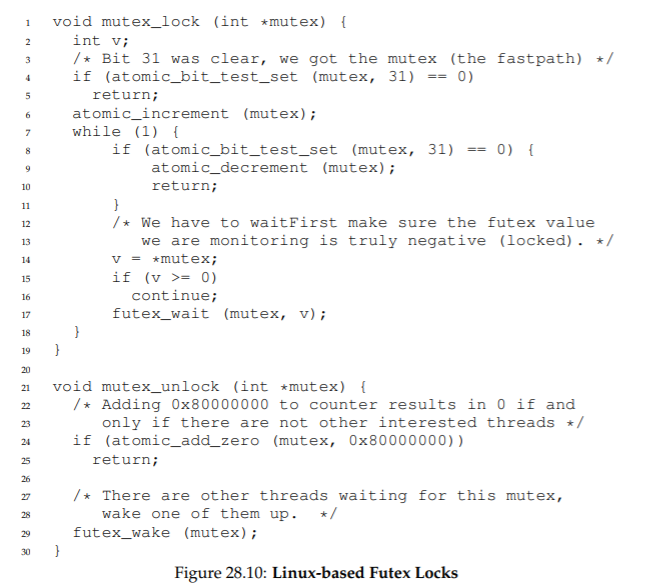

- The usage of these calls in a Linux mutex (Figure 28.10):

-> This code snippet from lowlevellock.h in the nptl library (part of the gnu libc library).

- it uses a single integer to track both whether the lock is held or not (the high bit of the integer) and the number of waiters on the lock (all the other bits).

-> Thus, if the lock is negative, it is held (the high bit determines the sign of the integer).

- it uses a single integer to track both whether the lock is held or not (the high bit of the integer) and the number of waiters on the lock (all the other bits).

- The code snippet shows how to optimize for the common case, specifically when there is no contention for the lock.

-> with only one thread acquiring and releasing a lock, very little work is done (the atomic bit test-and-set to lock and an atomic add to release the lock).

28.16 Two-Phase Locks

-

the Linux approach is now referred to as a two-phase lock.

-

A two-phase lock realizes that spinning can be useful, particularly if the lock is about to be released.

-

In the first phase, the lock spins for a while, hoping that it can acquire the lock.

-

If the lock is not acquired during the first spin phase, a second phase is entered, where the caller is put to sleep, and only woken up when the lock becomes free later.

-

The Linux lock above is a form of such a lock, but it only spins once.

-> a generalization of this could spin in a loop for a fixed amount of time before using futex support to sleep. -

Two-phase locks are yet another instance of a hybrid approach, where

combining two good ideas may indeed yield a better one.

28.17 Summary

- The above approach shows how real locks are built these days.

-> some hardware support (in the form of a more powerful instruction) & some operating system support (e.g., in the form of park() and unpark() primitives on Solaris, or futex on Linux).