--19.인공신경망.ipynb--

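Artificial Neural Networks

FashionMNIST dataset

- MNIST
  - a dataset of handwritten images
  - handwritten digits from 0 to 9

- FashionMNIST consists of images of fashion items instead of digits
  - many deep learning libraries provide a way to load this dataset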

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import os

import tensorflow as tf

Set the Keras random seed so that every run produces the same results

tf.keras.utils.set_random_seed(42)

tf.config.experimental.enable_op_determinism()

from tensorflow import keras

Download FashionMNIST

(train_input, train_target), (test_input,test_target) = keras.datasets.fashion_mnist.load_data()

train_input.shape, train_target.shape

The training data consists of 60,000 grayscale images, each of size (h, w) = (28, 28)

test_input.shape, test_target.shape

Visualize a few samples

fig, axs = plt.subplots(1, 10, figsize=(10, 10))

for i in range(10):
    axs[i].imshow(train_input[i], cmap='gray')
    axs[i].axis('off')

plt.show()

Check some of the target values

[train_target[i] for i in range(10)]

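Meaning of the FashionMNIST labels

| 0 | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 | 9 |
|---|---|---|---|---|---|---|---|---|---|
| T-shirt | Trousers | Sweater | Dress | Coat | Sandal | Shirt | Sneaker | Bag | Ankle boot |

Check the number of samples per label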

np.unique(train_target, return_counts=True)

Classifying with logistic regression

Preprocessing (normalization)

Normalization: scale each pixel value from the range 0~255 to a value between 0 and 1

train_scaled = train_input / 255.0

Flatten each image into a 1-D array

train_scaled = train_scaled.reshape(-1, 28*28)

train_scaled.shape

Check the normalization result

np.min(train_scaled), np.max(train_scaled)

from sklearn.model_selection import cross_validate

from sklearn.linear_model import SGDClassifier

sc = SGDClassifier(loss='log_loss', max_iter=5, random_state=42)

scores = cross_validate(sc, train_scaled, train_target, n_jobs=-1)

np.mean(scores['test_score'])

"""

z = a x (Weight) + b x (Length) + c x (Diagonal) + d x (Height) + e x (Width) + f

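Adapted to the FashionMNIST data, the equation above would look like this.

▶ Linear equation for the first label, 'T-shirt'

z_tshirt = w1 x (pixel1) + w2 x (pixel2) + .... + w784 x (pixel784) + b

▶ Linear equation for the second label, 'Trousers'

z_trousers = w1' x (pixel1) + w2' x (pixel2) + .... + w784' x (pixel784) + b'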

"""

None
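The per-class linear equations above can be computed for all ten classes at once as a matrix product. A minimal NumPy sketch, assuming random values; the names `x`, `W`, and `b` are illustrative and do not come from the notebook:

```python
import numpy as np

rng = np.random.default_rng(42)

x = rng.random(784)              # one flattened 28x28 image, values in [0, 1)
W = rng.normal(size=(10, 784))   # hypothetical weights: one row of 784 per class
b = rng.normal(size=10)          # hypothetical intercepts: one per class

z = W @ x + b                    # z[0] is z_tshirt, z[1] is z_trousers, ...

# Each entry equals the explicit sum w1*pixel1 + ... + w784*pixel784 + b
z_tshirt = sum(W[0, j] * x[j] for j in range(784)) + b[0]
print(z.shape, np.isclose(z[0], z_tshirt))
```

Each class gets its own set of 784 weights and its own intercept, which is why the weight matrix has 10 rows.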

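Artificial neural network (ANN)

Neural networks rarely use cross-validation; instead, a separate validation set is usually held out.

- Reason 1: datasets in deep learning are already large enough (the validation score is stable).

- Reason 2: training takes too long to run cross-validation.

Splitting a validation set off the training data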

from sklearn.model_selection import train_test_split

train_scaled, val_scaled, train_target, val_target = train_test_split(
    train_scaled, train_target, test_size=0.2, random_state=42)

train_scaled.shape, train_target.shape

val_scaled.shape, val_target.shape

Dense layer

The keras.layers package contains a variety of layers.

A Dense layer is densely connected: it links every neuron on one side to every neuron on the other, so it is also called a fully connected layer.

dense = keras.layers.Dense(10, activation='softmax', input_shape=(784,))

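# Dense(number of neurons, function applied to the neurons' output, input size)

10 neurons <= one for each of the 10 fashion items to classify

The input is 784 pixels

Activation function: the function applied to the result of a neuron's linear equation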
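As a rough illustration of what a 10-unit softmax Dense layer computes, here is a NumPy sketch of the forward pass. The helper names `softmax` and `dense_forward` and the random weights are assumptions made for this example, not Keras internals:

```python
import numpy as np

def softmax(z):
    """Turn each row of linear outputs into probabilities that sum to 1."""
    z = z - z.max(axis=1, keepdims=True)   # subtract the row max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=1, keepdims=True)

def dense_forward(x, W, b):
    """Linear equation per neuron, followed by the activation function."""
    return softmax(x @ W + b)

rng = np.random.default_rng(0)
x = rng.random((4, 784))                     # 4 flattened images
W = rng.normal(scale=0.01, size=(784, 10))   # hypothetical weights
b = np.zeros(10)                             # hypothetical intercepts

probs = dense_forward(x, W, b)
print(probs.shape)         # (4, 10)
print(probs.sum(axis=1))   # each row sums to 1
```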

Creating the neural network model

model = keras.Sequential([dense])  # create a neural network model containing the dense layer

Classifying with the neural network

Configuration step before training a Keras model

Call compile()

- specify the loss function

- specify the metric to measure

model.compile(loss='sparse_categorical_crossentropy', metrics=['accuracy'])

Keras names the cross-entropy losses as follows

Binary classification -> binary cross-entropy loss -> 'binary_crossentropy'

Multiclass classification -> cross-entropy loss -> 'categorical_crossentropy'

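What does 'sparse' mean?

The binary cross-entropy loss is computed as -log(predicted probability) x target value (the correct answer)

Positive sample (target 1): -log(a) x target

Negative sample (target 0): -log(1-a) x (1-target)

In binary classification the model outputs only the probability (a) of the positive class, so the calculation was simple.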
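The two cases above can be written as a single expression. A small sketch in plain Python; the function name `binary_cross_entropy` is chosen here for illustration:

```python
import math

def binary_cross_entropy(a, target):
    """Loss for one sample; a is the predicted probability of the positive class."""
    return -(target * math.log(a) + (1 - target) * math.log(1 - a))

pos_loss = binary_cross_entropy(0.9, 1)   # positive sample: only -log(a) remains
neg_loss = binary_cross_entropy(0.9, 0)   # negative sample: only -log(1 - a) remains
print(pos_loss, neg_loss)
```

A confident correct prediction gives a small loss, while the same confidence on a negative sample gives a large one.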

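But what about FashionMNIST?

Let a1 be the probability that the sample is a T-shirt, a2 the probability that it is trousers, and so on. For a T-shirt sample:

a1 ----> -log(a1) x target ---> -log(a1)

a2 ----> -log(a2) x target ---> 0

.. ..... ....

a10 ----> -log(a10) x target ---> 0

Unlike binary classification, a probability is output for every class, so to keep only the probability

that corresponds to the target, all the other probabilities are multiplied by 0.

To do this, the target of a T-shirt sample can be turned into an array whose first element is 1 and whose other elements are all 0.

[1, 0, 0, 0, 0, 0, 0, 0, 0, 0]

Building an array of 1s and 0s like this is called one-hot encoding.

This array is then multiplied element-wise with the array of output-layer activations.

[a1, a2, ....., a10] x [1, 0, 0, 0, 0, 0, 0, 0, 0, 0]

Trousers = [0, 1, 0, 0, 0, 0, 0, 0, 0, 0]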

train_target[:10]

However, TensorFlow can use integer target values directly, without converting them to one-hot encoding.

The loss that computes cross-entropy from integer target values is

'sparse_categorical_crossentropy'
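To see that the one-hot and the integer-target versions give the same loss, here is a small NumPy sketch. It uses 3 classes instead of 10 for brevity, and the probabilities are made up for the example:

```python
import numpy as np

probs = np.array([
    [0.7, 0.1, 0.2],   # sample 0: predicted probabilities for 3 classes
    [0.2, 0.5, 0.3],   # sample 1
])
int_targets = np.array([0, 1])   # integer labels, in the same form as train_target

# Categorical cross-entropy: multiply by the one-hot array so only the target term survives
one_hot = np.eye(3)[int_targets]
cce = -(one_hot * np.log(probs)).sum(axis=1)

# Sparse version: pick the target probability directly by its integer index
sparse_cce = -np.log(probs[np.arange(len(int_targets)), int_targets])

print(np.allclose(cce, sparse_cce))   # True
```

The sparse form skips building the one-hot array, which is the only difference between the two.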

model.fit(train_scaled, train_target, epochs=5)

Epoch 1/5

1500/1500 [==============================] - 4s 2ms/step - loss: 0.6069 - accuracy: 0.7947

1500 is the number of batches per epoch

↑ The score is better than the one from logistic regression
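The 1500 in the progress bar follows from the size of the training split and the default batch size. A quick check, assuming the 80/20 split made earlier and the fit() default of 32:

```python
import math

n_train = 48000     # 60000 samples minus the 20% validation split
batch_size = 32     # default batch_size of fit()

steps_per_epoch = math.ceil(n_train / batch_size)
print(steps_per_epoch)   # 1500
```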

Check the model's performance on the validation set

evaluate()

model.evaluate(val_scaled, val_target)

[0.45262545347213745, 0.8464999794960022] <= [loss, metrics score]
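As a rough sketch of what the two returned numbers mean, the loss and the accuracy can be computed by hand from predicted probabilities. The arrays below are made up for illustration (3 classes, 4 samples) and are not model output:

```python
import numpy as np

probs = np.array([
    [0.1, 0.8, 0.1],
    [0.6, 0.3, 0.1],
    [0.2, 0.2, 0.6],
    [0.5, 0.4, 0.1],
])
targets = np.array([1, 0, 2, 1])

# Mean sparse categorical cross-entropy over the samples
loss = -np.log(probs[np.arange(len(targets)), targets]).mean()

# Accuracy: fraction of samples whose most probable class equals the target
accuracy = (probs.argmax(axis=1) == targets).mean()
print(loss, accuracy)
```

Here the last sample is misclassified, so the accuracy is 3 out of 4.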

[Summary]

dense = keras.layers.Dense(10, activation='softmax', input_shape=(784,))

Model

model = keras.Sequential([dense])

model.compile(loss='sparse_categorical_crossentropy', metrics=['accuracy'])

Training

model.fit(train_scaled, train_target, epochs=5)

Evaluation

model.evaluate(val_scaled, val_target)

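Deep neural network

Adding several layers

Hidden layer: every layer between the input layer and the output layer

Activation function

: the function applied to the result of the linear equation

The choice of activation function for the output layer is limited

Binary classification -> sigmoid, multiclass classification -> softmax ★ (the activation functions used by classification models)

In contrast, a variety of activation functions can be applied to hidden layers!

sigmoid, ReLU, tanh, ...

Note] Activation function for the output layer of a regression model

None is needed, because the output of a regression is an arbitrary number.

The result of the output layer's linear equation is output as is.

Do not pass a value for the activation= parameter of the Dense layer.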

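Why do hidden layers need an activation function?

Consider two linear equations

a x 4 + 2 = b

b x 3 - 5 = c

Substituting the first equation for b gives -> a x 12 + 1 = c

Merged like this, b disappears!!

The same goes for neural networks. If the hidden layers perform only linear arithmetic, they effectively play no role.

The linear computation has to be bent nonlinearly by a suitable amount.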

a x 4 + 2 = b

log(b) = k

k x 3 - 5 = c
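The collapse of two linear steps into one can be checked numerically. In this sketch a sigmoid is used as the nonlinearity instead of the log shown above, since log is undefined for negative b; that substitution is an assumption made for the example:

```python
import numpy as np

a = np.linspace(-2.0, 2.0, 9)

# Two stacked linear steps ...
b = a * 4 + 2
c_stacked = b * 3 - 5

# ... are exactly one linear step
c_merged = a * 12 + 1
print(np.allclose(c_stacked, c_merged))   # True

# With a nonlinearity in between, no single a * w + b reproduces c
k = 1 / (1 + np.exp(-b))
c_nonlinear = k * 3 - 5
slopes = np.diff(c_nonlinear) / np.diff(a)
print(np.allclose(slopes, slopes[0]))     # False: the slope is no longer constant
```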

A hidden layer using the sigmoid function and an output layer using the softmax function

dense1 = keras.layers.Dense(100, activation='sigmoid', input_shape=(784,))

dense2 = keras.layers.Dense(10, activation='softmax')

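How many neurons should the hidden layer have?

There is no fixed rule. Judging this takes considerable experience...

As a rule of thumb, though, it should have more neurons than the output layer. With fewer than 10 neurons in the hidden layer, too little information would be passed on.

Building a deep neural network (DNN)

model = keras.Sequential([dense1, dense2])  # Note! The output layer must come last.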

Keras can print information about the model's layers.

model.summary()

"""

↑ The layers are listed in the order in which they appear in the model

For each layer, the name, class, output shape, and number of model parameters are printed.

"""

None

Parameters of dense1

78500 = (784 inputs + 1 bias) x 100

Parameters of dense2

1010 = (100 inputs + 1 bias) x 10
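The same counts can be reproduced with a small helper. The function name `dense_params` is made up for this sketch:

```python
def dense_params(n_inputs, n_units):
    """Each unit has one weight per input plus one bias."""
    return (n_inputs + 1) * n_units

hidden = dense_params(784, 100)   # 78500
output = dense_params(100, 10)    # 1010
total = hidden + output           # 79510
print(hidden, output, total)
```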

Another way to add layers to the Sequential class

model = keras.Sequential([
    keras.layers.Dense(100, activation='sigmoid', input_shape=(784,), name='hidden'),
    keras.layers.Dense(10, activation='softmax', name='output')
], name='Fashion MNIST model')

model.summary()

Adding layers with add()

model = keras.Sequential()

model.add(keras.layers.Dense(100, activation='sigmoid', input_shape=(784,), name='hidden'))

model.add(keras.layers.Dense(10, activation='softmax', name='output'))

model.summary()

model.compile(loss='sparse_categorical_crossentropy', metrics=['accuracy'])

model.fit(train_scaled, train_target, epochs=5)

"""

Epoch 1/5

1500/1500 [==============================] - 4s 2ms/step - loss: 0.6069 - accuracy: 0.7947

Epoch 2/5

1500/1500 [==============================] - 3s 2ms/step - loss: 0.4742 - accuracy: 0.8382

Epoch 3/5

1500/1500 [==============================] - 3s 2ms/step - loss: 0.4508 - accuracy: 0.8474

Epoch 4/5

1500/1500 [==============================] - 3s 2ms/step - loss: 0.4367 - accuracy: 0.8527

Epoch 5/5

1500/1500 [==============================] - 3s 2ms/step - loss: 0.4280 - accuracy: 0.8555

<keras.src.callbacks.History at 0x798055148760>

Epoch 1/5

1500/1500 [==============================] - 4s 3ms/step - loss: 0.5711 - accuracy: 0.8067

Epoch 2/5

1500/1500 [==============================] - 5s 4ms/step - loss: 0.4134 - accuracy: 0.8506

Epoch 3/5

1500/1500 [==============================] - 4s 3ms/step - loss: 0.3792 - accuracy: 0.8641

Epoch 4/5

1500/1500 [==============================] - 4s 2ms/step - loss: 0.3548 - accuracy: 0.8719

Epoch 5/5

1500/1500 [==============================] - 5s 3ms/step - loss: 0.3364 - accuracy: 0.8771

<keras.src.callbacks.History at 0x7980544cb670>

"""

None

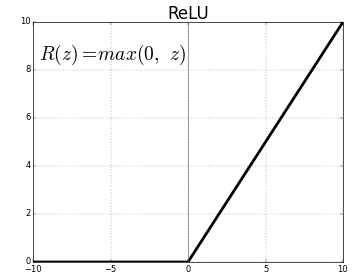

ReLU activation function

An activation function widely used for image classification; it performs very well in DNNs.
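For reference, ReLU simply passes positive inputs through and clips negative ones to 0, whereas the sigmoid flattens out for large inputs. A minimal NumPy comparison, with function names chosen for this sketch:

```python
import numpy as np

def relu(z):
    return np.maximum(0, z)

def sigmoid(z):
    return 1 / (1 + np.exp(-z))

z = np.array([-3.0, -0.5, 0.0, 2.0, 10.0])

relu_out = relu(z)         # [0, 0, 0, 2, 10]: positive values are kept as is
sigmoid_out = sigmoid(z)   # squeezed into (0, 1); nearly 1 for z = 10
print(relu_out, sigmoid_out)
```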

Flatten layer

Flattens all input dimensions except the batch dimension into a single row

It has no weights or intercepts that multiply the input, so it is not subject to training.
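In NumPy terms, the effect of Flatten is roughly a reshape that keeps the batch axis. A sketch with a dummy batch of zeros:

```python
import numpy as np

batch = np.zeros((32, 28, 28))             # a batch of 32 images
flat = batch.reshape(batch.shape[0], -1)   # keep the batch axis, flatten the rest
print(flat.shape)                          # (32, 784)
```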

model = keras.Sequential()

model.add(keras.layers.Flatten(input_shape=(28,28)))

model.add(keras.layers.Dense(100, activation='relu'))

model.add(keras.layers.Dense(10, activation='softmax'))

model.summary()

"""

Advantages of using Flatten

- The dimensions of the input can be read off from the model.

- Keras API philosophy: include the preprocessing of input data in the model wherever possible

"""

None

(train_input, train_target), (test_input, test_target) = keras.datasets.fashion_mnist.load_data()

train_scaled = train_input / 255.0

train_scaled, val_scaled, train_target, val_target = train_test_split(
    train_scaled, train_target, test_size=0.2, random_state=42)

Compile and train the model

model.compile(loss='sparse_categorical_crossentropy', metrics=['accuracy'])

model.fit(train_scaled, train_target, epochs=5)

model.evaluate(val_scaled, val_target)

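Hyperparameters

These are not parameters that the model learns;

they are parameters a person must set before training.

Neural networks have many hyperparameters

(within what has been covered so far, they include the following)

- number of hidden layers to add

- number of neurons in each layer

- activation function

- type of layer

- batch size: the batch_size= parameter of fit()

- epochs: the epochs= parameter of fit()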

Optimizer

- the gradient descent algorithm(s) provided by Keras

By default, Keras uses mini-batch gradient descent

The default mini-batch size is 32 samples

It can be adjusted with the batch_size parameter of fit()
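A rough sketch of how one epoch is cut into mini-batches. The generator `iterate_minibatches` is a hypothetical helper written for this example, not what Keras runs internally:

```python
import numpy as np

def iterate_minibatches(X, y, batch_size=32, seed=0):
    """Shuffle once, then yield consecutive slices of batch_size samples."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(X))
    for start in range(0, len(X), batch_size):
        idx = order[start:start + batch_size]
        yield X[idx], y[idx]

X = np.arange(100 * 4, dtype=float).reshape(100, 4)   # 100 dummy samples
y = np.arange(100)

batches = list(iterate_minibatches(X, y, batch_size=32))
sizes = [len(xb) for xb, _ in batches]
print(sizes)   # [32, 32, 32, 4]: the last batch holds the remainder
```

The weights are updated once per mini-batch, so a smaller batch_size means more updates per epoch.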

The gradient descent algorithm is set with the optimizer= parameter of compile()

The default is RMSprop.

Keras provides many kinds of gradient descent algorithms, which are called optimizers

Which optimizer to choose is also a hyperparameter.

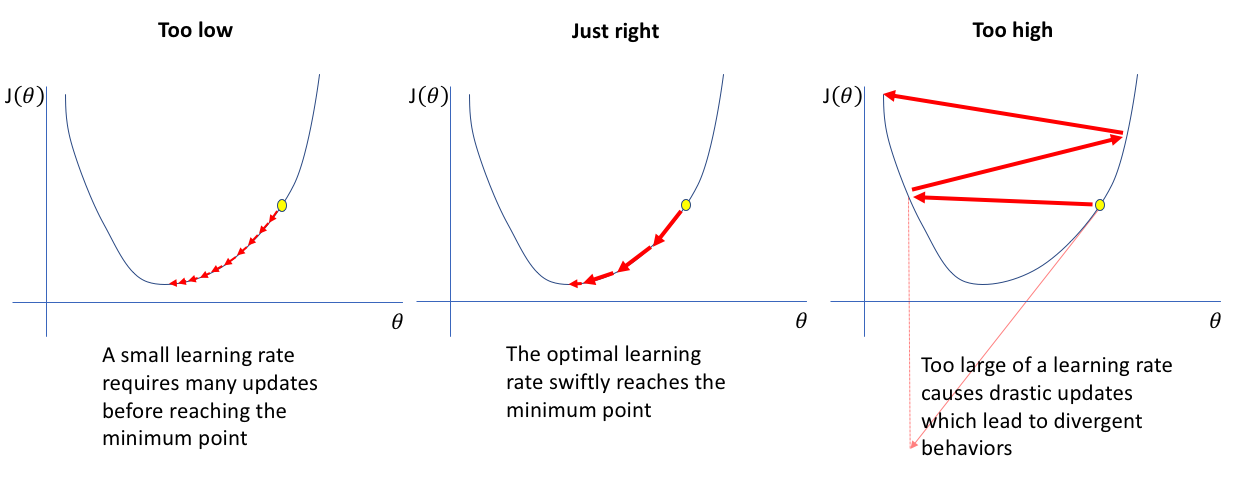

The learning rate of the gradient descent algorithm is another tunable hyperparameter.

- Convex function

- Non-convex function

- local minimum

- saddle point problem

- momentum

- learning rate
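To get a feel for the learning rate and momentum, here is a toy sketch that minimises the convex function f(w) = w**2 with a common momentum update rule. The function name, the starting point, and the chosen values are assumptions for illustration only:

```python
def gradient_descent(learning_rate, momentum=0.0, steps=50, w0=5.0):
    """Minimise f(w) = w**2 (gradient 2*w) using a momentum-style update."""
    w, velocity = w0, 0.0
    for _ in range(steps):
        grad = 2 * w
        velocity = momentum * velocity - learning_rate * grad
        w = w + velocity
    return w

small_lr = gradient_descent(learning_rate=0.01)    # moves toward 0, but slowly
good_lr = gradient_descent(learning_rate=0.1)      # ends very close to the minimum at 0
too_big = gradient_descent(learning_rate=1.1)      # overshoots further on every step
with_momentum = gradient_descent(learning_rate=0.01, momentum=0.9)

print(small_lr, good_lr, too_big, with_momentum)
```

With the same small learning rate, adding momentum ends closer to the minimum within the same number of steps, while a learning rate that is too large diverges.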

SGD optimizer

Although its name says stochastic,

it does not train on one sample at a time.

It, too, uses mini-batches

model.compile(optimizer='sgd', loss='sparse_categorical_crossentropy', metrics=['accuracy'])

The optimizer classes live under the tensorflow.keras.optimizers package.

Passing the string 'sgd' as above uses an SGD object created with its default parameters.

The same code can also be written like this

sgd = keras.optimizers.SGD()

model.compile(optimizer=sgd, loss='sparse_categorical_crossentropy', metrics=['accuracy'])

Learning rate

Set with the learning_rate= parameter (default 0.01)

sgd = keras.optimizers.SGD(learning_rate=0.1)

Various optimizers

See the slides

adagrad = keras.optimizers.Adagrad()  # parameters can be passed to the constructor

model.compile(optimizer=adagrad, loss='sparse_categorical_crossentropy', metrics=['accuracy'])

rmsprop = keras.optimizers.RMSprop()

model.compile(optimizer=rmsprop, loss='sparse_categorical_crossentropy', metrics=['accuracy'])

Creating and training a model with Adam

model = keras.Sequential()

model.add(keras.layers.Flatten(input_shape=(28,28)))

model.add(keras.layers.Dense(100, activation='relu'))

model.add(keras.layers.Dense(10, activation='softmax'))

model.compile(optimizer='adam', loss='sparse_categorical_crossentropy', metrics=['accuracy'])

model.fit(train_scaled, train_target, epochs=5)

model.evaluate(val_scaled, val_target)