Multiple Linear Regression and Matrix Operations

This post looks at how to handle data when there are several input variables ($x$), with a focus on how to make the matrix dimensions line up.

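1. What Is Multiple Linear Regression?

- The case where there are several input features ($x_1, x_2, \dots, x_n$) rather than just one

- Where simple regression finds a 'line' in a 2D plane, multiple regression finds a 'plane' (hyperplane) in a higher-dimensional space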

Equation: $y = w_1 x_1 + w_2 x_2 + \dots + w_n x_n + b$

Matrix form: $Y = XW + b$

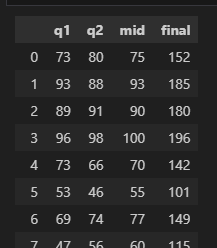
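As a minimal sketch of how the term-by-term form and the matrix form relate, the NumPy snippet below computes the same predictions both ways. The feature values, weights, and intercept are made-up numbers chosen only for illustration; they do not come from any dataset used in this post.

```python
import numpy as np

# Hypothetical data: N = 2 samples, n = 3 features (illustrative values only)
X = np.array([[1.0, 2.0, 3.0],
              [4.0, 5.0, 6.0]])      # shape (2, 3)
W = np.array([[0.5], [1.0], [2.0]])  # shape (3, 1): one weight per feature
b = 1.0                              # intercept

# Term-by-term form: y = w1*x1 + w2*x2 + w3*x3 + b, one sample at a time
y_sum = np.array([[sum(W[j, 0] * X[i, j] for j in range(3)) + b]
                  for i in range(2)])

# Matrix form: Y = XW + b, all samples at once
y_mat = X @ W + b

print(y_mat.shape)                # (2, 1)
print(np.allclose(y_sum, y_mat))  # True
```

The matrix form is what lets one call handle any number of samples: adding rows to X changes N but leaves W untouched.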

2. Example: Predicting the Final Exam from Quiz Scores (3 Variables)

Predict the final exam score (final) from three earlier test scores (q1, q2, mid).

1) Data preparation

import pandas as pd

import numpy as np

from sklearn.linear_model import LinearRegression

import matplotlib.pyplot as plt

# Load the data (the file has no header row)

df = pd.read_csv('data-01.csv', header=None)

df.columns = ['q1', 'q2', 'mid', 'final']

df.head()

2) Training (Fit)

There are three independent variables $x$ (q1, q2, mid) and one dependent variable $y$ (final).
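The slicing used in this step depends on a detail that is easy to miss: indexing with `-1` gives a 1D array, while indexing with `[-1]` keeps a 2D column. The sketch below shows the difference on a tiny DataFrame whose scores are hypothetical and exist only to make the shapes visible.

```python
import pandas as pd

# Hypothetical scores, used only to show the resulting shapes
df = pd.DataFrame({
    'q1': [70, 80, 90],
    'q2': [75, 85, 95],
    'mid': [72, 88, 91],
    'final': [150, 170, 185],
})

x = df.iloc[:, :-1].values      # every column except the last
y_1d = df.iloc[:, -1].values    # integer index: a 1D array
y_2d = df.iloc[:, [-1]].values  # list index: a 2D array with one column

print(x.shape)     # (3, 3)
print(y_1d.shape)  # (3,)
print(y_2d.shape)  # (3, 1)
```

Scikit-learn accepts either form for y. The 2D form is what makes coef_ and intercept_ print with the extra brackets seen in this example.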

# X: every column except the last (3 features)

# Y: the last column (1 label)

x = df.iloc[:, :-1].values

y = df.iloc[:, [-1]].values

model = LinearRegression()

model.fit(x, y)

# Check the learned weights (w) and intercept (b)

print('Coefficients:', model.coef_)

print('Intercept:', model.intercept_)

# Example output

# Coefficients: [[0.35593822 0.54251876 1.16744422]] -> 3 variables, so 3 weights

# Intercept: [-4.3361024]

3) Prediction (Predict) and Verifying the Matrix Operation

Q. What is the predicted final exam score for a student with q1: 90, q2: 90, mid: 95?

The key at prediction time is matching the shape of the input data.
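To make the shape requirement concrete, here is a small NumPy-only sketch of the difference between a flat list of three scores and a one-row table of three features. Only the three scores come from the question above; the variable names are illustrative.

```python
import numpy as np

scores = [90, 90, 95]

flat = np.array(scores)                    # shape (3,): a 1D vector, not a row of a table
row = np.array([scores])                   # shape (1, 3): 1 sample, 3 features
row_alt = np.array(scores).reshape(1, -1)  # same result via reshape

print(flat.shape, row.shape, row_alt.shape)  # (3,) (1, 3) (1, 3)
```

Scikit-learn estimators expect the (1, 3) form; passing the flat (3,) form to predict is rejected with a ValueError about expecting a 2D array.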

# The model was trained with X of shape (N, 3),

# so the prediction input must have shape (1, 3) (note the double brackets [[ ]])

model.predict([[90, 90, 95]])

# Result

# array([[187.43222601]])

3. Key Takeaways: Machine Learning and Matrix Dimensions (Shape)

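- For a matrix product (matmul) to be valid, the dimensions of each piece of data follow these rules.

- Weights ($W$, the slopes): shape [number of columns (features), number of labels]. In this example: 3 features, 1 label, so (3, 1)

- Feature data ($X$): shape [number of samples (rows), number of columns]

- Label data ($Y$): shape [number of samples (rows), number of labels (columns)] (usually 1 column)

- At prediction time (input $X$): the input must have shape [rows, number of columns]. How the computation works: $(1, 3) \times (3, 1) \rightarrow (1, 1)$, which yields a single scalar prediction

- Tip: Scikit-learn handles details such as the transpose internally (model.coef_ is stored as (1, 3) here, the transpose of the conceptual (3, 1)), but conceptually, always remember the rule that "the number of columns in the left matrix must equal the number of rows in the right matrix for the product to be defined."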

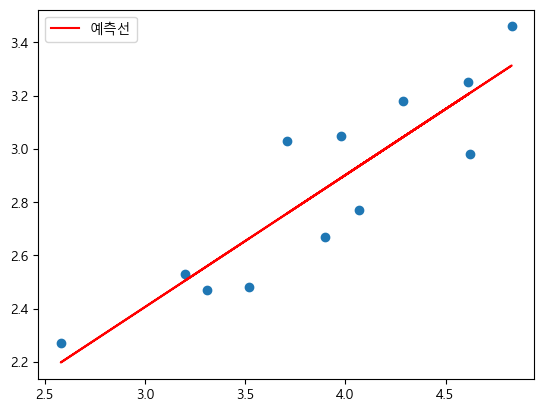
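The shape rules above can be checked by hand with NumPy. The sketch below reuses the coefficients and intercept printed in the section 2 example and reproduces the prediction as a plain matrix product; it also shows what happens when the inner dimensions do not match. The variable names are illustrative.

```python
import numpy as np

# Values copied from the example output in section 2
W = np.array([[0.35593822, 0.54251876, 1.16744422]]).T  # coef_ is (1, 3); transpose to (3, 1)
b = np.array([-4.3361024])
X_new = np.array([[90, 90, 95]])                         # (1, 3)

pred = X_new @ W + b   # (1, 3) @ (3, 1) -> (1, 1)
print(pred.shape)      # (1, 1)
print(pred)            # approximately [[187.4322]]

# Mismatched inner dimensions fail: (1, 3) @ (1, 3) is not defined
try:
    X_new @ W.T
except ValueError as err:
    print('shape error:', err)
```

The result agrees with model.predict([[90, 90, 95]]) up to the rounding of the printed coefficients.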

4. Example 1: Electricity Production vs. Usage (Simple Regression Review)

- With a single input variable (n=1), a 2D plot shows the fit intuitively

# 1. Prepare the data

elecDF = pd.read_csv('data/electric.csv', index_col='Unnamed: 0')

x = elecDF.iloc[:, :-1].values # production

y = elecDF.iloc[:, [-1]].values # usage

# 2. Train the model

model = LinearRegression()

model.fit(x, y)

print(f"Shape check - x:{x.shape}, y:{y.shape}")

# Shape check - x:(12, 1), y:(12, 1)

print(f"slope:{model.coef_}, intercept:{model.intercept_}")

# slope:[[0.49560324]], intercept:[0.91958143]

# 3. Predict (for production values of 4 and 5)

# Two samples are predicted, so the input is a (2, 1) matrix

print(model.predict([[4], [5]]))

# [[2.90199437]

#  [3.39759761]]

# 4. Visualize

plt.scatter(x, y, label='Actual')

plt.plot(x, model.predict(x), 'r', label='Fitted line') # regression line

plt.legend()

plt.show()

5. Example 2: Predicting Tree Volume (Multiple Regression)

- Predict a tree's volume (Volume) from its girth (Girth) and height (Height). (2 input variables, 1 output)

# 1. Prepare the data

treeDF = pd.read_csv('data/trees.csv')

# The file has Girth, Height, and Volume columns

x = treeDF.iloc[:, :-1].values # Girth, Height (2 features)

y = treeDF.iloc[:, [-1]].values # Volume (label)

# 2. Train the model

model = LinearRegression()

model.fit(x, y)

# 3. Predict

# Case: what is the volume of trees with (girth 11, height 66) and (girth 11, height 75)?

# Input data shape: (2, 2)

result = model.predict([[11, 66], [11, 75]])

print(result)

# Result

# [[16.19268807]

#  [19.24594918]]

# 4. Visualize (line chart comparison)

# Plotting a two-feature regression directly would need a 3D chart,

# so draw the actual values (y) and the predictions (pred) together and compare the trends.

pred = model.predict(x)

plt.plot(y, 'b', label='Actual (Volume)')

plt.plot(pred, 'r--', label='Predicted')

plt.legend()

plt.title("Actual vs Predicted Volume")

plt.show()

Interpretation: if the solid blue line (actual) and the dashed red line (predicted) move together closely, the model fits the training data well.
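The visual comparison can be backed by a number. The sketch below is one possible way to do that, not part of the original example: it computes R² and RMSE with NumPy. The function name r2_and_rmse and the demo values are hypothetical and chosen only for illustration.

```python
import numpy as np

def r2_and_rmse(y_true, y_pred):
    """Return (R^2, RMSE) for two arrays holding the same number of values."""
    y_true = np.asarray(y_true, dtype=float).ravel()
    y_pred = np.asarray(y_pred, dtype=float).ravel()
    ss_res = np.sum((y_true - y_pred) ** 2)         # variation the model leaves unexplained
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)  # total variation around the mean
    r2 = 1.0 - ss_res / ss_tot
    rmse = np.sqrt(np.mean((y_true - y_pred) ** 2))
    return r2, rmse

# Hypothetical values, for illustration only
y_demo = [10.0, 20.0, 30.0, 40.0]
pred_demo = [12.0, 18.0, 31.0, 39.0]
r2, rmse = r2_and_rmse(y_demo, pred_demo)
print(round(r2, 3), round(rmse, 3))  # 0.98 1.581
```

With the fitted model from this example the call would be r2_and_rmse(y, pred). An R² close to 1 corresponds to the two lines tracking each other closely; model.score(x, y) in scikit-learn reports the same R² value.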

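Summary

- Multiple linear regression is used when there are several input variables ($x$).

- The number of learned weights ($w$) equals the number of input variables.

- When calling the predict method, pass a 2D array with the same number of columns as the training data.

- Understanding the matrix-product rule ($(N, n) \times (n, 1) \rightarrow (N, 1)$) makes shape errors much easier to avoid.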