Last time, I ran an LLM server in LM Studio and used it to drive a chatbot in Obsidian.

In this post, I connect LangChain to that LLM server from Python code and run it.

Installing the required modules

    pip install langchain langchain_openai

Connecting to the LM Studio server

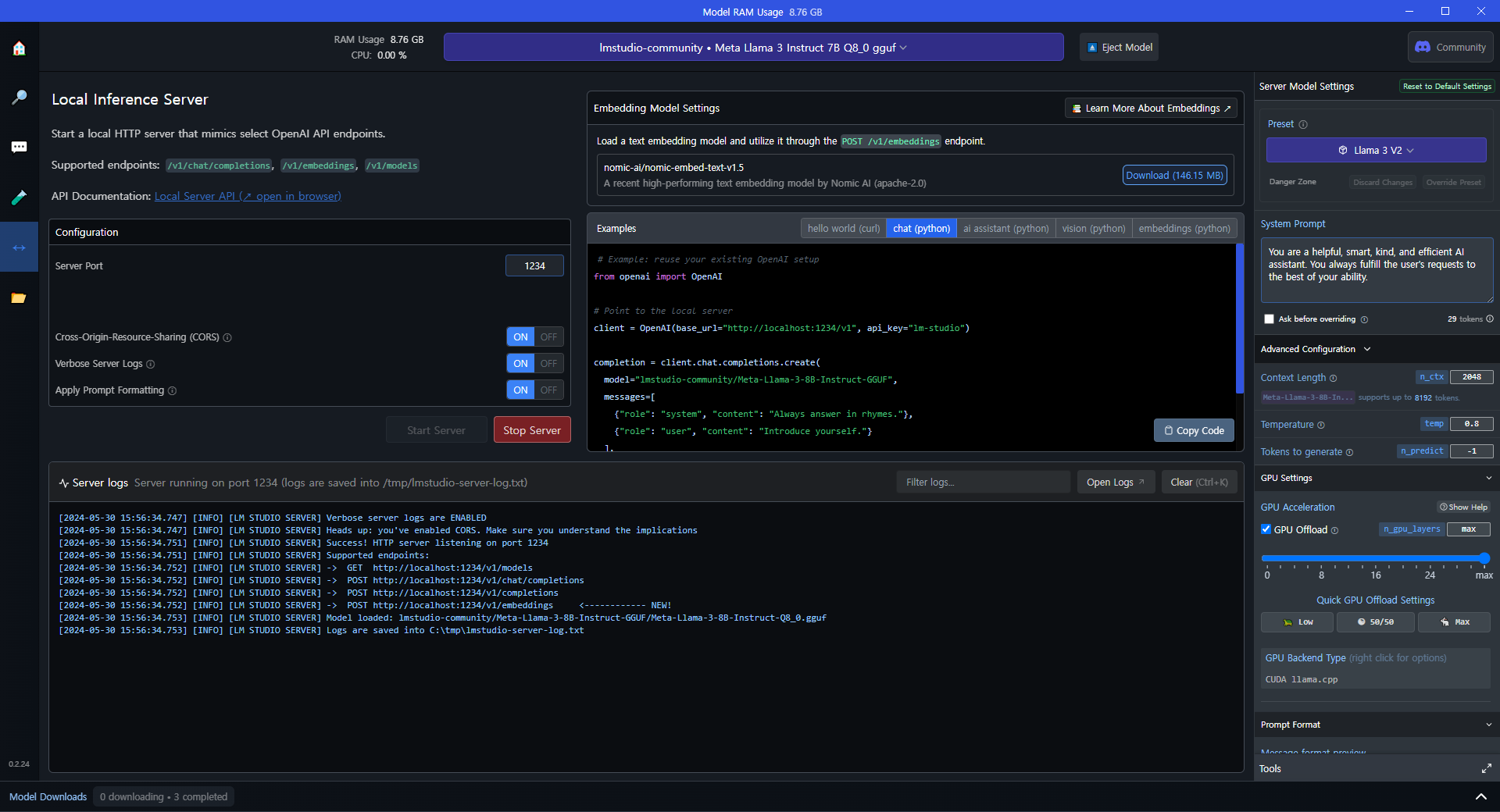

- First, start the local LLM server in LM Studio.

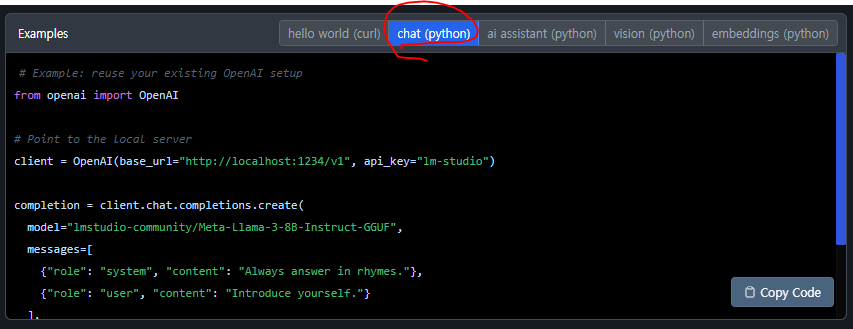

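- If you look closely at the code pane, it shows the server URL and the API key.

- Using that URL and API key, write code like the following.

- The model parameter is the name of the LLM model loaded on the local server.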
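As a side check before writing that code, the model name can also be read from the server itself. The sketch below assumes the LM Studio server exposes the OpenAI-style model listing at GET {base_url}/models and that the response has the usual shape with a "data" list of entries carrying an "id". The helper names extract_model_ids and list_model_ids, and the sample payload, are hypothetical and only for illustration.

```python
import json
from urllib.request import urlopen

BASE_URL = "http://localhost:1234/v1"  # same base_url used in this post


def extract_model_ids(payload: dict) -> list[str]:
    """Return the id of every entry in an OpenAI-style model list response."""
    return [entry["id"] for entry in payload.get("data", [])]


def list_model_ids(base_url: str = BASE_URL, timeout: float = 5.0) -> list[str]:
    """Ask the running server for its models (assumes GET {base_url}/models exists)."""
    with urlopen(f"{base_url}/models", timeout=timeout) as resp:
        return extract_model_ids(json.load(resp))


# Hypothetical response body, shaped like an OpenAI-style model list.
sample = {
    "object": "list",
    "data": [
        {"id": "lmstudio-community/Meta-Llama-3-8B-Instruct-GGUF", "object": "model"},
    ],
}
print(extract_model_ids(sample))
```

With the server running, calling list_model_ids() should return the names that can be passed as the model parameter, provided the endpoint behaves as assumed here.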

    from langchain_openai import ChatOpenAI
    from langchain_core.callbacks.streaming_stdout import StreamingStdOutCallbackHandler

    llm = ChatOpenAI(
        base_url="http://localhost:1234/v1",  # server URL shown in LM Studio
        api_key="lm-studio",  # API key shown in LM Studio
        model="lmstudio-community/Meta-Llama-3-8B-Instruct-GGUF",  # model loaded on the local server
        temperature=0.1,
        streaming=True,
        callbacks=[StreamingStdOutCallbackHandler()],  # callback that prints streamed output to stdout
    )

Writing a prompt

- For a simple prompt, ChatPromptTemplate.from_template is enough.

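    from langchain_core.prompts import ChatPromptTemplate
    from langchain_core.output_parsers import StrOutputParser

    prompt = ChatPromptTemplate.from_template(
        "{input} 한국어로 답변해줘."  # "Please answer in Korean."
    )

    chain = prompt | llm | StrOutputParser()

    response = chain.invoke("안녕!")  # "Hi!"
    # Equivalent call that names the template variable explicitly:
    # response = chain.invoke({'input': "안녕!"})

- Because the LLM was created with streaming=True and a callback, the output is printed automatically, piece by piece, while the chain is still running.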

    안녕하세요! 😊한국어로 답변하겠습니다. 무엇을 도와드릴까요? 🤔

- When streaming=False, you can still stream the output explicitly as follows.

    for t in chain.stream("안녕!"):
        print(t, end='')

- So what does StrOutputParser() do? Let's comment it out and compare.

    chain = prompt | llm | StrOutputParser()
    response = chain.invoke("안녕!")
    response

    '안녕하세요! 😊한국어로 답변하겠습니다. 무엇을 도와드릴까요? 🤔'

    chain = prompt | llm  # | StrOutputParser()
    response = chain.invoke("안녕!")
    response

    AIMessage(content='안녕하세요! 😊한국어로 답변하겠습니다! 무엇을 도와드릴까요? 🤔', response_metadata={'token_usage': {'completion_tokens': 20, 'prompt_tokens': 56, 'total_tokens': 76}, 'model_name': 'lmstudio-community/Meta-Llama-3-8B-Instruct-GGUF', 'system_fingerprint': None, 'finish_reason': 'stop', 'logprobs': None}, id='run-da48ac13-8be5-47d2-9d63-b86b462dbc09-0')

- With StrOutputParser(), the chain returns only the message content as a plain string. Without it, the chain returns the whole AIMessage, including the response metadata.

Writing a prompt 2

- Next, let's look at how to write a system prompt.

- As in the code below, use from_messages instead of from_template.

prompt = ChatPromptTemplate.from_messages([

("system", "You are a helpful, smart, kind, and efficient AI assistant. You always fulfill the user's requests to the best of your ability. You must always answer in Korean."),

("user", "{input}")

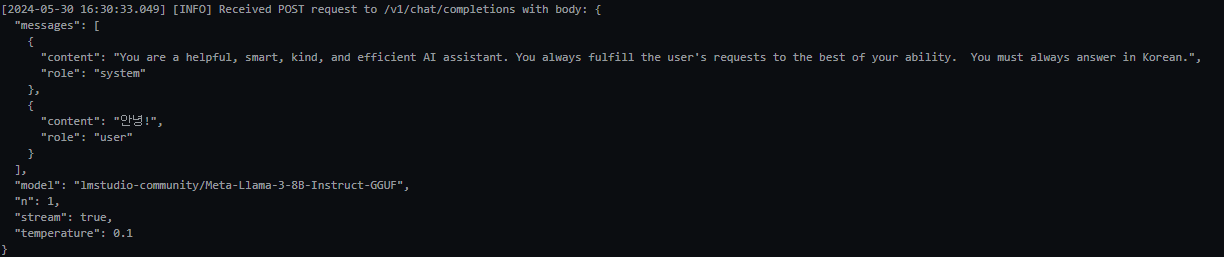

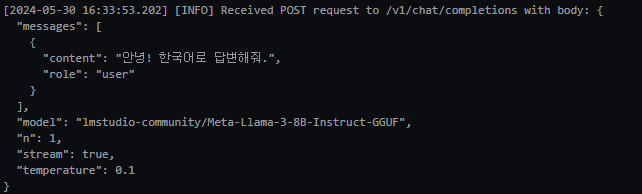

])- lm-studio 로그를 보면서 비교해보자.

- 아래는 from_template을 사용했을때의 로그이다.

- 비교 해보니 from_template은 user의 프롬프트를 수정한다.
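The difference between the two templates can be sketched in plain Python. This is only an approximation of the role/content messages that end up in the chat request, not LangChain's actual implementation. The function names render_from_template and render_from_messages are hypothetical, and the strings are the ones used in this post.

```python
SYSTEM_PROMPT = (
    "You are a helpful, smart, kind, and efficient AI assistant. "
    "You always fulfill the user's requests to the best of your ability. "
    "You must always answer in Korean."
)


def render_from_template(user_input: str) -> list[dict]:
    """Approximates from_template: one user message with the instruction appended."""
    content = "{input} 한국어로 답변해줘.".format(input=user_input)
    return [{"role": "user", "content": content}]


def render_from_messages(user_input: str) -> list[dict]:
    """Approximates from_messages: a system message plus the untouched user input."""
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": "{input}".format(input=user_input)},
    ]


for name, render in [("from_template", render_from_template),
                     ("from_messages", render_from_messages)]:
    print(name)
    for message in render("안녕!"):
        print("  ", message["role"], "->", message["content"])
```

In the first case the user message is changed by the template. In the second, the user message is exactly what was typed, and the instruction travels in its own system message.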