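I have mostly worked with TensorFlow and scikit-learn, so PyTorch still feels unfamiliar. Even so, the libraries have a lot in common, and I learned a great deal while working through the assignments (thank you, Budeok...).

After these lecture notes, I plan to write up the noteworthy parts of the assignments in the next post. Enough chatter.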

💡 torch.nn.Module

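1. torch.nn.Module

- Think of it as the base class of the layers that make up a deep learning model. A module can be a building block of another module, and a single module is a collection that bundles several pieces of functionality together.

- Defines Input, Output, Forward, and Backward

- Backward is mostly used for Autograd (automatic differentiation: it produces the gradients for the corresponding weights). Backward will be covered mainly in the next post.

- Defines the parameters (tensors) that are the target of training

- A module consists of an input, a process (formula) that runs on that input, and the output it produces.

- In backward, gradients are computed with respect to the W and b set inside the module rather than with respect to the input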
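The points above can be sketched with a small module built out of other modules. This is only an illustration under assumptions: `TwoLayerNet` and its sizes are hypothetical names chosen for this example, not something from the lecture.

```python
import torch
from torch import nn

class TwoLayerNet(nn.Module):  # hypothetical example module
    def __init__(self, in_dim, hidden_dim, out_dim):
        super().__init__()
        # Modules used as building blocks of another module
        self.fc1 = nn.Linear(in_dim, hidden_dim)
        self.fc2 = nn.Linear(hidden_dim, out_dim)

    def forward(self, x):
        # input -> process (formula) -> output
        return self.fc2(torch.relu(self.fc1(x)))

net = TwoLayerNet(7, 4, 5)
x = torch.randn(3, 7)
out = net(x)

# backward fills .grad for the W and b inside the module, not for the input
out.sum().backward()
param_names = [name for name, _ in net.named_parameters()]
print(param_names)  # ['fc1.weight', 'fc1.bias', 'fc2.weight', 'fc2.bias']
print(x.grad)       # None
```

The input `x` gets no gradient here because it was created without `requires_grad=True`; only the registered parameters are tracked.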

2. nn.Parameter

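- A subclass of Tensor

- When assigned as an attribute of an nn.Module, it has requires_grad=True and becomes a Tensor that is a target of training

- The layers we use usually declare their parameters already, so we rarely need to declare them ourselves

- Most layers come with their weights already defined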

import torch
from torch import nn, Tensor

class MyLinear(nn.Module):
    def __init__(self, in_features, out_features, bias=True):
        super().__init__()
        self.in_features = in_features
        self.out_features = out_features
        # nn.Parameter registers these tensors with the module
        self.weights = nn.Parameter(torch.randn(in_features, out_features))
        self.bias = nn.Parameter(torch.randn(out_features))

    def forward(self, x: Tensor):
        return x @ self.weights + self.bias

- To take a 3 X 7 input and turn it into a 3 X 5 output, in_features is 7 and out_features is 5

- So why declare these as Parameter rather than as plain Tensor?

class MyLinear_Tensor(nn.Module):
    def __init__(self, in_features, out_features, bias=True):
        super().__init__()
        self.in_features = in_features
        self.out_features = out_features
        # plain Tensors: not registered as parameters of the module
        self.weights = torch.randn(in_features, out_features)
        self.bias = torch.randn(out_features)

    def forward(self, x: Tensor):
        return x @ self.weights + self.bias

layer = MyLinear(7, 5)
for value in layer.parameters():
    print(value)
# >> each parameter is printed

layer = MyLinear_Tensor(7, 5)
for value in layer.parameters():
    print(value)
# >> nothing is printed: plain Tensors are not registered as parameters,
#    so they are not targets of backpropagation
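The difference between the two declarations can be checked directly by counting what `parameters()` returns. A minimal sketch; the class names `WithParameter` and `WithTensor` are made up for this example.

```python
import torch
from torch import nn

class WithParameter(nn.Module):  # hypothetical name
    def __init__(self, in_features, out_features):
        super().__init__()
        self.weights = nn.Parameter(torch.randn(in_features, out_features))
        self.bias = nn.Parameter(torch.randn(out_features))

    def forward(self, x):
        return x @ self.weights + self.bias

class WithTensor(nn.Module):  # hypothetical name
    def __init__(self, in_features, out_features):
        super().__init__()
        self.weights = torch.randn(in_features, out_features)
        self.bias = torch.randn(out_features)

    def forward(self, x):
        return x @ self.weights + self.bias

x = torch.randn(3, 7)
param_layer = WithParameter(7, 5)
tensor_layer = WithTensor(7, 5)

# Both layers compute the same kind of output (3 X 7 -> 3 X 5) ...
print(param_layer(x).shape)   # torch.Size([3, 5])

# ... but only the Parameter version exposes anything to an optimizer
n_param = len(list(param_layer.parameters()))
n_tensor = len(list(tensor_layer.parameters()))
print(n_param, n_tensor)      # 2 0
print(param_layer.weights.requires_grad, tensor_layer.weights.requires_grad)  # True False
```

Since an optimizer is built from `model.parameters()`, the Tensor version would leave it with nothing to update.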

Backward

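- Computes the derivatives of the Parameters in the layers

- It is a method that is already provided

- The differentiation is done on the loss, i.e. the difference between the Forward result (the model's output = prediction) and the actual value

- The Parameters are updated with the resulting values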

for epoch in range(epochs):
    ......
    # Clear gradient buffers because we don't want any gradient
    # from previous epoch to carry forward
    optimizer.zero_grad()
    # get output from the model, given the inputs
    outputs = model(inputs)
    # get loss for the predicted output
    loss = criterion(outputs, labels)
    print(loss)
    # get gradients w.r.t. the parameters
    loss.backward()
    # update parameters
    optimizer.step()

- optimizer.zero_grad() resets the gradients so that the gradient values from the previous step do not affect the current step

- You should be able to read and understand the code above right away
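Why `zero_grad()` is needed can be seen on a single tensor: `backward()` adds to `.grad` instead of overwriting it. This is a small sketch on a toy tensor `w`, not on the model above.

```python
import torch

w = torch.tensor([1.0], requires_grad=True)

# First backward: d(3*w)/dw = 3
(3 * w).sum().backward()
first = w.grad.clone()

# Second backward without clearing: the new gradient is added, 3 + 3 = 6
(3 * w).sum().backward()
accumulated = w.grad.clone()

# Clearing the buffer first gives the fresh gradient again
w.grad.zero_()
(3 * w).sum().backward()
after_reset = w.grad.clone()

print(first, accumulated, after_reset)  # tensor([3.]) tensor([6.]) tensor([3.])
```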

import numpy as np
import torch

# create dummy data for training
x_values = [i for i in range(11)]
x_train = np.array(x_values, dtype=np.float32)
x_train = x_train.reshape(-1, 1)

y_values = [2*i + 1 for i in x_values]
y_train = np.array(y_values, dtype=np.float32)
y_train = y_train.reshape(-1, 1)

class LinearRegression(torch.nn.Module):
    def __init__(self, inputSize, outputSize):
        super(LinearRegression, self).__init__()
        self.linear = torch.nn.Linear(inputSize, outputSize)

    def forward(self, x):
        out = self.linear(x)
        return out

inputDim = 1        # takes variable 'x'
outputDim = 1       # takes variable 'y'
learningRate = 0.01
epochs = 100

model = LinearRegression(inputDim, outputDim)

##### For GPU #######
if torch.cuda.is_available():
    model.cuda()

criterion = torch.nn.MSELoss()
# The targets of Stochastic Gradient Descent are the model's parameters
optimizer = torch.optim.SGD(model.parameters(), lr=learningRate)

for epoch in range(epochs):
    # The data is small, so every epoch goes through all of it.
    # With more data, a DataLoader should be used instead.
    # (Variable is deprecated since PyTorch 0.4; tensors are used directly.)
    if torch.cuda.is_available():
        inputs = torch.from_numpy(x_train).cuda()
        labels = torch.from_numpy(y_train).cuda()
    else:
        inputs = torch.from_numpy(x_train)
        labels = torch.from_numpy(y_train)

    # Clear gradient buffers because we don't want any gradient
    # from previous epoch to carry forward, don't want to accumulate gradients
    optimizer.zero_grad()

    # get output from the model, given the inputs
    outputs = model(inputs)

    # get loss for the predicted output
    loss = criterion(outputs, labels)
    print(loss)

    # get gradients w.r.t. the parameters
    loss.backward()

    # update parameters
    optimizer.step()

    print('epoch {}, loss {}'.format(epoch, loss.item()))

with torch.no_grad():  # we don't need gradients in the testing phase
    if torch.cuda.is_available():
        predicted = model(torch.from_numpy(x_train).cuda()).cpu().numpy()
    else:
        predicted = model(torch.from_numpy(x_train)).numpy()
    print(predicted)

# named_parameters() yields the registered name together with each parameter
for name, p in model.named_parameters():
    if p.requires_grad:
        print(name, p.data)

- Basic training process

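- In every epoch

- optimizer.zero_grad()

- output = model(input)

- loss = criterion(output, label)

- loss.backward()

- optimizer.step()

- In every epoch

- The derivatives of the Parameters in the layers are computed.

- The differentiation is done on the loss, i.e. the difference between the Forward result (the model's output = prediction) and the actual value

- The Parameters are updated with the resulting values

- In other words, this is where backpropagation is carried out.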
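To make "update the Parameters with the resulting values" concrete, the sketch below compares one `optimizer.step()` of plain SGD (no momentum, no weight decay) with the hand-written rule `p - lr * grad`. The tiny data and the learning rate are assumptions chosen for the example.

```python
import torch

torch.manual_seed(0)
model = torch.nn.Linear(1, 1)
lr = 0.01
optimizer = torch.optim.SGD(model.parameters(), lr=lr)

x = torch.tensor([[1.0], [2.0], [3.0]])
y = 2 * x + 1

optimizer.zero_grad()
loss = torch.nn.functional.mse_loss(model(x), y)
loss.backward()  # fills p.grad for every parameter

# What plain SGD is expected to produce: p - lr * grad
expected = [p.detach() - lr * p.grad for p in model.parameters()]

optimizer.step()  # updates the parameters in place

matches = all(
    torch.allclose(p.detach(), e)
    for p, e in zip(model.parameters(), expected)
)
print(matches)  # True
```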

3. Logistic Regression Backward

- Backward for Logistic Regression

- Logistic Regression passes a linear function through the Sigmoid function

import torch
from torch import nn

# `device` was not defined in the original snippet; this definition is assumed
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

class LR(nn.Module):
    def __init__(self, dim, lr=torch.scalar_tensor(0.01)):
        super(LR, self).__init__()
        # initialize parameters
        self.w = torch.zeros(dim, 1, dtype=torch.float).to(device)
        self.b = torch.scalar_tensor(0).to(device)
        self.grads = {"dw": torch.zeros(dim, 1, dtype=torch.float).to(device),
                      "db": torch.scalar_tensor(0).to(device)}
        self.lr = lr.to(device)

    def forward(self, x):
        ## compute forward; x has shape (dim, number of samples)
        # the bias is added here so that the db update below has an effect
        z = torch.mm(self.w.T, x) + self.b
        a = self.sigmoid(z)
        return a

    def sigmoid(self, z):
        return 1/(1 + torch.exp(-z))

    def backward(self, x, yhat, y):
        ## compute backward
        self.grads["dw"] = (1/x.shape[1]) * torch.mm(x, (yhat - y).T)
        self.grads["db"] = (1/x.shape[1]) * torch.sum(yhat - y)

    def optimize(self):
        ## optimization step
        self.w = self.w - self.lr * self.grads["dw"]
        self.b = self.b - self.lr * self.grads["db"]

- Because the gradients are computed by hand and w and b are not targets of automatic differentiation, they are declared as plain Tensors rather than Parameters

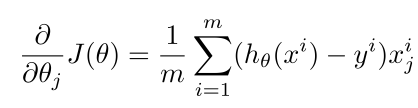

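- The above is the derivative with respect to W

- The derivative with respect to the bias leaves only the error term (the x_i factor drops out because b enters as a first-order term)

- The parameters are then updated with these gradients (gradient descent with learning rate lr)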
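For reference, the gradients used in `backward` and `optimize` of the `LR` class can be written out as follows. This is a reconstruction from the code, not a copy of the lecture figure, and it assumes the loss is the mean binary cross-entropy over m samples, with x^{(i)} the i-th column of the input and η the learning rate (`lr`).

```latex
\begin{aligned}
z^{(i)} &= w^{\top} x^{(i)} + b, \qquad
\hat{y}^{(i)} = \sigma\!\left(z^{(i)}\right) = \frac{1}{1 + e^{-z^{(i)}}} \\[4pt]
L &= -\frac{1}{m} \sum_{i=1}^{m} \left[ y^{(i)} \log \hat{y}^{(i)}
     + \left(1 - y^{(i)}\right) \log\!\left(1 - \hat{y}^{(i)}\right) \right] \\[4pt]
\frac{\partial L}{\partial w} &= \frac{1}{m} \sum_{i=1}^{m}
     \left(\hat{y}^{(i)} - y^{(i)}\right) x^{(i)}
     = \frac{1}{m}\, X \left(\hat{Y} - Y\right)^{\top} \\[4pt]
\frac{\partial L}{\partial b} &= \frac{1}{m} \sum_{i=1}^{m}
     \left(\hat{y}^{(i)} - y^{(i)}\right) \\[4pt]
w &\leftarrow w - \eta \, \frac{\partial L}{\partial w}, \qquad
b \leftarrow b - \eta \, \frac{\partial L}{\partial b}
\end{aligned}
```

Here X has shape (dim, m), matching `x.shape[1]` being used as the sample count in the code, and the two gradient lines correspond to `grads["dw"]` and `grads["db"]`.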

2. Dataset

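1. Dataset overview

- Once the data is collected, a Dataset class defines how the data is loaded, what its total length is, and, in the map-style case, how a single item is returned for a given index

- It also defines the preprocessing.

- For image data, that means deciding how a single picture is handed over as one data item

- For data such as images, transforms are used to transform the data

- Transforms convert the data into numbers

- ToTensor( ) : converts the data into a Tensor

- The part that preprocesses the dataset and the part that converts it into tensors are separate

- DataLoader : given the data, it groups the items together and feeds them to the model

- batch, shuffle

- The data that goes into the model is the data that has passed through the DataLoader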

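2. Dataset class

- The class that defines the form in which data is fed in.

- It standardizes how data is fed in (so we have to build ours to match it)

- The input is defined differently for Image, Text, Audio, and so on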

import torch
from torch.utils.data import Dataset

class CustomDataset(Dataset):
    def __init__(self, text, labels):
        self.labels = labels
        self.data = text

    def __len__(self):
        return len(self.labels)

    def __getitem__(self, idx):
        label = self.labels[idx]
        text = self.data[idx]
        sample = {"Text": text, "Class": label}
        return sample

text = ['Happy', 'Amazing', 'Sad', 'Unhapy', 'Glum']
labels = ['Positive', 'Positive', 'Negative', 'Negative', 'Negative']
MyDataset = CustomDataset(text, labels)

- __init__ defines how the initial data is loaded

- For images, it would define the data folder/directory

- __len__ defines the length of the data

- __getitem__ takes an idx and returns the data at that index as a dict
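How the three methods are used can be sketched by calling `len()` and indexing on the dataset. The class is repeated here only so that the snippet runs on its own.

```python
from torch.utils.data import Dataset

class CustomDataset(Dataset):
    def __init__(self, text, labels):
        self.labels = labels
        self.data = text

    def __len__(self):
        return len(self.labels)

    def __getitem__(self, idx):
        return {"Text": self.data[idx], "Class": self.labels[idx]}

text = ['Happy', 'Amazing', 'Sad', 'Unhapy', 'Glum']
labels = ['Positive', 'Positive', 'Negative', 'Negative', 'Negative']
dataset = CustomDataset(text, labels)

print(len(dataset))  # 5  (calls __len__)
print(dataset[2])    # {'Text': 'Sad', 'Class': 'Negative'}  (calls __getitem__)
```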

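3. Things to keep in mind when writing a Dataset class

- Each function is defined differently depending on the form of the data

- In NLP, for example, a model may predict the next word given the preceding words

- The characters may differ, the sequence lengths may vary, and so on

- The functions need to be set up slightly differently depending on the characteristics of the data

- Not everything has to be processed at the time the dataset is created

- Converting images into Tensors happens at the point where training needs it (through Transform)

- __getitem__ does not have to return a tensor that was converted in advance

- The conversion does not have to be done at initialization

- A standardized way of handling the dataset should be provided

- It is a ray of light for later researchers and colleagues

- There are tools such as FAST API and others.

- Recently, standardized libraries such as HuggingFace are used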
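One way to read the "not everything at creation time" notes above: keep the raw data in `__init__` and apply the conversion only when an item is fetched. The sketch below assumes that reading; `LazyTensorDataset` and `to_float_tensor` are hypothetical names, and a plain function stands in for a real transform pipeline.

```python
import torch
from torch.utils.data import Dataset

class LazyTensorDataset(Dataset):  # hypothetical name
    def __init__(self, samples, labels, transform=None):
        # Only store references; nothing is converted here
        self.samples = samples
        self.labels = labels
        self.transform = transform

    def __len__(self):
        return len(self.samples)

    def __getitem__(self, idx):
        x = self.samples[idx]
        # The conversion runs only when this item is requested
        if self.transform is not None:
            x = self.transform(x)
        return x, self.labels[idx]

def to_float_tensor(raw):
    # stand-in for a transform such as ToTensor()
    return torch.tensor(raw, dtype=torch.float32)

ds = LazyTensorDataset([[1, 2], [3, 4]], [0, 1], transform=to_float_tensor)
x0, y0 = ds[0]
print(type(ds.samples[0]).__name__, x0.dtype)  # list torch.float32
```

The stored samples stay as Python lists; only the item returned from the lookup is a tensor.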

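4. DataLoader class

- The class that creates batches of data

- Dataset is about how to fetch a single item

- DataLoader uses the indices to bundle several items at once and hand them to the model

- It is responsible for converting the data right before training (before feeding the GPU)

- Its main jobs are conversion to Tensor and batching

- Code for parallel data preprocessing needs some thought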

from torch.utils.data import DataLoader

text = ['Happy', 'Amazing', 'Sad', 'Unhapy', 'Glum']
labels = ['Positive', 'Positive', 'Negative', 'Negative', 'Negative']
MyDataset = CustomDataset(text, labels)

MyDataLoader = DataLoader(MyDataset, batch_size=2, shuffle=True)
next(iter(MyDataLoader))
# e.g. {'Text': ['Glum', 'Sad'], 'Class': ['Negative', 'Negative']}

MyDataLoader = DataLoader(MyDataset, batch_size=2, shuffle=True)
for dataset in MyDataLoader:
    print(dataset)
# e.g. (the order changes on every run because shuffle=True)
# {'Text': ['Glum', 'Unhapy'], 'Class': ['Negative', 'Negative']}
# {'Text': ['Sad', 'Amazing'], 'Class': ['Negative', 'Positive']}
# {'Text': ['Happy'], 'Class': ['Positive']}

- Typically the original data is split into chunks of batch_size, and the for loop runs once per chunk.
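The number of loop iterations follows from the dataset length and `batch_size`. A minimal sketch with a plain list of five integers standing in for a dataset (an assumption for brevity), also showing the effect of `drop_last`:

```python
import math
from torch.utils.data import DataLoader

data = list(range(5))  # a plain list works as a map-style dataset

loader = DataLoader(data, batch_size=2, shuffle=False)
sizes = [len(batch) for batch in loader]
expected_batches = math.ceil(len(data) / 2)
print(sizes, len(loader), expected_batches)  # [2, 2, 1] 3 3

# drop_last=True discards the final, smaller batch
loader_drop = DataLoader(data, batch_size=2, shuffle=False, drop_last=True)
sizes_drop = [len(batch) for batch in loader_drop]
print(sizes_drop)  # [2, 2]
```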

DataLoader(dataset, batch_size=1, shuffle=False, sampler=None,
           batch_sampler=None, num_workers=0, collate_fn=None, pin_memory=False,
           drop_last=False, timeout=0, worker_init_fn=None, *, prefetch_factor=2,
           persistent_workers=False)

- sampler : the strategy that decides which indices the data is drawn from

- collate_fn : converts the form [ [ data, label ], [ ... ] ] into the form [Data, Data, ... ] , [Label ... ]

- When sentences have variable length (e.g. 15 characters and 17 characters) and arrive together in one batch, the shorter ones have to be padded with 0 at the end

- The same padding has to be applied to every X in the batch, and collate_fn is where that is defined
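The padding described above could look like the sketch below. The toy token-id sequences and the function name `pad_collate` are assumptions made for this example; `pad_sequence` from `torch.nn.utils.rnn` does the zero padding.

```python
import torch
from torch.nn.utils.rnn import pad_sequence
from torch.utils.data import DataLoader

# Hypothetical toy data: variable-length sequences of token ids with a label each
samples = [
    (torch.tensor([1, 2, 3]), 0),
    (torch.tensor([4, 5]), 1),
    (torch.tensor([6]), 0),
    (torch.tensor([7, 8, 9, 10]), 1),
]

def pad_collate(batch):
    # [[data, label], ...] -> (data, data, ...), (label, label, ...)
    sequences, labels = zip(*batch)
    # Pad the shorter sequences with 0 at the end so the batch stacks into one tensor
    padded = pad_sequence(list(sequences), batch_first=True, padding_value=0)
    return padded, torch.tensor(labels)

loader = DataLoader(samples, batch_size=2, shuffle=False, collate_fn=pad_collate)
batches = list(loader)
first_x, first_y = batches[0]
print(first_x)  # tensor([[1, 2, 3], [4, 5, 0]])
print(first_y)  # tensor([0, 1])
```

Each batch is padded only up to its own longest sequence, so the second batch here has width 4 while the first has width 3.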

I will cover Backward, DataLoader, and Dataset in more detail in the next post.

References: Naver Boostcamp course materials

https://pytorch.org/docs/stable/index.html