CvT

1. CvT: Introducing Convolutions to Vision Transformers, Part 1

Link

June 29, 2022

2. CvT: Introducing Convolutions to Vision Transformers, Part 2

Transformers [31, 10] have recently dominated a wide range of tasks in natural language processing (NLP) [32].

June 29, 2022

3. CvT: Introducing Convolutions to Vision Transformers, Part 3

Transformers that exclusively rely on the self-attention mechanism to capture global dependencies have dominated in natural language modelling [31, 10],

June 29, 2022

4. CvT: Introducing Convolutions to Vision Transformers, Part 4

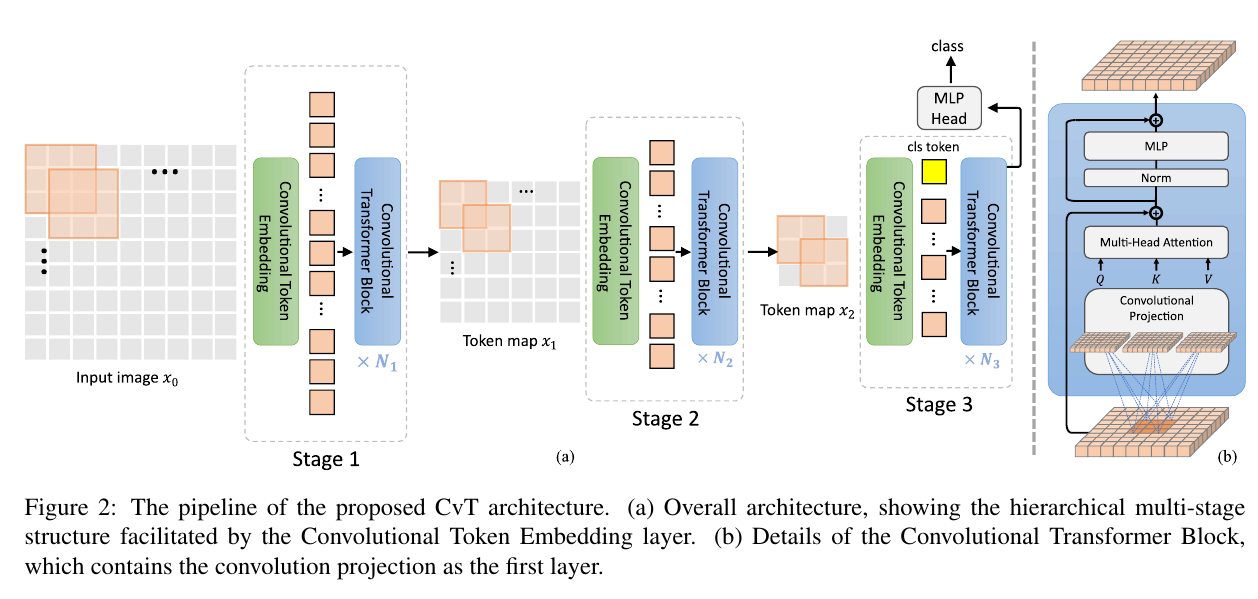

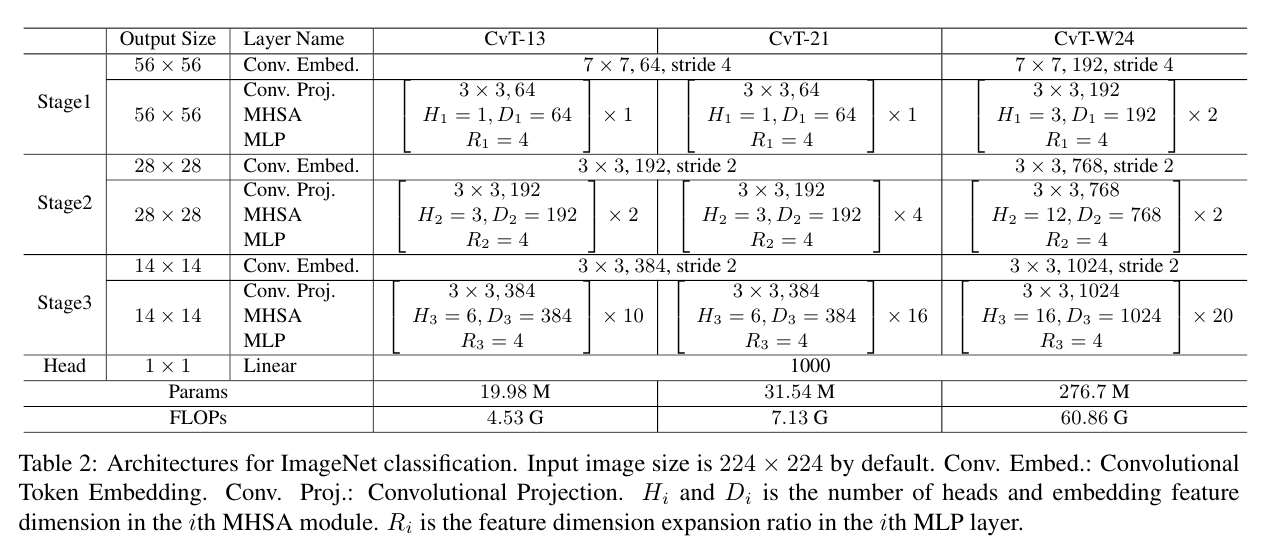

facilitated: to make possible, to make easier, to promote

June 29, 2022

5. CvT: Introducing Convolutions to Vision Transformers, Part 5

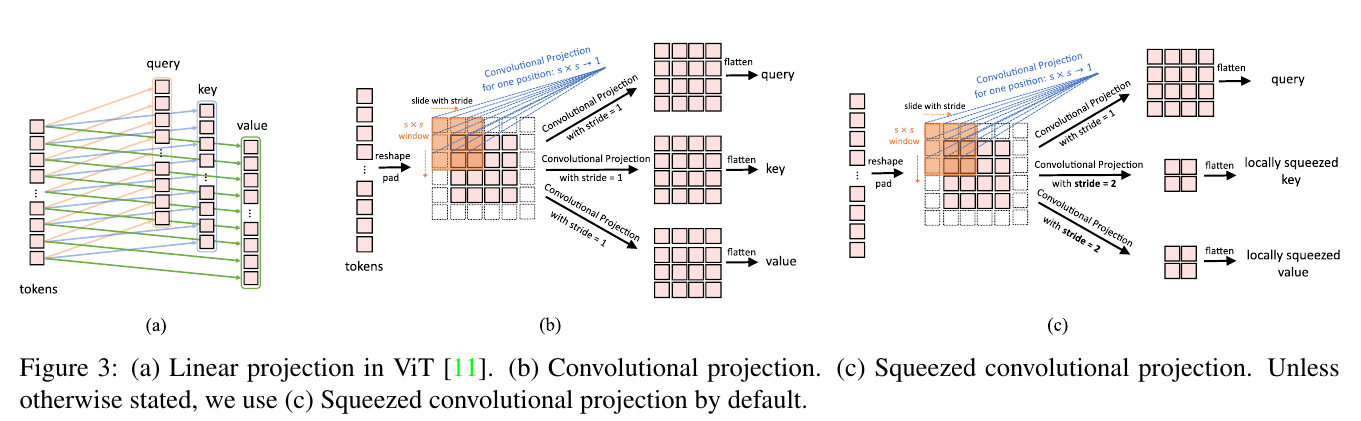

(a) Linear projection in ViT [11]. (b) Convolutional projection. (c) Squeezed convolutional projection. Unless otherwise stated, we use (c) Squeezed conv

June 30, 2022
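The difference between the convolutional projection (b) and the squeezed convolutional projection (c) in the Part 5 caption can be illustrated by counting tokens. The sketch below is a rough illustration, not the authors' code. It assumes a 3x3 kernel with padding 1, stride 1 for queries, and stride 2 for keys and values, as described in the CvT paper. The helper names `conv_output_size` and `token_count` and the 14x14 token map are hypothetical, chosen only for this example.

```python
def conv_output_size(size: int, kernel: int = 3, stride: int = 1, padding: int = 1) -> int:
    """Output length of a convolution along one spatial dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def token_count(height: int, width: int, stride: int) -> int:
    """Tokens left after reshaping to a 2D token map, convolving, and flattening."""
    return conv_output_size(height, stride=stride) * conv_output_size(width, stride=stride)


# Hypothetical 14x14 token map, used only for illustration.
h, w = 14, 14
query_tokens = token_count(h, w, stride=1)      # (b) convolutional projection, assumed stride 1
key_value_tokens = token_count(h, w, stride=2)  # (c) squeezed projection, assumed stride 2

print(query_tokens, key_value_tokens)
```

Under these assumptions the key and value sequences are four times shorter than the query sequence, which is where the attention cost saving of the squeezed variant comes from.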

6. CvT: Introducing Convolutions to Vision Transformers, Part 6

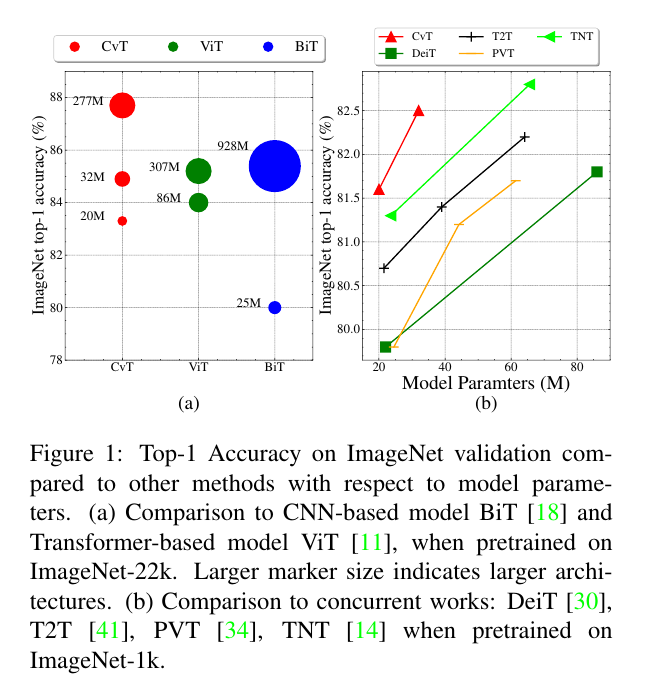

In this section, we evaluate the CvT model on large-scale image classification datasets and transfer to various downstream datasets.

July 3, 2022