Multi-modal Trading AI Agent

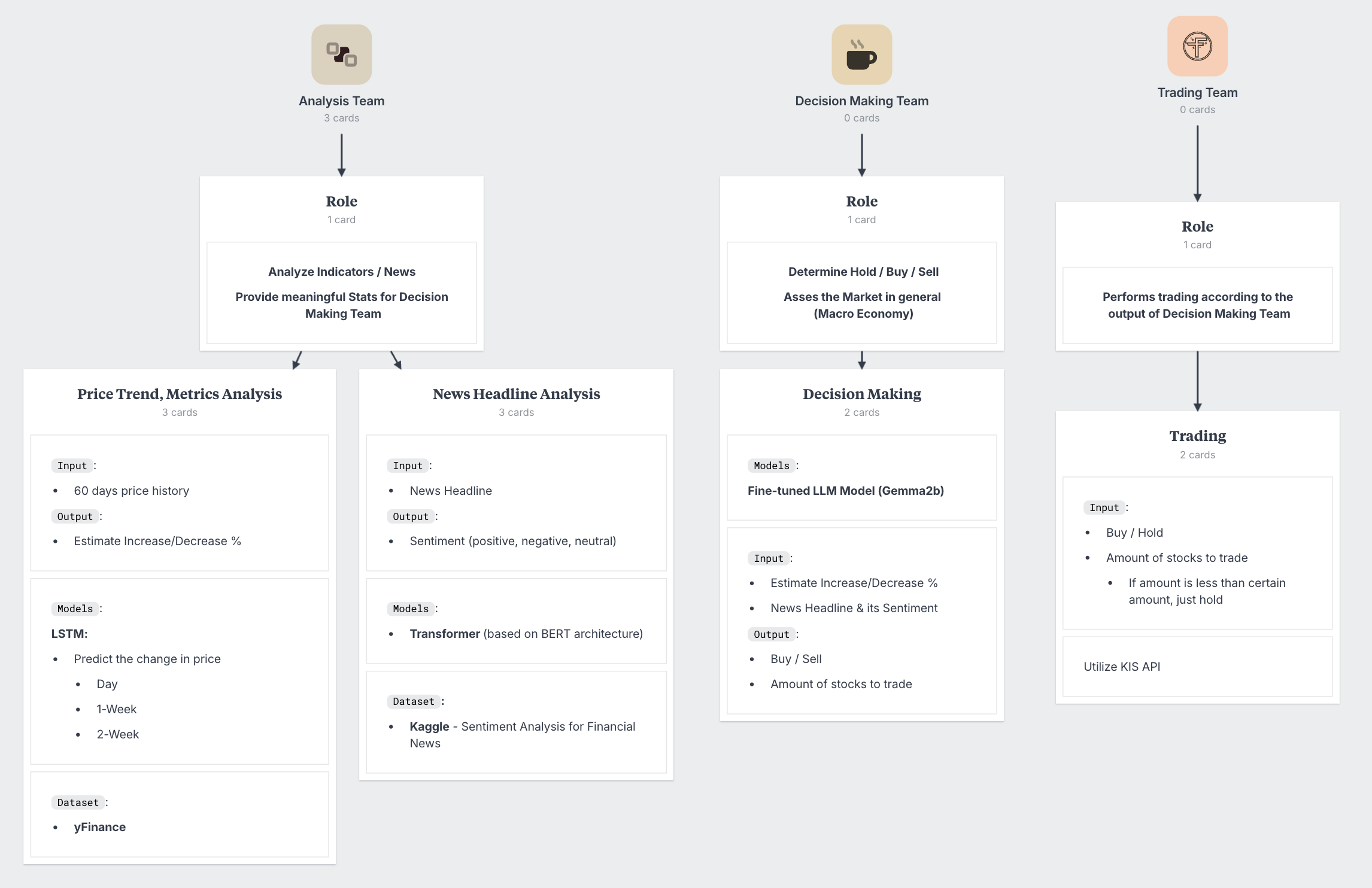

1. Multi-modal Trading AI Agent (MMTA)

The purpose of this project is to learn and get hands-on experience in different areas of deep learning. The following are the areas I will be exploring:

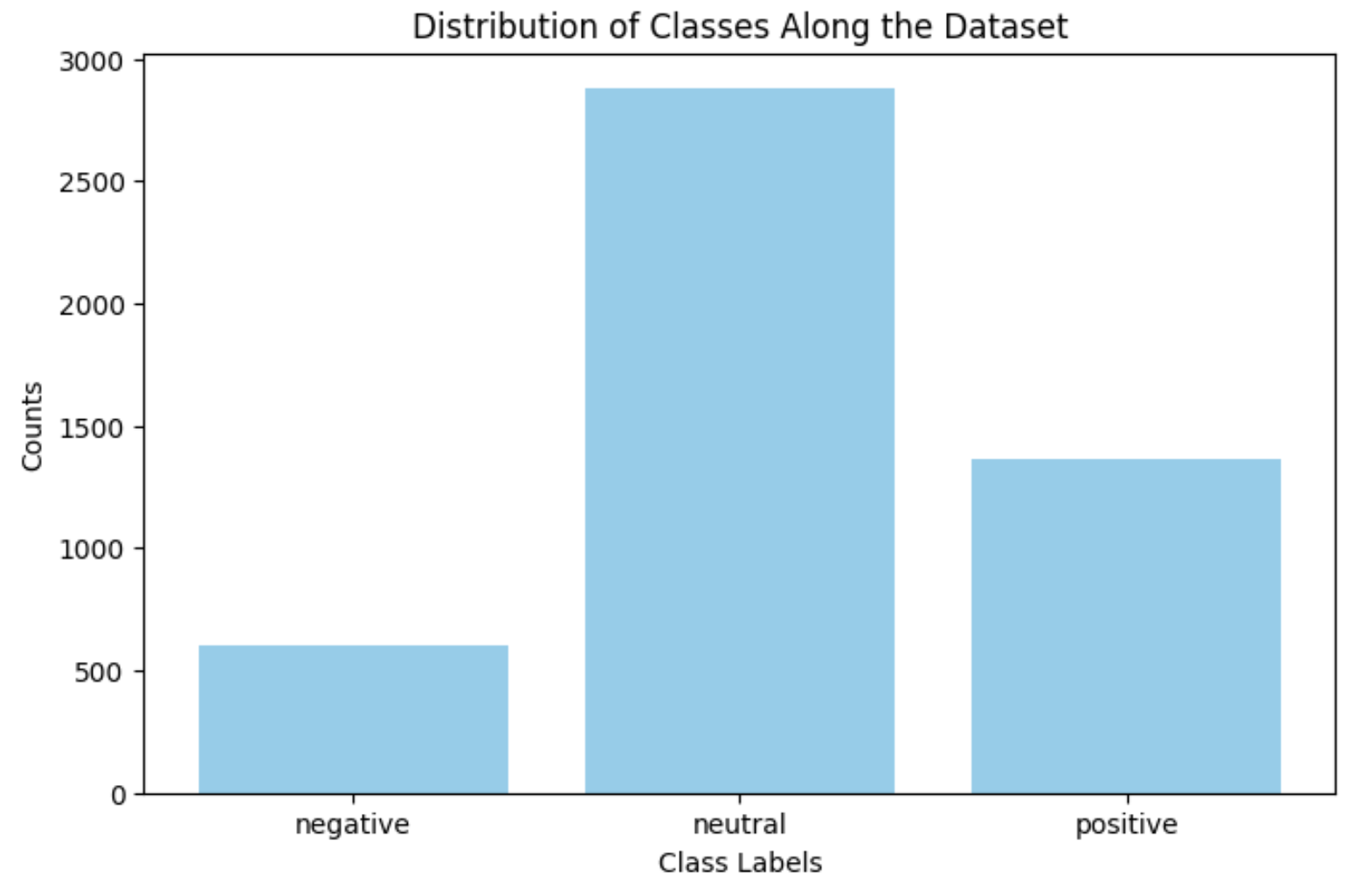

2. [MMTA] Implementing News Headline Analysis Model Through Transformer pt.1

I plan to use a BERT-based Transformer model for this task. As I am working on a laptop, to be as efficient as possible, I used the EarlyStopping Callback W…
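In Keras this is `keras.callbacks.EarlyStopping`, configured for example as `EarlyStopping(monitor="val_loss", patience=3, restore_best_weights=True)`. The framework-free sketch below only illustrates the patience logic such a callback applies; the function name `early_stopping_epoch` and the sample losses are hypothetical, not taken from the post.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Simulate patience-based early stopping on a list of validation losses.

    Returns (stop_epoch, best_epoch), both 0-based: the epoch after which
    training would halt, and the epoch whose weights would be restored.
    """
    best = float("inf")
    best_epoch = 0
    wait = 0  # epochs since the last improvement
    for epoch, loss in enumerate(val_losses):
        if loss < best - min_delta:
            # Improvement: remember it and reset the patience counter.
            best, best_epoch, wait = loss, epoch, 0
        else:
            wait += 1
            if wait >= patience:
                return epoch, best_epoch
    # Patience never ran out: training ends at the last epoch.
    return len(val_losses) - 1, best_epoch


val_losses = [1.0, 0.8, 0.7, 0.72, 0.71, 0.73, 0.6]
print(early_stopping_epoch(val_losses, patience=3))  # (5, 2)
```

Here training stops after epoch 5, so the later improvement at epoch 6 is never seen; a larger `patience` trades compute for robustness against noisy validation curves.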

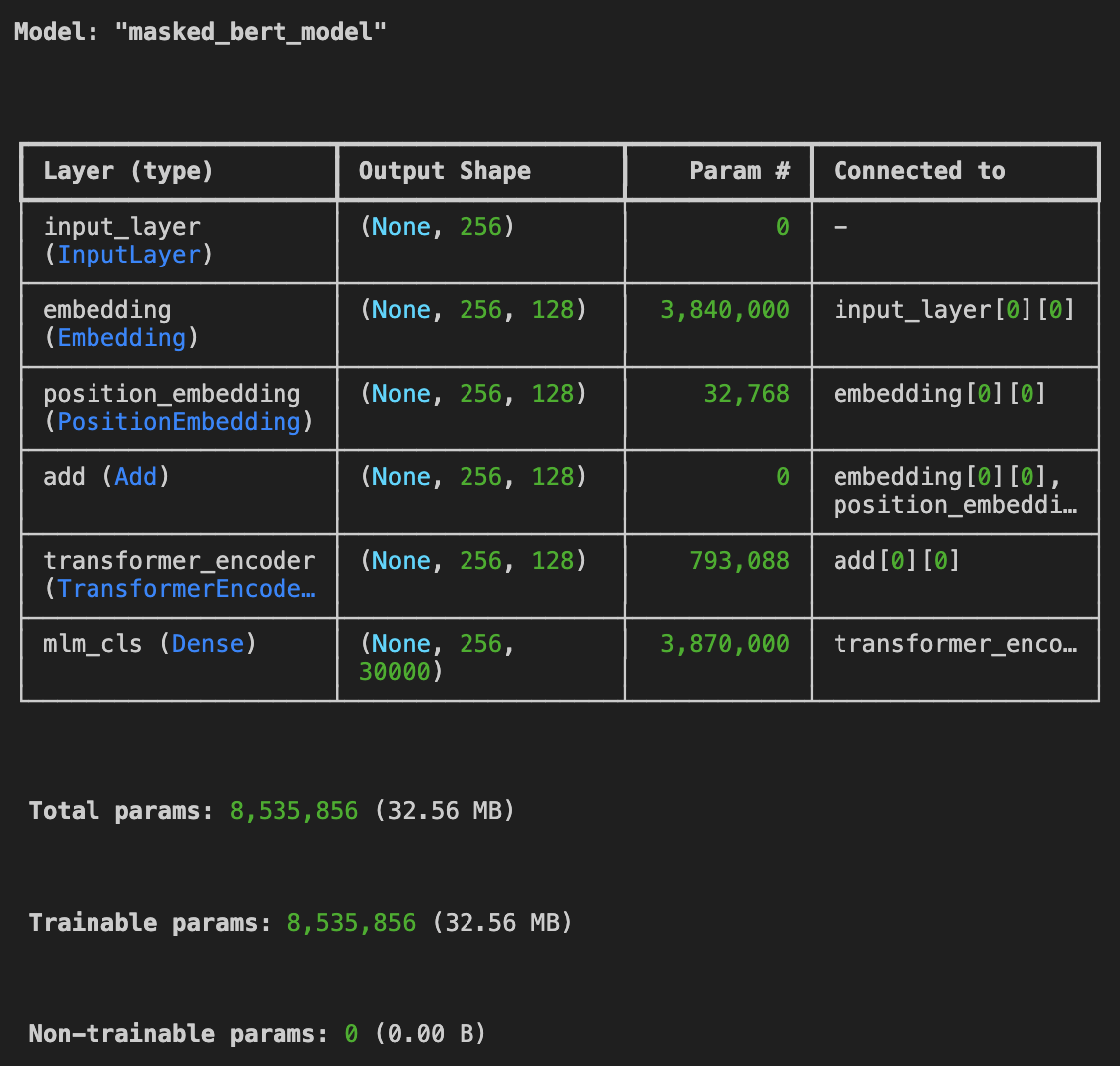

3. [MMTA] Masked Language Modeling pt.1

For Masked Language Modeling, I referred to Keras’s official documentation: keras_masked_langauge_modeling. I got the dataset from: [Kaggle] A Million News…
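The core of masked language modeling is how the training inputs are corrupted. Below is a minimal NumPy sketch of the common BERT-style scheme (select about 15% of tokens; of those, 80% become a mask token, 10% a random token, 10% stay unchanged). It assumes token ids below `num_special` are special tokens that must never be masked; `mask_tokens` and its parameters are hypothetical, and the exact ratios used in the post may differ.

```python
import numpy as np


def mask_tokens(token_ids, mask_token_id, vocab_size, num_special=3,
                mask_rate=0.15, rng=None):
    """BERT-style masking sketch.

    Returns (inputs, labels, sample_weights). Labels are -1 wherever the
    model does not need to predict anything.
    """
    rng = np.random.default_rng() if rng is None else rng
    token_ids = np.asarray(token_ids)

    # Pick roughly `mask_rate` of the non-special positions as targets.
    selected = (rng.random(token_ids.shape) < mask_rate) & (token_ids >= num_special)
    labels = np.where(selected, token_ids, -1)

    # Of the selected positions: 80% -> mask, 10% -> random token, 10% unchanged.
    inputs = token_ids.copy()
    roll = rng.random(token_ids.shape)
    to_mask = selected & (roll < 0.8)
    to_random = selected & (roll >= 0.8) & (roll < 0.9)
    inputs[to_mask] = mask_token_id
    inputs[to_random] = rng.integers(num_special, vocab_size, size=int(to_random.sum()))

    # Only the selected positions contribute to the loss.
    sample_weights = selected.astype(np.float32)
    return inputs, labels, sample_weights


rng = np.random.default_rng(0)
batch = rng.integers(3, 1000, size=(2, 8))  # two fake sequences of 8 token ids
inputs, labels, weights = mask_tokens(batch, mask_token_id=999, vocab_size=1000, rng=rng)
```

The `sample_weights` array can be passed to a loss so that unmasked positions are ignored, which is why the labels of non-selected tokens do not matter.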

4. Connecting Jupyter Lab with Google Colab Server

When I was training my model with a large dataset (1,200,000 x 1) on my Mac, it took 19 hours for the model to finish 1 epoch. I knew that Google cola…

5. [MMTA] LSTM Model For Stock Prediction

My current BERT model generates the same output for every input, and I wasn’t able to find the reason. So I started working on my LSTM mod…
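LSTM layers (such as Keras `LSTM`) expect input shaped `(samples, timesteps, features)`, so a price table first has to be cut into sliding windows. A minimal sketch, assuming the target is the next step's value of the first column; `make_windows` and the window length are hypothetical choices, not from the post.

```python
import numpy as np


def make_windows(series, window):
    """Split a (time, features) array into LSTM-ready sliding windows.

    Returns X with shape (samples, window, features) and y holding the value
    of the first feature one step after each window (an assumed target).
    """
    data = np.asarray(series, dtype=float)
    if data.ndim == 1:
        data = data[:, None]  # treat a 1-D price series as a single feature
    n_samples = len(data) - window
    if n_samples <= 0:
        raise ValueError("series must be longer than the window")
    X = np.stack([data[i:i + window] for i in range(n_samples)])
    y = data[window:, 0]
    return X, y


X, y = make_windows(np.arange(10.0), window=3)
print(X.shape, y[:3])  # (7, 3, 1) [3. 4. 5.]
```

Because consecutive windows overlap, the train/test split should be chronological (earlier windows for training, later ones for testing) rather than a random shuffle, otherwise test prices leak into training windows.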

6. [MMTA] LSTM Model For Stock Prediction pt.2

Last time, my model overfit because I did not handle the noise in the data. This time, I was inspired by the article: Denoising Stock Pri…
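The excerpt cuts off before naming the article's denoising method, so the following is only one common option and not necessarily the one used: a low-pass filter that keeps the lowest-frequency Fourier components of the price series and discards the rest. `fft_denoise` and `keep_ratio` are hypothetical names.

```python
import numpy as np


def fft_denoise(prices, keep_ratio=0.1):
    """Low-pass filter a 1-D series by zeroing its high-frequency FFT terms."""
    prices = np.asarray(prices, dtype=float)
    spectrum = np.fft.rfft(prices)
    n_keep = max(1, int(len(spectrum) * keep_ratio))  # always keep the mean (DC) term
    spectrum[n_keep:] = 0
    return np.fft.irfft(spectrum, n=len(prices))


# Synthetic example: a slow sine wave plus Gaussian noise.
rng = np.random.default_rng(0)
t = np.arange(512)
clean = np.sin(2 * np.pi * t / 128)
noisy = clean + rng.normal(0, 0.3, size=t.size)
smooth = fft_denoise(noisy, keep_ratio=0.1)
```

One caution with this approach: every smoothed value depends on the whole series, including future prices. Denoising the full history before splitting into train and test sets therefore leaks future information into the training data.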

7. [MMTA] News Headline Analysis Model Through Transformer pt.3

I finally found out why my Sentiment Classification BERT model kept overfitting to the dataset. The Transformer block I made wasn’t capable of…
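The excerpt does not say what the Transformer block was missing. For comparison, here is a minimal single-head encoder block in NumPy following the post-norm layout of the original Transformer: self-attention, residual plus layer norm, position-wise feed-forward, residual plus layer norm. It is an illustrative sketch with random weights, not the author's block; multi-head splitting, dropout, masking and learned layer-norm parameters are omitted, and all names are hypothetical.

```python
import numpy as np


def layer_norm(x, eps=1e-6):
    # Normalize each token vector to zero mean and unit variance.
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)


def softmax(x):
    x = x - x.max(axis=-1, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=-1, keepdims=True)


def init_params(d_model, d_ff, rng):
    scale = 1 / np.sqrt(d_model)
    shapes = {"Wq": (d_model, d_model), "Wk": (d_model, d_model),
              "Wv": (d_model, d_model), "Wo": (d_model, d_model),
              "W1": (d_model, d_ff), "W2": (d_ff, d_model)}
    params = {name: rng.normal(0, scale, size=s) for name, s in shapes.items()}
    params["b1"] = np.zeros(d_ff)
    params["b2"] = np.zeros(d_model)
    return params


def transformer_block(x, p):
    """x: (seq_len, d_model) token embeddings -> same shape, plus attention weights."""
    q, k, v = x @ p["Wq"], x @ p["Wk"], x @ p["Wv"]
    weights = softmax(q @ k.T / np.sqrt(k.shape[-1]))  # scaled dot-product, (seq_len, seq_len)
    h = layer_norm(x + weights @ v @ p["Wo"])  # attention sub-layer + residual
    ffn = np.maximum(0, h @ p["W1"] + p["b1"]) @ p["W2"] + p["b2"]  # ReLU feed-forward
    return layer_norm(h + ffn), weights  # feed-forward sub-layer + residual


rng = np.random.default_rng(0)
x = rng.normal(size=(6, 16))  # 6 tokens, d_model = 16
out, attn = transformer_block(x, init_params(16, 64, rng))
print(out.shape, attn.shape)  # (6, 16) (6, 6)
```

The residual connections and the feed-forward sub-layer are the parts most often left out of hand-built blocks; without them, each layer can only re-mix token vectors through attention.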

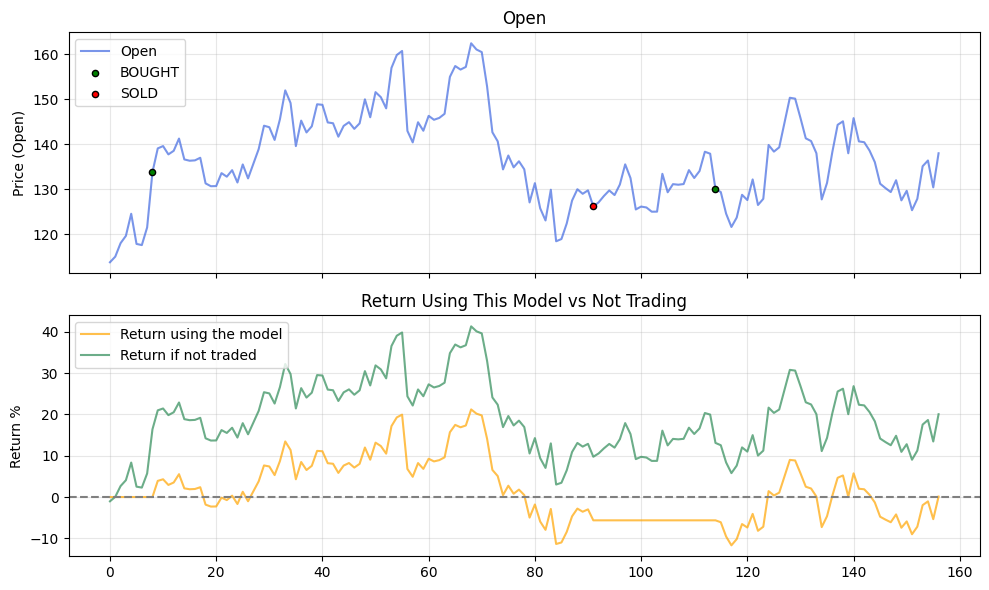

8. [MMTA] LSTM Model For Stock Prediction pt.3

There were a lot of improvements to this model. The biggest change is that I added more columns to the dataset. It now refers to: RSI, MACD, Bollinger Band…
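A minimal pandas sketch of computing these indicators from a closing-price column. It assumes the conventional default settings (14-period RSI with Wilder smoothing, MACD 12/26 with a 9-period signal line, 20-period Bollinger Bands at ±2 standard deviations) and a column named `Close`; the post does not state which settings or column names it used, and `add_indicators` is a hypothetical helper.

```python
import numpy as np
import pandas as pd


def add_indicators(df, price_col="Close"):
    """Return a copy of df with RSI, MACD and Bollinger Band columns added."""
    close = df[price_col]
    out = df.copy()

    # RSI(14): Wilder smoothing is an EMA with alpha = 1/14.
    delta = close.diff()
    gain = delta.clip(lower=0)
    loss = (-delta).clip(lower=0)
    avg_gain = gain.ewm(alpha=1 / 14, adjust=False, min_periods=14).mean()
    avg_loss = loss.ewm(alpha=1 / 14, adjust=False, min_periods=14).mean()
    out["RSI"] = 100 - 100 / (1 + avg_gain / avg_loss)

    # MACD: fast EMA minus slow EMA, plus its 9-period EMA signal line.
    ema12 = close.ewm(span=12, adjust=False).mean()
    ema26 = close.ewm(span=26, adjust=False).mean()
    out["MACD"] = ema12 - ema26
    out["MACD_signal"] = out["MACD"].ewm(span=9, adjust=False).mean()

    # Bollinger Bands: 20-period SMA +/- 2 rolling standard deviations.
    sma20 = close.rolling(20).mean()
    std20 = close.rolling(20).std()
    out["BB_mid"] = sma20
    out["BB_upper"] = sma20 + 2 * std20
    out["BB_lower"] = sma20 - 2 * std20
    return out


prices = pd.DataFrame({"Close": 100 + np.cumsum(np.random.default_rng(0).normal(size=60))})
features = add_indicators(prices).dropna()  # drop warm-up rows before windowing
```

The first rows of each indicator are NaN until enough history exists (19 rows for the 20-period bands), so they are usually dropped before the data is windowed for the LSTM.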