Boosting Zero-shot Learning via Contrastive Optimization of Attribute Representations

1. Boosting Zero-shot Learning via Contrastive Optimization of Attribute Representations, Part 1

Abstract: Zero-shot learning (ZSL) …

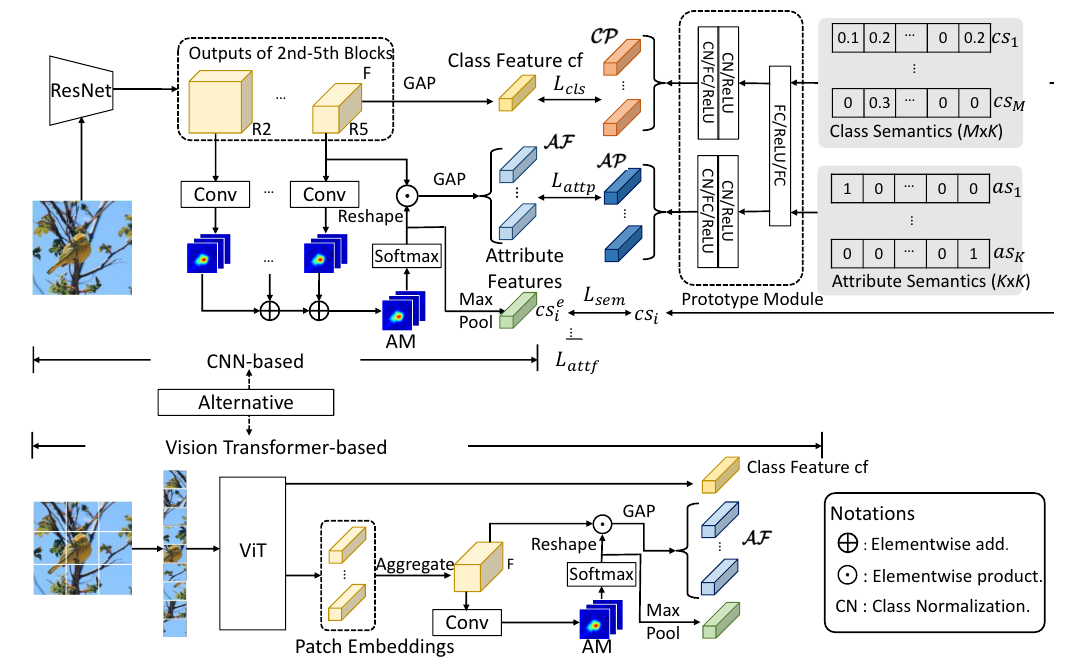

2. Boosting Zero-shot Learning via Contrastive Optimization of Attribute Representations, Part 2 (Method)

Visual recognition flourishes in the presence of deep neural networks (DNNs) [1–3]. (flourishes: to thrive)

3. Boosting Zero-shot Learning via Contrastive Optimization of Attribute Representations, Part 2-2 (Method)


4. Boosting Zero-shot Learning via Contrastive Optimization of Attribute Representations, Part 3 (Method)

Visual recognition flourishes in the presence of deep neural networks (DNNs) [1–3]. (flourishes: to thrive) Zero-shot learning …

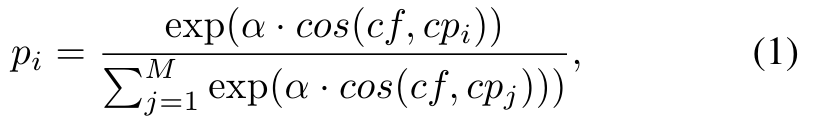

5. Boosting Zero-shot Learning via Contrastive Optimization of Attribute Representations, Part 3-2 (Method)

4) Inference: At test time, the input class semantics of the seen classes are replaced by the corresponding semantics of the unseen classes (for ZSL) or of all classes …
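A minimal sketch of this inference step, assuming each class's semantics have already been mapped into the visual space as a prototype vector and that the predicted class is the one with the highest inner-product score. The function `predict`, the toy prototypes, and the class names are hypothetical illustrations, not the paper's implementation.

```python
from typing import Dict, List, Sequence


def dot(u: Sequence[float], v: Sequence[float]) -> float:
    """Inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def predict(feature: Sequence[float],
            class_protos: Dict[str, Sequence[float]],
            candidates: List[str]) -> str:
    """Return the candidate class whose prototype scores highest against `feature`.

    `class_protos` maps a class name to its semantics already projected into
    the visual space (hypothetical; the projection itself is not shown here).
    """
    return max(candidates, key=lambda c: dot(feature, class_protos[c]))


# Toy prototypes (illustrative numbers, not from the paper).
protos = {
    "seen_a": [1.0, 0.0, 0.0],
    "seen_b": [0.0, 1.0, 0.0],
    "unseen_c": [0.0, 0.0, 1.0],
    "unseen_d": [0.6, 0.0, 0.8],
}
seen = ["seen_a", "seen_b"]
unseen = ["unseen_c", "unseen_d"]

x = [0.9, 0.1, 0.3]                            # a test image's visual feature
zsl_pred = predict(x, protos, unseen)          # ZSL: search unseen classes only
gzsl_pred = predict(x, protos, seen + unseen)  # GZSL: search all classes
print(zsl_pred, gzsl_pred)
```

With these toy numbers the ZSL search returns `unseen_d`, while the GZSL search over all classes returns `seen_a`: a small illustration of why GZSL is harder, since seen classes compete with unseen ones at test time.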

6. Boosting Zero-shot Learning via Contrastive Optimization of Attribute Representations, Part 3-3 (Method)

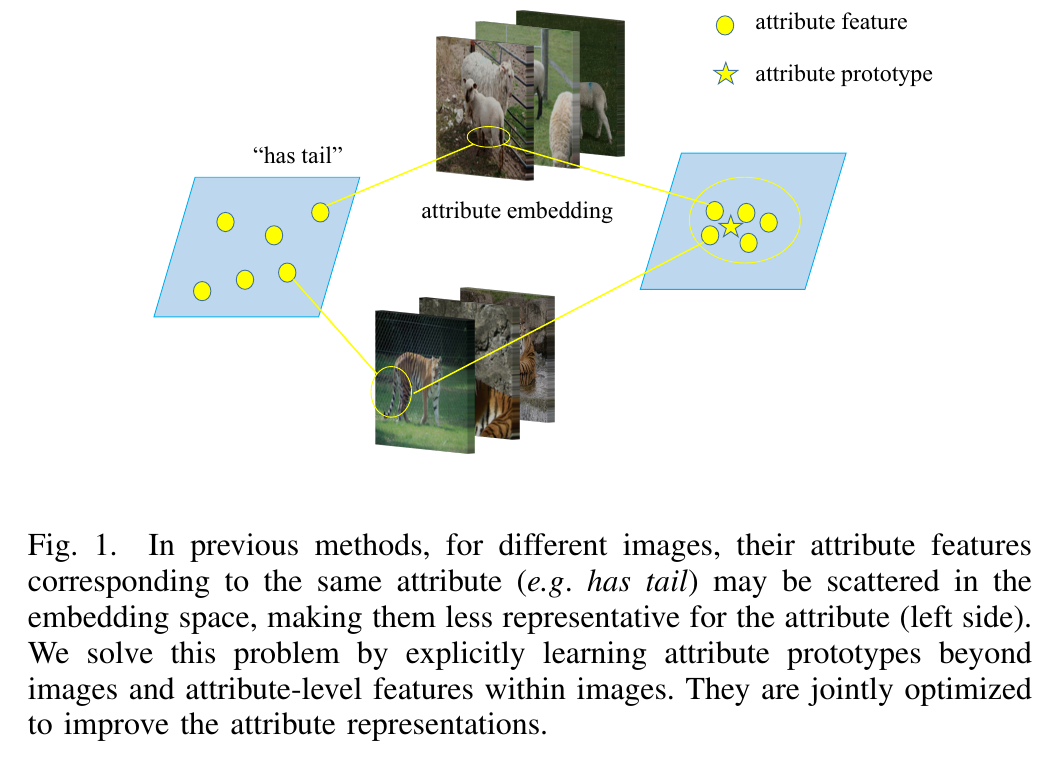

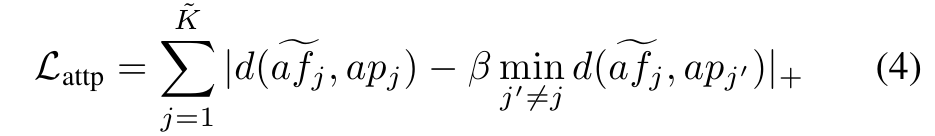

Contrastive optimization of attribute-level features against attribute prototypes. Given the set of attribute-level features …
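Since the excerpt is cut off before the loss is written out, the sketch below assumes a standard InfoNCE-style formulation: each attribute-level feature $f_k$ should be most similar (cosine similarity divided by a temperature τ) to its own prototype $p_k$, with all other prototypes acting as negatives. The function name `attribute_contrastive_loss` and the default τ are hypothetical; the paper's exact loss may differ.

```python
import math
from typing import List, Sequence


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity between two non-zero vectors."""
    nu = math.sqrt(sum(a * a for a in u))
    nv = math.sqrt(sum(b * b for b in v))
    return sum(a * b for a, b in zip(u, v)) / (nu * nv)


def attribute_contrastive_loss(features: List[Sequence[float]],
                               prototypes: List[Sequence[float]],
                               tau: float = 0.1) -> float:
    """Mean InfoNCE loss in which feature k's positive is prototype k.

    Every other prototype acts as a negative for feature k.
    """
    assert len(features) == len(prototypes), "one feature per attribute"
    total = 0.0
    for k, f in enumerate(features):
        logits = [cosine(f, p) / tau for p in prototypes]
        m = max(logits)  # subtract the max before exp for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
        total += log_sum_exp - logits[k]  # equals -log softmax(logits)[k]
    return total / len(features)


prototypes = [[1.0, 0.0], [0.0, 1.0]]
aligned = [[0.9, 0.1], [0.1, 0.9]]  # feature k points toward prototype k
swapped = [[0.1, 0.9], [0.9, 0.1]]  # feature k points toward the wrong prototype
print(attribute_contrastive_loss(aligned, prototypes))
print(attribute_contrastive_loss(swapped, prototypes))
```

The aligned features give a loss close to zero, while the swapped ones are heavily penalized, which is the behavior the optimization relies on.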

7. Boosting Zero-shot Learning via Contrastive Optimization of Attribute Representations, Part 3-4 (Method)

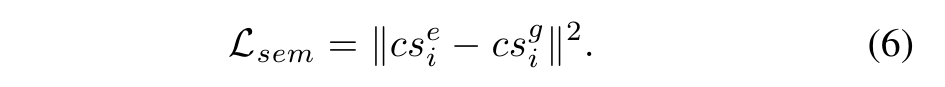

$\mathcal{L}_{attp}$ and $\mathcal{L}_{attf}$ are defined in the visual space as a result of the semantic-to-visual mapping (from $a_s$ to $a_p$). …
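A sketch of what a semantic-to-visual mapping looks like in the simplest case, assuming it is a single linear map $W$ that projects each attribute's semantic vector (a row of $a_s$) into the visual feature space, giving the prototypes $a_p$. The linear form, the dimensions, and the matrix values are assumptions for illustration; the actual mapping may be a deeper network.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product (rows of `a` times columns of `b`)."""
    assert len(a[0]) == len(b), "inner dimensions must agree"
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


# a_s: K attribute semantic vectors of dimension d_s (here K=3, d_s=2).
a_s = [[1.0, 0.0],
       [0.0, 1.0],
       [1.0, 1.0]]
# W: hypothetical learnable map from semantic dim d_s=2 to visual dim d_v=4.
W = [[0.5, 0.0, 1.0, 0.0],
     [0.0, 0.5, 0.0, 1.0]]

a_p = matmul(a_s, W)  # K x d_v attribute prototypes living in the visual space
print(a_p)
```

Because the prototypes now share the visual features' dimensionality, losses such as $\mathcal{L}_{attp}$ and $\mathcal{L}_{attf}$ can compare them directly in that space.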

8. Boosting Zero-shot Learning via Contrastive Optimization of Attribute Representations, Part 4-1 (Method)

Modern ZSL methods can be broadly categorized as either generative-based or embedding-based [31]. (broadly: largely; be categorized as: to be classified as)

9. Boosting Zero-shot Learning via Contrastive Optimization of Attribute Representations, Part 4-2 (Method)

The goal of contrastive learning is to learn an embedding space in which similar samples are pulled close together and dissimilar ones are pushed apart [43].
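To make the pull/push intuition concrete, here is the classic pairwise (margin-based) contrastive loss. It is a generic textbook formulation chosen for illustration, not necessarily the loss used in this paper; the margin value of 1.0 is an assumption.

```python
import math
from typing import Sequence


def euclidean(u: Sequence[float], v: Sequence[float]) -> float:
    """Euclidean distance between two embeddings."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def pairwise_contrastive_loss(u: Sequence[float], v: Sequence[float],
                              similar: bool, margin: float = 1.0) -> float:
    """Classic pairwise contrastive loss.

    Similar pairs are penalized by their squared distance (pulled together);
    dissimilar pairs are penalized only when closer than `margin` (pushed apart).
    """
    d = euclidean(u, v)
    if similar:
        return d ** 2
    return max(0.0, margin - d) ** 2


print(pairwise_contrastive_loss([0.0, 0.0], [0.1, 0.0], similar=True))
print(pairwise_contrastive_loss([0.0, 0.0], [0.5, 0.0], similar=False))
print(pairwise_contrastive_loss([0.0, 0.0], [2.0, 0.0], similar=False))
```

A dissimilar pair that is already farther apart than the margin contributes nothing, so the gradient focuses on pairs that violate the desired geometry.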

10. Boosting Zero-shot Learning via Contrastive Optimization of Attribute Representations, Part 5-1 (Method)

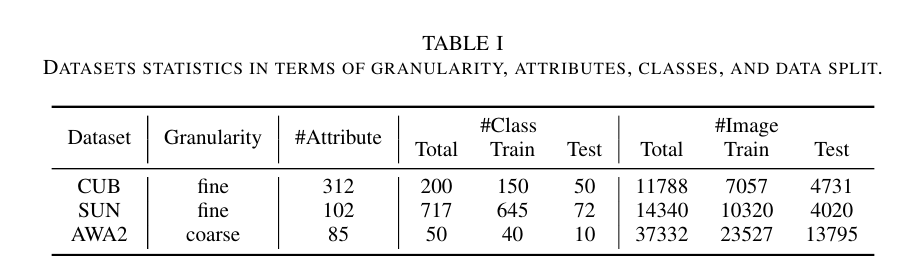

We evaluate our method on the three most widely used datasets, CUB [28], SUN [29] and AwA2 [30], and follow the train/test split proposed in [30].

11. Boosting Zero-shot Learning via Contrastive Optimization of Attribute Representations, Part 5-2 (Method)

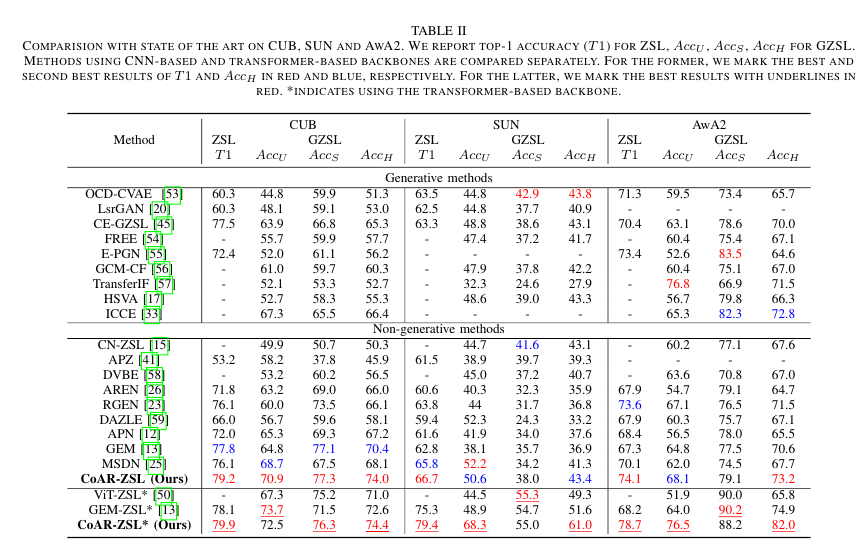

COMPARISON WITH THE STATE OF THE ART ON CUB, SUN AND AWA2. WE REPORT TOP-1 ACCURACY (T1) FOR ZSL, AND $\mathrm{Acc}_U$, $\mathrm{Acc}_S$, $\mathrm{Acc}_H$ FOR GZSL.
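GZSL numbers of this kind are usually computed following the protocol of [30]: top-1 accuracy averaged per class, measured separately on unseen ($\mathrm{Acc}_U$) and seen ($\mathrm{Acc}_S$) test samples, then combined with the harmonic mean $\mathrm{Acc}_H = 2\,\mathrm{Acc}_U\,\mathrm{Acc}_S / (\mathrm{Acc}_U + \mathrm{Acc}_S)$. The sketch below assumes that protocol; the function names and toy labels are hypothetical.

```python
from collections import defaultdict
from typing import Hashable, Sequence


def per_class_top1(y_true: Sequence[Hashable], y_pred: Sequence[Hashable]) -> float:
    """Top-1 accuracy averaged over classes, so each class counts equally."""
    hits = defaultdict(int)
    counts = defaultdict(int)
    for t, p in zip(y_true, y_pred):
        counts[t] += 1
        hits[t] += int(t == p)
    return sum(hits[c] / counts[c] for c in counts) / len(counts)


def harmonic_mean(acc_u: float, acc_s: float) -> float:
    """GZSL harmonic mean H = 2*U*S / (U + S); defined as 0 when both are 0."""
    if acc_u + acc_s == 0:
        return 0.0
    return 2 * acc_u * acc_s / (acc_u + acc_s)


# Toy labels: class "a" has 3 test samples (2 correct), class "b" has 1 (correct).
acc = per_class_top1(["a", "a", "a", "b"], ["a", "a", "b", "b"])
print(acc)                       # per-class mean of 2/3 and 1
print(harmonic_mean(0.2, 0.8))   # H is pulled toward the weaker of U and S
```

Per-class averaging gives 5/6 here rather than the sample-level 3/4, so rare classes are not drowned out; the harmonic mean likewise stays low unless both seen and unseen accuracy are high.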

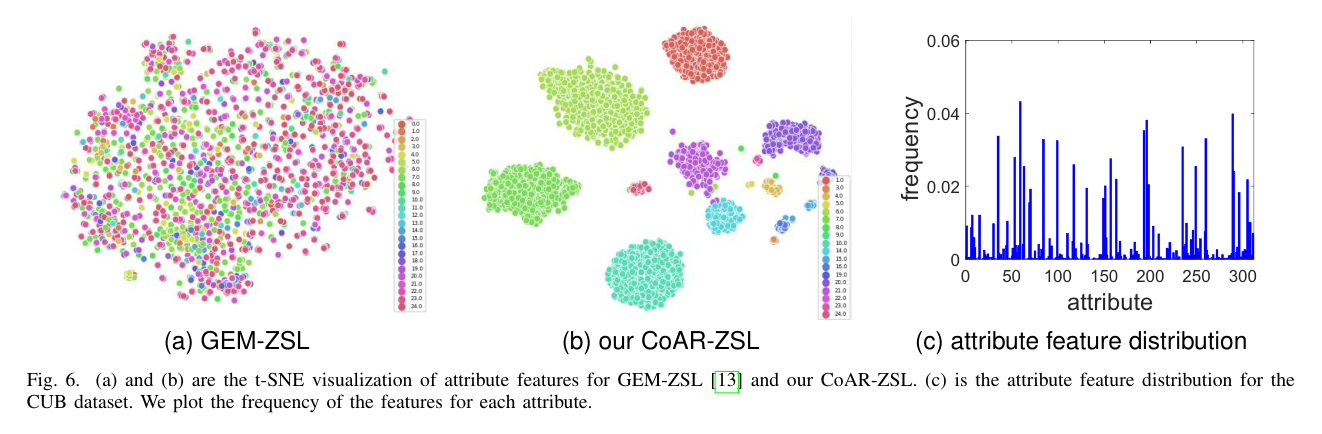

12. Boosting Zero-shot Learning via Contrastive Optimization of Attribute Representations, Part 5-3 (Method)

In Fig. 3, we evaluate the effect of the temperature τ in Eq. (5). We vary it from 0.05 to 1 on the three datasets and …
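A quick way to see what τ controls, assuming it divides similarity scores before a softmax as in typical contrastive losses: a smaller τ sharpens the distribution toward the most similar candidate, while a larger τ flattens it. The similarity values are made up for illustration and the τ values simply span the 0.05 to 1 range mentioned above.

```python
import math
from typing import List, Sequence


def softmax_with_temperature(logits: Sequence[float], tau: float) -> List[float]:
    """Softmax of logits / tau; smaller tau gives a sharper distribution."""
    scaled = [z / tau for z in logits]
    m = max(scaled)  # subtract the max before exp for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


similarities = [0.9, 0.7, 0.1]  # illustrative cosine similarities
for tau in (0.05, 0.1, 0.5, 1.0):
    probs = softmax_with_temperature(similarities, tau)
    print(f"tau={tau}: max prob = {max(probs):.3f}")
```

This is why τ is worth sweeping: too small a value makes the loss focus almost entirely on the hardest negative, too large a value weakens the contrast between positives and negatives.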